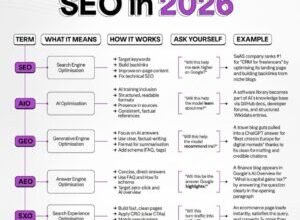

How does Google AI overview work? Inside the Algorithm: Understanding What Prompts a Google AI Overview

Introduction: why Google AI Overviews matter for SEO (and why I’m unpacking them for beginners)

Here’s how I’m thinking about the current state of search as an SEO practitioner: for years, we optimized for ten blue links. Now, we are optimizing for a conversation. When Google AI Overviews (formerly SGE) first rolled out, the panic was palpable. I remember looking at a client’s analytics for a small business FAQ page—a page that used to drive reliable traffic—and seeing the clicks evaporate because Google simply answered the question right there on the SERP.

It’s confusing for beginners. You might be asking, “Why is Google stealing my answer?” or “Is this a ranking factor or a completely separate system?” In this guide, I’m going to break down exactly how Google AI Overviews work, from the query triggers to the Gemini 3 models powering them. I’ll skip the hype and focus on the mechanics, the SEO workflow you need to adapt, and the business realities we’re all facing. We will look at what works, what doesn’t, and how to survive the shift.

Quick answer: how does Google AI overview work in Google Search?

If you need the mental model immediately, think of an AI Overview not as a search result, but as a generated answer that sits on top of search results. It is a system designed to reduce the friction of searching.

Here is the breakdown of the ecosystem:

- What users see: A shaded box at the top of the results containing a synthesized answer, carousel links to sources, and often a “Show more” button to expand the context.

- What Google is optimizing for: Satisfaction and speed. They want to answer complex questions without making the user click three different links.

- What publishers should care about: We are trading traditional organic clicks for “visibility” and citations. The game has shifted from “getting the click” to “earning the citation.”

If you remember only one thing: AI Overviews are dynamic. Just because a query triggers one today doesn’t mean it will tomorrow. It depends entirely on whether Google’s systems deem a summary “helpful” at that specific moment.

How does Google AI overview work behind the scenes? A beginner-friendly model-to-SERP flow

To understand how to rank in these things, you have to understand the pipeline. It’s not magic; it’s a process of retrieval and synthesis. Currently, these overviews are powered largely by Gemini 3, which became the default model around November 2025. The feature is now live in over 200 countries and 40+ languages, meaning this is a global standard, not a test.

Think of the model like a research assistant that has to show its receipts. It can’t just make things up (hallucinate) without risk, so it relies heavily on “grounding” its answers in real content—hopefully yours.

Here is the conceptual flow I check when I’m auditing SERPs:

Step 1: the query gets interpreted (intent, entities, and ambiguity)

It starts with query understanding. Google analyzes the search terms to decipher not just the keywords, but the search intent and the entities involved. For example, if I search for “best running shoes,” Google knows I want a list of products. But if I search “why do my shins hurt after running in flat shoes,” the system recognizes a complex, informational problem.

I’ve seen this go wrong on simple queries. If the wording is vague, the system might misinterpret the intent and offer a definition when you actually wanted a product. But generally, Gemini 3 is excellent at parsing semantic complexity.

Step 2: Google decides whether an AI Overview is “helpful”

This is the gatekeeper. Google doesn’t spend computing power on every query. Based on what Google and industry data suggest, the system appears to estimate how “helpful” a summary would be before generating one. If the query is complex, multi-step, or requires synthesizing info from multiple sites, an Overview is more likely to be triggered. If it’s a simple fact (e.g., “population of NYC”), it usually skips the AI and shows a Knowledge Graph panel or standard snippet.

Step 3: retrieval + synthesis (where sources come from)

Once triggered, the system performs what is commonly described as retrieval-augmented generation (RAG). It fetches relevant documents from its index, typically top-ranking organic pages, and feeds them into the Gemini model. Then comes synthesis. The AI summarizes the retrieved info into a coherent paragraph. A good synthesis connects the dots; a bad one just lists facts.
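To make the retrieve-then-synthesize idea concrete, here is a toy Python sketch. It is not Google’s pipeline: the word-overlap scoring, the `PAGES` mini-index, the example URLs, and the `retrieve` and `synthesize` helpers are all assumptions made up for illustration, and the “synthesis” step simply stitches together first sentences where a real system would call a language model.

```python
import re

def tokenize(text: str) -> set[str]:
    """Lowercase word set: a crude stand-in for real query understanding."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str, pages: dict[str, str], k: int = 2) -> list[str]:
    """Return the k URLs whose text shares the most words with the query."""
    query_words = tokenize(query)
    ranked = sorted(
        pages,
        key=lambda url: len(query_words & tokenize(pages[url])),
        reverse=True,
    )
    return ranked[:k]

def synthesize(urls: list[str], pages: dict[str, str]) -> str:
    """Placeholder for generation: stitch together each page's first sentence."""
    return " ".join(pages[url].split(".")[0].strip() + "." for url in urls)

# Hypothetical mini-index; the URLs and text are invented for this example.
PAGES = {
    "https://example.com/shin-splints": (
        "Shin pain after running often comes from overloading the lower leg. "
        "Rest helps."
    ),
    "https://example.com/flat-shoes": (
        "Flat shoes offer little arch support when running. "
        "Consider cushioned shoes."
    ),
    "https://example.com/pasta": "Boil pasta in salted water. Drain well.",
}

sources = retrieve("why do my shins hurt after running in flat shoes", PAGES)
overview = synthesize(sources, PAGES)
print(overview)
print("Cited:", sources)
```

The point of the sketch is the shape of the flow: only pages that match the query get retrieved, and only retrieved pages can contribute to (and be cited in) the summary.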

Step 4: grounding, citations, and safety checks before the SERP shows it

Before rendering, the system performs grounding. It matches the AI-generated claims back to the URLs it retrieved. If a sentence can’t be supported by a source, it’s often discarded or rewritten. This is where AI citations happen. Finally, strict safety checks run to ensure the content isn’t harmful, hateful, or sexually explicit. Only then does the box appear on your screen.
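The grounding step can be pictured with a small Python sketch. This is a simplified model under stated assumptions, not how Google actually verifies claims: the `ground` function, the word-overlap score, the `min_overlap` threshold of 0.6, and the sample source are all hypothetical.

```python
import re

def words(text: str) -> set[str]:
    """Lowercase word set used for a naive support check."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def ground(
    claims: list[str], sources: dict[str, str], min_overlap: float = 0.6
) -> list[tuple[str, str]]:
    """Pair each claim with its best-supporting URL; drop unsupported claims."""
    grounded = []
    for claim in claims:
        claim_words = words(claim)
        if not claim_words:
            continue  # nothing to verify
        best_url, best_score = None, 0.0
        for url, text in sources.items():
            # Share of the claim's words that also appear in the source.
            score = len(claim_words & words(text)) / len(claim_words)
            if score > best_score:
                best_url, best_score = url, score
        if best_url is not None and best_score >= min_overlap:
            grounded.append((claim, best_url))
    return grounded

# Hypothetical retrieved source and two draft claims.
SOURCES = {
    "https://example.com/drains": (
        "Baking soda and vinegar can clear a minor drain clog. "
        "Flush with hot water afterwards."
    ),
}
CLAIMS = [
    "Baking soda and vinegar can clear a minor clog.",
    "Chemical cleaners always damage pipes.",
]

cited = ground(CLAIMS, SOURCES)
for claim, url in cited:
    print(f"{claim} [{url}]")
```

In this toy run the first claim survives with a citation and the second is discarded because no retrieved page supports it, which mirrors the “show its receipts” behavior described above.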

What triggers a Google AI Overview (and when it won’t show)

One of the most common questions I get is, “Which of my keywords are at risk?” Based on data from late 2025, we know that whether a query triggers a Google AI Overview depends heavily on the query type. While usage peaked at nearly 25% of all queries in mid-2025, it settled to under 16% as Google got more selective.

Here is a framework I use to triage keywords:

| Query Pattern | Likelihood | Why it Triggers | Notes for SEO |
|---|---|---|---|
| Informational / How-to | High | Requires summarizing steps or concepts. | Your content must be structured clearly to be cited. |
| Commercial | Rising (~18%) | Users want comparisons (Product A vs Product B). | Focus on “best for” use cases and comparison tables. |
| Transactional | Moderate (~14%) | Finding specific deals or purchase paths. | Price points and stock status schema help here. |
| Navigational | Low (~10%) | User wants a specific website (e.g., “Facebook login”). | Usually unnecessary, but growing for brand research. |

Trigger patterns I see most often (complexity, multi-step tasks, comparisons, “best way to…”)

I notice Overviews show up consistently for queries that imply a “journey.”

- Complex questions: “How does solar panel efficiency change in winter vs summer?”

- Multi-step queries: “Plan a 3-day itinerary for Tokyo with kids.”

- Nuanced comparisons: “Difference between Roth IRA and Traditional IRA for high earners.”

In these cases, a single blue link rarely has the full answer, so the AI synthesis adds massive value.

When Google tends to avoid AI Overviews (simple facts, sensitive topics, strong navigational intent)

There are zones where Google plays it safe. Safety-sensitive queries (YMYL: Your Money or Your Life) regarding medical diagnoses or legal advice often suppress AI Overviews to avoid liability. Similarly, if I search “weather Los Angeles,” I get a widget, not an AI essay. Simplicity and safety are the enemies of AI Overviews.

How “Show more” can lead into AI Mode (and why that changes search behavior)

The feature that changed my SEO strategy the most is AI Mode. When a user clicks “Show more,” they aren’t just expanding text; they are entering a conversational interface. They can ask follow-up questions like, “What about for vegans?” without restating the original query. This means we need to optimize for the second and third click, anticipating the follow-up questions a user might ask and answering them on our page.

An SEO-ready workflow to earn citations in AI Overviews (what I’d implement on a business site)

Knowing how it works is nice, but knowing how to capitalize on it is better. If I were running SEO for a business today, I wouldn’t try to “beat” the AI. I would try to feed it. We want to be the source that Gemini 3 cites.

If you need a way to standardize briefs and QA across many pages to ensure this level of quality, you might look at an SEO content generator that focuses on intelligence and structure rather than just bulk text output. But regardless of the tools you use, here is the manual workflow I follow:

Step 1: pick keywords where an Overview is likely (intent + complexity check)

I start by scanning the SERP. I look for the “shimmer” of the AI Overview. If it’s there, I check the citations. Are they blogs? Major publishers? Reddit threads? I triage my keyword list by AI Overview likelihood. For a local plumber, “how to unclog a drain” is an Overview magnet. “Emergency plumber near me” is likely a map pack. I prioritize the former for informational content.
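If the keyword list is long, a rough first pass can be scripted before the manual SERP check. The sketch below is only a heuristic: the likelihood labels are taken from the triage table earlier in this article, while the regex patterns, the `local` bucket, and the `triage` function are my own illustrative assumptions that you would tune for your niche.

```python
import re

# Hypothetical first-match-wins rules. The regex patterns are illustrative
# assumptions; the likelihood labels mirror the article's triage table.
RULES = [
    ("local", r"\bnear me\b", "Low (map pack likely)"),
    ("navigational", r"\b(login|log in|sign in)\b", "Low (~10%)"),
    ("transactional", r"\b(buy|price|pricing|deal|coupon)\b", "Moderate (~14%)"),
    ("commercial", r"\b(best|vs|versus|review|compare)\b", "Rising (~18%)"),
    (
        "informational",
        r"^(how|why|what|when|can|does)\b|\bdifference between\b",
        "High",
    ),
]

def triage(keyword: str) -> tuple[str, str]:
    """Return (intent, AI Overview likelihood) for one keyword."""
    text = keyword.lower().strip()
    for intent, pattern, likelihood in RULES:
        if re.search(pattern, text):
            return intent, likelihood
    return "unknown", "Check the live SERP"

for kw in ["how to unclog a drain", "emergency plumber near me", "best running shoes"]:
    intent, likelihood = triage(kw)
    print(f"{kw!r}: {intent}, {likelihood}")
```

Treat the output as a sorting aid only. The live SERP check is still the source of truth, because whether an Overview appears can change from day to day.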

Step 2: design the page to be easy to summarize (clear answer-first layout)

The model is looking for answers, not fluff. I use an answer-first architecture.

- The Direct Answer: The first 2-3 sentences under the H1 should directly answer the query.

- Structured Details: Use definitions, bullet points, and steps immediately after.

- No Preamble: If I see an intro that says “In today’s digital world…” I delete it. If it takes 600 words to reach the point, I rewrite it.
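One way to spot buried answers at scale is to measure how many words a reader has to get through before the page first addresses the query. The following Python sketch is a rough proxy under simple assumptions: `answer_position` and its “first sentence that mentions every key term” rule are hypothetical, and a sentence can mention the terms without actually answering the question.

```python
import re
from typing import Optional

def answer_position(page_text: str, key_terms: list[str]) -> Optional[int]:
    """Count the words that precede the first sentence containing every key term.

    Returns None when no sentence mentions all of the terms.
    """
    sentences = re.split(r"(?<=[.!?])\s+", page_text.strip())
    words_seen = 0
    for sentence in sentences:
        lowered = sentence.lower()
        if all(term.lower() in lowered for term in key_terms):
            return words_seen
        words_seen += len(sentence.split())
    return None

# Hypothetical page copy with a one-sentence preamble.
SAMPLE = (
    "In today's digital world, plumbing matters. "
    "To unclog a drain, pour baking soda and vinegar down it."
)

position = answer_position(SAMPLE, ["unclog", "drain"])
print(f"Words before the answer: {position}")
```

A large number (or `None`) is a prompt to open the page and check whether the intro needs rewriting, not a verdict on its own.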

Step 3: strengthen “groundability” with sources, first-hand details, and consistent terminology

This is about E-E-A-T. To make your content “sticky” for the AI, you need verifiable claims. Instead of saying “prices vary,” say “prices typically range from $50 to $100 based on 2025 market rates.” Use consistent terminology that matches the query entities. When I validate Overviews, I often see the AI citing pages that cite other primary sources. Be the primary source.

Step 4: apply on-page SEO basics where they matter (titles, headings, schema, internal links)

Don’t abandon the classics. Your H2s and H3s should essentially be the FAQs users would ask in AI Mode. I use schema markup (especially Article and FAQ schema) to help Google parse the page structure, though it’s not a magic switch. Internal linking is critical here—connect your “definition” pages to your “deep dive” pages so the crawler understands the relationship.
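For the FAQ schema mentioned above, here is a minimal Python sketch that builds schema.org `FAQPage` JSON-LD from question and answer pairs. The `faq_jsonld` helper and the sample pair are assumptions for illustration. The markup only describes the page structure; it does not guarantee a citation or a rich result.

```python
import json

def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize question/answer pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)

# Hypothetical FAQ content; use the questions your H2s and H3s already answer.
markup = faq_jsonld(
    [
        (
            "What triggers a Google AI Overview?",
            "Typically, complex or multi-part queries.",
        ),
    ]
)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Keep the questions and answers in the markup identical to the visible text on the page, so the structured data and the content tell the same story.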

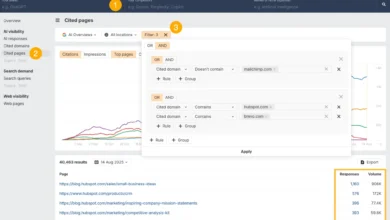

Step 5: publish, measure, and iterate (what to track in Search Console)

You won’t always get a clean A/B test—so I look for directional trends and corroborating SERP checks. In Google Search Console, I watch my impressions. If impressions stay high but CTR drops, I know an AI Overview is likely answering the query. I annotate these dates. I also track “citation traffic”—referrals that come from the specific expansion links in the Overview.
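The “impressions hold, CTR drops” check can be scripted once you have per-query numbers for two comparable periods. This Python sketch assumes you have already loaded them yourself: the `QueryStats` class, the `flag_overview_suspects` function, the sample figures, and both thresholds (impressions down at most 20%, CTR down at least 30%) are hypothetical and should be adjusted to your own data.

```python
from dataclasses import dataclass

@dataclass
class QueryStats:
    query: str
    impressions: int
    clicks: int

    @property
    def ctr(self) -> float:
        """Click-through rate; 0.0 when there are no impressions."""
        return self.clicks / self.impressions if self.impressions else 0.0

def flag_overview_suspects(
    before: list[QueryStats],
    after: list[QueryStats],
    max_impr_drop: float = 0.2,  # assumption: impressions may fall by up to 20%
    min_ctr_drop: float = 0.3,   # assumption: CTR must fall by at least 30%
) -> list[str]:
    """Return queries whose impressions held steady while CTR fell sharply."""
    previous = {stats.query: stats for stats in before}
    flagged = []
    for current in after:
        old = previous.get(current.query)
        if old is None or old.ctr == 0:
            continue  # no baseline to compare against
        impressions_held = current.impressions >= old.impressions * (1 - max_impr_drop)
        ctr_fell = current.ctr <= old.ctr * (1 - min_ctr_drop)
        if impressions_held and ctr_fell:
            flagged.append(current.query)
    return flagged

# Made-up numbers for two periods.
BEFORE = [
    QueryStats("how to unclog a drain", 1000, 80),
    QueryStats("emergency plumber near me", 500, 50),
]
AFTER = [
    QueryStats("how to unclog a drain", 950, 38),
    QueryStats("emergency plumber near me", 520, 51),
]

suspects = flag_overview_suspects(BEFORE, AFTER)
print(suspects)
```

A flagged query is only a lead. Confirm it against the live SERP, since ranking changes, seasonality, or a new SERP feature can produce the same pattern.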

Common mistakes that reduce your chance of appearing in AI Overviews (and how I fix them)

I audit a lot of sites, and I see the same patterns killing visibility in AI results. Here are the top mistakes I usually see sites get stuck on:

- Mistake: Burying the Answer.

Why it matters: Gemini 3 minimizes computing cost; it won’t read 2,000 words to find a “yes” or “no.”

The Fix: Move your core answer to the top 10% of the page.

- Mistake: Thin or Generic Content.

Why it matters: If your content says exactly what 10 other sites say, the AI doesn’t need to cite you.

The Fix: Add unique data, a proprietary image, or a specific counter-argument.

- Mistake: Misaligned Intent.

Why it matters: Optimizing for “what is X” when the user wants “how to buy X” means your page doesn’t answer the question the Overview is built around.

The Fix: Match the H1 and content format to the current live SERP.

- Mistake: Neglecting Structure.

Why it matters: Walls of text are hard for the model to parse into a structured summary.

The Fix: Break content into lists, tables, and clear H2s/H3s.

Business impact: clicks, attribution, and the regulatory conversation around AI Overviews

So, what does this mean for the bottom line? If I’m reporting performance to a CMO, I separate “visibility” from “visits” and explain why they diverge. The reality is mixed. For many publishers, CTR decline is real on informational queries. However, evidence suggests that AI Overviews drive over a 10% increase in total Google Search usage for queries that have them. Users search more because the experience is better, even if they click less per query.

| Stakeholder | Upside | Trade-off |
|---|---|---|
| Users | Faster answers, less clicking back and forth. | Potential over-reliance on summaries. |
| Businesses | High-visibility citations build brand authority. | Loss of top-of-funnel informational traffic. |
| Publishers | Opportunity to be the “verified source.” | Attribution battles and lower ad revenue. |

What the data suggests about where Overviews are expanding (commercial, transactional, navigational)

The scariest part for businesses is the creep into commercial intent. Commercial queries triggering Overviews jumped from 8% to nearly 18% in late 2025. Even transactional queries are sitting at around 14%. If you sell products, your “best pricing” pages are now competing with an AI that summarizes pricing for you.

Regulatory pressure & publisher options: what to watch (without speculation)

Regulators are watching this closely. The UK CMA is currently proposing rules regarding publisher opt-out and transparency, with consultations open until February 25, 2026. There is a push to allow publishers to block their content from AI training without vanishing from Google Search entirely. My suggestion? Assign someone on your team to monitor these updates quarterly. The rules of engagement are being written right now.

FAQs: Google AI Overviews, Gemini 3, and AI Mode (beginner answers)

What triggers a Google AI Overview?

Typically, semantic complexity triggers it. If a query implies multiple questions in one (e.g., “compare iphone 16 vs 15 battery life and camera”), Google sees an opportunity to be helpful by synthesizing the answer. Simple, navigational queries (like searching for a specific URL) trigger them far less often.

How advanced are the Gemini models behind AI Overviews?

They are significantly more capable than previous iterations. Gemini 3, which launched in November 2025, handles multimodal inputs (text, images, video) and has better reasoning capabilities. In practice, this means it’s better at stitching together multi-part answers without losing the thread or hallucinating as often.

Can users interact further with AI Overviews?

Yes, through AI Mode. By clicking “Show more” or asking a follow-up, users enter a conversational loop. The system retains the context of the first search, so if you ask “what about the price?” it knows you are still talking about the previous topic. This transforms search from a directory into a dialogue.

Are publishers affected by AI Overviews?

Yes, significantly. While some sites see a boost in qualified traffic from citations, many report a drop in standard organic clicks, especially for simple Q&A content. It varies by niche—I’ve seen some pages lose clicks while brand visibility improves via citations, creating a complex attribution picture.

Summary + next actions I recommend (so you can adapt without guesswork)

We’ve covered a lot, but here is the recap if you’re about to brief your team:

- Mechanics: Google uses Gemini 3 to synthesize answers for complex queries, prioritizing helpfulness and speed.

- Triggers: Complex, multi-step, and commercial comparison queries are the most likely to trigger an Overview.

- Optimization: You earn citations by structuring content with clear, direct answers (“Answer First”) and grounding claims in verifiable sources.

What I’d do this week:

- Audit your top 20 keywords: Check the live SERP. Are Overviews appearing? Take screenshots.

- Rewrite your intros: Ensure your high-traffic pages answer the user’s core question in the first 120 words.

- Scale your content production: Consider using a high-quality AI article generator to help draft structured, intent-matched content efficiently, then edit for human insight.

- Monitor GSC: Watch for impressions without clicks—this is your signal that an Overview might be “answering” for you.

I re-check SERPs monthly because this feature evolves rapidly. The best strategy is to remain flexible, keep your content structured, and always verify your sources.