Introduction: AI-ready content and why I’m focusing on AI content optimization tools now

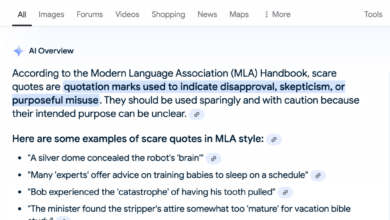

If you manage content for a living, you’ve likely noticed a frustrating pattern recently: impressions in Google Search Console are climbing, but clicks remain flat or are even dipping. That is usually my cue to audit a strategy—not for better rankings, but for better extraction. The reality is that users are increasingly getting their answers directly on the results page via AI Overviews, or they are bypassing traditional search engines entirely in favor of ChatGPT or Gemini.

We are moving from an era of “ranking and clicking” to one of “ranking and being cited.” For US-based growth leads and content managers, this requires a shift in tooling. We don’t just need keyword tools anymore; we need AI content optimization tools that help us structure information so machines can confidently read, understand, and summarize it.

In this guide, I’ll walk you through a newsroom-grade approach to selecting these tools—covering the difference between GEO (Generative Engine Optimization) and traditional SEO, a practical decision framework to avoid buying overlapping software, and a step-by-step workflow I use to ensure content is “AI-ready” before we hit publish.

Search intent and how to use this guide

If you are here, you likely have one of two goals: you want a credible list of tools to investigate, or you want a repeatable workflow to adapt your content strategy for the AI era. I have structured this article to serve both needs.

First, I’ll provide a comparison framework and table so you can quickly shortlist the right software. Then, I’ll share a practical, step-by-step workflow—from research to monitoring—that you can implement immediately. My goal is to give you a system that produces consistent, high-quality outputs, not just a list of features.

Quick definition: what “AI-ready content” means

Think of your website like a filing system. Traditional SEO was about putting a sticky note on the file folder so the librarian (Google) could find it. AI-ready content is about organizing the papers inside that folder so clearly that an assistant (the LLM) can read it, summarize it, and answer questions about it without making things up. It means clear entities, structured data, explicit definitions, and corroborating evidence that makes your content the safest source to cite.

GEO vs traditional SEO: what changes when people get answers from LLMs

We are witnessing the rise of Generative Engine Optimization (GEO). While traditional SEO focuses on driving traffic to a specific URL, GEO focuses on maximizing the visibility of your brand and content within AI-generated responses. This isn’t just a buzzword; it is a fundamental shift in how information is retrieved. Data suggests that AI Overviews appeared in over 50% of U.S. Google search results by August 2025, drastically increasing the number of “zero-click” interactions.

If you only do SEO in 2026, you risk missing the large segment of the market that discovers products through conversation rather than navigation. A potential buyer might ask ChatGPT, “What is the best CRM for a small dental practice?” If your brand isn’t part of the LLM’s training data or accessible via live retrieval, you simply don’t exist in that conversation.

FAQ-style clarity: What is Generative Engine Optimization (GEO)?

GEO is the practice of optimizing content specifically for discovery by generative AI models (like ChatGPT, Gemini, and Claude) and AI-augmented search engines (like Google’s AI Overviews). The goal is to ensure your content is cited, mentioned, or used as the primary source for AI-generated summaries.

How GEO differs from SEO (what I track instead of just rankings)

While I still track rankings for transactional keywords, my dashboard has expanded. Here is what I watch week-to-week for GEO:

- Visibility in Summaries: Is my content being used to answer the “What is X?” questions in AI Overviews?

- Brand Mentions/Citations: How often is my brand recommended as a solution in conversational queries?

- Entity Association: Does the AI understand clearly what my business does, or does it hallucinate features we don’t have?

- Consistency: Is the information about my pricing and features consistent across the web (reviews, partners, home page) so the LLM trusts it?

What makes content “AI-ready” (and what LLMs tend to reward)

When I edit content for AI readiness, I stop thinking about “keyword density” and start thinking about “information gain” and “authority.” LLMs are probability engines; they predict the most likely next word based on patterns. To be cited, your content needs to look like the most probable, high-authority answer.

LLMs tend to reward content that has high “E-E-A-T” (Experience, Expertise, Authoritativeness, and Trustworthiness). They look for clear definitions of entities (people, places, concepts), strong topical coverage that connects related ideas, and structured formatting that makes extraction easy. If your content is a wall of text with vague headings, an LLM might skip it for a source that uses a clean list.

Example: The Rewrite

Before (Hard for AI to extract):

“When you’re looking at different software options for project management, it’s really important to think about how your team works. Some tools are good for agile and others are better for waterfall, which can be confusing if you don’t know the difference.”

After (AI-Ready):

“Choosing Project Management Software: Agile vs. Waterfall

To select the right tool, evaluate your team’s methodology:

1. Agile Tools (e.g., Jira, Asana): Best for iterative development and sprint planning.

2. Waterfall Tools (e.g., Microsoft Project): Best for linear, sequential construction projects.”

The second version gives the LLM clear categories and examples to pull directly into a summary.

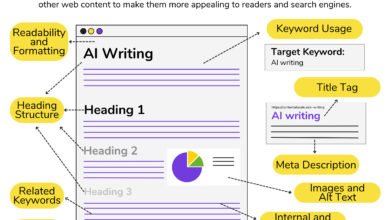

The AI-ready checklist (reader can copy/paste)

- Structure: Use H2/H3 tags to organize hierarchy. Use lists for steps or features.

- Definition: Include a direct, 1–2 sentence answer immediately following a question-based heading.

- Evidence: Cite primary sources and data to back up claims.

- Entities: Use consistent, industry-standard terminology for products and concepts.

- Context: Ensure the article creates a complete topical cluster (linking to related definitions).
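The “Definition” item in the checklist above is easy to spot-check with a few lines of Python. This is only a rough sketch: the function names are my own, and the sentence splitter is deliberately naive (it will miscount abbreviations such as “U.S.”), so treat the result as a prompt for a human edit rather than a verdict.

```python
import re


def count_sentences(text: str) -> int:
    """Rough sentence count: split after ., ! or ? when followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return len([p for p in parts if p])


def has_direct_answer(heading: str, first_paragraph: str, max_sentences: int = 2) -> bool:
    """True if a question-based heading is followed by a short, direct answer.

    Headings that are not questions pass automatically, because the
    checklist rule only applies to question-based headings.
    """
    if not heading.strip().endswith("?"):
        return True
    n = count_sentences(first_paragraph)
    return 1 <= n <= max_sentences


if __name__ == "__main__":
    heading = "What is Generative Engine Optimization (GEO)?"
    answer = "GEO is the practice of optimizing content for AI answers. The goal is to be cited."
    print(has_direct_answer(heading, answer))
```

The two-sentence limit mirrors the checklist wording; loosen `max_sentences` if your definitions genuinely need more room.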

Where on-page SEO still matters (titles, headings, internal links, schema)

Don’t throw out your traditional SEO playbook. Descriptive title tags and H1s help the AI understand the primary topic. Internal links are critical because they help LLMs map the relationship between your pages (e.g., establishing that “Feature A” belongs to “Product B”). Schema markup is perhaps the most direct way to speak to machines—we’ll cover that in the technical section.

How I evaluate AI content optimization tools (categories, criteria, and a comparison table)

The term “AI content optimization tool” has become a bit of a junk drawer. It can mean anything from a grammar checker to a sophisticated market simulator. To make a smart purchase, I categorize them into distinct buckets. You likely don’t need one of everything.

Below is a breakdown of the landscape. I’ve included established names like Semrush and emerging players like Azoma, but remember: the tool is only as good as the strategy behind it.

| Tool Category | Primary Goal | Best For… | Typical Output |
|---|---|---|---|
| GEO / AI Visibility Monitoring (e.g., Azoma, Otterly.ai) | Track brand mentions in LLMs | Enterprise brands & high-competition sectors | Share of Voice in ChatGPT, Sentiment Score |
| Traditional SEO Suites (e.g., Semrush, Ahrefs) | Keyword research & technical health | All-around digital marketing teams | Rankings, Backlinks, Keyword Volume |
| Content Optimization (e.g., Surfer, Frase, Clearscope) | Optimize individual pages for search intent | Editors & writers improving specific posts | Content Score (0-100), NLP Keyword List |
| Content Intelligence & Generation (e.g., SEO content generator platforms) | Scale production with built-in optimization | Growth teams needing volume + quality | Full drafts, Briefs, Bulk Articles |

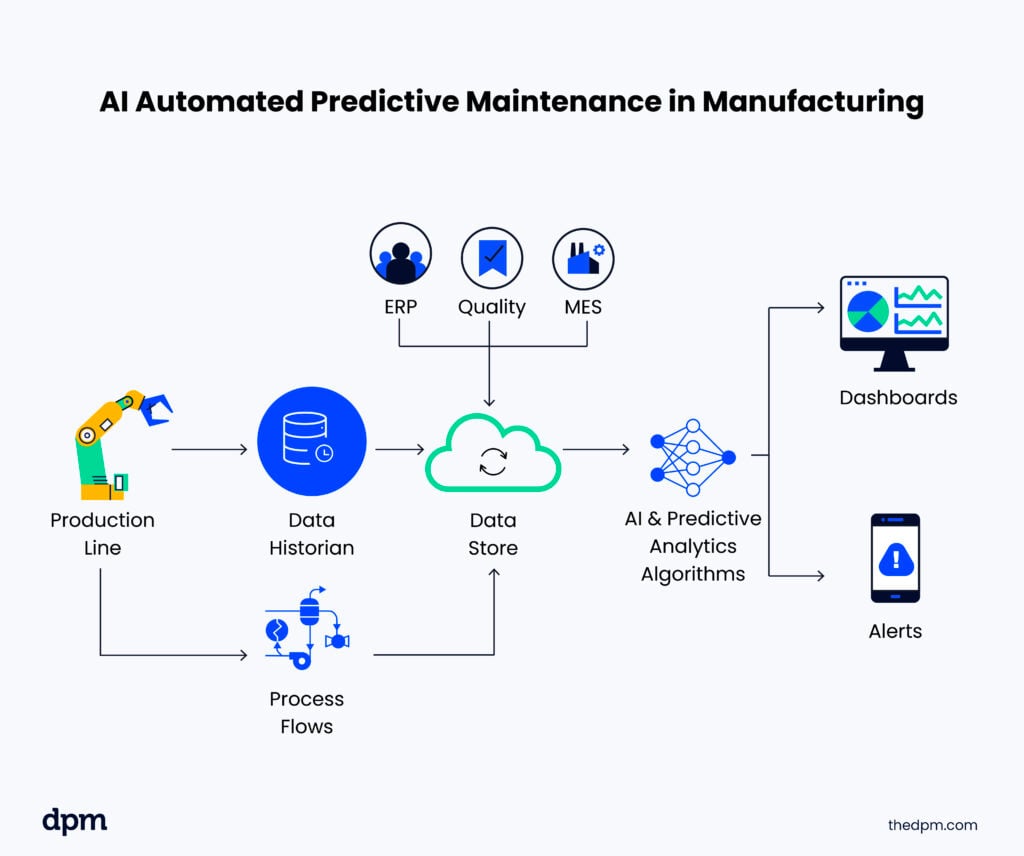

Tool category 1: GEO / AI visibility monitoring (what it measures)

These are the newest tools on the block. Platforms like Azoma or Otterly.ai typically work by simulating thousands of prompts (digital twins) to see how LLMs respond. They answer questions like: “If a user asks for top accounting software, is my brand listed?” If you are in a crowded market, this data is invaluable. However, for smaller local businesses, manual checking might suffice for now.
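To make the “is my brand listed?” idea concrete, here is a minimal sketch of a share-of-voice calculation over a batch of saved AI answers. It is not how Azoma or Otterly.ai compute their metrics (I don’t know their internals); the function name and the brand names `AcmeBooks` and `LedgerLy` are made up for illustration, and the responses are assumed to be text you have already collected.

```python
import re
from collections import Counter


def share_of_voice(responses: list[str], brands: list[str]) -> dict[str, float]:
    """Fraction of responses that mention each brand at least once.

    Matching is case-insensitive and on whole words, so "acmebooks"
    counts for "AcmeBooks" but "AcmeBooksPro" does not.
    """
    counts: Counter = Counter()
    for text in responses:
        for brand in brands:
            pattern = rf"\b{re.escape(brand)}\b"
            if re.search(pattern, text, flags=re.IGNORECASE):
                counts[brand] += 1
    total = len(responses)
    return {b: (counts[b] / total if total else 0.0) for b in brands}


if __name__ == "__main__":
    # Hypothetical answers to "What is the top accounting software?"
    saved_answers = [
        "Top picks: AcmeBooks and LedgerLy.",
        "I'd suggest acmebooks for small teams.",
        "Consider a spreadsheet first.",
        "Many firms hire an accountant instead.",
    ]
    print(share_of_voice(saved_answers, ["AcmeBooks", "LedgerLy"]))
```

A simple mention count like this says nothing about sentiment or ranking position within the answer, which is part of what the dedicated tools add.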

Tool category 2: traditional SEO platforms with AI visibility features

The giants like Semrush and Ahrefs are adapting quickly. They are integrating “AI visibility” metrics into their existing dashboards. If you already pay for one of these, check their beta features before buying a standalone GEO tool. I still rely on these for technical audits and backlink data—foundational elements that LLMs still use to judge authority.

Tool category 3: content optimization + briefing (from SERP to outline to score)

Tools like Surfer, Frase, and Clearscope analyze top-ranking pages to tell you which topics and terms to cover. A word of caution: don’t optimize blindly to the score. I have seen writers stuff irrelevant keywords just to turn a bar green. Use these tools to identify gaps in your topic coverage, not as a replacement for editorial judgment.

Decision table: which tools to pick based on your goal

- Goal: Improve existing rankings? → Use Content Optimization tools (Surfer/Clearscope).

- Goal: Monitor brand reputation in AI? → Use GEO Monitoring (Azoma/Otterly).

- Goal: Publish high-quality content at scale? → Use Content Intelligence/Generation tools.

- Goal: Fix technical crawl issues? → Use Traditional SEO Suites (Semrush/Ahrefs).

A beginner-friendly workflow to use AI content optimization tools

Here is the exact workflow I follow when I need a piece of content to perform in both Google Search and AI answer engines. This process ensures we aren’t just creating noise, but building assets that machines can cite.

Step 1: Start with questions, not keywords (LLM-style research)

Keyword volume doesn’t tell the whole story anymore. I start by gathering real questions. I look at “People Also Ask” boxes and Reddit threads, and I even ask ChatGPT: “What are the top 10 specific questions a [Job Title] has about [Topic]?” This gives me the conversational queries that actual humans use, and that LLMs are trained to answer. I save these prompts in a shared doc so my team doesn’t have to reinvent the wheel.

Step 2: Build a brief that an LLM (and a human) can follow

Never start writing without a brief. A good brief ensures you cover the entities and context the AI needs. My template always includes:

- User Intent: What is the reader trying to achieve?

- Primary Question: The one thing this article must answer.

- Entities to Include: Specific terminology (e.g., “API,” “SaaS,” “Churn Rate”).

- Required Sources: Primary data or studies we must cite.
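If your team keeps briefs in a shared repo or a script-friendly format, the four template fields above can be modeled as a small data structure that refuses to call a brief “done” while a section is empty. This is a sketch under my own naming; `ContentBrief` and `missing_fields` are illustrative, not part of any tool mentioned here.

```python
from dataclasses import dataclass, field


@dataclass
class ContentBrief:
    """The four sections of the brief template, as plain fields."""
    user_intent: str = ""
    primary_question: str = ""
    entities: list[str] = field(default_factory=list)
    required_sources: list[str] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        """Return the names of brief sections that are still empty."""
        missing = []
        if not self.user_intent.strip():
            missing.append("user_intent")
        if not self.primary_question.strip():
            missing.append("primary_question")
        if not self.entities:
            missing.append("entities")
        if not self.required_sources:
            missing.append("required_sources")
        return missing


if __name__ == "__main__":
    brief = ContentBrief(
        user_intent="Shortlist an AI content optimization tool",
        primary_question="Which tool category fits my goal?",
        entities=["API", "SaaS", "Churn Rate"],
    )
    print(brief.missing_fields())
```

Running the example flags the sources section, which is usually the one writers skip.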

Step 3: Draft fast, then edit like an editor (where AI writing fits)

Drafting is where you can leverage an AI article generator to move quickly. I treat AI drafts like work from a talented intern—they are helpful starting points, but they are not publish-ready. The AI can get words on the page and structure the arguments, but my job (and yours) is to infuse it with unique brand perspective, verify the facts, and ensure the tone is right. This human-in-the-loop approach is non-negotiable for quality.

Step 4: Optimize for extraction (headings, lists, definitions, TL;DR blocks)

Once the draft exists, I optimize it for extraction. I look for long, winding paragraphs and break them down. If we are defining a term, I ensure the definition is concise and immediately follows the heading. I add “TL;DR” summaries at the top of complex sections. This isn’t “dumbing it down”; it’s formatting for readability—both for busy humans and scraping bots.
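Hunting for long, winding paragraphs is the part of this step that is easiest to automate. The sketch below assumes the draft is plain text with blank lines between paragraphs; the 80-word threshold is an arbitrary starting point of mine, not a rule any search engine or LLM publishes.

```python
def long_paragraphs(text: str, max_words: int = 80) -> list[tuple[int, int]]:
    """Return (paragraph_index, word_count) for paragraphs longer than max_words.

    Paragraphs are separated by blank lines; indexes are zero-based.
    """
    paragraphs = [p for p in text.split("\n\n") if p.strip()]
    flagged = []
    for index, paragraph in enumerate(paragraphs):
        word_count = len(paragraph.split())
        if word_count > max_words:
            flagged.append((index, word_count))
    return flagged


if __name__ == "__main__":
    draft = "A short intro paragraph.\n\n" + "word " * 120
    for index, word_count in long_paragraphs(draft):
        print(f"Paragraph {index} has {word_count} words; consider splitting it.")
```

I would use the output as an editing to-do list; some long paragraphs are fine as they are, and that call stays with the editor.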

Step 5: Add credibility signals (sources, author info, real-world proof)

Trust is the currency of the AI era. LLMs are being tuned to prioritize factual accuracy. I make sure every claim is backed by a source. If I quote a statistic, I link to the original report. I also ensure the author bio is visible and links to LinkedIn or a profile page. This helps establish E-E-A-T.

Step 6: Publish and distribute for corroboration (SEO + PR alignment)

Publishing is just the start. For an LLM to “trust” a fact, it helps if that fact appears in multiple places. When we publish a key article using an automated blog generator, we also distribute snippets of it to social media, newsletters, and partner sites. This “corroboration” across the web signals to the AI that this information is widely accepted and accurate.

Step 7: Monitor results (rankings + AI visibility + conversions)

Finally, I measure impact. If you don’t have a dedicated GEO tool yet, start simple. Create a spreadsheet. Once a month, search for your core brand terms in ChatGPT and Google’s AI Overviews. Note the sentiment and accuracy. Are they getting your pricing right? Are they mentioning your competitors? This manual check takes 30 minutes but provides massive insight.
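If you prefer a CSV over a hand-built spreadsheet, the monthly check can be logged with a small script. The column names below are my own suggestion based on the questions in this step, and the rows are filled in by hand after each manual check; nothing here queries ChatGPT or Google.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class VisibilityCheck:
    """One manually recorded observation from the monthly check."""
    date: str               # ISO date of the check, e.g. "2026-01-05"
    engine: str             # e.g. "ChatGPT" or "Google AI Overviews"
    prompt: str             # the question you asked
    brand_mentioned: bool   # did the answer mention your brand?
    pricing_accurate: bool  # was your pricing stated correctly?
    competitors: str        # semicolon-separated names seen in the answer
    notes: str = ""


def to_csv(checks: list[VisibilityCheck]) -> str:
    """Serialize checks to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(VisibilityCheck)])
    writer.writeheader()
    for check in checks:
        writer.writerow(asdict(check))
    return buffer.getvalue()


def mention_rate(checks: list[VisibilityCheck]) -> float:
    """Share of recorded checks in which the brand was mentioned."""
    if not checks:
        return 0.0
    return sum(c.brand_mentioned for c in checks) / len(checks)


if __name__ == "__main__":
    log = [
        VisibilityCheck("2026-01-05", "ChatGPT", "Best CRM for a small dental practice?",
                        True, False, "RivalCRM", "Quoted last year's price"),
        VisibilityCheck("2026-01-05", "Google AI Overviews", "Best CRM for a small dental practice?",
                        False, False, "RivalCRM;OtherCRM"),
    ]
    print(to_csv(log))
    print(f"Mention rate: {mention_rate(log):.0%}")
```

The competitor names in the example are placeholders. Keeping the same prompts month to month is what makes the trend readable.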

Multimodal + technical upgrades that help LLM search

You don’t need to be a developer to make small technical upgrades that have a big payoff for AI visibility. LLMs are increasingly multimodal, meaning they can “see” images and read code structures.

Schema and structured data: what I implement first

Schema is code that helps machines understand your content. I prioritize implementing Article schema on every blog post. If we have a true FAQ section, I add FAQPage schema. For local businesses, Organization and LocalBusiness schema are critical. A common misconception is that schema guarantees a rich snippet—it doesn’t, but it does make your content unambiguous to the crawler.
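For readers who have never seen schema markup, here is a minimal sketch that builds Article and FAQPage JSON-LD with Python’s standard library. The property names (`headline`, `author`, `datePublished`, `mainEntity`, `acceptedAnswer`) come from the schema.org vocabulary; the helper function names, the example URL, and the author name are placeholders of mine. Your CMS or SEO plugin may already generate this for you, so check before adding it twice.

```python
import json


def article_jsonld(headline: str, author_name: str, date_published: str, url: str) -> dict:
    """Build a minimal schema.org Article object."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,  # ISO 8601 date
        "mainEntityOfPage": {"@type": "WebPage", "@id": url},
    }


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data: dict) -> str:
    """Wrap JSON-LD in a script tag for the page head.

    "<" is escaped as \\u003c so text inside the data cannot close the tag early.
    """
    payload = json.dumps(data, ensure_ascii=False).replace("<", "\\u003c")
    return f'<script type="application/ld+json">{payload}</script>'


if __name__ == "__main__":
    article = article_jsonld(
        "AI-ready content and AI content optimization tools",
        "Jane Doe",                      # placeholder author
        "2026-01-05",
        "https://example.com/blog/ai-ready-content",  # placeholder URL
    )
    print(as_script_tag(article))
```

Only mark up FAQ pairs that actually appear on the page, and run the output through a structured data validator before shipping.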

Multimodal signals: captions, visuals, and how summaries may use them

Don’t ignore your images. I ensure every chart or diagram has a descriptive caption and alt text. There is emerging research on “Caption Injection” suggesting that placing text captions closer to images helps multimodal models understand the context better. If you have a workflow, include a diagram. It can increase time-on-page for humans, and it gives the AI another data point to analyze.
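Checking alt text by eye gets tedious on long posts, so here is a small sketch that lists images with missing or empty alt attributes using Python’s built-in HTML parser. The class and function names are mine. Note one assumption: it flags empty `alt=""` too, which is technically valid for purely decorative images, so review the list rather than fixing everything blindly.

```python
from html.parser import HTMLParser


class ImageAltAudit(HTMLParser):
    """Collects the src of every <img> whose alt text is missing or blank."""

    def __init__(self) -> None:
        super().__init__()
        self.missing_alt: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attr_map = dict(attrs)
        alt = attr_map.get("alt")
        if alt is None or not alt.strip():
            self.missing_alt.append(attr_map.get("src") or "(no src)")


def images_missing_alt(html: str) -> list[str]:
    """Return the src values of images that need alt text."""
    audit = ImageAltAudit()
    audit.feed(html)
    audit.close()
    return audit.missing_alt


if __name__ == "__main__":
    page = (
        '<figure><img src="workflow.png" alt="Seven-step content workflow">'
        "<figcaption>The workflow from research to monitoring.</figcaption></figure>"
        '<img src="hero.png">'
    )
    print(images_missing_alt(page))
```

This only covers alt text; whether each image also has a nearby caption is still a manual check.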

Common mistakes with AI content optimization tools (and how I fix them)

- Optimizing to the Score: I’ve made this mistake: stuffing keywords to get a “90/100” score. It ruins readability. Fix: Use the score as a guide for topic coverage, not a rule for word choice.

- Publishing Thin AI Drafts: Shipping raw AI content without editing is a fast track to mediocrity. Fix: Always add unique examples or data that an AI wouldn’t know.

- Ignoring Internal Links: If you don’t link your own content, the AI won’t know how it connects. Fix: Add 3-5 internal links per post to relevant topics.

- Forgetting Sources: Claims without evidence are hallucinations waiting to happen. Fix: Link to primary data sources for every statistic.

- Neglecting Distribution: Content that lives only on your blog is harder for AI to corroborate. Fix: Share key insights across social and newsletters.
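The internal-link fix in the list above is simple to verify per post. This sketch counts links that resolve to the same host as the page, using only the standard library; the function name and example URLs are placeholders, and the 3-5 target is my own rule of thumb from this article, not a published standard.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collects the href of every <a> tag."""

    def __init__(self) -> None:
        super().__init__()
        self.hrefs: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def count_internal_links(html: str, page_url: str) -> int:
    """Count links pointing to the same host as page_url.

    Same-page anchors ("#section") and non-web schemes such as mailto:
    are ignored. Subdomains are treated as different hosts.
    """
    site_host = urlparse(page_url).netloc.lower()
    collector = LinkCollector()
    collector.feed(html)
    collector.close()

    internal = 0
    for href in collector.hrefs:
        if href.startswith("#"):
            continue
        parsed = urlparse(urljoin(page_url, href))
        if parsed.scheme in ("http", "https") and parsed.netloc.lower() == site_host:
            internal += 1
    return internal


if __name__ == "__main__":
    post_html = (
        '<a href="/pricing">Pricing</a>'
        '<a href="https://example.com/blog/geo">GEO guide</a>'
        '<a href="https://other.org/">External study</a>'
    )
    n = count_internal_links(post_html, "https://example.com/blog/post")
    print(f"{n} internal links (target: 3-5)")
```

A count says nothing about relevance, so I still read each link in context before publishing.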

Mini QA checklist before I hit publish

- Fact Check: Are all numbers and names accurate?

- Source Check: Are external links working and reputable?

- Scan Check: Can I understand the main points just by reading the headers?

- Schema Check: Did I validate the structured data?

- Human Touch: Did I add at least one personal insight or real-world example?

FAQs + summary: what to do next with AI content optimization tools

FAQ: What types of tools support AI content optimization?

Support comes from four main categories: GEO/Monitoring tools (like Azoma) that track visibility, SEO Suites (like Semrush) that handle technical foundations, Content Optimization tools (like Surfer or Clearscope) that score individual pages against search intent, and Content Intelligence tools (like Kalema) that assist in creating and optimizing the content itself.

FAQ: Why should businesses care about GEO now?

With AI Overviews taking up significant real estate on search result pages and zero-click searches rising, businesses that ignore GEO risk becoming invisible. It’s about protecting your brand’s presence in the conversation where decisions are increasingly being made.

FAQ: How can content creators optimize for AI answer engines?

Focus on structure and authority. Use clear headings, provide direct answers to questions, use lists and tables for data, cite high-quality sources, and ensure your technical schema is correct.

Summary & Next Steps

To recap, the shift to AI-ready content is about clarity and authority. GEO isn’t replacing SEO; it’s evolving it. We need to write for machines that read like humans—valuing structure, evidence, and clear entities.

If I were starting this week, here is exactly what I would do:

- Audit one key post: Take your highest-traffic article and rewrite the headers and definitions to be “extraction-ready.”

- Build a brief template: Create a standard document that forces you to define user intent and required entities before writing.

- Start a “Digital Twin” check: Once a month, ask ChatGPT questions about your brand and products to see what it says.

- Add Schema: Ensure your top pages have valid Article and Organization schema.

The tools are there to help us, but the strategy—the commitment to quality and clarity—starts with you.