The 2026 Leaderboard: The best rank trackers for LLM search (and what “accuracy” really means)

Introduction: Why I’m tracking LLM search visibility in 2026 (and why you probably should, too)

Last month, I ran a simple experiment. I asked ChatGPT the exact same buying question, “best enterprise CRM for data privacy,” ten times in a row, clearing the context between each run. The result? My client’s brand appeared in the answer four times. A competitor appeared six times. In three instances, the answer was completely different.

If you are coming from traditional SEO, this variability is terrifying. We are used to a world where rank #3 is rank #3. But in 2026, with Generative Engine Optimization (GEO) taking center stage, we are dealing with probabilistic systems, not static lists. If you aren’t measuring this volatility, you aren’t seeing the whole picture.

This article isn’t about “hacking” AI. It’s about establishing a rigorous baseline. I’m going to share the leaderboard of tools I trust, my personal framework for defining “accuracy,” and how to turn that data into a workflow. Because once you have the data, you need an execution layer—whether that’s your content team manually updating pages or using an AI SEO tool like Kalema to operationalize those insights into better content.

Here is what works, what doesn’t, and where the market stands right now.

What makes rank tracking for LLM search different from traditional SEO rank tracking?

To understand the tools, you have to understand the mechanism. Traditional rank tracking is like checking a billboard; you drive by, and it’s either there or it isn’t. LLM visibility tracking is more like listening to a crowded room to hear how often your name is mentioned.

The core differences:

- Deterministic vs. Probabilistic: Google Search (mostly) delivers a fixed list of blue links. LLMs generate answers word-by-word based on probability, meaning the output can change based on “temperature” settings and slight prompt variations.

- Position vs. Citation: You don’t usually “rank #1” in an AI answer. You are either cited as a source, recommended in a list, or mentioned in the text.

- Single Check vs. Sampling: Because of the variability I mentioned earlier, checking once is statistically useless. Credible tools run the same prompt multiple times (sampling) and average the results to give you a “visibility score.”

The new “units” of measurement: mentions, citations, and answer real estate

When I look at reports, I’m not looking for a ranking number. I’m looking for share of voice. Here are the metrics that actually impact business decisions:

- Citation Rate: In a sample of 10 or 100 runs, in what percentage of runs is your URL cited as a source? This is the closest proxy to “ranking.”

- Share of Voice (SoV): How much text real estate does your brand occupy compared to competitors?

- Sentiment: Is the AI recommending you, or just listing you? Tools now analyze the adjectives used around your brand name (e.g., “expensive,” “reliable,” “industry standard”).
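To make these units concrete, here is a minimal Python sketch of how mention rate, citation rate, and share of voice could be computed from a batch of sampled answers. The `SampledAnswer` structure, the function names, and the brand names in the usage note are my own illustrative assumptions, not any tracker's actual data model. Share of voice is approximated here by counting brand mentions, which is only a rough stand-in for "text real estate."

```python
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class SampledAnswer:
    """One run of one prompt: the answer text plus the URLs it cited."""
    text: str
    cited_urls: list[str] = field(default_factory=list)


def _cites_domain(url: str, domain: str) -> bool:
    """True if the URL's host is the domain itself or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return host == domain or host.endswith("." + domain)


def mention_rate(answers: list[SampledAnswer], brand: str) -> float:
    """Fraction of runs whose answer text mentions the brand (case-insensitive)."""
    if not answers:
        return 0.0
    hits = sum(brand.lower() in a.text.lower() for a in answers)
    return hits / len(answers)


def citation_rate(answers: list[SampledAnswer], domain: str) -> float:
    """Fraction of runs that cite at least one URL on the given domain."""
    if not answers:
        return 0.0
    hits = sum(any(_cites_domain(u, domain) for u in a.cited_urls) for a in answers)
    return hits / len(answers)


def share_of_voice(answers: list[SampledAnswer], brands: list[str]) -> dict[str, float]:
    """Each brand's share of all brand mentions counted across the sample."""
    counts = {b: sum(a.text.lower().count(b.lower()) for a in answers) for b in brands}
    total = sum(counts.values())
    return {b: (c / total if total else 0.0) for b, c in counts.items()}
```

For example, if a hypothetical "AcmeCRM" is mentioned in 2 of 4 sampled answers and cited in 1 of them, `mention_rate` returns 0.5 and `citation_rate` returns 0.25. Real tools likely weight position and length as well, so treat this as a floor for what a "visibility score" involves.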

Which LLM platforms are most commonly supported (and what that means for US marketers)

Not all tools track all engines. Your choice should depend on where your customers actually search. Currently, the landscape covers:

- The Big Three: ChatGPT (OpenAI), Gemini (Google), and Claude (Anthropic).

- Search-Native AI: Google AI Overviews (formerly SGE) and Perplexity.

- Open Models: Llama (Meta) and Mistral.

(If I had to narrow it down today, I’d start with Google AI Overviews for volume, then add ChatGPT or Perplexity for high-intent B2B research.)

My accuracy framework for choosing the best rank trackers for LLM search

I’ve learned the hard way that more data doesn’t fix bad prompts. You can spend thousands on enterprise tracking, but if your methodology is flawed, you’re just tracking noise. Before we look at the specific tools, here is the checklist I use to evaluate them. This is the “why” behind the leaderboard.

| Criterion | Why it matters | What to look for |
|---|---|---|
| Sampling Depth | LLMs are variable. One check is luck; ten checks is a trend. | Transparency. Does the tool disclose iterations? (Target 5–20+ per prompt). |
| Prompt Flexibility | Real users don’t just search keywords; they ask complex questions. | Ability to input full natural language questions, not just keywords. |
| Citation Transparency | You need to know exactly which URL is driving the mention. | Direct links to the source URL cited in the answer. |
| Historical Logging | You need to prove ROI over time. | Archives of the actual AI text responses, not just a score. |

Step 1: Build a prompt set that mirrors real customer intent (not SEO keywords)

Don’t just upload your keyword list. Translate keywords into questions. Here is the prompt template I use:

- Informational: “How do I [solve problem] with [software category]?”

- Commercial Investigation: “Best [product category] for [specific persona] in 2026.”

- Comparison: “[Competitor A] vs [My Brand] vs [Competitor B] for enterprise.”

- Transactional: “Price of [My Brand] implementation.”

- Brand Navigational: “Is [My Brand] legit?”
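The template list above can be turned into a small prompt generator. This is a sketch in Python; the dictionary keys, placeholder names, and the `build_prompts` helper are hypothetical names I chose for illustration, and the wording of each template is copied from the list.

```python
# Templates mirror the five intent types above; placeholder names are illustrative.
PROMPT_TEMPLATES = {
    "informational": "How do I {problem} with {software_category}?",
    "commercial": "Best {product_category} for {persona} in 2026.",
    "comparison": "{competitor_a} vs {brand} vs {competitor_b} for enterprise.",
    "transactional": "Price of {brand} implementation.",
    "navigational": "Is {brand} legit?",
}


def build_prompts(values: dict[str, str]) -> dict[str, str]:
    """Fill every template with the same set of values, keyed by intent.

    Raises KeyError if a placeholder has no value, so gaps surface early.
    """
    return {
        intent: template.format(**values)
        for intent, template in PROMPT_TEMPLATES.items()
    }
```

Keeping the templates in one place also makes it easier to freeze the wording once a baseline is running, since any change shows up as a diff to a single dictionary.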

Step 2: Decide your “statistical” settings: iterations, variants, and time windows

Consistency is key. Reported sampling depths vary wildly, from 5 to as many as 500 iterations per prompt depending on the tool. For most mid-market brands, you don’t need 500 iterations. You need a sample large enough to separate a real shift from run-to-run noise, at a cost your budget can carry. I usually aim for 10–20 iterations per prompt on a weekly cadence. This balances cost with data stability.
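One way to sanity-check an iteration count is to look at the uncertainty around a citation rate at that sample size. The sketch below uses the Wilson score interval for a proportion, which is a standard statistical technique I am bringing in for illustration, not something any of the tools here document. The function name and the 95% default (`z = 1.96`) are my assumptions.

```python
import math


def wilson_interval(hits: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a citation rate of hits/runs.

    z = 1.96 corresponds to roughly 95% confidence.
    """
    if runs <= 0:
        raise ValueError("runs must be positive")
    if not 0 <= hits <= runs:
        raise ValueError("hits must be between 0 and runs")
    p = hits / runs
    denom = 1 + z**2 / runs
    centre = (p + z**2 / (2 * runs)) / denom
    half_width = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)
```

With 4 citations in 10 runs, the interval spans roughly 17% to 69%. With 40 in 100 runs, it narrows to roughly 31% to 50%. That is why a small weekly sample is fine for spotting big swings but should not be used to argue about a few percentage points.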

Step 3: Choose metrics you can act on (visibility, citation rate, competitors, sentiment)

When my stakeholders ask “how are we doing?”, I don’t give them a raw dump of text. I track:

- Visibility Score: If it drops, I check technical access (robots.txt).

- Citation Rate: If it drops, I check content freshness.

- Negative Sentiment: If this spikes, I check third-party review sites, which LLMs heavily rely on.
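For the robots.txt check in the first bullet, Python's standard-library `urllib.robotparser` can tell you whether a given user agent is allowed to fetch a URL. The sketch below is a minimal version under my own assumptions: the `blocked_crawlers` helper is hypothetical, and the list of AI crawler user-agent tokens is illustrative, so verify the current tokens against each vendor's documentation before relying on it.

```python
from urllib.robotparser import RobotFileParser

# Assumed user-agent tokens for AI crawlers; confirm against vendor docs.
AI_CRAWLERS = ["GPTBot", "PerplexityBot", "ClaudeBot"]


def blocked_crawlers(robots_txt: str, url: str, agents: list[str] = AI_CRAWLERS) -> list[str]:
    """Return the agents that this robots.txt content forbids from fetching url."""
    parser = RobotFileParser()
    # parse() takes the file's lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]
```

Passing the text of your live robots.txt and a money-page URL returns the crawlers that are locked out. An empty list does not guarantee visibility, since firewalls and CDN bot rules can also block crawlers, but a non-empty list is a quick explanation for a sudden drop.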

The 2026 leaderboard: the best rank trackers for LLM search (ranked by accuracy signals)

This isn’t a pay-to-play list. This is a breakdown based on the accuracy signals I described above: sampling rigor, transparency, and usability. The market has split into three categories: specialized GEO tools (depth), SEO suites (breadth), and enterprise platforms (governance).

Quick comparison table (coverage, sampling, metrics, and who it’s for)

How to read this table: “Sampling” refers to how many times the tool runs a prompt to get an average score. If “Not disclosed,” treat the data as directional, not absolute.

| Tool | Key Platforms | Sampling/Iterations | Core Metrics | Best For |
|---|---|---|---|---|
| AI Search Watcher | ChatGPT, Gemini, Claude, Llama | Multi-run (Configurable) | Visibility %, Citations | SEO pros wanting pure visibility data |
| Peec AI | Perplexity, ChatGPT, AIO | High-volume sampling | Share of Voice, Sentiment | Brand reputation managers |
| SE Ranking | Google AIO, ChatGPT, Gemini | Daily updates (Single/Avg) | AIO presence, Snippet text | Teams wanting one SEO dashboard |
| Rankability | Major LLMs | Prompt-level testing | Content optim. + Visibility | Content teams |
| Evertune AI | All major LLMs | 1M+ responses/mo (vendor claim) | Recommendation frequency | Enterprise / Large Brands |

Specialized AI visibility (GEO-first) tools: when you need depth over breadth

These tools were built from the ground up for the probabilistic nature of AI. They generally offer better sampling controls.

AI Search Watcher (Mangools)

What I like: It’s straightforward. It tracks presence across multiple models (ChatGPT, Gemini, Claude) and focuses heavily on citations. They use multiple prompt executions to average out the noise.

Watch-outs: It’s a separate tool, so you can’t see it overlaid on your keyword rankings easily.

Best for: Dedicated SEOs who need rigorous data.

Peec AI & RankLens

What I like: These tools lean heavily into structured monitoring. RankLens, for instance, utilizes high-volume sampling to detect patterns. Peec AI excels at sentiment analytics—telling you not just that you appeared, but how the AI described you.

Watch-outs: Can be pricier per keyword due to the compute cost of sampling.

Best for: Brand managers and PR teams.

SEO suites adding AI visibility modules: best if you want one dashboard

If you are already paying for an SEO suite, checking their AI module is the logical first step. It’s often “good enough” for baselines, even if it lacks granular sampling controls.

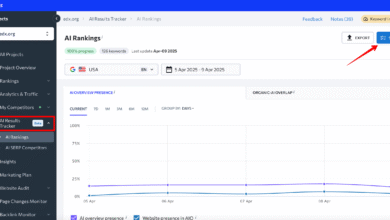

SE Ranking AI Visibility Tracker

What I like: It unifies ChatGPT and Google AI Overviews visibility with your standard rank tracking. The historical logs are fantastic—you can go back and see exactly what the AI said on a specific date.

Watch-outs: You have less control over the specific “prompt engineering” compared to specialized tools.

Best for: SMBs and Agencies who want consolidated reporting.

Surfer AI Tracker

What I like: Surfer integrates this directly into the content workflow. If you see you aren’t ranking, you can jump straight into optimization. It provides prompt-level insights and source transparency.

Watch-outs: Primarily focused on content optimization contexts.

Best for: Content-heavy teams using Surfer for writing.

Enterprise-grade monitoring: compliance, scale, and governance

For large brands (healthcare, finance), incorrect AI answers are a liability risk, not just an SEO problem.

Profound & Evertune AI

What I like: The scale is massive. Evertune AI claims to process over one million AI responses monthly to ensure statistical significance. Profound offers hallucination monitoring and compliance integrations, which is critical if an LLM starts inventing fake features for your product.

Watch-outs: Enterprise pricing and complexity. Overkill for a local business.

Best for: Fortune 1000 and regulated industries.

How I implement LLM visibility tracking in a real content workflow (beginner-friendly)

Tracking numbers is satisfying, but it doesn’t move the needle. You have to connect the data to an operational cadence. Here is how I structure my weeks. (I use Kalema’s AI article generator during the “Action” phase to speed up the creation of optimized briefs based on these insights).

Step-by-step workflow (from prompt library to weekly reporting)

- Day 1: Build the Prompt Library. Identify your top 20 money pages. Create 3 questions per page (Informational, Comparative, Transactional).

- Day 2: Set Baseline. Run these prompts through your chosen tracker. Do not look at the data yet. Let it run for a week to stabilize.

- Day 3: Analyze Gaps. Look at the “Citations” column. Where are competitors cited but you aren’t? Open those competitor URLs. What do they have? (Usually: unique data, clear tables, or better schema).

- Day 4: Action / Update. Refresh your content. Add the missing data points. If you are managing a large site, this is where an automated blog generator helps keep the publishing cadence consistent while you focus on the strategy.

- Day 5: Annotate & Wait. Mark the date of the update in your tracker. AI models don’t update instantly. Re-check in 2–4 weeks.
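The Day 3 gap analysis boils down to a set comparison: for each prompt, which domains were cited when yours was not? Here is a minimal Python sketch, assuming you have exported the cited domains per prompt from your tracker into a dictionary. The `citation_gaps` name and the input shape are my own assumptions, not a specific tool's export format.

```python
def citation_gaps(cited_domains: dict[str, set[str]], our_domain: str) -> dict[str, list[str]]:
    """Map each prompt where our domain was never cited to the domains that were.

    Prompts with no citations at all are skipped, since there is nothing to study.
    """
    gaps: dict[str, list[str]] = {}
    for prompt, domains in cited_domains.items():
        if domains and our_domain not in domains:
            gaps[prompt] = sorted(domains)
    return gaps
```

The output is a short reading list: the prompts to prioritize and the competitor or third-party pages to open first when looking for the data, tables, or schema you are missing.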

Where classic SEO still matters: on-page, technical, and entity signals that LLMs cite

There is a misconception that GEO replaces SEO. It doesn’t. LLMs need structured data to understand your content. In my experience, technical SEO is the bridge to AI visibility.

I’ve seen citations stabilize simply by tightening up a definition section and wrapping it in `FAQPage` schema. Why? Because it makes the information machine-readable. Ensure your “About” page is robust, your entity signals (who you are, what you do) are clear, and your site speed allows for fast crawling. You are essentially trying to make your content the easiest, most authoritative citation for the AI to grab.
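As a rough illustration of what `FAQPage` markup looks like, this Python sketch builds the JSON-LD from question and answer pairs using the schema.org `FAQPage`, `Question`, and `Answer` types. The `faq_jsonld` helper is a hypothetical name, and the sketch assumes you will paste the output into a `<script type="application/ld+json">` tag on the page yourself.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    # ensure_ascii=False keeps curly quotes and accents readable in the output.
    return json.dumps(data, indent=2, ensure_ascii=False)
```

Whether a given engine actually reads this markup is not something I can verify from the outside, so treat it as one way of making a definition unambiguous rather than a guaranteed citation lever.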

Common mistakes I see with LLM rank tracking (and how to fix them)

I’ve audited enough setups to know that most people treat AI tracking like Google tracking. That’s a recipe for frustration.

Mistake #1: Expecting a stable ‘position’ like classic Google rankings

The Fix: Stop asking “What rank are we?” and start asking “What is our probability of citation?” Embrace the percentage, not the integer.

Mistake #2: Running too few samples (or changing prompts every week)

The Fix: Start with 10 prompts x 10 runs. Do not change the wording of your prompts for at least a quarter. If you change the input, you break the baseline.

Mistake #3: Tracking visibility but not linking it to a content action plan

The Fix: If a metric drops, have a playbook ready. For me, if visibility drops, I audit the competitor gaining share. What specific sources are they referencing? Usually, it’s a third-party review or a fresh statistic I missed.

Mistake #4: Ignoring platform differences (ChatGPT vs AI Overviews vs Perplexity)

The Fix: ChatGPT favors authoritative, conversational synthesis. Perplexity favors recent news and direct citations. Customize your content updates based on which platform matters most to you.

Mistake #5: Not keeping historical logs and annotations

The Fix: Use annotations. When you launch a PR campaign or update a product page, note it. This is the only way to prove cause-and-effect to your boss later.
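If your tracker lets you export weekly citation rates, an annotation date is enough to compute a simple before-and-after comparison. The sketch below is a minimal Python version; the `before_after` name and the dictionary input are assumptions for illustration. It shows correlation around a date, not proof of causation, and with only a few readings the difference can easily be noise.

```python
from datetime import date
from statistics import mean


def before_after(weekly_rates: dict[date, float], change_date: date) -> tuple[float, float]:
    """Average citation rate before vs. on or after an annotated change date."""
    before = [rate for day, rate in weekly_rates.items() if day < change_date]
    after = [rate for day, rate in weekly_rates.items() if day >= change_date]
    if not before or not after:
        raise ValueError("need at least one reading on each side of the annotation")
    return mean(before), mean(after)
```

Because models do not pick up changes instantly, it may be worth leaving the first couple of weeks after the change out of the "after" window when you present the numbers.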

FAQs about LLM rank trackers (accuracy, platforms, and whether it’s worth it)

What makes rank tracking for LLM search different from traditional SEO rank tracking?

Traditional tracking monitors a static list of links. LLM tracking monitors a probabilistic conversation. It measures how often your brand appears in generated text across multiple attempts, rather than a fixed position on a page.

Which LLM platforms are most commonly supported by visibility tools?

Most tools cover ChatGPT, Gemini, and Google AI Overviews. Advanced tools also cover Claude, Perplexity, and open-source models like Llama. If you are just starting, focus on where your traffic comes from—usually Google AI Overviews or ChatGPT.

How do tools ensure accuracy given AI variability?

They use repeated sampling. By running the same query 5 to 500 times, they calculate an average “visibility score.” It’s not about perfect repeatability; it’s about statistical confidence.

Should brands start tracking AI visibility now?

Yes. With AI Overviews appearing in over 50% of US search results by mid-2025, the shift is already here. Establishing a baseline now gives you a competitive advantage before the market fully matures.

Conclusion: My 3 takeaways + next steps to start tracking this week

We are in the early days of GEO, but the winners are already being decided by who adapts their measurement strategy first.

The Recap:

- Accuracy = Sampling. If a tool doesn’t check multiple times, it’s guessing.

- Context is King. Don’t just track keywords; track questions your customers actually ask.

- Action over Vanity. Only track metrics that you can influence with content updates or technical SEO.

Your Next Steps:

- Pick one platform to start (e.g., AI Search Watcher or your current SEO suite’s module).

- Build a 20-prompt library based on high-intent buyer questions.

- Run a baseline report this week and don’t touch the content yet.

- Review the data next week to find your first “citation gap” to fix.