Generative Search KPIs: GEO Metrics That Prove ROI

Introduction: Measuring generative search KPIs (and why I built this beginner guide)

I still remember the first time I sat in a Monday morning performance review where the numbers didn’t add up. Our impressions were steady, but clicks were flatlining. Yet, anecdotal evidence suggested our brand was everywhere—prospects were mentioning they saw us in AI summaries, and our name was popping up in ChatGPT answers during sales demos.

We were visible, but our traditional dashboard said we were invisible. That’s the paradox of generative search (enabled by Google AI Overviews, ChatGPT, Bing Copilot, and Perplexity). It fundamentally changes what “visibility” means. If an AI reads your content and answers the user’s question perfectly while citing your brand, you’ve won the influence battle, even if you didn’t get the click.

I wrote this guide for marketers, specifically intermediate teams in the US, who need to prove the value of this new visibility. By the end of this article, you’ll be able to set up a simple KPI dashboard, define a baseline for GEO (Generative Engine Optimization) metrics, and report on AI citations with the same confidence you have in organic traffic.

Why traditional SEO metrics break in generative search (and what replaces them)

For two decades, we’ve relied on a linear formula: Ranking → Click → Conversion. But in an AI-mediated search environment, that chain is broken. As of mid-2025, data suggests AI Overviews appear in over 50% of Google search results. Furthermore, industry reports indicate that nearly 60% of searches now end without a click.

Why? Because the AI satisfies the user intent directly on the SERP (Search Engine Results Page). If you are judging your content’s performance solely by click-through rate (CTR) or session duration, you are likely underreporting your actual market presence. You might be the primary source for an answer that thousands of people read, yet your analytics show zero sessions.

We need to shift our lens from “traffic” to “influence.” The new funnel looks like this:

- Retrieval: Is your content chunk found by the AI?

- Citation: Is your brand credited as the source?

- Influence: Did the user trust the answer provided?

- Conversion: Does that trust eventually lead to a branded search or direct visit?

The bottom line: In the era of zero-click searches, visibility is about being used, cited, and synthesized—not just visited.

What success looks like now: being retrieved, cited, and trusted

Think of it this way: Being ranked #1 is like having a billboard on a busy highway. Being cited in an AI answer is like being quoted in a front-page news story. You influence the narrative, establish authority, and frame the problem for the user. Even if the reader doesn’t visit your website immediately, you have successfully entered their consideration set.

The minimum measurement shift every beginner team can make

You don’t need to overhaul your entire reporting stack today. Here is the minimum viable shift to get started:

- Audit your top 20 queries: Manually check them in Google AI Overviews and ChatGPT.

- Track citations, not just ranks: Log how often your URL appears as a source bubble or footnote.

- Tag outcomes: Add a “How did you hear about us?” field to your forms to capture “AI Search” or “ChatGPT” responses.
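If that form field is free text, the answers need bucketing before they are useful in a report. Here is a minimal sketch, assuming a hand-picked keyword list; `AI_KEYWORDS` and `bucket_source` are illustrative names I made up, not part of any CRM or analytics API.

```python
# Hypothetical keyword list; extend it with whatever your prospects actually type.
AI_KEYWORDS = ("chatgpt", "perplexity", "copilot", "ai overview", "ai search")


def bucket_source(response: str) -> str:
    """Bucket a free-text 'How did you hear about us?' answer."""
    text = response.strip().lower()
    if any(keyword in text for keyword in AI_KEYWORDS):
        return "AI Search"
    return "Other"


responses = ["Saw you in a ChatGPT answer", "Google AI Overview", "A friend told me"]
print([bucket_source(r) for r in responses])
```

Simple substring matching will miss misspellings and unusual phrasing, so it is worth skimming the "Other" bucket by hand now and then.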

The essential generative search KPIs I track (the core scoreboard)

When I’m building a report for executives, I strip away the vanity metrics and focus on what proves market presence. Below is the core scoreboard I use. Note: The benchmark ranges are based on observational data across different verticals.

| KPI | What it measures | Why it matters | How to measure (Beginner) | Cadence |
|---|---|---|---|---|
| AI Citation Frequency | % of times your brand/URL is cited in AI responses for a query set. | The foundational metric of GEO. No citation = no visibility. | Manual logging or scraping tools. | Weekly |
| Share of Voice (SOV) | Your citations vs. total citations of all competitors. | Contextualizes your visibility against the market. | Spreadsheet formula (see below). | Monthly |
| Answer Inclusion Rate | How often your content forms the basis of the synthesized text. | Indicates high trust; you own the narrative. | Qualitative check: “Did the AI use my specific stats/points?” | Monthly |
| Brand Mention Rate | Mentions of your brand name without a direct link/citation bubble. | Builds subconscious brand awareness. | Text search (Ctrl+F) in AI responses. | Weekly |

Benchmarks: Healthcare sites with high trust signals often see 40–60% citation rates. B2B SaaS typically averages 15–30%. New sites or those with weak authority often struggle to break 5%.

AI citation frequency (the new ‘ranking’)

This is your bread and butter. AI citation frequency tracks how often your content is cited by AI-generated search results. To track this, I take a list of high-intent queries (e.g., “best CRM for small business” or “how to calculate ROI”) and plug them into the target platform. I look for my URL in the citation carousel or the footnotes. If it’s there, it’s a win. I usually grab a screenshot for the monthly report because seeing is believing for stakeholders.
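The arithmetic behind this metric is small enough to script. The sketch below assumes each manual check is logged as one record; the `QueryCheck` class and `citation_frequency` function are hypothetical names for illustration, and the sample rows are made up.

```python
from dataclasses import dataclass


@dataclass
class QueryCheck:
    query: str
    platform: str
    cited: bool  # True if our URL appeared in the citation carousel or footnotes


def citation_frequency(checks: list[QueryCheck]) -> float:
    """Return the percentage of logged checks in which we were cited."""
    if not checks:
        return 0.0
    cited_count = sum(1 for check in checks if check.cited)
    return 100.0 * cited_count / len(checks)


# Made-up sample log: two queries checked on two platforms.
checks = [
    QueryCheck("best CRM for small business", "Google AI Overviews", True),
    QueryCheck("how to calculate ROI", "Google AI Overviews", False),
    QueryCheck("best CRM for small business", "ChatGPT", True),
    QueryCheck("how to calculate ROI", "ChatGPT", False),
]
print(f"{citation_frequency(checks):.1f}%")  # 50.0%
```

Filtering `checks` by platform before calling the function gives a per-platform rate, which is often more useful than one blended number.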

Share of Voice (SOV) in AI search (visibility vs competitors)

Execs love this metric because it answers the question, “Are we beating Competitor X?” Even if your traffic is flat, increasing your SOV means you are crowding competitors out of the AI answer.

The Formula:

SOV = My Total Citations / (My Total Citations + Total Citations of Top 3 Competitors) × 100

It’s simple math, but it tells a powerful story about competitive visibility.
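For anyone who prefers a script to a spreadsheet cell, here is a minimal sketch of the same calculation. The function name `share_of_voice` and the sample counts are my own illustration; the denominator is your citations plus the citations of the competitors you track.

```python
def share_of_voice(my_citations: int, competitor_citations: list[int]) -> float:
    """Return my citations as a percentage of all tracked citations."""
    total = my_citations + sum(competitor_citations)
    if total == 0:
        return 0.0  # nobody was cited, so there is no voice to share
    return 100.0 * my_citations / total


# Made-up counts: 3 citations for us, then 4, 2, and 1 for the top 3 competitors.
print(share_of_voice(3, [4, 2, 1]))  # 30.0
```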

Answer inclusion rate + brand mention rate (am I in the synthesized answer?)

There is a difference between being listed in a footnote and being the answer. For a query like “best payroll software,” being in a list is good. For a query like “how to file an LLC in Texas,” having the AI synthesize your step-by-step guide is better. I track answer inclusion rate to see if our content is actually shaping the synthesized answer on the topic.
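Telling a linked citation apart from an unlinked brand mention can be partly automated once you have copied the answer text and its source URLs into your log. The sketch below is one way to do it under that assumption; `classify_appearance` and the example brand and domain are hypothetical, and judging true answer inclusion still needs a human read.

```python
import re
from urllib.parse import urlparse


def classify_appearance(answer_text: str, source_urls: list[str],
                        brand: str, domain: str) -> str:
    """Return 'citation' if our domain is a listed source, 'mention' if only
    the brand name appears in the text, otherwise 'none'."""
    for url in source_urls:
        host = (urlparse(url).hostname or "").lower()
        if host == domain or host.endswith("." + domain):
            return "citation"
    if re.search(rf"\b{re.escape(brand)}\b", answer_text, flags=re.IGNORECASE):
        return "mention"
    return "none"


# Hypothetical brand and domain, for illustration only.
answer = "Acme Payroll is a popular choice for small teams."
print(classify_appearance(answer, ["https://www.acmepayroll.example/pricing"],
                          "Acme Payroll", "acmepayroll.example"))  # citation
print(classify_appearance(answer, ["https://other.example/review"],
                          "Acme Payroll", "acmepayroll.example"))  # mention
```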

Advanced GEO metrics (when you’re ready to go beyond the basics)

Once you have the basics down, you might hear terms like RAG (Retrieval-Augmented Generation) thrown around. Don’t panic—you don’t need to be a data scientist to understand the advanced metrics driving retrieval-based search mechanics.

Quick Glossary:

Chunk: A small section of text (like a paragraph) the AI pulls from your page.

Embedding: A numerical representation of the meaning of that text.

Vector Index: The library where the AI stores these meanings.

Chunk retrieval frequency (are my sections being pulled into answers?)

Modern search doesn’t always retrieve a whole page; it retrieves a specific chunk that answers the query. I try to map specific sections of my content (like a pricing table or an FAQ block) to specific queries. If the AI pulls your pricing table but not your competitor’s, your content chunking strategy is working.

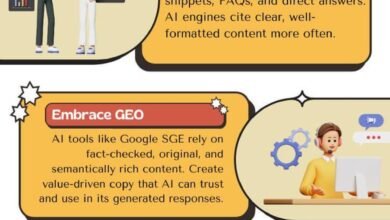

Embedding relevance + vector index presence (do I match the model’s meaning?)

You can’t see the vector index directly without advanced tools, but you can approximate embedding relevance. Does your content use the entities and language that the AI associates with the topic? If you write about “fiscal planning” but users ask about “budgeting,” and the AI prefers “budgeting,” your semantic relevance score is low. I improve this by ensuring clear, entity-rich headers and structured data.
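A quick way to spot a vocabulary mismatch like this is to compare word overlap between a query and your heading. To be clear, the sketch below is a bag-of-words cosine similarity, a rough lexical stand-in that I am using for illustration. It is not a real embedding and will not recognize synonyms, which is exactly why it flags the “fiscal planning” versus “budgeting” gap.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def lexical_similarity(a: str, b: str) -> float:
    """Cosine similarity between word-count vectors (0.0 = no shared words)."""
    va, vb = Counter(tokenize(a)), Counter(tokenize(b))
    dot = sum(va[token] * vb[token] for token in va.keys() & vb.keys())
    norm = (math.sqrt(sum(v * v for v in va.values()))
            * math.sqrt(sum(v * v for v in vb.values())))
    return dot / norm if norm else 0.0


query = "budgeting tips for small business"
print(lexical_similarity(query, "Fiscal planning guide"))             # 0.0
print(lexical_similarity(query, "Budgeting tips for small business"))  # close to 1.0
```

A score near zero for a heading that should match the query is a hint to rework the wording, not proof of how any AI system scores the page.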

How I measure generative search KPIs: a step-by-step workflow (beginner-friendly)

When I first started trying to track this, I wasted hours jumping between tabs. Now, I use a streamlined workflow. If you are creating a lot of content, you might eventually use an AI article generator to help structure pages for retrieval, but first, you need to know what to measure.

Step 1: Pick a tight query set that matches business intent

Don’t try to track 1,000 keywords. Pick 20–50 that matter. I mix them up:

- Informational (TOFU): “What is [Industry Term]?”

- Comparative (MOFU): “[Brand A] vs [Brand B]”

- Transactional (BOFU): “Pricing for [Service]”

- Troubleshooting: “How to fix [Problem]”

For example, if I were running SEO for a coffee roaster, I’d track “best espresso beans for beginners” (MOFU) and “how to grind beans for French press” (Troubleshooting).

Step 2: Track across platforms (Google AI Overviews, ChatGPT, Perplexity, Bing)

Google isn’t the only game in town anymore. Google AI Overviews are huge, but tech-savvy buyers often start with ChatGPT or Perplexity. I check my core query set weekly on Google and monthly on the others. Keep in mind: results vary by user location and personalization, so consistency in your browser/prompt setup is key to reducing noise.

Step 3: Log citations, mentions, and answer inclusion (with proof)

I maintain a “Query Tracking Sheet.” It has columns for: Date, Query, Platform, Did we appear? (Y/N), Citation Type (Link/Mention), and Screenshot URL.

Trust me on the screenshots. When you report a 20% increase in AI visibility, stakeholders will want to see what that looks like. It’s your insurance policy against skepticism.
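If you would rather keep the sheet as a CSV file than a spreadsheet, the columns above map directly onto Python's standard `csv` module. This is a minimal sketch; the `append_rows` helper, the sample row, and the screenshot URL are placeholders I invented for illustration.

```python
import csv
import io
from datetime import date

# Column names taken from the Query Tracking Sheet described above.
FIELDS = ["Date", "Query", "Platform", "Did we appear?",
          "Citation Type", "Screenshot URL"]


def append_rows(handle, rows: list[dict], write_header: bool = False) -> None:
    """Write tracking rows to an open text handle in CSV format."""
    writer = csv.DictWriter(handle, fieldnames=FIELDS)
    if write_header:
        writer.writeheader()
    for row in rows:
        writer.writerow(row)


# An in-memory buffer keeps the example self-contained; in practice you could use
# open("query_tracking.csv", "a", newline="") with a file name of your choosing.
buffer = io.StringIO()
append_rows(buffer, [{
    "Date": date(2025, 6, 6).isoformat(),
    "Query": "best espresso beans for beginners",
    "Platform": "Google AI Overviews",
    "Did we appear?": "Y",
    "Citation Type": "Link",
    "Screenshot URL": "https://example.com/screenshots/001.png",
}], write_header=True)
print(buffer.getvalue())
```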

Step 4: Connect AI visibility to conversions (UTMs, assisted conversions, CRM)

This is the hardest part. Attribution in generative search is imperfect. However, you can triangulate value. I look for:

- Branded Search Lift: Are more people searching for our brand name after we secured high AI visibility?

- Direct Traffic Trends: Is direct traffic growing alongside our citation rate?

- Self-Reported Attribution: As mentioned, asking “How did you hear about us?” in the CRM often reveals “ChatGPT” as a source long before analytics does.
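One rough way to check the first two signals is to line up your citation rate against branded search volume over the same periods and compute a Pearson correlation. The sketch below uses made-up weekly numbers, and `pearson` is a hand-rolled helper for illustration. A high value suggests the two series move together; it does not prove that citations caused the lift.

```python
import math
from typing import Optional


def pearson(xs: list[float], ys: list[float]) -> Optional[float]:
    """Pearson correlation of two equal-length series.
    Returns None when either series is constant (correlation is undefined)."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length series with at least 2 points")
    mean_x, mean_y = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        return None
    return cov / (spread_x * spread_y)


# Made-up weekly data: citation rate (%) and branded search volume.
citation_rate = [10, 15, 20, 30]
branded_searches = [100, 120, 150, 210]
r = pearson(citation_rate, branded_searches)
print(f"correlation: {r:.2f}")
```

With only a handful of weeks the number is noisy, so I would treat it as a conversation starter rather than evidence.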

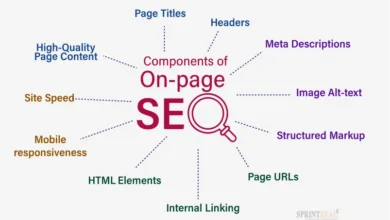

Step 5: Publish updates that increase citations (structure, schema, freshness)

Once you have a baseline, you optimize. I’ve seen that adding FAQ schema, citing external authoritative sources, and organizing content into clear H2s and H3s tends to help the AI parse the content better. Content freshness also matters: AI answers tend to favor recent data, so updating old stats can often win back a lost citation.
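For the FAQ schema piece, the markup is JSON-LD using schema.org's `FAQPage`, `Question`, and `Answer` types. Below is a minimal sketch that generates it from question and answer pairs; the `build_faq_jsonld` helper is a hypothetical name, and the sample pair is borrowed from the FAQ section of this guide.

```python
import json


def build_faq_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


faq_markup = build_faq_jsonld([
    ("What is AI citation frequency?",
     "The percentage of times your URL or brand is cited as a source in "
     "AI-generated responses for a specific set of keywords."),
])
# The JSON-LD goes inside a script tag in the page's HTML.
print(f'<script type="application/ld+json">\n{faq_markup}\n</script>')
```

Run the output through a structured data validator before publishing, since the answers in the markup should match the text visible on the page.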

Dashboard + reporting: turning generative search KPIs into a business story

Reporting on this can feel like the Wild West. To bring order to the chaos, I use a specific dashboard structure. When you are ready to scale this research and monitoring across hundreds of pages, using an AI SEO tool becomes necessary, but you can start with a spreadsheet.

My beginner dashboard template (what I track weekly vs monthly)

Weekly Tracker (The Pulse):

- Citation Frequency on Top 20 “Money Keywords”

- Brand Mention Rate in AI Overviews

- Any negative/hallucinated mentions (crisis prevention)

Monthly Deep Dive (The Strategy):

- Share of Voice (SOV) vs. Top 3 Competitors

- Answer Inclusion Rate qualitative review

- Correlation between Citation Rate and Branded Search Volume

How I tie AI visibility to ROI (traffic, leads, revenue, paid ad dependency)

Here is how I frame the ROI conversation. While these are examples from successful campaigns and not guarantees, they show the potential: Campaigns focusing on GEO have seen up to a 72% uplift in AI-sourced traffic and a 37% reduction in reliance on paid ads.

I explain it to leadership like this: “Citations are a leading indicator. If we are cited today, we build trust tomorrow, and we get the lead next month.” It takes time, but the revenue growth comes from capturing high-intent users who trust the AI’s recommendation.

Scaling content + publishing without losing quality (so KPIs actually improve)

The temptation when you see these metrics is to flood the zone with content. Don’t. AI models are getting better at detecting fluff. If you are using a bulk article generator to scale your topic clusters, you need strict content operations in place.

Quality gates I use before I publish (especially for YMYL topics)

I won’t ship a piece of content unless it passes these checks:

- Fact-Check Integrity: Are the statistics recent and sourced?

- Structure Validity: Are headers logical and chunkable?

- Schema Validation: Is the code clean and accurate?

- Internal Link Logic: Do we link to other relevant chunks?

- The “So What?” Test: Does this add value, or is it just noise?

This is especially critical for YMYL (Your Money or Your Life) topics where trust signals dictate visibility.

Common mistakes when tracking generative search KPIs (and how I fix them)

I’ll be honest—I messed this up when I started. I spent weeks tracking vanilla keywords that never triggered AI answers. Here are the pitfalls to avoid:

- Inconsistent Prompts: If you ask ChatGPT the question differently each week, your data is garbage. Fix: Save your exact prompts in your tracking sheet.

- Ignoring Competitors: Tracking only yourself tells you nothing about the market. Fix: Always log the top 3 cited URLs alongside your own.

- Confusing Citations with Mentions: A mention is text; a citation is a link/bubble. Fix: Track them separately; citations are worth more for traffic.

- No Baseline: You can’t prove growth if you don’t know where you started. Fix: Spend the first week just logging data before optimizing anything.

- Obsessing Over Tools: Buying expensive software before you have a process. Fix: Start with a spreadsheet. Process first, tools second.

Mistake patterns I see most often in small business teams

Small teams often think they need to track everything. They get overwhelmed and stop. The underlying mistake is a process gap: it’s easy to miss a week of manual tracking, and then your trendline breaks. The fix is to make the “Friday Audit” a non-negotiable 30-minute calendar block.

FAQs about generative search KPIs

Why are traditional SEO metrics insufficient for generative search?

Traditional metrics like rankings and CTR measure traffic to a website. Generative search often answers the user’s query on the results page (zero-click), meaning you can be highly visible and influential without receiving a click or a session.

What is AI citation frequency?

AI citation frequency is the percentage of times your URL or brand is cited as a source in AI-generated responses for a specific set of keywords. It is the primary metric for measuring visibility in platforms like Google AI Overviews and Perplexity.

What is Share of Voice in AI search?

Share of Voice in AI search measures your brand’s presence relative to your competitors. If there are 10 citations in an answer and you own 3 of them, your SOV is 30%. It tracks your dominance in the conversation.

Which advanced metrics should I track for modern generative search?

Beyond citations, track chunk retrieval frequency (how often specific sections of your content are used) and answer inclusion rate (how often your content shapes the synthesized answer). These advanced GEO metrics indicate semantic relevance.

Is measuring GenAI KPIs worth the effort?

Absolutely. With less than 20% of organizations currently tracking these KPIs, doing so gives you a massive competitive advantage. It allows you to optimize for where search is going, not where it has been.

Conclusion: My 3-bullet recap + next actions for the next 30–90 days

We’ve covered a lot, but if you take nothing else away, remember this:

- Visibility is now about retrieval and citation, not just ranking and clicking.

- You must differentiate between citations (links) and mentions (text) to understand your true impact.

- A simple manual dashboard beats a complex automated one that you never look at.

Your Next Moves:

- This Week: Build your “Top 20” query list and run your first baseline audit.

- Next Month: Implement FAQ schema and clear structural updates on pages that aren’t getting cited.

- By Day 90: Establish a “GEO Scoreboard” that you review monthly with leadership, tying citations to pipeline growth.

The shift to generative search isn’t coming; it’s here. Start tracking what matters today, and you’ll be the one explaining the future to your competitors tomorrow.