Monitoring the Machines: Best AI Rank Tracking Tools for Beginners

Introduction: Why I’m tracking rankings inside AI answers now (not just Google’s blue links)

It started with a confusing sales call. I was looking at our analytics, satisfied that our core pages were ranking in the top three positions on Google. But the prospect on the phone said something that stopped me cold: “I asked ChatGPT about the best tools for my budget, and it didn’t mention you guys at all. It recommended your competitor instead.”

That was the moment the ground shifted for me. I realized that while we were winning the SEO battle for blue links, we were completely invisible in the conversational answers where decisions were actually being made. This isn’t just about “ranking” anymore; it’s about being cited, recommended, and accurately represented by the machines answering your customers’ questions.

In this guide, I’m going to walk you through the emerging world of AI rank tracking. I’ll explain exactly what these tools measure, how to choose one that fits your budget (without falling for marketing hype), and most importantly, how to turn that messy data into an SEO strategy that actually works. If you are a marketer or business owner trying to figure out where you stand in the age of AI, this is for you.

What you’ll get from this guide

- Clear Definitions: Understand the difference between traditional rank tracking and AI visibility monitoring.

- Tool Shortlist: A no-nonsense comparison of the best AI rank tracking tools for 2024–2025.

- Selection Criteria: How to evaluate pricing models and data reliability.

- Actionable Workflow: A step-by-step cycle to measure, diagnose, and improve your AI presence.

- Risk Management: How to spot hallucinations before they damage your brand.

What is AI-based rank tracking (and what I actually measure)

At its simplest, AI-based rank tracking is the process of monitoring your brand’s visibility within Large Language Model (LLM) responses. Unlike traditional SEO, where you track a static position (e.g., “Rank #3”), AI tracking tries to quantify a probabilistic answer. You aren’t looking for a rank number; you are looking for presence.

Think of it like this: Traditional SEO is like checking if your book is on the library shelf. AI rank tracking is like asking the librarian for a recommendation and checking if she mentions your book, what she says about it, and if she points to it as a source.

When I run an AI tracking audit, I’m looking at specific measurement objects that might feel new to traditional SEOs:

- Prompts vs. Keywords: We track full questions (e.g., “Who offers the best CRM for small agencies?”) rather than just keywords.

- Engines: We monitor across multiple platforms, not just Google.

- Citations: We check if the AI provides a clickable link (a citation) to our content.

- Sentiment: Is the AI recommending us enthusiastically, neutrally, or (worst case) negatively?

Where AI answers show up (US-focused examples)

In the US market, consumer behavior has shifted rapidly. Your customers are likely searching on:

- Google AI Overviews: The summary box at the top of search results.

- ChatGPT: Specifically the browsing-enabled models (like GPT-4o) that search the live web.

- Perplexity: A dedicated “answer engine” that is heavily citation-focused.

- Gemini & Copilot: Google’s and Microsoft’s chat interfaces integrated into browsers and workspaces.

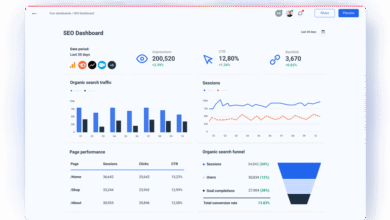

The metrics that matter for beginners

Don’t get bogged down in data. Here are the 6 metrics I actually pay attention to:

- Mention Rate: The percentage of times your brand appears in the answer across multiple runs.

- Citation Rate: How often the AI links back to your site as a source. (Crucial for traffic).

- AI Share of Voice (SoV): Your visibility compared to competitors in the same prompt set.

- Sentiment Tracking: A qualitative score (Positive/Negative/Neutral) of how the AI describes you.

- Hallucination Detection: Flagging if the AI claims you offer features or pricing you don’t actually have.

- Topical Coverage: Which categories of questions you appear in versus where you are invisible.
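To make the first three metrics concrete, here is a minimal Python sketch of how they could be computed from a log of recorded answers. The `Run` structure, its field names, and the sample data are all assumptions made for illustration, and Share of Voice in particular has no single agreed definition, so treat this as one plausible way to count rather than how any specific tool does it.

```python
from dataclasses import dataclass, field


@dataclass
class Run:
    """One recorded AI answer (hypothetical structure for illustration)."""
    prompt: str
    engine: str
    brands_mentioned: list = field(default_factory=list)
    cited_domains: list = field(default_factory=list)


def mention_rate(runs, brand):
    """Share of runs in which the brand appears at least once."""
    if not runs:
        return 0.0
    return sum(brand in r.brands_mentioned for r in runs) / len(runs)


def citation_rate(runs, domain):
    """Share of runs in which the answer cites the given domain."""
    if not runs:
        return 0.0
    return sum(domain in r.cited_domains for r in runs) / len(runs)


def share_of_voice(runs, brand):
    """Brand's mentions divided by all brand mentions in the run set.

    Assumption: each brand counts at most once per run.
    """
    total = sum(len(set(r.brands_mentioned)) for r in runs)
    if total == 0:
        return 0.0
    ours = sum(brand in r.brands_mentioned for r in runs)
    return ours / total


# Made-up sample data: four runs of one prompt across two engines.
runs = [
    Run("best crm", "chatgpt", ["Acme", "Rival"], ["acme.example"]),
    Run("best crm", "chatgpt", ["Rival"], []),
    Run("best crm", "perplexity", ["Acme", "Rival", "Other"], ["rival.example"]),
    Run("best crm", "perplexity", ["Acme"], ["acme.example"]),
]

print(f"Mention rate:   {mention_rate(runs, 'Acme'):.0%}")
print(f"Citation rate:  {citation_rate(runs, 'acme.example'):.0%}")
print(f"Share of voice: {share_of_voice(runs, 'Acme'):.0%}")
```

Keeping the raw runs and deriving the percentages from them means you can recompute everything later if you decide to define a metric differently.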

GEO/AEO vs traditional SEO: how optimization changes when the ‘rank’ is an AI response

You might see terms like Generative Engine Optimization (GEO) or Answer Engine Optimization (AEO) thrown around. Essentially, these disciplines combine standard SEO with optimization for AI summarization. While traditional SEO targets a keyword to win a click, GEO targets user intent to win a summary.

The stakes are changing fast. Industry data suggests that AI Overviews could appear in over 50% of Google search queries by mid-2025, and early 2025 data showed them already present in over 13% of US desktop searches.

For businesses, this requires a shift in tactics:

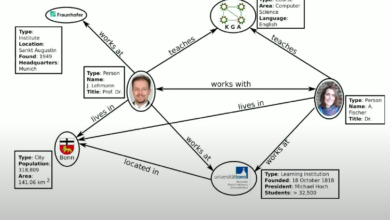

- From Backlinks to Citations: It’s less about link juice and more about being a trusted, verifiable source in the knowledge graph.

- From Keywords to Entities: You need to ensure the AI understands what your product is (an entity), not just the keywords on your page.

- From Long-form to Structured Answers: AI models love concise, structured data (tables, lists) that they can easily parse and summarize.

Why businesses can’t ignore AI visibility (even if organic rankings look fine)

I recently had to explain this to a CEO: “If a customer asks Perplexity for the ‘best payroll software,’ and Perplexity gives a detailed summary of three competitors but ignores us, we didn’t just lose a click—we lost the consideration set entirely.”

The business risk is threefold: Invisible Pipeline (prospects never see you), Misinformation (AI hallucinates your pricing is double what it is), and Competitor Conquesting (rivals are recommended as the “better” alternative). Ignoring this is like ignoring Yelp reviews a decade ago.

How I evaluate the best AI rank tracking tools (beginner-friendly checklist)

I’ve tested a dozen of these tools, and here is the hard truth: Methodology matters more than the dashboard. Because AI models are probabilistic (they can give different answers to the same question), a tool that only checks once is useless. You need rigor.

Here is the checklist I use to vet any new tool:

| Criteria | Why it matters | What to look for |
|---|---|---|
| Sampling Methodology | AI answers change based on time, temperature, and chance. | Does the tool run the prompt 3–5 times (reruns) to get an average? |
| Citation Capture | Visibility without a link is just vanity. | Does it capture specific URLs and quoted passages? |
| Engine Coverage | Your customers aren’t just on ChatGPT. | Look for Google AIO, Perplexity, Gemini, and Claude support. |
| Localization | Results vary by location, especially for local biz. | Can you set the query location to US/City level? |
| Pricing Model | Costs can spiral if you track too many prompts. | Subscription vs. Pay-as-you-go (Wallet) models. |

Methodology 101: why AI visibility data can be noisy (and how good tools handle it)

I remember running a test manually on ChatGPT. I asked, “Best CRM for startups” and got one list. I opened a new chat, asked the exact same thing five minutes later, and got a completely different list. That’s volatility.

Good tools handle this by mitigating variables. They use consistent prompt templates, they timestamp every run so you can correlate changes with model updates, and they perform multiple “reruns” to give you a confidence score. If a tool tells you “You rank #1” based on a single API call, be skeptical. I’d rather trust a smaller dataset with clean reruns than a massive dashboard built on single-sample noise.
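To show why a single run tells you so little, here is a small Python sketch that turns "mentioned in k of n reruns" into a range instead of a single number. I'm using the Wilson score interval, a standard statistical formula for proportions; this is my own illustration, not a description of how any tracking tool calculates its confidence score, and the function name is hypothetical.

```python
import math


def wilson_interval(hits, n, z=1.96):
    """Approximate 95% interval (z=1.96) for the true mention rate.

    hits: number of reruns in which the brand was mentioned
    n:    total number of reruns
    """
    if n <= 0:
        raise ValueError("n must be positive")
    if not 0 <= hits <= n:
        raise ValueError("hits must be between 0 and n")
    p = hits / n
    denom = 1 + z * z / n
    center = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return max(0.0, center - half), min(1.0, center + half)


# Compare one lucky run against larger rerun counts with a similar hit rate.
for hits, n in [(1, 1), (4, 5), (16, 20)]:
    low, high = wilson_interval(hits, n)
    print(f"{hits}/{n} mentions -> plausible rate {low:.0%} to {high:.0%}")
```

The range for a single run is very wide, and it narrows as reruns accumulate, which is the practical argument for paying for 3–5 reruns per prompt.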

Pricing models that fit SMB vs agency vs enterprise

Your choice often comes down to budget structure:

- Pay-as-you-go (Wallet): Best for SMBs or sporadic testing. You load $50 and burn credits only when you run a check. (e.g., AI Rank Checker).

- Subscription (SaaS): Best for agencies needing predictable costs and automated weekly reporting. (e.g., Peec AI, LLMrefs).

- Enterprise: Custom contracts for massive scale, API access, and security compliance.
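Before loading a wallet, it helps to estimate how many checks a tracking plan actually consumes. The arithmetic below is a rough Python sketch; the price of 5 cents per check is a placeholder I made up for the example, not a quote from any vendor, so substitute the real rate from the tool you are evaluating.

```python
def checks_per_month(prompts, engines, reruns, cycles_per_month):
    """Each prompt is run on every engine, `reruns` times, once per cycle."""
    return prompts * engines * reruns * cycles_per_month


def wallet_cost_cents(checks, cents_per_check):
    """Total pay-as-you-go spend in cents (integers avoid rounding drift)."""
    return checks * cents_per_check


# Hypothetical plan: 15 prompts, 3 engines, 3 reruns each, tracked weekly.
checks = checks_per_month(prompts=15, engines=3, reruns=3, cycles_per_month=4)
cost = wallet_cost_cents(checks, cents_per_check=5)  # placeholder price
print(f"{checks} checks per month, about ${cost / 100:.2f} at the placeholder rate")
```

Because the four inputs multiply, doubling both the prompt count and the rerun count quadruples the bill, which is how costs spiral on a wallet model.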

Best AI rank tracking tools: comparison table + what each one is best for

No single tool is perfect yet. The market is fragmented, with some tools excelling at ad-hoc checks and others building robust governance suites. Below is a comparison of the top contenders based on current capabilities.

| Tool | Best For | Key Features | Pricing Model | Watch-outs |
|---|---|---|---|---|
| AI Rank Checker | Budget / Ad-hoc testing | Multi-engine (ChatGPT, Claude, Gemini, Perplexity), Wallet payment | Pay-as-you-go | Manual feel; less automated historical trending. |
| Peec AI | Agencies & Reporting | Share of Voice, Sentiment analysis, Structured dashboards | Subscription | Newer player; ensure engine coverage matches needs. |
| AthenaHQ | Optimization Intelligence | Visibility tracking + Optimization recommendations, Localization | Subscription | Complex for simple users; feature-heavy. |
| Waikay.io | Brand Governance | AI Brand Score, Hallucination detection, Entity monitoring | Enterprise/SaaS | Focus is more on brand protection than aggressive SEO. |
| Semrush/Ahrefs | Convenience | Integrated into existing SEO workflow | Add-on / Tiered | Often limited to Google AI Overviews; less depth on LLMs. |

Budget pick: AI Rank Checker (pay-as-you-go flexibility)

If you are just dipping your toes in, AI Rank Checker is a solid starting point. Its wallet model means you aren’t locked into a monthly fee if you only want to audit your brand once a quarter. It supports a wide range of engines including ChatGPT, Gemini, Claude, Perplexity, and Copilot.

My advice: Start with a small “sanity check” set of 5–10 prompts to see where you stand before buying tons of credits.

Reporting & agencies: Peec AI and LLMrefs (structured dashboards and multi-engine tracking)

For those who need to report to clients or a VP of Marketing, Peec AI and LLMrefs offer the structure you need. They excel at aggregating data into “Share of Voice” charts that look great in a monthly deck. They handle the heavy lifting of tracking sentiment (positive/negative mentions) and distinguishing between branded vs. non-branded prompts. I find these tools essential when you need to prove momentum over time, not just a snapshot.

Optimization intelligence: AthenaHQ, Scrunch AI, and Profound (measure + recommendations)

Measuring is one thing; fixing is another. AthenaHQ positions itself as a GEO hub that doesn’t just track visibility but offers guidance on how to optimize content to rank better. Along with tools like Scrunch AI and Profound, they aim to close the loop between data and action. AthenaHQ is particularly noted for localization support, which is critical if you are a local business.

Warning: Recommendations are only useful if your content team has the bandwidth to actually implement them.

Brand governance: Waikay.io (hallucination detection and citation monitoring)

If you are in a regulated industry (finance, healthcare) or a large enterprise, Waikay.io is a standout for risk management. Instead of just “ranking,” it uses knowledge-graph technology to assign an AI Brand Score and monitor for hallucinations. Imagine the AI says your product costs $500 when it’s actually $50—Waikay is designed to catch that. It’s the tool I’d recommend if your primary fear is reputational damage rather than just traffic loss.

If you already use Semrush/Ahrefs/SE Ranking: when an “add-on” is enough

Let’s be practical: you might not want another login. Major platforms like Semrush (with its AI Visibility Tracker) and Ahrefs are rapidly integrating these metrics. If you are an SMB owner and your main focus is still Google Search, these add-ons are often sufficient for monitoring Google AI Overviews. However, if you need deep insight into ChatGPT or Perplexity specifically, you will likely need a specialist tool.

My step-by-step workflow: the AI visibility optimization cycle (measure → diagnose → improve → govern)

Tools are useless without a process. Over the last year, I’ve developed a monthly cycle that keeps our AI visibility moving up without overwhelming the team. Here is the exact workflow I use.

Step 1–2: Choose engines + build a prompt set you can defend

Don’t try to boil the ocean. Pick 2–4 engines that matter most to your customers (usually Google AIO, ChatGPT, and Perplexity). Then, build a Prompt Library of 15–20 questions. I categorize them like this:

- Branded: “What is [My Brand]?”, “Is [My Brand] legit?”

- Comparison: “[My Brand] vs [Competitor]”

- “Best of” (Category): “Best [Product Category] for [User Type]”

Quick tip: Avoid vanity prompts. Use the actual questions your sales team hears on calls.
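If you keep the prompt library as templates rather than a hand-typed list, it stays consistent from month to month. Here is a minimal Python sketch of that idea; the template wording comes from the three categories above, while the function name, the brand names, and the sample inputs are placeholders for illustration.

```python
from itertools import product

# Templates mirror the three prompt categories: branded, comparison, category.
TEMPLATES = {
    "branded": ["What is {brand}?", "Is {brand} legit?"],
    "comparison": ["{brand} vs {competitor}"],
    "category": ["Best {category} for {user_type}"],
}


def build_prompt_library(brand, competitors, categories, user_types):
    """Return a list of (category, prompt) tuples filled in from TEMPLATES."""
    prompts = []
    for template in TEMPLATES["branded"]:
        prompts.append(("branded", template.format(brand=brand)))
    for competitor in competitors:
        for template in TEMPLATES["comparison"]:
            prompts.append(
                ("comparison", template.format(brand=brand, competitor=competitor))
            )
    for category, user_type in product(categories, user_types):
        for template in TEMPLATES["category"]:
            prompts.append(
                ("category", template.format(category=category, user_type=user_type))
            )
    return prompts


# Placeholder inputs; swap in the questions your sales team actually hears.
library = build_prompt_library(
    brand="Acme CRM",
    competitors=["RivalSoft", "OtherCo"],
    categories=["CRM"],
    user_types=["small agencies", "startups"],
)
for kind, prompt in library:
    print(f"[{kind}] {prompt}")
```

Tagging each prompt with its category also makes it easy to report branded and non-branded visibility separately later on.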

Step 3–4: Run tracking correctly (reruns, timestamps, citation capture)

Run your tracking. If your tool allows it, configure it for 3–5 reruns per prompt to smooth out the noise. Ensure you are capturing the full response text and the citations. I look for the “Citation Gap”—instances where the AI mentions us by name but fails to link to our site. That is low-hanging fruit for technical SEO fixes.
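The Citation Gap check is easy to automate once you have the response text and the cited URLs. The sketch below is a simplified Python version: it assumes a plain substring match is good enough to detect a brand mention and that citations arrive as full URLs with a scheme. The function name and example values are hypothetical.

```python
from urllib.parse import urlparse


def has_citation_gap(response_text, cited_urls, brand, domain):
    """True when the answer names the brand but cites none of its pages.

    Assumptions: case-insensitive substring match for the mention, and
    cited_urls are absolute URLs (e.g. "https://...") so a hostname exists.
    """
    mentioned = brand.lower() in response_text.lower()
    hosts = {(urlparse(url).hostname or "").lower() for url in cited_urls}
    domain = domain.lower()
    # Match the bare domain or any subdomain such as "www." or "blog.".
    cited = any(h == domain or h.endswith("." + domain) for h in hosts)
    return mentioned and not cited


# Mentioned but not linked: this is the gap worth investigating.
print(has_citation_gap(
    "Acme is a solid option for small agencies.",
    ["https://rival.example/blog/best-crm"],
    brand="Acme",
    domain="acme.example",
))

# Mentioned and linked: no gap.
print(has_citation_gap(
    "Acme is a solid option for small agencies.",
    ["https://www.acme.example/pricing"],
    brand="Acme",
    domain="acme.example",
))
```

A substring match will produce false positives for brands whose names are common words, so review the flagged responses by hand before acting on them.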

Step 5: Turn insights into content changes (formats AI answers tend to cite)

This is where the rubber meets the road. If I see we aren’t ranking for “Best X,” I audit our page. Usually, the problem is structure. I will go in and:

- Add a concise definition block (40-60 words) near the top.

- Insert a clear comparison table using HTML tags (AI models love tables).

- Add FAQ schema to clearly answer related questions.

- Improve internal linking to signal authority on the topic.
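For the FAQ schema item, the markup is a JSON-LD block using schema.org's `FAQPage`, `Question`, and `Answer` types. Here is a small Python sketch that generates it from question and answer pairs; the helper names are my own, and the sample question is borrowed from this guide's FAQ section. How you inject the resulting tag into your page depends on your CMS.

```python
import json


def faq_jsonld(pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


def as_script_tag(jsonld):
    """Wrap the JSON-LD in the script element that pages embed it with."""
    # "</" is escaped so answer text cannot close the script element early.
    safe = jsonld.replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + safe + "\n</script>"


faqs = [
    (
        "What is AI-based rank tracking?",
        "AI-based rank tracking is the practice of monitoring your brand's "
        "visibility, sentiment, and citations within AI-generated responses.",
    ),
]
print(as_script_tag(faq_jsonld(faqs)))
```

The answers in the markup should match the text visible on the page; the schema describes the content, it does not replace it.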

When you have a lot of content to update based on these insights, manual rewriting can be slow. I use tools like Kalema’s AI SEO tool to help draft structured, intent-matched sections at scale. Whether it is generating a new comparison section or refactoring an outdated guide, using an AI article generator speeds up the “fix” phase so I can get back to measuring.

Step 6–7: Validate improvements + add governance (hallucination and sentiment checks)

After shipping updates, wait 2–3 weeks (or request re-indexing) and re-run your tracker. Compare your Share of Voice against the previous baseline. At the same time, check your Governance report. If I spot a hallucination, I follow a simple escalation path: Marketing verifies the error → Product updates the documentation → we publish a correction or press release if the error is severe.
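For the governance side, even a crude automated check can surface pricing hallucinations for a human to review. The Python sketch below pulls dollar amounts out of a response and flags any that are not on your list of real prices. It is deliberately naive: it assumes US-dollar amounts written with a `$` sign, and the function name and sample prices are made up for the example.

```python
import re

# Matches amounts like $50, $1,200, or $49.99 (US-dollar format assumed).
PRICE_RE = re.compile(r"\$\s?(\d+(?:,\d{3})*(?:\.\d+)?)")


def find_price_mismatches(response_text, known_prices):
    """Return dollar amounts in the text that are not in known_prices."""
    found = [float(m.replace(",", "")) for m in PRICE_RE.findall(response_text)]
    return [price for price in found if price not in known_prices]


known = {50.0}  # placeholder: the product's real price points

# A response quoting the wrong price gets flagged for review.
print(find_price_mismatches("Acme costs $500 per month.", known))

# A response quoting the real price comes back clean.
print(find_price_mismatches("Acme starts at $50/month.", known))
```

A flag here is only a prompt for a person to read the full answer; the amount may belong to a competitor or an unrelated figure, so this feeds the escalation path rather than replacing it.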

Common mistakes I see beginners make (and how I fix them)

I’ve made plenty of mistakes so you don’t have to. Here are the most common ones:

- Mistake: Trusting a single run.

Fix: AI is probabilistic. Always look at the trend across multiple runs or weeks, never a single snapshot.

- Mistake: Ignoring citations.

Fix: Being mentioned is nice; being linked is profitable. Optimize your content to be a referenceable source (stats, original data).

- Mistake: “Optimizing” for keywords only.

Fix: Stop keyword stuffing. Optimize for entities and direct answers. The AI needs to understand what you are, not just match a string of text.

- Mistake: No Brand Governance.

Fix: I learned this the hard way: you need to check for negative sentiment or hallucinations regularly, or your brand reputation can bleed out silently.

Quick troubleshooting: when your results swing wildly week to week

If your graphs look like a rollercoaster, don’t panic. It’s normal. First, check if the model updated (e.g., GPT-4 to GPT-4o). Check if your prompt was too vague. And finally, remember that volatility is a feature of AI, not a bug. Focus on the 3-month moving average, not the daily spike.
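Smoothing is simple to do yourself if your tool only exports raw numbers. Here is a short Python sketch of a trailing moving average over monthly mention rates; the function name and the sample figures are invented for illustration.

```python
def moving_average(values, window):
    """Trailing average; early points use however many values exist so far."""
    if window <= 0:
        raise ValueError("window must be positive")
    smoothed = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        smoothed.append(sum(chunk) / len(chunk))
    return smoothed


# Made-up monthly mention rates that swing from month to month.
monthly_rates = [0.30, 0.55, 0.25, 0.60, 0.40, 0.65]
for raw, smooth in zip(monthly_rates, moving_average(monthly_rates, window=3)):
    print(f"raw {raw:.0%}  3-month average {smooth:.0%}")
```

The raw column jumps around, while the averaged column shows the direction of travel, which is the number worth reporting.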

FAQs about AI-based rank tracking (GEO/AEO beginners)

What is AI-based rank tracking?

AI-based rank tracking is the practice of monitoring your brand’s visibility, sentiment, and citations within AI-generated responses from engines like ChatGPT, Google AI Overviews, Gemini, and Perplexity. It shifts the focus from “where do I rank on the page” to “am I part of the answer.”

How does GEO/AEO differ from traditional SEO?

Traditional SEO optimizes for a position in a list of links (SERP), often focusing on keywords and backlinks. GEO (Generative Engine Optimization) optimizes for inclusion in a synthesized answer, focusing on content structure, authority, and entity clarity so the AI can easily summarize and cite your information.

Which tools are best for small businesses or agencies on a budget?

For occasional checks or small budgets, pay-as-you-go tools like AI Rank Checker are excellent because you only pay for what you use. For agencies needing regular reporting without enterprise costs, Peec AI and LLMrefs offer affordable subscription tiers with good visualization.

What should enterprise teams consider when choosing a tool?

Enterprises should prioritize governance, collaboration, and data methodology. Look for tools like Waikay.io or AthenaHQ that offer hallucination detection, robust sampling (reruns), and features that support compliance and brand safety audit trails.

Why is AI visibility important for businesses today?

As consumers increasingly use AI for research, being invisible in these answers means disappearing from the buyer’s journey. With AI Overviews projected to impact over 50% of searches by mid-2025, maintaining accurate brand presence in AI results is critical for protecting market share and reputation.

Conclusion: a simple plan to choose a tool and start tracking this month

The shift to AI search can feel overwhelming, but you don’t need to overhaul your entire strategy overnight. The goal right now is visibility and defense.

Your 3-step plan for this month:

- Pick one tool: Use a pay-as-you-go option if you are unsure, or a subscription if you have clients to report to.

- Build a “Sanity Set”: Create 10–15 prompts covering your brand name and your top 3 product categories.

- Establish a Baseline: Run your first audit. Don’t try to fix everything yet—just understand where you are invisible.

Once you know where the gaps are, keep measuring. If you only do one thing, track a small prompt set on a fixed schedule; consistency beats complexity here. And when you are ready to scale your content updates to capture that AI visibility, tools like Kalema’s automated blog generator can help you operationalize the necessary content refreshes without burning out your editorial team.