Introduction: The “SEO Autopsy” approach

I see this scenario constantly: A marketing team is publishing three blog posts a week, the site looks clean, and the technical “health score” on their tool dashboard says 92%. Yet, organic traffic is flatlining, and leads from search are virtually non-existent.

When I step in to diagnose this, I don’t run a generic scan. I perform what I call an SEO Autopsy. Unlike a standard audit that just lists “errors,” a forensic autopsy looks for the cause of death—or in this case, the cause of stagnation.

In this guide, I will walk you through the exact forensic workflow I use to diagnose sites in 2025. We will move beyond basic checklists to uncover hidden technical blockers, validate conflicting tool data manually, and address the new reality of AI Overviews and zero-click searches. If you are ready to stop guessing and start fixing, this is how to do an SEO audit that actually moves the needle.

Forensic SEO audit vs. traditional SEO audit: what’s different

I used to trust the red warning lights on my crawl tools implicitly. If the tool said “Critical Error,” I panicked. If it said “Good,” I moved on. That was a mistake.

A traditional SEO audit usually involves exporting a PDF from a tool and handing it to a developer. It focuses on surface-level metrics: meta tag lengths, broken links, and missing alt text. While these matter, they rarely crash a business’s organic performance on their own.

A forensic SEO audit is different. It prioritizes manual validation. It asks: “Is this ‘error’ actually hurting us, or is it a false positive?” More importantly, it looks for the invisible killers that automated tools often miss, such as JavaScript rendering failures, indexation loopholes, and content that AI engines can’t parse.

Fact: Google advises treating automated SEO audit scores as diagnostic aids rather than definitive measures; manual validation is essential.

In 2025, this distinction is critical. With AI Overviews appearing in over 50% of U.S. desktop searches, a site might be technically perfect but invisible to the generative engines answering user queries directly. We have to audit for visibility, not just code compliance.

What I mean by “hidden issues” (quick examples)

These are the problems standard tools often gloss over, but forensic analysis uncovers:

- Canonical Conflicts: The tool sees a canonical tag and marks it “valid,” but manual inspection shows it points to the homepage on every single page, effectively telling Google to ignore the entire site.

- The JavaScript Trap: The HTML source looks fine, but the navigation links are only generated after a user interaction (like a click), meaning Googlebot can’t crawl deeper than the homepage.

- Template Bloat: A “noindex” tag accidentally left on a page template during a staging push, which removes an entire product category from search results.

- Intent Mismatch: You rank #1, but the bounce rate is 90% because your content answers “what is” while the user wants to “buy.”
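The canonical conflict described above is easy to spot-check once you have the HTML of a sample of pages. Here is a minimal Python sketch, assuming you have already fetched the pages into a dictionary of URL to HTML. The function names `extract_canonical` and `shared_canonical` and the 90% threshold are my own illustrative choices, not part of any crawl tool.

```python
from collections import Counter
from html.parser import HTMLParser


class CanonicalParser(HTMLParser):
    """Records the href of the first <link rel="canonical"> tag it sees."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        rel = (attr.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel and self.canonical is None:
            self.canonical = attr.get("href")


def extract_canonical(html):
    parser = CanonicalParser()
    parser.feed(html)
    return parser.canonical


def shared_canonical(pages, threshold=0.9):
    """Return the canonical target shared by most pages, or None.

    `pages` maps URL -> HTML. The threshold is an assumption: if 90% or more
    of the sampled pages point at one URL, the tags deserve a manual look.
    """
    targets = Counter(extract_canonical(html) for html in pages.values())
    targets.pop(None, None)  # ignore pages without a canonical tag
    if len(pages) < 2 or not targets:
        return None
    target, count = targets.most_common(1)[0]
    return target if count / len(pages) >= threshold else None
```

A result other than `None` does not prove a problem on its own (a tiny sample can mislead), but it tells me which template to open first.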

Before I start: access, tools, and baselines

If I don’t have access to Google Search Console (GSC), I pause the audit. Without GSC, I am just guessing based on external signals. To run a forensic audit effectively, I need to see the site exactly how Google sees it.

Before you change a single pixel, you need a baseline. I always document: organic sessions, conversions/leads (not just traffic), top 10 landing pages, and current Core Web Vitals status. This protects you. If traffic drops after a “fix,” you need to know if it was your change or a seasonal trend.

While this audit focuses on diagnosis, remediation often requires scaling content updates. Tools like Kalema’s AI article generator become useful later when you need to rewrite thin content or produce structured briefs based on your findings, but for now, we focus purely on the data.

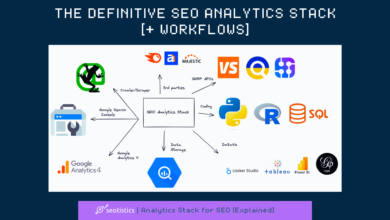

The Forensic Toolkit

| What I Check | Where I Get It | Why It Matters | Common Beginner Mistake |
|---|---|---|---|
| Indexing & Rankings | Google Search Console (GSC) | The only source of truth for how Google treats your URLs. | Ignoring the “Excluded” tab thinking it’s irrelevant. |
| Traffic & Behavior | GA4 / Adobe Analytics | Validates if traffic actually converts to business value. | Confusing “users” with “sessions” or ignoring sampling. |
| Technical Health | Screaming Frog / Sitebulb | Simulates a crawl to find broken paths and architecture issues. | Trusting the default crawl settings without checking robots.txt. |
| User Experience | PageSpeed Insights | Measures Core Web Vitals (CWV) 2.0. | Obsessing over mobile scores for a B2B desktop-heavy site. |

Setup checklist (copy/paste)

- Access: Verified GSC and GA4 access (anything from Admin down to read-only works: the Restricted permission in GSC, the Viewer role in GA4).

- Crawl Tool: Configure to crawl JavaScript (if your site uses it) and respect robots.txt.

- Date Range: Set GSC to the last 16 months to spot long-term trends; set GA4 to the last 6 months, compared year-over-year.

- Device Check: Ensure your crawl tool is using the “Googlebot Smartphone” user-agent.

- Staging: If auditing a redesign, ensure the staging site is password-protected so Google doesn’t index it prematurely.
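Before trusting a crawl, I like to confirm which important URLs robots.txt actually blocks. The sketch below uses Python's standard `urllib.robotparser`; the helper name `blocked_urls` is hypothetical. One caveat: the standard-library parser follows the original robots.txt convention and does not replicate Google's wildcard matching, so treat the result as a first pass, not as Google's verdict.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt text disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Example input: robots.txt content pasted in as a string.
robots = """User-agent: *
Disallow: /staging/
"""
money_pages = [
    "https://example.com/staging/new-pricing",
    "https://example.com/pricing",
]
print(blocked_urls(robots, money_pages))
```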

How to do an SEO audit (Part 1): the technical forensic workflow

This is the backbone of the operation. My workflow is strictly sequential: Crawl → Index → Render → Speed → Architecture. If the crawler can’t reach the page, speed doesn’t matter. If the page is fast but noindexed, architecture doesn’t matter.

In 2025, technical SEO has evolved. It’s no longer just about status codes; it’s about how browsers render content and how metrics like Interaction to Next Paint (INP) impact the user experience.

Step 1 — Crawl the site like Google (and don’t trust one crawl)

I start by running a crawl with a tool like Screaming Frog. If the site is massive (over 200k URLs), I sample intelligently rather than waiting days for a full crawl. I’m looking for broad patterns, not every single 404 error.

Autopsy note: I always run two mini-crawls: one with JavaScript rendering enabled and one without. If the “without” crawl returns zero internal links or empty content, I know immediately that the site relies heavily on client-side rendering, which is a major risk factor.
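The two-crawl comparison boils down to a set difference: which internal links exist only after JavaScript runs? Here is a rough Python sketch, assuming you already have the raw HTML (no rendering) and the rendered HTML for the same URL as strings. How you obtain the rendered version (a crawl tool export, a headless browser) is outside this sketch, and `links_only_in_rendered` is a name I made up.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkParser(HTMLParser):
    """Collects href values from <a> tags."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def internal_links(html, base_url):
    """Return absolute URLs of links that stay on the same host as base_url."""
    parser = LinkParser()
    parser.feed(html)
    host = urlparse(base_url).netloc
    absolute = (urljoin(base_url, href) for href in parser.hrefs)
    return {url for url in absolute if urlparse(url).netloc == host}


def links_only_in_rendered(raw_html, rendered_html, base_url):
    """Internal links that appear only after JavaScript rendering."""
    return internal_links(rendered_html, base_url) - internal_links(raw_html, base_url)
```

If this set contains most of the site's navigation, the site depends on client-side rendering for discovery, which is the risk factor flagged in the autopsy note.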

Step 2 — Indexation reality check in Google Search Console

This is where I spend 40% of my time. I go to the Pages (Indexing) report in GSC. I’m looking for the discrepancy between “Crawled – currently not indexed” and “Discovered – currently not indexed.”

- Crawled – currently not indexed: Google saw the page but decided it wasn’t worth indexing. This is usually a content quality or duplicate issue.

- Discovered – currently not indexed: Google knows the URL exists but hasn’t bothered to crawl it yet. This is usually a crawl budget or site authority issue.

I also check the “Duplicate, Google chose different canonical than user” bucket. If Google is ignoring your canonical tags, it means your signals are weak or confusing.
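To turn the Pages report into something I can prioritize, I count URLs per exclusion reason and attach the likely cause from the descriptions above. This is a minimal sketch that assumes the export has been reduced to `(url, reason)` pairs; the exact column names and reason labels in your export may differ, so the dictionary keys here are assumptions to adjust.

```python
from collections import Counter

# Reason labels are assumed; copy them exactly from your own GSC export.
LIKELY_CAUSE = {
    "Crawled - currently not indexed": "content quality or duplication",
    "Discovered - currently not indexed": "crawl budget or site authority",
    "Duplicate, Google chose different canonical than user": "weak or conflicting canonical signals",
}


def summarize_indexing(rows):
    """rows: iterable of (url, reason). Returns (reason, count, likely cause), largest bucket first."""
    counts = Counter(reason for _url, reason in rows if reason in LIKELY_CAUSE)
    return [(reason, n, LIKELY_CAUSE[reason]) for reason, n in counts.most_common()]
```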

Step 3 — Rendering & JavaScript: confirm Google can actually see your content

Just because you see text on the screen doesn’t mean Googlebot does. I use the URL Inspection tool in GSC to “View Crawled Page” → “HTML.” I search for my primary keyword or main body text in that code.

If the text isn’t in the HTML, or if important internal links are missing, the site has a rendering gap. I often see this in Single Page Applications (SPAs) built with React or Vue where the developers forgot to implement server-side rendering (SSR) or static generation.
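The manual search in the crawled HTML can be partly scripted. The sketch below takes HTML you copied from “View Crawled Page” (or any raw response) and reports which key phrases are missing from the visible text, ignoring anything inside `<script>` and `<style>`. The function name `missing_phrases` is hypothetical, and the phrase matching is deliberately simple (case-insensitive substring).

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text nodes, skipping the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip:
            self.parts.append(data)


def missing_phrases(html, phrases):
    """Return the phrases that do not appear in the page's visible text."""
    parser = VisibleText()
    parser.feed(html)
    # Collapse whitespace so line breaks in the markup do not hide a match.
    text = " ".join(" ".join(parser.parts).split()).lower()
    return [phrase for phrase in phrases if phrase.lower() not in text]
```

A phrase that only exists inside a script payload counts as missing here, which matches the rendering-gap case: the words are in the file but not in the content.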

Step 4 — Performance & Core Web Vitals 2.0 (INP + TTFB included)

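Speed isn’t a vanity metric: slow pages hurt crawl efficiency and conversions. In 2025, I look beyond LCP to Interaction to Next Paint (INP), which replaced First Input Delay as a Core Web Vital in March 2024, and to Time to First Byte (TTFB), which is not a Core Web Vital itself but feeds directly into LCP.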

| Metric | What it Measures | Common Failure Cause | Quick Win |
|---|---|---|---|
| INP | Responsiveness to clicks, taps, and key presses | Heavy JavaScript executing on the main thread. | Defer non-essential JS; break up long tasks. |
| TTFB | Server response time | Slow database queries or no caching. | Implement server-side caching or a CDN. |
| LCP | Loading performance | Giant unoptimized hero images. | Convert images to WebP and preload the LCP image. |
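To make the table actionable, I rate each field measurement against a threshold. The sketch below uses the thresholds Google has published for these metrics as I understand them at the time of writing (LCP 2.5 s / 4 s, INP 200 ms / 500 ms, TTFB 0.8 s / 1.8 s); verify them against current documentation before relying on them. The `rate` helper is my own naming.

```python
# (good_up_to, poor_above) in milliseconds. Values are assumptions to verify.
THRESHOLDS_MS = {
    "LCP": (2500, 4000),
    "INP": (200, 500),
    "TTFB": (800, 1800),
}


def rate(metric, value_ms):
    """Classify a measurement as 'good', 'needs improvement', or 'poor'."""
    good, poor = THRESHOLDS_MS[metric]
    if value_ms <= good:
        return "good"
    if value_ms <= poor:
        return "needs improvement"
    return "poor"


# Example: hypothetical 75th-percentile field values for one template.
for metric, value in {"LCP": 3100, "INP": 180, "TTFB": 2000}.items():
    print(metric, value, rate(metric, value))
```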

Step 5 — Site architecture signals: internal links, depth, and orphan pages

Think of your site architecture like a building’s hallway system. If a room (page) doesn’t have a door (link), no one can enter. These are orphan pages—URLs that exist but have no internal links pointing to them. They rarely rank well.

I check the “Crawl Depth” report. If important money pages are more than 3-4 clicks away from the homepage, they are buried too deep. I also check for “internal link traps”—places where the navigation links to a redirect or a 404, wasting equity.
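Crawl depth and orphan detection are both graph problems: a breadth-first search from the homepage gives the click depth of every reachable page, and anything known (from a sitemap, say) but never reached is an orphan candidate. This is a minimal sketch under the assumption that you have the internal link graph as a dictionary of page to linked pages; `audit_architecture` and the default depth limit of 3 are illustrative.

```python
from collections import deque


def crawl_depths(links, home):
    """Breadth-first search: minimum number of clicks from home to each page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def audit_architecture(links, known_urls, home, max_depth=3):
    """Return (orphans, too_deep).

    Orphans are known URLs that cannot be reached from home via internal
    links; too_deep are reachable pages more than max_depth clicks away.
    """
    depths = crawl_depths(links, home)
    orphans = sorted(set(known_urls) - set(depths))
    too_deep = sorted(url for url, depth in depths.items() if depth > max_depth)
    return orphans, too_deep
```

Note that “orphan” here means unreachable from the homepage, so a page linked only from another orphan is also flagged.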

How to do an SEO audit (Part 2): on-page, content quality, and intent matching

Once I confirm the technical foundation is solid, I shift to content. The question changes from “Can Google access it?” to “Should Google rank it?”

This is where I often find the biggest growth opportunities. Many sites suffer from “content bloat”—thousands of thin, low-value pages that drag down the authority of the whole domain. My goal here is to identify which pages need to be updated to match current user intent and E-E-A-T standards.

If you find that you have hundreds of pages needing structured updates or rewrites, this is where a system like Kalema helps. By using intelligent SEO content generators, you can ensure that every rewritten page follows the structural best practices we identify in the audit, scaling your remediation efforts without losing editorial quality.

A simple content triage: Keep, Improve, Merge, or Remove

I don’t delete pages just because traffic is low—sometimes they assist conversions. However, I do ruthlessly categorize every page into one of four buckets:

- Keep: High traffic, high conversion, current information. (Action: Monitor).

- Improve: Good topic, but low rankings or high bounce rate. (Action: Update content, add depth, improve AI content writer workflows).

- Merge: Two or more pages competing for the same keywords (Cannibalization). (Action: Consolidate into one strong asset and 301 redirect the rest).

- Remove: Outdated, thin, or irrelevant content with no backlinks or traffic. (Action: 410 Gone, or a 301 redirect if a closely related replacement page exists).
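The four buckets can be expressed as a small rule set so that a large page inventory gets a first-pass label before I review it by hand. Everything specific in this sketch is an assumption for illustration: the `Page` fields, the 100-sessions cutoff, and the order in which the rules fire. Tune them to your own data.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    monthly_sessions: int
    conversions: int
    backlinks: int
    is_current: bool
    competes_with: tuple = ()  # URLs targeting the same keywords


def triage(page, min_sessions=100):
    """Return 'keep', 'improve', 'merge', or 'remove' for one page."""
    if page.competes_with:
        return "merge"  # cannibalization: consolidate and 301 the rest
    no_value = (
        page.monthly_sessions == 0
        and page.conversions == 0
        and page.backlinks == 0
    )
    if no_value and not page.is_current:
        return "remove"
    if page.monthly_sessions >= min_sessions and page.conversions > 0 and page.is_current:
        return "keep"
    return "improve"
```

Low traffic alone never produces “remove” here, which mirrors the point above that low-traffic pages sometimes assist conversions.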

On-page checklist (beginner-friendly)

- Title Tags: Is the primary keyword near the front? Is it under 60 characters?

- H1 Alignment: Does the H1 match the user’s search intent immediately?

- Headings: Are H2s and H3s used to structure the logic, not just for size?

- Content Depth: Does the content answer the query fully, or does the user have to go back to Google?

- Internal Links: Are there 3-5 links to related relevant content?

- Schema: Is Article, Product, or FAQ schema applied correctly?
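The title tag item is the easiest part of this checklist to automate. Here is a small sketch: `check_title` is a hypothetical helper, and “near the front” is approximated as “starts in the first half of the title,” which is my own simplification.

```python
def check_title(title, keyword, max_length=60):
    """Return a list of issues found in a title tag (empty list means it passes)."""
    issues = []
    if len(title) >= max_length:
        issues.append(f"title is {len(title)} characters (target: under {max_length})")
    position = title.lower().find(keyword.lower())
    if position == -1:
        issues.append("primary keyword missing from title")
    elif position > len(title) // 2:
        issues.append("primary keyword appears in the second half of the title")
    return issues
```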

Schema & snippet formatting for zero-click and AI summaries

With zero-click searches rising, your content must be structured to be “snippet-able.” This is crucial for Answer Engine Optimization (AEO). I look for long walls of text and break them down.

If I see a paragraph explaining a process, I convert it into a numbered list. If I see a definition buried in fluff, I pull it out into a concise bolded sentence. This formatting makes it easier for Google to extract Featured Snippets and for AI models to cite your answer.
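Structured data is the machine-readable counterpart to this formatting. As an illustration, the sketch below builds schema.org `FAQPage` markup as JSON-LD from question and answer pairs. The helper name `faq_jsonld` is my own; the output still needs to be placed in a `<script type="application/ld+json">` tag and validated with a structured data testing tool.

```python
import json


def faq_jsonld(qa_pairs):
    """Build FAQPage JSON-LD from an iterable of (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2)


print(faq_jsonld([
    ("Are SEO audit tool scores reliable?",
     "Treat them as starting points, not verdicts."),
]))
```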

Off-page & reputation signals: backlinks, brand mentions, and trust basics

I keep this section tight. Most sites don’t need a disavow file—what I care about is relevance and obvious manipulation. I check the backlink profile for velocity trends (did we suddenly lose 500 links?) and anchor text diversity.

If 90% of your backlinks use the exact same commercial anchor text (e.g., “buy cheap insurance”), that’s a red flag for algorithmic penalties. I also check for consistent NAP (Name, Address, Phone) data if it’s a local business. Trust starts with consistency.
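Anchor text concentration is a one-line calculation once you export the anchors from your backlink tool. A minimal sketch, with `top_anchor_share` as a hypothetical name:

```python
from collections import Counter


def top_anchor_share(anchors):
    """Return (most common anchor, its share of all anchors), case-insensitive."""
    normalized = [anchor.strip().lower() for anchor in anchors if anchor.strip()]
    if not normalized:
        return None, 0.0
    text, count = Counter(normalized).most_common(1)[0]
    return text, count / len(normalized)
```

If the top anchor is a commercial phrase and its share is anywhere near the 90% in the example above, I look at where those links came from before anything else.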

GEO/AEO checks: audit for AI Overviews, LLM citations, and “share of AI voice”

This is the newest layer of the forensic audit. Generative Engine Optimization (GEO) isn’t about replacing SEO; it’s about optimizing for the machines that summarize the web. In 2025, if your content isn’t cited by AI, you are missing a massive chunk of visibility.

We know that AI Overviews are appearing in over 50% of U.S. desktop searches. To audit this, I don’t just look at rankings. I look at quotability. Does your content provide facts, data, and definitions in a way that an LLM can easily ingest and attribute?

| Traditional SEO KPI | AI-Era Visibility KPI | How to Measure (Basic) |
|---|---|---|
| Rank Position (1-10) | Generative Appearance | Check top queries: Do AI Overviews trigger? Are you in the carousel? |
| Click-Through Rate (CTR) | Citation Frequency | Are you linked as a source in the AI response? |
| Organic Sessions | Referral Traffic | Check analytics for referrals from ChatGPT, Perplexity, or Gemini. |
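For the referral row, a basic approach is to bucket referrer URLs by hostname. The sketch below assumes you can export raw referrer URLs from your analytics tool; the hostname list is my assumption of what these assistants currently send and will need maintenance, and not every AI visit passes a referrer at all.

```python
from collections import Counter
from urllib.parse import urlparse

# Hostname -> label. This mapping is an assumption; extend it as needed.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "gemini.google.com": "Gemini",
}


def ai_source(referrer):
    """Return the AI assistant label for a referrer URL, or None."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)


def count_ai_referrals(referrers):
    """Count sessions per AI assistant from a list of referrer URLs."""
    return Counter(label for label in map(ai_source, referrers) if label)
```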

Beginner-friendly AI visibility checklist (what I look for)

- Entity Clarity: Do you explicitly name concepts and people? (e.g., “Elon Musk, CEO of Tesla” vs just “He”).

- Direct Answers: Do you have clear, 40-60 word definitions for key terms immediately following a heading?

- Data Originality: Do you provide unique stats or tables that AI models can’t find elsewhere?

- Source Transparency: Do you cite your sources and have a clear author byline? Trust signals matter for AI citation.
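The “Direct Answers” item is simple to check in bulk: count the words in the first paragraph after each heading. A tiny sketch, where `direct_answer_check` is a hypothetical helper and words are split on whitespace:

```python
def direct_answer_check(answer, low=40, high=60):
    """Return (word count, verdict) for a definition paragraph."""
    words = len(answer.split())
    if words < low:
        return words, "too short"
    if words > high:
        return words, "too long"
    return words, "ok"
```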

Turn findings into a prioritized fix plan (and avoid common audit traps)

The worst audit is the one that sits in a drawer because it’s too overwhelming. To prevent this, I prioritize everything based on Impact vs. Effort. I use a simple 1-5 scale for each.

If I only have 2 hours to fix things, I look for the “High Impact, Low Effort” wins. These are usually technical blockers like a wayward “noindex” tag or a broken robots.txt file. Content rewrites are usually “High Impact, High Effort”—they go on the roadmap for the next quarter.
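One way to turn the two 1-5 scores into an ordered list is to sort by the ratio of impact to effort. That ratio is my own simplification for this sketch, not the only reasonable formula, and the example findings and scores are made up.

```python
def prioritize(findings):
    """Sort findings by impact-to-effort ratio, highest first.

    Each finding is a dict with 'name', 'impact' (1-5), and 'effort' (1-5).
    """
    return sorted(findings, key=lambda f: f["impact"] / f["effort"], reverse=True)


findings = [
    {"name": "Rewrite thin category pages", "impact": 5, "effort": 5},
    {"name": "Remove stray noindex on product template", "impact": 5, "effort": 1},
    {"name": "Add missing alt text", "impact": 2, "effort": 1},
]
for finding in prioritize(findings):
    print(finding["name"])
```

The noindex fix lands at the top and the content rewrites at the bottom, which is the ordering described above: quick blockers first, rewrites on the roadmap.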

Common SEO audit mistakes (and what I do instead)

- Obsessing over score perfection: I don’t waste time trying to get a 100/100 score by fixing minor CSS validation errors. I focus on issues that impact revenue.

- Ignoring the “why”: I don’t just say “fix broken links.” I ask “why are links breaking?” (e.g., is a plugin causing it?).

- Changing URLs without redirects: This is a classic traffic killer. I always verify 301 redirects are in place before any URL change.

- Forgetting to re-crawl: I never mark an audit as “done” until I’ve validated the fixes with a fresh crawl and a GSC inspection.

- Chasing volume over intent: I check if we are ranking for high-volume keywords that deliver zero leads. If so, I deprioritize them.
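For the redirect item in this list, I validate the redirect map on paper before launch. The sketch below assumes three inputs you would assemble yourself: the old URLs, a dictionary of planned redirects, and the set of URLs that will be live afterwards. The names `resolve` and `audit_redirects` are illustrative, and no HTTP requests are made.

```python
def resolve(redirects, url, max_hops=10):
    """Follow the redirect map. Returns (final_url, hops), or (None, hops) on a loop."""
    seen = {url}
    hops = 0
    while url in redirects:
        url = redirects[url]
        hops += 1
        if url in seen or hops > max_hops:
            return None, hops
        seen.add(url)
    return url, hops


def audit_redirects(old_urls, redirects, live_urls):
    """Return {old_url: problem} for every old URL that will not land cleanly."""
    problems = {}
    for old in old_urls:
        if old in live_urls:
            continue  # URL is unchanged, nothing to redirect
        if old not in redirects:
            problems[old] = "no redirect"
            continue
        final, hops = resolve(redirects, old)
        if final is None:
            problems[old] = "redirect loop"
        elif final not in live_urls:
            problems[old] = "redirects to a non-live URL"
        elif hops > 1:
            problems[old] = "redirect chain"
    return problems
```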

What a good audit deliverable looks like (1-page summary)

When I present an audit to stakeholders, I don’t show them the raw data spreadsheet first. I give them a 1-page Executive Summary containing:

- The Diagnosis: One paragraph explaining the site’s health status.

- Top 3 Critical Blockers: The immediate fires to put out.

- The Opportunity: A conservative estimate of growth if fixed.

- The Roadmap: A 30/60/90 day plan assigning owners to tasks.

FAQs (for beginners doing their first forensic SEO audit)

What is the difference between a traditional and a forensic SEO audit?

A traditional audit relies on automated tool scores. A forensic audit uses manual validation to investigate the root causes of issues and checks for “hidden” problems tools miss, like rendering gaps.

Why does GEO/AEO matter in 2025 audits?

With AI Overviews taking up prime real estate and zero-click searches rising, audits must ensure content is structured for AI citation, not just traditional blue links.

Are SEO audit tool scores reliable?

Treat them as starting points, not verdicts. Tools often flag non-issues as critical or miss severe rendering problems. Always validate manually.

How do I optimize for zero-click and AI summaries?

Use concise, direct answers (40-60 words) right after headings. Use structured data (Schema) and formatting like tables and lists to make your content easy for AI to parse and quote.

Conclusion: my 3-point recap + next actions

If you only remember three things from this forensic playbook, make them these:

- Validate, don’t just export. Never trust a tool’s error report without checking the page yourself.

- Audit for the machine and the user. Ensure Google can render your JS, and ensure AI can parse your facts.

- Prioritize by revenue impact. Fix the blockers that stop crawling first, then move to content improvements.

Your next actions for the next 72 hours:

- Run a full crawl with JavaScript rendering enabled.

- Export your “Pages” report from GSC to find indexing gaps.

- Identify your top 5 revenue-generating pages and manually inspect them for rendering or intent issues.

Once you have your diagnosis, the real work of fixing and creating content begins. This is where leveraging content intelligence tools like Kalema can help you implement your remediation strategy at scale, ensuring every new page meets the high standards you’ve just defined.