Auditing your brand’s AI visibility with LLM audit software: what I check and why it matters now

Last month, I ran a simple test. I asked ChatGPT to recommend three alternatives to a software product I manage. The result was a wake-up call: it listed my biggest competitor and described their features perfectly. Then it listed a tool that shut down two years ago. My product? Nowhere to be found.

For years, we’ve obsessed over Google rankings. But today, with 58% of users reportedly turning to AI tools for product recommendations, the battleground has shifted. We aren’t just fighting for clicks anymore; we are fighting to be part of the answer.

This is where LLM audit software and the discipline of Generative Engine Optimization (GEO) come in. Unlike traditional SEO, where you track a static rank, auditing AI visibility is about measuring probabilities. It’s stochastic: the same prompt can return different answers on different runs, and answers shift again based on how a user phrases the question.

In this guide, I’ll show you exactly how to track this volatile new channel. We will look at the tools that actually work, how to build a repeatable audit workflow, and how to fix the gaps you find.

What you’ll learn:

- How to measure your brand’s “share of voice” in AI models like ChatGPT and Gemini.

- The best tools for every budget (from starter tools around $20/mo to enterprise suites).

- My step-by-step workflow for running a monthly audit.

- How to translate messy AI outputs into a clean action plan.

Quick answer: what LLM visibility auditing actually is (in one paragraph)

Think of an LLM visibility audit like sending a team of mystery shoppers into a store, over and over again. Because AI models are probabilistic, you can’t just ask once. An audit involves systematically prompting platforms (like ChatGPT or Perplexity) with hundreds of variations of user questions to measure how often your brand appears, whether the sentiment is positive, and if the citations are accurate. It turns anecdotal screenshots into directional data you can actually act on.

Why audit my brand’s visibility in LLMs (and which platforms I prioritize in the US)

The stakes are higher than just “brand awareness.” When a user asks an LLM for advice, they are often lower in the funnel—they want a specific solution, not a list of ten links. If an AI overview summarizes your product incorrectly (e.g., listing old pricing or missing features), you aren’t just losing a click; you’re losing a qualified lead who trusts the machine’s answer.

But you can’t audit everything. If I only had two hours this month to check visibility, I would prioritize based on where my specific audience asks questions. In the US market, the hierarchy usually looks like this:

- ChatGPT: The default for general knowledge and broad “how-to” queries.

- Google AI Overviews (Gemini): Critical for high-volume search traffic and “best X for Y” queries.

- Perplexity: Increasingly popular for research-heavy B2B evaluations because it cites sources heavily.

- Claude & Bing Copilot: Secondary priorities, though Claude is rising for technical/coding audiences.

Note: If you are in a regulated industry like finance or healthcare, treat this as a compliance audit. Hallucinations here aren’t just marketing fails; they are legal risks.

How GEO tools differ from traditional SEO tools (in plain terms)

If you are coming from an SEO background, you need to shift your mental model slightly:

- SEO tools track positions; GEO tools track patterns. In SEO, you are #1 or you aren’t. In GEO, you might appear in 60% of answers for a specific prompt.

- SEO tracks keywords; GEO tracks prompts. Humans don’t type “best crm” into chatbots; they type “I need a CRM for a small real estate team that integrates with Slack.” GEO tools simulate these long-tail conversations.

- SEO values backlinks; GEO values citations. It’s not just about who links to you, but which sources the AI actually reads and cites when it constructs an answer.

Platforms checklist: where I start for most SMB audits

If you are setting up your first audit, don’t overcomplicate it. Here is my standard SMB starting point:

- Pick 2 Core Platforms: Usually ChatGPT (for volume) and Perplexity (for citation tracking).

- Set a Baseline Date: AI models update constantly. Always log the date of your test.

- Select 10–20 Prompts: Mix high-level category questions with specific product comparisons.

- Define “Good”: A mention isn’t enough. Did they get the pricing right? Did they link to the correct landing page?

What I measure in an LLM audit: visibility, citations, sentiment, and hallucinations

When I first started auditing, I was just looking for my brand name. That was a mistake. I remember one audit where a client had a high “visibility score,” but when I read the actual outputs, the AI was recommending their 2019 product version, which no longer existed. They were visible, but they were bleeding leads.

To avoid that trap, I track four specific signals. Be aware that tool capabilities vary, and claims like “daily updates” should always be verified.

- Visibility Score / Share of Voice: The percentage of times your brand appears in the answer for a specific set of prompts.

- Citation Analysis: Which URLs is the AI pulling from? Are they your site, or third-party reviews like G2 or Reddit?

- Sentiment & Hallucinations: Is the description positive? Crucially, is it factual? Detecting hallucinations (misinformation) is the most valuable part of this process.

- Topic & Brand Relevance: How strongly does the model associate your brand with a specific topic? (e.g., does it link “Kalema” with “content intelligence” or just “AI writing”?)
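To make the first two signals concrete, here is a minimal Python sketch that computes share of voice and tallies cited domains from logged answers. It assumes a hypothetical log format (a list of dicts with `answer` and `citations` keys), and the brand names and URLs are made up. It uses naive case-insensitive substring matching, so a short brand name that appears inside another word would be over-counted; real tools may match entities differently.

```python
from collections import Counter
from urllib.parse import urlparse


def share_of_voice(records, brands):
    """Fraction of logged answers that mention each brand.

    Uses naive case-insensitive substring matching (an assumption; it can
    over-count short names that appear inside other words).
    """
    total = len(records)
    hits = {brand: 0 for brand in brands}
    for rec in records:
        text = rec["answer"].lower()
        for brand in brands:
            if brand.lower() in text:
                hits[brand] += 1
    return {brand: (hits[brand] / total if total else 0.0) for brand in brands}


def citation_domains(records):
    """Count cited domains across all answers, ignoring a leading 'www.'."""
    domains = Counter()
    for rec in records:
        for url in rec.get("citations", []):
            host = urlparse(url).netloc.lower()
            if host.startswith("www."):
                host = host[len("www."):]
            domains[host] += 1
    return domains


# Hypothetical log: one record per (prompt, run); brands and URLs are made up.
records = [
    {"answer": "Try Acme CRM or Zenith.", "citations": ["https://www.g2.com/acme", "https://acme.example/pricing"]},
    {"answer": "Zenith is popular with agencies.", "citations": ["https://www.reddit.com/r/crm"]},
    {"answer": "Acme CRM fits small teams.", "citations": ["https://www.g2.com/compare"]},
    {"answer": "Consider Zenith or Orbit.", "citations": []},
]

print(share_of_voice(records, ["Acme CRM", "Zenith", "Orbit"]))
print(citation_domains(records).most_common(3))
```

In this toy log, Acme CRM appears in 2 of 4 answers (0.5) and g2.com is the most-cited domain, which would suggest third-party reviews are shaping the answer more than your own site.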

Table: LLM visibility audit metrics and the action I take when they’re weak

| Metric | What it tells you | Action if the metric is weak |
|---|---|---|
| Presence Rate / Visibility | How often you appear in the consideration set. | Revise “About” pages and entity schema to clearly define what you do. |
| Citation Quality | Whether the AI uses your own content as a source. | Update data-rich content (pricing, specs) and add structured data (FAQPage). |
| Sentiment Score | Whether the AI describes you positively, neutrally, or negatively. | Audit third-party review sites and Reddit threads; AIs often pull sentiment from there. |
| Hallucination Rate (higher is worse) | How often the AI invents fake features or prices. | Correct the record immediately with clear, direct statements on your homepage and FAQs. |
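The hallucination rate is the easiest metric to automate for hard facts like price. A minimal sketch, assuming you keep a hypothetical ground-truth starting price and only check dollar amounts: an answer that quotes prices but never the correct one is flagged. The regex ignores thousands separators and pricing-tier context, so treat flags as candidates for human review, not verdicts.

```python
import re

# Matches "$29" and "$49.99"; does not handle "$1,200" (a deliberate simplification).
PRICE_RE = re.compile(r"\$(\d+(?:\.\d{2})?)")


def price_hallucination_rate(answers, true_price):
    """Share of price-quoting answers where no quoted price matches the true one."""
    priced = 0
    flagged = []
    for answer in answers:
        quoted = [float(p) for p in PRICE_RE.findall(answer)]
        if not quoted:
            continue  # no price mentioned, so the answer can't be wrong about it
        priced += 1
        if all(abs(q - true_price) > 0.005 for q in quoted):
            flagged.append(answer)
    rate = len(flagged) / priced if priced else 0.0
    return rate, flagged


# Hypothetical answers about a product whose real starting price is $29/mo.
answers = [
    "Acme CRM starts at $29/month.",
    "Acme CRM costs $19 per month.",
    "Acme CRM has a free tier.",
    "Plans run $49.99 and up.",
]
rate, flagged = price_hallucination_rate(answers, true_price=29)
print(f"{rate:.2f}", flagged)
```

Only answers that quote a price count toward the denominator here; that is a design choice, and you could instead divide by every answer that mentions the brand.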

My step-by-step workflow: running a repeatable LLM visibility audit (from baseline to fixes)

The biggest mistake I see teams make is treating this like a one-off experiment. Because LLM outputs are stochastic (the same prompt can produce a different answer on each run), a single screenshot proves nothing. You need a repeatable process that averages out the noise.
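One way to see why a single run proves nothing is to put an error bar on the presence rate. The sketch below uses the Wilson score interval, a standard confidence interval for a proportion; it is my choice for illustration, not necessarily what any tool in this guide uses, and it assumes each run is independent. Appearing in 3 of 5 runs and in 30 of 50 runs both give a 60% presence rate, but the uncertainty differs sharply.

```python
import math


def wilson_interval(mentions, runs, z=1.96):
    """Approximate 95% Wilson score interval for a presence rate (mentions / runs)."""
    if runs == 0:
        return (0.0, 1.0)  # no data: any rate is possible
    p = mentions / runs
    denom = 1 + z**2 / runs
    center = (p + z**2 / (2 * runs)) / denom
    half = (z / denom) * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2))
    return (max(0.0, center - half), min(1.0, center + half))


for mentions, runs in [(3, 5), (30, 50)]:
    low, high = wilson_interval(mentions, runs)
    print(f"{mentions}/{runs}: {low:.2f} to {high:.2f}")
```

With five runs, the true rate could plausibly be anywhere from about 23% to 88%; fifty runs narrow that to roughly 46% to 72%. That is the statistical case for reruns and larger prompt sets.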

Here is the workflow I use. I recommend running this monthly for fast-moving industries and quarterly for everyone else.

Step 1–2: define scope (products, locations, competitors) and what “visibility” means for me

First, define the boundaries. If you sell globally, you must specify the region, as ChatGPT’s answers in the US often differ from those in the UK. I typically pick my brand, my top product, and 3–5 direct competitors.

Example Scope:

“Audit visibility for ‘Enterprise CRM’ queries in the US market. Success = appearing in the top 3 bullet points with accurate pricing.”
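A success definition like this can be checked mechanically. The sketch below assumes the answer is plain text with bullet or numbered lines, and that “accurate pricing” means the brand’s own bullet contains the exact `$price` string; the parsing rules, function names, and sample brands are all hypothetical.

```python
import re

# A line starting with "-", "*", "•", "1." or "1)" counts as a list item.
BULLET_RE = re.compile(r"^\s*(?:[-*•]|\d+[.)])\s+(.*)$")


def top_bullets(answer, n=3):
    """Return the text of the first n bullet or numbered items in an answer."""
    items = []
    for line in answer.splitlines():
        match = BULLET_RE.match(line)
        if match:
            items.append(match.group(1))
    return items[:n]


def meets_success(answer, brand, true_price, n=3):
    """True if the brand appears in a top-n bullet that also quotes the true price."""
    # \b stops "$29" from matching inside "$299".
    price_re = re.compile(r"\$" + re.escape(str(true_price)) + r"\b")
    for item in top_bullets(answer, n):
        if brand.lower() in item.lower():
            return bool(price_re.search(item))
    return False


answer = (
    "Here are my top picks:\n"
    "1. Zenith - $45/mo, strong automation\n"
    "2. Acme CRM - $29/mo, good for small teams\n"
    "3. Orbit - free tier available\n"
    "4. Nimbus - enterprise pricing\n"
)
print(meets_success(answer, "Acme CRM", 29))  # True
print(meets_success(answer, "Nimbus", 29))    # False: fourth bullet
```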

Step 3–5: build prompt sets (keyword-based + persona-based)

Don’t just use keywords; mimic real users. I like to use a mix of “category prompts” (broad) and “persona prompts” (specific pain points), inspired by the persona-based approach of tools like Gumshoe.AI.

Example Prompts:

- “What are the best CRM tools for a real estate agency with 5 agents?”

- “Compare HubSpot vs [My Brand] for email marketing features.”

- “I need a tool that handles automated invoicing and integrates with Stripe. What do you recommend?”

- “Is [My Brand] compliant with GDPR?”

- “What are the downsides of using [Competitor X]?”
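A small template grid keeps a prompt set consistent from month to month. This sketch assumes hypothetical templates, personas, and brand names modeled on the examples above; swap in your own. Category prompts cross each template with each persona, and comparison prompts cross each competitor with each feature you care about.

```python
from itertools import product

# Hypothetical templates modeled on the example prompts above.
CATEGORY_TEMPLATES = [
    "What are the best {category} tools for {persona}?",
    "I run {persona}. Which {category} would you recommend?",
]
COMPARISON_TEMPLATE = "Compare {competitor} vs {brand} for {feature}."
DOWNSIDE_TEMPLATE = "What are the downsides of using {competitor}?"


def build_prompt_set(brand, category, personas, competitors, features):
    """Expand templates into a fixed, repeatable list of audit prompts."""
    prompts = [
        t.format(category=category, persona=p)
        for t, p in product(CATEGORY_TEMPLATES, personas)
    ]
    prompts += [
        COMPARISON_TEMPLATE.format(competitor=c, brand=brand, feature=f)
        for c, f in product(competitors, features)
    ]
    prompts += [DOWNSIDE_TEMPLATE.format(competitor=c) for c in competitors]
    return prompts


prompts = build_prompt_set(
    brand="Acme CRM",
    category="CRM",
    personas=["a real estate agency with 5 agents", "a solo consultant"],
    competitors=["HubSpot", "Zenith"],
    features=["email marketing features"],
)
for prompt in prompts:
    print(prompt)
```

Keeping the templates in code (or a sheet) means next month’s audit runs the exact same prompts, so changes in the results reflect the models rather than your wording.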

Step 6–8: run tests consistently and log outputs (the minimum viable documentation)

I’ve been burned by this before: running a great audit, finding a hallucination, and then realizing I didn’t save the exact text or the chat link. When I tried to show the Product team a week later, the AI gave a different answer, and I looked like I was crying wolf.

Always log the raw output. If you are doing this manually, use a spreadsheet with columns for: Date, Platform, Prompt, Answer Text (Full), Mention (Y/N), Sentiment, and Citations. If you are using software, ensure it allows you to export this data. You need the evidence trail.
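If you log manually, a few lines of Python can append each run to a CSV with exactly those columns, which imports cleanly into Google Sheets. The function name and the mention rule (case-insensitive substring match) are assumptions; sentiment is entered by hand here, and the sample runs are hypothetical.

```python
import csv
import os
import tempfile
from datetime import date

FIELDS = ["Date", "Platform", "Prompt", "Answer Text (Full)",
          "Mention (Y/N)", "Sentiment", "Citations"]


def append_run(path, platform, prompt, answer, brand, sentiment, citations, run_date=None):
    """Append one audit run to a CSV log, writing the header if the file is new."""
    row = {
        "Date": (run_date or date.today()).isoformat(),
        "Platform": platform,
        "Prompt": prompt,
        "Answer Text (Full)": answer,  # store the full text, not a summary
        "Mention (Y/N)": "Y" if brand.lower() in answer.lower() else "N",
        "Sentiment": sentiment,
        "Citations": " | ".join(citations),
    }
    is_new = not os.path.exists(path) or os.path.getsize(path) == 0
    with open(path, "a", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=FIELDS)
        if is_new:
            writer.writeheader()
        writer.writerow(row)


# Demo against a temporary file; in practice, point this at your shared log.
log_path = os.path.join(tempfile.mkdtemp(), "llm_audit_log.csv")
append_run(log_path, "ChatGPT", "Best CRM for a 5-agent real estate team?",
           "Zenith and Acme CRM are both solid picks.", "Acme CRM", "Positive",
           ["https://www.g2.com/compare"], run_date=date(2025, 1, 6))
append_run(log_path, "Perplexity", "Best CRM for a 5-agent real estate team?",
           "Zenith is the usual choice.", "Acme CRM", "Neutral", [],
           run_date=date(2025, 1, 6))

with open(log_path, newline="", encoding="utf-8") as f:
    rows = list(csv.DictReader(f))
print([(r["Platform"], r["Mention (Y/N)"]) for r in rows])
```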

Step 9–10: score findings and translate them into an action backlog

Once you have the data, prioritize it. I use a simple Impact × Effort matrix.

- High Impact, Low Effort: Updating your pricing page because ChatGPT is quoting 2022 rates. Do this today.

- High Impact, High Effort: Creating a brand new comparison hub to displace a competitor. Plan this for the quarter.
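The matrix translates into a simple sort. In this sketch, the two high-impact quadrants follow the rules above; the labels for the two low-impact quadrants are my own assumption, and the backlog items are hypothetical examples.

```python
from dataclasses import dataclass

# Quadrant labels in priority order. High-impact labels follow the text above;
# the low-impact labels are assumptions.
QUADRANTS = {
    ("High", "Low"): "Do this today",
    ("High", "High"): "Plan for the quarter",
    ("Low", "Low"): "Fill-in work",
    ("Low", "High"): "Deprioritize",
}
PRIORITY = list(QUADRANTS)  # dicts preserve insertion order, so this is the ranking


@dataclass
class BacklogItem:
    issue: str
    impact: str  # "High" or "Low"
    effort: str  # "High" or "Low"

    @property
    def quadrant(self):
        return QUADRANTS[(self.impact, self.effort)]


def prioritize(items):
    """Sort items by quadrant; ties keep their original order (sorted is stable)."""
    return sorted(items, key=lambda i: PRIORITY.index((i.impact, i.effort)))


backlog = [
    BacklogItem("No comparison hub vs. main competitor", "High", "High"),
    BacklogItem("Blog post cited with outdated screenshot", "Low", "Low"),
    BacklogItem("ChatGPT quotes 2022 pricing", "High", "Low"),
]
for item in prioritize(backlog):
    print(f"{item.quadrant}: {item.issue}")
```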

The best LLM audit software in 2026: tools by category + side-by-side comparison

The market for these tools is exploding. We have specialized startups (GEO-first), established SEO giants adding features, and budget-friendly options. Note: pricing and feature sets change overnight in this industry, so please verify details on vendor sites.

Category 1: GEO-first platforms (built for AI answer monitoring)

These tools are designed specifically for the non-deterministic nature of AI. Tools like Evertune AI, Ranketta, and cuemarc focus on statistical confidence. For example, Evertune AI reportedly processes over one million responses per brand per month to give you a score you can actually trust, rather than a random snapshot.

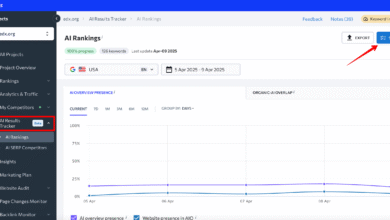

Category 2: SEO suites adding AI visibility (for teams already living in rank trackers)

If my team already pays for a suite, I start there before adding another vendor. Semrush and SEOmonitor have integrated AI visibility into their existing dashboards. This is great for correlation—you can see if a drop in traditional rankings matches a drop in AI visibility. However, they sometimes lack the deep persona-based modeling of the specialized tools.

Category 3: brand monitoring + chat visibility (when PR and reputation matter)

Brand24’s Chatbeat approaches this from a PR angle. Brand24 monitors mentions across the web, and Chatbeat extends that monitoring to chatbot responses. If your primary concern is reputation management or crisis monitoring rather than pure “ranking,” this is a strong contender.

Category 4: budget-friendly tools (good enough to start)

You don’t need an enterprise budget to start tracking. Rankscale (starting around $20/mo) and Otterly ($29/mo) offer excellent entry points. They might have lower prompt limits or fewer integrations, but for a solo marketer or SMB, they provide 80% of the value. I often recommend running a 30-day pilot on these tools to prove the value to stakeholders.

Table: LLM audit software comparison (features, pricing, and best use case)

| Tool | Best For | Key Metrics | Est. Start Price | Notes |
|---|---|---|---|---|
| Evertune AI | Enterprise / High-volume | Brand Relevance, Topic Ownership | Contact Sales | High statistical confidence; strictly GEO-focused. |
| Semrush (AIO) | SEO Teams | Share of Voice, Sentiment | Included in higher tiers | Best for integrated SEO+GEO workflows. |
| Ranketta | E-commerce / Retail | Product visibility, Sentiment | Contact Sales | Strong on product-level tracking. |
| Brand24 | PR & Reputation | Mention volume, Sentiment | ~$99/mo (Suite) | Focuses on reputation and listening. |
| Rankscale | SMBs / Freelancers | Rank tracking in LLMs | ~$20/mo | Great budget starter option. |
| Gumshoe.AI | Content Strategy | Persona-based prompts | Contact Sales | Reverse-engineers user intent effectively. |

If you only remember one thing: Start with a budget tool or your existing SEO suite to establish a baseline before investing in an enterprise platform.

How I choose LLM audit software: a beginner-friendly buying checklist (SMB vs enterprise)

Buying software in a new category is risky. Vendors often promise “proprietary algorithms” that are just black boxes. To protect your budget (and your reputation), use this checklist when evaluating tools.

Checklist: what I validate in a free trial or pilot

- Prompt Management: Can I customize the prompts, or am I stuck with generic keywords?

- Reruns/Volatility: Does the tool run each prompt multiple times and average the score? One run is not enough.

- Citation Capture: Does it list the URLs the AI cited? I can’t fix my SEO if I don’t know who the AI is reading.

- Export Data: Can I download the raw CSV? Dashboards are pretty, but I need raw data for my pivot tables.

- Regional Support: Can I test from the US, UK, and Germany separately?

Red flags: when tool data looks impressive but isn’t actionable

Be wary of tools that give you a single “Optimization Score” without explaining why. If the tool tells you your visibility is “45/100” but can’t show you the exact response text where you were mentioned (or omitted), it’s a vanity metric. Another red flag is a lack of history; if they don’t track changes over time, you can’t correlate your fixes with improvements.

Turning audit findings into wins: the content + technical fixes I implement next

Auditing is useless if you don’t fix the problems. Once I have my backlog, I move to implementation. This isn’t just about “writing better content”; it’s about structuring data so machines can read it confidently.

If your audit shows you are missing from comparison queries, you likely need a dedicated comparison page that fairly evaluates you against competitors. If you are being cited with wrong information, you need to update your product specs and wrap them in structured data. For teams struggling to produce high-quality, citation-ready content at scale, tools like an AI article generator can help build out these structural assets efficiently, provided you maintain strict editorial oversight.

Template: my LLM audit action backlog (Impact × Effort)

I keep this simple in Google Sheets or Notion:

- Issue: “ChatGPT claims we don’t offer API access.”

- Evidence: [Link to screenshot/log]

- Fix: Update the ‘Integrations’ page and add an FAQ item: “Do you offer an API?”

- Owner: Product Marketing

- Impact: High (Blocking Enterprise deals)

- Effort: Low

Where schema and on-page SEO fit in (without overdoing it)

Schema markup (like FAQPage, Organization, and Product) acts as a clarifier. It doesn’t guarantee a mention, but it can make your facts easier for search engines and AI systems to parse correctly. Think of it as labeling the ingredients for a chef; it helps them use the right elements when cooking up an answer.
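For example, the API question from the backlog template could be marked up as schema.org `FAQPage` JSON-LD. This sketch generates the markup in Python so it can be templated from a spreadsheet of questions; the answer text is a placeholder, and there is no guarantee that any particular AI system reads this markup.

```python
import json


def faq_jsonld(pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Placeholder answer text; replace it with your product's actual, verified facts.
markup = json.dumps(
    faq_jsonld([("Do you offer an API?", "[Describe your API access and link to the docs.]")]),
    indent=2,
)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

The visible FAQ text on the page should say the same thing as the markup; structured data that contradicts the page only adds confusion.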

Common mistakes in LLM visibility auditing (and how I fix them)

I’ve made plenty of mistakes so you don’t have to. Here are the most common ones:

- Testing Only Once: I once panicked because a client disappeared from ChatGPT, only to realize it was a random fluke. Re-running the prompt 5 minutes later brought them back. Fix: Always run prompts 3–5 times and average the result.

- Using SEO Keywords as Prompts: Typing “email marketing software” is not how users talk to chatbots. Fix: Use conversational prompts like “Help me choose an email tool for a Shopify store.”

- Ignoring the Sources: Focusing only on your own site. AIs read G2, Reddit, and Wikipedia heavily. Fix: Your audit must cover your reputation on third-party sites.

- Chasing Score Changes Without Context: Panicking over a 5% drop when the model itself just updated. Fix: Annotate model updates (e.g., “GPT-4o launch”) on your dashboard.
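Annotations are more useful when you compare averages across them instead of eyeballing a chart. This sketch assigns each score to the most recent annotated model update on or before its date and averages per period; the dates, scores, and the label “Model update A” are hypothetical.

```python
from bisect import bisect_right
from datetime import date
from statistics import mean


def mean_by_period(scores, annotations):
    """Average visibility scores per period between annotated model updates.

    scores: list of (date, score); annotations: list of (date, label), sorted by date.
    A score dated on an annotation day is counted in the new period.
    """
    update_dates = [d for d, _ in annotations]
    periods = {}
    for day, score in scores:
        i = bisect_right(update_dates, day) - 1
        label = annotations[i][1] if i >= 0 else "Before first annotation"
        periods.setdefault(label, []).append(score)
    return {label: mean(values) for label, values in periods.items()}


weekly_scores = [
    (date(2025, 1, 6), 0.40),
    (date(2025, 1, 13), 0.42),
    (date(2025, 1, 20), 0.35),
    (date(2025, 1, 27), 0.36),
]
annotations = [(date(2025, 1, 15), "Model update A")]
for label, avg in mean_by_period(weekly_scores, annotations).items():
    print(f"{label}: {avg:.3f}")
```

If a drop lines up with an annotated update, that is context for the report rather than a verdict on your content.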

Troubleshooting: when results swing wildly week to week

If your visibility graph looks like a rollercoaster, don’t panic. Swings are often caused by model updates or by sampling randomness (the model’s “temperature” setting). Look at the trend line over 30–60 days rather than obsessing over daily fluctuations. Directional truth is what we are after.
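A trailing moving average is the simplest way to draw that trend line. This sketch assumes one score per audit run, in date order; with weekly audits, a window of 4 to 8 points roughly covers the 30–60 day horizon. The first few points average over fewer values because the window has not filled yet, and the sample scores are hypothetical.

```python
from collections import deque


def rolling_mean(values, window):
    """Trailing moving average; the first window-1 points use a partial window."""
    buf = deque()
    total = 0.0
    smoothed = []
    for value in values:
        buf.append(value)
        total += value
        if len(buf) > window:
            total -= buf.popleft()  # drop the oldest score once the window is full
        smoothed.append(total / len(buf))
    return smoothed


weekly_visibility = [0.40, 0.55, 0.35, 0.50, 0.45, 0.60, 0.42, 0.58]  # hypothetical
print([round(x, 3) for x in rolling_mean(weekly_visibility, window=4)])
```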

FAQs + my recommended next steps (a simple 30-day plan)

Auditing LLMs feels new, but it’s just the next evolution of digital presence. The goal is to move from guessing to knowing.

Your 30-Day Starter Plan:

- Week 1: Select one tool (start with a budget option or free trial) and define your top 5 competitors.

- Week 2: Build a library of 20 conversational prompts and run your baseline audit.

- Week 3: Identify the top 3 “hallucinations” or gaps and ship content fixes (FAQs, schema updates).

- Week 4: Re-test and report on the “before and after” to leadership.

If you need help creating the kind of structured, authoritative content that earns citations in these new engines, consider using a specialized SEO content generator designed for this exact purpose.

FAQ: Why audit my brand’s visibility in LLMs?

Because your customers are already there. As search behavior shifts toward chat, being invisible in AI answers means being invisible to a growing segment of high-intent buyers. Accurate AI answers about your product can also reduce your support load.

FAQ: How do GEO tools differ from traditional SEO tools?

Traditional SEO tools measure your rank on a static list. GEO tools measure the probability of your brand being mentioned, cited, or recommended in a conversational answer. They are complementary, not interchangeable.

FAQ: Which platforms should I prioritize for auditing?

Start with ChatGPT due to its massive user base. Then, add Google Gemini (AI Overviews) if you rely on organic search traffic, and Perplexity if you are in B2B or tech, as it’s a favorite for researchers.

FAQ: Are there budget-friendly tools available?

Yes. Tools like Rankscale (approx. $20/mo) and Otterly (approx. $29/mo) are excellent for small teams. They allow you to track essential visibility metrics without the enterprise price tag.

FAQ: How often should I perform LLM visibility audits?

It depends on how fast your market moves. For fast-moving industries, a monthly cadence works well; for most others, quarterly is enough. Either cadence lets you catch hallucinations before they take hold without drowning you in data. If you are in a highly volatile niche (like AI tech itself), you might check weekly.

FAQ: What types of insights do these tools provide?

They give you a “Share of Voice” (how often you appear), sentiment analysis (are you recommended?), hallucination detection (is the info true?), and a list of sources the AI is citing. Together, these insights point you to the specific content to update.