Monitoring the GPT: Best AI Monitoring Tools for Tracking AI Search Mentions

Introduction: Why I Started Tracking AI Search Mentions (and Why You Might Need To)

It happened during a routine pipeline review. A prospect told our sales team, “I asked ChatGPT for the top three vendors in your category. It listed two of your competitors, but you weren’t there.” That was the moment it clicked for me: our traditional SEO was solid—we ranked number one for those keywords on Google—but we were invisible where it mattered most for this specific buyer.

If you are leading growth or SEO at a mid-market company or SMB, you are likely facing the same anxiety. As AI assistants increasingly shape discovery, brands risk being invisible—or worse, misrepresented—without ever knowing it. We can’t manage what we don’t measure.

In this guide, I’m not just listing software. I’m going to break down the difference between Generative Engine Optimization (GEO) and traditional SEO, compare the best AI monitoring tools available right now, and walk you through the exact workflow I use to turn those insights into content updates. This is about moving from “guessing” to knowing.

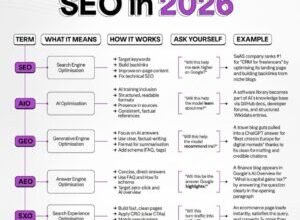

GEO vs. SEO: What AI Visibility Monitoring Is (and Isn’t)

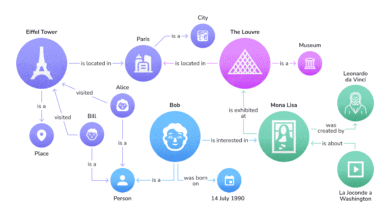

To understand why we need specialized tools, we have to respect the medium. Generative Engine Optimization (GEO) is fundamentally different from SEO. If SERP rankings are like stocking products on a supermarket shelf, AI search mentions are like a concierge recommendation at a hotel. The concierge (the AI) creates a custom answer based on training data and live retrieval.

Here is how I distinguish the two when explaining this to stakeholders:

- SEO Tools (Semrush, Ahrefs): Tell me where I rank on a static list of links. They track position, volume, and clicks.

- AI Visibility Monitoring: Tells me if I am part of the narrative. It tracks share of voice, sentiment, citation frequency, and prompt-level recommendations.

Why traditional rank trackers fail here:

AI answers are dynamic. If I ask Gemini, “Best CRM for small business,” the answer might change based on the phrasing, the day, or the model version. Furthermore, AI models hallucinate. I’ve seen AI answers claim that a brand offers features it doesn’t actually have. SEO tools cannot catch that reputation risk; only dedicated LLM brand monitoring can.

How AI Mention Tracking Tools Actually Collect Data

When evaluating these tools, the first question I ask is: “How are you getting this data?” This isn’t just technical trivia; it affects the accuracy of your reporting. Generally, tools fall into two camps: API-based and scraping/simulation.

| Method | Pros | Cons | Best For |
|---|---|---|---|
| Direct API Queries | Fast, cost-effective, easy to scale across thousands of prompts. | May not perfectly reflect the “web browsing” experience of a real user; lacks some live web retrieval context. | High-volume baselining and trend tracking. |
| Interface Scraping / Simulation | Mimics actual user experience (e.g., using ChatGPT with Search enabled). | Slower, more expensive, higher risk of breaking when AI platforms update UIs. | Deep-dive audits and verifying specific citation sources. |
| Panel / User Data | Real data from real humans (often via browser extensions). | Limited scale; privacy concerns; smaller sample sizes. | Understanding broad consumer behavior trends. |

For most US-based marketing teams, a hybrid or API-based approach usually offers the best balance of cost and cross-model tracking. I treat these results as directional signals rather than absolute truth. If a tool tells me my visibility dropped 10% on Claude, I assume the trend is real, even if the exact percentage varies slightly.

Metrics That Matter: What I Track to Measure AI Brand Performance

When I first started, I tried to track everything. That was a mistake. Now, I focus on a simple dashboard that answers: “Are we there?” and “Is it good?”

- Share of Voice in AI (SoV): The percentage of times your brand appears in answers for your target prompts. How I use it: This is my headline metric for leadership.

- Brand Relevance: How strongly the AI associates your brand with a specific topic (e.g., “email marketing”). How I use it: To see if our positioning is sticking.

- Citation Frequency: How often the AI links to your site as a source. How I use it: This is the new backlink. High citations usually correlate with referral traffic.

- Hallucination / Accuracy Rate: The percentage of answers containing false information about pricing or features. How I use it: Immediate flag for the product or comms team.

- Sentiment Analysis: Is the mention positive, neutral, or negative? How I use it: I honestly ignore minor fluctuations here. I only look for deep negatives.

- Visibility Decay: How quickly your brand drops out of answers over time. How I use it: To time our content refreshes.

Note on Hallucination Detection: This is critical. I’ve seen hallucination detection save a deal when we realized an AI was telling prospects our software didn’t integrate with Salesforce (it did). We fixed the public documentation, and the AI answer corrected itself within weeks.
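To make the metric definitions above concrete, here is a minimal Python sketch of how three of them could be computed from logged answers. The `AnswerRecord` schema, the brand names, and the `.example` domains are all hypothetical. I also assume the inaccuracy rate is measured only over answers that mention the brand, which is my own choice of denominator rather than any tool's documented method.

```python
from dataclasses import dataclass, field


@dataclass
class AnswerRecord:
    """One AI answer for one tracked prompt (hypothetical schema)."""
    prompt: str
    brands_mentioned: list[str]
    cited_domains: list[str] = field(default_factory=list)
    has_false_claim: bool = False  # set by a human reviewer or a fact checker


def share_of_voice(records, brand):
    """Fraction of answers that mention the brand at all."""
    if not records:
        return 0.0
    return sum(brand in r.brands_mentioned for r in records) / len(records)


def citation_frequency(records, domain):
    """Fraction of answers that cite the given domain as a source."""
    if not records:
        return 0.0
    return sum(domain in r.cited_domains for r in records) / len(records)


def inaccuracy_rate(records, brand):
    """Among answers mentioning the brand, the fraction flagged as false."""
    mentioned = [r for r in records if brand in r.brands_mentioned]
    if not mentioned:
        return 0.0
    return sum(r.has_false_claim for r in mentioned) / len(mentioned)


# Illustrative data only; none of these answers are real.
records = [
    AnswerRecord("best crm for small business", ["Acme", "Rival"], ["acme.example"]),
    AnswerRecord("crm that integrates with slack", ["Rival"]),
    AnswerRecord("acme crm pricing", ["Acme"], ["reviews.example"], has_false_claim=True),
    AnswerRecord("best alternative to rival", ["Acme"], ["acme.example"]),
]

print(f"Share of voice:     {share_of_voice(records, 'Acme'):.0%}")
print(f"Citation frequency: {citation_frequency(records, 'acme.example'):.0%}")
print(f"Inaccuracy rate:    {inaccuracy_rate(records, 'Acme'):.0%}")
```

With this toy data the brand appears in three of four answers, is cited in two, and one of its three mentions carries a false claim. Real tools will define these ratios differently, so treat the numbers as directional.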

Best AI Monitoring Tools: A Practical Comparison (SMB vs. Enterprise)

The market is split. You have agile startups focusing purely on GEO, and established SEO giants adding AI features. Here is how the leading AI monitoring tools stack up based on my research and experience.

| Tool | Best For | Models Covered | Key Features | Price Range | Limitations |
|---|---|---|---|---|---|
| Otterly.ai | SMBs & Agencies | ChatGPT, Gemini, Claude, Perplexity | Simple UI, monitoring focused, Slack alerts. | ~$29 – $200/mo | Less depth on “why” you ranked; primarily tracking. |
| Siftly | Growth Teams | Major LLMs | Claims to boost AI mentions; competitor intel; sales cycle impact. | Mid-range | Focus is more on optimization advice than broad enterprise reporting. |
| Evertune AI | Brand Managers | ChatGPT, Perplexity, Gemini | Deep statistical significance (high volume runs), Topic Relevance metrics. | Enterprise / Custom | Can be overkill for small teams just starting out. |
| Ranketta | eCommerce / Product | LLMs + Search | Product-level visibility, source citation tracking. | ~€100 – €500/mo | Newer to US market; focused heavily on product visibility. |
| Semrush (Enterprise AIO) | Existing Semrush Users | ChatGPT, Google SGE | Integrated into SEO workflow, Share of Voice, Shopping analytics. | Enterprise Add-on | Requires existing Semrush subscription; enterprise pricing tier. |
| Profound | Enterprise Comms | ChatGPT, Perplexity, Gemini | Narrative control, publisher coverage, reputation risk focus. | Custom / High | Geared toward corporate communications rather than pure SEO execution. |
| LLM Tracker | Technical SEOs | 50+ Models | Real-time alerts, massive model coverage, 99.9% uptime. | Tiered | UI is more utilitarian; data-heavy. |

A simple decision framework: pick your “must-haves” first

- Coverage: Do you need to track niche models (Llama, Grok) or just the big three (ChatGPT, Gemini, Perplexity)?

- Actionability: Do you just want a report (reporting-only), or do you want the tool to tell you which content to update (optimization guidance)?

- Alerting: If you are a small team, Slack/Teams integration is non-negotiable. You won’t log in every day.

Budget reality: what you can get under $200/month vs enterprise pricing

If you are scrappy, tools like Otterly.ai or TryGrav.ai can get you started for under $100/month. You will get baseline tracking and alerts, which is often enough to find your biggest gaps. Enterprise GEO platforms (like Evertune or Profound) often run into the thousands per month because they offer features like “share of voice” across millions of simulated queries and dedicated support. Don’t overbuy early; prove the value with a cheaper tool first.

My Step-by-Step Workflow: From AI Mentions to Content and SEO Improvements

Buying a tool doesn’t fix your visibility. You have to do the work. Here is exactly how I execute a GEO optimization cycle. This usually takes me about 2–3 hours to set up, and then 30 minutes a week to monitor.

Step 1–2: Define goals + build a prompt library that mirrors real customer questions

Don’t just track your brand name. I use a simple formula to build my prompt library:

[Role] + [Problem] + [Constraints] + [Ask]

Example: “I am a VP of Sales (Role) looking to reduce admin time (Problem). What is the best CRM (Ask) for a 10-person team (Constraint) that integrates with Slack (Constraint)?”

I cluster these prompts into categories: Awareness (What is X?), Consideration (Best tools for X), and Evaluation (X vs Y). For beginners, start with 20–30 high-intent prompts.
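If you keep the prompt library in code rather than a document, the formula and the three clusters above could be sketched like this. The `PromptSpec` class, the stage labels, and the second example prompt (including the brand names in it) are hypothetical and only there for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptSpec:
    """One library entry built from Role + Problem + Constraints + Ask."""
    stage: str  # "awareness", "consideration", or "evaluation"
    role: str
    problem: str
    constraints: tuple[str, ...]
    ask: str

    def render(self) -> str:
        """Assemble the prompt text, placing the ask before its constraints."""
        question = " ".join([self.ask, *self.constraints])
        return f"I am a {self.role} looking to {self.problem}. {question}?"


def group_by_stage(specs):
    """Cluster rendered prompts by funnel stage."""
    grouped = defaultdict(list)
    for spec in specs:
        grouped[spec.stage].append(spec.render())
    return dict(grouped)


library = [
    PromptSpec(
        stage="consideration",
        role="VP of Sales",
        problem="reduce admin time",
        constraints=("for a 10-person team", "that integrates with Slack"),
        ask="What is the best CRM",
    ),
    PromptSpec(
        stage="evaluation",
        role="RevOps lead",
        problem="consolidate our sales stack",
        constraints=("for a team already using Slack",),
        ask="How does Acme CRM compare with Rival CRM",
    ),
]

for stage, prompts in group_by_stage(library).items():
    print(stage, "->", prompts)
```

Keeping the parts separate makes it easy to swap one constraint or role at a time and see which variation changes the answer.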

Step 3–5: Establish a baseline, set alerts, and diagnose citations + inaccuracies

Run your initial check. Do not panic if the data is noisy. I look for the “Zeroes”—prompts where we should be recommended but aren’t. I also check citations. If ChatGPT recommends us but cites a random blog post from 2019, that’s a risk. I set up Slack alerts for negative sentiment or “competitor only” mentions so I can react fast.
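Here is a rough Python sketch of how the “Zeroes” check and a “competitor only” alert might look once you have baseline data exported from a tool. The data layout, brand names, and function names are hypothetical. The only outside detail I rely on is that a Slack incoming webhook accepts a JSON body with a `text` field; the sketch builds that body but deliberately does not send it.

```python
import json


def classify_answer(brands_mentioned, brand, competitors):
    """Label one answer as 'mentioned', 'competitor_only', or 'absent'."""
    mentioned = set(brands_mentioned)
    if brand in mentioned:
        return "mentioned"
    if mentioned & set(competitors):
        return "competitor_only"
    return "absent"


def find_zeroes(baseline, brand):
    """Prompts where the brand never appears in any sampled answer."""
    return [
        prompt
        for prompt, answers in baseline.items()
        if not any(brand in answer for answer in answers)
    ]


def slack_payload(prompt, status):
    """Build the JSON body for a Slack incoming webhook (not sent here)."""
    return json.dumps({"text": f"AI visibility alert [{status}]: {prompt}"})


# Each prompt maps to the brand lists extracted from its sampled answers.
baseline = {
    "best crm for a 10-person team": [["Rival", "Other"], ["Rival"]],
    "crm that integrates with slack": [["Acme", "Rival"], ["Rival"]],
    "what is a crm": [[], []],
}
brand, competitors = "Acme", ["Rival", "Other"]

zeroes = find_zeroes(baseline, brand)
alerts = [
    slack_payload(prompt, "competitor_only")
    for prompt in zeroes
    if all(
        classify_answer(answer, brand, competitors) == "competitor_only"
        for answer in baseline[prompt]
    )
]

print("Zeroes:", zeroes)
print("Alerts:", alerts)
```

In this toy baseline, the generic “what is a crm” prompt is a zero but not an alert, because no competitor is recommended there either. That distinction helps me decide which gaps are urgent.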

Step 6–8: Turn insights into a content plan (pages to create, update, and retire)

This is where the magic happens. If I see we are missing from “Best AI writing tools” prompts, I know I need to update my product pages or create a comparison guide.

To speed this up, I use the AI SEO tool from Kalema to analyze the top-ranking content for those entities. Then, I use the AI article generator to draft structured, fact-heavy sections (tables, lists, clear definitions) that LLMs love to cite. If I need to roll out updates across multiple cluster pages, the Automated blog generator helps me publish these “AI-citable” updates faster than my manual workflow allowed.

Step 9: Validate changes with before/after tests (without fooling yourself)

After updating the content, I wait two weeks and re-run the prompts. I track this in a simple spreadsheet:

Date | Prompt | Model | Mentioned? (Y/N) | Citation Source | Notes

Expect volatility. Some weeks your visibility drops for no clear reason. Look for trends over 30–60 days, not single data points.
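If the spreadsheet is exported as CSV, a before/after comparison can be sketched in a few lines of Python. The rows below are made-up sample data using the same columns as my tracking sheet, and the cutoff date stands in for the day the content update went live.

```python
import csv
import io
from datetime import date

# Illustrative log; the dates, prompts, and sources are invented.
LOG = """Date,Prompt,Model,Mentioned? (Y/N),Citation Source,Notes
2025-01-06,best crm for a 10-person team,ChatGPT,N,,baseline
2025-01-06,best crm for a 10-person team,Gemini,N,,baseline
2025-01-06,crm that integrates with slack,ChatGPT,Y,reviews.example,baseline
2025-01-06,crm that integrates with slack,Gemini,N,,baseline
2025-01-20,best crm for a 10-person team,ChatGPT,Y,acme.example,after update
2025-01-20,best crm for a 10-person team,Gemini,N,,after update
2025-01-20,crm that integrates with slack,ChatGPT,Y,acme.example,after update
2025-01-20,crm that integrates with slack,Gemini,Y,acme.example,after update
"""


def load_rows(text):
    """Parse the CSV export into a list of dicts keyed by column name."""
    return list(csv.DictReader(io.StringIO(text)))


def mention_rate(rows):
    """Share of rows marked Y, or None when there is nothing to measure."""
    if not rows:
        return None
    return sum(r["Mentioned? (Y/N)"] == "Y" for r in rows) / len(rows)


def before_after(rows, cutoff):
    """Split rows at the cutoff date and return both mention rates."""
    before = [r for r in rows if date.fromisoformat(r["Date"]) < cutoff]
    after = [r for r in rows if date.fromisoformat(r["Date"]) >= cutoff]
    return mention_rate(before), mention_rate(after)


before, after = before_after(load_rows(LOG), cutoff=date(2025, 1, 13))
print(f"Mention rate before: {before:.0%}, after: {after:.0%}")
```

Two checkpoints are only a starting point. To avoid fooling yourself, keep appending rows and compare rates over the full 30–60 day window.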

Common Mistakes I See (and How to Fix Them)

I’ve made plenty of mistakes in this new field. Here are the ones to avoid so you don’t waste budget.

Mistake 1: Treating one AI answer like ‘the truth’

I once panicked because Gemini ignored us in one query. My engineer ran it again, and we were #1. Prompt variance is real. Fix this by using tools that offer sampling (running the prompt 10+ times), so you measure how often you appear instead of trusting a single answer.
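To show what sampling buys you, here is a small Python sketch that runs one prompt repeatedly and reports a mention rate with a Wilson score interval. The `fake_ask` function is a stand-in that cycles through canned answers; in practice you would replace it with whatever call your tool or model API provides, which I am not assuming anything about here.

```python
import itertools
import math


def wilson_interval(hits, runs, z=1.96):
    """Wilson score interval for a proportion (z=1.96 is roughly 95%)."""
    if runs == 0:
        return 0.0, 1.0
    p = hits / runs
    denom = 1 + z * z / runs
    center = (p + z * z / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z * z / (4 * runs * runs)) / denom
    return max(0.0, center - half), min(1.0, center + half)


def sample_mentions(ask, prompt, brand, runs=10):
    """Ask the same prompt `runs` times and count answers naming the brand."""
    return sum(brand.lower() in ask(prompt).lower() for _ in range(runs))


# Stand-in for a real model call: 7 canned answers mention Acme, 3 do not.
_canned = itertools.cycle(
    ["Top picks: Acme, Rival."] * 7 + ["Top picks: Rival, Other."] * 3
)


def fake_ask(prompt):
    """Hypothetical model call that returns the next canned answer."""
    return next(_canned)


runs = 10
hits = sample_mentions(fake_ask, "Best CRM for small business", "Acme", runs)
low, high = wilson_interval(hits, runs)
print(f"Mentioned in {hits}/{runs} runs; plausible range {low:.0%} to {high:.0%}")
```

Even at 7 out of 10, the interval is wide. That is why I read a single missing mention as noise and only react to sustained shifts.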

Mistake 2: Only tracking brand-name prompts (missing category discovery)

It’s vanity to only track “What is [My Brand]?” The money is in non-brand prompts like “Best alternative to [Competitor].” If you aren’t tracking unbranded category searches, you are blind to most of the opportunity.

Mistake 3: Ignoring citations and source quality

Being mentioned without a link (or with a bad link) is a wasted win. Perform a “citation audit” monthly. If you are recommended, check why. Is the AI pulling from your pricing page or a third-party review? Optimize the source it prefers.
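A monthly citation audit can start as a simple tally of where the cited URLs live. This Python sketch uses only the standard library; the URLs and the `acme.example` domain are placeholders, and the two-bucket split (own site versus third party) is my simplification.

```python
from collections import Counter
from urllib.parse import urlparse


def classify_citation(url, own_domain):
    """Return 'own' for the brand's domain or its subdomains, else 'third_party'."""
    host = (urlparse(url).hostname or "").lower()
    if host == own_domain or host.endswith("." + own_domain):
        return "own"
    return "third_party"


def citation_audit(urls, own_domain):
    """Count citations per bucket and per cited page."""
    buckets = Counter(classify_citation(u, own_domain) for u in urls)
    pages = Counter(urlparse(u).hostname + urlparse(u).path for u in urls)
    return buckets, pages


# Citations collected from AI answers that recommended the brand (made up).
cited = [
    "https://www.acme.example/pricing",
    "https://reviews.example/acme-crm-review",
    "https://acme.example/docs/integrations",
    "https://reviews.example/acme-crm-review",
]

buckets, pages = citation_audit(cited, "acme.example")
print(buckets)
print(pages.most_common(1))
```

The most-cited page is the one worth optimizing first, whether it is your own or a third-party review you need to get corrected.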

Mistake 4: Chasing vanity metrics instead of business impact

Don’t obsess over “100% Share of Voice.” Focus on prompt-to-funnel mapping. Being visible on “Free cheap tool” might actually hurt you if you are an enterprise solution. Prioritize revenue-relevant topics.
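One way to keep the metric honest is to weight each prompt by how much it matters to revenue. This is a sketch of that idea in Python, not a standard formula: the weights are hypothetical scores you would assign yourself, and the prompts are invented.

```python
def weighted_share_of_voice(prompts):
    """Share of voice where each prompt counts in proportion to its weight.

    `prompts` is a list of (prompt, weight, visible) tuples.
    """
    total = sum(weight for _, weight, _ in prompts)
    if total == 0:
        return 0.0
    return sum(weight for _, weight, visible in prompts if visible) / total


# Weight 0 means the prompt attracts buyers we do not want to prioritize.
tracked = [
    ("enterprise crm with sso and audit logs", 5, True),
    ("best crm for a 500-person sales team", 3, False),
    ("free cheap tool", 0, True),
]

raw = sum(visible for _, _, visible in tracked) / len(tracked)
weighted = weighted_share_of_voice(tracked)
print(f"Raw share of voice: {raw:.0%}, revenue-weighted: {weighted:.1%}")
```

Here the raw number looks healthier than the weighted one because one of the two wins is on a prompt that does not map to the funnel.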

Mistake 5: Not setting up alerts and workflows (insights die in dashboards)

If the data stays in the tool, it’s useless. Connect AI mention alerts to Slack or email. Assign an owner. Even 30 minutes a week to review alerts ensures you catch reputation issues early.

Mistake 6: Forgetting brand safety and hallucination monitoring

Inaccuracies can linger. I treat hallucination detection as a brand safety drill. If the AI says our product costs $500 (and it’s actually $50), we lose leads. Correcting the record on your own site is the first step to fixing this.
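For pricing specifically, a crude automated check can flag answers worth a human look. The Python sketch below pulls dollar amounts out of an answer with a regular expression and compares them with a list of known prices. The answers and prices are invented, and the pattern only handles simple `$` amounts, so treat it as a first-pass filter rather than real hallucination detection.

```python
import re

# Matches amounts such as $50, $1,200, or $49.99 (US dollar format only).
PRICE_RE = re.compile(r"\$\s?(\d[\d,]*(?:\.\d+)?)")


def extract_prices(text):
    """Return every dollar amount found in the text as a float."""
    return [float(match.replace(",", "")) for match in PRICE_RE.findall(text)]


def price_mismatches(answer, known_prices, tolerance=0.01):
    """Prices quoted in the answer that match none of the known prices."""
    return [
        price
        for price in extract_prices(answer)
        if all(abs(price - known) > tolerance for known in known_prices)
    ]


known_prices = [50.0]  # hypothetical: the real list price from our pricing page

wrong = price_mismatches("Acme CRM costs $500 per month.", known_prices)
right = price_mismatches("Acme CRM starts at $50/month.", known_prices)

print("Flag for review:", wrong)
print("Looks consistent:", right)
```

Anything this flags still needs a person to confirm, since a quoted price might refer to a competitor or to a different plan.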

FAQs About AI Visibility Monitoring Tools

What is the difference between AI visibility monitoring and traditional SEO tools?

AI visibility monitoring tracks dynamic, generated answers from models like ChatGPT, focusing on share of voice and citations. Traditional SEO tools track static rankings on search engine results pages (SERPs). Think of SEO as winning a shelf spot, and AI monitoring as winning a verbal recommendation.

Which tools are best for small businesses vs. enterprises?

For SMB AI monitoring, I recommend starting with Otterly.ai or similar lightweight tools—they are affordable and cover the basics. For enterprise AI monitoring, platforms like Profound, Evertune, or Semrush Enterprise AIO offer the scale, governance, and reporting depth large teams need.

How do these platforms gather AI mention data?

Most use a mix of API queries (sending prompts to the model’s developer tools) and scraping (simulating a user on the web interface). Some also use statistical sampling to account for variance, while others rely on consumer panels for real-world user data.

What metrics should businesses track for AI brand performance?

Start simple. Track Share of Voice in AI (how often you appear), Brand Relevance (contextual alignment), and citation quality. Once you have a baseline, you can expand to sentiment and more complex topic authority metrics.

How can brands act on these insights?

Identify content gaps where competitors appear but you don’t. Create specific comparison pages, update FAQs with clear direct answers, and ensure your key product data (pricing, features) is easy for bots to read. Small, consistent updates to your site tend to improve how often AI answers match your brand to the prompts you care about.

Summary and Next Steps: Choosing the Best AI Monitoring Tools and Getting Value Fast

The shift to AI-driven discovery is not coming; it is here. Tracking your presence in these engines is now as critical as checking your Google rankings. To recap:

- GEO is distinct from SEO: It requires different metrics (Share of Voice, citations) and a different mindset (influencing the answer, not just ranking).

- Data method matters: Understand if your tool uses APIs or scraping, as this affects accuracy and cost.

- Workflow is king: The best tool is useless without a process to update content based on findings.

Your Action Plan for This Week:

- Pick one tool: Don’t overthink it. Start with a trial of a tool that fits your budget (e.g., Otterly for SMB, Semrush/Evertune for Enterprise).

- Build your “Top 20” Prompts: Create a list of the 20 most critical questions your customers ask before buying.

- Run a Baseline: Document where you stand today. Are you mentioned? Are you accurate?

- Set a Weekly Review: Block 30 minutes on Friday to review alerts and assign one content fix.

By taking these steps, you move from anxiety about the “black box” of AI to having a clear, actionable strategy for Generative Engine Optimization.