How to Track Rank in Large Language Models: A Beginner’s Guide to LLM Visibility Metrics

Introduction: Why I’m learning how to track rank in large language models (and why you should too)

A few months ago, a prospect told our sales team they explicitly asked ChatGPT for the “best tools for [our category]” and we simply didn’t exist in the answer. We rank #3 on Google for that exact term. I kept refreshing my rank tracker, staring at that green “3” on the dashboard, realizing for the first time that my dashboard was measuring a game that fewer people were playing.

This isn’t just a hypothetical shift. Consumer adoption of AI tools grew from 14% to 29.2% between early 2024 and mid-2025. If you are leading SEO or content strategy today, “visibility” is no longer just about 10 blue links; it’s about being cited, recommended, and accurately framed by generative engines.

In this guide, I’m going to walk you through the framework I use to measure brand visibility inside LLMs. I’ll share the exact metric stack, a repeatable step-by-step workflow, and the templates I use to report this to leadership. This isn’t about prompt hacking or spamming models; it’s about building a sustainable, newsroom-grade system to track how the world’s most powerful engines see your brand.

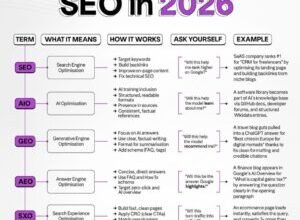

What does “rank inside an LLM” mean (and how it differs from Google rank)?

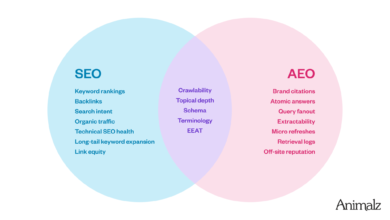

When we talk about “rank” in traditional SEO, we are talking about a linear position on a page: Slot 1, Slot 2, Slot 3. You win the slot, you likely win the click. But inside a Large Language Model (LLM), the concept of “rank” is fluid. It’s about ordering, mention frequency, and citation within a narrative answer.

If you are new to this, here’s the mental model I use: In Google, you are fighting for shelf space. In an LLM, you are fighting for a recommendation from a knowledgeable clerk.

Here is how the two worlds compare:

| Feature | Traditional SERP Rank | LLM Rank / Visibility |
|---|---|---|
| What it measures | Fixed position on a results page. | Probability of being mentioned or recommended. |
| Where it shows up | A list of blue links. | Within a paragraph, bulleted list, or table. |
| Primary Goal | Traffic (Clicks). | Share of Mind (Citations & Answers). |
| Volatility | Relatively stable day-to-day. | High variance based on prompt phrasing/seed. |

This shift matters because AI platforms are projected to influence about 14.5% of organic traffic by 2026. Even more importantly, the quality of the visibility is different. In a recent SaaS case study, a company ranking fourth on Google achieved a 67% LLM citation rate, while the #1 Google competitor had only 23%. The result? The #4 player was recommended more often in AI answers, even though it was less visible on Google.

Quick definitions I’ll use (visibility, citation, favorability, factuality, consistency, actionability)

- Visibility: Did the model mention your brand or product at all? (e.g., “Yes, Brand X is an option.”)

- Citation/Mention: The specific instance of your brand name appearing in the output.

- Favorability: Is the context positive, neutral, or negative? (e.g., “Good for beginners” vs. “Lacks advanced features.”)

- Factuality: Are the claims made about your product actually true? (e.g., Does it list a feature you actually have?)

- Consistency: Do you appear across multiple regenerations of the same prompt?

- Actionability: Does the model suggest a next step, like “Visit their site” or “Try a demo”?

The metric stack: what to measure when tracking rank inside LLMs

If I could only track three things this month, I wouldn’t worry about complex math. I would track Citation Rate, Sentiment, and Share of Voice. However, to really understand what is happening, we need a dual-layer strategy: the external metrics that impact business revenue, and the internal diagnostics that explain why models rank things the way they do.

As of late 2025, sophisticated enterprise teams are using these lenses to audit their performance. Here is the breakdown:

| Metric | What it tells me | Cadence | What ‘Good’ looks like |
|---|---|---|---|
| Citation Rate | How often you appear in relevant answers. | Weekly | >50% for core terms. |
| Effective Rank | Average position in list-based answers. | Weekly | Top 3 positions. |
| Share of Voice | Your mentions vs. competitor mentions. | Monthly | Higher than your market share. |
| Factuality Score | Are the hallucinations hurting us? | Monthly | 100% accurate on pricing/features. |

External metrics (what users experience in answers)

- Mention Frequency: Simply counting how many times your brand appears across a set of test prompts.

- Effective Rank (List Position): If the LLM generates a numbered list, what number are you? (Being #1 matters for click-through, just like Google).

- Share of Voice (SOV): If there are 10 total brand mentions in an answer, and you are 2 of them, your SOV is 20%.

- Sentiment Score: A basic Positive/Neutral/Negative tag applied to the text surrounding your mention.

- Stability: The percentage of times your rank remains consistent across 5-10 runs of the exact same prompt. (Don’t panic if this fluctuates; LLMs are probabilistic).

- Actionability: Whether the model provides a link or a navigational instruction to find you.
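To make these definitions concrete, here is a minimal Python sketch of Share of Voice, Mention Frequency, and Stability. It assumes you have already pulled brand mention counts and list positions out of each answer; the function names and the case-insensitive substring match are my own illustrative choices, not any tool’s API.

```python
from collections import Counter
from typing import Optional


def share_of_voice(mention_counts: dict[str, int], brand: str) -> float:
    """Your brand mentions divided by all brand mentions (yours + competitors')."""
    total = sum(mention_counts.values())
    if total == 0:
        return 0.0
    return mention_counts.get(brand, 0) / total


def mention_frequency(answers: list[str], brand: str) -> int:
    """Number of answers that mention the brand at least once.

    Assumption: a case-insensitive substring match is good enough; brand
    names that are also common words need stricter matching.
    """
    needle = brand.lower()
    return sum(1 for text in answers if needle in text.lower())


def stability(ranks: list[Optional[int]]) -> float:
    """Share of repeated runs that returned the most common rank.

    None means the brand was not listed in that run.
    """
    if not ranks:
        return 0.0
    _, count = Counter(ranks).most_common(1)[0]
    return count / len(ranks)


# The example from the text: 2 of 10 brand mentions is a 20% SOV.
print(share_of_voice({"Brand X": 2, "Competitor A": 5, "Competitor B": 3}, "Brand X"))  # 0.2
# Five runs of one prompt: rank 2 came back 3 times out of 5.
print(stability([2, 2, 3, 2, None]))  # 0.6
```

Here “consistent” means matching the most common rank; a more forgiving variant would count how often you stay in the top 3.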

Internal/diagnostic metrics (what research says correlates with quality and ordering)

Note: This is advanced context. You generally cannot compute these for closed models like GPT-4, because they require access to the model’s internal representations, but they are crucial for understanding the future of “Reference-Free Evaluation.”

Researchers have found that internal geometric properties of an LLM’s state correlate with how it ranks quality. Effective Rank and Intrinsic Dimensionality are metrics derived from the internal representations of the model (this Effective Rank describes the model’s internals and is unrelated to the list-position metric in the table above); studies in 2025 showed these consistently rank text quality in the same order across different LLMs.
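For the curious, here is what “effective rank” usually means in this line of research: one widely used definition (from Roy and Vetterli) takes the singular values of a matrix of hidden-state vectors, normalizes them into a probability distribution, and exponentiates their Shannon entropy. The sketch below assumes you already have those singular values, for example from `numpy.linalg.svd(hidden_states, compute_uv=False)` on an open-weights model; it illustrates the formula and is not the exact pipeline used in the 2025 studies.

```python
import math
from typing import Sequence


def effective_rank(singular_values: Sequence[float], eps: float = 1e-12) -> float:
    """exp(Shannon entropy) of the L1-normalized singular values.

    For non-zero input, the result lies between 1 (all energy in one
    direction) and the number of non-zero singular values (evenly spread).
    """
    total = sum(singular_values)
    if total <= eps:
        return 0.0
    probs = [s / total for s in singular_values]
    # Skip (near-)zero entries: p * log(p) -> 0 as p -> 0.
    entropy = -sum(p * math.log(p) for p in probs if p > eps)
    return math.exp(entropy)


print(round(effective_rank([1.0, 1.0, 1.0, 1.0]), 6))  # 4.0: evenly spread
print(round(effective_rank([5.0, 0.0, 0.0]), 6))       # 1.0: a single direction
```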

Another emerging metric is Diff-eRank. It’s a rank-based evaluation that correlates with loss and accuracy metrics and tends to increase with model size. Think of it as checking the “shape” of the model’s understanding rather than just asking a human to grade the output. While you might not calculate these manually in a spreadsheet, they are the backend signals that future SEO tools will likely rely on.

How to track rank in large language models: my step-by-step workflow (beginner friendly)

You don’t need a data science degree to start tracking this. You need a spreadsheet, a list of prompts, and about two hours a week. Here is the exact loop I use: Define → Build → Run → Score → Report.

Step 1: Define the use case (brand discovery, comparison, troubleshooting, buying intent)

You cannot just ask “Who is [Brand]?” and expect useful data. You need to mimic the user journey. I bucket my tracking into four intents:

- Discovery: “What are the best CRM tools for small agencies?”

- Comparison: “HubSpot vs Salesforce for startups.”

- Troubleshooting: “How to fix email deliverability issues.” (Does the model recommend your tool as the fix?)

- Buying Intent: “Pricing for enterprise project management software.”

Step 2: Build a controlled query set (the “test suite”)

My first test suite was too small—I used 5 prompts and the results were noisy. I learned that you need volume to smooth out the randomness. Aim for 25–50 prompts to start.

- Do: Use natural language variants. Mix short queries (“best CRMs”) with long-tail questions.

- Do: Run buyer-intent queries weekly, as these fluctuate most.

- Don’t: Include your brand name in every prompt. You want to see if you appear organically in non-branded searches.
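If you keep the suite in code instead of (or alongside) a spreadsheet, a small validation pass catches these mistakes before you spend a run on them. This is a sketch under my own assumptions: the intent tags mirror the four buckets from Step 1, and the 20% ceiling on branded prompts is an arbitrary threshold you should tune.

```python
from collections import Counter
from dataclasses import dataclass

# Intent buckets from Step 1 (the tag names are illustrative).
INTENTS = {"discovery", "comparison", "troubleshooting", "buying_intent"}


@dataclass(frozen=True)
class SuitePrompt:
    prompt_id: str
    text: str
    intent: str
    branded: bool  # True if the prompt names our own brand


def validate_suite(suite: list[SuitePrompt], min_size: int = 25,
                   max_branded_share: float = 0.2) -> list[str]:
    """Return human-readable problems; an empty list means the suite looks OK."""
    problems = []
    counts = Counter(p.prompt_id for p in suite)
    dupes = sorted(pid for pid, n in counts.items() if n > 1)
    if dupes:
        problems.append(f"duplicate prompt IDs: {dupes}")
    unknown = sorted({p.intent for p in suite} - INTENTS)
    if unknown:
        problems.append(f"unknown intents: {unknown}")
    missing = sorted(INTENTS - {p.intent for p in suite})
    if missing:
        problems.append(f"no prompts for intents: {missing}")
    if len(suite) < min_size:
        problems.append(f"only {len(suite)} prompts; aim for 25-50")
    if suite:
        branded_share = sum(p.branded for p in suite) / len(suite)
        if branded_share > max_branded_share:
            problems.append(f"{branded_share:.0%} of prompts are branded")
    return problems
```

Run it whenever you edit the suite; an empty list means you are ready to test.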

Step 3: Run across models and settings (and record everything)

I recommend starting with the “Big Three” for your region (usually GPT-4o, Claude 3.5, and Gemini). Crucially, you must keep your Temperature (randomness setting) consistent if you are using an API, or acknowledge the variance if using the web interface.

The Spreadsheet Log:

If you are doing this manually, your columns should look like this: Date | Prompt ID | Model Version | Output Text | Brand Mentioned? (Y/N) | Rank Position | Sentiment.
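Here is a minimal sketch of the Run step that writes exactly those columns as CSV. `call_model` is a hypothetical placeholder for whatever API client or manual copy-paste step you use; the list-position parser is a naive heuristic I’m assuming (it only counts lines that start with a digit), and Sentiment is left blank for manual scoring in Step 4.

```python
import csv
import io
from datetime import date
from typing import Callable, Optional

COLUMNS = ["Date", "Prompt ID", "Model Version", "Output Text",
           "Brand Mentioned? (Y/N)", "Rank Position", "Sentiment"]


def list_position(output: str, brand: str) -> Optional[int]:
    """1-based position of the first numbered list line that mentions the brand.

    Naive heuristic: any line starting with a digit counts as a list item.
    """
    position = 0
    for line in output.splitlines():
        stripped = line.strip()
        if stripped[:1].isdigit():
            position += 1
            if brand.lower() in stripped.lower():
                return position
    return None


def log_runs(prompts: dict[str, str], models: list[str], brand: str,
             call_model: Callable[[str, str], str],
             runs_per_prompt: int = 1) -> str:
    """Run every prompt on every model and return the log as CSV text."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(COLUMNS)
    today = date.today().isoformat()
    for prompt_id, text in prompts.items():
        for model in models:
            for _ in range(runs_per_prompt):
                output = call_model(model, text)
                mentioned = brand.lower() in output.lower()
                rank = list_position(output, brand)
                writer.writerow([
                    today, prompt_id, model, output,
                    "Y" if mentioned else "N",
                    rank if rank is not None else "",
                    "",  # Sentiment: scored by hand in Step 4
                ])
    return buffer.getvalue()
```

In practice you would write to a file opened with `newline=""`, as the `csv` module documentation recommends, and record the full model version string rather than a family name.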

Step 4: Score outputs (rank, mentions, sentiment, factuality, and stability)

This is where the rubber meets the road. I use a simple rubric to turn text into data.

| Signal | Score (0-2) | Example |
|---|---|---|
| Presence | 0 = No, 1 = Passing mention, 2 = Core recommendation | “Try [Brand X]” = 2. |
| Rank | 0 = Not listed, 1 = Bottom half, 2 = Top 3 | Listed #2 in a bulleted list. |
| Sentiment | 0 = Negative, 1 = Neutral, 2 = Positive/Glowing | “Highly reliable” = 2. |

I’d rather have consistent neutral mentions (score 1) across 100 prompts than occasional #1 rankings (score 2) that disappear the next day. Stability is the long-game metric.
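The rubric translates directly into a few small functions. One assumption to flag: “1 = Bottom half” leaves some positions (like #4 in a long list) undefined, so this sketch scores any listed position outside the top 3 as 1. Sentiment is only scored when the brand actually appears.

```python
from typing import Optional

SENTIMENT_SCORES = {"negative": 0, "neutral": 1, "positive": 2}


def presence_score(mentioned: bool, core_recommendation: bool) -> int:
    """0 = no mention, 1 = passing mention, 2 = core recommendation."""
    if not mentioned:
        return 0
    return 2 if core_recommendation else 1


def rank_score(position: Optional[int]) -> int:
    """0 = not listed, 1 = listed outside the top 3 (assumption), 2 = top 3."""
    if position is None:
        return 0
    return 2 if position <= 3 else 1


def score_output(mentioned: bool, core_recommendation: bool,
                 position: Optional[int],
                 sentiment: Optional[str]) -> dict[str, Optional[int]]:
    """Score one model answer with the 0-2 rubric."""
    return {
        "presence": presence_score(mentioned, core_recommendation),
        "rank": rank_score(position),
        # Sentiment only applies when the brand is mentioned.
        "sentiment": SENTIMENT_SCORES[sentiment] if mentioned and sentiment else None,
    }


# "Listed #2 in a bulleted list", neutral tone, passing mention:
print(score_output(True, False, 2, "neutral"))
# {'presence': 1, 'rank': 2, 'sentiment': 1}
```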

Templates and tables: how I calculate “citation rate” and share-of-voice (with an example)

When I present this to stakeholders, I never show the raw log. They don’t care about prompt #34. They care about Share of Voice. Here is a sample of how I aggregate the data for a Project Management tool.

Sample Data Log (Snippet):

| Prompt | Model | Mentioned? | Rank Position | Notes |
|---|---|---|---|---|
| Best PM tool for agencies | GPT-4o | Y | 2 | Called “Great for visuals” |
| Free timeline software | GPT-4o | N | – | Competitor A listed first |
| Enterprise task management | GPT-4o | Y | 4 | Listed as “Expensive option” |

The Executive Summary Table:

| Model | Citation Rate | Avg Rank (when visible) | Stability |
|---|---|---|---|
| GPT-4o | 67% | 3.0 | High |
| Claude 3.5 | 40% | 1.5 | Medium |
| Gemini | 23% | 5.0 | Low |

In this example, even if we are ranking poorly on Google, a 67% citation rate on GPT-4o means we are dominating the conversation there. That’s a win we can take to the board.
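The aggregation itself is simple. Applied to just the three snippet rows, it happens to give the same 67% and 3.0 shown for GPT-4o: two of three prompts mention us, and the average of positions 2 and 4 is 3.0. A minimal sketch, assuming each log row is a plain (prompt, model, mentioned, position) tuple:

```python
from statistics import fmean
from typing import Optional

# The three GPT-4o rows from the sample log: (prompt, model, mentioned, position)
SAMPLE_LOG = [
    ("Best PM tool for agencies", "GPT-4o", True, 2),
    ("Free timeline software", "GPT-4o", False, None),
    ("Enterprise task management", "GPT-4o", True, 4),
]


def summarize(log, model: str) -> tuple[float, Optional[float]]:
    """Citation rate and average rank (only over answers where we appear)."""
    rows = [row for row in log if row[1] == model]
    if not rows:
        return 0.0, None
    citation_rate = sum(1 for row in rows if row[2]) / len(rows)
    positions = [row[3] for row in rows if row[2] and row[3] is not None]
    avg_rank = fmean(positions) if positions else None
    return citation_rate, avg_rank


rate, avg_rank = summarize(SAMPLE_LOG, "GPT-4o")
print(f"GPT-4o: citation rate {rate:.0%}, avg rank {avg_rank:.1f}")
# GPT-4o: citation rate 67%, avg rank 3.0
```

Averaging rank only over answers where you appear (the “when visible” column) keeps misses from distorting the number; the citation rate already captures them.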

A simple reporting cadence I use (weekly tests, monthly insights)

Consistency beats perfection here. If you try to test everything every day, you will burn out.

- Weekly Report: Run the “Buyer Intent” prompt set (10-15 high-value queries). Check for sudden drop-offs or new hallucinations.

- Monthly Report: Run the full 50-prompt suite. Analyze Share of Voice vs. Competitors. Identify content gaps (e.g., “We never show up for ‘integration’ queries”).
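If your suite lives in code, the cadence can be a simple filter. This sketch assumes each prompt is a dict carrying an `intent` tag and a hypothetical hand-assigned `priority` score; weekly runs take the highest-priority buyer-intent prompts, and monthly runs take everything.

```python
def prompts_for_run(suite: list[dict], cadence: str, weekly_limit: int = 15) -> list[dict]:
    """Select prompts for a weekly (buyer intent only) or monthly (full) run."""
    if cadence == "monthly":
        return list(suite)
    if cadence == "weekly":
        buying = [p for p in suite if p["intent"] == "buying_intent"]
        # Highest-value queries first; "priority" is a hand-assigned score.
        buying.sort(key=lambda p: p["priority"], reverse=True)
        return buying[:weekly_limit]
    raise ValueError(f"unknown cadence: {cadence!r}")
```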

The tooling layer: observability, logging, and governance for LLM rank tracking

As you scale this process, spreadsheets eventually break. If you are running thousands of tests or integrating LLM checks into your product, you need observability. This isn’t just about rank; it’s about cost and governance. You can’t optimize what you can’t reliably reproduce.

Tools like Portkey, Traceloop, and Langtrace are emerging to handle this logging layer. They track the “tokens in” and “tokens out,” giving you a paper trail of every generation. If you are also publishing content at scale to fix the gaps you find, using an automated blog generator can help you operationalize the content production, but you still need the logging to verify the impact.

What to log every time (minimum viable dataset)

I once ran a huge weekend test and forgot to log the model temperature. The results were wild, and I couldn’t replicate them. I had to throw the whole batch out. Learn from my fail. Always log:

- Prompt ID & Text: The exact string sent to the model, plus any system prompt.

- Intent Tag: (e.g., “Pricing,” “Comparison”).

- Model Provider & Version: (e.g., “gpt-4-0613”).

- Parameters: Temperature, top_p, and seed (if you set one).

- Tokens In/Out: For cost estimation.

- Latency: How long did the answer take?
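A minimal sketch of one log record as a Python dataclass, serialized as one JSON object per line (JSON Lines) so each batch can be appended to a file and reloaded later. The field names are my own; I’ve also included the system prompt and seed, which the noise and mistakes sections of this guide recommend recording.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class RunRecord:
    prompt_id: str
    prompt_text: str          # exact string sent to the model
    system_prompt: str        # instructions sent alongside the user query
    intent: str               # e.g. "pricing", "comparison"
    provider: str
    model_version: str        # e.g. "gpt-4-0613", never just "GPT-4"
    temperature: float
    top_p: float
    seed: Optional[int]       # None if no seed was set or supported
    tokens_in: int
    tokens_out: int
    latency_ms: float
    output_text: str


def to_jsonl_line(record: RunRecord) -> str:
    """Serialize one record as a single JSON line (newlines in text are escaped)."""
    return json.dumps(asdict(record), sort_keys=True)
```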

How to keep experiments clean (reduce noise)

If you change three things at once—the prompt, the model version, and the temperature—you won’t know what caused the rank change. Keep it scientific. Use fixed seeds where available. Separate your “marketing” prompts from your “evaluation” prompts so you don’t pollute your own dataset.
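One cheap way to enforce this is to diff the settings of two runs before comparing their results. A sketch, assuming each run’s settings are stored as a dict with the hypothetical keys shown (the model names below are example values only); if more than one controlled setting changed, the comparison can’t tell you which one caused the shift.

```python
# Settings that must stay fixed unless they are the thing being tested.
CONTROLLED = ("prompt_text", "model_version", "temperature", "top_p", "seed")


def changed_variables(baseline: dict, candidate: dict) -> list[str]:
    """Names of controlled settings that differ between two runs."""
    return [key for key in CONTROLLED if baseline.get(key) != candidate.get(key)]


def is_clean_comparison(baseline: dict, candidate: dict) -> bool:
    """True if at most one controlled setting changed."""
    return len(changed_variables(baseline, candidate)) <= 1


base = {"prompt_text": "best CRM tools", "model_version": "gpt-4o-2024-08-06",
        "temperature": 0.0, "top_p": 1.0, "seed": 42}
print(changed_variables(base, {**base, "temperature": 0.7}))  # ['temperature']
print(is_clean_comparison(base, {**base, "temperature": 0.7,
                                 "model_version": "gpt-4o-mini"}))  # False
```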

How to track rank in large language models and improve it (without chasing hacks)

There is a dark side to this. Research into methods like RAF (Rank Anything First) has shown that LLMs can be manipulated. RAF uses two-stage token optimization to subtly steer rankings using natural-sounding prompt perturbations. In tests on Llama-3.1-8B, RAF reduced the average rank of targeted items to 3.26 (a lower number means closer to the top of the list).

I mention this not so you can exploit it—that is a short-term game that will get patched—but so you understand that rank is fragile. This is why we measure stability. The best defense against volatility isn’t a hack; it’s strong Entity SEO.

To genuinely improve your standing, you need to be the most authoritative entity in the model’s training data. If you are using an AI SEO tool to assist your strategy, ensure it focuses on Entity optimization, not just keyword stuffing.

Content actions that tend to move the needle (beginner checklist)

Here is what I actually do when I see a drop in citation rate:

- Clarify “Who We Are”: I check our homepage and About page. Is our H1 clear? “We are the #1 project management tool for creative agencies.” Don’t be vague.

- Create Comparison Pages: Models love to cite “X vs Y” pages. If you don’t have one, the model will find a third-party review site instead.

- Strengthen Structured Data: Add Organization, Product, and FAQ schema. This is machine-readable food for the model.

- Consistent NAP: Ensure your Name, Address, and Phone/Product details are identical across Crunchbase, LinkedIn, and your site.

- Publish Answerable Content: Create FAQ blocks that directly answer the questions you are testing.
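For the structured-data item, here is a sketch that builds Organization and FAQPage JSON-LD from standard schema.org types and properties, then wraps it in the `<script type="application/ld+json">` tag that goes in your page’s HTML. The company name, URLs, and FAQ text are placeholders for a hypothetical company.

```python
import json


def organization_jsonld(name: str, url: str, description: str,
                        same_as: list[str]) -> dict:
    """schema.org Organization; sameAs links your official profiles."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        "sameAs": same_as,
    }


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """schema.org FAQPage built from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def script_tag(data: dict) -> str:
    """Embed JSON-LD in an HTML script tag."""
    return ('<script type="application/ld+json">'
            + json.dumps(data, indent=2) + "</script>")


# Placeholder values for a hypothetical company:
org = organization_jsonld(
    "ExampleCo",
    "https://www.example.com",
    "Project management software for creative agencies.",
    ["https://www.linkedin.com/company/your-company",
     "https://www.crunchbase.com/organization/your-company"],
)
print(script_tag(org))
```

Reusing the same name and description strings in every profile listed under `sameAs` also supports the consistent-NAP item above.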

Stability and safety: how I interpret volatility and possible manipulation

If your citation rate drops from 60% to 10% overnight on one model, don’t panic. Check if the model version changed. Rerun the test with fixed settings. If the drop persists across multiple models, you have a content problem (or a competitor has launched a massive campaign). If it’s just one model, it might be a retrieval glitch.
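These triage steps can be written down as a small decision function, so everyone on the team reads a drop the same way. A sketch under my own assumptions: citation rates are fractions per model, a “drop” means a fall of at least 0.2 (an arbitrary threshold), and you already know whether each provider changed model versions between runs.

```python
def triage_drop(before: dict[str, float], after: dict[str, float],
                version_changed: dict[str, bool],
                drop_threshold: float = 0.2) -> str:
    """Classify a citation-rate drop using the checks described in the text."""
    # A model missing from `after` is treated as a 0% citation rate.
    dropped = [m for m in before if before[m] - after.get(m, 0.0) >= drop_threshold]
    if not dropped:
        return "no significant drop"
    if any(version_changed.get(m, False) for m in dropped):
        return "model version changed: rerun with fixed settings before concluding"
    if len(dropped) > 1:
        return "drop across multiple models: likely a content or competitor problem"
    return f"drop on {dropped[0]} only: possibly a retrieval glitch; rerun to confirm"


before = {"gpt-4o": 0.6, "claude": 0.5, "gemini": 0.3}
after = {"gpt-4o": 0.1, "claude": 0.5, "gemini": 0.3}
print(triage_drop(before, after, version_changed={}))
# drop on gpt-4o only: possibly a retrieval glitch; rerun to confirm
```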

Common mistakes I see (and how I fix them) + FAQs + next steps

It’s easy to get lost in the data. Here are the traps I fell into early on, so you don’t have to.

Common mistakes & fixes (5–8 items)

- Mistake: Testing 5 prompts and calling it a strategy. Fix: Build a suite of 30+ prompts so a handful of random answers can’t swing your results.

- Mistake: Ignoring “Negative” mentions. Fix: Track sentiment. Being ranked #1 with a warning label is worse than not ranking.

- Mistake: Chasing daily fluctuations. Fix: Look at monthly trends. Models are probabilistic; noise is normal.

- Mistake: Not logging the system prompt. Fix: Always record the instructions given to the AI, not just the user query.

- Mistake: Assuming Google Rank = LLM Rank. Fix: Treat them as separate channels with separate KPIs.
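Why 30+ prompts instead of 5? A quick way to see it is a confidence interval around the citation rate. This sketch uses the Wilson score interval for a proportion and assumes each prompt is an independent yes/no trial, which is a simplification (related prompts tend to succeed or fail together).

```python
import math


def wilson_interval(hits: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion; z=1.96 gives roughly 95% coverage."""
    if n == 0:
        return 0.0, 1.0
    p = hits / n
    denom = 1 + z ** 2 / n
    center = (p + z ** 2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z ** 2 / (4 * n ** 2)) / denom
    return max(0.0, center - half_width), min(1.0, center + half_width)


# Same 60% citation rate, very different certainty:
for hits, n in [(3, 5), (18, 30)]:
    low, high = wilson_interval(hits, n)
    print(f"{hits}/{n}: {low:.0%} to {high:.0%}")
# 3/5: 23% to 88%
# 18/30: 42% to 75%
```

Even at 30 prompts the range is wide, which is another reason to watch monthly trends rather than single runs.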

FAQs (from real search questions)

What does rank inside an LLM actually mean?

It refers to the ordering of your brand or product within an AI-generated list, or the frequency with which you are cited as a solution. Unlike Google, there are no “pages,” only answers.

Can internal metrics really predict quality?

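Research suggests they can: studies report that metrics like Effective Rank and Intrinsic Dimensionality track text quality and rank it consistently across different LLMs. However, they require access to a model’s internal representations, which closed models like GPT-4 don’t expose, so they are currently out of reach for most businesses.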

Is prompt manipulation (like RAF) a real threat?

It is a technical vulnerability where optimized tokens can shift rankings. However, for most businesses, the biggest threat isn’t hackers; it’s simply having unclear content that the model ignores.

Why does Citation Rate matter more than SERP rank?

Because users often stop at the AI answer. If you are cited in the answer, you get the mindshare. If you are a link below the answer, you might never be seen.

Summary and next actions (recap + checklist)

Tracking rank in LLMs is about moving from “winning the click” to “winning the argument.” By monitoring your Citation Rate, Share of Voice, and Sentiment, you can measure performance that your traditional rank tracker never sees.

Your checklist for this week:

- Build a spreadsheet with the 30 queries your customers ask most.

- Run them through ChatGPT, Claude, and Gemini manually.

- Score your “Citation Rate” (Did we show up? Y/N).

- Identify the biggest gap (e.g., “We are invisible in comparison queries”).

- Use an AI article generator to draft the specific comparison pages or FAQs needed to fill that gap.

If you want a simple start: just do the prompt suite and the citation rate. That single percentage will tell you more about your future readiness than any keyword ranking ever could.