Best AI Tracking Software: AI Tracking Leaderboard for Accurate AI Overviews

I distinctly remember the moment tracking AI behavior went from a “nice-to-have” to a “crisis priority” for me. I kept seeing a mid-size SaaS client’s competitor being quoted in Google AI Overviews for queries that were specifically about my client’s product features. The traffic wasn’t just dropping; it was being misdirected based on factual errors generated by an LLM.

That experience clarified a messy reality: knowing your rankings isn’t enough anymore. You need to know what the AI is actually saying.

However, the market for the best AI tracking software is confusing right now. Vendors often conflate three very different problems: engineering reliability (is the server up?), model integrity (is the math drifting?), and search visibility (is ChatGPT recommending us?). If you are an SEO or Growth Ops Manager, buying the wrong one is an expensive mistake.

I built this guide and AI tracking leaderboard to cut through the hype. I’m not here to sell you a “magic bullet.” I’m here to help you build a newsroom-grade tracking stack that alerts you when AI accuracy drifts or your visibility drops—so you can actually fix it.

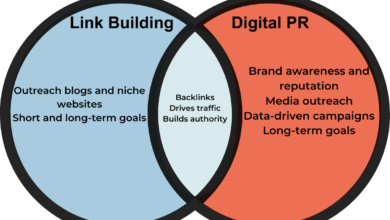

What “AI tracking” means in practice (and why it’s not the same as analytics)

If you are new to this, here is how I think about the landscape. “AI Tracking” is an umbrella term that usually refers to three distinct jobs. In 2026, you cannot treat these as the same thing, or your reporting will make no sense to your stakeholders.

I categorize the tools into these three buckets:

- AI Observability (The Infrastructure Monitor): This is for DevOps and SREs. It answers: “Is the system slow? Is the GPU overheating?” It uses AI observability tools to detect anomalies in logs and traces.

- Model Monitoring (The Gradebook): This is for ML Engineers. It answers: “Is the model getting dumber?” It tracks model drift detection, embedding drift, and RAG pipeline monitoring to ensure the output quality remains high.

- AI Search Visibility (The Reputation Tracker): This is for SEOs and Marketers. It answers: “How does my brand appear in Google AI Overviews or ChatGPT?” It measures share of voice and sentiment in generative results.
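To make “share of voice” concrete, here is a minimal sketch of how I would compute it by hand. It assumes share of voice means the fraction of AI answers that mention a brand, which is my working definition and not necessarily how any vendor calculates it. The brand names and answers are made up, and `share_of_voice` is a hypothetical helper.

```python
from collections import Counter


def share_of_voice(answers, brands):
    """Return {brand: fraction of answers that mention it}, case-insensitive."""
    counts = Counter()
    for answer in answers:
        text = answer.lower()
        for brand in brands:
            # Simple substring match; a real tool would need smarter entity matching.
            if brand.lower() in text:
                counts[brand] += 1
    total = len(answers)
    return {brand: (counts[brand] / total if total else 0.0) for brand in brands}


# Made-up answers collected from one run of a prompt set.
answers = [
    "For this use case, Acme and Globex are both solid choices.",
    "Most reviewers recommend Globex.",
    "Acme is the usual pick for small teams.",
    "There is no clear leader in this category.",
]
sov = share_of_voice(answers, ["Acme", "Globex", "Initech"])
print(sov)
```

Substring matching is crude (it will miss misspellings and catch unrelated words), but it is enough to sanity-check the numbers a visibility tool reports.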

Before we go further, here is a quick glossary of terms you will see in the dashboard screenshots:

- Drift: When the live data (or model output) moves away from the historical baseline, causing accuracy to drop.

- Embedding: The numerical representation of text. If these change unexpectedly, your search results break.

- RAG (Retrieval-Augmented Generation): The process where an AI looks up your documents to answer a question. Monitoring this ensures it pulls the right document.

- Prompt Set: A standardized list of questions you ask an AI every day to measure changes in its answers.

The 3 buckets I use: observability, model performance, and AI visibility

To keep your sanity, map your business needs to these owners:

- Bucket A (Observability): Owned by DevOps/SRE. Focus: Latency, cost, errors.

- Bucket B (Model Performance): Owned by ML Engineers. Focus: Accuracy, hallucination rate, retrieval quality.

- Bucket C (AI Visibility): Owned by SEO/Brand. Focus: AI search visibility tracking, citations, sentiment.

FAQ-level clarity: what differentiates AI observability tools from traditional monitoring?

A common misconception is that if you have Datadog for your servers, you are “monitoring AI.” Not necessarily. Traditional monitoring tells you if the application crashed. AI observability tools tell you why the application gave a wrong answer. They correlate infrastructure spikes with model behavior (like a sudden drop in confidence scores), which standard server logs simply miss.

AI Tracking Leaderboard (2026): the most accurate software for AI Overviews and beyond

There is no single “winner” because a brand manager needs different data than a machine learning engineer. Below is my segmented AI tracking leaderboard based on verified capabilities, scale, and accuracy.

I have scored these on how well they detect drift, run prompt monitoring, and provide actionable root cause analysis.

| Tool Name | Primary Focus | Standout Capability | Best For |
|---|---|---|---|
| Datadog / Dynatrace | Full-Stack Observability | Correlating infra metrics with AI model behavior | Enterprise DevOps & SRE |
| Arize AI | ML Model Performance | Embedding drift & RAG troubleshooting | ML Engineering Teams |
| Peec AI / LLMrefs | AI Search Visibility | Multi-engine prompt tracking (ChatGPT + Google) | SEO Agencies & Brand Managers |
| Evertune | Enterprise AI Visibility | High-volume statistical monitoring (1M+ prompts) | Large Brands needing rigorous data |
| AgentSight / eACGM | Research / Emerging | Instrumentation-free monitoring via eBPF | Security & R&D Teams |

How I interpret this table: If you are trying to fix your rankings in AI Overviews, skip straight to the visibility tools (Peec, LLMrefs, Evertune). If you are building an internal AI bot for customers, you need Arize AI. If you just want to make sure the AI isn’t crashing your cloud budget, stick with Datadog.

How I score “accuracy” for AI tracking (what to look for, what to ignore)

Accuracy isn’t about a pretty dashboard. In this field, accuracy means statistical significance. For example, if a tool tells you your “Share of Voice” dropped, did it run 5 prompts or 5,000?

I look for rigor. Evertune, for instance, runs over 1 million custom prompts monthly per brand. That is statistical power. AgentSight achieves monitoring with less than 3% performance overhead. If a tool can’t explain its sampling methodology or why a result changed, I don’t treat it as tracking—I treat it as a random screenshot.
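To show why sample size matters, here is a rough sketch of the margin of error on a share-of-voice figure. It uses the standard normal approximation for a proportion, which is only a ballpark at very small sample sizes, and it assumes every prompt run is an independent sample. The 40% figure is invented for illustration.

```python
import math


def margin_of_error(p, n, z=1.96):
    """Approximate 95% margin of error for an observed proportion p over n prompts."""
    return z * math.sqrt(p * (1 - p) / n)


# Same observed share of voice (40%), very different certainty.
for n in (5, 50, 5000):
    moe = margin_of_error(0.40, n)
    print(f"n={n:>5}: 40% share of voice, +/- {moe * 100:.1f} points")
```

At 5 prompts the uncertainty is around 43 points either way, so a “drop” is indistinguishable from noise. At 5,000 prompts it shrinks to roughly 1.4 points.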

Enterprise observability leaders: Datadog, Dynatrace, Grafana Labs

For teams running production AI at scale, Datadog AI Ops and Dynatrace Davis AI are the heavyweights. They excel at full-stack observability. The key here is context. An alert from Datadog doesn’t just say “Latency is high.” It says, “Latency spiked because the vector database re-indexing slowed down the model inference.”

Grafana Labs is the go-to if you want open-source flexibility. It allows you to build custom visualizations that mash up your GPU metrics with your user feedback logs. It’s powerful, but it requires assembly.

Best for monitoring production model accuracy and drift: Arize AI

When I talk to ML engineers, their biggest fear is “silent failure”—where the model works, but the answers get slightly worse every day. Arize AI is purpose-built for this. It monitors embedding drift (did the meaning of the data change?) and offers specific dashboards for RAG monitoring.

A typical workflow here looks like this: Baseline the model → Monitor live embeddings → Alert on accuracy drop → Investigate the specific retrieval chunk that caused the bad answer.
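The baseline, monitor, and alert steps of that workflow can be sketched in a few lines. This is a simplified illustration of the general idea, not how Arize AI implements it: it compares the average (centroid) of baseline embeddings with the average of live embeddings using cosine distance. The tiny 2-dimensional vectors and the 0.1 threshold are assumptions chosen for readability.

```python
import math


def centroid(vectors):
    """Component-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(column) / n for column in zip(*vectors)]


def cosine_distance(a, b):
    """1 - cosine similarity; assumes neither vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm


def embedding_drift(baseline, live, threshold=0.1):
    """Return (distance, alert) comparing the baseline and live centroids."""
    distance = cosine_distance(centroid(baseline), centroid(live))
    return distance, distance > threshold


# Toy embeddings: real ones have hundreds or thousands of dimensions.
baseline = [[1.0, 0.0], [0.9, 0.1], [1.0, 0.1]]
live_ok = [[1.0, 0.05], [0.95, 0.1]]
live_shifted = [[0.1, 1.0], [0.0, 0.9]]

print(embedding_drift(baseline, live_ok))       # small distance, no alert
print(embedding_drift(baseline, live_shifted))  # large distance, alert
```

A centroid comparison only catches broad shifts. The “investigate the specific retrieval chunk” step still needs per-example inspection, which is where a dedicated tool earns its keep.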

AI Overviews + LLM visibility trackers: Peec AI, LLMrefs, Evertune, Otterly.ai

This is the fastest-growing category. These tools are essentially “Rank Trackers for the AI Era.”

- Peec AI and LLMrefs are excellent for spotting trends across multiple engines. They can tell you if ChatGPT loves your brand while Google AI Overviews ignores it.

- Otterly.ai focuses heavily on brand representation in LLM responses, helping you catch when an AI is hallucinating bad press about you.

- Evertune is the choice for enterprise scale. It handles the volume needed to prove to a CMO that a drop in visibility is a real trend, not a fluke.

One warning: These tools give you the signal, but they don’t fix the content. They are the thermometer, not the medicine.

Emerging (research) approaches: monitoring AI agents without modifying code (AgentSight, eACGM)

I treat these as signals of where the industry is going. AgentSight and eACGM use eBPF to watch AI agents without requiring you to rewrite your code (instrumentation-free). This matters for security teams that need to detect prompt injection attacks or resource loops in agents they cannot modify. While mostly in the research/early-adoption phase, this “low overhead” approach is the future of secure AI monitoring.

How I choose the best AI tracking software: a beginner-friendly checklist (by use case)

Choosing software in a hype cycle is dangerous. Teams usually overbuy features they don’t have the staff to manage. Here is the decision framework I use to keep budgets under control.

Step 1: Define what you’re tracking (visibility vs. accuracy vs. reliability)

Don’t say “I want to track AI.” Be specific:

- Goal A: “I need to know if our competitor is ranking above us in AI Overviews.” (You need AI visibility tools).

- Goal B: “I need to know if our chatbot is hallucinating answers.” (You need model accuracy monitoring).

- Goal C: “I need to ensure our AI API doesn’t time out.” (You need reliability monitoring).

Step 2: Build a test prompt set (the simplest way to measure change)

Before you buy a tool, you need a test prompt set. This is your control group. I keep my first prompt set small (20–50 prompts) so I actually look at it. It should include:

- Branded Prompts: “What is [Your Brand]?”

- Competitor Prompts: “Best alternatives to [Your Brand].”

- Commercial Prompts: “Top rated software for [Your Category].”

Consistency is key. You cannot measure AI Overviews tracking trends if you change the questions every week.
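One way to keep the questions consistent is to generate the prompt set from fixed templates and give every prompt a stable ID. Here is a minimal sketch using the three prompt types above. The brand and category values, the ID format, and the CSV layout are my own assumptions, not a format any particular tool requires.

```python
import csv
import io

# Templates taken from the three prompt types in this section.
TEMPLATES = {
    "branded": "What is {brand}?",
    "competitor": "Best alternatives to {brand}.",
    "commercial": "Top rated software for {category}.",
}


def build_prompt_set(brand, category):
    """Return a list of {id, type, prompt} rows with stable IDs."""
    rows = []
    for i, (kind, template) in enumerate(TEMPLATES.items(), start=1):
        rows.append({
            "id": f"P{i:03d}",
            "type": kind,
            "prompt": template.format(brand=brand, category=category),
        })
    return rows


def to_csv(rows):
    """Serialize the prompt set so it can be versioned and reused every run."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["id", "type", "prompt"])
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Hypothetical brand and category for illustration.
prompts = build_prompt_set("Acme CRM", "sales teams")
print(to_csv(prompts))
```

Stable IDs let you compare the answer to the same prompt week over week, even if you later add new prompts to the end of the list.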

Step 3: Evaluate methodology + reporting (how the tool proves its claims)

When you get on a demo call, ask these specific questions to cut through the marketing fluff:

- How often do you refresh the data? (Real-time vs. weekly matters).

- How do you simulate location? (AI Overviews vary wildly by geo).

- Do you provide screenshots or just text summaries?

- How do you calculate “sentiment”? (Is it a black box or transparent?)

- Can I export the raw data for my own root-cause analysis?

Step 4: Match deployment + budget to your reality (SMB vs. enterprise)

The best tool is the one you will keep configured three months from now. If you are an SMB, start with a SaaS solution like LLMrefs or Peec AI. They have low time-to-value. If you are a large enterprise with sensitive data, you might need open-source observability (Grafana) or on-prem capable solutions to meet compliance. Don’t buy an enterprise monitoring suite if you don’t have a dedicated engineer to run it.

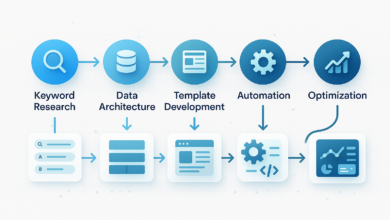

Implementation: how I set up AI Overviews tracking and alerts in the first 7 days

Buying the tool is the easy part. The hard part is building a workflow that actually improves your visibility. Once your tracking software reveals gaps—like your brand missing from key answers—you need to close the loop.

This is where I integrate content operations. When I find a gap, I use an AI content writer to help draft the missing semantic entities. If we need to restructure an entire topic cluster to win the AI Overview, I rely on an AI article generator to build structured, schema-ready drafts. For ongoing maintenance, an automated blog generator helps me publish and test new content variations at a pace that matches the AI’s update cycle. The goal is a seamless loop: Track → Generate with an SEO content generator → Publish → Measure again.

A simple 7-day rollout plan (who does what)

- Day 1 (Definition): SEO and Product leads agree on the “Golden Prompt Set” (Top 50 queries).

- Day 2 (Baseline): Run the first manual or automated scan. Screenshot everything.

- Day 3 (Configuration): Set up dashboards, with distinct views for “Brand Defense” vs. “Opportunity.”

- Day 4 (Integrations): Connect alerts to Slack/Teams. (Constraint: Only alert on >10% drops to avoid fatigue).

- Day 5 (Analysis): First review. Identify one clear content gap.

- Day 6 (Action): Update the specific page or article targeting that gap.

- Day 7 (Reporting): Send the “Weekly Visibility Snapshot” to stakeholders.
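The Day 4 constraint (only alert on drops greater than 10%) is easy to encode before you wire anything to Slack or Teams. This sketch assumes the 10% is a relative drop against the previous measurement. If your team means 10 percentage points instead, the comparison changes. `should_alert` is a hypothetical helper, not part of any tool's API.

```python
def should_alert(previous, current, max_drop=0.10):
    """True when visibility fell by more than max_drop relative to the previous value."""
    if previous <= 0:
        return False  # nothing to drop from
    drop = (previous - current) / previous
    return drop > max_drop


# Visibility expressed as a fraction of prompts where the brand appears.
print(should_alert(0.50, 0.47))  # 6% relative drop: stay quiet
print(should_alert(0.50, 0.40))  # 20% relative drop: alert
```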

Where on-page SEO fits: titles, headers, schema, internal links (without over-optimizing)

I’ve found that LLMs rely heavily on structure. If your tracking shows you are cited but not featured, check your on-page SEO. Clear H2s/H3s help the model parse your content. Schema markup (especially Organization and FAQPage) provides distinct signals about entities. And don’t underestimate internal links; they help models understand the relationship between your products and your informational guides.
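For the schema piece, here is a small sketch that builds Organization and FAQPage JSON-LD using the schema.org vocabulary. The company name, URL, and FAQ text are placeholders, and the two helper functions are hypothetical. The printed JSON is what would go inside a `<script type="application/ld+json">` tag on the page.

```python
import json


def organization_jsonld(name, url):
    """Minimal schema.org Organization object."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
    }


def faq_jsonld(pairs):
    """schema.org FAQPage built from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Placeholder values for illustration only.
org = organization_jsonld("Acme CRM", "https://www.example.com")
faq = faq_jsonld([("What is Acme CRM?", "Acme CRM is a sales tool for small teams.")])

print(json.dumps(org, indent=2))
print(json.dumps(faq, indent=2))
```

Keep the FAQ text identical to what is visible on the page, since markup that does not match the content tends to be ignored.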

Common mistakes I see when teams buy AI tracking tools (and how I fix them)

I have made plenty of mistakes in this space. Here are the ones you should avoid:

- Alert Fatigue: Teams turn on every notification. Result: Nobody looks at Slack. Fix: Only alert on “Critical Brand Risk” (negative sentiment) or massive visibility drops.

- No Baseline Monitoring: Reacting to a single bad result. Fix: Never panic over one data point. Look for a 3-day trend in your prompt set.

- Ignoring Geography: Assuming the AI sees what you see. Fix: Ensure your tool proxies from your target customer’s region.

- Mixing Metrics: Confusing “Observability” (uptime) with “Visibility” (rankings). Fix: Keep separate dashboards for Engineering and Marketing.
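The “look for a 3-day trend” fix can be expressed as a simple rule. This sketch interprets a 3-day trend as three consecutive day-over-day declines in a daily visibility score, which is one reasonable reading rather than a standard definition. The scores are invented.

```python
def sustained_drop(daily_scores, days=3):
    """True if the score fell on each of the last `days` day-over-day comparisons."""
    if len(daily_scores) < days + 1:
        return False  # not enough history to call it a trend
    recent = daily_scores[-(days + 1):]
    return all(later < earlier for earlier, later in zip(recent, recent[1:]))


# One bad day after a stable stretch: not a trend.
print(sustained_drop([0.52, 0.50, 0.51, 0.38]))
# Three declines in a row: worth investigating.
print(sustained_drop([0.52, 0.49, 0.45, 0.41]))
```

Pairing this with a minimum drop size avoids flagging three tiny declines that are within normal day-to-day noise.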

Mistake patterns by category (visibility tools vs. observability vs. model monitoring)

In visibility tracking, the biggest mistake is tracking too many low-value keywords. In observability, it’s failing to filter out noise from test environments. In model monitoring, it is forgetting to update the “ground truth” dataset, making drift detection useless.

FAQs about AI tracking software (quick, practical answers)

What differentiates AI observability tools from traditional monitoring platforms?

AI observability tools go beyond CPU and RAM metrics to track model-specific behaviors like hallucination rates and confidence scores. Traditional monitoring tells you if the server is hot; AI observability tells you if the answer is wrong. They layer model-centric metrics on top of infrastructure telemetry.

Which tool is best for monitoring production ML model accuracy and drift?

Arize AI is widely considered the leader for monitoring model accuracy and drift detection in production. It provides deep visibility into embedding changes, which is critical for debugging why an LLM’s performance is degrading over time.

Are there cost-effective options for AI search visibility tracking?

Yes. If you need cost-effective AI visibility tracking, LLMrefs offers an entry-level tier suitable for smaller teams. Peec AI provides broader platform coverage at competitive rates, while Evertune is geared towards enterprise budgets requiring massive scale.

Can I monitor AI agents at the system level without modifying code?

Emerging research tools like AgentSight and eACGM allow you to monitor AI agents without modifying code using eBPF technology. For now, this is a hands-on technical implementation for security and R&D teams, not a turnkey SaaS solution.

How can marketers track brand representation in AI-generated content?

Marketers should use tools like Otterly.ai to track brand representation. These platforms run prompts specifically designed to see how LLMs describe your brand attributes, allowing you to catch misinformation or negative sentiment early.

Conclusion: my 3 takeaways + what I’d do next if I were starting today

If I had to start my AI tracking journey over today, I would keep it simple:

- Know your bucket: Don’t buy an observability tool to solve an SEO visibility problem.

- Segment the leaderboard: Use the right tool for the specific job (Arize for models, Peec/Evertune for visibility).

- Start small: A consistent 50-prompt baseline is better than a messy 5,000-prompt one.

Next Steps: Pick one use case (Visibility or Model Performance) and shortlist two tools. Run a simple 2-week pilot with a defined prompt set. Don’t try to boil the ocean—just turn the lights on.