Tracking Perplexity AI: how to monitor Perplexity AI performance (Beginner Guide for US Businesses)

Last Tuesday, I noticed something strange in my analytics: a sudden spike in referral traffic labeled "perplexity.ai." My first instinct was to celebrate—more traffic usually means more leads, right? But when I checked my conversion dashboard, the numbers were flat. The traffic was there, but the downstream impact wasn’t.

That was my wake-up call. I realized I was shipping content and integrations for the "new web" without actually knowing how to measure them. I couldn’t tell my VP if that traffic was valuable or just accidental noise. If you are a growth marketer, content lead, or light technical operator in a US business, you might be in the same boat.

Monitoring Perplexity isn’t just about watching a line on a graph go up. It requires a specific workflow to track both the user side (referrals, engagement) and the technical side (latency, API errors) if you are building on their platform. In this guide, I’ll walk you through the exact metrics I track, how I set up my dashboard, and the common mistakes I made so you don’t have to. Whether you are optimizing for search or integrating their SDK, having the right AI SEO tool or monitoring stack makes all the difference.

Search intent: informational + how-to (what I’m actually measuring)

Let’s be clear about what we are doing here. This is a practical, how-to monitoring playbook, not a collection of vague statistics. When I say "performance," I mean two things:

- User Outcomes: Are the people coming from Perplexity (or using my Perplexity-powered features) converting, staying, and returning?

- Technical Outcomes: Is the connection reliable? Are latency and error rates within acceptable limits to scale?

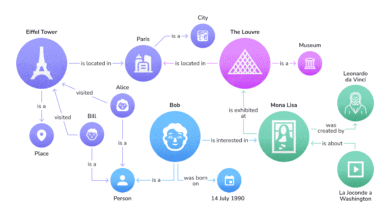

You won’t find native analytics inside Perplexity that tell you how you rank. Instead, we have to rely on "what I can measure from my side"—our logs, our analytics, and our instrumentation.

Quick answer: the 5-step monitoring loop

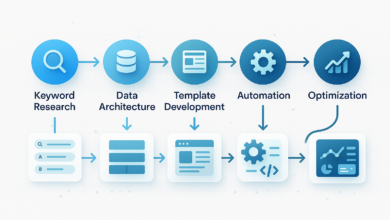

If you are short on time and just need the roadmap, here is the loop I use to keep things sane:

- Define the Business Goal: Are you trying to drive leads (referral) or power a tool (API)?

- Choose Your Metrics: Don’t track everything. Pick 1 primary KPI (like Cost Per Lead) and 2 technical guardrails (like p95 Latency).

- Instrument Collection: Set up your SDK logging or analytics tags properly.

- Visualize & Alert: Build a one-page dashboard and set alerts for when things break.

- Weekly Review: Spend 15 minutes a week checking trends and refining your setup.

Why tracking Perplexity AI performance matters (and what’s different about the “new web”)

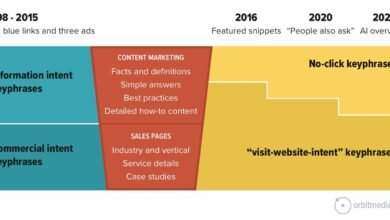

You might wonder why we can’t just treat Perplexity like Google or a standard API. The reality is that the "answer engine" model changes user behavior fundamentally. Users aren’t just clicking links; they are getting answers directly on the platform. If they do click through, they are often highly qualified—or completely lost.

The scale here is massive and growing fast. As of mid-2025, reports suggest Perplexity processes around 780 million queries per month. That is a lot of volume. But here is the kicker for US businesses: desktop usage is dominant here (often 80-90% in B2B contexts), meaning these are users at their desks, likely doing deep research or work tasks. If your site or integration is slow, or if your content snippet is misleading, you lose them instantly.

We also have to consider the business models, because they dictate what "success" looks like. Perplexity isn’t just a search engine; it’s a subscription business (Pro), an enterprise utility, and a developer platform.

A fast reality check: Perplexity scale and engagement (context, not vanity)

- 780M+ Monthly Queries: The volume is there. If you have an integration, a 1% error rate could mean thousands of failed interactions a month.

- ~22M Monthly Active Users: The audience is significant, but it’s niche: tech-savvy and information-hungry.

- 9–12 Minute Sessions: Users stick around. If they are spending 10 minutes on Perplexity, your content needs to be deep enough to answer the specific follow-up questions they have when they finally click through.

Business models that change what I measure

Understanding how Perplexity makes money helps me figure out what to track:

- Perplexity Pro ($20/mo): These are power users. If they click through, they expect high-quality, ad-free experiences. I track engagement depth here.

- Enterprise Pro (~$40/seat): This is for internal teams. If you are building an internal tool using their API, the metric isn’t "clicks," it’s task completion rate.

- API Licensing: If you are the one paying for the API, every token costs money. Here, latency and token usage efficiency are my top metrics.

- Sponsored Follow-ups: This is their ad model. It’s experimental, but if you are participating, you need to track attribution heavily to prove ROI.

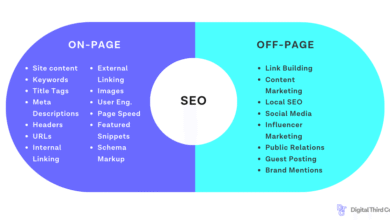

What to measure: a practical framework for how to monitor Perplexity AI performance

I organize my monitoring into three layers. This keeps me from getting overwhelmed by data. If I only tracked three things, it would be Conversion Rate (User), p95 Latency (Technical), and Cost Per Outcome (Business).

Here is a breakdown of the metrics that actually move the needle:

| Metric | What it tells me | How to collect | Good starting threshold |
|---|---|---|---|
| Conversion Rate | Is the traffic/usage valuable? | GA4 Events / Mixpanel | > 2% (highly dependent on industry) |
| p95 Latency | The speed experienced by the slowest 5% of requests. | Server Logs / SDK | < 2 seconds (for API calls) |
| Error Rate | How often the system fails. | SDK Error Catching | < 1% |
| Throughput (RPS) | Volume of requests per second. | Load Balancer / API Gateway | Baseline + 20% headroom |

Layer 1 — User experience metrics (what the audience feels)

This is mostly about your referral traffic. When Perplexity sends a user your way, are they happy? I look at Session Duration and Bounce Rate, but with a grain of salt. A high bounce rate isn’t always bad—it might mean the user found the answer immediately. That’s why I prefer Return Visitor Rate. If they come back, I know Perplexity users trust my brand.

One thing I learned the hard way: Attribution is messy. Much of this traffic is Direct or labeled generically. I always try to isolate "perplexity" in the referral source or UTMs where possible.

Layer 2 — Technical/API metrics (what my systems experience)

If you are using the Perplexity API or SDK, this is your bread and butter. You need to track:

- p95 Latency: This is the response time that 95% of requests come in under, so it exposes the slow tail. If your p95 spikes, your users are frustrated, even if the average speed looks fine.

- Timeouts: How often does the request just hang?

- Connection Pooling: Are you reusing connections efficiently?

- Async Concurrency: Are you handling multiple requests at once without blocking?

Layer 3 — Business outcomes (what leadership cares about)

When my CEO asks, "Is this Perplexity integration worth the cost?" I don’t show him latency logs. I show him ROI.

- Cost Per Lead: How much API spend does it take to generate one qualified lead?

- Retention: Do users who use the AI features stay longer?

- KPI Framework: I stick to one primary KPI (e.g., successful search sessions) and two supporting ones (e.g., latency, error rate).
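Cost Per Lead is simple division, but it helps to see what it does when spend and leads grow at different speeds. A minimal sketch with made-up figures (the dollar amounts and lead counts are assumptions, not real data):

```python
def cost_per_lead(api_spend_usd: float, qualified_leads: int) -> float:
    """API spend divided by the qualified leads it produced."""
    if qualified_leads <= 0:
        raise ValueError("need at least one qualified lead to compute cost per lead")
    return api_spend_usd / qualified_leads


# Hypothetical months: spend doubles while leads grow only 10%.
last_month = cost_per_lead(api_spend_usd=500.0, qualified_leads=100)
this_month = cost_per_lead(api_spend_usd=1000.0, qualified_leads=110)

print(f"last month: ${last_month:.2f} per lead")
print(f"this month: ${this_month:.2f} per lead")
```

With these numbers the cost per lead goes from $5.00 to about $9.09, which is the kind of trend leadership will want explained.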

Step-by-step: how I set up monitoring for Perplexity AI (analytics + SDK + alerts)

This is the workflow I use. You can treat this as a checklist. It works whether you have a full engineering team or you are just a marketer with an AI article generator and a dream.

Step 1 — Define the use case and success criteria

Before writing code, I ask: "What is the job to be done?" If I’m building a customer support assistant, success is Deflection Rate (users not needing a human). If it’s a research workflow, success might be Time on Page. I recently killed a "cool" feature because while usage was high, it wasn’t driving any downstream value—it was a vanity metric trap.

Step 2 — Instrument user analytics (what I can measure on my site/app)

In Google Analytics (GA4) or whatever tool you use, create a segment specifically for Perplexity.

- UTM Tagging: Be consistent. I use `utm_source=perplexity` and `utm_medium=referral` (or `cpc` if it’s paid).

- Funnel Tracking: Watch the drop-off. Do Perplexity users bounce at the pricing page? That tells you the answer engine set the wrong price expectation.

- Cohort Analysis: Compare "Perplexity Users" vs. "Google Users." I often find Perplexity users convert higher but have lower session volume.
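If you process raw hits yourself (server logs, a data warehouse export), the segment rule can be sketched in a few lines. This is an illustration, not a GA4 feature: the function name is made up, and it assumes you have the landing URL and the referrer for each hit.

```python
from urllib.parse import parse_qs, urlparse


def is_perplexity_visit(landing_url: str, referrer: str = "") -> bool:
    """True if the UTM source or the referrer host points to Perplexity."""
    query = parse_qs(urlparse(landing_url).query)
    utm_source = query.get("utm_source", [""])[0].lower()
    if utm_source == "perplexity":
        return True
    # Fall back to the referrer host when no UTM tag is present.
    host = (urlparse(referrer).hostname or "").lower()
    return host == "perplexity.ai" or host.endswith(".perplexity.ai")


print(is_perplexity_visit("https://example.com/pricing?utm_source=perplexity&utm_medium=referral"))
print(is_perplexity_visit("https://example.com/", "https://www.perplexity.ai/search"))
print(is_perplexity_visit("https://example.com/", "https://www.google.com/"))
```

Visits with neither a UTM tag nor a referrer will still land in Direct, so treat the segment as a lower bound.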

Step 3 — Instrument technical performance with SDK/logging

If you are integrating the API, you must log the details. Don’t just log "success" or "fail." You need the timing.

Here is the logic I use in my code (pseudocode):

```
// Time the call itself
start_timer = Now()
response = await PerplexityClient.query(user_prompt)
end_timer = Now()
duration_ms = end_timer - start_timer

// Log one structured record per call
Logger.log({
  "event": "perplexity_api_call",
  "duration_ms": duration_ms,
  "status": response.status,
  "error_type": response.error ? response.error.code : null,
  "request_id": response.headers['request-id'],
  "retry_count": current_retry_attempt
})
```

Why this matters: By capturing retry_count and duration_ms, I can see if the API is struggling even if it eventually succeeds. That is a leading indicator of an outage.
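For a runnable take on the pseudocode above, here is a minimal Python sketch. It deliberately does not call any real SDK: `send_request` is whatever async function your own client exposes, `fake_send` is a stand-in for it, and the logger name is made up. One difference from the pseudocode is that the record is written in a `finally` block, so calls that raise are timed and logged too.

```python
import asyncio
import json
import logging
import time

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("perplexity_monitor")  # hypothetical logger name


async def timed_call(send_request, prompt, retry_count=0):
    """Await send_request(prompt) and log one structured record, even on failure."""
    record = {
        "event": "perplexity_api_call",
        "status": "ok",
        "error_type": None,
        "retry_count": retry_count,
    }
    start = time.perf_counter()
    try:
        return await send_request(prompt)
    except Exception as exc:
        record["status"] = "error"
        record["error_type"] = type(exc).__name__
        raise
    finally:
        # Runs on success and on failure, so slow failures get a duration too.
        record["duration_ms"] = round((time.perf_counter() - start) * 1000, 1)
        logger.info(json.dumps(record))


async def fake_send(prompt):
    """Hypothetical stand-in for a real API client call."""
    await asyncio.sleep(0.01)
    return {"answer": f"echo: {prompt}"}


result = asyncio.run(timed_call(fake_send, "hello"))
```

Each call produces one JSON line, which most log tools can parse into fields without extra configuration.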

Example template: a lightweight log schema

If you are setting up your logs in Datadog or even a spreadsheet, include these fields: Timestamp, Environment (Prod/Dev), Endpoint, Duration (ms), Status Code, Error Type, Concurrency Level. Trust me, logging Concurrency Level saved me once when I realized we were accidentally DDoS-ing ourselves.
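One way to keep those fields consistent is to define them once in code. A minimal sketch, assuming Python and JSON-lines output; the class name, the endpoint placeholder, and the sample values are all illustrative:

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ApiCallLog:
    timestamp: str            # ISO 8601, UTC
    environment: str          # "prod" or "dev"
    endpoint: str
    duration_ms: float
    status_code: int
    error_type: Optional[str]
    concurrency_level: int    # requests in flight when this call started

    def to_json_line(self) -> str:
        return json.dumps(asdict(self))


entry = ApiCallLog(
    timestamp=datetime.now(timezone.utc).isoformat(),
    environment="prod",
    endpoint="/example/endpoint",  # placeholder, not a real API path
    duration_ms=1840.5,
    status_code=200,
    error_type=None,
    concurrency_level=12,
)
line = entry.to_json_line()
print(line)
```

The same field names work as spreadsheet column headers if you are not ready for a logging tool yet.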

Step 4 — Build a dashboard that answers business questions

I have a rule: if a dashboard takes more than 5 seconds to understand, it’s broken. My dashboard has four widgets:

- Reliability: Error rate (Red if > 1%).

- Speed: p95 Latency (Yellow if > 2s).

- Scale: Requests Per Second (Trend line).

- Outcome: Conversion Rate / Successful Tasks (The money metric).
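The color rules for the first two widgets are just threshold checks. A small sketch using the thresholds from the list above (the function name and the "green" default are assumptions):

```python
def widget_status(error_rate: float, p95_latency_s: float) -> dict:
    """Map raw numbers to the dashboard colors described above."""
    return {
        "reliability": "red" if error_rate > 0.01 else "green",   # red above 1%
        "speed": "yellow" if p95_latency_s > 2.0 else "green",    # yellow above 2s
    }


print(widget_status(error_rate=0.004, p95_latency_s=1.6))
print(widget_status(error_rate=0.03, p95_latency_s=2.7))
```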

Step 5 — Add alerts and an incident playbook (beginner-friendly)

Don’t let alerts wake you up for nothing. I set my alerts to trigger only if the Error Rate exceeds 5% for more than 5 minutes. My "Incident Playbook" is simple:

- Confirm: Is it Perplexity or us?

- Rollback: Did we just deploy bad code?

- Rate Limit: Slow down traffic to let the system recover.

- Communicate: Tell the team.
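The "above 5% for more than 5 minutes" rule is usually configured in your monitoring tool, but the logic is worth seeing once. A minimal sketch, assuming you feed it one error-rate sample per minute; the class name is made up:

```python
class SustainedErrorAlert:
    """Fire only when the error rate stays above a threshold for long enough."""

    def __init__(self, threshold=0.05, min_duration_s=300):
        self.threshold = threshold
        self.min_duration_s = min_duration_s
        self._breach_started_at = None

    def observe(self, timestamp_s: float, error_rate: float) -> bool:
        """Record one sample; return True when the alert should fire."""
        if error_rate <= self.threshold:
            self._breach_started_at = None  # recovered, reset the clock
            return False
        if self._breach_started_at is None:
            self._breach_started_at = timestamp_s
        return timestamp_s - self._breach_started_at > self.min_duration_s


alert = SustainedErrorAlert()
# Hypothetical samples: 8% errors, one reading per minute for seven minutes.
fired = [alert.observe(minute * 60, 0.08) for minute in range(7)]
print(fired)
```

A single bad minute never pages anyone; only the seventh consecutive reading (six full minutes above the threshold) fires.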

Benchmarks: how I interpret Perplexity AI performance data (and what “good” looks like)

People always ask me for benchmarks. "What’s a good latency?" "What’s a good bounce rate?" Here is the honest truth: Benchmarks are starting points; your baseline matters more.

However, you need somewhere to start. Based on general research and typical API performance:

- Latency (API): Aim for a p95 of under 2–3 seconds for complex queries. Anything over 5 seconds feels broken to a user.

- Bounce Rate (Referral): 33–47% is typical for high-intent traffic. If yours is 80%, your content isn’t matching the citation.

- Return Rate: 68–85% implies strong loyalty.

Latency: p50 vs p95 and why p95 is where issues hide

Think of latency like airline flights. p50 is the median flight: half of all flights arrive at least that quickly. p95 is the delay that only the worst 1 in 20 flights exceed, like the one that leaves you stranded for 4 hours. That 4-hour delay is what makes you hate the airline. That is why I obsess over p95: it represents the frustrated users who will churn.
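To make the difference concrete, here is a small sketch that computes the mean, p50, and p95 for a made-up sample of 20 requests. It uses the nearest-rank method, which is one of several common percentile definitions; your logging tool may interpolate and give slightly different numbers.

```python
import math
from statistics import mean


def percentile(values, pct):
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("need at least one sample")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


# Hypothetical sample: 18 fast calls and 2 very slow ones, in milliseconds.
latencies_ms = [400] * 18 + [6000] * 2

summary = {
    "mean": mean(latencies_ms),
    "p50": percentile(latencies_ms, 50),
    "p95": percentile(latencies_ms, 95),
}
print(summary)
```

Here the mean is 960 ms and the p50 is 400 ms, both comfortably under a 2-second target, while the p95 is 6000 ms. Two users in twenty waited six seconds and the average never showed it.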

Reliability: error rate, timeouts, and retry ceilings

Errors happen. The key is how you handle them. I categorize errors into "Client" (we sent bad data) and "Server" (Perplexity is down). Also, watch your retries. I once saw a bill spike because we kept retrying a dead endpoint 10 times per second. Cap your retries at 3.
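A retry cap can be sketched like this. The exception classes and function name are illustrative, not part of any SDK, and "cap at 3" is read here as three retries after the first attempt. The exponential backoff is an added assumption so that retries do not hammer a struggling endpoint.

```python
import time


class ClientError(Exception):
    """We sent a bad request; retrying will not help."""


class ServerError(Exception):
    """The remote side failed; a retry may succeed."""


def call_with_retries(send, max_retries=3, base_delay_s=0.5, sleep=time.sleep):
    """Call send(); retry ServerError up to max_retries times. Returns (result, retries_used)."""
    for attempt in range(max_retries + 1):
        try:
            result = send()
            return result, attempt
        except ClientError:
            raise  # never retry our own mistakes
        except ServerError:
            if attempt == max_retries:
                raise  # ceiling reached, surface the failure
            sleep(base_delay_s * 2 ** attempt)  # wait 0.5s, 1s, 2s
```

Returning `retries_used` alongside the result makes it easy to log retry counts, so successful-but-struggling calls stay visible.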

Engagement: using session duration, bounce, and return rate without fooling myself

Longer sessions aren’t always better. If a user spends 20 minutes on my "Quick Start" guide, they are probably confused, not engaged. I always pair session duration with Task Completion to be sure.

Common mistakes when monitoring Perplexity AI performance (and how I fix them)

I’ve made plenty of mistakes. Here are the ones I see most often in US businesses trying to adopt AI tools.

Mistake 1–3: measuring the wrong thing (traffic-only, no KPIs, no cohorts)

I used to celebrate traffic spikes until I realized they were mostly bots or accidental clicks. The Fix: Create a "Perplexity Cohort" in your analytics. Only count the traffic that triggers a key event (signup, demo request). Quality over quantity.
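If you can export sessions with a source and a "did a key event fire" flag, the cohort comparison is a few lines. A sketch with invented sample data; the field layout is an assumption about your export, not a GA4 format:

```python
from collections import defaultdict


def conversion_by_source(sessions):
    """sessions: iterable of (source, converted) pairs -> {source: conversion rate}."""
    counts = defaultdict(lambda: [0, 0])  # source -> [sessions, conversions]
    for source, converted in sessions:
        counts[source][0] += 1
        counts[source][1] += int(converted)
    return {source: conv / total for source, (total, conv) in counts.items()}


# Hypothetical export: (source, did the session trigger a signup or demo request?)
sessions = [
    ("perplexity", True), ("perplexity", False),
    ("google", False), ("google", False), ("google", True), ("google", False),
]
rates = conversion_by_source(sessions)
print(rates)
```

In this toy sample Perplexity converts at 50% on 2 sessions and Google at 25% on 4, which is why the rate should always be read next to the session count.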

Mistake 4–6: weak instrumentation (no p95, no error categories, retries masking failures)

Reporting "Average Latency" is the biggest trap. It hides the outliers. The Fix: Log the duration of every request and calculate the p95. Also, tag your errors. Knowing that 90% of errors are "Rate Limits" is a very different problem to solve than 90% "500 Server Errors."
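Tagging errors can start from nothing more than the HTTP status code. A minimal sketch, assuming your logs store the status of each call (429 is the standard "Too Many Requests" code; the category names and sample data are made up):

```python
from collections import Counter


def categorize(status_code: int) -> str:
    """Bucket an HTTP status code into a coarse error category."""
    if status_code == 429:
        return "rate_limit"
    if 400 <= status_code < 500:
        return "client_error"
    if 500 <= status_code < 600:
        return "server_error"
    return "ok"


# Hypothetical status codes pulled from a day of logs.
statuses = [200, 429, 429, 500, 400, 429, 200]
categories = [categorize(code) for code in statuses]
breakdown = Counter(c for c in categories if c != "ok")
print(breakdown.most_common())
```

In this sample most failures are rate limits, which points to throttling your own traffic rather than filing a bug report.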

Mistake 7–8: no operational rhythm (no review loop, no playbook)

The best dashboard in the world is useless if nobody looks at it. The Fix: I have a calendar invite for Friday mornings: "Metrics Review." It takes 15 minutes. We look at the trend, annotate any new deployments, and decide if we need to optimize.

Scaling and publishing at scale: keeping performance stable as usage grows

When things start working, you scale. You publish more content, or you ramp up API usage. This is usually when things break.

If you are using an automated blog generator to scale your content presence on Perplexity, you need to ensure your site infrastructure can handle the crawler activity and the referral spikes. On the technical side, scaling means implementing Connection Pooling and Caching. Why ask the API for the same answer twice? Cache it.
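A cache can be as small as a dictionary with an expiry time. This is a sketch under a few assumptions: answers are safe to reuse for five minutes, prompts that differ only in case or spacing count as the same question, and `fetch` is whatever function actually calls the API. None of those will hold for every use case.

```python
import time


class TTLCache:
    """Tiny in-memory cache that reuses an answer until it is ttl_s seconds old."""

    def __init__(self, ttl_s=300.0, clock=time.monotonic):
        self.ttl_s = ttl_s
        self._clock = clock
        self._store = {}  # normalized prompt -> (stored_at, value)

    def get_or_fetch(self, prompt, fetch):
        key = " ".join(prompt.lower().split())  # normalize case and whitespace
        now = self._clock()
        hit = self._store.get(key)
        if hit is not None and now - hit[0] < self.ttl_s:
            return hit[1]  # fresh enough, skip the API call
        value = fetch(prompt)
        self._store[key] = (now, value)
        return value
```

For anything beyond a single process, a shared store does the same job; the point is only that a repeated question should not cost a second API call.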

When scale changes the math: throughput, concurrency, and cost per successful outcome

As you scale, concurrency (simultaneous requests) becomes your enemy. If 100 users hit the API at once and every call blocks a worker, requests queue behind each other and timeouts climb unless you make the calls asynchronously. I also keep a close eye on "Cost Per Successful Outcome." If our bill doubles but our leads only go up 10%, we have a scaling problem.
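One common way to handle a burst like that is to run calls asynchronously but cap how many are in flight at once. A minimal sketch with `asyncio`; `send_request` stands for your own async client function, `fake_send` is a stand-in for it, and the limit of 10 is an arbitrary starting point, not a documented quota.

```python
import asyncio


async def run_bounded(prompts, send_request, max_concurrency=10):
    """Run send_request for every prompt with at most max_concurrency in flight."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def one(prompt):
        async with semaphore:  # wait here if the limit is already reached
            return await send_request(prompt)

    # return_exceptions=True keeps one failure from cancelling the whole batch.
    return await asyncio.gather(*(one(p) for p in prompts), return_exceptions=True)


async def fake_send(prompt):
    """Hypothetical stand-in for a real API client call."""
    await asyncio.sleep(0.01)
    return prompt.upper()


results = asyncio.run(run_bounded([f"q{i}" for i in range(100)], fake_send))
print(len(results), results[:3])
```

All 100 requests complete, but the remote API never sees more than 10 at a time, which keeps you further from rate limits and makes latency more predictable.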

Quality controls that protect performance (content + technical)

I never ship a major change without an annotation in my dashboard. "Deployed new prompt logic" or "Updated blog schema." If performance tanks 2 hours later, I know exactly what to roll back. It’s a simple discipline that saves hours of debugging.

FAQs + summary: how to monitor Perplexity AI performance moving forward

To wrap this up, monitoring Perplexity isn’t rocket science, but it does require intent. You can’t just "set it and forget it."

FAQ: How can I track Perplexity AI’s performance for my usage?

Start simple. If you are a dev, log the duration and outcome of every SDK call. If you are a marketer, use UTMs and segment your analytics by referral source. You can always get fancier later.

FAQ: What performance metrics matter most when monitoring Perplexity usage?

If you are overwhelmed, track these three: p95 Latency (for speed), Error Rate (for reliability), and Conversion Rate (for business value).

FAQ: Why is tracking performance on Perplexity AI important for businesses?

Because reliability equals trust. If your integration is slow or your content link is broken, users won’t give you a second chance. Plus, you need to prove the ROI to justify the spend.

FAQ: What business models does Perplexity use?

They use subscriptions (Pro/Enterprise), API licensing, and advertising (Sponsored Follow-ups). Knowing which one you interact with helps you choose the right success metric.

Next Steps for You:

- This Week: Check your analytics for "perplexity" referrals and set up a basic segment.

- Next Week: Ask your dev team to add "duration" and "status" to your API logs.

- This Month: Build a one-page dashboard and review it once.

When I need to scale content experiments, I rely on clear measurement first, then tools second. Start measuring today, and you’ll sleep better tomorrow.