How to audit keywords on a page: efficiency hacks for live-page checks (Beginner-friendly guide)

I recently reviewed a client’s product page that was supposedly ‘perfectly optimized.’ Their primary keyword appeared six times. Technically, the keyword density was fine. But when I actually looked at the live page, four of those mentions were buried in a ‘Recommended Products’ slider in the footer. The actual body content? It barely touched the topic. We were celebrating a technicality while the page was failing users.

This is why keyword audits often feel like busywork. You can spend hours manually hitting Ctrl+F on dozens of pages, or you can get lost in complex tools that flag issues that don’t actually impact rankings. The goal isn’t just to count words; it’s to confirm relevancy and intent quickly so you can get back to strategy.

In this guide, I’ll walk you through a workflow that works in the real world. We will cover a 60-second single-page check you can do in any browser, a scalable method using crawlers to check hundreds of URLs at once, and, most importantly, how to use GA4 and Search Console to confirm whether those keywords are actually driving business value. This is built for beginners and busy practitioners who need to move fast.

What I’m actually auditing (presence vs intent vs value) + the 10-minute setup

When I audit a page, I don’t start with rankings. Rankings are a lagging metric. I start by asking three questions in a specific order. If I skip this mental framework, I end up optimizing pages that don’t matter or fixing ‘problems’ that aren’t actually hurting us.

We need to distinguish between three layers of the audit:

- Presence: Does the keyword (or its variant) physically appear in the HTML elements Google values most (Title, H1, URL)?

- Intent: Does the content actually solve the problem implied by the keyword? You can have the keyword ‘CRM software’ on a page, but if the content is a history of software engineering, you’ve failed the intent check.

- Value: Is the page driving outcomes? This is where we look at business data.

For the setup, I keep it lean. I use a spreadsheet to track URLs, a crawler (like Screaming Frog) for bulk checks, and Google Search Console (GSC) paired with GA4 for performance data. Here is the distinction:

| Audit Layer | What I Check | Primary Tools |
|---|---|---|
| Presence | Physical location of terms in Title, H1, Body | Browser (View Source), Screaming Frog |
| Intent | Content depth, entity coverage, user journey | Manual review, AI content intelligence tools |
| Value | Impressions, clicks, conversions, revenue | GSC (Performance), GA4 (Landing Pages) |

If you are producing updates across many URLs, content intelligence tooling can help translate these audit findings into publishable drafts while still requiring your editorial review. But before we automate anything, we need to understand the basics.

Quick glossary (beginner-safe): title tag, H1, H2, canonical, indexation, cannibalization

- Title Tag: The blue link text in search results; the strongest on-page signal for relevance.

- H1 (Heading 1): The main headline visible on the page; confirms to the user they clicked the right result.

- H2 (Heading 2): Subheaders that structure your argument; crucial for targeting secondary keywords.

- Canonical Tag: A line of code that tells Google, ‘This is the master version of this page.’

- Indexation: Whether Google has stored the page in its library (index) or not.

- Cannibalization: When two of your own pages fight for the same keyword, confusing Google and splitting your traffic.

60-second method: how to audit keywords on a page (manual live-page check)

Sometimes you don’t need a full site crawl. You just need to sanity-check a specific URL before a meeting or after a publish. When I need to audit a single page manually, I don’t rely on what I see on the screen alone because visual layout can be deceiving.

Here is my manual workflow:

- Use ‘View Page Source’ (Ctrl+U): Do not just use ‘Inspect Element’ initially. View Source shows you the raw HTML delivered by the server. This confirms the keyword exists in the code Google sees before JavaScript renders.

- Ctrl+F for the Primary Keyword: Type in your main term. Note the count.

- Check the Title Tag: Look for `<title>`. Is the keyword near the front?

- Check the Description: Look for `name="description"`. Is it compelling?

- Check the H1: Search for `<h1>`. There should only be one.

- Scan the First 100 Words: Visually check the first paragraph. Does the topic appear naturally immediately?

Field note: Be careful with false positives. If you search for ‘marketing’ and see 15 matches, make sure 10 of them aren’t in your footer navigation or sidebar widget. I ignore navigation occurrences when I’m validating topical relevance.
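If you would rather script the same checks, here is a minimal Python sketch that runs them against HTML you have already saved from ‘View Page Source’. It uses only the standard library. The names `PageAudit` and `audit_page`, the sample markup, and the decision to treat `nav`, `footer`, and `aside` as boilerplate are my assumptions for illustration; your templates may wrap site-wide chrome differently.

```python
from html.parser import HTMLParser

# Assumption: site-wide chrome lives in these tags; adjust for your templates.
BOILERPLATE_TAGS = {"nav", "footer", "aside"}
# Text inside these tags is never visible copy.
NON_VISIBLE_TAGS = {"script", "style"}
TRACKED_TAGS = BOILERPLATE_TAGS | NON_VISIBLE_TAGS | {"title", "h1"}


class PageAudit(HTMLParser):
    """Collects the title, meta description, H1s, and non-boilerplate text."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self.h1s = []
        self.body_text = []
        self._open = []  # tracked tags that are currently open

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "description":
            self.description = attributes.get("content") or ""
        if tag in TRACKED_TAGS:
            self._open.append(tag)
            if tag == "h1":
                self.h1s.append("")

    def handle_endtag(self, tag):
        if tag in self._open:
            # Pop back to (and including) the matching open tag.
            while self._open.pop() != tag:
                pass

    def handle_data(self, data):
        if "title" in self._open:
            self.title += data
        elif any(tag in self._open for tag in BOILERPLATE_TAGS | NON_VISIBLE_TAGS):
            return  # skip navigation, footers, sidebars, scripts, styles
        else:
            if "h1" in self._open:
                self.h1s[-1] += data
            self.body_text.append(data)


def audit_page(html, keyword):
    """Return the presence checks from the manual workflow as a dict."""
    parser = PageAudit()
    parser.feed(html)
    parser.close()
    kw = keyword.lower()
    body = " ".join(" ".join(parser.body_text).split()).lower()
    return {
        "title": parser.title.strip(),
        "keyword_in_title": kw in parser.title.lower(),
        "description": parser.description.strip(),
        "h1_count": len(parser.h1s),
        "keyword_in_h1": any(kw in h1.lower() for h1 in parser.h1s),
        "raw_matches": html.lower().count(kw),  # what Ctrl+F would report
        "body_matches": body.count(kw),  # matches outside nav/footer/aside
    }


# Hypothetical page: most mentions sit in the navigation and footer.
SAMPLE_HTML = """<html><head><title>CRM Software for Small Teams</title>
<meta name="description" content="Compare CRM software options."></head>
<body><nav><a href="/crm">CRM software</a></nav>
<h1>CRM Software Guide</h1>
<p>Choosing CRM software starts with your sales process.</p>
<footer><a href="/x">CRM software deals</a> <a href="/y">CRM software news</a></footer>
</body></html>"""

report = audit_page(SAMPLE_HTML, "CRM software")
```

Comparing `raw_matches` with `body_matches` is the scripted version of the field note: a large gap between the two suggests most mentions live in navigation or footer elements rather than in the content itself.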

The fastest checklist (copy/paste friendly)

If you need to audit a page right now, run through this list. I start here because these elements have the highest impact-to-effort ratio.

- Title Tag: Primary keyword included? (Ideally start/middle).

- URL Slug: Is it clean and does it contain the keyword? (e.g., `/blog/audit-keywords` vs `/blog?id=123`).

- H1 Header: Does it match the Title Tag’s intent?

- First Paragraph: Is the topic defined clearly in the first 2-3 sentences?

- H2 Subheaders: Do they cover the ‘Who, What, Why’ of the topic?

- Internal Links (Incoming): Do other pages link to this one using the keyword as anchor text?

- Image Alt Text: Does at least one image describe the keyword concept? (Don’t force it).

- Visible Body Copy: Is the term used naturally throughout the discussion?

Common gotcha: content hidden by tabs, accordions, or JavaScript rendering

Modern websites love tabs, ‘Load More’ buttons, and accordions. For a user, this is clean design. For a bot, it can be a wall. If your content is loaded entirely via JavaScript (client-side rendering), ‘View Page Source’ might show little more than an empty container and some script tags. While Google is much better at rendering JavaScript now than in the past, relying on it adds risk. If you see your keyword on the screen but not in the source code, you need to ensure Google is actually rendering that content. You can test this using the ‘URL Inspection’ tool in Google Search Console to see the rendered HTML.

Scale it up: crawl your site to audit keyword presence in minutes (Screaming Frog-style workflow)

The manual method breaks down once you have more than 10 pages. You cannot Ctrl+F your way through an enterprise site. This is where I switch to a crawler. I use Screaming Frog (free for up to 500 URLs), but the logic applies to other tools like Sitebulb or cloud-based auditors.

The goal isn’t just to crawl standard data, but to use Custom Extraction. This allows you to tell the crawler: ‘Go to every page, find the H1, find the first paragraph, and count how many times ‘X’ appears.’

Step-by-step extraction workflow:

- Configuration: Go to Configuration > Custom > Extraction.

- Set XPath/Regex: To extract the H1 text, use the XPath `//h1`. To find specific keywords in the body, you can use a ‘Contains’ filter.

- Run the Crawl: Let the tool scan your list of live URLs.

- Export to CSV: You now have a spreadsheet where every row is a URL and columns show exactly what is in the Title, H1, and body.

If you find significant gaps across hundreds of pages, you might need help creating the content to fill them. A bulk article generator can help operationalize the creation of supporting content, but always remember that the audit data must guide the input.

| Element to Audit | Extraction Method | Why It Matters |
|---|---|---|
| H1 Text | XPath: `//h1` | Ensures the visible headline matches your target keyword map. |
| Target Keyword Count | Custom Search (Contains ‘X’) | Identifies thin pages where the topic is barely mentioned. |
| Publish Date | XPath (variable based on site) | Helps identify outdated content that needs a refresh. |

Free-tool path: what you can do without paying

You do not need an enterprise budget to do this. I often start with the free version of Screaming Frog for small sites. Google Search Console is completely free and provides the most accurate data on what queries you are already ranking for (even if you didn’t optimize for them). You can export performance data to Google Sheets and use simple formulas like =SEARCH() or =REGEXMATCH() to check if your target keyword appears in the URL or Title column of your export. It requires a bit more manual spreadsheet work, but the cost is zero.

Spreadsheet pattern: turn crawl exports into ‘present / missing / weak’ signals

This is the sheet layout I reach for when I need answers fast. I create a simple status column using a formula:

- Column A: URL

- Column B: Target Keyword (Manual Input)

- Column C: Title Tag (From Crawl)

- Column D: H1 (From Crawl)

- Column E: Status Formula:

`=IF(ISNUMBER(SEARCH(B2,C2)), "Present", "MISSING")`

I apply Conditional Formatting to turn “MISSING” cells bright red. This gives me an instant visual heat map of where my basic on-page hygiene is failing.
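If your crawl export outgrows a spreadsheet, the same status logic is easy to sketch in Python. The version below extends the formula to three states. Treating a keyword that appears in only one of Title or H1 as ‘Weak’ is my assumption about what ‘weak’ should mean here, and the function name `keyword_status` and the sample rows are illustrative only. Like `SEARCH()` in Sheets, the match ignores case.

```python
def keyword_status(keyword, title, h1):
    """Classify a row as Present, Weak, or MISSING.

    Assumption: 'Weak' means the keyword is in the title or the H1, not both.
    """
    kw = keyword.strip().lower()
    if not kw:
        return "MISSING"  # no target keyword was entered for this URL
    in_title = kw in title.lower()
    in_h1 = kw in h1.lower()
    if in_title and in_h1:
        return "Present"
    if in_title or in_h1:
        return "Weak"
    return "MISSING"


# Hypothetical rows: (URL, target keyword, title from crawl, H1 from crawl)
rows = [
    ("/guide", "keyword audit", "Keyword Audit Checklist", "How to Run a Keyword Audit"),
    ("/tools", "keyword audit", "Keyword Audit Tools", "Welcome to Our Tools"),
    ("/home", "keyword audit", "Home", "Welcome"),
]

statuses = {url: keyword_status(kw, title, h1) for url, kw, title, h1 in rows}
for url, status in statuses.items():
    print(f"{url}: {status}")
```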

What to check on the page (and what ‘good’ looks like): a practical on-page keyword checklist

Auditing is useless if you don’t know what you’re trying to achieve. Beginners often think ‘optimization’ means jamming the keyword in as many times as possible. That is 2010 SEO. Today, we audit for clarity and natural language.

Here is my rubric for evaluating what I find:

| Element | Best Practice Rule of Thumb | Red Flag | Quick Fix |
|---|---|---|---|
| Title Tag | 50-60 chars, keyword early. | Keyword at the very end or truncated. | Rewrite to front-load the main topic. |
| Meta Description | Writes for the click (CTR), not ranking. | Just a list of keywords. | Write a sentence that answers “Why click?” |
| H1 | Describes the page content perfectly. | Duplicate of the Title Tag exactly. | Make H1 slightly more conversational than Title. |
| Body Copy | Uses entities and synonyms. | Repeats exact match phrase 5x in intro. | Replace repeats with natural variations. |
| Internal Links | Anchors describe the destination. | “Click here” or generic anchors. | Update anchor text to be descriptive. |

Mini example: before/after title + H1 that improves clarity without stuffing

Scenario: A SaaS company selling project management software for creative agencies.

Before (Weak):

Title: Project Management Software | Solutions | SaaS Tool

H1: Welcome to our Software

After (Optimized):

Title: Project Management Software for Creative Agencies | [Brand Name]

H1: Streamline Your Agency’s Workflow with Creative Project Management

Why this wins: The ‘After’ version signals intent immediately. It targets the specific audience (agencies) and uses a variation in the H1 (workflow) rather than just repeating the keyword blindly.

Presence isn’t the point: measure keyword value with Google Search Console + GA4

Here is the hard truth: You can have the keyword in the Title, the H1, and the URL, and still fail. Presence is the easiest part; value is the point. I don’t optimize for ‘presence’ unless it connects to rankings, CTR, or conversions.

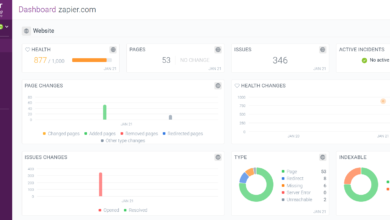

To do this, I look at Google Search Console (GSC) for the ‘Pre-Click’ data and GA4 for the ‘Post-Click’ data. I look for mismatches.

- High Impressions, Low CTR: Google likes your page (presence is good), but users don’t. This is usually a Title/Meta Description issue.

- High Traffic, Low Conversions: Users like the promise, but the page disappoints them. This is an Intent issue.

- Conversions with Low Traffic: This is a hidden gem. Optimize this page immediately to get more volume to a high-performer.

Recent industry discussions emphasize that pairing GA4 segments with Search Console data is the only reliable way to spot ‘hollow traffic’: pages that rank well but generate zero business value.
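The three mismatches can be flagged automatically once GSC and GA4 numbers sit side by side. The sketch below is one way to do it in Python. Every threshold in it (what counts as ‘high’ impressions, ‘low’ CTR, and so on) is a placeholder I made up for illustration, as are the `PageMetrics` and `diagnose` names; tune the cut-offs to your own site’s baseline.

```python
from dataclasses import dataclass


@dataclass
class PageMetrics:
    url: str
    impressions: int  # GSC
    clicks: int       # GSC
    sessions: int     # GA4 landing page sessions
    conversions: int  # GA4


def diagnose(page, *, high_impressions=1000, low_ctr=0.01,
             high_sessions=500, low_cvr=0.005, good_cvr=0.03):
    """Return mismatch labels for one page. All thresholds are assumptions."""
    ctr = page.clicks / page.impressions if page.impressions else 0.0
    cvr = page.conversions / page.sessions if page.sessions else 0.0
    findings = []
    if page.impressions >= high_impressions and ctr < low_ctr:
        findings.append("title_meta")  # high impressions, low CTR
    if page.sessions >= high_sessions and cvr < low_cvr:
        findings.append("intent")      # high traffic, low conversions
    if page.sessions < high_sessions and cvr >= good_cvr:
        findings.append("hidden_gem")  # converts well on low traffic
    return findings


# Hypothetical pages, one per mismatch type.
pages = [
    PageMetrics("/a", impressions=5000, clicks=20, sessions=18, conversions=0),
    PageMetrics("/b", impressions=8000, clicks=900, sessions=850, conversions=1),
    PageMetrics("/c", impressions=300, clicks=40, sessions=38, conversions=4),
]

for page in pages:
    print(page.url, diagnose(page))
```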

Beginner workflow: map Page → Query → Outcome (in a simple sheet)

This is the moment I stop guessing and start prioritizing. I build a simple mapping sheet with these headers:

- URL

- Primary Query (From GSC)

- Average Position (From GSC)

- Conversion Rate (From GA4 Landing Page report)

- Action (Keep / Rewrite / Prune)

If I see a page ranking in Position 5 for a commercial keyword but it has a 0% conversion rate, I know I need to audit the content (layout, CTA, offer), not just stuff the keyword in one more time.
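Here is a rough Python sketch of that mapping sheet, assuming you have exported GSC rows (URL, query, position, clicks) and a GA4 landing-page conversion rate per URL. Picking the query with the most clicks as the ‘primary’ one and the rules that assign Keep / Rewrite / Prune are my simplifications, not fixed rules, and `build_mapping` and `rewrite_cutoff` are hypothetical names.

```python
def build_mapping(gsc_rows, ga4_cvr, rewrite_cutoff=20.0):
    """Build Page -> Query -> Outcome rows.

    Assumptions: the primary query is the one with the most clicks;
    any conversions -> Keep; zero conversions but ranking within
    `rewrite_cutoff` -> Rewrite; otherwise -> Prune (review before deleting).
    """
    best = {}
    for row in gsc_rows:
        current = best.get(row["url"])
        if current is None or row["clicks"] > current["clicks"]:
            best[row["url"]] = row

    sheet = []
    for url, row in sorted(best.items()):
        cvr = ga4_cvr.get(url, 0.0)  # pages missing from GA4 count as 0%
        if cvr > 0:
            action = "Keep"
        elif row["position"] <= rewrite_cutoff:
            action = "Rewrite"
        else:
            action = "Prune"
        sheet.append({
            "url": url,
            "primary_query": row["query"],
            "avg_position": row["position"],
            "conversion_rate": cvr,
            "action": action,
        })
    return sheet


# Hypothetical exports.
gsc_rows = [
    {"url": "/pricing", "query": "crm pricing", "position": 5.0, "clicks": 120},
    {"url": "/pricing", "query": "crm cost", "position": 8.2, "clicks": 30},
    {"url": "/blog/history", "query": "history of crm", "position": 42.0, "clicks": 3},
    {"url": "/demo", "query": "crm demo", "position": 6.1, "clicks": 80},
]
ga4_cvr = {"/pricing": 0.0, "/demo": 0.04}

mapping = build_mapping(gsc_rows, ga4_cvr)
for line in mapping:
    print(line)
```

In this made-up data, `/pricing` is the Position 5 page with a 0% conversion rate, so it lands in ‘Rewrite’: the ranking is fine, and the content is what needs the audit.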

The hidden blockers that make keyword relevance ‘invisible’ (technical + content issues)

I’ve seen beautiful content fail because of invisible technical walls. If you fix titles but the page isn’t indexed, nothing changes. These are the blockers that silence your keywords:

- Internal Linking Gaps: You wrote a great page, but no other page on your site links to it. To Google, this page is an orphan. It has no authority flowing to it.

- Index Bloat: If you have thousands of low-quality pages (tags, archives) indexed, Google spends its time crawling junk instead of your optimized pages.

- Keyword Cannibalization: If you have 4 different pages all optimized for ‘best running shoes,’ Google rotates them in the rankings, and usually, none of them rank #1.

- Thin Content: The keyword is there, but the page is only 200 words long while competitors have deep guides. Google views this as low value.

- Canonical/Noindex Errors: The classic foot-gun. You accidentally left a `noindex` tag on the page from the staging environment.
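Of the blockers above, cannibalization is the one you can often spot straight from a Search Console export. The Python sketch below groups rows by query and reports any query where more than one of your URLs shows up. The `min_impressions` filter and the function name `find_cannibalized_queries` are my assumptions; two URLs sharing a query is a prompt to investigate, not proof of a problem.

```python
from collections import defaultdict


def find_cannibalized_queries(gsc_rows, min_impressions=10):
    """Map each query to its URLs when more than one URL appears for it.

    Assumption: rows below `min_impressions` are noise and are ignored.
    """
    urls_by_query = defaultdict(set)
    for row in gsc_rows:
        if row["impressions"] >= min_impressions:
            urls_by_query[row["query"].strip().lower()].add(row["url"])
    return {
        query: sorted(urls)
        for query, urls in urls_by_query.items()
        if len(urls) > 1
    }


# Hypothetical export rows (query + page dimensions).
export_rows = [
    {"query": "best running shoes", "url": "/shoes/best", "impressions": 900},
    {"query": "Best Running Shoes", "url": "/blog/top-shoes", "impressions": 400},
    {"query": "best running shoes", "url": "/old-guide", "impressions": 3},
    {"query": "trail shoes", "url": "/shoes/trail", "impressions": 250},
]

overlaps = find_cannibalized_queries(export_rows)
print(overlaps)
```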

Fast diagnostic checklist (spot it in under 15 minutes)

Before you rewrite any content, run these diagnostics:

- Site Search: Type `site:yourdomain.com "keyword"` into Google. See which pages actually show up. Are there duplicates?

- GSC Indexing Report: Check ‘Pages > Not Indexed’. Is your target URL listed there?

- Link Count: In GSC, check ‘Links > Internal Links’. Does your important page have fewer than 5 internal links?

- Duplicate Titles: Does your crawler show multiple pages with the exact same title tag?
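Two of these checks, duplicate titles and low internal link counts, are simple to run over an export. The following is a small Python sketch, assuming you have (URL, title) pairs from your crawler and a URL-to-link-count mapping from the internal links report. The function names and sample data are hypothetical; the default of 5 links comes from the checklist above.

```python
from collections import defaultdict


def find_duplicate_titles(crawl_rows):
    """Group URLs that share the same title tag (case and spacing ignored)."""
    urls_by_title = defaultdict(list)
    for url, title in crawl_rows:
        normalized = " ".join(title.lower().split())
        urls_by_title[normalized].append(url)
    return {t: urls for t, urls in urls_by_title.items() if len(urls) > 1}


def find_underlinked(internal_link_counts, minimum=5):
    """Return (url, count) pairs below `minimum` links, fewest first."""
    weak = [(url, n) for url, n in internal_link_counts.items() if n < minimum]
    return sorted(weak, key=lambda pair: pair[1])


# Hypothetical crawl export and link counts.
crawl_rows = [
    ("/a", "Keyword Audit Guide"),
    ("/b", "keyword  audit guide"),
    ("/c", "Pricing"),
]
link_counts = {"/a": 12, "/b": 2, "/c": 0}

duplicates = find_duplicate_titles(crawl_rows)
underlinked = find_underlinked(link_counts)
print(duplicates)
print(underlinked)
```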

Steal the right ideas: competitor ‘claims-and-gaps’ keyword mapping (quick-win content plan)

Once I’ve audited what I have, I look at what I’m missing. I use a ‘Claims-and-Gaps’ matrix. This is simply looking at a competitor who outranks you and asking: ‘What claim are they making that I am not?’

Maybe they have a section on ‘Pricing Models’ and I don’t. Maybe they cover ‘Integration with Slack’ and I missed it. These are gaps. I map these out in a matrix:

| Competitor Topic/Claim | My Coverage | Action | Priority |
|---|---|---|---|
| “Enterprise Security” | Not Mentioned | Add H2 section | High |
| “Free Trial vs Demo” | Mentioned briefly | Expand to full table | Medium |

I use this to avoid random content ideas and focus on measurable gaps. If you identify extensive gaps, an AI article generator can assist in drafting these missing sections or new pages quickly, allowing you to close the competitive gap at speed while you focus on the strategy.

Prioritization rule: impact × effort × confidence

I don’t fix everything. I score potential fixes from 1-5:

- Impact: How much traffic/money could this bring?

- Effort: Is this a 5-minute title tweak or a 5-day rewrite?

- Confidence: Am I sure this fix will work?

If confidence is low, I run a small test before rewriting 50 pages. I always start with high-impact, low-effort tasks (like Title Tag updates on striking-distance keywords).
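To turn those three scores into a ranked list, one option is a single number per fix. The sketch below is my own interpretation, not a standard formula: it assumes effort is scored so that 5 means the most work, then inverts it (`6 - effort`) so that a straight multiplication puts high-impact, low-effort, high-confidence fixes at the top. The name `priority_score` and the example fixes are hypothetical.

```python
def priority_score(impact, effort, confidence):
    """Combine 1-5 scores into one number; higher means do it sooner.

    Assumption: effort=5 is the most work, so it is inverted to 'ease'.
    """
    for name, value in (("impact", impact), ("effort", effort),
                        ("confidence", confidence)):
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {value}")
    ease = 6 - effort
    return impact * ease * confidence


# Hypothetical backlog of fixes.
fixes = [
    {"fix": "Rewrite 50 thin pages", "impact": 5, "effort": 5, "confidence": 2},
    {"fix": "Title tweak on striking-distance page", "impact": 4, "effort": 1, "confidence": 4},
    {"fix": "Add internal links to orphan page", "impact": 3, "effort": 2, "confidence": 4},
]

ranked = sorted(
    fixes,
    key=lambda f: priority_score(f["impact"], f["effort"], f["confidence"]),
    reverse=True,
)
for item in ranked:
    score = priority_score(item["impact"], item["effort"], item["confidence"])
    print(score, item["fix"])
```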

Common mistakes, quick fixes, and mini FAQs (so you don’t waste hours)

I’ve lost time doing audits the hard way. Here are the lessons I’ve learned so you don’t have to repeat my mistakes.

Mistake #1–#5: what it looks like + the fix (bulleted)

- Mistake: Obsessing over exact-match keywords.

  Fix: Write for topics and entities. Google understands synonyms.

- Mistake: Editing titles without checking internal links.

  Fix: Always add 2-3 internal links to any page you are trying to boost.

- Mistake: Trusting visual checks over code.

  Fix: Always use ‘View Source’ or GSC’s ‘Test Live URL’.

- Mistake: Ignoring the footer trap.

  Fix: Discount keywords that appear in global navigation; they don’t signal specific page relevance.

- Mistake: Auditing pages with zero impressions.

  Fix: If it has zero impressions, check indexation first. Don’t optimize a page Google hasn’t seen.

FAQ: What’s the fastest way to check if a keyword appears on a live page?

For a single page, use Ctrl+F on the ‘View Page Source’ screen. For more than 10 pages, use a crawler like Screaming Frog with a custom extraction or ‘Contains’ filter. Past that point, I stop checking manually to avoid burnout and errors.

FAQ: How do I know if a keyword is valuable beyond presence?

Look at the outcome. Combine Search Console (Query) data with GA4 (Conversion) data. If a keyword brings 1,000 visitors but zero demo requests, it is likely the wrong intent, even if the presence is ‘perfect.’

FAQ: Can I audit keyword presence without expensive tools?

Absolutely. You can use the free version of Screaming Frog (up to 500 URLs), Google Search Console (free), and Google Sheets (free). Paid tools are great for competitive intelligence, but you can audit your own site effectively for free.

FAQ: What technical issues most often hide keyword relevance?

Internal linking is the big one. If a page is buried deep in your site architecture with no links pointing to it, Google deems it unimportant regardless of your keyword usage. Index bloat (too many junk pages) and cannibalization are also common culprits.

Conclusion: my 3-bullet recap + the next 5 actions I take after a keyword audit

We’ve covered a lot, but the workflow is actually quite simple. Here is the recap:

- Verify Presence: Use ‘View Source’ or a crawler to ensure the keyword exists in the Title, H1, and Body.

- Validate Value: Use GA4 and GSC to confirm the page is driving business outcomes, not just vanity traffic.

- Check Blockers: Ensure technical issues like internal linking or noindex tags aren’t hiding your work.

Your 5-Step Action Plan:

- Export your top 20 pages from Search Console that have high impressions but rank in positions 4–10.

- Run the manual 60-second checklist on these 20 pages.

- Update Title Tags and H1s where the primary keyword is missing or unclear.

- Add 3–5 internal links from relevant parent pages to these target URLs.

- Document the changes in a spreadsheet and check Search Console in 2–4 weeks to monitor the lift.