Technical SEO Audit Checklist: The Core Vitals Audit for Speed, Security, and Mobile Usability (2026)

Introduction: A Core Vitals audit that actually improves rankings (and revenue)

I recently audited a mid-sized SaaS site where the marketing director was baffled. Their organic traffic was holding steady, but demo requests from iPhones had dropped by 15% after a theme update. The content hadn’t changed, and their keywords were stable. The issue wasn’t what they were saying; it was how the site felt to the user.

In the US business context, users have zero patience. If a page feels slow, shifts while they are trying to click, or throws a security warning, they bounce. In this guide, I’m going to walk you through the exact workflow I use for a targeted technical SEO audit. This isn’t a theoretical “digital landscape” overview; it is a practical checklist focused on the three pillars that matter most right now: Speed (Core Web Vitals), Security, and Mobile Usability.

With the evolution toward Core Web Vitals 2.0—which introduces smarter metrics like the Visual Stability Index (VSI) and session-level analysis—traditional checklists are becoming obsolete. This guide is designed to help you measure, prioritize, fix, and validate your technical health in 2026.

What this Core Vitals-focused technical SEO audit checklist covers (and what it doesn’t)

Before we open a single tool, we need to define our scope. Beginners often confuse a targeted performance audit with a sprawling enterprise technical audit. If your goal is measurable UX and SEO lift within 30 days, you cannot fix everything at once. You need to focus on the elements that directly impact how Google crawls your site and how users experience it.

This checklist focuses on three specific pillars, plus a critical structural layer:

- Speed & Performance: Moving beyond simple load times to interaction readiness (INP) and visual stability.

- Security & Trust: Ensuring the connection is secure and the environment is locked down.

- Mobile Usability: Verifying that the mobile version is the primary version in Google’s eyes.

- Structural Health: Checking for index bloat and orphan pages that dilute the impact of the first three pillars.

Deliverables you should expect to create:

- A baseline performance report (Field vs. Lab data).

- A prioritized list of template-level fixes (dev tickets).

- A validation plan to confirm improvements.

Out of scope (for now): In most cases, I exclude deep log-file analysis, complex international hreflang debugging, and advanced JavaScript rendering issues from this specific checklist. Those are vital, but they are separate projects.

The three pillars: speed, security, mobile usability

I like to explain these pillars with a simple analogy regarding a physical store. Speed is how fast the automatic door opens; if it lags, customers walk away. Security is whether the door is locked or looks sketchy; if the lock is broken, nobody enters. Mobile Usability is whether the hallway is wide enough for the customer’s cart; if they get stuck, they leave without buying. All three must work together to support the transaction.

What many audits miss (preview): structural issues that sabotage otherwise “good” vitals

Here is the part most teams skip. You can have perfect Core Web Vitals scores, but if you have 5,000 low-quality tag pages indexed or critical pages that are orphaned (not linked to), you are wasting crawl budget. I have seen sites optimize their LCP to the millisecond while accidentally blocking their best content via canonical inconsistencies. We will address these structural killers in Step 5 because they silently sabotage performance signals.

My setup: tools, access, and data sources (so the audit is measurable)

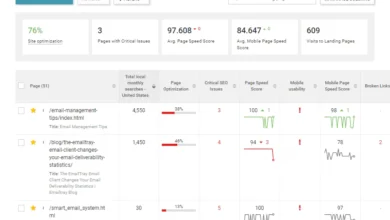

If you only do one thing, start with the Google Search Console (GSC) Core Web Vitals report. It is based on real user data, which is what Google’s page experience signals draw on; lab scores are not. However, to debug specific issues, you need lab tools. Here is the stack I use to keep the audit objective.

| Tool | Data Type | Best For | Common Pitfall |
|---|---|---|---|
| Google Search Console (GSC) | Field (Real Users) | Identifying failing URLs and trends over time. | Reflects a rolling 28-day window, so fixes take weeks to show up. |
| PageSpeed Insights (PSI) | Lab & Field | Quick snapshots of a specific URL’s health. | Looking only at the “Performance Score” rather than metric breakdowns. |
| Chrome User Experience Report (CrUX) | Field | Historical performance data on competitors. | Requires enough traffic to generate data. |
| Lighthouse (DevTools) | Lab (Simulated) | Debugging specific code issues locally. | Simulations don’t always match real-world device drag. |

Lab vs field data: why both matter

It is crucial to understand the difference. Field data (CrUX/GSC) tells you what is actually happening to your users. Lab data (Lighthouse) helps you reproduce the problem so you can fix it. A page can score 95 in Lighthouse on your fast MacBook but still frustrate users on a mid-tier Android device on a 4G network. Always prioritize field data when deciding what to fix.

The access checklist (what I ask for before touching anything)

I can’t effectively audit a site if I can’t see under the hood. However, I don’t need admin rights to everything. Here is my standard access request list:

- Google Search Console: Restricted or Full User (to see validation reports).

- Google Analytics (GA4): To correlate speed drops with engagement metrics.

- CMS Access: Read-only is fine; I just need to see how plugins and templates are configured.

- CDN/Hosting Dashboard: To check caching rules and edge configurations.

- Staging Environment: Ideally, a place to test fixes before they go live.

Step 1 — Measure Core Web Vitals 2.0 and prioritize the pages that matter

Core Web Vitals have evolved. We still rely on the “Big Three” (LCP, INP, and CLS), which remain the metrics with published pass/fail thresholds, while CWV 2.0 introduces a more nuanced view, including the Visual Stability Index (VSI) and context-aware thresholds. Google is getting better at understanding session-level friction, not just single page loads.

Here are the baselines we are aiming for in 2026:

| Metric | What it feels like | ‘Good’ Threshold | Where to check |
|---|---|---|---|
| LCP (Largest Contentful Paint) | “The page has loaded.” | ≤ 2.5 seconds | GSC / PSI |
| INP (Interaction to Next Paint) | “The page responds when I click.” | ≤ 200 milliseconds | GSC / Chrome DevTools |
| CLS (Cumulative Layout Shift) | “The page is stable.” | ≤ 0.1 | Layout Shift Regions (DevTools) |
| VSI (Visual Stability Index) | “Smoothness over the whole session.” | Context dependent | RUM Tools / Advanced Analytics |
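To make the thresholds actionable, here is a minimal Python sketch that rates 75th-percentile field values for LCP, INP, and CLS. The upper bounds for “needs improvement” (4,000 ms, 500 ms, and 0.25) come from Google’s published guidance rather than from the table; the function and dictionary names are my own, and VSI is left out because it has no fixed threshold here.

```python
# (good_max, needs_improvement_max) per metric.
# Values above the second number are rated "poor".
THRESHOLDS = {
    "lcp_ms": (2500, 4000),
    "inp_ms": (200, 500),
    "cls": (0.1, 0.25),
}


def rate_metric(name: str, p75: float) -> str:
    """Rate one 75th-percentile field value as good / needs improvement / poor."""
    good_max, needs_max = THRESHOLDS[name]
    if p75 <= good_max:
        return "good"
    if p75 <= needs_max:
        return "needs improvement"
    return "poor"


def rate_page(p75_values: dict[str, float]) -> dict[str, str]:
    """Rate every metric reported for a page or URL group."""
    return {name: rate_metric(name, value) for name, value in p75_values.items()}


if __name__ == "__main__":
    # Hypothetical field values for one URL group.
    print(rate_page({"lcp_ms": 3100, "inp_ms": 180, "cls": 0.31}))
```

A page only passes when all three metrics are rated “good”, so I sort URL groups by their worst rating, not by an average.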

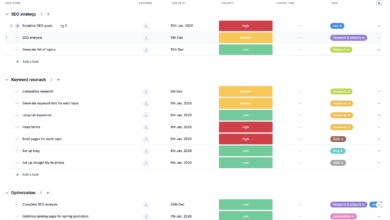

The page-priority method I use (templates first, then money pages)

If you try to fix every URL individually, you will fail. I use a “Template First” approach. Most performance issues are baked into the page template (e.g., the blog post layout, the product page layout). If you fix the template, you fix 1,000 pages at once.

My priority order for a typical US business site:

- Revenue Generators: Product pages, Service pages, Checkout flow.

- High Traffic Entry Points: Top-performing blog posts and the Homepage.

- High-Scale Templates: Category pages or Tag archives.
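The “Template First” ordering can be sketched in a few lines of Python. The path prefixes and priority numbers below are hypothetical placeholders; swap in whatever URL patterns your own site uses.

```python
from collections import defaultdict
from urllib.parse import urlparse

# Hypothetical rules: (path prefix, template name, priority). 1 = fix first.
TEMPLATE_RULES = [
    ("/product/", "product", 1),
    ("/checkout", "checkout", 1),
    ("/blog/", "blog_post", 2),
    ("/category/", "category", 3),
    ("/tag/", "tag_archive", 3),
]


def template_for(url: str) -> tuple[str, int]:
    """Map a URL to a (template name, priority) pair."""
    path = urlparse(url).path
    if path in ("", "/"):
        return ("homepage", 2)
    for prefix, name, priority in TEMPLATE_RULES:
        if path.startswith(prefix):
            return (name, priority)
    return ("other", 4)


def group_by_template(urls: list[str]) -> list[tuple[int, str, list[str]]]:
    """Group URLs by template, ordered by priority, then by URL count."""
    groups = defaultdict(list)
    for url in urls:
        groups[template_for(url)].append(url)
    return sorted(
        ((priority, name, members) for (name, priority), members in groups.items()),
        key=lambda group: (group[0], -len(group[2])),
    )
```

Feeding this the failing URLs from the GSC report gives you a short list of templates to ticket instead of thousands of individual pages.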

Why under ~1.5s initial load time is a 2026 expectation

Users’ internal clocks have sped up. While Google says 2.5 seconds is “Good,” business reality says otherwise. Data suggests that initial page load times above 1.5 seconds can begin to reduce conversion rates. Furthermore, sites with load times over 3 seconds often see mobile abandonment rates exceeding 50%. I treat 2.5 seconds as the absolute limit, but I aim for 1.5 seconds for money pages.

Step 2 — Speed audit checklist: fix LCP, INP, and TTFB without chasing scores

This is where we get our hands dirty. The goal isn’t a perfect 100 score; it is removing the friction that kills conversions. I typically find that fixing server response times (TTFB) and optimizing image delivery yields the highest ROI before tackling complex JavaScript refactoring.

| Check | How to Test | Typical Fix | Expected Impact |
|---|---|---|---|
| Server Response (TTFB) | PSI (Time to First Byte) | Implement Edge Caching / CDN | High (Improves everything) |
| LCP Element | Lighthouse “LCP Element” | Preload image + `fetchpriority="high"` | High (LCP) |
| Render-Blocking Resources | Coverage Tab (DevTools) | Defer non-critical CSS/JS | Medium (FCP/LCP) |
| Interaction Delays (INP) | Performance Profiler | Debounce inputs / Code splitting | High (INP/UX) |

Server & network: TTFB, caching, CDN/edge, and HTTP/3 basics

If your server is slow to reply, the fastest code in the world won’t save you. I check Time to First Byte (TTFB) immediately. If it is over 600ms, it is a red flag. Modern Edge computing and CDN strategies can reduce TTFB by up to 60%.

The Checklist:

- Caching: Are `cache-control` headers set for static assets?

- CDN: Is a CDN (like Cloudflare or CloudFront) serving images and JS?

- Compression: Is Brotli (or Gzip) enabled?

- Protocol: Is the server using HTTP/2 or the newer HTTP/3 (QUIC)?
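Most of this checklist can be read straight off the response headers of a text asset such as a CSS or JS file. The sketch below takes headers you have already captured (from DevTools or `curl -I`) as a plain dictionary; the function name and finding strings are my own. It treats an `Alt-Svc` header containing `h3` as the sign that HTTP/3 is advertised, which is one common mechanism, not the only one.

```python
def audit_response_headers(headers: dict[str, str]) -> list[str]:
    """Return findings for one static text asset (CSS/JS) response."""
    # Header names are case-insensitive, so normalise before lookup.
    h = {key.lower(): value.lower() for key, value in headers.items()}
    findings = []

    directives = [d.strip() for d in h.get("cache-control", "").split(",") if d.strip()]
    if not directives:
        findings.append("missing Cache-Control")
    elif "no-store" in directives or "max-age=0" in directives:
        findings.append("Cache-Control prevents caching")

    if h.get("content-encoding", "") not in ("br", "gzip"):
        findings.append("no Brotli/Gzip compression")

    if "h3" not in h.get("alt-svc", ""):
        findings.append("HTTP/3 not advertised via Alt-Svc")

    return findings
```

Already-compressed formats such as AVIF, WebP, or JPEG normally have no `Content-Encoding`, so run this against CSS, JS, or HTML responses only.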

LCP fixes: images, fonts, critical CSS, and render-blocking resources

If your LCP is a large hero image, that is usually the first place I look. Often, the image is 2400 pixels wide but displayed at 400 pixels on mobile.

Quick Wins for LCP:

- Format: Serve images in AVIF or WebP.

- Preload: Add `<link rel="preload">` for the hero image and primary font.

- Priority: Use `fetchpriority="high"` on the LCP image.

- Lazy Load: Ensure images below the fold are lazy-loaded, but never lazy-load the hero image.
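These quick wins are easy to check mechanically. Here is a rough Python sketch using the standard-library HTML parser; it assumes the first `<img>` in the markup is the LCP candidate, which is a simplification (Lighthouse tells you the real LCP element). The class and function names are illustrative.

```python
from html.parser import HTMLParser


class HeroImageAudit(HTMLParser):
    """Collects <img> tags and image preload hints in document order."""

    def __init__(self):
        super().__init__()
        self.images = []        # attribute dicts, one per <img>
        self.preloaded = set()  # hrefs of <link rel="preload" as="image">

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "img":
            self.images.append(a)
        elif tag == "link" and a.get("rel") == "preload" and a.get("as") == "image":
            self.preloaded.add(a.get("href"))


def audit_hero(html: str) -> list[str]:
    """Flag the LCP quick wins that the hero image is missing."""
    parser = HeroImageAudit()
    parser.feed(html)
    if not parser.images:
        return ["no <img> found"]
    hero = parser.images[0]  # assumption: first image is the LCP candidate
    findings = []
    if hero.get("loading") == "lazy":
        findings.append("hero image is lazy-loaded")
    if hero.get("fetchpriority") != "high":
        findings.append("hero image lacks fetchpriority=high")
    if hero.get("src") not in parser.preloaded:
        findings.append("hero image is not preloaded")
    return findings
```

Run it against the rendered HTML of one page per template; a clean result is an empty list.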

INP fixes: reduce JavaScript work and third-party drag

Interaction to Next Paint (INP) measures responsiveness. If you click a filter and the page freezes for a second, that is a poor INP. The usual suspects are heavy JavaScript execution and third-party tracking scripts.

Action Items:

- Identify long tasks (over 50ms) in Chrome DevTools.

- Defer or delay non-essential third-party scripts (chat widgets, excessive tracking).

- Implement code splitting so users only download the JS needed for the current page.
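To decide which scripts to defer first, I find it useful to total up the blocking portion of each long task. The sketch below assumes you have exported task durations from a DevTools trace into simple (label, milliseconds) pairs; that input shape and the function name are my own invention, not a DevTools format.

```python
LONG_TASK_MS = 50  # tasks longer than this count as "long tasks"


def summarize_long_tasks(tasks: list[tuple[str, float]]) -> dict:
    """Summarise main-thread tasks given as (script label, duration in ms)."""
    long_tasks = [(label, ms) for label, ms in tasks if ms > LONG_TASK_MS]
    # Only the time beyond the 50 ms budget counts as blocking.
    blocking_ms = sum(ms - LONG_TASK_MS for _, ms in long_tasks)
    worst_first = sorted(long_tasks, key=lambda task: task[1], reverse=True)
    return {
        "count": len(long_tasks),
        "blocking_ms": blocking_ms,
        "worst_first": worst_first,
    }


if __name__ == "__main__":
    # Hypothetical trace excerpt.
    trace = [("chat-widget.js", 180), ("app.js", 40), ("analytics.js", 90)]
    print(summarize_long_tasks(trace))
```

The script at the top of `worst_first` is usually the first candidate for deferral or removal.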

CLS/VSI basics: prevent layout shifts that break trust

Nothing erodes trust faster than a button moving right as someone tries to click it. This is Cumulative Layout Shift (CLS). The new Visual Stability Index (VSI) takes this further by looking at shifts throughout the session.

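The Fix: Always reserve space for images, ads, and embeds by setting explicit width and height attributes. Also, ensure web fonts use `font-display: swap` (or `optional`) so text stays visible while fonts load. Of the two, `optional` is the safer choice for layout stability, because a late font swap can itself shift text.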
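A quick way to find unreserved space is to scan a template’s HTML for media tags that lack both attributes. This is a minimal sketch with names of my own choosing; it only inspects HTML attributes, so elements sized through CSS (for example with `aspect-ratio`) will show up as false positives.

```python
from html.parser import HTMLParser


class UnsizedMediaFinder(HTMLParser):
    """Finds <img>, <iframe>, and <video> tags without width and height."""

    TAGS = {"img", "iframe", "video"}

    def __init__(self):
        super().__init__()
        self.unsized = []  # (tag, src) pairs

    def handle_starttag(self, tag, attrs):
        if tag not in self.TAGS:
            return
        a = dict(attrs)
        if not (a.get("width") and a.get("height")):
            self.unsized.append((tag, a.get("src") or ""))


def find_unsized_media(html: str) -> list[tuple[str, str]]:
    """Return media elements that do not reserve their own space."""
    finder = UnsizedMediaFinder()
    finder.feed(html)
    return finder.unsized
```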

Step 3 — Security audit checklist: the baseline trust signals Google expects

I treat security like seatbelts—nobody brags about them, but you don’t drive without them. Google expects a secure baseline. If these are missing, you risk browser warnings that kill traffic instantly.

HTTPS enforcement and redirect hygiene

It is not enough to just “have” an SSL certificate. You must enforce HTTPS site-wide. I often find mixed content warnings where an old image is hardcoded as http:// on an https:// page.

Checklist:

- Verify valid SSL certificate (check expiry dates).

- Ensure all HTTP requests 301 redirect to HTTPS.

- Scan for mixed content (resources loaded over HTTP).

- Check canonical tags to ensure they reference the HTTPS version.
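The mixed-content and canonical checks can be scripted per page. The sketch below is an illustration using the standard-library parser, with hypothetical class and function names; it covers `src` attributes and `<link href>` only, so resources referenced from inside CSS or JavaScript need a crawler or the browser console.

```python
from html.parser import HTMLParser


class HttpsHygiene(HTMLParser):
    """Collects insecure sub-resources and the canonical URL from one page."""

    def __init__(self):
        super().__init__()
        self.mixed = []        # (tag, url) pairs loaded over plain HTTP
        self.canonical = None  # href of <link rel="canonical">, if present

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "link" and a.get("rel") == "canonical":
            self.canonical = a.get("href")
            return
        # Sub-resources only: any src, plus href on <link> (stylesheets, icons).
        # A plain <a href="http://..."> is navigation, not mixed content.
        url = a.get("src") or (a.get("href") if tag == "link" else None)
        if url and url.startswith("http://"):
            self.mixed.append((tag, url))


def check_https_hygiene(html: str) -> list[str]:
    """Return mixed-content and canonical findings for one HTTPS page."""
    parser = HttpsHygiene()
    parser.feed(html)
    findings = [f"mixed content: <{tag}> loads {url}" for tag, url in parser.mixed]
    if parser.canonical and parser.canonical.startswith("http://"):
        findings.append(f"canonical is not HTTPS: {parser.canonical}")
    return findings
```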

HSTS, certificate monitoring, and staging exposure

HTTP Strict Transport Security (HSTS) is a header that tells browsers, “Only talk to me securely, ever.” It prevents downgrade attacks. While you are at it, check your staging environment. I have seen staging sites outrank production for brand queries because someone forgot to password-protect them or add a noindex tag. Don’t let that be you.

Step 4 — Mobile usability audit checklist (mobile-first indexing rules everything)

Google predominantly uses the mobile version of the content for indexing and ranking. If your desktop site is rich and structured, but your mobile site cuts corners, your rankings will suffer.

Content parity and hidden elements (what Google actually indexes)

I always spot-check parity. Does the mobile version have the same internal links, the same structured data, and the same primary content as desktop? It is okay to use accordions (tap-to-expand) on mobile, as long as the content is in the HTML. However, removing content entirely to “save space” is an SEO risk.
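For the internal-link part of the parity check, a simple set difference does most of the work. This sketch assumes you have already saved the rendered HTML of the same URL fetched with a desktop and a mobile user agent; the function names are hypothetical.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects the href of every <a> tag on a page."""

    def __init__(self):
        super().__init__()
        self.links = set()

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.add(href)


def collect_links(html: str) -> set[str]:
    collector = LinkCollector()
    collector.feed(html)
    return collector.links


def links_missing_on_mobile(desktop_html: str, mobile_html: str) -> list[str]:
    """Return links present in the desktop HTML but absent from mobile."""
    return sorted(collect_links(desktop_html) - collect_links(mobile_html))
```

Links hidden inside a collapsed accordion still appear in the HTML, so they correctly do not show up as missing.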

Touch, readability, and UX friction checks

If I can’t tap your phone number without zooming in, that represents a lost lead. Google retired the GSC “Mobile Usability” report in December 2023, so I now check these issues with Lighthouse and on a real device.

- Tap Targets: Buttons and links should be at least 48×48 pixels and spaced apart.

- Viewport: Ensure the `meta name="viewport"` tag is set correctly.

- Font Size: Base font size should be at least 16px to avoid “text too small to read” errors.

- Interstitials: Ensure pop-ups do not cover the main content immediately upon load (intrusive interstitials penalty).

Step 5 — Structural checks most audits miss (but they control crawl, quality, and growth)

You can fix every speed metric, but if your site architecture is a mess, you are building a fast house on a swamp. These structural issues confuse search engines about which pages are important.

| Issue | How I detect it | Fix | Validation |
|---|---|---|---|
| Index Bloat | GSC “Indexed, not submitted in sitemap” | Noindex low-value tags/filters | Index count drops |
| Orphan Pages | Crawling tool (Screaming Frog) | Add internal links / Add to sitemap | Crawler finds page |
| Content Decay | GA4/GSC (Traffic drop > 6mo) | Refresh, Merge, or Prune | Traffic stabilizes |
| Canonical Chaos | Audit tool “Non-indexable” check | Set self-referencing canonicals | GSC acceptance |

Index bloat and crawl waste: how to find it fast

Index bloat happens when Google indexes pages that have no value—like thousands of WordPress tag pages, search result URLs, or weird parameter combinations. This wastes crawl budget. If you have 500 pages of content but 5,000 pages indexed, you have bloat. Use the noindex directive to tell Google to ignore these low-quality URLs.
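To size the problem quickly, I run the list of indexed URLs through a pattern check. The prefixes and parameter names below are hypothetical examples of typical bloat sources; adjust them to your own CMS before trusting the ratio.

```python
from urllib.parse import parse_qs, urlparse

# Hypothetical patterns; tune these to your own site.
BLOAT_PATH_PREFIXES = ("/tag/", "/search/")
BLOAT_PARAMS = {"s", "sort", "filter", "utm_source"}


def is_bloat_candidate(url: str) -> bool:
    """True if the URL matches a low-value path or carries a bloat parameter."""
    parsed = urlparse(url)
    if parsed.path.startswith(BLOAT_PATH_PREFIXES):
        return True
    params = parse_qs(parsed.query, keep_blank_values=True)
    return bool(BLOAT_PARAMS.intersection(params))


def bloat_ratio(indexed_urls: list[str]) -> float:
    """Share of indexed URLs that look like noindex candidates."""
    if not indexed_urls:
        return 0.0
    flagged = [url for url in indexed_urls if is_bloat_candidate(url)]
    return len(flagged) / len(indexed_urls)
```

Treat the output as a candidate list, not a verdict: a flagged URL that earns traffic or links should be reviewed by hand before it gets a `noindex`.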

Internal linking hierarchy and orphan pages

Internal links are the wires that pass authority (PageRank) through your site. An “orphan page” is a page with zero internal links pointing to it. If you can’t reach a key service page in 2–3 clicks from the homepage, Google is likely to treat it as less important. I usually fix this by adding “Related Articles” modules or updating the footer/navigation.
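Click depth is just a breadth-first search over the internal link graph. The sketch below assumes you have exported that graph from a crawler as a dictionary of page to outgoing links; the data shape and function names are my own. It reports pages a crawl from the homepage never reaches, which includes true orphans as well as pages linked only from other unreachable pages.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], home: str) -> dict[str, int]:
    """Minimum number of clicks from the homepage to each reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def find_unreachable(links: dict[str, list[str]], all_pages: list[str], home: str) -> list[str]:
    """Pages in the sitemap (all_pages) that the link graph never reaches."""
    return sorted(set(all_pages) - set(click_depths(links, home)))


def too_deep(links: dict[str, list[str]], home: str, max_clicks: int = 3) -> list[str]:
    """Reachable pages that sit more than max_clicks from the homepage."""
    return sorted(p for p, d in click_depths(links, home).items() if d > max_clicks)
```

Use your XML sitemap as `all_pages`; anything in the sitemap but missing from the crawl is the list to fix first.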

Canonical inconsistencies and content decay: keep signals consolidated

Content decay is silent. Pages that used to rank well slowly lose relevance. I keep a running list of “pages that used to work” and revisit them quarterly. Also, ensure every page has a self-referencing canonical tag unless it is a deliberate duplicate. This prevents scrapers and parameter URLs from confusing Google.

Troubleshooting + automation: how I speed up audits with AI (without losing quality)

I use automation to save time on repeating patterns, but I still validate every fix with real-user data. Tools like Kalema are excellent for standardizing checklists and helping draft the actual developer tickets needed to fix these issues. AI can quickly cluster Lighthouse issues or suggest optimized code snippets for image loading.

However, AI cannot judge context. It might suggest removing “unused CSS” that is actually used for a critical pop-up that loads later. Use AI to speed up the diagnosis and ticket writing, but always have a human verify the implementation.

What I automate vs what I always verify manually

- Automate: Issue discovery, grouping URLs by template, drafting Jira tickets, and regression testing (did the score drop?).

- Manual Verify: Visual stability checks (looking at the phone), deciding which pages to `noindex`, and reviewing security headers.

Common mistakes, FAQs, and my next-step plan (so you can execute this week)

Audits can be overwhelming. To keep you moving, here are the most frequent pitfalls I see and a plan to get you started.

Common mistake #1: Optimizing only for Lighthouse score (and ignoring real users)

I once saw a team celebrate a “100” Performance score in Lighthouse, only to realize their real-user field data (CrUX) showed a failing grade. Why? They tested on a fast corporate Wi-Fi connection, while their users were on spotty 4G. Always trust GSC/CrUX data over a lab score.

Common mistake #2: Fixing images but ignoring TTFB (the hidden bottleneck)

You can compress images all day, but if your server takes 1.5 seconds to even start sending data, you will never pass Core Web Vitals. If TTFB is high, focus on server caching and CDN configuration first.

Common mistake #3: Letting third-party scripts destroy INP

Marketing teams love adding tracking pixels. But if you have 20 different trackers firing on page load, the browser gets clogged, destroying your INP score. Implement a tag policy: if a script doesn’t drive revenue or critical data, it goes.

Common mistake #4: “Mobile-friendly” design that still fails mobile-first indexing

Just because a site shrinks down doesn’t mean it works. I often see mobile sites where the text is readable, but the clickable elements are too close together. Test on a real device, not just by resizing your browser window.

Common mistake #5: Missing the structural problems (index bloat, orphan pages, canonicals)

I’d rather fix 20 orphan pages that matter than shave 50ms off a page nobody visits. Don’t let the pursuit of speed blind you to basic architectural health.

FAQ: What’s new about Core Web Vitals 2.0 compared to the original metrics?

In plain English: Google is getting better at measuring the entire experience, not just the initial load. CWV 2.0 retains the core metrics (LCP, INP, CLS) but adds predictive scoring and session-based analysis to understand if a user had a smooth experience throughout their entire visit.

FAQ: What security elements should a Core Vitals audit include?

At a minimum: HTTPS enforcement, valid SSL certificates, checking for mixed content, and HSTS headers. These ensure user safety and prevent browser warnings.

Recap + next actions (my 30-minute plan to get started)

You don’t need perfection today—you just need a process. If you want to use this technical SEO audit checklist effectively, here is your plan for the next 30 minutes:

- Recap: We measure field data first, fix speed/security/mobile issues on a template level, clean up structural bloat, and then validate.

- Action 1: Open GSC and look at the Core Web Vitals report. Identify your poorest-performing group of URLs.

- Action 2: Run a PageSpeed Insights test on one representative URL from that group to find the specific technical cause.

- Action 3: Check Kalema to see how you can document these findings into a clear SOP for your team.

Start with the templates that drive your business. Fix the door, lock it tight, and make sure the hallway is clear.