Content Pruning for SEO: ROI of Deleting Pages That Drag

The ROI of Deletion: content pruning for SEO (and why I treat it like revenue work, not housekeeping)

There is a specific moment in almost every site audit I perform where the mood shifts. We’re looking at a spreadsheet of 2,000 or 5,000 URLs, and I point out that 30% of them haven’t received a single organic visit in six months. The initial reaction is usually defensive—“We spent money writing those!”—but the data doesn’t negotiate.

I view content pruning for SEO not as digital janitorial work, but as capital reallocation. When you have hundreds of low-performing pages, you aren’t just storing old files; you are actively diluting your site’s authority and wasting Google’s crawl budget on dead ends. In my experience, sites that publish endlessly without pruning eventually hit a “content plateau,” where new articles struggle to index and rankings stagnate despite good technical health.

This article isn’t just about deleting pages. It is about the ROI of focus. We will cover exactly what content pruning is, the five specific actions I use (deletion is just one), and the SEO mechanisms that drive ranking lifts. Most importantly, I’ll share the exact ROI formula I use to get CFOs on board, along with a step-by-step audit workflow and decision matrix you can apply this week to stop the drag on your performance.

What content pruning for SEO is (and how it’s different from simply deleting posts)

If you imagine your website as a garden—a cliché, I know, but it works—simply letting everything grow wild results in a tangled mess where nothing thrives. Content pruning is the strategic process of evaluating every URL on your site and deciding its future based on performance and utility. It is not just a mass deletion event.

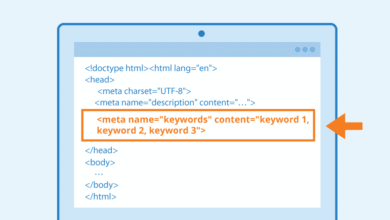

Effective pruning for SEO involves a mix of actions designed to consolidate authority and improve user experience. While deletion is the most drastic measure, it is often the last resort. The goal is to present Google with a leaner, denser site where every page serves a clear purpose, demonstrates experience and expertise (E-E-A-T), and satisfies user intent. When you prune correctly, you address issues like keyword cannibalization, wasted link equity, and pages stuck in the “Discovered – currently not indexed” status in Google Search Console.

Deletion vs redirect vs consolidation vs noindex vs refresh: the five actions I actually use

I don’t just look for pages to kill. I sort URLs into five distinct buckets. Here is the logic I use for each:

- Deletion (404/410): I choose this when a page has zero traffic, zero backlinks, no historical value, and contains outdated information that cannot be salvaged (e.g., a 2017 event announcement).

- 301 Redirect: I choose this when a page has traffic or backlinks but is redundant. I point it to the most relevant current equivalent to preserve its link equity.

- Consolidation: I choose this when I find 2–3 weaker posts competing for the same topic. I merge the best parts into one “pillar” page and redirect the others to it.

- Noindex: I choose this for pages that are useful for users but useless for search engines, such as tag archives, thank-you pages, or internal admin logins.

- Refresh: I choose this when a page targets a valid keyword and has good bones but is slipping in rankings due to outdated data or thin content.

When pruning is the right move for a US business site (and when it’s not)

Before I touch a single URL, I run a “smell test” to ensure we aren’t deleting business value that doesn’t show up in SEO metrics.

Pruning is usually the right move when:

- You have a legacy blog running for 5+ years with unmaintained posts.

- You have migrated domains and carried over thousands of URLs blindly.

- You see evidence of cannibalization (multiple pages swapping rankings for one query).

I pause and reconsider when:

- Sales Enablement: If the Sales team uses a zero-traffic PDF or blog post in their email sequences, it stays.

- Legal/Compliance: Privacy policies or mandatory disclosures often have low engagement but are legally required.

- Seasonal Content: A “Black Friday” page might look dead in July. I never delete these; I update the year and keep the URL stable.

Why pruning boosts rankings: the SEO mechanisms I watch (crawl budget, cannibalization, and quality signals)

Clients often ask, “How does having fewer pages make me rank higher?” It seems counterintuitive until you look at the mechanics of how search engines operate. Google’s resources are finite, and its recent Helpful Content and Core updates have made it clear that it prioritizes quality over quantity. Pruning works by optimizing three specific levers: crawl efficiency, link equity distribution, and sitewide quality signals.

Crawl efficiency and indexation: fewer wasted URLs, faster attention on important pages

Googlebot has a “crawl budget”: an approximate number of pages it is willing to crawl on your site within a given timeframe. If your site is bloated with 5,000 low-quality tag pages or parameter URLs, Google spends its time there instead of finding your new, high-value product pages. I often see this manifest in GSC as “Discovered – currently not indexed.” By pruning the junk, I steer Google toward the content that actually drives revenue. Sites that prune 15–20% of underperforming pages often see significantly better indexation rates for their remaining content.

Authority and link equity: preserving what’s valuable with consolidation and redirects

This is where the “deletion” myth gets dangerous. If you simply delete a page that has 50 external backlinks, you are throwing away authority. That link equity evaporates into a 404 error. By using 301 redirects or content consolidation, I preserve that equity and channel it into a stronger page. Imagine taking the “juice” from five mediocre posts and pouring it all into one master guide. That guide is now significantly stronger than any of the individual posts were.

Quality + UX: pruning thin/outdated pages can lift engagement and Core Web Vitals

Google evaluates sites holistically. If a large percentage of your indexed URLs are thin content (under 400–500 words of unique value) or have high bounce rates, it can drag down the “quality score” of the entire domain. Pruning these pages removes the dead weight. Furthermore, removing heavy, unused legacy templates can improve Core Web Vitals scores like CLS and LCP by 20–30%. I don’t want Google spending its time on pages I wouldn’t be proud to send a customer to.

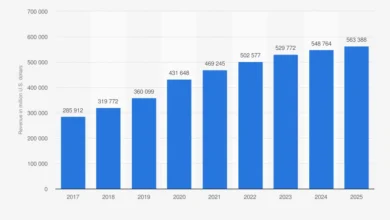

The ROI of deletion: how I quantify content pruning for SEO in traffic, conversions, and dollars

Getting buy-in for pruning is hard because it looks destructive. To get approval from a CMO or VP of Growth, I never frame it as “cleaning up.” I frame it as “revenue optimization.” I use a specific formula to project the Return on Investment (ROI) of a content audit.

When you have a leaner site, you can focus your resources on high-impact pages. This is where tools like SEO content generator platforms come in handy—not to create clutter, but to help you rapidly rebuild and upgrade the priority pages that survive the cut. Similarly, using a smart AI SEO tool can help you identify gaps in your refreshed content, ensuring the new versions are significantly better than what you removed.

A simple ROI formula I use (with a beginner-friendly example)

I calculate the potential upside by estimating the lift on the remaining or consolidated pages. I typically assume I’m wrong, so I discount the upside to be safe. Here is the basic logic:

(Estimated Incremental Traffic × Conversion Rate × Value per Conversion) − (Audit Hours × Hourly Cost) = Projected Net ROI

Example:

Let’s say we consolidate 10 weak posts into 1 pillar page. We expect the new pillar to rank higher and bring in an extra 500 visits/month. If your conversion rate is 2% and a lead is worth $100:

- Gain: 500 visits × 0.02 × $100 = $1,000/month extra value.

- Cost: It takes 5 hours to audit and rewrite. At $100/hr internal cost, that’s $500 one-time.

- Result: You pay back the effort in two weeks, and everything after is profit.
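If you would rather script this than work it out by hand, here is a minimal sketch of the formula above in Python. The function name, argument names, and the `discount` parameter (my way of modeling “assume I’m wrong”) are illustrative assumptions, not a standard tool.

```python
def pruning_roi(extra_visits_per_month, conversion_rate, value_per_conversion,
                audit_hours, hourly_cost, discount=1.0):
    """Return (monthly_gain, one_time_cost, payback_months).

    discount is a hypothetical safety factor: 0.5 means "count only half
    of the projected lift".
    """
    monthly_gain = (extra_visits_per_month * conversion_rate
                    * value_per_conversion * discount)
    one_time_cost = audit_hours * hourly_cost
    # Avoid dividing by zero when no lift is projected.
    if monthly_gain > 0:
        payback_months = one_time_cost / monthly_gain
    else:
        payback_months = float("inf")
    return monthly_gain, one_time_cost, payback_months


# The worked example: 500 extra visits, 2% conversion, $100 per lead,
# 5 hours of work at $100/hr.
gain, cost, payback = pruning_roi(500, 0.02, 100, 5, 100)
print(f"gain ${gain:,.0f}/mo, cost ${cost:,.0f}, payback {payback:.1f} months")
```

With the example numbers this gives a $1,000 monthly gain against a $500 one-time cost, so payback lands at half a month. Passing `discount=0.5` halves the gain and stretches payback to a full month, which is the more conservative figure I would show a CFO.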

Table: content pruning ROI worksheet (copy/paste template)

You can copy this structure into Google Sheets to track your work. I recommend tracking the baseline for 28–90 days before making changes so you have clean data.

| Page / Cluster | Current Clicks (30d) | Proposed Action | Est. Effort (Hrs) | Projected Lift (%) | Exp. Value ($) | Notes |
|---|---|---|---|---|---|---|
| /blog/legacy-tools-list | 15 | Consolidate into Pillar | 3 | +150% | $450/mo | Merge 4 old posts |
| /blog/2018-event | 0 | Delete (410) | 0.2 | N/A | $0 | Crawl budget save |
| /services/old-offer | 5 | Redirect (301) | 0.5 | +10% (retained) | $50/mo | Redirect to main service |

What ROI looks like in the real world (case snapshots)

Results vary, but the pattern is consistent when pruning is disciplined. For instance, QuickBooks famously deleted about 2,000 legacy posts (nearly 40% of their blog) and saw a 20% lift in traffic within weeks, which eventually peaked at a 44% increase.

In another case, HomeScienceTools removed 10% of their blog content, resulting in a 104% jump in organic sessions and a significant boost in revenue. I’ve personally seen a SaaS client prune 40% of their blog to gain an estimated $78K MRR impact from a relatively small audit investment. The lesson? Less is often more.

My step-by-step workflow for a content audit that leads to safe pruning (tools + metrics)

A good audit requires a process that protects you from making emotional decisions. I use a specific stack of tools—Google Search Console (GSC), Google Analytics (GA4), and a crawler like Screaming Frog—to get the truth. I recommend timeboxing your first pass: spend 60–90 minutes just flagging obvious candidates so you don’t get overwhelmed.

When you reach the stage of rebuilding or refreshing content, using an AI article generator can significantly speed up the drafting process for your new consolidated pieces. However, the strategy comes first.

Step 1: pull a full URL inventory (and separate templates from content)

First, I crawl the site (using Screaming Frog or similar) and cross-reference it with the XML sitemap. I export this list to a spreadsheet. I immediately add columns for Content Type (Blog, Landing Page, Help Center) and Topic Cluster. A practical trick I use is to color-code rows by folder path (e.g., /blog/ vs /products/) so patterns pop immediately. I also separate technical URLs (like tag archives or internal search results) from actual content pages.
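The inventory step can be sketched in a few lines of Python once the crawl and sitemap are exported as plain URL lists. The folder-to-type mapping and the “technical” path prefixes below are hypothetical placeholders, so swap in your own site’s structure.

```python
from urllib.parse import urlparse

# Hypothetical mapping from first folder to Content Type; adjust per site.
CONTENT_TYPES = {"blog": "Blog", "products": "Landing Page", "help": "Help Center"}
# Hypothetical prefixes that mark template/technical URLs rather than content.
TECHNICAL_PREFIXES = ("/tag/", "/search", "/page/")


def classify_url(url):
    """Label one URL with a content type and whether it is real content."""
    parsed = urlparse(url)
    path = parsed.path or "/"
    # Assumption: parameter URLs and archive-style paths count as technical.
    if parsed.query or path.startswith(TECHNICAL_PREFIXES):
        return {"url": url, "content_type": "Technical", "is_content": False}
    first_folder = path.strip("/").split("/")[0]
    content_type = CONTENT_TYPES.get(first_folder, "Other")
    return {"url": url, "content_type": content_type, "is_content": True}


def compare_sources(crawled_urls, sitemap_urls):
    """Cross-reference the crawl with the XML sitemap."""
    crawled, sitemap = set(crawled_urls), set(sitemap_urls)
    return {
        "crawl_only": sorted(crawled - sitemap),    # live but not in sitemap
        "sitemap_only": sorted(sitemap - crawled),  # listed but not reachable
    }
```

URLs that show up in only one source are worth a look before anything else: a page in the sitemap that the crawler never reached is often an orphan.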

Step 2: join performance data (GSC + Analytics) so decisions are evidence-based

Next, I map performance data to each URL. If you’re new to this, don’t overthink the metrics. Start with the basics from the last 6–12 months:

- Impressions (GSC): Is anyone even seeing this in search?

- Clicks (GSC): Is it driving traffic?

- Conversions/Assists (GA4): Is it making money?

- Backlinks (Ahrefs/SEMrush): Does it have authority?

If a page has zero clicks but 50 conversions, it’s a keeper (likely a support or sales page). If it has high impressions but zero clicks, the title/intent is wrong—that’s a “Refresh,” not a “Delete.”
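As a rough illustration of the join, here is a sketch that merges exports onto the inventory by URL and applies the two rules of thumb above. The field names and the `high_impressions` threshold are assumptions of mine, and the sketch presumes URLs have already been normalized to the same form across exports.

```python
def join_performance(inventory, gsc_rows, ga4_rows, backlink_rows):
    """Left-join metrics onto the URL inventory; missing metrics become 0."""
    gsc = {r["url"]: r for r in gsc_rows}
    ga4 = {r["url"]: r for r in ga4_rows}
    links = {r["url"]: r for r in backlink_rows}
    joined = []
    for url in inventory:
        joined.append({
            "url": url,
            "impressions": gsc.get(url, {}).get("impressions", 0),
            "clicks": gsc.get(url, {}).get("clicks", 0),
            "conversions": ga4.get(url, {}).get("conversions", 0),
            "backlinks": links.get(url, {}).get("backlinks", 0),
        })
    return joined


def first_read(row, high_impressions=1000):
    """Apply the two sanity rules; high_impressions is an assumed cutoff."""
    if row["clicks"] == 0 and row["conversions"] > 0:
        return "keep"      # converts without search traffic
    if row["clicks"] == 0 and row["impressions"] >= high_impressions:
        return "refresh"   # seen in search but never clicked
    return "review"


rows = join_performance(
    ["/support/setup", "/blog/old-guide"],
    gsc_rows=[{"url": "/blog/old-guide", "impressions": 4000, "clicks": 0}],
    ga4_rows=[{"url": "/support/setup", "conversions": 50}],
    backlink_rows=[],
)
for row in rows:
    print(row["url"], first_read(row))
```

The left join matters: a URL that is missing from the GSC export entirely should appear with zeros, not silently vanish from the sheet.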

Step 3: flag candidates with simple rules (thin, outdated, duplicate intent, low value)

Now I run the “shortlist.” I filter the spreadsheet to find rows that meet my specific criteria for pruning. A flag isn’t a verdict—it’s just a reason to look closer. I flag pages that:

- Have 0 clicks and impressions for 6+ months.

- Are “thin content” (<400–500 words) with low engagement/time-on-page.

- Have no internal links or external backlinks (Orphan pages).

- Are clearly outdated (e.g., “Best trends for 2019”).
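The four flags above translate into simple rules. This is a sketch under assumed column names (`clicks_6m`, `word_count`, and so on), with the lower bound of the thin-content range used as the default; it returns reasons to look closer, never a verdict.

```python
import re


def flag_reasons(row, current_year, thin_words=400):
    """Return the reasons a URL deserves a closer look (empty list = no flag)."""
    reasons = []
    if row["clicks_6m"] == 0 and row["impressions_6m"] == 0:
        reasons.append("no clicks or impressions in 6+ months")
    if row["word_count"] < thin_words and row["low_engagement"]:
        reasons.append("thin content with low engagement")
    if row["internal_links"] == 0 and row["backlinks"] == 0:
        reasons.append("orphan page")
    # Assumption: a year in the title older than the current one hints at
    # outdated content, e.g. "Best trends for 2019".
    years = [int(y) for y in re.findall(r"\b(20\d{2})\b", row["title"])]
    if years and max(years) < current_year:
        reasons.append("dated title")
    return reasons


example = {
    "title": "Best trends for 2019",
    "clicks_6m": 0, "impressions_6m": 0,
    "word_count": 350, "low_engagement": True,
    "internal_links": 0, "backlinks": 0,
}
print(flag_reasons(example, current_year=2025))
```

Keeping the reasons as a list, rather than a single yes/no, makes the later manual review faster because the reviewer sees why each row was shortlisted.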

Step 4: map each URL to search intent and a topic cluster (to spot cannibalization)

This is the most critical step for fixing cannibalization. I look at URLs within the same topic cluster. If I see three different articles targeting “best project management tools,” I know I have a problem. They are likely splitting the traffic and confusing Google. I map the Primary Query and User Intent (Informational vs. Commercial) for each. If they overlap, they get marked for consolidation.
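Once each URL has a Primary Query and User Intent filled in, spotting overlap is a grouping exercise. The sketch below assumes those two values were assigned by hand in the spreadsheet; the key names are hypothetical.

```python
from collections import defaultdict


def find_cannibalization(pages):
    """Group URLs by (primary query, intent); return groups with 2+ URLs."""
    groups = defaultdict(list)
    for page in pages:
        # Normalize case and whitespace so near-identical labels match.
        key = (page["primary_query"].strip().lower(),
               page["intent"].strip().lower())
        groups[key].append(page["url"])
    return {key: urls for key, urls in groups.items() if len(urls) > 1}


pages = [
    {"url": "/blog/best-pm-tools", "primary_query": "best project management tools", "intent": "Commercial"},
    {"url": "/blog/top-pm-software", "primary_query": "Best project management tools", "intent": "commercial"},
    {"url": "/blog/pm-tools-2021", "primary_query": "best project management tools ", "intent": "Commercial"},
    {"url": "/blog/what-is-pm", "primary_query": "what is project management", "intent": "Informational"},
]
for (query, intent), urls in find_cannibalization(pages).items():
    print(f"{query} [{intent}]: consolidate {urls}")
```

This only catches exact query matches after normalization. Close variants (“best PM tools” vs. “top project management software”) still need a human eye or a shared cluster label.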

Step 5: assess E-E-A-T and ‘helpfulness’ (what I look for on the page)

For the pages that survived the data filter, I do a manual spot check. I ask: Does this page demonstrate Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T)? Does it have a clear author? Are the statistics recent? If I can’t explain exactly who the page is for, or if I wouldn’t show it to a client, it’s a candidate for removal or a heavy rewrite.

Step 6: plan the update path (refresh, merge, or rebuild) and draft faster when needed

Finally, I assign an action to every URL. The output is a project plan. For pages marked “Refresh” or “Consolidate,” I create a content brief. I prioritize the “quick wins”—pages that just need a title tweak or a few fresh paragraphs—before tackling the massive consolidation projects. I keep a “sources to verify” checklist so that our updates don’t accidentally introduce new inaccuracies.

The action playbook: a decision matrix for content pruning for SEO (delete, redirect, consolidate, noindex, refresh)

Analysis is useless without action. I use a decision matrix to determine exactly what happens to a URL based on its signals. This standardized approach keeps the team aligned and reduces the fear of “deleting the wrong thing.”

When you are dealing with hundreds of pages that need to be refreshed or consolidated, operations can get messy. This is where a bulk article generator can help you operationalize the updates at scale, so you can publish the refreshed versions consistently without stalling your calendar.

Table: decision matrix (scenario → best action → how I implement → risk)

| Scenario / Signals | Best Action | Implementation Steps | Primary Risk |
|---|---|---|---|
| Zero traffic, No backlinks, Outdated/Low quality | Delete (410) | Remove page; update internal links to remove references; ensure 410 status. | Minimal (if truly no backlinks). |
| High traffic/backlinks, but Wrong Intent or Old | Refresh / Update | Keep URL same; rewrite content; update publish date. | Losing rankings temporarily during crawl. |
| Multiple pages targeting same keyword | Consolidate | Merge content to strongest URL; 301 redirect others to it. | Redirect chains; losing nuance of sub-topics. |
| Valuable to users, Zero SEO value (Tags, Admin) | Noindex | Add meta robots “noindex”; keep page live. | Accidentally noindexing vital pages. |
| Good backlinks, but Content is irrelevant | 301 Redirect | Redirect to closest relevant category or parent page. | Soft 404s if destination isn’t relevant. |
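For teams that keep the audit in a script rather than a sheet, the matrix can be expressed as one function. This is a sketch only: the field names are hypothetical, and the order in which the rules are checked is my own assumption, since the matrix itself does not rank the scenarios.

```python
def choose_action(row):
    """Map one audited URL to an action from the decision matrix."""
    if row["utility_only"]:          # useful to users, no SEO value (tags, admin)
        return "noindex"
    if row["duplicate_intent"]:      # several pages target the same keyword
        return "consolidate"
    has_equity = row["clicks"] > 0 or row["backlinks"] > 0
    if has_equity and row["topic_relevant"]:
        return "refresh"             # keep the URL, rewrite the content
    if row["backlinks"] > 0:
        return "301 redirect"        # links worth keeping, content is not
    if not has_equity and row["outdated"]:
        return "delete (410)"
    return "manual review"           # nothing matched cleanly


old_event = {"clicks": 0, "backlinks": 0, "outdated": True,
             "duplicate_intent": False, "utility_only": False,
             "topic_relevant": False}
print(choose_action(old_event))
```

The final “manual review” fallback is deliberate: a URL that fits none of the five scenarios should go to a person, not be forced into the nearest bucket.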

How I handle on-page SEO when consolidating (titles, headings, schema, internal links)

When I merge three posts into one, the new page needs to work harder. I rewrite the Title Tag and H1 to be broader, covering the full scope of the merged intent. I structure the headings (H2s, H3s) to cover the subtopics that used to be separate posts. Crucially, I update internal links across the site. If other posts linked to the deleted URLs, I update those links to point directly to the new consolidated URL, rather than relying on the redirect.

Redirect hygiene: how I avoid chains, soft 404s, and lost equity

I once saw a site lose 15% of its traffic because it had created a redirect chain: Page A redirected to B, which redirected to C, which redirected to D. Google follows only a limited number of hops before it gives up. I always ensure a 1-to-1 mapping: Old URL → Final Destination. I also watch out for “soft 404s,” which happen when I redirect a specific article to a generic homepage. Google often treats such a redirect as a 404 anyway. Always redirect to the most relevant specific page possible.
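A redirect map can be checked for chains before it ships. The sketch below assumes the map is a plain dictionary of old URL to target (for example, loaded from the redirect spreadsheet); the function name is illustrative.

```python
def flatten_redirects(redirect_map):
    """Point every old URL straight at its final destination.

    Raises ValueError if the map contains a loop.
    """
    flat = {}
    for source in redirect_map:
        seen = {source}
        target = redirect_map[source]
        # Keep following while the target is itself redirected.
        while target in redirect_map:
            if target in seen:
                raise ValueError(f"redirect loop involving {target}")
            seen.add(target)
            target = redirect_map[target]
        flat[source] = target
    return flat


chain = {"/a": "/b", "/b": "/c", "/c": "/d"}
print(flatten_redirects(chain))
```

For the A → B → C → D chain described above, every old URL ends up pointing directly at `/d`. This does not check that the final destination returns a 200 or is topically relevant, so a post-launch crawl is still needed to catch redirects to 404s and soft 404s.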

Common mistakes that make pruning backfire (and how I prevent them)

I’ve made mistakes early in my career, so I know exactly how pruning can go wrong. It usually happens when we get too aggressive or ignore the data. Here are the traps to avoid:

- Deleting pages with backlinks without redirects: This is the cardinal sin. You sever the connection to your site’s authority. Fix: Check backlink data in Ahrefs/SEMrush for every single URL before deletion.

- Pruning based on a single metric: Looking only at traffic ignores pages that assist conversions or support customers. Fix: Always cross-reference traffic, conversions, and backlinks.

- Breaking internal link structure: Removing a page that is the bridge between two clusters leaves users at a dead end. Fix: Crawl the site immediately after pruning to find broken links.

- Consolidating mismatched intent: Merging a “How-to” guide with a “Product” page usually fails because the user intent is different. Fix: Only merge informational with informational, commercial with commercial.

- Expecting overnight results: SEO is a slow ship. Fix: Wait 2–3 months before judging the success of a prune.

Mistake-to-fix checklist

- Did I check with Sales/Support? Ensure no one is using the URL in active campaigns.

- Is the Redirect Map clean? No chains, loops, or redirects to 404s.

- Did I update the Sitemap? Remove deleted URLs from your XML sitemap immediately.

- Did I annotate GA4? Add a note on the date of execution to correlate future traffic changes.

- Did I run a post-launch crawl? Use Screaming Frog to catch any 4xx or 5xx errors generated by the changes.

FAQs + my next steps checklist (so you can start pruning confidently this week)

Content pruning is one of the highest-leverage activities you can do for SEO, but it requires courage. If you only do one thing, start with the pages that haven’t earned a click in 6+ months. Pruning isn’t about destroying work; it’s about shining a light on the content that actually matters.

FAQ: Will deleting content harm my SEO?

No, not if done strategically. In fact, it typically helps by consolidating authority. The risk comes from deleting pages indiscriminately. To stay safe:

- Always redirect URLs with backlinks.

- Never delete your top traffic drivers.

- Use “noindex” instead of delete if you aren’t sure.

FAQ: How often should I prune content?

I’d rather do a lightweight quarterly sweep than a painful once-a-year overhaul. For large sites (1,000+ pages), I recommend a quarterly audit. For smaller or corporate sites, a twice-yearly check is usually sufficient to keep the bloat away.

Your Next Steps for Monday Morning:

- Export your URL list from your CMS and Sitemap.

- Pull the last 6 months of data from GSC and GA4.

- Flag the “Zero-Click” pages that have no backlinks.

- Pilot a prune on just one topic cluster (e.g., /blog/events/) to test the workflow.

- Take a first reading of your index coverage and rankings after 30 days, and hold final judgment for 2–3 months.