Lost in Translation: Cross Cultural Search Intent Mapping for Beginners

I still remember the sinking feeling I had staring at a client dashboard a few years ago. I was managing an expansion into the French market for a SaaS company. We had done everything “right” by the traditional playbook: we exported our top-performing US keywords, hired a professional translator to localize them, and launched translated versions of our best landing pages.

The rankings climbed. Traffic started flowing. But the conversion rate was flatlining at zero.

It took me two weeks to figure out why. I had translated a high-intent keyword that, in the US, meant “I want to buy software.” But in France, the direct translation of that specific term was used almost exclusively by students looking for definitions of the methodology. The intent wasn’t transactional; it was purely informational. I was trying to sell a tool to people who just wanted a dictionary definition.

That failure taught me the most important lesson in international SEO: Translation changes words, but culture changes meaning.

In the era of AI-mediated search, this is even more critical. AI engines don’t just match keywords; they match intent, context, and semantic nuance. If you are just swapping English words for Spanish or Japanese ones, you aren’t optimizing—you’re guessing. This guide is the workflow I now use to map search intent across cultures, ensuring that when we land in a new market, we actually answer the user’s real question.

Quick answer: Why mapping intent beats translating keywords

Think of keyword translation like dubbing a movie. You can translate the dialogue perfectly, but if the cultural references, humor, and emotional context aren’t adapted, the joke lands flat. Technically, it’s accurate; emotionally, it’s a void.

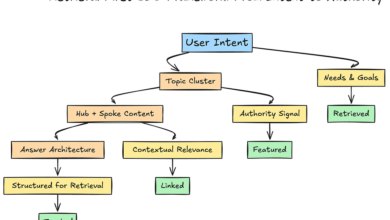

In SEO, cross cultural search intent mapping is the process of identifying the underlying motivation behind a search in a specific target market, rather than just finding a dictionary equivalent of a keyword. With AI search systems now prioritizing localized context and semantic relevance, translated pages that lack unique market intent are increasingly ignored as redundant data.

Why you can’t just translate keywords: culture changes what people mean (and what they do next)

One of the most common questions I get from stakeholders is, “Why can’t we just translate our high-volume keywords and be done with it?”

The answer lies in how different cultures process information. In communication theory, we talk about high-context and low-context cultures. This isn’t just academic theory; it directly impacts what people type into Google or ChatGPT.

In low-context cultures (like the US, Germany, or Scandinavia), communication is explicit. Users tend to search directly for what they want: “best crm for small business price.” The intent is clear, direct, and often transactional.

In high-context cultures (like Japan, much of the Arab world, or France), communication relies heavily on implicit understanding. Users might use indirect phrasing or broader terms, expecting the search engine to infer the context. A Japanese user might search for “business relationship improvement methods” when they are actually looking for CRM software. If you only target the direct translation of “software,” you miss the entire research phase of the buyer’s journey.

The consequences of ignoring this are tangible:

- Wrong Page Type: You publish a sales page when the market wants a guide.

- Low Engagement: Users bounce because the tone feels too aggressive or too vague for their cultural norms.

- Zero Conversions: You rank for traffic that has no intention of buying.

The three layers I map: language, context, and behavioral signals

When I analyze a new market, I don’t just look at the dictionary. I look at three distinct layers to understand what is really happening:

- Language Layer: The actual words, synonyms, and local slang. Watch out: Direct translations often miss the vernacular “street” terms people actually type.

- Context Layer: The invisible constraints. Is budget a taboo topic? Are there strict regulations (like GDPR in Europe) that change the user’s priority?

- Behavioral Layer: What are they clicking on? Do they prefer video, long-form text, or quick tables? Watch out: Just because a format works in the US doesn’t mean it works in Brazil.

How AI search changes global intent: exploratory, comparative, and synthesis queries dominate

We used to optimize for ten blue links. Now, we are optimizing for AI engines that read, summarize, and rewrite queries. This has fundamentally shifted how we need to look at intent.

Research suggests that AI-driven platforms are fostering new dominant intent types. It’s no longer just “informational” or “transactional.” We are seeing:

- Exploratory Intent: “Help me understand the landscape of X.” (e.g., “How do German companies handle data privacy?”)

- Comparative Research: “Weigh the pros and cons of A vs B for a specific scenario.”

- Synthesis Intent: “Give me a plan based on X, Y, and Z.”

Data indicates that up to 79% of queries are rewritten by generative AI search engines before they even fetch results. This means that if your content answers only the literal keyword, the AI might bypass you entirely because it has rewritten the query to look for a broader answer.

Furthermore, early tests show that 60–80% of pages missing multi-step answers or FAQs were excluded from AI summary features. This is a wake-up call: in an AI world, single-intent pages (like a thin glossary definition) are becoming obsolete.

What “multi-intent” content looks like (in plain English)

To survive this shift, I’ve stopped writing single-purpose pages. Instead, I structure content to satisfy multiple intents in one go. A good pattern to follow is:

Direct Answer (The “What”) → Comparison/Context (The “Vs”) → Decision Criteria (The “How to Choose”) → Proof/Steps → FAQs.

If you only do one thing differently this week, start adding a robust “People also ask”-style FAQ section to the bottom of your localized pages. It gives AI summaries ready-made question-and-answer pairs to draw on.
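One way to make an FAQ section like this easier for machines to parse is schema.org `FAQPage` markup. The sketch below builds that JSON-LD from question and answer pairs. The function name `build_faq_jsonld` and the sample pair are my own illustrations, and whether a given AI engine actually reads this markup is an assumption, not something I can promise.

```python
import json

def build_faq_jsonld(qa_pairs, language):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "inLanguage": language,  # language tag of the localized page
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }

# Illustrative pair only; on a real page both strings would be written
# in the target market's language by someone who knows that market.
faq = build_faq_jsonld(
    [("Why can't I just translate my keywords?",
      "Literal translation misses local phrasing and the intent behind the search.")],
    language="en-US",
)

# Serialize for embedding in a <script type="application/ld+json"> tag.
markup = json.dumps(faq, ensure_ascii=False, indent=2)
```

The point is to generate the markup from the same localized question list your writers produce, so the visible FAQ and the markup never drift apart.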

My step-by-step framework for cross cultural search intent mapping (from US HQ to any market)

This is the exact workflow I use. It’s designed to be run market-by-market, not language-by-language. I typically spend about 45 minutes per topic cluster on this research phase before I ever brief a writer.

Step 1: Define the market scenario (audience, job-to-be-done, constraints)

Before looking at keywords, I write down a one-paragraph hypothesis about the market. Who is searching? What is their “Job to be Done”? And crucially, what constraints are they under?

For example, in the US, a user searching for “credit card processing” might care about speed. In Germany, that same user might be obsessed with security compliance and integration with local banking standards. If I don’t map that constraint, I miss the intent. I write this down as an assumption to test.

Step 2: Collect seed queries and local variations (beyond direct translation)

I start with my English seed keywords, but I don’t just put them into Google Translate. I use native keyword research tools or look at local competitors to find the phrasing.

I look for: autosuggest results in the local language (using a VPN), local forum discussions, and “related searches.” I create a spreadsheet column for “Implied Context” next to the translated keyword. Beginner trap: Don’t trust a bilingual dictionary. It will give you the formal word, not the one people type at 2 AM.
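If you prefer to keep that spreadsheet in code, here is a minimal sketch of the layout. Only the “Implied Context” column comes from my workflow; the other column names and the `build_seed_sheet` helper are assumptions for illustration. The sample row reuses the Japanese CRM example from earlier, given in English gloss rather than real query data.

```python
import csv
import io

# Column layout is an assumption; only "implied_context" mirrors the workflow.
COLUMNS = ["seed_keyword_en", "local_query", "source", "implied_context"]

def build_seed_sheet(rows):
    """Render research rows as CSV text, rejecting rows with no implied context."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS)
    writer.writeheader()
    for row in rows:
        if not row.get("implied_context", "").strip():
            raise ValueError(f"missing implied context for {row.get('local_query')!r}")
        writer.writerow(row)
    return buffer.getvalue()

# Hypothetical row: an English gloss of an indirect query, not real search data.
rows = [
    {
        "seed_keyword_en": "crm software",
        "local_query": "business relationship improvement methods",
        "source": "autosuggest",
        "implied_context": "research phase; need not yet framed as software",
    },
]
sheet = build_seed_sheet(rows)
```

Forcing the implied-context cell to be filled in is deliberate: an empty cell usually means the row is just a dictionary translation.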

Step 3: Label intent using SERPs and content formats (what Google/AI is rewarding)

This is the most critical step. I look at the top 5 results in the local SERP and label what I see. I treat this like a hypothesis until the SERP confirms it. I record:

- Page Types: Are they blogs, product pages, PDFs, or government sites?

- SERP Features: Is there a local map pack? A “buying guide” carousel? Videos?

- Freshness: Are the top results from 2015 or last week?

If the French SERP for a software term is 80% Wikipedia-style definitions, I know the intent is informational. If the US SERP for the same term is 80% “Best of” lists, the intent is commercial investigation. I label these differently in my content plan.
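The labeling rule above can be sketched in a few lines. This is a rough illustration, not a classifier: the page-type names, the mapping to intent labels, and the 60% threshold are all assumptions you would tune per market, and the two sample SERPs are made up to mirror the 80% cases I just described.

```python
from collections import Counter

# Hypothetical mapping from a hand-labeled page type to an intent label.
PAGE_TYPE_TO_INTENT = {
    "definition": "informational",
    "guide": "informational",
    "best_of_list": "commercial investigation",
    "comparison": "commercial investigation",
    "product_page": "transactional",
}

def label_serp_intent(page_types, threshold=0.6):
    """Return (intent, share) for the dominant intent, or ("mixed", share) below threshold."""
    if not page_types:
        raise ValueError("need at least one observed result")
    intents = Counter(PAGE_TYPE_TO_INTENT.get(p, "unknown") for p in page_types)
    intent, count = intents.most_common(1)[0]
    share = count / len(page_types)
    return (intent if share >= threshold else "mixed", share)

# Made-up top-5 observations for the same term in two markets.
french_serp = ["definition", "definition", "definition", "definition", "product_page"]
us_serp = ["best_of_list", "best_of_list", "comparison", "best_of_list", "product_page"]

french_label = label_serp_intent(french_serp)
us_label = label_serp_intent(us_serp)
```

With these inputs the French SERP comes out as informational and the US SERP as commercial investigation, each at a 0.8 share. I still record the page types by hand; the code only keeps the labeling consistent across markets.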

Step 4: Map cultural cues (directness, politeness, risk, trust) to content angles

I treat this like user research. I look for tendencies in the top-ranking content. Is the tone authoritative and distant? Or is it friendly and peer-to-peer?

In high-uncertainty-avoidance cultures (like Germany or Japan), I often find that content needs to lead with risk mitigation—certifications, guarantees, and technical specs—before getting to the marketing fluff. In the US, we often lead with benefits and speed.

Step 5: Design multi-intent clusters for AI search (answer → compare → decide)

Once I have my map, I don’t just write one article. I build a cluster. I need a pillar page that answers the broad question, supported by specific comparison pages. This is where I often lean on technology to help scale the execution. I use the AI article generator from Kalema to help draft the structural skeleton of these pages. I don’t use it to replace the cultural insight I’ve just gathered; I use it to ensure every single page rigidly follows the multi-intent structure (Answer → Context → Steps) that we know AI search engines prefer.

Minimum Viable Cluster: If you are overwhelmed, start with 1 Pillar Page (The Guide) + 2 Supporting Pages (The Comparison + The Case Study).

Practical examples: mapping the same topic to different intents (with a comparison table)

Let’s look at how this plays out in the real world. Here is a comparison of how I might adapt a single seed topic, “Email Marketing Software,” across three different markets based on typical cultural intent signals.

Table: One seed topic, different cultural intent signals

| Market | User Focus (Tendency) | Likely Query Phrasing | Primary Intent | Winning Content Format |
|---|---|---|---|---|
| USA (Low Context) | Speed, ROI, Features | “Best email marketing software for small business” | Commercial / Comparison | Listicle / “Top 10” Review with heavy feature comparison. |
| Germany (Risk Averse) | Compliance (GDPR), Security, Integration | “Email marketing software DSGVO compliant” | Safety / Technical Vetting | Technical Guide highlighting server location & legal compliance. |
| Japan (High Context) | Trust, Support, Reputation | “Email delivery system reputation ranking” | Reputation / Validation | Detailed article featuring long-term case studies & vendor history. |

What surprised me the first time I mapped this out was how often the “best” page in the US (the listicle) completely failed in markets that prioritize security or relationships over feature lists.

Template: a one-page “Intent Map” I use before writing anything

Copy and paste this into your brief. I refuse to let a writer start without filling this out:

- Target Market: [e.g., France]

- User Persona: [Who are they?]

- What are they really asking? (The Core Intent): [e.g., Is this legal?]

- Top 3 SERP Competitors: [Links]

- Required Content Format: [e.g., Detailed Guide vs. Quick Tool]

- Cultural “Must-Haves”: [e.g., Mention specific local regulations]

- Trust Signals Needed: [e.g., Local reviews, certifications]
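If your briefs live in a tool rather than a document, the same template can be sketched as a small record with a completeness check. The class name `IntentMap`, the field names, and the `missing_fields` helper are my own assumptions; only the seven fields themselves come from the template above.

```python
from dataclasses import dataclass, field, fields

@dataclass
class IntentMap:
    target_market: str = ""
    user_persona: str = ""
    core_intent: str = ""                                      # "What are they really asking?"
    serp_competitors: list = field(default_factory=list)       # links to top 3 competitors
    required_format: str = ""
    cultural_must_haves: list = field(default_factory=list)
    trust_signals: list = field(default_factory=list)

    def missing_fields(self):
        """Names of fields still empty; the brief is ready when this is []."""
        missing = [f.name for f in fields(self) if not getattr(self, f.name)]
        if self.serp_competitors and len(self.serp_competitors) < 3:
            missing.append("serp_competitors (need 3)")
        return missing

# A half-finished brief using the placeholder values from the template.
draft = IntentMap(target_market="France", core_intent="Is this legal?")
todo = draft.missing_fields()
```

For the draft above, `todo` lists the persona, competitors, format, cultural must-haves, and trust signals, which is exactly the conversation I want to have before a writer starts.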

Implementation essentials: on-page SEO, localization beyond text, and local authority signals

You have your map. Now you need to publish. This is where execution often falls apart because we treat localization as a text-only exercise. To really rank in the AI era, you need to localize the entire user experience and build authority that the local market respects.

Content intelligence platforms like Kalema are game-changers here because they allow you to maintain high editorial standards across multiple languages without losing the structural integrity that AI search demands. But remember: tools amplify your strategy; they don’t replace the need for local nuance.

Localization beyond translation: UX, examples, and proof that feels local

I don’t call a page “localized” until I’ve checked these UX elements:

- Currency & Units: If I see “$” or “inches” on a European page, I immediately lose trust.

- Visuals: Do the screenshots show the software interface in English or the local language?

- Examples: If you use a generic “John Smith” example in a Brazilian article, it feels foreign. Use local names and local business scenarios.
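The currency and units check is the easiest of these to automate. Here is a rough sketch that scans page text for US-style dollar amounts and imperial units. The patterns are illustrative assumptions and far from complete, and the sample sentences are invented; treat a hit as a prompt for a human look, not a verdict.

```python
import re

# Illustrative patterns only; a real audit would need a per-market list.
US_MARKERS = {
    "dollar amount": re.compile(r"\$\s?\d+(?:[.,]\d+)?"),
    "imperial unit": re.compile(
        r"\b\d+(?:[.,]\d+)?\s?(?:inch(?:es)?|feet|ft|lbs?|miles?)\b",
        re.IGNORECASE,
    ),
}

def find_us_markers(text):
    """Return (label, matched_text) pairs for US-style currency and units."""
    hits = []
    for label, pattern in US_MARKERS.items():
        for match in pattern.finditer(text):
            hits.append((label, match.group(0)))
    return hits

# Invented sample copy for a page aimed at a European market.
untranslated = "Plans start at $49 per month. The kiosk display measures 15 inches."
localized = "Les forfaits commencent à 49 € par mois."

problems = find_us_markers(untranslated)
clean = find_us_markers(localized)
```

Visuals and local examples still need a human reviewer; I only script the parts that are mechanical.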

Local authority for AI search: citations, authorship, and market-relative trust

Here is a hard truth: Global authority doesn’t always translate. AI systems weigh market-relative trust heavily. A link from the New York Times is great, but for a German user, a citation from a respected Munich trade journal might be a stronger relevance signal.

To build this, start small:

- Local Review: Have a local expert review your content and list them as a reviewer.

- Local Citations: Cite local laws, local studies, or local news sources.

- Entity Consistency: Ensure your brand name, address, and details are consistent across local directories.
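Entity consistency is tedious to check by eye, so here is a minimal sketch of how I might compare directory listings against a reference record. The directory names, the company, and the addresses are placeholders, and the normalization (ignore case, accents, and punctuation) is an assumption about what counts as “the same.”

```python
import unicodedata

def normalize(value):
    """Casefold, strip accents and punctuation, collapse whitespace."""
    decomposed = unicodedata.normalize("NFKD", value)
    no_accents = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    cleaned = "".join(ch if ch.isalnum() else " " for ch in no_accents.casefold())
    return " ".join(cleaned.split())

def inconsistent_listings(reference, listings):
    """Return {directory: [fields that differ from the reference record]}."""
    problems = {}
    for directory, record in listings.items():
        diffs = [
            key for key, expected in reference.items()
            if normalize(record.get(key, "")) != normalize(expected)
        ]
        if diffs:
            problems[directory] = diffs
    return problems

# Placeholder brand record and listings, not real businesses or directories.
reference = {"name": "Example GmbH", "address": "Musterstr. 1, 80331 München"}
listings = {
    "directory_a": {"name": "EXAMPLE GmbH", "address": "Musterstr 1 80331 Munchen"},
    "directory_b": {"name": "Example GmbH", "address": "Beispielweg 9, 10115 Berlin"},
}
mismatches = inconsistent_listings(reference, listings)
```

Here only `directory_b` is flagged, because its address is genuinely different rather than just formatted differently.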

Common mistakes (and fixes) when mapping intent across cultures

I’ve made most of these mistakes, so hopefully, you don’t have to.

- The “One-to-One” Trap:

  Mistake: Assuming one US keyword equals exactly one foreign keyword.

  Fix: Map topics, not words. Often, one US topic splits into three specific topics in another market.

- The “Format” Fail:

  Mistake: Publishing a 3,000-word guide when the local SERP is full of calculators or tools.

  Fix: Always validate the format ranking in the top 3 positions before writing.

- The “Trust” Gap:

  Mistake: Using US-based testimonials for a Japanese landing page.

  Fix: If you don’t have local customers yet, use global logos but emphasize your local support/presence.

- The “Measurement” Error:

  Mistake: Tracking rankings but ignoring engagement.

  Fix: Track conversions by intent cluster. If rankings are high but conversions are low, you have an intent mismatch.
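The “high rankings, low conversions” rule from the last fix can be sketched as a simple filter over per-cluster numbers. Everything specific here is an assumption: the `ClusterStats` fields, the thresholds (top 5 position, at least 200 sessions, under 0.5% conversion), and the sample figures, which are invented.

```python
from dataclasses import dataclass

@dataclass
class ClusterStats:
    cluster: str
    avg_position: float  # average ranking position, lower is better
    sessions: int
    conversions: int

def flag_intent_mismatch(stats, max_position=5.0, min_sessions=200, min_rate=0.005):
    """Return clusters that rank well but convert below min_rate."""
    flagged = []
    for s in stats:
        if s.sessions < min_sessions:
            continue  # too little traffic to judge either way
        rate = s.conversions / s.sessions
        if s.avg_position <= max_position and rate < min_rate:
            flagged.append(s.cluster)
    return flagged

# Invented numbers for three hypothetical clusters in one market.
stats = [
    ClusterStats("crm-definitions", avg_position=1.8, sessions=4000, conversions=2),
    ClusterStats("crm-pricing", avg_position=3.2, sessions=900, conversions=27),
    ClusterStats("crm-templates", avg_position=2.0, sessions=50, conversions=0),
]
suspects = flag_intent_mismatch(stats)
```

With these figures only `crm-definitions` is flagged. A flag is a reason to re-check the SERP for that cluster, not proof of a mismatch on its own.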

Troubleshooting signals: when rankings improve but conversions drop

This is the classic symptom of intent mismatch. I once saw a page rocket to #1 in Spain for a query I thought was transactional. Traffic tripled. Sales dropped. Why? Because the query was actually used by people looking for free templates, not paid software. The fix wasn’t better copy; it was changing the offer to a “free trial” to bridge the gap.

FAQs + summary: what to do next with cross cultural search intent mapping

We’ve covered a lot, from cultural context to SERP analysis. To wrap up, here are the answers to the most frequent questions I hear.

FAQ: Why can’t I just translate my keywords for different markets?

Literal translation misses local phrasing, cultural nuance, and the actual intent behind the search. It often leads to the wrong type of content ranking for the right keywords, resulting in high bounce rates and low conversions.

FAQ: What does AI search demand differently than traditional Google SEO?

AI search demands multi-intent coverage. You need content that provides direct answers, allows for comparative research, and supports synthesis tasks. If your content can’t survive being rewritten by an AI, it won’t be visible.

FAQ: How can I detect cultural differences in search intent?

Start by manually comparing the SERPs. Look at the types of pages that rank (informational vs. transactional), the directness of the titles, and the depth of the content. Validating with a local reviewer is also a high-value step.

FAQ: What role do local authority signals play in cross-cultural SEO?

They are critical. AI systems prioritize sources that are validated within the specific region. Citations from local entities, local authorship, and adherence to local regulations build the trust required to rank.

FAQ: How should I structure content for global audiences?

Use a modular structure: Direct Answer → Context → Detailed Comparison → Localized Proof → FAQs. This satisfies both the human user’s need for detail and the AI’s need for clearly delineated, extractable sections.
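If you want to enforce that modular order in briefs or drafts, a small outline check is enough. This sketch assumes each section of a draft has been tagged with one of the five labels; the label strings and the `check_outline` helper are hypothetical.

```python
# Section labels taken from the modular structure; exact strings are an assumption.
REQUIRED_ORDER = [
    "direct answer",
    "context",
    "detailed comparison",
    "localized proof",
    "faqs",
]

def check_outline(section_labels):
    """Return (missing_sections, out_of_order) for a tagged page outline."""
    labels = [label.strip().lower() for label in section_labels]
    missing = [name for name in REQUIRED_ORDER if name not in labels]
    present = [name for name in labels if name in REQUIRED_ORDER]
    expected = [name for name in REQUIRED_ORDER if name in present]
    return missing, present != expected

# A draft that skips the proof section and puts the comparison too early.
draft_outline = ["Direct Answer", "Detailed Comparison", "Context", "FAQs"]
missing, out_of_order = check_outline(draft_outline)
```

For this draft the check reports that “localized proof” is missing and that the sections are out of order, which is usually enough to send the outline back before anyone writes a word.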

Recap:

- Translation handles words; intent mapping handles meaning.

- Culture dictates query structure (direct vs. indirect).

- AI search requires multi-intent content structures (Exploratory, Comparative, Synthesis).

If I were starting from zero this week, here is exactly what I would do:

- Pick one market: Don’t try to fix the whole world at once.

- Run the 45-minute analysis: Use the template above to map the intent for each of your top 5 topic clusters in that market, budgeting roughly 45 minutes per cluster.

- Audit your top page: Check if the format matches the local SERP. If not, rewrite the brief.

- Add “People Also Ask”: Add a localized FAQ section to your key pages to feed AI summaries.