Introduction: Tracking AI mentions with AI brand monitoring tools (what I’ll help you do)

I recently asked ChatGPT for the “best enterprise CRM for a marketing agency,” and the result was a wake-up call. The usual SEO leaders were missing. Instead, the LLM recommended three specific platforms based on criteria I hadn’t even typed out. If I were the marketing lead for one of those excluded CRMs, I would be panicking right now.

The reality is simple: I can’t optimize what I can’t measure. As search behavior shifts from typing keywords into Google to asking complex questions in ChatGPT, Claude, and Gemini, brand visibility is no longer just about rankings. It is about being the recommended answer.

For intermediate marketers and SEOs, this creates a massive blind spot. You suspect AI is influencing your pipeline and reputation, but you lack the data to prove it. In this guide, I will walk you through the emerging landscape of AI brand monitoring tools. I’ll explain how they work, compare the top vendors based on facts rather than hype, and give you a repeatable workflow to start tracking your AI share of voice this week.

We are seeing data suggesting that up to 60% of searches now end without a website click, and AI-generated responses account for a rapidly growing share of interactions. If you aren’t monitoring this, you aren’t just losing traffic; you’re losing the narrative.

Quick definitions: AI visibility, LLM mentions, and GEO (in plain English)

Before we dive into the tools, let’s agree on the language. The industry is moving fast, and acronyms are getting thrown around loosely. Here is how I define the essentials for my clients:

- AI Visibility (or Share of Voice): This measures how often your brand appears in AI-generated responses for relevant prompts compared to your competitors. It’s not about a position on a page; it’s about frequency of recommendation.

- Generative Engine Optimization (GEO): Think of this as the successor to SEO. While SEO optimizes for a search engine’s index, GEO optimizes content to be cited and synthesized by Large Language Models (LLMs).

- Prompt-Level Tracking: The practice of monitoring specific questions (e.g., “who are competitors to Salesforce?”) rather than just keywords.

- Citations/Sources: The URLs that an LLM links to as evidence for its answer. In the world of AI, a citation is the new backlink.

If I had to use an analogy: Traditional SEO measures who gets the best billboard on the highway. AI visibility measures who the concierge at the hotel recommends when a guest asks for a good restaurant.

Why AI brand monitoring tools are necessary now (and not optional)

I often hear marketers ask, “Can’t I just manually check ChatGPT once a week?” The short answer is no. LLMs are non-deterministic: the same prompt can return a different answer on every run, and responses also shift with user history, location, and slight variations in phrasing. A single screenshot captures one sample, not the pattern, so relying on it is dangerous.

Here is why dedicated monitoring is becoming a table-stakes requirement for US businesses:

- The Zero-Click Reality: With up to 60% of searches potentially ending without a click, users are getting their answers directly on the result page or chat interface. If you aren’t in the answer, you don’t exist.

- Brand Risk and Hallucinations: I have seen instances where an AI confidently stated a product was discontinued when it wasn’t. Without monitoring, that misinformation can reach millions of users unchecked.

- Competitive Conquesting: If a user asks for “alternatives to [Your Brand],” and the AI provides a compelling pitch for your competitor, you have lost the deal before they ever visited your site.

- Compliance Exposure: For regulated industries like finance or healthcare, an AI hallucinating your terms of service or pricing isn’t just a marketing problem; it’s a legal one.

If you are in a high-stakes vertical, have widely distributed products, or rely on “best of” lists for traffic, you need to start monitoring this month. The cost of ignorance is simply getting too high.

What to monitor: models, prompts, sentiment, citations, and share-of-voice

When I set up a dashboard, I don’t try to boil the ocean. I focus on the metrics that actually map to revenue or reputation. It is easy to get distracted by vanity metrics, so here is a practical breakdown of what actually matters.

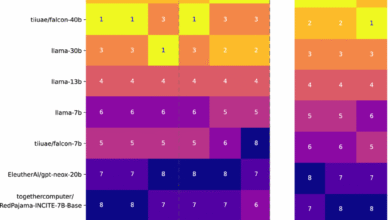

First, prioritize the models your audience uses. For B2B, ChatGPT, Microsoft Copilot, and Perplexity are usually critical. For B2C, Google’s AI Overviews (formerly SGE) and Gemini are often the heavy hitters.

Next, you need to categorize your prompts. I usually split them into three buckets:

| Prompt Category | Why Monitor This? | Example (Ecommerce) | Example (B2B) |
|---|---|---|---|
| Brand Navigational | Check for factual accuracy and sentiment. | “Nike Pegasus reviews” | “Is HubSpot hard to learn?” |
| Commercial Investigation | These drive the highest intent purchases. | “best running shoes under $150” | “best CRM for small business” |
| Competitor Conquesting | See who is poaching your potential leads. | “running shoes for flat feet similar to Brooks” | “cheaper alternatives to Salesforce” |

The Data Points That Matter:

- Share of Voice: Across repeated runs of your tracked prompts, in what share of responses does your brand appear, and how does that compare with your competitors?

- Sentiment: Is the AI recommending you with enthusiasm, or with caveats like “however, some users report bugs”?

- Citation Sources: Which domains are fueling the AI’s answer, and how often do they show up? This tells you where you need to earn press coverage next.
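To make the share-of-voice idea concrete, here is a minimal Python sketch of how I think about the calculation. The function name `share_of_voice`, the sample responses, and the brand list are all hypothetical, and simple substring matching is an assumption on my part; commercial tools almost certainly use more careful entity matching.

```python
from collections import Counter


def share_of_voice(responses, brands):
    """Return, per brand, the fraction of responses that mention it.

    Matching is a plain case-insensitive substring check, which is an
    illustrative simplification (it would miss misspellings and could
    match a brand name inside a longer word).
    """
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand] += 1
    total = len(responses)
    return {b: (counts[b] / total if total else 0.0) for b in brands}


# Hypothetical sampled answers to the prompt "best CRM for small business".
sample_responses = [
    "For small teams, HubSpot and Zoho CRM are strong picks.",
    "Salesforce is powerful, but HubSpot is easier to learn.",
    "Consider Pipedrive or Zoho CRM for a tight budget.",
    "HubSpot, Salesforce, and Pipedrive all offer free trials.",
]

sov = share_of_voice(
    sample_responses, ["HubSpot", "Salesforce", "Zoho CRM", "Pipedrive"]
)
for brand, rate in sov.items():
    print(f"{brand}: {rate:.0%}")
```

In this toy sample, HubSpot appears in three of four responses (75%) and the other three brands in two of four (50%). Four responses is far too few to act on; the point is only to show that share of voice is a frequency across many sampled answers, not a rank on a page.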

How AI brand monitoring tools work (and what data I trust)

This is where teams often get tripped up. Understanding how these tools get their data is critical to trusting it. Unlike Google Search Console, which gives you direct data from the source, AI monitoring tools rely on sampling.

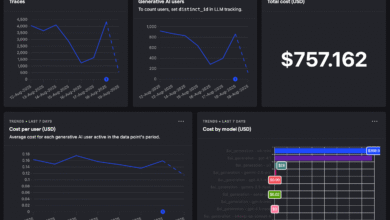

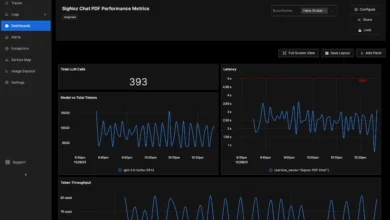

Here is the mechanism: A tool like Evertune AI or Profound creates a “virtual agent.” This agent queries the LLM (e.g., ChatGPT) using your specific prompts. Because LLMs vary their answers, good tools will run the same prompt multiple times, sometimes dozens or hundreds of times, to establish a statistical probability.

For example, Evertune AI processes over one million AI responses per brand monthly to determine visibility trends. This high volume is what smooths out the noise and reveals the signal. If a tool only checks a prompt once a week, I frankly wouldn’t trust the data for high-stakes decisions.
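To show why sample size matters so much, here is a rough Python sketch that puts a margin of error around an observed mention rate. I am using the Wilson score interval, a standard statistical formula; this is my own illustration, not a description of how any vendor computes its numbers. The function name `wilson_interval` and the run counts are hypothetical.

```python
import math


def wilson_interval(mentions, runs, z=1.96):
    """Approximate 95% Wilson score interval for a mention rate.

    mentions: number of sampled responses that mention the brand
    runs:     total number of sampled responses for the prompt
    z:        1.96 corresponds to roughly 95% confidence
    """
    if runs == 0:
        return (0.0, 1.0)  # no data, so the rate could be anything
    p = mentions / runs
    denom = 1 + z**2 / runs
    center = (p + z**2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return (max(0.0, center - half), min(1.0, center + half))


# Same observed rate (30%), very different certainty.
small = wilson_interval(3, 10)      # 10 runs of the prompt
large = wilson_interval(300, 1000)  # 1,000 runs of the prompt

print(f"10 runs:    {small[0]:.0%} to {small[1]:.0%}")
print(f"1,000 runs: {large[0]:.0%} to {large[1]:.0%}")
```

With 10 runs, a 30% mention rate is compatible with a true rate anywhere from roughly 11% to 60%. With 1,000 runs, the range tightens to roughly 27% to 33%. That gap is the difference between a guess and a trendline.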

My Data Quality Checklist:

- Reproducibility: Can I run the same prompt set weekly and compare apples to apples?

- Scale: Does the tool sample enough times to account for AI “temperature” (randomness)?

- Context: Does the tool account for location? An answer in New York might differ from one in London.

- Citation Transparency: Does it just tell me I was mentioned, or does it tell me why (i.e., which URL the AI read)?

Best AI brand monitoring tools to track your brand in LLMs (comparison + who they’re for)

The market is flooding with new entrants. I’ve analyzed the top players—from established SEO giants to agile startups—to help you build your shortlist. Note that pricing and features change rapidly in this space.

| Tool | Best For | Key Models | Standout Feature |
|---|---|---|---|
| Evertune AI | Statistical Accuracy | ChatGPT, Gemini, Claude | 1M+ monthly response processing |
| Profound | Broad Coverage | 10 Engines (Perplexity, Grok, etc.) | Tracks across 10 major engines |
| Ranketta | Ecommerce/Product | ChatGPT, Gemini, Perplexity | Source analysis & product visibility |
| Otterly.ai | Competitive Benchmarking | ChatGPT, Perplexity | Semrush partnership integration |
| Brand24 (Chatbeat) | Social + AI Hybrid | GPT-4, Gemini, Claude | Massive historical data (25B mentions) |
| Semrush AI Visibility | SEO Teams | AI Overviews (SGE) | Integration with existing SEO workflow |

Tool Mini-Reviews

Evertune AI

Best for data-heavy teams who need statistical significance. Their approach of repeated querying (over a million responses processed monthly) makes their trendlines highly reliable. The tradeoff is that this level of depth can be overkill for small local businesses.

Profound

Founded in August 2024, Profound is excellent if your audience is fragmented. They track mentions across ten major AI engines, including niche ones like Grok and DeepSeek. It gives a fantastic holistic view, though the dashboard can get busy due to the sheer volume of data.

Ranketta

If you are in ecommerce, pay attention to Ranketta. Launched in 2025 with €1M in pre-seed funding, they focus heavily on product-level visibility and citation sources. It’s invaluable for seeing which product pages are actually feeding the AI answers.

Otterly.ai

Emerging from stealth in late 2024 and partnering with Semrush, Otterly is great for competitive benchmarking. It’s gained traction fast (1,000+ users) because it simplifies the “us vs. them” view. If you need a slide for your CMO showing you beating a competitor, this is a strong choice.

Brand24 (Chatbeat)

Brand24 is a veteran in social listening, and their Chatbeat tool applies that muscle to LLMs. With over 100,000 brands monitored, they have a massive dataset. It’s ideal if you want to track social mentions and AI mentions in a single ecosystem.

Semrush AI Visibility & Others

For teams already living in Semrush, their AI Visibility Toolkit is the path of least resistance. It integrates well, particularly for tracking Google’s AI Overviews. Other notables include Peec AI (great for near real-time alerts), Scrunch (good persona-based filters), and Riff Analytics or Parse.gl for lower-cost, credit-based audits suitable for freelancers.

How I choose AI brand monitoring tools: a simple decision framework

Choosing a tool can feel overwhelming. When I evaluate a platform, I look for alignment with the team’s maturity level rather than just feature count. Here is a decision matrix to help you decide:

- For Startups / Solopreneurs: Look for credit-based pricing (pay-per-query). You likely need to run a monthly audit rather than continuous monitoring. Recommendation: Riff Analytics or Parse.gl.

- For Mid-Market B2B: You need competitive benchmarking and share-of-voice reporting to justify budget. Recommendation: Otterly.ai or Brand24.

- For Enterprise / Ecommerce: You need statistical significance, API access, and deep product-level tracking. Recommendation: Evertune AI, Profound, or Ranketta.

Questions to ask in the demo:

- How often do you refresh the data? (Real-time vs. Weekly)

- Can I export the raw response text, or just the charts?

- How do you handle personalization bias in your sampling?

- Does this integrate with my current AI article generator workflow to help me produce content that fixes the gaps I find?

Implementation playbook: set up monitoring, reporting, and content fixes in 7 steps

Buying the tool is the easy part. Building the workflow is where the value is. If I only had two hours a week to manage this, here is exactly what I would do.

- Define Your Entities: List every variation of your brand name, key product names, and executive names. Don’t forget common misspellings.

- Build Your Prompt Library: Create a spreadsheet with 20-50 high-priority prompts. Mix brand-specific questions (“is X reliable?”) with generic category questions (“best software for X”).

- Set Baselines: Run your initial scan. Don’t panic at the results. This is your “Day Zero.”

- Configure Alerts: Set up notifications for negative sentiment or brand omission. You don’t need an alert for every mention, just the problems.

- Diagnose the Citations: Look at where the AI is getting its info. Is it an old press release? A competitor’s comparison page? A Reddit thread?

- Create an Action Backlog: This is crucial. If the AI says your pricing is opaque, update your pricing page schema. If it recommends a competitor because they have a “vs” page, create a better one. Tools like the SEO content generator from Kalema can help you spin up these authoritative pages and FAQs quickly once you identify the gaps.

- Measure and Iterate: Review trends monthly. Are you appearing in more answers? Has the sentiment shifted from neutral to positive?
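If you want to prototype part of this workflow before buying a tool, the entity, baseline, and alert steps can be sketched in a few lines of Python. Everything here is hypothetical: the brand variants, the prompt names, the rates, and the 10-point drop threshold are placeholders I made up for illustration, and a real setup would pull responses from whatever tool or export you use.

```python
import re

# Hypothetical brand variants, including a common misspelling.
BRAND_VARIANTS = ["HubSpot", "Hub Spot", "Hubspot CRM", "Hubspott"]


def build_matcher(variants):
    """Compile one case-insensitive, whole-word pattern for all variants."""
    # Longest variants first so the most specific spelling is tried first.
    escaped = sorted((re.escape(v) for v in variants), key=len, reverse=True)
    return re.compile(r"\b(?:" + "|".join(escaped) + r")\b", re.IGNORECASE)


def mention_rate(responses, matcher):
    """Fraction of responses that mention any brand variant."""
    if not responses:
        return 0.0
    hits = sum(1 for text in responses if matcher.search(text))
    return hits / len(responses)


def find_alerts(baseline, current, drop_threshold=0.10):
    """Flag prompts where the brand vanished or its rate fell sharply.

    baseline, current: dicts mapping prompt text to mention rate (0.0-1.0).
    drop_threshold:    assumed cutoff; tune it to your own sample sizes.
    """
    alerts = []
    for prompt, base_rate in baseline.items():
        now = current.get(prompt, 0.0)
        if now == 0.0 and base_rate > 0.0:
            alerts.append((prompt, "omitted"))
        elif base_rate - now > drop_threshold:
            alerts.append((prompt, "dropped"))
    return alerts


matcher = build_matcher(BRAND_VARIANTS)
rate = mention_rate(
    ["I like hubspot.", "Try Salesforce.", "Hub Spot is fine.", "Hubspotter"],
    matcher,
)

# Hypothetical "Day Zero" rates versus this month's rates.
baseline = {"best CRM": 0.60, "alternatives to Salesforce": 0.40, "is X reliable": 0.90}
current = {"best CRM": 0.45, "alternatives to Salesforce": 0.0, "is X reliable": 0.88}
alerts = find_alerts(baseline, current)
print(rate, alerts)
```

The design choice worth copying is that alerts fire only on omissions and meaningful drops, not on every mention. That keeps the weekly review short enough to fit in the two hours I budgeted above.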

Common mistakes, FAQs, and next actions (so I don’t waste time)

I’ve seen smart teams fail at this because they treat AI monitoring like a vanity project. Here are the traps to avoid:

- Mistake 1: Ignoring Non-Brand Prompts. Only tracking your own name is vanity. The money is in the category searches (e.g., “best running shoes”).

- Mistake 2: Over-reacting to Noise. LLMs hallucinate. If one response is weird but 99 are correct, don’t rewrite your whole website. Look for trends, not glitches.

- Mistake 3: No Owner. If no one is responsible for fixing the content gaps the tool finds, the subscription is a waste of money.
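For Mistake 2, a quick statistical check can help separate a trend from a glitch. Below is a minimal Python sketch using a two-proportion z-test, a textbook method I am borrowing for illustration; I am not claiming any monitoring tool works this way. The function names and the counts are hypothetical, and the 1.96 cutoff assumes you are comfortable with roughly 95% confidence.

```python
import math


def two_proportion_z(mentions_a, runs_a, mentions_b, runs_b):
    """z-score for the change in rate from period A to period B."""
    p_a = mentions_a / runs_a
    p_b = mentions_b / runs_b
    pooled = (mentions_a + mentions_b) / (runs_a + runs_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / runs_a + 1 / runs_b))
    if se == 0:
        return 0.0  # identical all-or-nothing rates, so no measurable change
    return (p_b - p_a) / se


def is_real_shift(mentions_a, runs_a, mentions_b, runs_b, z_crit=1.96):
    """True when the change is unlikely to be sampling noise alone."""
    z = two_proportion_z(mentions_a, runs_a, mentions_b, runs_b)
    return abs(z) >= z_crit


# One odd answer out of 100: 99 correct last month, 100 correct this month.
glitch = is_real_shift(99, 100, 100, 100)

# Mention rate fell from 60 of 100 runs to 40 of 100 runs.
trend = is_real_shift(60, 100, 40, 100)

print(f"glitch is a real shift: {glitch}")
print(f"trend is a real shift:  {trend}")
```

In the first case the check says the difference is within noise, so I would leave the website alone. In the second, a 20-point drop across 100 runs each is large enough that I would open a ticket and start diagnosing citations.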

Frequently Asked Questions

Why is this different from social listening?

Social listening tracks what humans say about you. AI monitoring tracks what machines synthesize about you. The latter is often viewed as more “objective” by users, making it higher stakes.

Can I just use Google Search Console?

No. GSC tracks clicks from Google’s search engine. It does not track what ChatGPT or Claude is saying to a user in a chat interface.

Can these tools detect misinformation?

Yes, most have sentiment analysis and can flag when a response contains factually incorrect information about your pricing or features.

Your Next Moves

If I were starting today, I wouldn’t overthink it. Pick 20 core prompts that drive your business. Choose one tool from the list above that fits your budget. Run a 4-week baseline. The goal isn’t to control the AI—it’s to understand it well enough to be part of the conversation.