Winning the AI Box: The Best Google AI Tools for AI Overview Success

It is 2026, and if you are in SEO or content marketing, you have likely noticed a massive shift. The goalposts have moved from ‘ranking #1’ to ‘winning the box’: earning visibility and citations in Google’s AI Overviews. But here is the frustration I hear constantly: ‘I published helpful content, but it never shows up in AI Overviews, and I don’t know why.’

The landscape is crowded. We have Gemini 3 variants (Pro, Flash, Deep Think), agentic features in Chrome like Auto Browse, and a suite of experimental Labs tools. It is easy to get paralyzed by tool overload. You don’t need to use every tool Google releases to succeed. You just need to know which ones help you structure data effectively and which ones speed up your workflow.

In this guide, I am going to map out the current Google AI ecosystem—covering Search, Chrome, Workspace, and developer tools—and explain what actually impacts AI Overview visibility. By the end, you will have a beginner-friendly workflow to pick the best Google AI tools and execute a content strategy that makes your site easy to cite.

What “Google AI Overview success” actually means (and how AI Overviews choose what to cite)

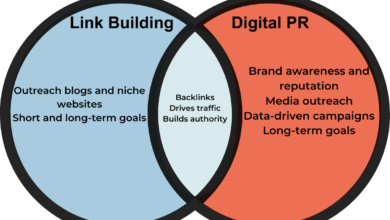

Before we look at the tools, we need to agree on what “winning” looks like. AI Overviews (AIOs) do not rank you in a linear list. They synthesize an answer and cite sources that provide specific data points to support that answer. Success means being one of those citations.

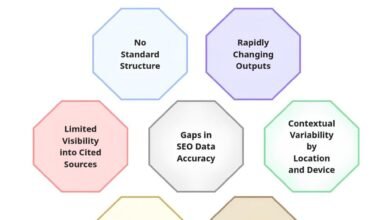

With the rollout of conversational follow-ups in Search and Gmail, the engine is looking for content that can handle depth. It wants to know if you answer the primary question and the likely follow-up questions. Here is the reality of what you can and cannot control:

- What you can control: Your content structure (headings, lists), information clarity, entity alignment (staying on topic), and technical health (schema, crawlability).

- What you can’t control: The exact probability of an AI Overview appearing for a query (this fluctuates daily) or the specific weight Google assigns to certain authority signals at any given moment.

For example, if a user searches “best invoicing software for freelancers,” the AI Overview might generate a comparison table. If your article has a messy paragraph about invoicing, you won’t get cited. If you have a clean HTML table with “Price,” “Features,” and “Pros/Cons,” you make it easy for the model to extract your data. That is the game.
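If you build comparison pages by hand, a small script keeps tables consistent from page to page. Here is a minimal Python sketch that renders rows as a plain, semantic HTML table using only the standard library. The `comparison_table` helper, the column names, and the sample rows are hypothetical placeholders, not real product data. No script can guarantee that Google will extract a particular table.

```python
from html import escape


def comparison_table(rows: list[dict[str, str]], columns: list[str]) -> str:
    """Render rows as a plain HTML table with a header row (hypothetical helper).

    Every cell is HTML-escaped; missing values render as empty cells.
    """
    header = "".join(f'<th scope="col">{escape(col)}</th>' for col in columns)
    body = "\n".join(
        "    <tr>" + "".join(f"<td>{escape(row.get(col, ''))}</td>" for col in columns) + "</tr>"
        for row in rows
    )
    return (
        "<table>\n"
        f"  <thead><tr>{header}</tr></thead>\n"
        f"  <tbody>\n{body}\n  </tbody>\n"
        "</table>"
    )


if __name__ == "__main__":
    # Hypothetical placeholder data; replace with verified figures from your claims log.
    columns = ["Tool", "Price", "Features", "Pros/Cons"]
    rows = [
        {"Tool": "Tool A", "Price": "$9/mo", "Features": "Invoices & reminders", "Pros/Cons": "Simple / no API"},
        {"Tool": "Tool B", "Price": "$15/mo", "Features": "Time tracking", "Pros/Cons": "Flexible / steeper learning curve"},
    ]
    print(comparison_table(rows, columns))
```

I kept the markup deliberately plain, with real `<th>` header cells and no layout tricks, because a simple structure is the easiest for any parser to read.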

The beginner’s mental model: from “ranking” to “being quotable”

When I audit pages that get cited in 2026 SERPs, the answer is usually in the first 2–3 sentences under a descriptive H2, followed by a proof block. It is rarely buried in the conclusion.

We need to shift from trying to “rank” for a keyword to becoming “quotable.” Quotable content consists of clear definitions, direct answers, scannable sections, and verifiable facts. It adheres to high editorial standards—citing sources, using dates, and being transparent about who wrote the content.

Key signals you should design for (without guessing Google’s secret sauce)

You don’t need to guess the algorithm. Focus on these signals, which I use as a field checklist for every audit:

- Search Intent Match: Does the page actually answer the specific problem?

- Topical Completeness: Do you cover the sub-questions users ask in follow-ups?

- Heading Structure: Do your H2s and H3s map directly to user questions?

- Schema Markup: Are you explicitly telling Google “this is a review” or “this is an FAQ page”?

- Internal Linking: Do you connect this page to other relevant topical clusters on your site?
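To make the Schema Markup signal concrete, here is a minimal Python sketch that builds FAQPage JSON-LD from question/answer pairs. It uses the schema.org `FAQPage`, `Question`, and `Answer` types. The `faq_jsonld` helper and the sample text are hypothetical. Two caveats apply. First, validate the output with Google’s Rich Results Test before shipping. Second, since 2023 Google has shown FAQ rich results only for a limited set of well-known government and health sites, so treat this markup as a description of the page rather than a guaranteed SERP feature.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a <script> tag containing schema.org FAQPage JSON-LD (hypothetical helper)."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    payload = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so answer text can never close the <script> element early;
    # "<\/" is still valid JSON and decodes back to "</".
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    print(faq_jsonld([
        ("What is an AI Overview?", "A generated summary shown at the top of some Google results."),
    ]))
```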

How I evaluate the best Google AI tools for business use (a simple selection framework)

With dozens of tools available, how do you choose? I evaluate tools based on utility and risk. If a tool helps me research faster or structure content better, it’s in. If it introduces hallucination risks or privacy concerns for client data, it’s out.

Here is the framework I use to decide what enters our SOPs:

| Tool Category | Best For | Inputs | Outputs | Access/Tier | Risk Notes |
|---|---|---|---|---|---|
| Gemini 3 (Pro/Flash) | Research & Drafting | Prompts, Docs | Structured Text | Pro/Ultra | Check facts manually |
| Auto Browse (Chrome) | Competitor Analysis | URLs, Instructions | Summaries, Data | Google AI Pro/Ultra (US) | Don’t share credentials |
| Flow (Veo 3.1) | Video Assets | Scripts, Images | Short Clips | Workspace Business | Verify brand safety |
| Antigravity IDE | Tech SEO/Code | Code requests | Scripts/Schema | Preview/Beta | Review code before deploying |

The 5 criteria that matter most (for beginners)

- Clarity of Output: Does it give me a straight answer or fluff?

- Workflow Integration: Does it work where I work (Docs, Chrome, Gmail)?

- Reliability: Can I trust it for factual tasks, or do I need to triple-check every number?

- Collaboration: Can my team see what I’m doing?

- Governance/Permissions: Can I control who accesses the data?

Suggested visual: a one-page decision tree for picking tools

[Callout: Imagine a flowchart here. If your bottleneck is research → use Search/Gemini. If it is in-browser execution → Auto Browse. If it is content production → your drafting workflow. If it is video → Flow.]

Here is how I’d choose in under 2 minutes: If I need to understand a topic deeply, I go to Gemini 3 Deep Think. If I need to see how competitors are structuring their pages, I use Auto Browse in Chrome. If I need to generate the actual article structure, I move to my drafting tools.

My shortlist of the best Google AI tools to win the AI Box (2026 ecosystem map)

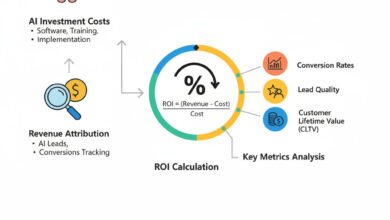

It is important to look at the whole ecosystem. While OpenAI currently leads in enterprise adoption (approximately 36.8% vs. Google’s 4.3% in December 2025), Google’s tools are uniquely positioned for SEO because they are built into the search engine itself. Here is my shortlist.

If you only pick 3 tools, start here:

1. Gemini 3 Pro (for reasoning and outlining)

2. Auto Browse (for research efficiency)

3. Google Search Console (for monitoring results—old school, but essential)

Foundation: Gemini 3 (Pro, Flash, Deep Think) — what it changes for everyday workflows

Gemini 3 variants (Pro, Flash, and Deep Think) are the engine under the hood. For business owners, Deep Think is the variant to watch. Instead of answering immediately, it is designed to reason through complex, multi-step problems. In my day-to-day work, that shows up as fewer missed steps in outlines and tighter logical flow in arguments. Gemini 3 also accepts multimodal inputs (text, images, audio), which is useful for analyzing competitor charts.

Search + Gmail: AI Overviews, follow-up conversations, and AI Inbox

Search has changed. The new conversational follow-ups mean users can ask, “What about for small businesses?” after an initial query. This shifts our strategy to modular content. We need to create “answer blocks” for these potential follow-ups.

In Gmail, the AI Inbox and AI Overviews help streamline operations. I personally use this to turn messy customer email threads into FAQs. If five customers ask the same question in a long thread, I let the AI summarize it, and that summary becomes a new H2 on our service page.

Chrome: Auto Browse + Nano Banana for agentic browsing and in-browser image work

Auto Browse is available to Google AI Pro and Ultra subscribers in the US. It enables agentic browsing: the browser can carry out multi-step tasks like “Go to these 5 competitor sites and list their pricing tiers.” This is a massive time-saver for SEO QA.

Safety Note: I use Auto Browse for public data gathering. I do not use it for tasks involving my banking login or sensitive client backend access without direct supervision.

Nano Banana offers lightweight image editing directly in the browser, which is great for quick screenshot markups.

Workspace + Enterprise: Gemini Enterprise and Flow (Veo 3.1) for business content ops

For those in the Workspace ecosystem, Gemini Enterprise brings these capabilities into Docs and Sheets. The more exciting addition is Flow, powered by Veo 3.1, which generates short, high-quality video clips (typically up to 8 seconds each) from prompts.

These short clips won’t replace a full video production team, but they work well for “About” sections or quick social teasers embedded in your articles, where they can increase time on page.

Developers: Antigravity IDE (agent-first coding) and when it’s worth using

Antigravity IDE is an agent-first coding environment. If you aren’t a developer, you might gloss over this, but for technical SEO, it is useful. You can ask it to “Write a Python script to scrape the H2s from this list of URLs” or “Generate JSON-LD schema for a local business.”

However, if you are not comfortable reading code, be careful. Always verify the output. If you aren’t shipping code weekly, you may not need this yet—and that’s okay.
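For reference, here is roughly what a reviewed answer to the “scrape the H2s” prompt could look like. It is a minimal Python sketch that uses only the standard library (`urllib.request` and `html.parser`). It is not actual Antigravity output, and the function names and User-Agent string are hypothetical. It does not handle JavaScript-rendered pages, robots.txt, or rate limiting. Those are exactly the kinds of gaps you should catch when reviewing agent-written code.

```python
from html.parser import HTMLParser
from urllib.request import Request, urlopen


class H2Collector(HTMLParser):
    """Collect the visible text of every <h2> element in a document."""

    def __init__(self) -> None:
        super().__init__()
        self.in_h2 = False
        self.parts: list[str] = []
        self.headings: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "h2":
            self.in_h2, self.parts = True, []

    def handle_data(self, data):
        if self.in_h2:
            self.parts.append(data)

    def handle_endtag(self, tag):
        if tag == "h2" and self.in_h2:
            # Collapse whitespace so nested tags and line breaks read cleanly.
            self.headings.append(" ".join("".join(self.parts).split()))
            self.in_h2 = False


def extract_h2s(html: str) -> list[str]:
    parser = H2Collector()
    parser.feed(html)
    parser.close()
    return parser.headings


def fetch_h2s(url: str, timeout: float = 10.0) -> list[str]:
    # Hypothetical User-Agent; identify your crawler honestly in real use.
    req = Request(url, headers={"User-Agent": "h2-audit-sketch/0.1"})
    with urlopen(req, timeout=timeout) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return extract_h2s(resp.read().decode(charset, errors="replace"))


if __name__ == "__main__":
    import sys

    for url in sys.argv[1:]:
        try:
            for heading in fetch_h2s(url):
                print(f"{url}\t{heading}")
        except OSError as err:  # URLError and HTTPError are OSError subclasses
            print(f"{url}\tERROR: {err}", file=sys.stderr)
```

Pass the competitor URLs as command-line arguments. The output is one tab-separated line per heading, which pastes cleanly into Sheets.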

Labs to watch: Stitch, Opal, Pomelli, Gemini Canvas (future workflow bets)

Google Labs creates experimental tools. Stitch designs UIs from prompts, Opal builds no-code AI mini-apps from natural-language descriptions, Pomelli generates on-brand marketing content from your website, and Gemini Canvas is an interactive workspace for drafting and editing documents and code. I test Labs tools in a sandbox project first. I never rely on them for core revenue pages because Google has a history of sunsetting experiments. Treat them as future workflow bets, not current infrastructure.

A step-by-step workflow to earn AI Overview citations using Google AI (and where Kalema fits)

Now that we know the tools, let’s put them into a workflow. This is the exact process I use to build pages that get cited. It connects research to publishing. If you want to scale this process with a tool designed specifically for producing intent-matched, high-ranking content, you might consider using an SEO content generator like Kalema to handle the heavy lifting of drafting while maintaining editorial control.

Step 1: Start with intent and a question map (so AI Overviews can ‘see’ your coverage)

Don’t just pick a keyword. Map the questions. I use Gemini to ask, “What are the top 10 follow-up questions users ask about [Topic]?”

Question Map Template:

* Primary Query: Best CRM for Small Business

* Sub-question 1: What is the cheapest CRM?

* Sub-question 2: Which CRM integrates with Gmail?

* Sub-question 3: Is Salesforce too complex for small teams?
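If you keep question maps in code or in a spreadsheet export, a tiny data structure can turn them into an outline where every sub-question becomes an H2 with an answer-first slot. This is a minimal Python sketch. The `QuestionMap` class and the outline format are hypothetical conventions. The de-duplication step assumes that Gemini’s list of follow-ups may repeat a question with different capitalization.

```python
from dataclasses import dataclass, field


@dataclass
class QuestionMap:
    """Hypothetical container for one topic's question map."""

    primary_query: str
    sub_questions: list[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Drop blank entries and case-insensitive duplicates, keeping the first wording.
        seen: set[str] = set()
        unique = []
        for question in self.sub_questions:
            key = " ".join(question.lower().split())
            if key and key not in seen:
                seen.add(key)
                unique.append(question.strip())
        self.sub_questions = unique

    def to_outline(self) -> str:
        """One H2 per sub-question, each with an answer-first placeholder."""
        lines = [f"H1: {self.primary_query}"]
        for question in self.sub_questions:
            lines.append(f"  H2: {question}")
            lines.append("    Answer: 2-3 sentences that answer the H2 directly")
        return "\n".join(lines)


if __name__ == "__main__":
    qmap = QuestionMap(
        "Best CRM for Small Business",
        [
            "What is the cheapest CRM?",
            "Which CRM integrates with Gmail?",
            "Is Salesforce too complex for small teams?",
            "what is the cheapest CRM?",  # duplicate from a second prompt
        ],
    )
    print(qmap.to_outline())
```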

Step 2: Build an editorial brief (accuracy, sources, and what ‘good’ looks like)

Before writing, I create a brief. This is where I establish my “Claims Log.” I list every specific fact (pricing, tiers, dates) that needs verification and flag each one as unverified in the brief, so the writer knows they cannot just guess. In my experience, accuracy is the most important trust signal for earning AI Overview citations.
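A claims log can be as simple as a spreadsheet. If you want a hard publishing gate, though, here is a minimal Python sketch. The `Claim` fields and the 90-day freshness window are my own assumptions (the window loosely matches a quarterly update cycle). The sample claims are placeholders, not real facts.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Claim:
    """One checkable fact from the brief (hypothetical schema)."""

    text: str
    source_url: Optional[str] = None
    verified_on: Optional[date] = None


def blocking_claims(claims: list[Claim], max_age_days: int = 90,
                    today: Optional[date] = None) -> list[Claim]:
    """Return claims that should block publishing: unsourced, unverified, or stale."""
    today = today or date.today()
    blocked = []
    for claim in claims:
        if claim.source_url is None or claim.verified_on is None:
            blocked.append(claim)
        elif (today - claim.verified_on).days > max_age_days:
            blocked.append(claim)
    return blocked


if __name__ == "__main__":
    log = [
        Claim("Plan X costs $10/mo", "https://example.com/pricing", date.today()),
        Claim("Tool Y added a Gmail integration"),  # no source yet
    ]
    for claim in blocking_claims(log):
        print("BLOCKED:", claim.text)
```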

Step 3: Draft for “answer-first, depth-second” (structure that’s easy to quote)

When drafting, structure is everything. I recommend the “Answer-First” format for every section.

Micro-Template:

1. The Answer: 2–3 sentences directly answering the H2.

2. Why it matters: Context or nuance.

3. Proof/Example: A data point or scenario.

4. Pitfall: What to avoid.

This structure allows Google to easily extract the “Answer” portion for the snippet.
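To check drafts against this template at scale, a crude automated pass before human review helps. The Python sketch below flags any H2 whose first following paragraph is missing or runs longer than three sentences. The class and function names are hypothetical. The sentence counter is a naive punctuation heuristic that miscounts abbreviations like “e.g.”, and a list placed directly under an H2 counts as a missing paragraph. Treat the output as hints, not verdicts.

```python
import re
from html.parser import HTMLParser
from typing import Optional

# Naive sentence boundary: terminal punctuation followed by whitespace or end of text.
SENTENCE_END = re.compile(r"[.!?]+(?=\s|$)")


class SectionOpeners(HTMLParser):
    """Pair each <h2> with the text of the first <p> that follows it (hypothetical helper)."""

    def __init__(self) -> None:
        super().__init__()
        self.sections: list[dict] = []  # {"heading": str, "opener": Optional[str]}
        self.capture: Optional[str] = None  # "h2" or "p" while collecting text
        self.parts: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "h2":
            self.capture, self.parts = "h2", []
        elif tag == "p" and self.sections and self.sections[-1]["opener"] is None:
            self.capture, self.parts = "p", []

    def handle_data(self, data):
        if self.capture:
            self.parts.append(data)

    def handle_endtag(self, tag):
        if tag != self.capture:
            return  # ignore inline tags such as <em> or <a>
        text = " ".join("".join(self.parts).split())
        if tag == "h2":
            self.sections.append({"heading": text, "opener": None})
        else:
            self.sections[-1]["opener"] = text
        self.capture = None


def audit_answer_first(html: str, max_sentences: int = 3) -> list[str]:
    parser = SectionOpeners()
    parser.feed(html)
    parser.close()
    problems = []
    for section in parser.sections:
        opener = section["opener"]
        if not opener:
            problems.append(f"{section['heading']!r}: no opening paragraph")
            continue
        count = len(SENTENCE_END.findall(opener))
        if count > max_sentences:
            problems.append(f"{section['heading']!r}: opener has {count} sentences")
    return problems
```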

Step 4: On-page SEO implementation (titles, headings, internal links, schema)

Once the draft is done, I run through this on-page checklist. I aim for titles under 60 characters where possible, but clarity wins over brevity.

- Title Tag: Includes primary keyword + hook.

- Meta Description: Summarizes the answer (for click-throughs).

- Heading Structure: H2s match user questions exactly.

- Schema Markup: FAQ or Article schema applied and validated.

- Internal Links: At least 3 links to related clusters.

- Image Alt Text: Descriptive and relevant.
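The mechanical parts of this checklist can be scripted against a staging preview’s HTML. Below is a minimal Python sketch using the standard library. The names are hypothetical, and the thresholds mirror the checklist. The title limit is a soft target, as noted above. The script only checks that alt text and JSON-LD exist, not that they are descriptive or valid, so you still need a human read and a validator for those.

```python
from html.parser import HTMLParser
from typing import Optional
from urllib.parse import urljoin, urlparse


class OnPageAudit(HTMLParser):
    """Collect the on-page signals from the checklist (hypothetical helper)."""

    def __init__(self, page_url: str) -> None:
        super().__init__()
        self.page_url = page_url
        self.page = urlparse(page_url)
        self.title = ""
        self.in_title = False
        self.meta_description: Optional[str] = None
        self.internal_links: set[str] = set()
        self.images_missing_alt = 0
        self.has_jsonld = False

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "title":
            self.in_title = True
        elif tag == "meta" and (a.get("name") or "").lower() == "description":
            self.meta_description = (a.get("content") or "").strip()
        elif tag == "a" and a.get("href"):
            target = urlparse(urljoin(self.page_url, a["href"]))
            # Count distinct same-host pages, ignoring links back to this page.
            if target.netloc == self.page.netloc and target.path != self.page.path:
                self.internal_links.add(target.path)
        elif tag == "img" and not (a.get("alt") or "").strip():
            self.images_missing_alt += 1
        elif tag == "script" and (a.get("type") or "").lower() == "application/ld+json":
            self.has_jsonld = True

    def handle_endtag(self, tag):
        if tag == "title":
            self.in_title = False

    def handle_data(self, data):
        if self.in_title:
            self.title += data


def check_page(html: str, page_url: str, title_limit: int = 60,
               min_internal_links: int = 3) -> dict[str, bool]:
    audit = OnPageAudit(page_url)
    audit.feed(html)
    audit.close()
    title = " ".join(audit.title.split())
    return {
        f"title present and <= {title_limit} chars": 0 < len(title) <= title_limit,
        "meta description present": bool(audit.meta_description),
        f">= {min_internal_links} internal links": len(audit.internal_links) >= min_internal_links,
        "every image has alt text": audit.images_missing_alt == 0,
        "JSON-LD block present": audit.has_jsonld,
    }
```

Feed it the rendered HTML and the page’s final URL. Any `False` value is a prompt to fix the issue or consciously accept it before you publish.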

Step 5: Publish + monitor (what to watch in Search Console and how to iterate)

After publishing, the work isn’t done. I monitor Google Search Console for “Query Drift”—when a page starts ranking for terms I didn’t anticipate. If that happens, I add a new section to answer those specific queries. I also suggest adding a ‘Last Updated’ date visible on the page. I personally update content quarterly for fast-moving AI topics.

Content production at scale: turning the workflow into repeatable systems (briefs → drafts → publish)

Executing this once is easy. Doing it for 100 articles requires a system. This is where operations break down if you rely solely on manual effort. To fix this, you need Standard Operating Procedures (SOPs) and potentially an AI article generator to standardize the drafting phase.

When scaling, consistency is key. You can use an automated blog generator to handle formatting and uploading, but you must keep human eyes on the QA process.

A simple operating model (even if it’s just me + one editor)

If you are a small team, here is the 20-minute QA routine I’d keep. The writer drafts the content using the Answer-First model. The editor (or you) verifies the “Claims Log” to ensure no hallucinations. Then, an SEO specialist (or you again) checks the internal links and schema.

Suggested table: content pipeline checkpoints and QA gates

| Stage | Output | QA Owner | Pass/Fail Criteria |
|---|---|---|---|
| Briefing | Question Map | Strategist | Does it cover intent? |
| Drafting | Draft Doc | Writer | Is it Answer-First? |
| Review | Verified Text | Editor | Are stats cited? |
| Staging | WP Preview | SEO Lead | Is Schema valid? |

Common mistakes that stop beginners from winning AI Overviews (and how I fix them)

When I see pages not getting cited, it is often because of a few repeatable errors. Here are the top mistakes I see:

- Burying the Answer: Writing 300 words of fluff before answering the H2.

- Vague Headings: Using “Conclusion” instead of “The Verdict on Tool X.”

- No Sources: Making claims without linking to evidence.

- Outdated Info: Listing pricing or features that changed months ago.

- Ignoring Schema: Failing to mark up lists and tables.

If you do nothing else: Fix your headings to be questions, and put the answer immediately below them.

Mistake-to-fix checklist (copy/paste)

- Fix: Move answers to the first sentence of the paragraph.

- Fix: Rewrite H2s to match PAA (People Also Ask) questions.

- Fix: Add a [Source] link to every statistic.

- Fix: Add a “Last Updated” block at the top.
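For the “[Source] link on every statistic” fix, a quick scan catches the obvious misses. This Python sketch flags any `<p>` that contains a percentage or currency amount but no hyperlink. It works at paragraph level, not per statistic. Its definition of a “statistic” is a deliberately narrow assumption, so plain counts and years are not flagged. The names are hypothetical.

```python
import re
from html.parser import HTMLParser

# Treat percentages and currency amounts as "statistics" (a deliberately narrow heuristic).
STAT = re.compile(r"\d+(?:[.,]\d+)?\s?%|[$€£]\s?\d")


class UnsourcedStats(HTMLParser):
    """Collect <p> paragraphs that contain a statistic but no hyperlink (hypothetical helper)."""

    def __init__(self) -> None:
        super().__init__()
        self.in_p = False
        self.has_link = False
        self.parts: list[str] = []
        self.flagged: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "p":
            self.in_p, self.has_link, self.parts = True, False, []
        elif tag == "a" and self.in_p and dict(attrs).get("href"):
            self.has_link = True

    def handle_data(self, data):
        if self.in_p:
            self.parts.append(data)

    def handle_endtag(self, tag):
        if tag == "p" and self.in_p:
            text = " ".join("".join(self.parts).split())
            if STAT.search(text) and not self.has_link:
                self.flagged.append(text)
            self.in_p = False


def unsourced_stats(html: str) -> list[str]:
    parser = UnsourcedStats()
    parser.feed(html)
    parser.close()
    return parser.flagged
```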

FAQs about Google’s AI tools and AI Overviews (quick, accurate answers)

Here is how I would answer the most common questions about the ecosystem right now.

What makes Gemini 3 different from previous Google AI models?

Gemini 3 (including Pro, Flash, and Deep Think) offers significantly enhanced reasoning and multimodal capabilities compared to earlier versions like 1.5 or 2.0. In practice, that means it is better at understanding complex context, processing images and audio simultaneously, and reducing hallucinations. It powers the new reasoning capabilities in search results.

Who can access Auto Browse and AI Overviews in Gmail?

Currently, these features are primarily available to users in the U.S. who subscribe to the Google AI Pro or Ultra tiers. Some features are still in Labs or beta testing ahead of broader rollouts. I check the feature toggle in my settings first before assuming a feature is missing from my account.

What is Antigravity IDE and who benefits from it?

Antigravity IDE is an agent-first coding environment designed to let developers delegate tasks to AI agents. It is best for advanced developers or technical SEOs who want to automate script writing or data analysis. If you aren’t comfortable reviewing code for security, proceed with caution.

How does Flow differ from other video creation tools?

Flow, powered by Veo 3.1, is integrated directly into the Google Workspace ecosystem. It specializes in generating short, high-quality clips (often vertical) from text or image prompts, complete with audio. It is ideal for creating quick product highlights or social assets without leaving your workflow.

Why is Google lagging in enterprise AI adoption?

While OpenAI currently holds a larger share of enterprise adoption (approximately 36.8% vs. 4.3% for Google in late 2025), this often reflects purchasing cycles and legacy integrations rather than tool quality. Google is closing the gap with Gemini Enterprise and deep Workspace integrations that allow for seamless data flow in business environments.

Conclusion: my 3-part recap + next actions to start winning AI Overviews this week

Winning the AI box isn’t about magic; it’s about structure and utility. To recap:

- Shift your mindset: Write to be quoted, not just to rank.

- Use the right tools: Leverage Gemini 3 for reasoning and Auto Browse for research, but verify everything.

- Structure matters most: Use answer-first formatting and schema to make your content easy for AI to digest.

Next Actions:

1. Pick one high-priority topic and build a question map for it.

2. Draft a new article using the answer-first templates provided above.

3. Add the on-page SEO checklist to your publishing routine.

4. Monitor Search Console in two weeks to see if you are capturing new queries.