Introduction: why Perplexity visibility is hard to measure (and what I’ll show you)

Last quarter, I found myself in a frustrating meeting with our VP of Marketing. I was staring at a Google Analytics 4 dashboard that showed our organic traffic was completely flat week-over-week. Yet, our sales team was reporting that three different prospects had mentioned finding us through “that AI search thing”—specifically Perplexity and ChatGPT.

It was a classic measurement gap: our brand was being cited, recommended, and summarized by AI, but because these platforms often provide zero-click answers, our traditional tracking stack (GA4, GSC, Semrush) was completely blind to it. I realized I was optimizing for a game I couldn’t see.

If you are an SEO or Growth Lead facing this “invisible visibility” problem, this article is for you. I’m going to skip the hype and walk you through the practical reality of AEO (Answer Engine Optimization) performance tracking. We will look at what metrics actually drive decisions, how to audit software readiness (since many tools are still in beta), and a step-by-step workflow to track and improve your citations. Success here isn’t about viral hits; it’s about building a defensible reporting system that proves you are owning the conversation, even when the click doesn’t happen.

AEO performance tracking software: what it measures (vs traditional SEO)

To understand the software, you have to understand the shift in objective. Traditional SEO is a game of real estate: you want your blue link to appear as high as possible on a Search Engine Results Page (SERP) so a user clicks it. AEO performance tracking software measures something fundamentally different: citation and synthesis.

In the world of Large Language Models (LLMs), there is no “page 1.” There is usually just one answer. The goal of AEO is to ensure that when a user asks a high-intent question, the AI cites your brand as the source of truth or, better yet, recommends your product as the solution. Because this interaction happens inside the chat interface, traditional tracking pixels never fire. This is why we need specialized software that acts like a “mystery shopper,” querying these AI engines on a repeatable schedule and reporting back on what they say about you.

What distinguishes AEO from traditional SEO?

The distinction is stark. SEO success is measured by rankings and clicks. AEO success is measured by citation frequency (how often you are mentioned) and recommendation strength (is the sentiment positive, neutral, or negative?). Additionally, while SEO rankings are relatively stable, AI outputs are highly volatile—changing based on prompt phrasing, model temperature, and daily updates. This means measurement can’t be a one-time snapshot; it has to be iterative.

Why standard analytics tools miss Perplexity visibility

I’ve seen teams try to hack this by looking at referral traffic in GA4, but it’s often a trap. Perplexity might summarize your entire pricing page for a user, answering their question perfectly without them ever clicking through to your site. That is a marketing win, but a data loss. Furthermore, referral strings from AI apps are often stripped or inconsistent. Relying on GA4 means you are only seeing the tip of the iceberg (the rare users who needed more info) while missing the many users who got their answer directly from the interface.

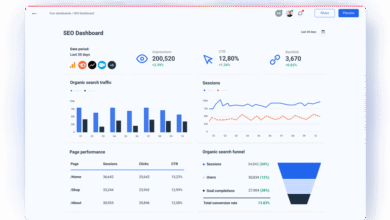

The metrics that actually matter for Perplexity visibility (and how I report them)

When I started building AEO reports, I made the mistake of trying to track everything. I quickly learned that data without decision power is just noise. In my current reporting, I narrow it down to five specific metrics that tell me if we are winning or losing ground.

First is A-SOV (Answer Share of Voice). This tells me, out of 100 relevant queries, how many times my brand appears in the answer. Second is Citedness, which specifically tracks if our URLs are linked as a footnote source. Third is Recommendation Strength—are we just listed, or are we the “top pick”? Fourth is Sentiment, because being mentioned negatively is worse than not being mentioned at all. Finally, I look at Visibility Trend Lines to smooth out the daily volatility of AI responses.

I advise monitoring these weekly for your top 20 “money keywords” and doing a comprehensive deep dive monthly. Anything more frequent usually leads to over-optimization panic.

AEO KPI cheat sheet (table)

Here is the exact framework I use to keep my reporting actionable. I share this with stakeholders to define what “good” looks like.

| Metric | What it captures | How I measure it | What I do next (Action) |
|---|---|---|---|
| A-SOV (Share of Voice) | Brand presence across a topic | % of answers containing brand name | Improve entity signals and brand mentions on third-party sites. |
| Citedness | Authority & Trust | % of answers with a direct link/footnote | Add structured data (Schema) and “quotable” definitions to content. |
| Recommendation Strength | Persuasion | Qualitative ranking (e.g., “Best Overall”) | Update comparison pages and product differentiators. |
| Sentiment | Brand Perception | Positive / Neutral / Negative scoring | Address negative reviews or unclear product specs that confuse the AI. |
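To make the first two rows concrete, here is a minimal Python sketch of how A-SOV and Citedness could be computed from a batch of saved answers. The `Answer` record, the brand "Acme", and the `.example` domains are hypothetical placeholders, and plain substring matching is an assumption; a real tool's export format and matching rules will differ.

```python
from dataclasses import dataclass, field


@dataclass
class Answer:
    """One saved AI answer for one query (hypothetical record shape)."""
    query: str
    text: str
    cited_urls: list[str] = field(default_factory=list)


def a_sov(answers: list[Answer], brand: str) -> float:
    """Percent of answers whose text mentions the brand (case-insensitive)."""
    if not answers:
        return 0.0
    hits = sum(brand.lower() in a.text.lower() for a in answers)
    return 100.0 * hits / len(answers)


def citedness(answers: list[Answer], domain: str) -> float:
    """Percent of answers that cite at least one URL containing the domain."""
    if not answers:
        return 0.0
    hits = sum(any(domain in url for url in a.cited_urls) for a in answers)
    return 100.0 * hits / len(answers)


# Invented sample data, for illustration only.
answers = [
    Answer("best payroll software", "Top picks include Acme Payroll and others.",
           ["https://acme.example/pricing"]),
    Answer("payroll platform for startups", "Consider Acme Payroll or BetaPay."),
    Answer("how to automate onboarding", "Several HR suites can do this."),
    Answer("Acme vs BetaPay", "BetaPay is cheaper for small teams.",
           ["https://betapay.example/compare"]),
]

print(f"A-SOV: {a_sov(answers, 'Acme'):.0f}%")               # A-SOV: 50%
print(f"Citedness: {citedness(answers, 'acme.example'):.0f}%")  # Citedness: 25%
```

Note that the fourth answer counts against A-SOV because only the answer text is checked, not the query. Whether that is the right call depends on how you define "presence" for your own reporting.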

How often I monitor AEO visibility (weekly vs monthly)

Consistency beats intensity here. I budget about 30–45 minutes every Friday morning for this. I run a spot check on our “Tier 1” query set—the questions that directly map to revenue (e.g., “best enterprise CRM for startups”). If I see a drop, I investigate. Then, once a month, I do the full analysis to look for macro trends. Trying to track daily fluctuations often results in chasing ghosts, as model variance can change an answer simply because the AI “felt” different that morning.
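Here is a small sketch of why the weekly view is calmer than the daily one. The daily readings are invented, and averaging over fixed seven-day blocks is just one simple way to smooth; a rolling window would work too.

```python
from statistics import mean

# Hypothetical daily A-SOV readings (percent) for two weeks, Monday to Sunday.
daily_a_sov = [40, 10, 45, 38, 42, 41, 39,   # week 1, with a one-day dip
               44, 43, 12, 46, 45, 47, 44]   # week 2, with a one-day dip


def weekly_averages(daily: list[float], days_per_week: int = 7) -> list[float]:
    """Average each complete block of `days_per_week` readings."""
    full_weeks = len(daily) // days_per_week
    return [
        mean(daily[i * days_per_week:(i + 1) * days_per_week])
        for i in range(full_weeks)
    ]


for week, value in enumerate(weekly_averages(daily_a_sov), start=1):
    print(f"Week {week}: {value:.1f}%")
```

The single-day dips to 10 and 12 look alarming on their own, but the weekly figures move only a few points, which is the signal worth reporting.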

What good AEO performance tracking software must do: my readiness audit checklist

The market is currently flooded with tools claiming to be “AI-ready.” Before you subscribe, you need to audit whether they can actually do the job or if they are just repackaging rank tracking. When I evaluate a new AI SEO tool, I look past the marketing copy and check for technical rigor.

Shockingly, a recent analysis suggested that 60% of AEO tools lacked proper schema markup implementation. This is a red flag. If the tool itself doesn’t understand the technical requirements of AEO, how can it help you optimize for them? Here is the readiness audit I use to vet vendors.

Technical readiness: schema, crawlability, and “can the model access it?”

First, does the tool evaluate crawlability specifically for AI bots (like OAI-SearchBot or PerplexityBot), not just Googlebot? They are different. Second, does it validate structured data like FAQPage, HowTo, and Product schema? These are the strongest signals for earning citations. I look for tools that don’t just say “Schema present” but actually validate the syntax.
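If you want to sanity-check the crawlability point yourself, Python's standard-library `urllib.robotparser` can evaluate robots.txt rules per user agent. This is a rough sketch: the robots.txt content and URLs below are made up, and it only covers robots.txt rules, not firewalls, rate limits, or JavaScript rendering that can also block a bot.

```python
from urllib.robotparser import RobotFileParser

# Example robots.txt content (hypothetical); in practice, load your own file.
ROBOTS_TXT = """\
User-agent: PerplexityBot
Disallow: /private/

User-agent: OAI-SearchBot
Disallow: /

User-agent: *
Allow: /
"""


def ai_bot_access(robots_txt: str, url: str, bots: list[str]) -> dict[str, bool]:
    """Return, per bot name, whether robots.txt allows fetching the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, url) for bot in bots}


bots = ["Googlebot", "PerplexityBot", "OAI-SearchBot"]
result = ai_bot_access(ROBOTS_TXT, "https://example.com/pricing", bots)
for bot, allowed in result.items():
    print(f"{bot}: {'allowed' if allowed else 'blocked'}")
```

In this made-up file, Googlebot and PerplexityBot can reach the pricing page while OAI-SearchBot is blocked site-wide, which is exactly the kind of mismatch a Googlebot-only audit would miss.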

Measurement quality: query sets, repeatability, and trend lines

Does the tool allow you to save a Query Library? I’ve seen tools that require you to type prompts manually every time—that’s not a workflow, that’s a hobby. You need to be able to save a set of 50 prompts and run them automatically. Furthermore, look for timestamped snapshots. Because AI answers disappear, you need a tool that saves the HTML or text of the answer so you can prove to your boss, “Look, we were the recommended solution last week.”
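To show what I mean by a timestamped snapshot, here is a minimal sketch of a record you could store per query run. The field names and the JSON Lines storage format are my own assumptions, not any vendor's schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Snapshot:
    """One captured AI answer (hypothetical record shape)."""
    query: str
    platform: str
    answer_text: str
    captured_at: str  # ISO 8601 timestamp in UTC


def take_snapshot(query: str, platform: str, answer_text: str,
                  now: Optional[datetime] = None) -> Snapshot:
    """Wrap an answer with the time it was captured (defaults to now, UTC)."""
    now = now or datetime.now(timezone.utc)
    return Snapshot(query, platform, answer_text, now.isoformat())


def to_jsonl(snapshots: list[Snapshot]) -> str:
    """Serialize snapshots as JSON Lines, one record per line."""
    return "\n".join(json.dumps(asdict(s), ensure_ascii=False) for s in snapshots)


snap = take_snapshot(
    "best payroll software for small businesses",
    "perplexity",
    "Acme Payroll is a strong option for small teams...",  # invented answer text
)
print(to_jsonl([snap]))
```

Appending one line per run gives you an archive you can search later, even after the live answer has changed.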

Business usability: alerts, exports, and stakeholder-ready reports

Finally, can I get the data out? If I can’t export to CSV or look at a trend line over 90 days, the tool isn’t enterprise-ready. I also look for citation alerts: a Slack notification or email when a specific competitor overtakes us on a high-value query. This allows for rapid response rather than waiting for the monthly report.

Best software for Perplexity visibility: a practical comparison (by category)

There isn’t one single “best” tool yet because the market is fragmented. Based on my research and testing, the landscape breaks down into three categories: Enterprise Platforms, SEO Hybrids, and Specialized Trackers. Choosing the right one depends entirely on your budget and team size.

Category 1: End-to-end enterprise AEO/GEO platforms (when governance matters)

If you are in a regulated industry or managing multiple global brands, you need governance. Platforms like Conductor and Profound are positioning themselves here. They offer end-to-end visibility, often including compliance features like SOC 2 and multi-region tracking.

Best for: Large teams where data attribution and integration with other corporate analytics (like Adobe or Salesforce) are non-negotiable.

Tradeoff: High cost and complex setup. Not for the faint of heart.

Category 2: Traditional SEO tools adding AI visibility (good for hybrid teams)

If you already pay for a suite like Semrush or SE Ranking, start here. SE Ranking has launched an AI Visibility Tracker (beta) that monitors brand mentions alongside traditional rankings. Surfer’s AI Tracker also integrates well if you are already using their content workflows.

Best for: Intermediate teams who want to dip their toes in AEO without buying a separate contract.

Tradeoff: These features are often less mature than specialized tools and might lack deep prompt library capabilities.

Category 3: Specialized AEO visibility trackers (built around citations and A-SOV)

This is where the innovation is happening. Tools like AEO Engine and AEO Amplify focus purely on the AI layer. They give you granular metrics like Sentiment and Recommendation Strength that traditional SEO tools gloss over.

Best for: Agencies and growth leads who need to prove specific ROI from AI optimization efforts.

Tradeoff: It’s another login to manage, and some are still in early stages with limited query volume.

Comparison table: which tool category fits my situation?

Here is how I guide clients on making a choice based on their current situation.

| Category | Best For | Strengths | Limitations |
|---|---|---|---|
| Enterprise (e.g., Conductor) | Large Orgs / Global Brands | Compliance, Support, Integration | Expensive, slower innovation cycle |
| Hybrid (e.g., Semrush/SE Ranking) | Mid-market / In-house SEOs | Unified workflow, familiar UI | “Lite” features, may miss niche AI signals |
| Specialized (e.g., AEO Engine) | Agencies / Growth Hackers | Deep metrics (A-SOV), fast updates | Separate tool, varying reliability |

My step-by-step workflow to track and improve Perplexity citations (using AEO performance tracking software)

Buying the software is easy; using it to actually move the needle is the hard part. Over the last year, I’ve refined a workflow that moves from “guessing” to “optimizing.” This process relies on creating high-quality, structured content—something that a sophisticated AI article generator can help operationalize by ensuring your drafts are formatted for machine readability from day one.

Step 1: Build a query set that matches revenue intent (not vanity prompts)

Don’t just track your brand name. That’s vanity. I build a list of 15–30 queries that map to the bottom of the funnel. These usually fall into three buckets:

1. Comparison: “[My Brand] vs [Competitor]”

2. Best For: “Best payroll software for small businesses”

3. Problem/Solution: “How to automate employee onboarding”

Pro Tip: Include variations. Users might ask “software” in one prompt and “platform” in another. The answers will differ.
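The three buckets and the variation tip can be sketched as a small generator. The brand names, templates, and variant words below are placeholders I made up; swap in your own.

```python
from itertools import product
from string import Formatter

# Hypothetical wording variants for placeholders used in the templates.
VARIANTS = {
    "noun": ["software", "platform"],
    "audience": ["small businesses", "startups"],
}

# (bucket, template) pairs; templates without placeholders are kept as-is.
TEMPLATES = [
    ("comparison", "Acme vs BetaPay"),
    ("best_for", "Best payroll {noun} for {audience}"),
    ("problem_solution", "How to automate employee onboarding"),
]


def expand(template: str, variants: dict[str, list[str]]) -> list[str]:
    """Fill each placeholder in the template with every combination of variants."""
    fields = list(dict.fromkeys(
        name for _, name, _, _ in Formatter().parse(template) if name
    ))
    combos = product(*(variants[f] for f in fields))
    return [template.format(**dict(zip(fields, combo))) for combo in combos]


query_set = [
    (bucket, query)
    for bucket, template in TEMPLATES
    for query in expand(template, VARIANTS)
]

for bucket, query in query_set:
    print(f"{bucket}: {query}")
```

This produces six prompts from three templates. Generating the list once and saving it keeps the wording fixed, so later runs are comparable.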

Step 2: Establish a baseline across platforms (Perplexity + the others)

Before you change a single pixel on your website, run these queries through your tracking software to get a baseline. I focus on Perplexity, ChatGPT, and Google AI Overviews. Save the results. You need to know exactly where you stand: Are you mentioned? Are you the primary recommendation? Or are you invisible? This baseline is your defense when stakeholders ask for ROI later.

Step 3: Optimize content for “quotable” extraction (structure + schema)

Now, we optimize. I’ve found that AI models love “scannable” content. I edit our target pages to include:

- Direct Answers: A 40-50 word definition immediately following a question header (e.g., “What is AEO?”).

- Lists: Bullet points for features or benefits.

- Schema: I strictly validate FAQPage and Product schema.

Practitioners have reported that structured content formats significantly increase appearance in AI overviews, likely because they reduce the “cognitive load” for the model to parse the data.
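For the schema item in the list above, here is a minimal sketch that builds FAQPage markup as JSON-LD using the schema.org `FAQPage`, `Question`, and `Answer` types. The question and answer text are placeholders; the output would normally be embedded in the page inside a `<script type="application/ld+json">` tag.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Placeholder content; use the direct-answer paragraph from your own page.
faq = faq_jsonld([
    ("What is AEO?",
     "Answer Engine Optimization (AEO) is the practice of structuring content "
     "so that AI answer engines cite or recommend it in their responses."),
])

print(json.dumps(faq, indent=2))
```

Keeping the `text` value identical to the visible answer on the page is the safer choice, since markup that disagrees with the page content tends to cause validation warnings.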

Step 4: Re-test, log changes, and create a monthly AEO report

After updating the content, I wait. It usually takes 1-2 weeks for changes to reflect in AI answers (unlike the slower pace of Google SEO). I re-run the query set in the software and compare the new snapshots against the baseline. My monthly report is simple: “We moved from mentioned in 10% of answers to recommended in 40% of answers for these top revenue queries.” That is language leadership understands.
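The baseline-versus-retest comparison can be sketched in a few lines. The three status labels and the sample results are invented for illustration; your tracking tool may classify answers differently.

```python
from collections import Counter

STATUSES = ("invisible", "mentioned", "recommended")


def status_share(results: dict[str, str]) -> dict[str, float]:
    """Percent of queries in each status."""
    counts = Counter(results.values())
    total = len(results)
    return {s: (100.0 * counts[s] / total if total else 0.0) for s in STATUSES}


def changed_queries(before: dict[str, str], after: dict[str, str]) -> dict[str, tuple[str, str]]:
    """Queries present in both runs whose status changed, as (before, after)."""
    return {
        q: (before[q], after[q])
        for q in before
        if q in after and before[q] != after[q]
    }


# Hypothetical results keyed by the exact prompt string.
baseline = {
    "Acme vs BetaPay": "mentioned",
    "best payroll software for small businesses": "invisible",
    "best payroll platform for startups": "invisible",
    "how to automate employee onboarding": "invisible",
    "top payroll tools": "invisible",
}
retest = {
    **baseline,
    "best payroll software for small businesses": "recommended",
    "best payroll platform for startups": "recommended",
}

print("Baseline:", status_share(baseline))
print("Retest:  ", status_share(retest))
for query, (old, new) in changed_queries(baseline, retest).items():
    print(f"{query}: {old} -> {new}")
```

Keying both runs by the exact prompt string is deliberate: if the wording drifts between runs, the query simply drops out of the comparison instead of producing a misleading change.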

Common mistakes when tracking Perplexity visibility (and how I fix them)

I’ve made plenty of errors getting to this point. Here are the most common pitfalls so you can avoid them:

- Tracking too few queries: I used to track just 5 keywords. The data was too volatile to trust. Fix: Expand to at least 25–30 queries to smooth out the noise.

- Ignoring Prompt Sensitivity: I assumed “best HR tool” and “top HR software” yielded the same result. They often don’t. Fix: Standardize your prompt list and never change the wording mid-reporting cycle.

- Obsessing over daily changes: I wasted hours analyzing why we dropped out of an answer on a Tuesday only to reappear on Wednesday. Fix: Look at weekly averages, not daily snapshots.

- Forgetting the “Why”: Seeing a citation is great, but not knowing which page drove it is useless. Fix: Use software that identifies the source URL so you can double down on what works.

Troubleshooting checklist (quick scan)

If your data looks weird, run this 2-minute diagnostic:

- Did I use the exact same prompt string as last time?

- Did the AI model update (e.g., GPT-4o release) this week?

- Did my competitor launch a new comparison page?

- Is my schema markup actually validating without errors?
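For the last item in that checklist, a quick structural check can catch the most common breakages before you reach for a full validator. This sketch only looks at a handful of fields in FAQPage JSON-LD and assumes `mainEntity` is written as a list; it is not a substitute for a real schema validation tool.

```python
import json


def faq_problems(raw: str) -> list[str]:
    """Return a list of structural problems found in FAQPage JSON-LD text."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value is not an object"]

    problems = []
    if data.get("@type") != "FAQPage":
        problems.append("@type is not FAQPage")

    entities = data.get("mainEntity")
    if not isinstance(entities, list) or not entities:
        return problems + ["mainEntity is missing or empty"]

    for i, item in enumerate(entities):
        if not isinstance(item, dict):
            problems.append(f"mainEntity[{i}] is not an object")
            continue
        if item.get("@type") != "Question":
            problems.append(f"mainEntity[{i}] @type is not Question")
        if not item.get("name"):
            problems.append(f"mainEntity[{i}] has no question text (name)")
        answer = item.get("acceptedAnswer")
        if not isinstance(answer, dict) or not answer.get("text"):
            problems.append(f"mainEntity[{i}] has no acceptedAnswer text")
    return problems


# Deliberately broken example: the answer text is missing.
broken = '{"@type": "FAQPage", "mainEntity": [{"@type": "Question", "name": "What is AEO?"}]}'
print(faq_problems(broken))
```

An empty list means none of these specific checks failed, nothing more.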

FAQs + next steps: choose software, start tracking, then scale responsibly

Once you have validated your workflow on a small scale, the next challenge is volume. This is where tools like a bulk article generator become valuable, not to spam the web, but to apply your winning content structures (FAQs, clear headings) across hundreds of pages efficiently. But before you scale, let’s address the final questions I hear most often.

Which AI platforms are most important to track for AEO?

If you have limited resources, prioritize Perplexity and Google AI Overviews. These are the “search-first” AI engines. ChatGPT is massive but often used more for creativity than product discovery (though this is changing with SearchGPT). Keep an eye on Claude and Gemini, but don’t let them distract you from the big players.

What content formats perform best for AEO?

Think “encyclopedic but conversational.” The best formats are:

1. FAQ Sections using valid Schema.

2. Comparison Tables that objectively list pros/cons (AI models trust balanced views).

3. Definitions in clear, concise paragraphs (approx. 40-60 words).

For example, structure a section like this: Header: Is X good for Y? -> Answer: Yes, X is effective for Y because… -> Bullet list of 3 reasons.

How frequently should AEO visibility be monitored?

Stick to the weekly spot-check / monthly deep-dive rhythm. If you only have one hour a week, spend 15 minutes checking your top 5 revenue queries and 45 minutes improving the content on the pages that answer them.

Next actions I recommend (5 steps)

Ready to start? Here is your plan for this week:

- Pick your top 20 queries: Focus on high-intent comparisons and questions.

- Choose a tool category: If you’re budget-conscious, start with your existing SEO suite’s beta features. If you need deep data, trial a specialized tracker.

- Run a baseline audit: Document exactly where you appear today.

- Optimize one key page: Add an FAQ section with schema to your most important product page.

- Wait and measure: Check back in 14 days to see if you’ve earned a citation.

Start small, prove the value, and then build the system. The AI revolution isn’t coming; it’s here. The good news is, with the right tracking, it’s measurable.