Best Perplexity AI Tools: Optimizing for the Answers (A Practical Review)

Introduction: why I’m reviewing the best Perplexity AI tools (and who this is for)

I have been testing Perplexity heavily for research briefs and SEO workflows over the last year, and I have learned one lesson the hard way: a citation is not the same thing as the truth. We are seeing a massive shift in how we gather information, moving from keyword searching to “answer engines” that promise to synthesize the web for us. But for operators—those of us managing content teams, conducting competitive intelligence, or building product roadmaps—the stakes are higher than just getting a quick recipe.

This is not a hype piece about how AI will replace your job. Instead, I am treating this as a newsroom-grade review of the Perplexity ecosystem as it stands today. I will cover the specific tools you can actually use (Labs, Comet, and the Email Assistant), the friction points I encounter on Monday mornings, and a verified workflow to get usable data without risking your reputation. If you are looking for a magic button, this isn’t it. But if you want a reliable framework for “answer engine optimization” and business research, this guide is for you.

What Perplexity is now: from answer engine to a multi-tool platform (2025–2026 snapshot)

For the uninitiated, Perplexity started as an AI answer engine—think of it as a wrapper around an LLM that cites its sources. It reads the web and summarizes the answer with clickable footnotes. That transparency is its main selling point over ChatGPT. However, over the last 12 to 18 months, the company has pivoted from a single search bar into a productivity suite aimed at taking over your browser and your inbox.

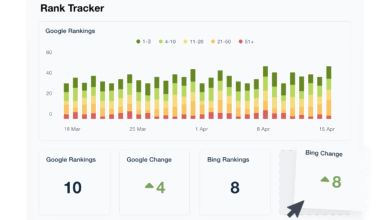

Why does this matter for business? Because simple chat isn’t enough for complex projects. We need to generate reports, navigate hundreds of tabs, and triage communication. Perplexity has responded with three major expansions: Perplexity Labs (launched May 2025) for data work; the Comet browser (desktop release July 2025) for navigation; and the high-tier Email Assistant. While these tools offer immense speed, they come with trade-offs in accuracy and cost that I will detail below.

Quick timeline: Labs, Comet, and Email Assistant (with pricing tiers)

- May 2025: Perplexity Labs launches for Pro users (~$20/mo). Enables dashboards, spreadsheets, and longer report generation.

- July 2025: Comet browser released on desktop.

- October 2025: Comet becomes free for all users (with rate limits). Comet Plus launches at $5/month (sharing 80% of proceeds with publishers).

- November 2025: Comet browser launches on Android.

- Current status: Email Assistant features are locked behind the Max tier (~$200/month).

How I evaluate the best Perplexity AI tools for business use (a simple scoring rubric)

When I evaluate tools for my stack, I don’t look for “cool features.” I look for reliability and integration. A tool that hallucinates a statistic in a client report isn’t an asset; it’s a liability. To help you decide without the guesswork, I use a specific scoring rubric geared towards intermediate business users.

I specifically look at how these tools fit into a broader content intelligence workflow. For example, once I have verified research from Perplexity, I often need to feed that data into a robust AI SEO tool to structure the final content. If the input data is bad, the output is useless. Here is the framework I use to vet the input side:

| Criteria | What good looks like | How I test it | Red flags |
|---|---|---|---|
| Reliability & Transparency | Every claim has a clickable, primary source (not an affiliate blog). | I spot-check 3 citations against the generated text. | Circular referencing or citations that lead to 404s. |
| Workflow Fit | Export options (CSV/Markdown) and ease of copy-pasting tables. | I try to move data to a Google Sheet in under 3 clicks. | Formatting breaks when pasted; cannot export data. |
| Output Depth | Can handle multi-step reasoning (compare X vs Y across 3 params). | I ask for a comparison table with specific constraints. | Generic summaries that ignore my constraints. |
| Governance | Clear data retention settings; ability to exclude sensitive docs. | I review the settings for “training on my data.” | No option to toggle off history or training. |
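
If you want to record rubric results consistently across tools, the table above can be sketched as a small data structure. This is a minimal illustration, not a formula from this review: the 0-5 scale, the equal weighting, the 3.5 cut-off, and the names `ToolScore`, `average`, and `verdict` are all assumptions made for the example.

```python
from dataclasses import dataclass, field

# The four criteria mirror the rubric table. Everything else here
# (0-5 scale, equal weights, 3.5 threshold) is an illustrative assumption.
CRITERIA = ("reliability_transparency", "workflow_fit", "output_depth", "governance")


@dataclass
class ToolScore:
    name: str
    scores: dict                                    # criterion -> score from 0 to 5
    red_flags: list = field(default_factory=list)   # free-text notes from testing

    def average(self) -> float:
        """Equal-weight mean across the four rubric criteria."""
        missing = [c for c in CRITERIA if c not in self.scores]
        if missing:
            raise ValueError(f"missing scores for: {', '.join(missing)}")
        return sum(self.scores[c] for c in CRITERIA) / len(CRITERIA)

    def verdict(self) -> str:
        """Any red flag blocks a recommendation, whatever the average is."""
        if self.red_flags:
            return "investigate red flags first"
        return "shortlist" if self.average() >= 3.5 else "skip"


example = ToolScore(
    name="Hypothetical tool",
    scores={
        "reliability_transparency": 4,
        "workflow_fit": 5,
        "output_depth": 3,
        "governance": 4,
    },
)
print(example.name, example.average(), example.verdict())
```

Letting a single red flag override the average is a deliberate choice in this sketch: a tool that exports beautifully but cites dead links should not pass on points.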

My default test prompt set (so you can replicate my results)

To keep things fair, I don’t just chat randomly. I use a standard set of prompts to stress-test these tools. You can copy these to run your own evaluation:

- The Hallucination Trap: “Summarize the privacy policy changes for [Competitor X] from Q3 2025. Include direct quotes, publication dates, and links to the official press release.” (I then verify that each quote actually exists.)

- The Data Extraction: “Create a table comparing the pricing tiers of Tool A vs Tool B vs Tool C. Include a column for ‘Hidden Fees’ and ‘API Limits’.”

- The Counter-Narrative: “Find three sources that argue against the consensus that [Industry Trend] is growing. Explain their methodology.”

Best Perplexity AI tools: what each one is best at (and where it breaks)

After months of testing, here is my honest take on the ecosystem. The reality is that user feedback—and my own experience—is mixed. While the “vibe” of the answers often feels authoritative, users across G2 and Reddit frequently report broken chat flows and UI glitches. More importantly, the value proposition varies wildly depending on which tier you buy.

| Tool | Best For | Typical Workflow | My Recommendation |
|---|---|---|---|
| Perplexity Labs (Pro) | Deep research, creating structured CSVs, and market reports. | Researching a new vertical → Exporting a competitor matrix. | YES for analysts & content leads. Best value. |
| Comet Browser (Free/Plus) | Tab triage, quick summarization, and avoiding clickbait. | Reading 20 tabs of news → Finding the one useful paragraph. | YES (Free version is enough for most). |
| Email Assistant (Max) | High-volume executive inbox management. | Auto-drafting replies and scheduling from Outlook/Gmail. | NO unless you are drowning in 200+ emails/day. |

Perplexity Labs (May 2025): reports, dashboards, and spreadsheets for longer workflows

Labs is where the real work happens. Launched for Pro users in mid-2025, this isn’t just a chat window; it’s a workspace designed for tasks that take more than 10 minutes. My favorite feature is the ability to generate spreadsheets directly from a query.

For example, I recently needed a feature matrix for payroll software. Instead of clicking through pricing pages for an hour, I used Labs to generate a dashboard comparing five competitors. It wasn’t perfect—I still had to correct two pricing cells—but it saved me 45 minutes of formatting. If you are building a quarterly insights report or a competitive landscape analysis, the ~$20/month Pro fee pays for itself here.

Caution: I still spot-check 3-5 citations before I trust a spreadsheet column. Labs is confident, but it can sometimes pull data from outdated third-party reviews rather than the official pricing page.

Comet browser (July 2025 → free in Oct 2025): summarization, navigation, and “research in the tab”

I have a “too many tabs” problem. Comet addresses this by bringing AI directly into the browser. It offers summarization, voice mode, and navigation assistance. The big news in October 2025 was that Comet became free for everyone (with rate limits), while the paid Comet Plus tier ($5/mo) adds access to premium publisher content.

In practice, I use Comet to triage. If I open ten articles about a Google Core Update, I use Comet to summarize them and tell me which one actually has new data. It stops me from wasting time on fluff pieces. However, for deep reading, I turn it off. The summaries can sometimes strip away the nuance you need to understand a complex topic.

Email Assistant (Max tier): inbox triage, drafts, scheduling—high cost, narrow fit

This tool is impressive, but at the Max tier price point (~$200/month), it is a hard sell for the average operator. It integrates deeply with Gmail and Outlook to prioritize emails, draft replies based on your calendar, and manage scheduling.

If I were an executive sending generic replies to 50 people a day, this would be a lifesaver. But for most of us, the risk of an AI mishandling a sensitive client email is too high. I tested it for a week, and while the scheduling integration was smooth, I found myself rewriting most of the drafts to sound like me. If you are a small business, save the budget.

Comparison table: which Perplexity tool to use for research, content, ops, and leadership updates

| Feature | Core Search (Free) | Labs (Pro ~$20/mo) | Comet (Free/Plus $5/mo) | Email Assistant (Max ~$200/mo) |
|---|---|---|---|---|
| Primary Use | Quick answers | Deep Analysis & Reports | Browsing & Reading | Inbox Management |
| Output Format | Text/Short Lists | Dashboards/Spreadsheets | Sidebar Summaries | Email Drafts/Calendar |
| Citation Depth | Medium | High | Medium (Page context) | N/A |
| My Pick For… | Casual queries | Content Strategists | General Research | Enterprise Execs |

My step-by-step workflow: optimizing for the answers with Perplexity (from question → publishable output)

Buying the tool is the easy part. Building a process that doesn’t result in generic content is the challenge. Here is the exact workflow I use to turn a vague idea into a solid, verifiable brief. This is where you bridge the gap between gathering intelligence and using an AI article generator to scale your production.

Step 1: define the decision you’re supporting (not just the keyword)

If I can’t explain the decision I’m trying to help the reader make in one sentence, I’m not ready to research. Most beginners type “best CRM software.” A better operator types: “Compare CRM software for a 10-person real estate agency, focusing on mobile app quality and contract flexibility.” This forces the AI to look for specific parameters rather than generic marketing copy.

Step 2: run a ‘source set’ query and force diversity of citations

Don’t trust the first answer. It is often an echo chamber of the top 3 Google results. I always run a follow-up prompt: “Find 2 sources that disagree with this conclusion and explain why.” This exposes the controversy and nuance in the topic. I look for primary sources—PDFs, whitepapers, and official documentation—rather than affiliate blogs.

Step 3: extract facts into a table (and label what’s uncertain)

I never copy-paste paragraphs. I ask Perplexity to “Extract these findings into a table with columns for Claim, Source, Date, and Confidence Level.” If the AI can’t find a date, I make it mark the cell as “Unknown.” This is my sanity check. It turns a wall of text into a checklist of facts I need to verify.
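
Once the table exists, it helps to keep it in a form you can sort and export. The sketch below shows one way to hold the Claim, Source, Date, and Confidence columns in Python and flag rows that still need checking. The `Fact` class, the `needs_verification` helper, and the sample rows are hypothetical and invented for illustration; treating a "Low" confidence label as a trigger for review is also an assumption.

```python
import csv
import io
from dataclasses import dataclass, astuple

UNKNOWN = "Unknown"
COLUMNS = ("Claim", "Source", "Date", "Confidence")


@dataclass
class Fact:
    claim: str
    source: str = UNKNOWN      # anything the AI could not find stays "Unknown"
    date: str = UNKNOWN
    confidence: str = UNKNOWN


def needs_verification(fact: Fact) -> bool:
    """Flag rows with any Unknown cell or a Low confidence label."""
    has_gap = UNKNOWN in (fact.source, fact.date, fact.confidence)
    return has_gap or fact.confidence.lower() == "low"


def to_csv(facts) -> str:
    """Serialize the fact table so it can be pasted into a spreadsheet."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(COLUMNS)
    for fact in facts:
        writer.writerow(astuple(fact))
    return buffer.getvalue()


# Made-up rows purely for demonstration.
facts = [
    Fact("Tool A Pro costs $20/mo", "https://example.com/pricing", "2025-10-01", "High"),
    Fact("Tool B has no API limits"),
]
todo = [f.claim for f in facts if needs_verification(f)]
print(to_csv(facts))
print("Still to verify:", todo)
```

The rows returned by `needs_verification` become the checklist: nothing moves into a draft until its row stops being flagged.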

Step 4: turn research into a publishable structure (titles, H2s, schema, internal links)

Once the facts are verified, I map them to on-page elements. I ask: “Based on these user pain points, what should the H2s be?” This ensures my structure answers the user’s intent directly. This is the handoff point where I would move from Perplexity (research) to a drafting tool. Note: If I can’t defend a claim with a source from my table, I cut it. No exceptions.

Reliability check: citations, hallucinations, and what I verify before I trust Perplexity

This is the elephant in the room. Is Perplexity reliable? The answer is “mostly, but…” One study focused on medical and academic queries reported that 72% of the Perplexity citations it checked were fabricated, with an average of more than 3 errors per citation. That is a terrifying statistic for anyone in a regulated industry.

However, for general business research (software pricing, marketing trends), I find it significantly more accurate than that, provided you click the links. The clickable citations are not a guarantee of truth; they are an invitation to verify. User sentiment on Trustpilot (hovering around 2.3/5) often reflects frustration with billing or the UI rather than accuracy, but the hallucination risk is real regardless. My rule is simple: if the output affects money, health, or the law, Perplexity is a signpost, not an advisor.

My 5-minute citation audit (beginner-friendly checklist)

I never publish a piece of content without running this drill. It takes five minutes and saves me from embarrassing corrections later:

- Click the top 3 citations: Do the links actually work? (404s are common).

- Ctrl+F the claim: Does the linked page actually contain the sentence or stat mentioned?

- Check the date: Is it citing a 2019 article for a “current” 2025 trend?

- Check the domain: Is it a reputable publisher or an “SEO spam” site?

- Triangulate: Can I find this fact on one other independent site?
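
For anyone who wants to make the drill repeatable, most of the checklist can be expressed as small pure functions. This is a rough sketch under stated assumptions: it works on page text you have already opened and copied (so the "does the link work" check stays manual), the two-year staleness window and the trusted-domain list are placeholders you would set yourself, and every function name here is hypothetical.

```python
import re
from urllib.parse import urlparse


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so line breaks don't hide a match."""
    return re.sub(r"\s+", " ", text).strip().lower()


def claim_on_page(claim: str, page_text: str) -> bool:
    """The Ctrl+F check: does the page literally contain the claim?"""
    return normalize(claim) in normalize(page_text)


def is_stale(published_year: int, current_year: int, max_age_years: int = 2) -> bool:
    """Assumed rule of thumb: older than max_age_years counts as outdated."""
    return current_year - published_year > max_age_years


def domain_of(url: str) -> str:
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


def audit_citation(claim, url, page_text, published_year, current_year,
                   trusted_domains, independent_urls):
    """Return a list of problems; an empty list means the citation passed."""
    problems = []
    if not claim_on_page(claim, page_text):
        problems.append("claim not found on page")
    if is_stale(published_year, current_year):
        problems.append("source may be outdated")
    if domain_of(url) not in trusted_domains:
        problems.append("domain not on trusted list")
    # Triangulation only counts if the second source is a different domain.
    other_domains = {domain_of(u) for u in independent_urls} - {domain_of(url)}
    if not other_domains:
        problems.append("no independent corroboration")
    return problems


print(audit_citation(
    claim="Pro plan costs $20 per month",
    url="https://www.example.com/pricing",
    page_text="Our Pro plan\ncosts  $20 per month.",
    published_year=2025,
    current_year=2025,
    trusted_domains={"example.com"},
    independent_urls=["https://example.org/review"],
))
```

An exact substring match is intentionally strict. A paraphrased statistic will fail the check, which is the point: it sends you back to read the page rather than trusting the summary.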

Legal and ethical considerations (US context): scraping controversies, lawsuits, and safe-use guidance

We need to talk about risk hygiene. Perplexity has faced lawsuits and legal threats from major publishers, including Dow Jones, The New York Times, and Forbes, over alleged copyright infringement and content scraping. There are also allegations that it uses stealth web crawlers to bypass site blocks.

What does this mean for you as a business user? It’s not legal advice, but here is my practical guardrail: Assume the output is not copyright-cleared. Do not copy-paste whole paragraphs of AI-generated text onto your commercial blog without heavy editing and rewriting. Treat Perplexity outputs as “research notes.” Use the ideas, but write the words yourself (or use tools that build original drafts). Also, be careful about pasting your own confidential client data into the chat unless you have configured your privacy settings to prevent training.

Common mistakes beginners make with Perplexity (and how I fix them)

I have watched dozens of marketers try to use these tools, and they almost always make the same errors. Here is how to fix the friction points I see most often.

Mistake #1: treating citations as proof (instead of an invitation to verify)

Beginners see a little blue number [1] and assume the fact is checked. As we discussed, that number just points to where the model looked, not necessarily what it understood. The Fix: Adopt a “trust but verify” mindset. If you don’t click it, you didn’t check it.

Mistake #2: asking vague prompts that produce vague answers

If you ask “What is the state of AI?”, you get a generic blog post. The Fix: Add constraints. “What is the state of AI adoption in US healthcare for Q3 2025?” Specificity forces the model to dig deeper.

Mistake #3: not forcing source diversity (getting ‘echo chamber’ summaries)

AI tends to agree with the most popular content, which isn’t always right. The Fix: Explicitly ask for “counter-arguments” or “dissenting opinions” in your prompt to see the full picture.

Mistake #4: skipping the ‘fact table’ step (then losing track of what’s true)

It is easy to get lost in a long chat thread. The Fix: Always end your session by asking for a summary table of key facts. It acts as your “save point” for the research.

Mistake #5: using Perplexity for high-stakes advice without guardrails

I have seen people ask for legal contract clauses. This is dangerous. The Fix: Use Perplexity to understand concepts (“What is a force majeure clause?”), but never to generate the legal document itself.

Mistake #6: optimizing content for vibes, not for answers (missing structure and intent)

Some users take the raw research and write a wandering narrative. The Fix: Optimize for the answer. Structure your content with clear headings that match the questions people are actually asking. This is the core of modern SEO.

FAQs + next steps: what I’d do if I were starting today

If I were starting from scratch today, I wouldn’t try to learn every tool at once. I would pick one workflow—likely verifying a specific competitor analysis—and master that. To wrap up, here are the answers to the questions I hear most often.

FAQ: What tools has Perplexity introduced beyond search?

Beyond the core search, they have added Perplexity Labs (for reporting), the Comet browser (for navigation), and the Email Assistant (for inbox management).

FAQ: Is Comet browser still limited to paid users?

No. As of October 2025, Comet is free for all users with rate limits. There is a paid Comet Plus tier ($5/mo) for power users who want premium journalism access.

FAQ: Are Perplexity’s citations reliable?

They are better than a blind guess, but at least one study suggests high error rates for medical and academic queries. Always audit the links behind high-stakes business claims.

FAQ: How does Perplexity compare to competitors like ChatGPT?

Perplexity wins on transparency and citations for research tasks. ChatGPT generally offers a more fluid, creative conversational experience for drafting and brainstorming.

FAQ: Is using Perplexity legally risky?

There are ongoing legal battles regarding data scraping. To stay safe, verify facts independently and avoid using generated text verbatim for commercial products.

Next Steps for Monday Morning:

- Pilot One Tool: If you do heavy research, try Perplexity Labs. If you just browse, install the free Comet browser.

- Run the Audit: Take one piece of content you are working on and run my “5-minute citation audit” on it. You will likely find an error.

- Scale Up: Once you have a reliable research pipeline, consider how to scale the publishing side using an automated blog generator to turn those insights into consistent traffic.

The future isn’t just about finding answers; it’s about validating them and putting them to work. Good luck.