Introduction: Measuring Perplexity visibility (and why a “rank” isn’t enough anymore)

It usually starts with a screenshot. A stakeholder or client sends me an image of a Perplexity answer or a Google AI Overview and asks, “Are we showing up here for our top terms?” It’s a fair question, but it triggers a moment of panic for most SEOs because our standard dashboard usually just shows a flat ranking line.

Here’s the reality: traditional rank tracking doesn’t fully capture whether you are part of the conversation in AI answers. If traffic is flat but you suspect you’re being cited in Perplexity, you need a different measurement approach. You need to bridge the gap between tracking “Position Zero” featured snippets and monitoring AI visibility.

In this guide, I’ll walk you through exactly what I track, the tools that actually make this measurable (beyond the hype), and the repeatable workflow I use to capture visibility in both the SERP and the answer engine.

What you’ll learn in this guide

- AEO vs. SEO definitions: Understanding the difference between ranking on a ladder and being mentioned in a generated answer.

- The metrics that matter: A practical list of what to track, from snippet ownership to citation frequency.

- Tool selection criteria: How to choose software that covers both traditional SERP features and AI visibility.

- A hands-on workflow: My step-by-step process for auditing, optimizing, and monitoring content.

- Common mistakes: The reporting errors that usually lead to bad data (and how to fix them).

AEO vs traditional SEO: what “visibility” means in Perplexity, ChatGPT, and Google AI Overviews

If traditional SEO is about fighting for the best spot on a ladder, Answer Engine Optimization (AEO) and Generative Engine Optimization (GEO) are about ensuring you’re mentioned in the conversation. In traditional SEO, measuring success is relatively binary: you are either in position 1, 2, or 3, or you aren’t.

With Perplexity, ChatGPT, and Google’s AI Overviews, visibility is fluid. The engine generates a unique answer based on the user’s specific prompt, context, and sometimes even their location. You might be the primary source for a query like “best CRM for small business,” but if the user adds “…that integrates with Slack,” the AI might swap you out for a competitor who has better schema markup around integrations.

This means we are moving from tracking static positions to tracking citations and mentions. It’s less about “ranking #1” and more about “being one of the three sources cited in the answer.” This shift requires us to rethink our reporting. We can’t just promise a position; we have to report on our Share of Voice within the generated output.

Why AI visibility is harder to measure than rankings

- Response Variability: Unlike a static SERP, AI answers can change based on how the prompt is phrased. This creates a need for prompt-level tracking rather than just keyword tracking.

- Citation Complexity: You might be mentioned in the text but not linked in the footnotes, or vice versa. This requires tools capable of nuanced citation tracking.

- Volatility: AI models are updated frequently. A model update can wipe out your visibility overnight, creating AI response volatility that traditional rank trackers might miss entirely.

Featured snippets in 2025: still worth tracking (even with the volatility)

You might be hearing that featured snippets are dying as AI Overviews take over. I treat these claims with caution. While the landscape is shifting, featured snippets remain a critical source of visibility, trust, and voice search answers.

Here is what the data suggests right now: featured snippets still appear in approximately 12–15% of all searches. More importantly, for the queries that matter most to us (specific questions), they appear 40–50% of the time.

However, I have to be honest about the volatility. Some reports indicate a significant drop in featured snippet visibility during the first half of 2025, potentially as much as 64%. This doesn’t mean we stop tracking them. It means we have to watch them like a hawk. When a snippet disappears, it often signals an opportunity to optimize for an AI Overview instead. If you aren’t tracking position zero, you won’t know when to pivot your strategy.

The formats that win: paragraphs, lists, and tables

To win either a traditional snippet or an AI citation, structure is everything. The algorithms favor content that is easy to parse.

- Paragraph Snippets: These make up the vast majority (approx. 70–82%). They work best for definitions and direct answers. Ideally, these are 40–60 words long.

- List Snippets: Great for “step-by-step” or “best of” queries. These account for roughly 10.8% of snippets.

- Table Snippets: These appear less often (5–7%) but are incredibly sticky for pricing or comparison data.

What to track: the metrics that make AEO and featured snippet tracking actionable

When I build a dashboard for a marketing lead, I don’t dump every available metric on them. I focus on the numbers that drive decisions. If a metric doesn’t help me decide what to rewrite or where to invest, I leave it out.

Below is the measurement stack I recommend for beginners. It separates classic SERP features from the emerging AI visibility tracker metrics.

| Metric | Where it applies | Why it matters | What to do next |
|---|---|---|---|
| Snippet Ownership | Google SERP | Tells you if you hold position zero or if a competitor does. | If lost, audit the competitor’s format and word count. |
| Share of Voice (SoV) | AI Overviews / Perplexity | Estimates how often your brand appears in AI answers for a topic. | If low, increase brand mentions and citations on third-party sites. |
| Citation Rate | Perplexity / LLMs | Shows how frequently your URL is cited as a source. | If cited but not clicked, optimize your page title and meta for clicks. |
| Prompt Coverage | AI Models (ChatGPT, etc.) | Indicates if you appear for different variations of a question. | Expand content to cover related sub-questions and long-tail variants. |
| Snippet Type | Google SERP | Identifies if the result is a paragraph, list, or table. | Reformat your content to match the winning type (e.g., switch to a table). |

Featured snippet tracking metrics (SERP side)

On the traditional side, the correlation is clear: pages ranking in the top 3 organic positions capture over 90% of featured snippets. If you are ranking on page 2, your odds of winning a snippet are essentially zero (~0.4%).

So, my tracking logic is simple: If I am in positions 4–10, I focus on climbing the ranks. If I hit the top 3 but don’t have the snippet, I obsess over snippet presence and format. I check if the current winner is using a list or a paragraph, and I adapt immediately.
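If you want to apply that logic across a whole keyword export rather than eyeballing it, here is a minimal Python sketch. The function name `next_action` and its inputs are my own illustration, not a feature of any tool mentioned here, and the page-1 cutoff of position 10 is an assumption about how your tracker numbers results.

```python
def next_action(organic_position, owns_snippet):
    """Suggest a focus area for one tracked query.

    organic_position: 1-based organic rank, or None if the page is not ranking.
    owns_snippet: True if our page currently holds the featured snippet.
    """
    if owns_snippet:
        return "protect: monitor the snippet and avoid risky rewrites"
    if organic_position is None or organic_position > 10:
        # Assumption: anything past position 10 is treated as page 2 or worse.
        return "build rankings: snippet odds from page 2 are close to zero"
    if organic_position <= 3:
        return "optimize format: match the current winner (paragraph, list, or table)"
    return "climb: push from positions 4-10 into the top 3"


if __name__ == "__main__":
    for position, owned in [(2, False), (7, False), (14, False), (1, True)]:
        print(position, owned, "->", next_action(position, owned))
```

The labels are just strings, so you can paste them into a spreadsheet column and filter by the first word.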

AEO/Perplexity metrics (answer engine side)

This is the wild west. Tools are getting better, but many Perplexity citation metrics are still estimates based on sampling. I treat these numbers as directional trends rather than absolute facts.

I look for LLM mention frequency and prompt set coverage. Are we showing up for the main head term? What about the specific “how-to” variations? Even if the data isn’t perfect, a downward trend in citations is a clear signal that my content is becoming less relevant to the model’s current training or retrieval process.
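To make those terms concrete, here is a rough sketch of how mention frequency, citation rate, and prompt coverage could be computed from a set of answers you sampled yourself. The `SampledAnswer` record and the `visibility_metrics` function are hypothetical names, and the matching is naive substring matching, so treat the output as directional, just like the tool data.

```python
from dataclasses import dataclass


@dataclass
class SampledAnswer:
    """One AI answer captured for one prompt (hypothetical record shape)."""
    prompt: str
    answer_text: str
    cited_urls: list


def visibility_metrics(samples, brand, domain):
    """Return mention rate, citation rate, and prompt coverage for a sample set."""
    if not samples:
        return {"mention_rate": 0.0, "citation_rate": 0.0, "prompt_coverage": 0.0}

    # Naive matching: case-insensitive brand name in the text, domain in any cited URL.
    mentioned = [brand.lower() in s.answer_text.lower() for s in samples]
    cited = [any(domain in url for url in s.cited_urls) for s in samples]

    # Prompt coverage: share of distinct prompts with at least one mention or citation.
    prompts = {s.prompt for s in samples}
    covered = {s.prompt for s, m, c in zip(samples, mentioned, cited) if m or c}

    return {
        "mention_rate": sum(mentioned) / len(samples),
        "citation_rate": sum(cited) / len(samples),
        "prompt_coverage": len(covered) / len(prompts),
    }
```

Because answers vary between runs, I would sample each prompt several times and compare the rates week over week rather than reading too much into a single number.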

How I choose the best featured snippet tracking tool for AEO (beginner-friendly checklist)

Choosing software right now is tricky because every vendor claims to be an AI SEO tool. If you aren’t careful, you’ll end up paying for a fancy dashboard that just scrapes Google’s “People Also Ask” and calls it AI intelligence.

When I evaluate a featured snippet tracking tool or AEO software, I use a strict checklist. I need to know if the data is fresh (daily is best, weekly is bare minimum) and if it actually distinguishes between a standard organic rank and a generative citation.

Here is the decision framework I use. If you are a local business, your needs are different from a national publisher. But regardless of your size, accuracy is non-negotiable. I’ve learned the hard way that a tool with a monthly refresh rate is useless when SERPs are volatile. By the time you see the drop, you’ve already lost weeks of traffic.

Must-have features vs nice-to-have features

Must-Have:

- SERP Feature Detection: Must clearly distinguish between snippets, maps, and organic links.

- Historical Charts: You need to see the trend line to correlate drops with algorithm updates.

- Competitor Tracking: If you can’t see who stole your snippet, you can’t win it back.

- Alerts: I need an email the morning my snippet disappears.

Nice-to-Have:

- Prompt Libraries: Pre-built sets of AI prompts to test your visibility.

- Sentiment Analysis: Is the AI saying good things or bad things about you?

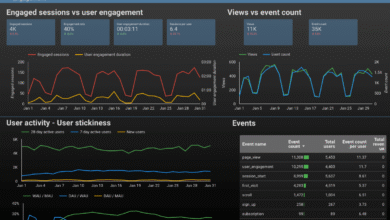

- API Access: Useful for pulling data into custom reporting dashboards.

Questions to ask before you buy (to avoid bad demos)

Don’t just watch the demo; ask these specific questions to put the sales rep on the spot:

- “How exactly do you verify snippet tracking accuracy? Is the data scraped live or pulled from a cached database?”

- “Do you support tracking for specific US cities, or is it just country-level?”

- “Can I tag my pages and annotate when I made changes to the content?”

- “What specific AI model coverage do you have? Do you track Claude and Perplexity separately from Google AI Overviews?”

Best featured snippet tracking tool options: a practical comparison for snippet + AI visibility

Now, let’s look at the tools that are actually delivering on the promise of combined tracking. I’ve focused on three platforms that represent different approaches: Nightwatch (the hybrid tracker), SE Ranking (the balanced all-rounder), and Evertune (the specialist AEO platform).

Comparison table: snippet tracking + AEO tracking in one view

| Tool | Best For | Snippet Tracking | AI Visibility Tracking | Pros | Cons |
|---|---|---|---|---|---|
| Nightwatch | Agency & Enterprise | Excellent (local & global) | Integrated LLM Analytics | High accuracy, great visualizations | Can be pricey for small teams |
| SE Ranking | SMBs & In-house | Solid standard features | AI Visibility Tracker + SoV | User-friendly, affordable | Prompt-level depth varies |
| Evertune AI | AEO Specialists | N/A (Focuses on AI) | Deep Brand Monitoring | Specific to generative engines | Requires separate rank tracker |

How I’d read this table if I were new: Look at the “Best For” column first. If you need one tool to do everything reasonably well, look at SE Ranking. If you have a specific mandate to protect brand reputation in AI answers, you might need to layer Evertune on top of your existing stack.

Nightwatch: when you want rank tracking plus AI/LLM visibility analytics

Nightwatch is my go-to recommendation when data granularity is the priority. It doesn’t just tell you “you rank #1”; it gives you the specific pixel height and SERP feature context. Recently, they’ve integrated LLM visibility analytics that cover models like GPT-4 as well as Google AI Overviews.

If you are tracking thousands of keywords across multiple locations, Nightwatch handles the scale beautifully. It allows you to segment performance by device and location, which is critical for local SEO. The main downside is that it’s a powerful tool, and sometimes the sheer amount of data can be overwhelming for a beginner.

SE Ranking: solid for beginners who want SERP features + AI visibility reports

For most in-house teams, SE Ranking hits the sweet spot. Their AI Visibility Tracker is intuitive—it shows you share of voice in AI results alongside your traditional rankings. You can set it up in an afternoon and have a report ready for your boss by the next morning.

I appreciate their project-based workflow. You set up a project, add your keywords, and it automatically starts monitoring SERP features. It’s less intimidating than enterprise tools, though it may lack some of the deep, prompt-level customization that a specialized AEO tool offers.

Evertune AI: purpose-built monitoring for brand visibility in AI-generated answers

Evertune AI is different. It’s not trying to be a rank tracker; it’s a GEO platform designed specifically for brand visibility in AI answers. If your primary concern is “What is ChatGPT saying about my brand?”, this is the tool.

It excels at Perplexity monitoring and sentiment analysis within generated text. However, it doesn’t replace your traditional SEO tools. You will still need a way to track organic traffic and technical health. Think of this as a specialized add-on for brand defense.

If you can only buy one tool: my decision shortcuts

- If you need to track snippets and local rankings primarily: Pick a robust rank tracker like Nightwatch.

- If you are on a budget and need a balanced view of SEO + basic AI stats: Go with SE Ranking.

- If your boss is obsessed with brand reputation in AI models specifically: Add Evertune AI to your stack.

My workflow: set up tracking, win snippets, and improve Perplexity/AI answer visibility

Buying a tool is easy; using it to actually move the needle is the hard part. Over the years, I’ve developed a workflow that turns data into action. It’s not magic—it’s just disciplined execution.

I use this process to systematically capture snippets and improve my standing in AI answers. It often involves using an AI article generator to help scale the initial drafting of answer blocks, but the strategy and refinement are always human-led.

Step 1: Build a question-first keyword set (because questions trigger snippets)

You can’t win a snippet if you aren’t targeting a question. I start by gathering question keywords from three places:

- People Also Ask (PAA): I scrape these directly from the SERP.

- Sales Calls: The actual questions prospects ask your sales team are gold mines.

- Support Tickets: If users are asking it, you should answer it publicly.

Remember, questions trigger snippets 40–50% of the time. These are your best snippet opportunities.
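Merging those three sources by hand gets messy fast, so here is a small sketch of how I might filter and de-duplicate them in Python. The starter-word list and the function names are my own assumptions; adjust them to how your prospects actually phrase things.

```python
# Assumed list of words that usually open a question-style query.
QUESTION_STARTERS = {
    "what", "how", "why", "when", "where", "which", "who",
    "can", "does", "do", "is", "are", "should",
}


def is_question(keyword):
    """True if the keyword ends with '?' or opens with a question word."""
    text = keyword.strip().lower()
    words = text.split()
    return bool(words) and (text.endswith("?") or words[0] in QUESTION_STARTERS)


def build_question_set(*sources):
    """Merge keyword lists, keep only questions, and drop duplicates in order."""
    seen = set()
    questions = []
    for source in sources:
        for keyword in source:
            if not is_question(keyword):
                continue
            # Normalize so "What is AEO?" and "what is aeo" count as one question.
            normalized = keyword.strip().lower().rstrip("?").strip()
            if normalized not in seen:
                seen.add(normalized)
                questions.append(normalized)
    return questions
```

Call it with one list per source, for example `build_question_set(paa, sales_calls, support_tickets)`, and paste the result into your tracking tool.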

Step 2: Baseline measurement: snippet ownership + AI citations

Before I change anything, I need to know where I stand. I run a baseline report to see:

- Which queries already have snippet ownership (don’t break these!).

- Which queries have a snippet owned by a competitor.

- Where I am getting AI citations in Perplexity.

Quick tip: Start small. Pick your top 25–50 most important questions. Don’t try to boil the ocean on day one.

Step 3: Prioritize with an “effort vs impact” score

I keep a simple spreadsheet to prioritize my work. I assign points like this:

- High Impact: High search volume + Business relevant (3 points)

- Low Effort: We already rank in top 5 (3 points)

- Snippet Opportunity: A snippet exists but the current answer is weak (3 points)

I focus entirely on the high-score items first. This effort vs impact framework prevents me from wasting time on vanity keywords that won’t drive traffic.
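The spreadsheet math is simple enough to sketch in a few lines of Python. The `Query` class and its field names are hypothetical; the three criteria and the 3-point weights come straight from the list above.

```python
from dataclasses import dataclass


@dataclass
class Query:
    """One candidate query with the three yes/no checks from the scoring list."""
    keyword: str
    high_impact: bool   # high search volume and business relevant
    ranks_top_5: bool   # low effort: we already rank in the top 5
    weak_snippet: bool  # a snippet exists but the current answer is weak

    @property
    def score(self):
        # Each criterion that applies is worth 3 points, so scores run from 0 to 9.
        return 3 * (self.high_impact + self.ranks_top_5 + self.weak_snippet)


def prioritize(queries):
    """Return queries sorted from highest to lowest score."""
    return sorted(queries, key=lambda q: q.score, reverse=True)


if __name__ == "__main__":
    backlog = [
        Query("what is aeo", True, True, True),
        Query("aeo history", False, False, True),
        Query("how to track featured snippets", True, True, False),
    ]
    for q in prioritize(backlog):
        print(q.score, q.keyword)
```

Equal weights keep the scoring honest for beginners. If one factor matters more for your business, change its multiplier rather than adding more criteria.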

Step 4: Optimize for the winning formats (answer-first + clean structure)

This is where the writing happens. I use an answer-first content approach. This means the very first paragraph under the H2 should answer the question directly.

Here is a template for a snippet-ready paragraph:

[Keyword] is [Definition/Direct Answer]. It works by [Process Step 1], [Process Step 2], and [Process Step 3]. Ideally, it is used for [Primary Use Case] to achieve [Benefit].

Keep it between 40–60 words. Ensure your heading structure is logical (H2 > H3).
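A quick way to enforce that length range before publishing is a word-count check. This is a minimal sketch; the function name is mine, and it counts whitespace-separated words, which may differ slightly from how your editor counts.

```python
def snippet_length_check(paragraph, low=40, high=60):
    """Return (word_count, verdict) for a candidate answer paragraph."""
    count = len(paragraph.split())
    if count < low:
        return count, "too short"
    if count > high:
        return count, "too long"
    return count, "ok"


if __name__ == "__main__":
    draft = (
        "Answer Engine Optimization is the practice of structuring content "
        "so that AI systems can quote it directly."
    )
    print(snippet_length_check(draft))
```

I would run this on the first paragraph under each H2, since that is the block most likely to be lifted into a snippet.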

Step 5: Add schema where it actually helps (FAQ, HowTo)

Schema markup can feel intimidating, but it doesn’t have to be. I focus on two types: FAQ schema and HowTo schema. These give search engines (and AI bots) explicit context about the content structure. I don’t overdo it—I just wrap my key questions and steps in structured data. It’s a small technical lift that often nudges you into the snippet.
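For the FAQ side, here is a small sketch that turns question and answer pairs into schema.org `FAQPage` JSON-LD. The `faq_jsonld` helper is my own name; the `@type` values and the `mainEntity` / `acceptedAnswer` properties follow the schema.org vocabulary. Adding the markup does not guarantee a snippet or a rich result, so treat it as a nudge.

```python
import json


def faq_jsonld(pairs):
    """Build FAQPage JSON-LD from an iterable of (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


if __name__ == "__main__":
    print(faq_jsonld([
        ("Are featured snippets still worth targeting?",
         "Yes. They still appear for many question queries and build credibility."),
    ]))
```

The output goes inside a `<script type="application/ld+json">` tag on the page, and the questions and answers should match text that is visible to readers.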

Step 6: Monitor, annotate changes, and iterate

Finally, I watch the dashboard. I use SEO monitoring to track daily changes. If I make an update on Tuesday, I annotate it in the tool. If I see a jump in SERP alerts on Friday, I know exactly what caused it. This loop of content iteration is the only way to hold onto visibility long-term.
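If your tool lets you export daily snapshots, the same loop can be sketched outside the dashboard. This example assumes a hypothetical export shape (a dict of keyword to the domain that owns the snippet) and a list of dated annotations that you keep yourself; neither reflects a specific vendor's format.

```python
from datetime import date


def snippet_changes(previous, current):
    """Compare two {keyword: owner_domain} snapshots and list what changed.

    A missing keyword or a None owner means no snippet was detected that day.
    """
    changes = []
    for keyword in sorted(set(previous) | set(current)):
        before, after = previous.get(keyword), current.get(keyword)
        if before != after:
            changes.append((keyword, before, after))
    return changes


def recent_annotations(annotations, change_day, window_days=7):
    """Return notes logged within window_days before (or on) the change date."""
    return [
        note
        for day, note in annotations
        if 0 <= (change_day - day).days <= window_days
    ]


if __name__ == "__main__":
    tuesday, friday = date(2025, 6, 3), date(2025, 6, 6)
    notes = [(tuesday, "Rewrote intro as a 50-word answer block")]
    before = {"what is aeo": "competitor.example"}
    after = {"what is aeo": "mysite.example"}
    for change in snippet_changes(before, after):
        print(change, recent_annotations(notes, friday))
```

A shared annotation window is only a hint, not proof of cause, so I still check whether an algorithm update landed in the same week.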

Common mistakes that break featured snippet and Perplexity visibility tracking (and how I fix them)

I’ve made plenty of mistakes in my career. I used to obsess over tracking thousands of vanity keywords, only to realize I wasn’t watching the 50 that actually drove revenue. Here are the most common featured snippet tracking mistakes I see, and how to fix them.

Mistake-to-fix list (quick scan)

- Mistake: Ignoring location data.

Fix: Always track US-based keywords with specific city/state settings if you are a local business.

- Mistake: Tracking only head terms.

Fix: Shift your focus to long-tail questions where AEO tracking actually happens.

- Mistake: Not annotating updates.

Fix: Use your tool’s annotation feature every time you publish an optimization. You will thank yourself later.

- Mistake: Treating AI visibility as a guarantee.

Fix: Treat these metrics as probabilistic. Just because you see it doesn’t mean every user sees it.

- Mistake: Over-optimizing / Keyword stuffing.

Fix: Write for the human first. If it reads like a robot wrote it, the AI (ironically) won’t cite it.

FAQs + next steps: putting AEO tracking into practice (without overcomplicating it)

Let’s wrap this up with the answers to the questions I hear most often, and a plan for your week.

FAQ: What is AEO and how does it differ from traditional SEO?

AEO (Answer Engine Optimization) and GEO (Generative Engine Optimization) focus on optimizing content to be cited in AI-generated responses (like ChatGPT or Perplexity). Traditional SEO focuses on ranking a URL in a list of search results. For example, in SEO you want to be the first link; in AEO, you want to be the brand the AI recommends when asked, “What is the best CRM?”

FAQ: Are featured snippets still worth targeting?

Yes. Despite reports of volatility, featured snippets are worth it because they build massive credibility. Even if they appear less often, when they do appear, they dominate the screen. They are also a strong signal of topical authority, which helps with overall ranking.

FAQ: Which content formats perform best for snippet capture?

The best snippet formats are simple: concise paragraph snippets (40–60 words) for definitions, bulleted list snippets for processes, and table snippets for data comparisons. Clarity wins every time.

FAQ: What tools track both featured snippets and AI visibility?

Tools like Nightwatch and SE Ranking combine featured snippet tracking and AI visibility tracking in one platform. For dedicated AI brand monitoring, Evertune is a strong contender.

FAQ: How should content creators optimize for AI-response visibility?

To optimize for AI responses, focus on high E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness). Use clear headings, answer questions directly, and use schema markup to help machines understand your context.

Recap and next actions (what I’d do this week)

To summarize:

- Tracking snippets is still vital, even in an AI world.

- You need tools that measure both traditional rankings and AI citations.

- Structure and direct answers are your best weapons.

Your Next Steps:

- This Week: Identify your top 25 “question” keywords and set up a tracking project.

- This Week: Audit your existing content for those keywords. Are you answering the question in the first paragraph?

- Next Week: Use an SEO content generator to help you draft optimized definitions and FAQs for the pages you identified.

- Ongoing: Check your tool dashboard weekly for snippet wins and losses.