Introduction: Finding my AI footprint (and why I started using AI mention monitoring tools)

It happened on a Tuesday morning call. A high-value prospect told me, “We love your feature set, but ChatGPT described your platform as a ‘lightweight plugin’ suitable only for freelancers.” We are an enterprise solution. That single hallucination was costing us deals before we even got in the room.

That was the moment I stopped treating AI results as a novelty and started treating them as a reputation crisis. I realized that relying on random screenshots from my team was not a strategy. I needed a way to audit, track, and measure our presence inside the “black box” of Large Language Models (LLMs) just as rigorously as we track Google rankings.

This guide isn’t about hype; it’s about the practical reality of AI mention monitoring tools. I’ll walk you through what these tools actually do, compare the top players (from incumbents like Semrush to emerging startups like Peec AI and Ranketta), and share the exact 7-step workflow I use to diagnose and fix our AI brand footprint.

What are AI mention monitoring tools (and how they differ from traditional brand monitoring)?

If traditional social listening is like standing in a crowded room and hearing what people are saying about you, AI mention monitoring tools are like walking up to the smartest person in the room and asking, “What do you think of this brand?” and recording their answer.

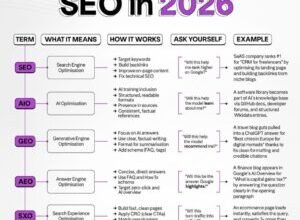

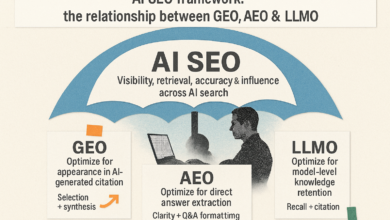

Traditional tools scrape the web for existing conversations (tweets, Reddit threads, news articles). AI mention monitoring tools, sometimes called GEO (Generative Engine Optimization) tools, actively interrogate LLMs. They send specific prompts to engines like ChatGPT, Gemini, Claude, and Perplexity, then capture the generated responses to analyze your brand’s visibility.

They don’t just count mentions; they analyze how you are mentioned. Are you the primary recommendation? A footnote? Is the pricing accurate? What sources is the AI citing to justify its answer? This is a fundamental shift from passive listening to active testing.

The minimum vocabulary I need (LLM, prompt set, sampling, citation)

- LLM (Large Language Model): The AI engine generating the text (e.g., GPT-4, Claude 3.5 Sonnet).

- Prompt Set: The standardized list of questions you ask the AI to test your visibility (e.g., “Best HR software for mid-sized companies”).

- Sampling: Asking the same prompt multiple times to account for AI variance (since answers can change from one run to the next).

- Citation: The blue link or reference the AI provides to back up its claim—this is your “backlink” in the AI world.

- Share of Voice (SOV): The percentage of times your brand appears in the answer compared to competitors.

- Hallucination: When the AI confidently states a fact about your brand that is completely false.

Why AI mention monitoring tools matter now: AI discovery is already at massive scale

We are past the early adoption phase. With ChatGPT alone hosting over 500 million weekly users (2025/2026 data) and Google rolling out AI Overviews to billions of search results, AI is becoming the primary discovery layer for the internet.

Here is where I see this impacting business right now:

- SaaS Comparisons: Buyers ask, “Compare Tool A vs Tool B for enterprise security.” The output often determines who gets the demo.

- Local Services: “Find me a top-rated divorce attorney in Austin.” AI is replacing the “near me” Google search.

- Troubleshooting & Support: Users ask AI how to fix your product. If the AI hallucinates a wrong step, your support tickets spike.

The scary part? I’ve seen the exact same prompt produce a glowing recommendation on Monday and a competitor pitch on Tuesday. That variance is why manual checking fails—we need tools to track patterns, not just one-off screenshots.

The risks I’m actually managing (misinfo, omission, competitor bias)

It’s not just about vanity metrics. The risks are operational. Misinformation means an AI tells prospects your pricing is double what it actually is. Omission means you simply don’t exist in the consideration set. Competitor bias happens when a competitor has saturated the training data (or the cited sources) so effectively that the AI treats them as the default option.

What to measure: the metrics that make AI mention monitoring tools useful

When I first started, I tried to measure everything. Now, I focus on actionable metrics. The goal isn’t just to know you were mentioned, but to know how. Most robust tools will track mentions across the “Big 5”: ChatGPT, Gemini/Google AI Overviews, Claude, Perplexity, and sometimes Bing Copilot.

However, a word of caution on sentiment: AI sentiment analysis is decent, but it struggles with nuance. I treat it as a directional signal. If a tool says “Negative,” I verify it manually. It might just be that the AI listed “high price” as a con, which for a premium brand might actually be neutral.

A simple KPI table I can copy-paste into my reporting

Here is the exact structure I use for my monthly stakeholder report:

| Metric | What it tells me | How I act on it |
|---|---|---|
| Mention Frequency | How often I appear in answers for my “money prompts.” | If low, I need to create more content targeting those specific user intents. |
| Share of Voice (SOV) | My visibility vs. my top 3 competitors. | If competitors are winning, I audit the sources the AI cites for them. |
| Sentiment Score | Whether the recommendation is positive, neutral, or negative. | If negative, I investigate if the data is outdated (e.g., old pricing). |
| Top Cited Sources | Which websites the LLM trusts for information about me. | I try to get more coverage on those specific 3rd-party sites (e.g., G2, Capterra, Reddit). |
| Accuracy Rate | Percentage of answers that are factually correct. | I prioritize fixing “hallucinations” via press releases or schema updates. |
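Three of these rows (Mention Frequency, Share of Voice, Accuracy Rate) reduce to simple counting once each sampled answer has been tagged. Here is a minimal sketch of that arithmetic. The record format and field names (`brands_mentioned`, `is_accurate`) are my own assumptions, not any tool's export schema, and I am reading Share of Voice as "my mentions divided by all mentions of tracked brands," which is only one common interpretation.

```python
from dataclasses import dataclass


@dataclass
class SampledAnswer:
    """One tagged AI response. All field names are hypothetical."""
    prompt: str
    brands_mentioned: list[str]
    is_accurate: bool  # set by a human reviewer, not by the tool


def mention_frequency(answers, brand):
    """Share of all sampled answers that mention `brand` at all."""
    if not answers:
        return 0.0
    hits = sum(1 for a in answers if brand in a.brands_mentioned)
    return hits / len(answers)


def share_of_voice(answers, brand, competitors):
    """Brand mentions divided by mentions of every tracked brand."""
    tracked = [brand, *competitors]
    counts = {b: 0 for b in tracked}
    for a in answers:
        for b in tracked:
            if b in a.brands_mentioned:
                counts[b] += 1
    total = sum(counts.values())
    return counts[brand] / total if total else 0.0


def accuracy_rate(answers, brand):
    """Among answers that mention the brand, the share judged accurate."""
    relevant = [a for a in answers if brand in a.brands_mentioned]
    if not relevant:
        return 0.0
    return sum(a.is_accurate for a in relevant) / len(relevant)
```

Sentiment and Top Cited Sources are left out on purpose: the first needs human judgment, and the second depends on how your tool exports citations.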

A practical workflow: how I set up AI mention monitoring tools in 7 steps

Don’t overcomplicate this. You don’t need a data science team. You need a process. Here is the workflow I use to go from zero to a functioning monitoring system.

Step 1: Define my ‘money prompts’ (brand, category, comparison, problem-aware)

If you ask generic questions, you get generic answers. I build a “Prompt Library” of 15–25 high-intent queries. For a hypothetical US-based IT company, it would look like this:

- Brand Navigational: “What is AustinTech IT pricing?”

- Category: “Best managed IT support for 50-person companies in Texas.”

- Comparison: “AustinTech IT vs. GlobalMSP: which is better for startups?”

- Problem-Aware: “Who offers 24/7 IT support for remote teams in Austin?”
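If you keep the Prompt Library in a file instead of a slide, a quick sanity check becomes possible. The sketch below stores the four example prompts above with a category tag and warns when the library falls outside the 15–25 range, misses a category, or contains duplicates. The structure and the `check_library` helper are hypothetical, just one way to do it.

```python
from collections import Counter

# The example prompts are the hypothetical AustinTech IT ones from this step.
PROMPT_LIBRARY = [
    {"category": "brand", "prompt": "What is AustinTech IT pricing?"},
    {"category": "category", "prompt": "Best managed IT support for 50-person companies in Texas."},
    {"category": "comparison", "prompt": "AustinTech IT vs. GlobalMSP: which is better for startups?"},
    {"category": "problem-aware", "prompt": "Who offers 24/7 IT support for remote teams in Austin?"},
]

REQUIRED_CATEGORIES = {"brand", "category", "comparison", "problem-aware"}


def check_library(library, min_size=15, max_size=25):
    """Return a list of warnings; an empty list means the library looks sane."""
    warnings = []
    if not min_size <= len(library) <= max_size:
        warnings.append(
            f"library has {len(library)} prompts, expected {min_size}-{max_size}"
        )
    categories = {entry["category"] for entry in library}
    for missing in sorted(REQUIRED_CATEGORIES - categories):
        warnings.append(f"no prompts in category '{missing}'")
    seen = Counter(entry["prompt"].strip().lower() for entry in library)
    for prompt, n in seen.items():
        if n > 1:
            warnings.append(f"duplicate prompt: {prompt}")
    return warnings
```

Running `check_library(PROMPT_LIBRARY)` on the four examples flags only the size, which is the nudge to keep writing prompts until you reach 15.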

Step 2: Choose LLMs/engines to track (ChatGPT, Gemini/AI Overviews, Claude, Perplexity)

If you are B2B, you must track Perplexity and Claude, as they are heavy in research workflows. If you are B2C or local, Google AI Overviews and ChatGPT are your battlegrounds. Start where your audience hangs out. Don’t try to boil the ocean in week one.

Step 3: Run a baseline and capture prompt-response pairs (don’t rely on screenshots)

Run your prompt set through your chosen tool. Most tools will export this data. I save this file as YYYY-MM-DD_Baseline_Run.csv. Do not rely on screenshots; text is searchable, screenshots are not. You need to capture the full text of the response to analyze it later.
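If your tool does not export, or you are collecting answers by hand, the same file is easy to produce yourself. This is a minimal sketch using only Python's standard library. The column names (`engine`, `prompt`, `sample`, `response`) are my own choice, not a standard.

```python
import csv
from datetime import date
from pathlib import Path

# Hypothetical column layout: one row per engine, prompt, and sample.
FIELDS = ["engine", "prompt", "sample", "response"]


def save_baseline(rows, out_dir=".", run_date=None):
    """Write prompt-response pairs to YYYY-MM-DD_Baseline_Run.csv."""
    run_date = run_date or date.today()
    path = Path(out_dir) / f"{run_date.isoformat()}_Baseline_Run.csv"
    # newline="" lets the csv module handle line breaks inside responses.
    with path.open("w", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=FIELDS)
        writer.writeheader()
        writer.writerows(rows)
    return path
```

The full response text goes in one cell, line breaks included, so later searches and re-tagging work against exactly what the engine said.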

Step 4: Tag mentions, sentiment, and positioning (what am I “known for”?)

I manually review the first batch. I look for positioning keywords. Are they calling us “cheap” or “robust”? “Easy to use” or “complex”? Sometimes sentiment is ambiguous—I just tag it “Mixed” and move on. The goal is to spot the dominant narrative.
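After the manual pass, a simple keyword count helps confirm which narrative dominates. The sketch below is plain whole-phrase matching, nothing smarter, and the term list is just the four examples from this step. Treat it as a first filter before reading the text, not a replacement for it.

```python
import re
from collections import Counter

# Hypothetical starter list; extend it with terms from your own manual review.
POSITIONING_TERMS = ["cheap", "robust", "easy to use", "complex"]


def tag_positioning(response, terms=POSITIONING_TERMS):
    """Return the positioning terms that appear as whole words or phrases."""
    text = response.lower()
    return [t for t in terms if re.search(rf"\b{re.escape(t)}\b", text)]


def dominant_narrative(responses, terms=POSITIONING_TERMS):
    """Count how many responses use each term, most frequent first."""
    counts = Counter()
    for response in responses:
        counts.update(tag_positioning(response, terms))
    return counts.most_common()
```

Whole-word matching means "complexity" does not count as "complex," which keeps the tally conservative.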

Step 5: Audit citations and sources (where the model is learning/quoting)

This is the most critical step for SEOs. Look at the little numbers or links at the bottom of the AI answer. Are they citing your homepage? Or are they citing a random blog from 2019? Here is the pattern I keep seeing: AI answers tend to cite authoritative listicles and press releases far more often than random forum comments.
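Once the citation URLs are collected, counting them by domain shows where the answers are actually coming from. A minimal sketch, assuming you already have the cited URLs as a plain list (how you get them depends on your tool). The domains in the usage note are placeholders.

```python
from collections import Counter
from urllib.parse import urlparse


def cited_domains(citation_urls):
    """Count citations per domain, ignoring a leading 'www.'."""
    counts = Counter()
    for url in citation_urls:
        host = urlparse(url).netloc.lower()
        if host.startswith("www."):
            host = host[4:]
        if host:  # skip strings that are not full URLs
            counts[host] += 1
    return counts


def owned_share(counts, own_domain):
    """Fraction of all citations that point at your own domain."""
    total = sum(counts.values())
    return counts.get(own_domain, 0) / total if total else 0.0
```

For example, `cited_domains(urls).most_common(5)` gives the five sites to prioritize for outreach, and a low `owned_share(counts, "yourdomain.example")` means third parties are telling your story.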

Step 6: Turn findings into actions (content updates, PR placements, fixes)

If the AI says your pricing is hidden, create a clear pricing page. If it cites a competitor’s “Best of” listicle, reach out to that publisher to get added. One action I can do today: Check my “About Us” page and ensure it clearly states what we do in simple, entity-rich language.

Step 7: Re-test on a cadence and report like a newsroom (what changed + why)

I re-run this report monthly (or weekly if we are in a PR crisis). My internal report headline usually looks like: “Feb Update: ChatGPT Positive Sentiment up 10% after Pricing Page Update.” This connects the data directly to our work.
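The "what changed" half of that report can be scripted. The sketch below compares two runs prompt by prompt and builds a headline in the style above. Both function names are hypothetical, and I express the change in percentage points to avoid the ambiguity of "up 10%."

```python
def compare_runs(previous, current):
    """Compare per-prompt rates (0.0-1.0) between two runs.

    Only prompts present in both runs are compared; results are sorted
    by the size of the change, largest first.
    """
    shared = previous.keys() & current.keys()
    changes = [
        (p, previous[p], current[p], round(current[p] - previous[p], 3))
        for p in shared
    ]
    return sorted(changes, key=lambda c: abs(c[3]), reverse=True)


def headline(label, engine, metric, previous, current, reason):
    """Build a one-line report headline with the change in points."""
    points = round((current - previous) * 100)
    if points == 0:
        change = "flat"
    else:
        change = f"{'up' if points > 0 else 'down'} {abs(points)} pts"
    return f"{label}: {engine} {metric} {change} after {reason}"
```

The "why" still has to come from you: the script can show that a number moved, but only your changelog of content and PR work can explain it.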

Comparison table: the top AI mention monitoring tools (what each one is best at)

The market is flooding with tools. Some are enterprise suites; others are scrappy startups. I’ve filtered this list to the ones that are actually shipping useful features as of 2025/2026.

At-a-glance table (features, engines, pricing, best-fit)

| Tool | Best For | Engines Covered | Pricing (Est.) | Key Note |
|---|---|---|---|---|
| Semrush (Enterprise AIO) | Enterprise / SEO Teams | ChatGPT, Gemini, SGE | Starts ~$199/mo | Integrated with full SEO data; historical tracking. |
| Mint | Optimization Focus | Major LLMs | Contact Sales | Focuses on the “optimize” loop (Page Magic). |
| Peec AI | Brand Managers | ChatGPT, Perplexity, Claude | ~€89/mo | Modular pricing; great for prompt-level granularity. |
| Ranketta | Product Marketers | LLM Recommendations | Starts ~$100/mo | Tracks product-level visibility & specific citations. |
| Otterly AI | SMBs / Startups | All major LLMs | Low/freemium options | Lightweight scraping; good for simple alerts. |
| Profound | Enterprise Reporting | Broad LLM coverage | Enterprise | Deep GEO reporting metrics; founded in 2024. |
| Evertune AI | Data-Driven Teams | Statistical Sampling | Contact Sales | Uses consumer panels & massive sampling (1M+). |

Mini-reviews: what’s different about each platform

Semrush (AI Visibility Toolkit / Enterprise AIO): If you already live in Semrush for SEO, this is the logical upgrade. It’s robust and integrates AI visibility alongside your keyword rankings. I’d pick this if: I wanted to keep all my data in one ecosystem. Watch out: It can get pricey if you need to track hundreds of prompts.

Peec AI: A fast-moving player founded in 2025. They excel at multi-model tracking and offer flexible pricing tiers. I’d pick this if: I needed to specifically track my brand across Perplexity and Claude with high frequency. Watch out: As a newer tool, historical data might be limited compared to legacy giants.

Ranketta: This tool is interesting because it focuses heavily on the product level. It identifies exactly which sources are driving the recommendation. I’d pick this if: I was a Product Marketer trying to figure out why we are losing head-to-head comparisons. Watch out: It’s a specialist tool, not a general SEO suite.

Mint: Mint is aggressive about the “optimization” part of GEO. It analyzes gaps and suggests content fixes. I’d pick this if: I had a content team ready to execute changes immediately. Watch out: Ensure their “Page Magic” recommendations align with your brand voice.

Otterly AI: The best entry point for small businesses. It’s essentially a monitoring alert system for AI. I’d pick this if: I just needed to know if I was mentioned without needing complex analytics. Watch out: It lacks the deep segmentation of the enterprise tools.

How I choose between AI mention monitoring tools (small business vs enterprise)

Analysis paralysis is real here. To avoid it, I use a simple decision tree based on budget and “panic level.”

If you are a Small Business or a team of one, start with Otterly AI or the starter tier of Peec AI. You likely only need to track 10–15 prompts (your brand name and main service). You don’t need historical data going back five years; you need to know what ChatGPT is telling your customers today.

If you are an Enterprise or an Agency, you need Semrush Enterprise AIO, Profound, or Mint. You need API access, the ability to tag prompts by persona, and “share of voice” reporting to show your VP of Marketing. The ability to export data for custom visualization is non-negotiable here.

A simple rubric: budget, coverage, confidence (and what I prioritize first)

- Budget: Can I afford to track 50+ prompts continuously?

- Coverage: Does it track the engines my customers actually use? (e.g., Don’t overpay for Bing Copilot tracking if your traffic is 90% Google).

- Confidence: Does the tool provide source attribution (citations)? If it just gives me a sentiment score without showing why, it’s useless to me.

- Personal Note: I would rather track 15 prompts consistently for 30 days than chase 200 prompts once. Consistency beats volume.

From monitoring to growth: how I improve brand visibility in AI-generated outputs

Once you have the data, the monitoring phase ends and the “Generative Engine Optimization” (GEO) phase begins. This is where you actually fix the problems you found. The goal is to create a content ecosystem that LLMs find authoritative and easy to parse.

If you discover that LLMs are citing outdated information or skipping your brand entirely, you need a content sprint. This isn’t just about stuffing keywords; it’s about structural clarity and entity authority. I use an AI SEO tool to help structure this content, but the strategy comes from the monitoring insights.

For example, if I notice ChatGPT is hallucinating my product features, I need to publish a definitive “Features” page with clear schema markup. To scale this, I might use an AI article generator to draft the foundational content quickly, ensuring I cover the entities and questions the LLMs are looking for. The monitoring tells me what to write; the SEO content generator helps me execute it at scale.

Tactics that tend to move the needle (and why)

- Authoritative Placements: Getting cited in industry reports or well-known news sites (Digital PR). LLMs trust these sources.

- Comparison Pages: Publishing your own honest “Us vs Them” page. If you don’t define the comparison, the AI will do it for you (poorly).

- Direct Quotes & Stats: LLMs love data. Publishing original research makes you a primary source.

- Entity Consistency: Ensuring your NAP (Name, Address, Phone) and core value prop are identical across Crunchbase, LinkedIn, and your About page.
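For the entity consistency point, one concrete option is schema.org `Organization` markup on your About page, with `sameAs` links to the same profiles you keep aligned. The sketch below generates that JSON-LD from a single source of truth. The company details in the usage note are placeholders, and whether a given AI engine reads this markup is something I am assuming, not something I can prove.

```python
import json


def organization_jsonld(name, url, description, profiles):
    """Build schema.org Organization markup as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # sameAs lists the official profiles that describe the same entity.
        "sameAs": list(profiles),
    }
    return json.dumps(data, indent=2)
```

A call like `organization_jsonld("AustinTech IT", "https://austintech.example", "Managed IT support for 50-person companies in Texas.", ["https://www.linkedin.com/company/example"])` returns text you can paste inside a `<script type="application/ld+json">` tag. Keeping the description in one place makes it easier to reuse word for word on the other profiles.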

Mini-template: turning citation gaps into a content sprint

- Identify the Gap: “AI recommends Competitor X because they are cited in ‘Top 10 CRM’ lists.”

- Draft the Asset: Create a “Definitive Guide to CRM Selection” on your site (2 hours).

- Distribute: Pitch this guide to the sources citing your competitor (1 day).

- Publish Support: Update FAQs to reinforce the points in the guide (1 hour).

- Re-test: Wait 3-4 weeks and check the prompt again.

Common mistakes I see with AI mention monitoring tools (and how I fix them)

I’ve made plenty of mistakes so you don’t have to. The biggest one? Treating LLMs like search engines. They aren’t.

Mistake 1–3: prompt chaos, weak baselines, and overreacting to variance

I used to change my prompts slightly every week. “Best shoes” one week, “Top footwear” the next. This ruined my data. The Fix: Lock your prompts. Do not change a single character for at least 90 days. Also, stop freaking out if one answer is weird. Look for the trend over 5+ samples.
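Both fixes can be enforced with a few lines of code. The sketch below fingerprints the prompt set so that any edit, even a single character, shows up as a different hash, and it refuses to report a mention rate until there are at least five samples. The function names are hypothetical, and the substring match for the brand name is deliberately crude.

```python
import hashlib


def prompt_set_fingerprint(prompts):
    """Hash the prompts in order; any edit or reorder changes the result."""
    digest = hashlib.sha256()
    for prompt in prompts:
        digest.update(prompt.encode("utf-8"))
        digest.update(b"\x00")  # separator so adjacent prompts cannot blur together
    return digest.hexdigest()


def mention_rate(samples, brand, min_samples=5):
    """Share of sampled answers mentioning `brand`, or None if too few samples."""
    if len(samples) < min_samples:
        return None
    hits = sum(brand.lower() in s.lower() for s in samples)
    return hits / len(samples)
```

Store the fingerprint next to each run. If two runs have different fingerprints, their numbers are not comparable, and that is the moment to restart the 90-day clock.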

Mistake 4–6: ignoring citations, misreading sentiment, skipping personas

I once saw a “Negative” sentiment tag and panicked. Turns out, the AI said, “This tool is not for beginners.” That’s actually good for our enterprise positioning. The Fix: Read the full text response, not just the score. Also, remember to track different personas. A CEO asks different questions than a developer; if you only track generic prompts, you miss the nuance.
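Persona tracking is mostly a matter of tagging each prompt with who would ask it, then splitting the results. A minimal sketch, assuming each record is a hypothetical `(persona, response_text)` pair and that a case-insensitive substring match is good enough to detect the brand:

```python
from collections import defaultdict


def mention_rate_by_persona(records, brand):
    """Return {persona: share of that persona's answers mentioning brand}."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for persona, text in records:
        totals[persona] += 1
        if brand.lower() in text.lower():
            hits[persona] += 1
    return {persona: hits[persona] / totals[persona] for persona in totals}
```

A result like a high rate for the developer persona and a low one for the CEO persona tells you which audience needs content next, something a single blended number would hide.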

Mistake 7–8: no workflow integration and no retesting cadence

The worst thing you can do is buy a tool and only log in when you’re bored. The Fix: Assign an owner. Put “AI Visibility Review” on the marketing calendar for the first Monday of every month. If it’s not on the calendar, it doesn’t exist.

FAQs + next steps: getting started this week with AI mention monitoring tools

FAQ: What are AI mention monitoring tools and how do they differ from traditional brand monitoring?

Traditional monitoring listens to what humans say on social media and the web. AI mention monitoring (GEO tools) actively tests what AI models (like ChatGPT) generate when asked about your brand. One is passive listening; the other is active testing of the platform’s “brain.”

FAQ: Which tools are best for small businesses vs enterprises?

For small businesses (SMBs), Otterly AI or Peec AI (Starter tiers) are great for affordably tracking a handful of prompts. For enterprises requiring API access, deep reporting, and multi-brand support, Semrush Enterprise AIO, Mint, or Profound are the standard choices.

FAQ: How can I improve brand visibility in AI-generated outputs?

Focus on Digital PR (getting cited on authoritative sites), creating high-quality data-rich content that LLMs can cite, and ensuring your technical “entity” data (schema, About page) is crystal clear. You have to feed the model good facts.

FAQ: Why is persona-based monitoring useful?

A CFO might ask, “Most cost-effective CRM,” while a CTO asks, “Most secure CRM.” Your brand might win one and lose the other. Monitoring by persona helps you identify exactly which buyer segment you are missing.

FAQ: Is sentiment analysis reliable in AI mention tools?

It is useful for spotting trends, but never trust it blindly. LLM variance means the tone can shift based on the day. Always verify “negative” alerts manually before escalating to management.

Recap: What I’d do next if I were starting from zero:

- Day 1: Write down your top 15 “money prompts” (Brand, Category, Comparison).

- Day 2: Pick one tool (even a free trial) and run a baseline report. Save it.

- Day 3: Identify the biggest “hallucination” or gap and task your content team to fix it.

If you have identified your gaps and need to rapidly build out the high-quality, structured content required to fix them, you might want to look at an automated blog generator to streamline that production. But start with the data first.