Best LLM analytics tools for AI brand visibility analysis (beginner’s guide)

Last week, I searched for my client’s core product category in ChatGPT, and we were the top recommendation. The next morning, using the exact same prompt but on a different device, we weren’t even mentioned. In traditional SEO, rankings fluctuate, but they rarely vanish overnight without a clear reason. In the world of Large Language Models (LLMs), however, this volatility is the new normal.

As a content strategist, I’ve seen firsthand how terrifying (and how full of opportunity) this shift is. If you are looking for the best LLM analytics tools, you likely aren’t just curious; you need to know whether the AI platforms your customers use (ChatGPT, Gemini, Claude, Perplexity, Copilot) are recommending you or your competitors. This isn’t about fixed rankings anymore; it’s about probabilistic visibility.

In this guide, I’ll walk you through the emerging landscape of Generative Engine Optimization (GEO) tools. We’ll cover the difference between marketing-focused simulators and engineering-grade observability platforms, how to choose the right stack for your team, and the practical workflows I use to actually improve brand presence.

Quick answer: what “LLM visibility analysis” tells me that SEO rankings don’t

If you are used to Google Search Console, LLM analytics can feel like trying to nail jelly to a wall. Here is what these tools measure that standard SEO rankings simply cannot:

- Share of Voice (SoV): In a Google search, position #3 is relatively stable. In an LLM, your brand might appear in 40% of regenerations of one prompt and in 0% of regenerations of a closely related one.

- Prompt-Level Context: You might dominate generic queries but disappear when a user adds modifiers like “budget-friendly” or “HIPAA-compliant.”

- Citation Sources: Which websites is the AI actually reading to form its opinion about you? (It’s often not your homepage).

- Sentiment & Descriptors: Is the model calling your enterprise software “legacy” or “robust”? Is it hallucinating features you don’t have?

- Model Variance: You might be a favorite in GPT-4 but invisible in Claude 3.5 Sonnet.

Why LLM visibility matters for brands (and what to measure first)

Think of LLMs less like a library index (Google) and more like a panel of always-changing sales representatives. Visibility analysis is essentially listening to what those sales reps say about you when you aren’t in the room. As consumer behavior shifts from keyword search to conversational discovery, being part of the AI’s consideration set is critical.

The challenge is that LLM responses vary based on the user’s phrasing, the model version, the time of day, and even the model’s “temperature” (randomness). Without analytics, you are flying blind, relying on anecdotal screenshots rather than data.

If I were setting up a dashboard today, I wouldn’t try to measure everything. I would focus on these core metrics first:

- Mention Rate: The percentage of times your brand appears in the answer for a specific set of prompts.

- Share of Voice (SoV): Your presence relative to competitors in the same answer.

- Citation Accuracy: Are the links provided actually yours, or are they broken/competitor links?

- Descriptor Sentiment: The specific adjectives the AI uses to describe your brand (e.g., “expensive,” “fast,” “reliable”).

- Consistency Score: How stable your presence is across multiple regenerations.
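To make these definitions concrete, here is a minimal Python sketch that computes mention rate, share of voice, and a consistency score from a batch of sampled answers. It assumes you have already collected the raw answer text (from a tool export or manual runs), that a brand counts as mentioned when its name appears as a whole word, and it uses one possible definition of consistency (agreement with the majority outcome per prompt). The brand names are hypothetical.

```python
import re
from statistics import mean


def mentions(text, brand):
    """True if `brand` appears as a whole word in `text` (case-insensitive)."""
    return re.search(rf"\b{re.escape(brand)}\b", text, re.IGNORECASE) is not None


def mention_rate(responses, brand):
    """Fraction of responses (0-1) that mention the brand at least once."""
    if not responses:
        return 0.0
    return sum(mentions(r, brand) for r in responses) / len(responses)


def share_of_voice(responses, brand, competitors):
    """Brand mentions divided by mentions of all tracked brands (each counted once per response)."""
    tracked = [brand, *competitors]
    total = sum(mentions(r, b) for r in responses for b in tracked)
    return sum(mentions(r, brand) for r in responses) / total if total else 0.0


def consistency_score(samples_by_prompt, brand):
    """Mean over prompts of the share of regenerations that agree with the majority
    outcome (mentioned vs. not mentioned): 1.0 is fully stable, 0.5 is a coin flip."""
    rates = [mention_rate(rs, brand) for rs in samples_by_prompt.values()]
    return mean([max(p, 1 - p) for p in rates]) if rates else 0.0


# Hypothetical regenerations for two prompts (brands are made up).
samples = {
    "best payroll tools for startups": [
        "AcmePay and RivalCo are popular choices.",
        "Consider RivalCo or OtherSoft.",
        "AcmePay is a strong option.",
        "RivalCo leads this category.",
    ],
    "budget-friendly payroll tools": [
        "OtherSoft is the cheapest.",
        "Try OtherSoft or RivalCo.",
    ],
}
all_responses = [r for rs in samples.values() for r in rs]
print(f"Mention rate:   {mention_rate(all_responses, 'AcmePay'):.2f}")
print(f"Share of voice: {share_of_voice(all_responses, 'AcmePay', ['RivalCo', 'OtherSoft']):.2f}")
print(f"Consistency:    {consistency_score(samples, 'AcmePay'):.2f}")
```

With this toy data, AcmePay appears in 2 of 6 answers (0.33), holds 2 of the 9 tracked brand mentions (0.22), and scores 0.75 on consistency: one prompt is a coin flip, the other never mentions it.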

The minimum viable KPI set for a beginner team

If I only had two hours this week to prove the value of this to my boss, I would track just these six numbers. Establishing a baseline (e.g., 30 days of data) matters more than immediate optimization.

- Unbranded Visibility %: How often do you appear for “Best [Product Category]”?

- Top 3 Competitor Co-mentions: Who are you being compared to most often?

- Negative Hallucination Rate: How often does the model say something objectively false about you?

- Primary Cited Domain: Is it your documentation, your pricing page, or a third-party review site like G2?

- Model Variance: Your visibility score on ChatGPT vs. Perplexity.

- Prompt Sensitivity: Which specific keywords cause your brand to drop out of the answer?
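Model variance and prompt sensitivity are both group-by questions over the same run log. The sketch below assumes each run was recorded as a small dict with hypothetical `model`, `modifier`, and `mentioned` fields, and flags any modifier whose mention rate falls a chosen threshold below the unmodified prompt. The 0.2 threshold is an arbitrary starting point, not a standard.

```python
from collections import defaultdict


def visibility_by(records, key):
    """Mention rate grouped by one field of each run record (e.g. 'model' or 'modifier')."""
    hits, totals = defaultdict(int), defaultdict(int)
    for rec in records:
        totals[rec[key]] += 1
        hits[rec[key]] += int(rec["mentioned"])
    return {k: hits[k] / totals[k] for k in totals}


def sensitive_modifiers(records, baseline="none", min_drop=0.2):
    """Modifiers whose mention rate is at least `min_drop` below the unmodified prompt's."""
    rates = visibility_by(records, "modifier")
    base = rates[baseline]
    return sorted(m for m, r in rates.items() if m != baseline and base - r >= min_drop)


# Hypothetical run log: one record per generated answer.
records = [
    {"model": "chatgpt", "modifier": "none", "mentioned": True},
    {"model": "chatgpt", "modifier": "none", "mentioned": True},
    {"model": "chatgpt", "modifier": "budget-friendly", "mentioned": False},
    {"model": "chatgpt", "modifier": "HIPAA-compliant", "mentioned": True},
    {"model": "perplexity", "modifier": "none", "mentioned": True},
    {"model": "perplexity", "modifier": "none", "mentioned": False},
    {"model": "perplexity", "modifier": "budget-friendly", "mentioned": False},
    {"model": "perplexity", "modifier": "HIPAA-compliant", "mentioned": True},
]
print(visibility_by(records, "model"))  # {'chatgpt': 0.75, 'perplexity': 0.5}
print(sensitive_modifiers(records))     # ['budget-friendly']
```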

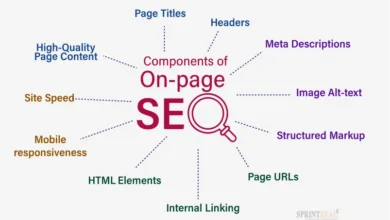

Types of LLM visibility tools: GEO platforms, observability, prompt trackers, and SEO add-ons

The market is currently flooded with tools claiming to do “AI analytics,” but they usually fall into four distinct buckets. Understanding which bucket you need will save you from buying an expensive engineering platform when you just wanted a marketing report.

GEO simulators (query simulation + mention statistics)

When I’d use this: I need statistically significant data on how often my brand is mentioned across thousands of potential user queries.

These tools work by simulating user behavior. They run thousands of variations of prompts through different LLMs to calculate a “Share of Voice.” Think of this like political polling versus asking your neighbor who they are voting for; simulators give you the aggregate data, not just anecdotes. Tools like Evertune AI and Ranketta fit here. Note that Evertune reportedly processes over one million AI-generated responses per brand per month, giving it a massive dataset to draw from.
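The polling analogy can be taken literally: a mention rate measured from a handful of runs carries a wide margin of error. As a sketch (a standard statistical formula, not a description of how Evertune or Ranketta compute their numbers), the Wilson score interval shows how the uncertainty around the same 40% rate shrinks as the number of runs grows.

```python
import math


def wilson_interval(mentions, runs, z=1.96):
    """Approximate 95% Wilson score interval for a mention rate (z=1.96)."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    p = mentions / runs
    denom = 1 + z**2 / runs
    center = (p + z**2 / (2 * runs)) / denom
    half_width = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return max(0.0, center - half_width), min(1.0, center + half_width)


for mentions, runs in [(4, 10), (40, 100), (400, 1000)]:
    low, high = wilson_interval(mentions, runs)
    print(f"{mentions}/{runs}: {low:.0%} to {high:.0%}")
```

The same 40% rate spans roughly 17% to 69% at 10 runs but only about 37% to 43% at 1,000 runs, which is the whole argument for sampling at scale.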

LLM observability platforms (for production apps, RAG, and agents)

When I’d use this: My company has built an AI feature (like a chatbot or content generator) and I need to know if it’s working, how much it costs, and what users are asking it.

This is the “black box flight recorder” category. It’s less about external brand visibility and more about internal app performance. If you are an engineer or product manager, you need these to trace errors, debug “hallucinations” in your own app, and monitor token costs. Leaders in this space include LangSmith, Langfuse, Helicone, Galileo, Arize AI Phoenix, and Maxim AI.

Prompt-level trackers + citation-aware tools (what prompts cause visibility)

When I’d use this: I want to reverse-engineer exactly which questions lead to my brand being recommended.

These tools are the forensic detectives. They help you trace the link between a prompt and the citations it produces. For beginners, I suggest starting with a small universe of 50–200 representative prompts. Otterly.AI, PromptWatch, and Brand Radar excel here by showing you citation maps: for example, that ChatGPT cites your “About Us” page while Perplexity cites your “Pricing” page. Otterly.AI offers tiers ranging from roughly $29 to $989 per month, depending on volume.
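If you want to sanity-check a vendor’s citation map, or build a rough one yourself, the core step is counting which pages each platform cites. This sketch assumes citations show up as plain URLs in exported answer text (real tools may read structured source lists instead); the domains and answer text are placeholders.

```python
import re
from collections import Counter
from urllib.parse import urlparse

# Rough URL matcher; stops at whitespace, closing brackets, and quotes.
URL_RE = re.compile(r"https?://[^\s)\]>\"']+")


def page_key(url):
    """Normalize a URL to 'domain/path': drop 'www.', trailing punctuation and slashes."""
    parsed = urlparse(url.rstrip(".,;:!?"))
    host = parsed.netloc.lower().removeprefix("www.")
    return host + parsed.path.rstrip("/")


def citation_map(answers_by_platform):
    """Count cited pages per platform from raw answer text."""
    return {
        platform: Counter(page_key(u) for a in answers for u in URL_RE.findall(a))
        for platform, answers in answers_by_platform.items()
    }


answers = {
    "chatgpt": [
        "Source: https://www.example.com/about, among others.",
        "See https://example.com/about/.",
    ],
    "perplexity": [
        "Pricing details (https://example.com/pricing).",
        "Reviewers agree: https://www.g2.com/products/example",
    ],
}
for platform, counts in citation_map(answers).items():
    print(platform, counts.most_common())
```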

SEO-integrated AI visibility (workflows for content teams)

When I’d use this: My team already lives in Semrush or Ahrefs and we want a low-friction way to start tracking AI alongside our keyword rankings.

If you don’t have the budget for a standalone tool, major SEO suites are adding these features. Semrush Enterprise AIO and Ahrefs Brand Radar allow you to perform gap analysis and competitive benchmarking within an interface you already know. The trade-off is often depth; they might not offer the granular trace data of a dedicated observability tool, but for content teams, the convenience is often worth it.

How I choose the best LLM analytics tools: a beginner-friendly evaluation checklist

Here is the part vendors often gloss over: integration reality. Before you sign a contract, you need to know who is going to own this tool. Is it Marketing (who wants pretty charts) or Engineering (who needs SDKs and OpenTelemetry support)?

I’ve seen teams buy complex observability platforms that Marketing couldn’t log into, or fluffy dashboard tools that Engineering refused to implement because they lacked security governance. Don’t make that mistake.

Pick your primary use case (marketing visibility vs product observability)

If I were the only person owning this, I’d ask these five questions to diagnose my need:

- Am I tracking my brand’s reputation on public platforms (ChatGPT, etc.)? -> You need a GEO Simulator or Prompt Tracker.

- Am I monitoring a chatbot we built for our customers? -> You need Observability.

- Do I need to trace specific errors in code? -> Observability.

- Do I need to report “Share of Voice” to the CMO? -> GEO Simulator / SEO Add-on.

- Do I care more about what is said (sentiment) or how fast it is said (latency)? -> Sentiment = Marketing; Latency = Engineering.

Open-source vs proprietary: what beginners should know about cost and flexibility

This is a classic “time vs. money” trade-off. Open-source tools like Langfuse (which open-sourced key modules under the MIT license in June 2025) offer immense flexibility and prevent vendor lock-in. You can host them yourself, which is great for data privacy.

However, self-hosting means you are now responsible for the infrastructure costs and maintenance. Managed cloud tools like LangSmith or Helicone’s hosted version get you up and running in minutes but cost more as you scale. My rule of thumb: start with a cloud-hosted tier to prove value, then migrate to self-hosted open-source if your volume (and bill) explodes.

Top software for LLM visibility analysis (with a comparison table)

Methodology Note: The AI landscape changes weekly. The features listed below reflect a point-in-time audit. I strongly recommend verifying current capabilities in a live demo.

GEO platforms: Evertune AI, Ranketta

Evertune AI and Ranketta are purpose-built for the marketer who needs numbers, not traces. Ranketta, for instance, specializes in product-level visibility and in identifying exactly where an LLM sourced its recommendation. The company recently raised €1 million in pre-seed funding, a sign of strong market interest.

Questions I’d ask in a demo:

- How do you handle “sampling bias”? (Do you run the prompt once or 100 times?)

- Can I upload my own custom list of 500 prompts?

- How do you attribute a “mention” if the AI uses a synonym for my brand?
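One common way to handle the synonym question, and a useful baseline to compare a vendor’s answer against, is an explicit alias list matched as whole words. A minimal sketch with hypothetical aliases; note that it still misses paraphrases such as “Acme’s payroll product,” which is exactly why the demo question matters.

```python
import re


def build_matcher(aliases):
    """Compile one case-insensitive, whole-word pattern from a list of brand aliases."""
    # Try longer aliases first so the most specific alias is the one reported.
    ordered = sorted(aliases, key=len, reverse=True)
    return re.compile(r"\b(?:" + "|".join(map(re.escape, ordered)) + r")\b", re.IGNORECASE)


# Hypothetical alias list for a made-up brand.
matcher = build_matcher(["AcmePay", "Acme Payroll", "acmepay.com"])


def matched_alias(text):
    """Return the alias found in `text`, or None if the brand is not mentioned."""
    match = matcher.search(text)
    return match.group(0) if match else None


print(matched_alias("I'd shortlist Acme Payroll and RivalCo."))  # Acme Payroll
print(matched_alias("Sign up at ACMEPAY.COM today."))            # ACMEPAY.COM
print(matched_alias("AcmePayments is a different company."))     # None
```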

Observability leaders: LangSmith, Langfuse, Helicone, Galileo, Arize AI Phoenix, Maxim AI

If you are building an AI app, this is your shortlist. LangSmith (launched in February 2024) is the native choice if you use LangChain. Helicone is fantastic for a “low-code” setup: it acts as a proxy, so you can start logging data largely by changing the base URL your API client points to. Galileo and Arize Phoenix excel at deep evaluation metrics for RAG (Retrieval-Augmented Generation) systems.

What a “trace” tells me (the mini-example):

- Input: User asks “How do I reset my password?”

- Retrieval: System searches your knowledge base and finds 3 documents.

- Answer: AI generates a response based on those docs.

- Trace: Shows you exactly which docs were found and how long it took.
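Under the hood, a trace is essentially a list of named, timed steps with their outputs. The toy sketch below is not LangSmith’s, Langfuse’s, or any other vendor’s API; the `Span`/`Trace` classes, the three-document knowledge base, and the keyword retriever are hypothetical stand-ins that show the shape of the data these platforms capture for you.

```python
import re
import time
from dataclasses import dataclass, field


@dataclass
class Span:
    name: str
    duration_ms: float
    output: object


@dataclass
class Trace:
    spans: list = field(default_factory=list)

    def record(self, name, fn, *args):
        """Run fn(*args), store its output and wall-clock duration as a span, return the output."""
        start = time.perf_counter()
        output = fn(*args)
        self.spans.append(Span(name, (time.perf_counter() - start) * 1000, output))
        return output


# Hypothetical knowledge base and a toy keyword retriever standing in for vector search.
KB = {
    "reset-password.md": "how to reset your password from the login page",
    "account-security.md": "password rules and two-factor login",
    "sso-setup.md": "configure single sign-on for your team",
}


def retrieve(question):
    words = set(re.findall(r"\w+", question.lower()))
    return [doc for doc, text in KB.items() if words & set(text.split())]


def generate(question, docs):
    # Stub for the LLM call: a real app would send the question plus doc text to a model.
    return f"Answer to {question!r} based on {', '.join(docs)}"


trace = Trace()
question = "How do I reset my password?"
docs = trace.record("retrieval", retrieve, question)
answer = trace.record("generation", generate, question, docs)
for span in trace.spans:
    print(f"{span.name}: {span.duration_ms:.3f} ms -> {span.output}")
```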

Prompt + citation visibility: Otterly.AI, PromptWatch, Brand Radar

Otterly.AI and Brand Radar are my go-to recommendations for teams that need to fix their content. They don’t just tell you “you aren’t ranking”; they show you “we aren’t ranking because the AI is citing a competitor’s PDF instead of our new HTML guide.” Expect to see prompt leaderboards and sentiment tracking over time.

SEO-integrated options: Semrush AIO, Ahrefs Brand Radar (and when they’re enough)

If your team is small and already paying for Semrush or Ahrefs, check their beta features first. They might be “good enough” for monthly reporting without adding another tool to your procurement list.

Comparison table: which tool to choose by goal

| Tool Name | Primary Category | Best For | Citation Visibility | Pricing Note |
|---|---|---|---|---|
| Evertune AI / Ranketta | GEO Simulator | External brand SoV and market research | High | Custom / SaaS subscription |
| LangSmith / Langfuse | Observability | Engineering teams building AI apps | Trace-level (internal) | Usage-based / open source available |
| Otterly.AI | Prompt Tracker | Marketing/SEO tracking of specific queries | Very High | Tiered ($29–$989/mo) |
| Helicone | Observability (Proxy) | Quickest setup for developers | Trace-level | Freemium / usage-based |
| Semrush AIO | SEO Integrated | Content teams wanting all-in-one data | Medium | Part of Enterprise suite |

Note: No single tool wins for every team. I’d pick based on who owns the budget (Marketing vs. Engineering) first.

A practical workflow: set up LLM visibility tracking, then improve what the models say

Buying a tool doesn’t fix your visibility; it just diagnoses the illness. Here is the exact workflow I use to move the needle. This involves using analytics to find the gaps, and then using a content intelligence platform like Kalema to operationalize the fix.

Step 1–3: define prompts, competitors, and baseline reporting

Don’t just track “[My Brand] review.” That’s lazy and won’t help you. Build a prompt universe that reflects real intent.

Use this template: {Role} + {User Intent} + {Constraints} + {Industry}

- Example: “As a CTO (Role), what are the best (Intent) compliant (Constraint) payroll tools for startups (Industry)?”
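Because the template is a cross-product of four lists, you can generate a first-draft prompt universe mechanically and then prune combinations no real buyer would type. A minimal sketch with hypothetical option lists (two values each, so 16 prompts; widen the lists to reach the 50–200 range):

```python
from itertools import product

# Hypothetical values for each template slot.
ROLES = ["As a CTO", "As an HR manager"]
INTENTS = ["what are the best", "what are the cheapest"]
CONSTRAINTS = ["compliant", "easy-to-set-up"]
INDUSTRIES = ["payroll tools for startups", "payroll tools for nonprofits"]


def build_prompt_universe(roles, intents, constraints, industries):
    """Fill {Role} + {User Intent} + {Constraints} + {Industry} for every combination."""
    return [
        f"{role}, {intent} {constraint} {industry}?"
        for role, intent, constraint, industry in product(roles, intents, constraints, industries)
    ]


prompts = build_prompt_universe(ROLES, INTENTS, CONSTRAINTS, INDUSTRIES)
print(len(prompts))  # 16
print(prompts[0])    # As a CTO, what are the best compliant payroll tools for startups?
```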

Baseline this against your top two competitors for 30 days. Don’t panic if the numbers jump around in week one; look for the trend line.
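“Look for the trend line” can be made concrete with two small helpers: a trailing 7-day average to damp day-to-day noise, and a least-squares slope to show whether visibility is drifting up or down. This is a sketch assuming you log one mention rate per day; the window size is a judgment call, not a standard, and the daily figures are hypothetical.

```python
def rolling_mean(values, window=7):
    """Trailing mean; the first window-1 points average over the days available so far."""
    out, running = [], 0.0
    for i, value in enumerate(values):
        running += value
        if i >= window:
            running -= values[i - window]
        out.append(running / min(i + 1, window))
    return out


def trend_slope(values):
    """Ordinary least-squares slope of values against their index (change per day)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two points")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    num = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(values))
    den = sum((x - x_mean) ** 2 for x in range(n))
    return num / den


# Hypothetical daily mention rates for the first two weeks of a baseline.
daily = [0.30, 0.10, 0.45, 0.20, 0.35, 0.25, 0.40, 0.30, 0.50, 0.35, 0.45, 0.40, 0.55, 0.45]
smoothed = rolling_mean(daily)
print([round(v, 2) for v in smoothed])
print(f"trend: {trend_slope(smoothed):+.3f} per day")
```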

Step 4–6: instrument visibility + observability (when you have an LLM feature)

If you are monitoring your own app, you need to instrument your code. Start lightweight. Use a proxy-based tool like Helicone if you can—it requires minimal code changes. Ensure you have data governance in place: redact PII (Personally Identifiable Information) before it hits your analytics dashboard. You do not want user emails stored in your logs.
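If your tool doesn’t mask data for you, a redaction pass before anything is logged is a reasonable first line of defense. The regexes below are rough heuristics assumed for illustration (they catch common email and phone formats, not names, addresses, or every international number), so treat this as a sketch rather than a compliance control.

```python
import re

EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# Optional "+", then at least nine digits/phone punctuation, starting and ending with a digit.
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_pii(text):
    """Replace email addresses and phone-like numbers before the text is logged."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


user_message = "I'm jane.doe@example.com, call me at +1 (555) 123-4567 about order 98765."
print(redact_pii(user_message))
# I'm [EMAIL], call me at [PHONE] about order 98765.
```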

Step 7–9: turn insights into improvements (content, citations, prompts)

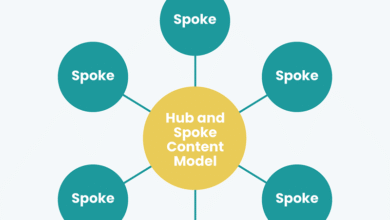

This is where the rubber meets the road. If analytics show you are missing from the “budget-friendly” conversation, you need to create content that explicitly connects your brand to that value proposition.

This is where I integrate Kalema. I don’t use it just to “write text”; I use it as a content intelligence engine. Once I know which topics I’m missing, I use Kalema’s AI article generator to draft high-quality, structured content variants that target those specific entity relationships. I then treat Kalema as my “production QA,” ensuring the content has the right schema, structure, and factual density to earn citations. It’s not about churning out spam; it’s about using an SEO content generator to update your knowledge base at the speed AI models evolve.

My mini-playbook:

- If citations are wrong: Update your “About” page and add Organization Schema markup.

- If sentiment is off: Publish case studies that explicitly use the counter-descriptor (e.g., “reliable” to counter “buggy”).

- If you never appear: You likely lack “topical authority.” Build a cluster of interlinked articles around the core entity.
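For the first fix in the playbook, Organization markup is commonly embedded as a JSON-LD script tag. The helper below is a minimal sketch that emits one using schema.org’s `Organization` type and its `name`, `url`, `logo`, `description`, and `sameAs` properties; all values shown are placeholders for your own.

```python
import json


def organization_jsonld(name, url, logo, description, same_as):
    """Return a <script> tag containing schema.org Organization markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "description": description,
        "sameAs": same_as,  # official profiles that confirm this is the same entity
    }
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


snippet = organization_jsonld(
    name="AcmePay",
    url="https://www.example.com",
    logo="https://www.example.com/logo.png",
    description="Payroll software for startups and small businesses.",
    same_as=["https://www.linkedin.com/company/example"],
)
print(snippet)
```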

Common mistakes I see in LLM visibility analysis (and how to fix them)

I’ve learned some of these the hard way, so hopefully, you don’t have to.

Mistakes and practical fixes

- Sampling Bias: Mistake: Running a prompt once and assuming that’s the truth. Fix: Use tools that run the prompt 50+ times to get a probability score.

- Vanity Metrics: Mistake: Tracking only “positive sentiment” while ignoring that you have 0% visibility. Fix: Prioritize Share of Voice (SoV) over sentiment initially.

- Ignoring Citations: Mistake: Obsessing over the answer text but ignoring the source link. Fix: Track which domain is driving the answer. It might be a partner site you can influence.

- Snapshot Syndrome: Mistake: Checking visibility once a quarter. Fix: AI answers can shift from week to week, so set up automated weekly reporting.

- No Governance: Mistake: Leaking customer data into your analytics tool. Fix: Configure PII masking from day one.

- Misaligned Owners: Mistake: Marketing buys a tool that Engineering has to install. Fix: Get the dev lead on the demo call.
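Weekly reporting raises its own question: is a drop a real change or sampling noise? A standard two-proportion z-test is one simple way to flag it. The sketch assumes each week’s runs are independent samples of the same prompt set with reasonably large counts, and the numbers in the example are hypothetical.

```python
import math


def two_proportion_z(hits_a, n_a, hits_b, n_b):
    """z statistic and two-sided p-value for the change in mention rate from sample A to B."""
    p_a, p_b = hits_a / n_a, hits_b / n_b
    pooled = (hits_a + hits_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:  # both weeks all-mentioned or all-missing: no detectable change
        return 0.0, 1.0
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # equals 2 * (1 - Phi(|z|))
    return z, p_value


# Hypothetical: last week 30 mentions in 100 runs, this week 18 in 100.
z, p = two_proportion_z(30, 100, 18, 100)
print(f"z = {z:.2f}, p = {p:.3f}")
```

Here the drop from 30% to 18% gives z of about -1.99 and p of about 0.047, so it is unlikely to be noise at the usual 5% level; a drop from 30% to 27% on the same sample sizes would not come close.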

What I’d do this week: Pick 10 high-value prompts and manually check them across ChatGPT, Claude, and Gemini. Record the results in a spreadsheet. That is your MVP (Minimum Viable Product) dashboard.
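If you want that spreadsheet to stay consistent from week to week, generate the rows rather than typing them. A small sketch, where the column names and the two sample prompts are hypothetical suggestions (swap in your own 10 prompts):

```python
import csv
import io
from itertools import product

PLATFORMS = ["ChatGPT", "Claude", "Gemini"]
# Hypothetical columns: fill these in by hand after each manual check.
COLUMNS = ["prompt", "platform", "date_checked", "mentioned", "position", "cited_domain", "descriptors"]


def mvp_sheet(prompts, platforms=PLATFORMS):
    """Return CSV text with one empty result row per (prompt, platform) pair."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(COLUMNS)
    for prompt, platform in product(prompts, platforms):
        writer.writerow([prompt, platform] + [""] * (len(COLUMNS) - 2))
    return buffer.getvalue()


prompts = [
    "What are the best payroll tools for startups?",
    "What are the best budget-friendly payroll tools?",
]
sheet = mvp_sheet(prompts)
print(sheet)  # save with open("visibility_mvp.csv", "w", newline="") and import into any spreadsheet
```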

FAQs + next steps: what to do this week to improve AI brand visibility

Why is LLM visibility important for brands?

Because user behavior is migrating from search engines to answer engines. If an LLM doesn’t know you exist, or worse, thinks you are a “legacy” solution, you lose consideration before the customer even visits your website. It’s about protecting your brand equity in the generative search era.

What types of tools exist for LLM visibility analysis?

There are four main types: GEO Simulators (for market research), Observability Tools (for app debugging), Prompt Trackers (for SEOs needing citation maps), and SEO-Integrated Solutions (for general reporting). Choose your starting point based on whether you are analyzing external platforms (ChatGPT) or your own internal AI tools.

How do LLM observability tools differ from traditional SEO tools?

SEO tools track static rankings on a results page. LLM observability tools track dynamic traces: the chain of calls behind each AI response, the cost per token, and the latency of the response. GEO and prompt-tracking tools, meanwhile, measure the probabilistic frequency of your brand appearing across varying prompts.
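The cost side is simple arithmetic that observability tools automate per request. As a worked sketch, with placeholder per-million-token prices and traffic (not any provider’s actual rates; check your provider’s price sheet):

```python
def request_cost(input_tokens, output_tokens, usd_per_m_input, usd_per_m_output):
    """USD cost of one LLM call, given per-million-token prices for input and output."""
    return (input_tokens * usd_per_m_input + output_tokens * usd_per_m_output) / 1_000_000


# Placeholder prices and traffic, for illustration only.
per_call = request_cost(input_tokens=1_200, output_tokens=300, usd_per_m_input=2.00, usd_per_m_output=8.00)
monthly = per_call * 50_000  # hypothetical 50,000 calls per month
print(f"${per_call:.4f} per call, ${monthly:,.2f} per month")
```

With these placeholder numbers, each call costs $0.0048 and 50,000 calls cost about $240 a month; the point is that output tokens are often priced higher, so long answers dominate the bill.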

Can these tools integrate with technical workflows?

Yes. Most observability platforms support OpenTelemetry (OTel) and offer SDKs for Python and JavaScript. They are designed to fit into modern DevOps pipelines. If you are non-technical, proxy-based integration is the lowest-effort path.

How can brands act on LLM visibility insights?

Don’t just watch the numbers. In the next 2 weeks:

- Build your “Prompt Universe” (50+ queries).

- Run a baseline audit to see where you stand.

- Pick a tool category (Marketing vs. Engineering).

- Update your top 5 product pages with clear entity definitions and schema.

- Measure the difference in 30 days.

It is an iterative process, not a one-time fix. But the sooner you start measuring, the sooner you can start managing.