Big Data SEO Tools: The Tools Built for Large-Scale Enterprise Environments

Editorial Note: This is not a hype piece or a generic vendor listicle. This is a practical decision and implementation guide for enterprise leaders who need to manage SEO at scale. Examples provided are illustrative, and vendor features change frequently—always verify current specifications.

When I’m handed a site with 3 million URLs, two legacy CMS platforms, and a faceted navigation system that accidentally generates 50,000 new parameter URLs overnight, I don’t open a basic rank tracker. I open a log file analyzer and an enterprise crawler.

That is the reality of enterprise SEO. It isn’t about tweaking a meta tag; it is about managing an ecosystem.

If you are reading this, you are likely dealing with the specific pain of scale: crawl budgets that run out before half your site is indexed, reporting that varies wildly depending on which tool you check, and approval processes that take months.

In this guide, I will walk you through what an enterprise-ready stack actually looks like, how to evaluate vendors without getting distracted by shiny demos, and how to modernize your workflow for the era of GEO (Generative Engine Optimization) and AEO (Answer Engine Optimization).

What big data SEO tools are (and why enterprise teams need them)

“Big data SEO” isn’t just a buzzword; it refers to SEO decision-making powered by massive, often unstructured datasets rather than simple keyword lists. We are talking about merging server log files, real-time crawl data, analytics, and conversion data to see the whole picture.

For a small business, a missing title tag is a task. For an enterprise, a template error causing missing title tags on 200,000 product pages is a revenue disaster.

What “enterprise-ready” actually means:

- RBAC (Role-Based Access Control): You can control who edits, who views, and who approves.

- SSO (Single Sign-On): Your IT team won’t block the purchase because it doesn’t integrate with your identity provider.

- API Access: You can pull data out of the tool and into your own data warehouse.

- Audit Logs: When something breaks, you know who changed the setting.

- Segmentation: You can slice data by market, language, or page template.

You know you have outgrown basic tools when you start relying on “sampling” that hides your actual problems, or when you spend more time manually combining spreadsheets than actually fixing the site. With the global SEO software market projected to reach US$154.6 billion by 2030, the shift toward these robust platforms is undeniable.

Furthermore, the game has changed. Roughly 60% of Google searches now end without a click (zero-click results). We aren’t just fighting for rankings anymore; we are fighting for visibility in AI-generated answers, which requires a completely different level of data granularity.

Big data SEO vs. traditional SEO: what changes at scale

The fundamentals of SEO—search intent, technical accessibility, content quality—remain the same. But the mechanics are different.

What stays the same: You still need to solve user problems better than anyone else.

What changes: You cannot manually audit every page. You need automated governance.

I remember moving from an agency role handling small sites to an in-house enterprise role. I used to manually check pages for broken links. At enterprise scale, if I did that, I’d never finish. Instead, I had to build automated alerts based on crawl trends. I stopped being a mechanic and started being an air traffic controller.

Key datasets these platforms pull together

Here is what I look for first when evaluating the data capabilities of a platform:

- Server Logs: Tells you what Googlebot actually crawled, not just what you think it saw.

- Crawl Data: Simulates a search bot to find technical flaws at scale.

- Google Search Console (GSC) API: Provides actual query and click data. GSC retains only 16 months of history, so export it to your own storage if you need longer trends.

- Web Analytics: Connects traffic to revenue or conversions.

- Internal Link Graph: Shows how authority flows through your millions of pages.
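To make the "server logs" item concrete, here is a minimal sketch that counts Googlebot hits per URL from access-log lines. It assumes the Apache/Nginx "combined" log format and matches on the user-agent string only. Because user-agents are easy to spoof, a production pipeline should also verify Googlebot by reverse DNS. The sample lines are invented.

```python
import re
from collections import Counter

# Assumes the Apache/Nginx "combined" log format:
# IP - - [time] "METHOD PATH PROTOCOL" STATUS BYTES "REFERER" "USER-AGENT"
LOG_PATTERN = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)

def googlebot_hits(lines):
    """Count requests per path whose user-agent claims to be Googlebot.

    This trusts the user-agent string; verify IPs by reverse DNS in production.
    """
    hits = Counter()
    for line in lines:
        match = LOG_PATTERN.match(line)
        if match and "Googlebot" in match.group("agent"):
            hits[match.group("path")] += 1
    return hits

# Invented sample lines: two Googlebot requests and one regular browser request.
sample = [
    '66.249.66.1 - - [10/Mar/2025:10:00:00 +0000] "GET /shoes?color=red HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/Mar/2025:10:00:05 +0000] "GET /shoes HTTP/1.1" 200 734 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.9 - - [10/Mar/2025:10:01:00 +0000] "GET /shoes HTTP/1.1" 200 734 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
print(googlebot_hits(sample).most_common())
```

The resulting per-URL counts are the "what Googlebot actually crawled" layer that the other datasets get joined against.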

A practical stack of big data SEO tools for enterprise teams

Beginners often think they need one “magic tool” that does everything. In reality, most successful enterprise teams operate a stack. Here is how I categorize the landscape.

Table 1: The Enterprise SEO Tool Taxonomy

| Category | Primary Job | Typical Outputs | Primary User |

|---|---|---|---|

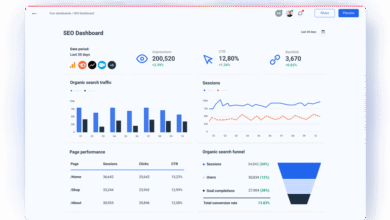

| Enterprise Platform | Unified reporting & workflow | Dashboards, share of voice, task lists | SEO Managers, VPs |

| Technical Crawler | Deep technical diagnostics | Log analysis, render audits, orphan pages | Technical SEOs, Devs |

| Content Intelligence | Relevance & intent scaling | Content briefs, topic clusters, optimization scores | Content Leads, Editors |

| BI / Warehouse | Custom data blending | Cross-channel attribution, revenue impact | Data Analysts |

Enterprise SEO platforms (all-in-one)

Tools like BrightEdge, Conductor, and seoClarity fall here. They excel at “executive visibility”—showing leadership how SEO is performing against competitors across thousands of keywords. Many now include AI modules for automated insights. Rule of thumb: I choose these when I have complex reporting needs involving many stakeholders.

Crawling & technical auditing tools

This is where tools like Botify, Lumar (formerly DeepCrawl), and Screaming Frog (in CLI mode) shine. They handle JavaScript rendering, which is critical for modern frameworks. At scale, technical issues compound. If a filter combination creates infinite URLs, you can burn your entire crawl budget in a week. These tools are your early warning system.
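One way to catch the faceted-navigation problem early is to count how many distinct query-string combinations each path accumulates in a crawl or log export. The sketch below does that with the standard library. The threshold of 50 variants is an arbitrary illustrative value, not a setting from any of the tools named above.

```python
from collections import defaultdict
from urllib.parse import urlsplit, parse_qsl

def parameter_explosion(urls, max_variants=50):
    """Return {path: variant_count} for paths with more than max_variants distinct parameter combinations."""
    variants = defaultdict(set)
    for url in urls:
        parts = urlsplit(url)
        # Sort parameters so ?a=1&b=2 and ?b=2&a=1 count as the same variant.
        params = tuple(sorted(parse_qsl(parts.query, keep_blank_values=True)))
        variants[parts.path].add(params)
    return {path: len(combos) for path, combos in variants.items() if len(combos) > max_variants}

# Hypothetical facet URLs: 10 colors x 10 sizes on one category path, plus one static page.
urls = [f"https://example.com/shoes?color=c{c}&size={s}" for c in range(10) for s in range(10)]
urls.append("https://example.com/about")
print(parameter_explosion(urls))
```

Running this daily and alerting on newly flagged paths turns the crawler into the early warning system described above.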

Content intelligence & optimization tools

Surfer SEO, Clearscope, and MarketMuse operate here. They help you operationalize quality. Instead of “write a good article,” you give a writer a brief with semantic requirements, required entities, and intent matching. When you have 500 pages to update per quarter, you need this standardized scoring.

Data warehousing, BI, and connectors

This is the unglamorous backbone. If your SEO data lives in a silo, it will be ignored. We often pipe GSC and crawl data into BigQuery or Snowflake, then visualize it in Looker Studio or Tableau alongside paid media data. If your SEO dashboard cannot be reproduced from raw data, it’s not a dashboard—it’s just a screenshot.
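To illustrate what "reproducible from raw data" means in practice, here is a sketch that stores raw daily rows and derives the dashboard numbers with a single query anyone can rerun. It uses Python's built-in `sqlite3` purely as a stand-in for BigQuery or Snowflake. The table name, columns, and values are hypothetical.

```python
import sqlite3

# In-memory SQLite as a stand-in for a warehouse table of raw, GSC-style daily rows.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE gsc_daily (day TEXT, page TEXT, template TEXT, clicks INTEGER, impressions INTEGER)"
)
rows = [
    ("2025-03-01", "/p/1", "PDP", 40, 1000),
    ("2025-03-01", "/c/shoes", "PLP", 25, 900),
    ("2025-03-02", "/p/1", "PDP", 35, 1100),
    ("2025-03-02", "/blog/guide", "Blog", 10, 500),
]
conn.executemany("INSERT INTO gsc_daily VALUES (?, ?, ?, ?, ?)", rows)

# The "dashboard" is just this query: rerunning it on the raw rows reproduces every number.
DASHBOARD_SQL = """
    SELECT template,
           SUM(clicks) AS clicks,
           ROUND(1.0 * SUM(clicks) / SUM(impressions), 4) AS ctr
    FROM gsc_daily
    GROUP BY template
    ORDER BY clicks DESC
"""
for template, clicks, ctr in conn.execute(DASHBOARD_SQL):
    print(template, clicks, ctr)
```

Keeping the query in version control alongside the raw table is what separates a dashboard from a screenshot.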

How I evaluate big data SEO tools (a beginner-friendly enterprise checklist)

I would rather have fewer features that my team actually uses than a Swiss Army knife that sits in the drawer. When evaluating vendors, I use a weighted scoring matrix to remove emotion from the purchase.

Table 2: Vendor Scoring Matrix

| Criteria | Why it matters | How to test | Weight (1-5) |
|---|---|---|---|
| Scalability | Can it handle 5M+ URLs? | Ask for a full crawl of a large segment during trial. | 5 |
| Data Freshness | Old data leads to bad fixes. | Check lag time between change and report. | 4 |
| Integration | Must fit existing workflow. | Test the API or Jira connector. | 4 |
| Support | Enterprise tools are complex. | Ticket response time during pilot. | 3 |
| Cost | TCO matters. | Include implementation & training costs. | 3 |
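The matrix reduces to a weighted average. The sketch below uses the weights from Table 2. The vendor names and their 1 to 5 ratings are hypothetical placeholders you would replace with your own trial results; for cost, it assumes a higher rating means better value (lower TCO).

```python
# Weights taken from Table 2 (1-5 scale).
WEIGHTS = {"scalability": 5, "data_freshness": 4, "integration": 4, "support": 3, "cost": 3}

def weighted_score(ratings, weights=WEIGHTS):
    """Weighted average of 1-5 ratings; a missing criterion raises KeyError instead of silently scoring 0."""
    total_weight = sum(weights.values())
    return sum(weights[criterion] * ratings[criterion] for criterion in weights) / total_weight

# Hypothetical pilot ratings; for cost, higher = better value.
vendors = {
    "Vendor A": {"scalability": 5, "data_freshness": 3, "integration": 4, "support": 4, "cost": 2},
    "Vendor B": {"scalability": 3, "data_freshness": 5, "integration": 5, "support": 3, "cost": 4},
}
for name, ratings in sorted(vendors.items(), key=lambda kv: weighted_score(kv[1]), reverse=True):
    print(f"{name}: {weighted_score(ratings):.2f}")
```

Note how the weighting changes the outcome: Vendor A wins the heaviest criterion (scalability), yet Vendor B ranks higher overall on freshness, integration, and cost.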

Define the job to be done (before demos)

Don’t just ask “what can you do?” Define your jobs first. For example:

- Job 1: Reduce non-indexable crawl hits by 20% to save server resources.

- Job 2: Identify decaying content across 1,000 blog posts automatically.

- Job 3: Standardize reporting for 15 regional marketing teams.
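Jobs like Job 1 become testable once the target is expressed as a number. Here is a minimal sketch, assuming you already have per-URL Googlebot hit counts from logs and a set of URLs your crawler flagged as non-indexable. All figures are invented.

```python
def non_indexable_hits(hits, non_indexable):
    """Total Googlebot hits that landed on URLs flagged as non-indexable."""
    return sum(count for url, count in hits.items() if url in non_indexable)

def met_reduction_target(baseline, current, target=0.20):
    """True if non-indexable hits fell by at least `target` (20% for Job 1) versus the baseline."""
    return baseline > 0 and (baseline - current) / baseline >= target

# Hypothetical inputs: Googlebot hits per URL before and after a fix.
non_indexable = {"/shoes?color=red", "/search?q=boots"}
before = {"/shoes": 120, "/shoes?color=red": 300, "/search?q=boots": 200}
after = {"/shoes": 150, "/shoes?color=red": 180, "/search?q=boots": 190}

baseline = non_indexable_hits(before, non_indexable)  # 500
current = non_indexable_hits(after, non_indexable)    # 370
print(baseline, current, met_reduction_target(baseline, current))
```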

Non-negotiables for enterprise environments

The best tool is useless if it cannot pass a security review. Involve your IT/Security team early. If a vendor isn’t SOC 2 compliant or doesn’t support SSO, the conversation might end there. Permissions are also critical: you do not want an intern accidentally noindexing your homepage.

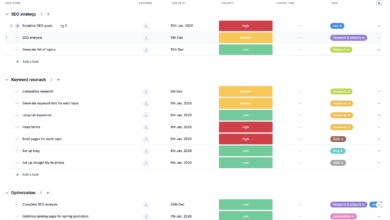

Implementation workflow: how I roll out big data SEO tools without chaos

Buying the tool is the easy part. Rolling it out is where most teams fail. Here is the workflow I use to ensure adoption.

Phase 1: Connect data sources and define segments

Start simple. Do not try to segment everything at once. Create these three buckets first:

- By Template: Product Pages (PDP), Category Pages (PLP), Blog Posts.

- By Market: US, UK, DE (or whatever your core regions are).

- By Performance: Brand vs. Non-Brand traffic.
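Segments like these are usually defined as ordered pattern rules. The sketch below assumes a hypothetical URL scheme (`/p/` for PDPs, `/c/` for PLPs, `/blog/` for posts, and a market prefix such as `/uk/`) and a hypothetical brand term, "acme". Adapt the patterns to your own site.

```python
import re

# Hypothetical URL scheme; the first matching rule wins.
TEMPLATE_RULES = [
    ("PDP", re.compile(r"/p/")),
    ("PLP", re.compile(r"/c/")),
    ("Blog", re.compile(r"/blog/")),
]
MARKET_PATTERN = re.compile(r"^/(us|uk|de)/")
BRAND_PATTERN = re.compile(r"\bacme\b", re.IGNORECASE)  # hypothetical brand term

def segment_url(path):
    """Return (template, market) for a URL path."""
    template = next((name for name, rule in TEMPLATE_RULES if rule.search(path)), "Other")
    market_match = MARKET_PATTERN.match(path)
    market = market_match.group(1).upper() if market_match else "Unknown"
    return template, market

def is_brand_query(query):
    """Classify a search query as brand (True) or non-brand (False)."""
    return bool(BRAND_PATTERN.search(query))

print(segment_url("/uk/p/running-shoe-123"))
print(segment_url("/de/blog/laufschuhe-test"))
print(is_brand_query("Acme running shoes"), is_brand_query("best running shoes"))
```

Keeping the rules in one ordered list makes it obvious which rule wins when a URL could match two templates.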

Phase 2: Build a baseline (crawl, indexation, and content inventory)

Run your first full crawl combined with log data. Create a one-page “State of the Union” for leadership. It should plainly state: “We have 500,000 pages. Google only visits 100,000 of them. Here is why.” This baseline is what you will measure your ROI against later. (I’ve made the mistake of skipping this—don’t do it. You’ll never be able to prove how much you improved things.)
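The headline numbers in that statement come from comparing two sets: the URLs you want indexed (from the crawl) and the URLs Googlebot actually requested (from the logs). Here is a sketch with tiny hypothetical inputs.

```python
def crawl_coverage(indexable_urls, googlebot_urls):
    """Summarize how much of the indexable inventory Googlebot visited, plus missed and off-inventory URLs."""
    indexable = set(indexable_urls)
    visited = set(googlebot_urls)
    return {
        "indexable_pages": len(indexable),
        "visited_by_googlebot": len(indexable & visited),
        "coverage": len(indexable & visited) / len(indexable) if indexable else 0.0,
        "never_visited": sorted(indexable - visited),
        "crawled_but_not_in_inventory": sorted(visited - indexable),
    }

# Hypothetical inputs: crawler inventory vs. paths seen in Googlebot log hits.
inventory = ["/p/1", "/p/2", "/p/3", "/c/shoes", "/blog/guide"]
log_paths = ["/p/1", "/c/shoes", "/shoes?color=red"]
report = crawl_coverage(inventory, log_paths)
print(f"We have {report['indexable_pages']} pages. Googlebot visited {report['visited_by_googlebot']} "
      f"({report['coverage']:.0%}).")
```

The `never_visited` and `crawled_but_not_in_inventory` lists are the "here is why" part of the summary: pages being missed and crawl budget being spent elsewhere.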

Phase 3: Prioritize with a simple impact model

You will find thousands of errors. Do not fix them all. Use an ICE score (Impact, Confidence, Ease) or a simple logic model:

- Critical: Blocking revenue (e.g., checkout pages de-indexed).

- High: High traffic potential (e.g., product schema broken).

- Low: Cosmetic issues on low-value pages.
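One common ICE formulation multiplies Impact, Confidence, and Ease (ease being the inverse of effort), with each rated 1 to 10. Some teams average the three instead. The sketch below uses the product. The backlog items echo the examples above, but the ratings are illustrative.

```python
from dataclasses import dataclass

@dataclass
class Issue:
    name: str
    impact: int      # 1-10: expected effect on revenue or traffic
    confidence: int  # 1-10: how sure you are that the fix will work
    ease: int        # 1-10: higher = less effort to ship

    @property
    def ice(self) -> int:
        return self.impact * self.confidence * self.ease

# Hypothetical backlog with illustrative ratings.
backlog = [
    Issue("Checkout pages de-indexed", impact=10, confidence=9, ease=7),
    Issue("Product schema broken on PDP template", impact=8, confidence=7, ease=6),
    Issue("Missing alt text on legacy blog images", impact=2, confidence=6, ease=8),
]
for issue in sorted(backlog, key=lambda i: i.ice, reverse=True):
    print(f"{issue.ice:4d}  {issue.name}")
```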

Phase 4: Execute at scale (tickets, templates, and QA)

This is where the rubber meets the road. You aren’t fixing pages; you are fixing templates. Create tickets with clear acceptance criteria (e.g., “Canonical tag must self-reference on facet selection”).
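Acceptance criteria like this can be checked automatically in QA. Here is a minimal sketch that uses Python's standard-library `html.parser` to extract the canonical link and compare it with the requested URL. It assumes the HTML has already been fetched and rendered (fetching, redirects, and JavaScript rendering are out of scope). The normalization rules (lowercased host, trailing slash ignored) are simplifying assumptions.

```python
from html.parser import HTMLParser
from urllib.parse import urlsplit, urljoin

class CanonicalParser(HTMLParser):
    """Collects href values from <link rel="canonical"> tags."""
    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        rel = (attributes.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel and attributes.get("href"):
            self.canonicals.append(attributes["href"])

def _normalize(url):
    # Simplifying assumption: compare scheme, lowercased host, path without trailing slash, and query.
    parts = urlsplit(url)
    return (parts.scheme, parts.netloc.lower(), parts.path.rstrip("/") or "/", parts.query)

def canonical_self_references(page_url, html):
    """True only if the page declares exactly one canonical and it resolves to page_url."""
    parser = CanonicalParser()
    parser.feed(html)
    if len(parser.canonicals) != 1:
        return False
    return _normalize(urljoin(page_url, parser.canonicals[0])) == _normalize(page_url)

url = "https://example.com/shoes?color=red"
good = '<head><link rel="canonical" href="https://example.com/shoes?color=red"></head>'
bad = '<head><link rel="canonical" href="/shoes"></head>'
print(canonical_self_references(url, good), canonical_self_references(url, bad))
```

Run against a sample of URLs per template, a check like this can gate a release instead of relying on someone remembering to spot-check.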

For content updates, this is where automation aids the human editor. If you need to refresh 5,000 product descriptions or location pages, manual writing is too slow. You might use a bulk article generator to create high-quality initial drafts or distinct location copy based on your data, which your editorial team then reviews and approves. This hybrid approach, AI for scale and humans for judgment, is the only way to move fast enough in an enterprise.

GEO/AEO and AI visibility: the new layer enterprise teams can’t ignore

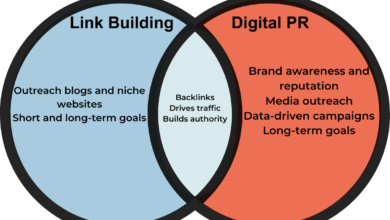

I treat GEO (Generative Engine Optimization) and AEO (Answer Engine Optimization) as an extension of my strategy, not a replacement. But the metrics are shifting. We are moving from “ranking position” to “citation frequency.”

AI search engines like ChatGPT Search or Google’s AI Overviews don’t just list links; they synthesize answers. To be included, your content needs to be the most authoritative, structured source.

This is where AI SEO tool capabilities matter most, specifically content intelligence that helps you structure articles and data so machines can easily parse and cite them. It’s about becoming the “fact” that the AI chooses to reference.

Traditional SEO vs. GEO/AEO (plain-English explanation)

| Feature | Traditional SEO | GEO / AEO |
|---|---|---|
| Goal | Rank #1 on a list | Be cited in the answer |
| Optimization Target | Keywords & Backlinks | Entities, Context & Structure |
| Success Metric | Clicks & CTR | Share of Citations & Sentiment |

Which enterprise tools support AI-assisted workflows and AI visibility tracking

We are seeing a new breed of tools emerge. Semrush One has introduced an AI Optimization tier. Evertune AI is focusing specifically on brand presence in LLMs. These tools track how often your brand is mentioned in AI-generated responses for specific prompts. I’d shortlist these as “leading indicators”—they are directional signals, not absolute truths yet.
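Under the hood, citation tracking comes down to ratios over a fixed prompt set. This sketch assumes you have already collected, for each tracked prompt, the domains cited in an AI-generated answer. How you collect them depends on the tool, and the prompts and domains here are hypothetical.

```python
from collections import Counter

def citation_share(answers, domain):
    """Fraction of prompts whose AI answer cited `domain` at least once."""
    if not answers:
        return 0.0
    cited = sum(1 for domains in answers.values() if domain in set(domains))
    return cited / len(answers)

def share_of_citations(answers):
    """Each domain's share of all citations across the prompt set."""
    counts = Counter(d for domains in answers.values() for d in domains)
    total = sum(counts.values())
    return {d: c / total for d, c in counts.most_common()}

# Hypothetical snapshot: prompt -> domains cited in one AI-generated answer.
answers = {
    "best trail running shoes": ["example.com", "competitor.com"],
    "how to size running shoes": ["competitor.com"],
    "waterproof running shoes": ["example.com", "review-site.com", "competitor.com"],
    "running shoe return policy": ["example.com"],
}
print(f"example.com cited in {citation_share(answers, 'example.com'):.0%} of prompts")
print(share_of_citations(answers))
```

Because AI answers vary between runs, it is worth repeating the snapshot on a schedule and reading the trend, consistent with treating these as directional signals.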

Structured data for AI discoverability (what to implement first)

If you want AI to understand your content, speak its language: Schema.org markup. For enterprise teams, I recommend this implementation order:

- Organization Schema: Establish your brand entity.

- Product Schema: Essential for e-commerce visibility.

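- FAQ & HowTo Schema: These can be gold mines for AEO. If you answer a question directly in schema, you may increase the odds of being used in the generated answer. Note that Google no longer shows HowTo rich results and limits FAQ rich results to well-known government and health sites, so treat this markup as a machine-readability aid rather than a route to SERP features.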
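At enterprise scale, this markup is usually emitted as JSON-LD from page templates. The sketch below builds Organization and Product objects with Python's `json` module. The property names (`name`, `url`, `logo`, `sameAs`, `sku`, `offers`, `price`, `priceCurrency`, `availability`) are standard schema.org properties. The brand, URLs, and product values are placeholders.

```python
import json

def organization_jsonld(name, url, logo, same_as):
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "logo": logo,
        "sameAs": same_as,
    }

def product_jsonld(name, sku, price, currency, in_stock):
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock",
        },
    }

def script_tag(data):
    """Wrap a JSON-LD object in the script tag a page template would render."""
    return f'<script type="application/ld+json">{json.dumps(data, ensure_ascii=False)}</script>'

# Placeholder values for illustration only.
org = organization_jsonld("Example Corp", "https://example.com", "https://example.com/logo.png",
                          ["https://www.linkedin.com/company/example"])
product = product_jsonld("Trail Runner 2", "TR2-BLK-42", 129.0, "USD", in_stock=True)
print(script_tag(org))
print(script_tag(product))
```

Generating the markup from the same product data that feeds the page keeps schema and visible content in sync. Validate a sample with a structured-data testing tool before rolling it out sitewide.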

Common mistakes with big data SEO tools (and how I fix them)

I have learned these the hard way, so hopefully, you don’t have to.

Mistake-to-fix checklist

- Mistake: Tool Sprawl. Buying three tools that do 80% of the same thing.
  Fix: Audit your subscriptions annually. If a tool hasn’t been logged into for 30 days, cancel it.

- Mistake: Alert Fatigue. Setting up alerts for every 404 error.
  Fix: Set thresholds. Only alert me if 404s increase by more than 5% week-over-week.

- Mistake: Ignoring Log Files. Relying only on crawl simulations.
  Fix: Integrate server logs. It’s the difference between theory and reality.

- Mistake: Data Without Action. Sending dashboards that no one reads.
  Fix: Stop sending dashboards. Send “Insight + Recommended Action” tickets.

- Mistake: Chasing AI Hype. Rewriting everything for AI without measurement.
  Fix: Run a pilot on one directory. Measure citations before rolling out sitewide.
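The alert-fatigue fix translates into a simple week-over-week check. This sketch uses the 5% threshold from the checklist. The minimum-count guard is an added assumption, not part of the checklist, and is there so that tiny baselines (2 errors becoming 3) don't trigger noise.

```python
def should_alert(last_week, this_week, threshold=0.05, min_count=50):
    """Alert when 404s grow by more than `threshold` week-over-week.

    min_count is an illustrative guard (an assumption) that suppresses alerts
    when the absolute error count is too small to matter.
    """
    if this_week < min_count:
        return False
    if last_week == 0:
        return True  # errors appeared from nothing at meaningful volume
    return (this_week - last_week) / last_week > threshold

print(should_alert(1000, 1040))  # 4% increase
print(should_alert(1000, 1080))  # 8% increase
print(should_alert(2, 3))        # below min_count
```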

FAQs + next steps: how to choose and use big data SEO tools confidently

FAQ: What is the difference between traditional SEO and GEO/AEO?

Traditional SEO is about winning a spot on a bookshelf (the SERP). GEO/AEO is about getting your content quoted in the book summary (the AI answer). Both require authority, but AEO demands direct, structured answers to specific questions.

FAQ: Which tools offer AI visibility tracking for enterprise brands?

Tools like Evertune AI, Profound, and the AI tiers within platforms like Semrush and Surfer are leading this space. However, use them cautiously. AI models change daily, so treat these metrics as trends rather than static rankings.

FAQ: Do existing enterprise SEO platforms support AI-assisted workflows?

Yes, most major platforms (Conductor, BrightEdge, seoClarity) have integrated AI to help with intent clustering, content briefs, and metadata generation. But remember: governance still matters. AI is an accelerator, not an autopilot.

FAQ: How important is structured data for AI discoverability?

It is critical. Structured data gives search engines and AI systems an explicit, machine-readable description of the entities on a page and how they relate. If you don’t use schema, you are leaving them to infer what your page is about. Don’t make them guess.

FAQ: Is the investment in AI-powered SEO tools justified at enterprise scale?

If you can reduce the time to diagnose a revenue-impacting crawl error from 3 days to 3 hours, the tool pays for itself. The ROI comes from risk reduction and operational efficiency. Pilot it for 60 days to prove the value.

Conclusion and Next Steps

If I were starting from zero today with an enterprise site, I wouldn’t panic about the complexity. I would simplify. I would audit my data sources, pick one platform that serves my “air traffic control” needs, and start segmenting.

Your 3-step plan for this week:

- Inventory your tools: Kill what you don’t use.

- Run a baseline crawl: Find out how much of your site is actually indexable.

- Start a content pilot: Use an AI article generator to draft a cluster of intent-matched content, manually review it, and measure the speed improvement.

Big data SEO isn’t about having the most data. It’s about having the clarity to act on it.