Monitor competitors with AI in generative search results

Monitor competitors with AI in generative results: what this guide covers (and who it’s for)

There is a specific frustration I hear constantly from marketing leaders lately. You search for a category-defining question in ChatGPT or Gemini—something like “best CRM for small agencies”—and your top competitor is the star of the answer. They get the recommendation, the citation link, and the glowing review. You run the same prompt an hour later, and the answer changes slightly, but your brand is still missing. It feels arbitrary, unmeasurable, and frankly, a bit alarming.

If you are an SEO manager or growth marketer trying to make sense of this, you aren’t alone. We are moving from a world of static rankings to dynamic, conversational answers. This guide isn’t about abstract theory; it is a pragmatic workflow for the intermediate marketer. I’m going to walk you through exactly how to monitor competitors with AI, from setting up a simple spreadsheet tracker to understanding sophisticated metrics like “share of voice.” We will cover the manual sampling method I use, look at emerging tools that automate this process, and discuss how to turn that messy data into content updates that actually move the needle.

From SEO to GEO: why monitoring competitors in AI search matters now

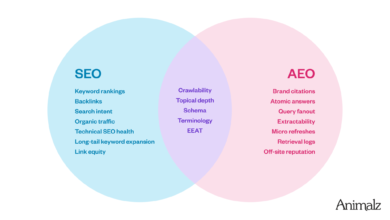

We are witnessing a fundamental shift from traditional Search Engine Optimization (SEO) to Generative Engine Optimization (GEO). While SEO is about ranking links on a page, GEO is about optimizing your brand’s visibility, relevance, and authority within AI-generated responses. This matters because user behavior is becoming “zero-click.” People want the answer immediately, without clicking through ten blue links.

However, monitoring this new landscape is tricky. Unlike a Google search engine results page (SERP), which is relatively static for a specific location, AI models are probabilistic. They “roll the dice” every time they generate an answer. This means a single screenshot of a ChatGPT response is an anecdote, not data. To truly understand your competitive position, you need to think like a researcher: sample repeatedly, look for patterns over time, and measure frequency rather than absolute rank.

What is Generative Engine Optimization (GEO)?

In plain English, GEO is the practice of shaping content to maximize the likelihood that a Large Language Model (LLM) will mention, cite, or recommend your brand in response to relevant user queries. It focuses less on keywords and more on citation frequency (how often you are linked) and mention context (are you the “best for X” or just a footnote?).

How generative results differ from classic SERPs (and why screenshots mislead)

When you type a query into Google, the algorithm retrieves and ranks pages from an index. When you prompt an AI, the model generates a response based on training data and live retrieval. This introduces variability. I often see “hallucinations” or “narrative drift” where a brand is described incorrectly. Furthermore, different models (Claude vs. ChatGPT vs. Perplexity) have different personalities and data sources. If you base your strategy on a single screenshot sent by your CEO, you are chasing ghosts. You need a logged history of prompts and answers to see the real picture.

What to track when you monitor competitors with AI (beginner-friendly metrics)

The biggest mistake I see teams make is trying to track everything. In the beginning, you need a baseline. You need to know if you are part of the conversation or invisible. When setting up your monitoring, focus on these foundational metrics. Note that while enterprise tools process millions of responses (Evertune, for example, processes over one million AI answers per brand monthly), you can start smaller.

| Metric | How to measure | Why it matters | Common pitfall |
|---|---|---|---|
| Citation Frequency | Count of times your URL appears as a source in the answer. | It is the new “backlink.” It drives direct traffic and signals trust to the model. | Confusing a text mention with a clickable citation link. |
| AI Share of Voice (SOV) | Percentage of total tracked prompts where your brand appears. | Shows your dominance relative to competitors across a topic cluster. | Expecting 100% SOV. Even market leaders rarely get cited in every response. |
| Query Triggers | Specific prompts that consistently surface your brand. | Identifies your “winning” topics to double down on. | Tracking generic keywords instead of natural language questions. |
| Citation Sources | The third-party domains the AI cites when talking about you (e.g., G2, Capterra, specific blogs). | Tells you where you need to get press coverage or reviews. | Ignoring source domains and only focusing on your own website. |
| Sentiment | Is the mention positive, neutral, or negative? | Visibility is useless if the AI says your product is “outdated.” | Overreacting to one neutral mention; look for patterns of negativity. |

Core metrics: mentions vs citations vs ‘recommended position’

These terms get used interchangeably, but they are distinct. A mention is simply your brand name appearing in the text. A citation is when that name is a clickable link to a source. The recommended position is the gold standard—this is when the AI explicitly suggests your solution as the primary answer. For example: “For small businesses, I recommend Brand X because…” is a recommendation. “Other options include Brand Y…” is just a mention.

A simple dashboard layout I’d start with (weekly + monthly)

You don’t need a complex BI tool yet. A spreadsheet works fine. I recommend columns for: Date, Model (e.g., GPT-4), Prompt Intent (TOFU/BOFU), Top Recommended Brand, My Brand Mentioned (Y/N), My Brand Cited (Y/N), and Notes. I keep a “Notes” column specifically for weird outputs—like the time an AI hallucinated a feature we don’t have—because context is king when explaining data to stakeholders.
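If you would rather keep the tracker as a CSV file than a hand-edited sheet, here is a minimal sketch of the same columns in Python. The `PromptRun` class, its field names, and the example row are my own illustrative assumptions, not a standard format; rename them to match your spreadsheet.

```python
import csv
from dataclasses import dataclass, asdict, fields
from io import StringIO


@dataclass
class PromptRun:
    """One tracker row: a single prompt run on a single model (hypothetical layout)."""
    run_date: str               # ISO date, e.g. "2025-01-06"
    model: str                  # e.g. "GPT-4"
    prompt_intent: str          # "TOFU", "MOFU", or "BOFU"
    top_recommended_brand: str
    my_brand_mentioned: bool
    my_brand_cited: bool
    notes: str = ""             # weird outputs, hallucinated features, etc.


def write_runs(runs, handle):
    """Write runs as CSV, with a header row taken from the dataclass fields."""
    writer = csv.DictWriter(handle, fieldnames=[f.name for f in fields(PromptRun)])
    writer.writeheader()
    for run in runs:
        writer.writerow(asdict(run))


# Example row with made-up values.
example = PromptRun(
    run_date="2025-01-06",
    model="GPT-4",
    prompt_intent="BOFU",
    top_recommended_brand="Brand X",
    my_brand_mentioned=True,
    my_brand_cited=False,
    notes="Hallucinated a feature we do not have",
)

buffer = StringIO()  # swap for open("tracker.csv", "w", newline="") to write a file
write_runs([example], buffer)
print(buffer.getvalue())
```

Keeping one row per run, rather than one row per prompt, is what makes the sampling and share-of-voice math possible later.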

My step-by-step GEO workflow to monitor competitors with AI (prompts, sampling, and logging)

So, how do you actually do this without losing your mind? You need a process that is repeatable. If you just random-walk through ChatGPT, you’ll get random results. Here is the workflow I use to bring order to the chaos.

Step 1: Define the ‘competitor set’ (direct, adjacent, and substitutes)

Start by listing your rivals, but don’t stop at the obvious ones. AI often recommends “substitutes” you might not consider direct competitors. If you sell email marketing software, your direct competitor is Mailchimp. But an adjacent competitor might be a CRM with email features. A substitute might be a freelance agency. Include 3-5 key players to keep your manual tracking manageable.

Step 2: Build a prompt library by customer intent (TOFU/MOFU/BOFU)

Don’t just use keywords like “accounting software.” People talk to AI the way they talk to a person. Build a library of 6–10 prompts mapped to the funnel:

- TOFU (Problem Aware): “How do I automate payroll for a team of 10?”

- MOFU (Solution Aware): “What are the best payroll tools for US small businesses?”

- BOFU (Decision Aware): “Gusto vs ADP pricing comparison.”

Pro tip: Pull these prompts directly from your sales call recordings or support tickets. Real language yields real visibility gaps.
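One way to keep the library honest is to store it as data and check that every funnel stage is covered. This is only a sketch: the `PROMPT_LIBRARY` structure and the helper names are assumptions of mine, and the prompts are the three examples above.

```python
from collections import defaultdict

# Hypothetical structure: one dict per prompt, tagged with its funnel stage.
PROMPT_LIBRARY = [
    {"intent": "TOFU", "prompt": "How do I automate payroll for a team of 10?"},
    {"intent": "MOFU", "prompt": "What are the best payroll tools for US small businesses?"},
    {"intent": "BOFU", "prompt": "Gusto vs ADP pricing comparison."},
]


def group_by_intent(library):
    """Return {stage: [prompts]} so you can see how the library is balanced."""
    grouped = defaultdict(list)
    for entry in library:
        grouped[entry["intent"]].append(entry["prompt"])
    return dict(grouped)


def missing_stages(library, stages=("TOFU", "MOFU", "BOFU")):
    """List the funnel stages that have no prompt yet."""
    covered = {entry["intent"] for entry in library}
    return [stage for stage in stages if stage not in covered]


print(group_by_intent(PROMPT_LIBRARY))
print(missing_stages(PROMPT_LIBRARY))
```

When you refresh the library, `missing_stages` is a quick sanity check that you have not drifted into tracking only bottom-of-funnel comparisons.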

Step 3: Run sampling the right way (so you’re not chasing noise)

Since AI answers vary from run to run, single runs are dangerous. For a manual workflow, I suggest a “minimum viable monitoring” cadence: run your priority prompts 3 times each on your target engines (e.g., ChatGPT and Perplexity), once a week. If you appear in at least 2 of the 3 runs, mark the prompt as “High Visibility.” If you appear in 0, it’s a gap. A single appearance is inconclusive, so keep sampling before you act on it. This simple rule won’t eliminate the randomness, but it dampens it enough to separate patterns from noise.
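The sampling rule is easy to encode so that everyone on the team labels results the same way. A minimal sketch follows; the function name and the "Inconclusive" label for the in-between case are my assumptions, since the rule itself only defines "High Visibility" and a gap.

```python
def classify_visibility(appearances, runs=3, high_threshold=2):
    """Label one prompt on one engine from repeated runs.

    appearances: number of runs in which your brand showed up.
    runs: total runs for that prompt (3 in the manual workflow).
    high_threshold: appearances needed for "High Visibility" (2 of 3 here).
    """
    if runs <= 0:
        raise ValueError("runs must be positive")
    if not 0 <= appearances <= runs:
        raise ValueError("appearances must be between 0 and runs")
    if appearances == 0:
        return "Gap"
    if appearances >= high_threshold:
        return "High Visibility"
    # Assumption: anything in between is not yet a pattern either way.
    return "Inconclusive"


for hits in range(4):
    print(hits, classify_visibility(hits))
```

If you later move to more runs per prompt, pass `runs` and `high_threshold` explicitly rather than relying on the 3-run defaults.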

Step 4: Capture citations and sources (what domains AI pulls from)

When you run your tests, pay close attention to where the AI gets its info. In Perplexity or Microsoft Copilot (formerly Bing Chat), you will see footnotes. Log these domains. If your competitor is consistently cited via a specific review on Capterra or a “Top 10” list on a niche industry blog, you have found a source domain worth targeting. Your goal is to get your brand onto those same pages.
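Once you have pasted the footnote URLs into your log, counting them by domain takes a few lines of Python. This sketch uses only the standard library; the helper names are mine and the URLs are made-up placeholders.

```python
from collections import Counter
from urllib.parse import urlparse


def source_domain(url):
    """Return the host of a URL without a leading 'www.', or '' if it has none."""
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


def count_source_domains(cited_urls):
    """Count how often each domain is cited across your logged answers."""
    domains = (source_domain(url) for url in cited_urls)
    return Counter(domain for domain in domains if domain)


# Placeholder URLs standing in for footnotes copied from AI answers.
cited = [
    "https://www.capterra.com/example-review/",
    "https://capterra.com/example-category/",
    "https://www.g2.com/example-category",
]

for domain, count in count_source_domains(cited).most_common():
    print(domain, count)
```

Note that URLs pasted without a scheme (no `https://`) have no parseable host and are skipped, so clean those up in the log first.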

Step 5: Score AI share of voice and ‘trigger prompts’

After a month, look at your data. Calculate your Share of Voice: (Number of responses containing your brand / Total number of responses generated) * 100. Identify your “trigger prompts”—the specific questions where you always win. These are your assets. Protect them.
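Here is a minimal sketch of that calculation, assuming each logged response is a dict with `prompt` and `answer` keys (my own hypothetical layout). It detects the brand with a simple case-insensitive substring match, which will over-count if your brand name is also a common word.

```python
from collections import defaultdict


def mentions(brand, answer):
    """Crude check: does the brand name appear anywhere in the answer text?"""
    return brand.lower() in answer.lower()


def share_of_voice(responses, brand):
    """(responses containing the brand / total responses) * 100."""
    if not responses:
        return 0.0
    hits = sum(1 for r in responses if mentions(brand, r["answer"]))
    return hits / len(responses) * 100


def trigger_prompts(responses, brand):
    """Prompts where the brand appeared in every logged run."""
    runs_by_prompt = defaultdict(list)
    for r in responses:
        runs_by_prompt[r["prompt"]].append(mentions(brand, r["answer"]))
    return [prompt for prompt, flags in runs_by_prompt.items() if all(flags)]


# Made-up log: two prompts, two runs each.
log = [
    {"prompt": "best payroll tools", "answer": "I recommend Brand X for small teams."},
    {"prompt": "best payroll tools", "answer": "Brand X and Brand Y are both solid."},
    {"prompt": "automate payroll", "answer": "Consider Brand Y."},
    {"prompt": "automate payroll", "answer": "Brand X can handle this."},
]

print(share_of_voice(log, "Brand X"))   # 3 of 4 responses
print(trigger_prompts(log, "Brand X"))
```

Run the same functions with each competitor's name to get the comparison view: the brand with the highest share across the same log is the one the models currently favor.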

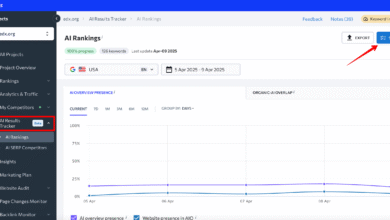

Tooling options: spreadsheets first, then GEO platforms (what to look for)

If you are tracking 10 prompts, a spreadsheet is perfect. If you are tracking 500 prompts across 4 regions, you will burn out manually. This is where dedicated AI SEO tools and GEO platforms come in. The market is evolving fast with players like Ranketta, Evertune, and Otterly.ai gaining traction. But be careful—metrics vary wildly between tools.

Here is how I categorize the current landscape based on recent market intelligence:

| Tool Category | Leading Examples | Best For | Limitations |
|---|---|---|---|
| Enterprise GEO Platforms | Evertune, Ranketta | High-volume sampling (1M+ responses), statistical significance, and large catalog benchmarking. | Higher cost; can be overkill for small teams or solo marketers. |
| Agile Trackers | Otterly.ai, Search Party, Wellows | Marketing teams needing quick insights, sentiment analysis, and funnel categorization. | Data depth may vary compared to enterprise giants; new features rolling out fast. |
| SEO Suite Extensions | Ahrefs Brand Radar | Teams already using Ahrefs who want to blend backlink data with AI mentions. | Might lack the granular “prompt engineering” testing of dedicated GEO tools. |

What ‘enterprise-ready’ looks like in GEO tooling

If you are evaluating these tools for a larger organization, “enterprise-ready” means API access for raw data export, the ability to schedule runs automatically (so you don’t have to click “generate” 500 times), and sophisticated benchmarking that tracks narrative drift over time. You want to see how the AI’s description of your brand changes, not just if you appeared.

Comparing leading GEO platforms vs extensions: run a short pilot

If I were starting out today, I would shortlist two tools and run a 2-week pilot. Compare them against your manual spreadsheet. If the tool detects the same visibility gaps you found manually but saves you 10 hours a week, it’s worth the budget.

Turning competitor visibility insights into content that wins in AI answers (without hype)

Data without action is just vanity. Once you know that you are missing from the “best project management tools” conversation, how do you fix it? You don’t just “stuff keywords.” You need to create content that is easy for LLMs to parse, verify, and cite. This connects directly to your content production workflow—using tools like a smart AI article generator can help you structure this information efficiently, provided you feed it the right strategic brief.

Quick content brief template I use for GEO-driven pages

When I find a gap, I create a brief for a new page (or a refresh of an old one) using this structure:

- Target User Question: (The exact trigger prompt you want to win)

- AI Intent: (Is the user looking for a list, a comparison, or a definition?)

- Required Entities: (Competitors, specific features, and industry terms the LLM associates with this topic)

- Proof Points: (Original data, case study stats, or primary source quotes that make the content unique)

- Structure: (Must use clear H2s/H3s and HTML tables for data comparison—LLMs love tables)

- Schema: (FAQ or HowTo schema to help machines understand the page structure)
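For the schema line of the brief, FAQ markup is usually published as JSON-LD using the schema.org `FAQPage`, `Question`, and `Answer` types. The sketch below builds that object from question and answer pairs; the `faq_jsonld` helper is a hypothetical name of mine, and the sample pair is taken from this guide's own FAQ.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = faq_jsonld([
    (
        "What is Generative Engine Optimization (GEO)?",
        "GEO is the process of optimizing content to increase visibility, "
        "citations, and positive sentiment in AI-generated answers.",
    ),
])

# Paste the output inside a <script type="application/ld+json"> tag on the page.
print(json.dumps(faq, indent=2))
```

Whether a given AI engine actually reads this markup is not something I can verify, so treat it as a low-cost clarity signal rather than a guarantee of citations.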

On-page elements that often influence AI citations

In my experience, clarity wins. AI models are essentially predicting the next most likely word based on trusted patterns. To increase your “cite-ability,” ensure your key definitions are concise (under 40 words) and placed immediately after a heading. Use clear author bylines to signal expertise (E-E-A-T). If you are updating a product page, add a “Key Specs” table. These structured elements act as handles for the AI to grab onto when constructing an answer.
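The 40-word guideline is easy to check before you publish. A tiny sketch, assuming words are simply whitespace-separated tokens; the function name and the default limit are mine, taken from the rule of thumb above rather than from any model's documented behavior.

```python
def is_concise_definition(text, max_words=40):
    """True if the definition has fewer than max_words whitespace-separated words."""
    return len(text.split()) < max_words


definition = (
    "GEO is the practice of shaping content so that a large language model "
    "is more likely to mention, cite, or recommend your brand."
)
print(len(definition.split()), is_concise_definition(definition))
```

Run it over the first paragraph under each heading during review and flag anything that fails for a rewrite.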

Scaling the process: from one-off checks to an automated monitoring + publishing cadence

Monitoring is a habit, not a project. To make this sustainable, you need to integrate it into your regular content operations. This is where an automated blog generator or a consistent publishing system becomes vital—not to churn out spam, but to keep your topical authority fresh and relevant at a pace that matches the speed of AI changes.

Suggested cadence: weekly sampling, monthly source review, quarterly prompt refresh

For a small team, I suggest this rhythm:

- Weekly: Run your top 10 “money prompts” manually or check your tool dashboard. Log any major shifts.

- Monthly: Deep dive into “Source Domains.” Are there new blogs or review sites citing your competitors? Add them to your outreach list.

- Quarterly: Refresh your prompt library. User behavior changes, and new questions emerge.

Quality controls that keep scaled content trustworthy

Scale can be dangerous if you lose quality. Never publish for the sake of publishing. Every piece of content aimed at GEO needs a human review layer. Verify your facts, check your internal links, and ensure your tone remains distinct. I keep a simple change log: “Updated X page on [Date] to clarify pricing structure because ChatGPT was hallucinating our old rates.” This creates an audit trail for your results.

Common mistakes when you monitor competitors with AI (and how I’d fix them)

I have made plenty of errors trying to reverse-engineer these black boxes. Here are the most common ones to avoid:

- Mistake: Trusting a single output.

Why it happens: It’s fast and easy to take one screenshot.

Fix: Always sample. Run the prompt 3 times. If the result isn’t consistent, it’s not a trend yet.

- Mistake: Ignoring the “Why.”

Why it happens: We focus on the mention, not the context.

Fix: Read the full answer. Did the AI cite a specific review? Did it mention a specific feature? That context is your roadmap to fixing the gap.

- Mistake: Only tracking brand terms.

Why it happens: It’s ego-gratifying to see your brand name.

Fix: Focus on unbranded, problem-aware queries (TOFU). That is where new customers find you.

- Mistake: Panic-changing content.

Why it happens: A competitor appears for a day, and you scramble to rewrite your homepage.

Fix: Wait for data stability. Narrative drift happens. Only make structural changes based on consistent visibility gaps.

FAQ: competitor monitoring in generative results

What is Generative Engine Optimization (GEO)?

GEO is the process of optimizing content to increase visibility, citations, and positive sentiment in AI-generated answers (like ChatGPT or Google’s AI Overviews) rather than just ranking in traditional search results.

Why is competitor monitoring in AI search important?

As search behavior shifts to “zero-click” answers, being recommended by an AI builds immense trust. If your competitors are cited and you aren’t, you are effectively invisible to a growing segment of buyers.

What metrics do AI visibility tools track?

They typically track Share of Voice (percentage of visibility), Citation Frequency (how often you are linked), Sentiment (positive/negative context), and Source Domains (where the information comes from).

Are AI visibility platforms enterprise-ready?

Yes. Platforms like Evertune and Ranketta are designed for scale, offering APIs, historical benchmarking, and the ability to process thousands of prompts to provide statistically significant data for large brands.

Summary and next steps: a simple plan I’d follow this week

Monitoring competitors in AI isn’t magic; it’s just a new form of digital listening. To wrap up, remember that variability is normal, citations are the currency of trust, and structured content is your best defense.

If I were starting from zero this week, here is my Monday morning plan:

- Build a list of 10 priority prompts (mix of “best X tool” and “how to solve Y problem”).

- Run them through ChatGPT and Perplexity three times each and log the results in a simple spreadsheet.

- Identify one content gap where a competitor is cited, and you aren’t.

- Update one relevant page on your site with clearer definitions and a data table to better answer that specific prompt.

Consistency wins here. Start measuring, stay curious, and if you need a system to turn these insights into high-quality articles at scale, contact us for more information on how we can help you build a content engine that performs.