The 2026 List: Best AI Brand Monitoring Software to Track Your Brand’s Presence in AI

When I search for a new project management tool or a payroll provider today, I don’t always start with ten blue links on Google. Increasingly, I ask ChatGPT, Claude, or Perplexity. I ask for comparisons, I ask for “best for” lists, and I ask for pricing transparency. If you work in marketing or SEO, you know I’m not alone.

This shift in behavior has created a blind spot. Traditional social listening tools tell you what people are saying on X (Twitter) or Reddit, but they are often silent on what the world’s most powerful Large Language Models (LLMs) are telling your potential customers. If an AI recommends your competitor because your brand entity isn’t clearly defined in the training data or retrieval index, you lose revenue without ever knowing why.

That is where AI brand monitoring comes in. In this guide, I’m skipping the hype. I will walk you through the practical landscape of 2026: the difference between AI-native GEO tools and legacy platform add-ons, a curated list of the best software available right now, and a 60-minute workflow to establish your baseline visibility this week.

What AI brand monitoring is (and how it differs from traditional brand monitoring)

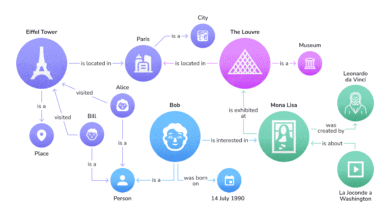

To put it simply, AI brand monitoring tracks how your brand appears inside AI-generated outputs, not just on the web pages that feed them. While traditional brand monitoring (social listening) counts mentions, likes, and shares across social media and news sites, AI brand monitoring focuses on the answers generated by models like GPT-4, Claude 3.5, and Gemini.

Here is the practical difference. In the old world, you measured success by how many people linked to your press release. In this new world, you measure success by whether ChatGPT cites you as the primary source when someone asks, “Who offers the most reliable enterprise hosting?”

It’s a shift from tracking links to tracking recommendations. When I run a query like “best CRM for small agencies,” I might never click a citation link if the AI answer is comprehensive enough. This is why “Zero-Click” behavior is the defining challenge for 2026. If you aren’t monitoring the text of the answer itself, you are flying blind.

Why it matters for US businesses in 2026 (trust, discovery, and competitive awareness)

For US-based SaaS and e-commerce companies, the stakes are financial. AI recommendations shape perception before a user ever lands on your site. If an LLM hallucinates your pricing structure or claims you lack a critical feature (which happens more often than we’d like to admit), that misinformation scales instantly to thousands of users.

I watch for three specific risks:

- Brand Displacement: Is the AI recommending a competitor for a category you used to own?

- Hallucinations: Is the model saying you don’t offer API access when you do?

- Citation Quality: Is the AI citing your actual product page, or a third-party review site that ranks above you?

The two tool categories you’ll see: platform add-ons vs AI-native GEO tools

As you evaluate the market, you will see two distinct camps:

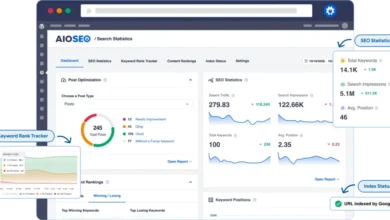

- Traditional Platforms with AI Modules: Established players like Semrush and Ahrefs that have integrated AI visibility metrics into their existing SEO suites. These are convenient if you want one bill.

- AI-Native GEO Specialists: New entrants built specifically for Generative Engine Optimization (GEO). These tools often provide deeper data on prompt variance, answer sentiment, and real-time LLM changes.

What to measure: the metrics that define brand presence in AI

One of the hardest parts of this transition is learning a new vocabulary. “Rankings” matter less; “inclusion” and “sentiment” matter more. Here is the framework I use to determine if a brand is actually winning in AI.

| Metric | What it tells you | Business Use Case |
|---|---|---|
| Share of Voice (AI) | How often your brand appears in answers for a specific topic compared to competitors. | Competitive benchmarking and market share analysis. |
| Citation Frequency | How often the AI links to your URL as a source for its claims. | SEO and traffic attribution; validating content authority. |
| Sentiment Analysis | Whether the AI describes your brand positively, neutrally, or negatively. | PR crisis management and brand perception. |
| Recommendation Rate | The percentage of times the AI explicitly recommends your product in “best for” lists. | Bottom-funnel conversion and sales enablement. |
| Brand Relevance Score | A proprietary score (varying by tool) measuring how strongly the model associates your brand with a topic. | Long-term brand building and category ownership. |
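To make the first four metrics concrete, here is a minimal sketch that computes them from a batch of already-parsed answers. Several pieces are assumptions for illustration:

- the `Answer` record and its field names;
- defining Share of Voice as your share of all tracked-brand mentions (some tools instead report the fraction of answers that mention you).

The proprietary Brand Relevance Score is left out because each vendor defines it differently.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class Answer:
    """One AI answer, already parsed (hypothetical structure for illustration)."""
    brands_mentioned: set[str]
    cited_urls: list[str] = field(default_factory=list)
    recommended: set[str] = field(default_factory=set)       # brands explicitly recommended
    sentiment: dict[str, str] = field(default_factory=dict)  # brand -> "pos" | "neu" | "neg"


def _rate(flags: list[bool]) -> float:
    """Fraction of True values; 0.0 for an empty batch."""
    return sum(flags) / len(flags) if flags else 0.0


def share_of_voice(answers: list[Answer], brand: str, competitors: list[str]) -> float:
    """Our mentions as a share of all mentions of the tracked brands."""
    tracked = {brand, *competitors}
    total = sum(len(a.brands_mentioned & tracked) for a in answers)
    ours = sum(brand in a.brands_mentioned for a in answers)
    return ours / total if total else 0.0


def _on_domain(url: str, domain: str) -> bool:
    """True if the URL's host is the domain itself or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return host == domain or host.endswith("." + domain)


def citation_frequency(answers: list[Answer], domain: str) -> float:
    """Fraction of answers citing at least one URL on our domain."""
    return _rate([any(_on_domain(u, domain) for u in a.cited_urls) for a in answers])


def recommendation_rate(answers: list[Answer], brand: str) -> float:
    """Fraction of answers that explicitly recommend our brand."""
    return _rate([brand in a.recommended for a in answers])


def sentiment_breakdown(answers: list[Answer], brand: str) -> Counter:
    """Count of sentiment labels across answers that describe our brand."""
    return Counter(a.sentiment[brand] for a in answers if brand in a.sentiment)
```

Keeping the rates as plain fractions makes them easy to trend week over week. You can also attach a sampling range to them when the same prompts are run repeatedly.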

If I could only track one thing, it would be Citation Frequency. It is the bridge between the “black box” of the AI and the traffic landing on your site.

How tools generate these insights (prompts, panels, APIs, and variance)

It is important to understand that no tool has a perfect view of every AI conversation. For classic search, Google Search Console reports first-party data; for AI answers, there is no single source of “truth.” Tools typically generate insights by running thousands of automated prompts through LLM APIs (or occasionally via scraping/consumer panels) to mimic user behavior.
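The automated-prompt approach can be sketched in a few lines. This is a minimal illustration, not any vendor’s pipeline. `ask` is a hypothetical callable standing in for whatever LLM API client you use. Mention detection is a simple case-insensitive, whole-word regex match.

```python
import re
from typing import Callable

# Hypothetical client signature: (model_name, prompt) -> answer text.
# Wrap whichever vendor SDK or HTTP API you actually use behind this.
AskFn = Callable[[str, str], str]


def mentions(text: str, brand: str) -> bool:
    """Case-insensitive whole-word match for the brand name."""
    pattern = rf"\b{re.escape(brand)}\b"
    return re.search(pattern, text, flags=re.IGNORECASE) is not None


def run_panel(ask: AskFn, prompts: list[str], models: list[str],
              brand: str, samples: int = 5) -> list[dict]:
    """Send every prompt to every model `samples` times and log mention hits."""
    rows = []
    for prompt in prompts:
        for model in models:
            for run in range(samples):
                answer = ask(model, prompt)
                rows.append({
                    "prompt": prompt,
                    "model": model,
                    "run": run,
                    "mentioned": mentions(answer, brand),
                })
    return rows
```

Swapping in a real client only changes `ask`; the loop and the mention check stay the same. A whole-word regex misses misspellings and abbreviations, so treat it as a starting point.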

The Variance Problem: If I ask ChatGPT the same question three times, I might get three slightly different answers. Part of this variance comes from the model’s sampling “temperature.” Good monitoring software accounts for it by running the same prompt multiple times and reporting a range, such as a confidence interval, rather than a single snapshot. Always ask vendors how they handle this fluctuation.
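One standard way to turn repeated samples into a range is the Wilson score interval for a proportion. This assumes your tool (or spreadsheet) records inclusion as hits out of N runs of the same prompt. Vendors may use other methods.

```python
import math


def wilson_interval(hits: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for an inclusion rate; z=1.96 gives roughly 95%."""
    if n == 0:
        return (0.0, 1.0)  # no samples: the rate could be anything
    p = hits / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return (max(0.0, center - half), min(1.0, center + half))
```

For example, appearing in 6 of 10 runs gives an interval of roughly 31% to 83%. That is why a single snapshot tells you very little.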

How I evaluate the best AI brand monitoring software (a beginner-friendly checklist)

When I’m comparing tools, I start with a simple reality check: does this tool help me make a decision, or does it just give me a pretty chart? The market is flooded with “AI wrappers,” so you need to be rigorous.

Here is my evaluation checklist:

- Coverage: Does it track the models my customers use? (Usually ChatGPT, Claude, Perplexity, Gemini, and Google AI Overviews).

- Sampling Depth: Does it run the prompt once a month (useless) or weekly/daily with multiple runs to account for variance?

- Attribution: Can it show me exactly which URL was cited, so I can optimize that specific page?

- Price-to-Value: Is the pricing transparent, or does it require a massive enterprise contract?

The ‘must-have vs nice-to-have’ features for beginners

Must-Haves:

- Multi-LLM tracking (don’t settle for just ChatGPT).

- Citation/Source tracking.

- Historical trend lines (to prove your work is having an impact).

- Export capabilities (CSV/API) to blend with your other data.

Nice-to-Haves:

- Persona-level tracking (simulating an “IT Manager” vs. a “CFO”).

- Automated recommendations for fixing content gaps.

- Slack/Teams integration for alerts.

Where content intelligence fits: turning monitoring into action with an AI SEO tool

Monitoring is only useful if you can fix the problems you find. If you discover that Claude loves your competitor’s comparison page but ignores yours, you need to update your content immediately. You need to close the entity gaps, add the missing pricing details, and structure your data so the LLM can parse it.

This is where an AI SEO tool like Kalema fits into the workflow. Once monitoring identifies the gap, you use content intelligence to plan and produce the update, ensuring your brand entity is authoritative enough to win the citation next time.
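One concrete way to “structure your data” is schema.org markup embedded as JSON-LD. Here is a minimal sketch that emits `SoftwareApplication` markup, with pricing expressed as an `Offer`. The product name, URL and price are placeholders. Whether a particular AI system reads this markup when it builds an answer is an assumption to test, not a guarantee.

```python
import json


def software_jsonld(name: str, url: str, price: str, currency: str = "USD") -> str:
    """Return a schema.org SoftwareApplication JSON-LD snippet (placeholder values)."""
    data = {
        "@context": "https://schema.org",
        "@type": "SoftwareApplication",
        "name": name,
        "url": url,
        "applicationCategory": "BusinessApplication",
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": currency,  # ISO 4217 currency code
        },
    }
    return json.dumps(data, indent=2)


def script_tag(jsonld: str) -> str:
    """Wrap the JSON-LD in the script tag used to embed it in a page."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'
```

Paste the output of `script_tag(...)` into the page’s HTML. Keep the price in sync with the visible pricing page so the two never disagree.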

The 2026 list: best AI brand monitoring software (what each tool is best for)

The landscape below is divided into two categories. If you are building a dedicated “GEO” (Generative Engine Optimization) strategy, look at the AI-native list. If you just want to keep an eye on things without adding a new login, look at the integrated platforms.

| Tool | Category | Key Models Tracked | Standout Feature | Best For |
|---|---|---|---|---|
| Mentionable | AI-Native | ChatGPT, Claude, Perplexity, Google AIO | Real-time citation tracking | Content & SEO teams focused on citations |
| Ranketta | AI-Native | Multi-LLM | Product-level visibility & sentiment | SaaS & Product Marketers |
| Evertune | AI-Native | Major LLMs | Statistical relevance scoring | Data-driven Enterprise brands |
| Semrush Ent. AIO | Integrated | ChatGPT, Google AIO | Share of Voice & Shopping Analytics | Existing Semrush users (Enterprise) |
| Brand24 | Integrated | Social + Web + AI | Massive listening scale | PR & Reputation Management |

AI-native GEO / AI visibility specialists (built specifically for LLM answers)

These tools were born in the era of ChatGPT. They handle prompt engineering and variance natively, often providing more granular data than traditional SEO tools.

Mentionable

What it tracks: Mentionable is laser-focused on citations across the big four: ChatGPT, Claude, Perplexity, and Google’s AI Overviews. It provides real-time insights into how your brand is being sourced.

Standout feature: Its ability to guide content strategy. By showing you exactly which sources are powering the answers, it tells you whether you need to update your own blog or fight for a mention in a third-party review.

Best for: SEO and Content leads who need to prove the value of their “top of funnel” work.

Watch-outs: As a newer specialist tool, it may not have the massive backlink databases of an Ahrefs. Pricing: .

Ranketta

What it tracks: Ranketta queries multiple LLMs to track product-level visibility. It doesn’t just look for your brand name; it looks for your products in specific solution contexts.

Standout feature: Market validation. Ranketta secured €1 million in pre-seed funding in November 2025 and reached roughly $100,000 ARR within two months of launch. This momentum suggests they are building features fast based on real user feedback.

Best for: Startups and growth teams who need to move fast and track specific product lines.

Watch-outs: Early-stage tools can have UI changes frequently as they iterate. Pricing: .

Evertune

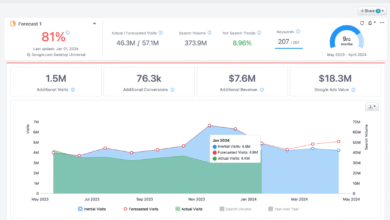

What it tracks: Evertune is the heavy hitter for data. It processes over one million AI responses per brand monthly to measure presence. Its ‘Topic Relevance’ and ‘Brand Relevance’ metrics are built on enough samples to be statistically meaningful.

Standout feature: The sheer volume of sampling. By running thousands of prompts, it smooths out the “hallucination noise” that plagues smaller tools.

Best for: Enterprise brands that need rigorous data to report to a board of directors. If you need to defend your budget with math, this is a strong contender.

Watch-outs: It is priced for serious players, often around $3,000/month, so it’s likely overkill for a small local business.

Scrunch (and similar platforms)

What it tracks: Scrunch and similar emerging tools introduce advanced capabilities like Agent Experience Platform (AXP) features. They look at funnel-stage performance—how your brand appears when a user is “just looking” vs. “ready to buy.”

Standout feature: Persona tracking. You can see how an “IT Director” gets a different answer than a “Marketing Intern” for the same query. Some tools in this class even help generate AI-friendly versions of your site.

Best for: Complex B2B sales cycles where buyer personas matter immensely.

Watch-outs: These advanced features often require more setup time to define your personas correctly.

Integrated platforms adding AI visibility modules (best if you already live in their ecosystem)

If your team already lives in Semrush or Ahrefs daily, the “switching cost” of adding a new tool is high. These platforms are closing the gap quickly.

Semrush Enterprise AIO

What it tracks: As of mid-2025, Semrush Enterprise AIO added LLM tracking with metrics like ‘Share of Voice’ and a unique ChatGPT Shopping Analytics report.

Standout feature: The Shopping Analytics. For e-commerce brands, seeing how you appear in ChatGPT’s shopping recommendations is critical revenue data.

Best for: Large e-commerce and enterprise SEO teams who want to consolidate their stack.

Watch-outs: Some features are gated to the Enterprise tier, so check your plan limits.

Ahrefs (Brand Radar / brand features)

What it tracks: Ahrefs is legendary for its link data, which is the fuel for many AI citations. While their specific AI monitoring modules evolve, their ability to track brand mentions across the web is a foundational proxy for AI visibility.

Standout feature: The link graph. If you want to know why an AI is citing a specific article, Ahrefs likely knows the backlink authority of that article better than anyone.

Best for: SEO purists who view AI visibility as an extension of technical authority.

Watch-outs: Verify the current specific AI-response tracking capabilities, as they are often labeled under beta or new modules. Pricing: .

Meltwater and Brand24

What it tracks: Brand24 is a beast of scale. As of October 2025, they monitored 100,000 brands and collected 25 billion online mentions. Meltwater similarly dominates the enterprise media intelligence space.

Standout feature: The volume of historical data. If you are facing a PR crisis, these tools will catch the sentiment shift across the web quickly. Web sentiment is often a leading indicator of what AI models will eventually learn.

Best for: PR and Communications teams who need to manage reputation across every channel, not just AI.

Watch-outs: Their strength is traditional “listening” (social/web); ensure their specific LLM-response modules meet your technical GEO needs.

How I’d implement AI brand monitoring in 60 minutes (then run it weekly)

You don’t need a month to set this up. If I were starting from scratch on a Monday morning, here is exactly how I would do it. The goal is not to track everything, but to track the money.

Step 1: Set goals + define your ‘money queries’ and personas

Don’t start with 200 prompts. Start with the 20 questions that actually lead to revenue. I group them like this:

- Category Discovery: “Best project management software for creative agencies.”

- Comparison: “Asana vs [Your Brand] for small teams.”

- Pricing/Features: “Does [Your Brand] offer enterprise SSO?”

Pro tip: If you sell to different roles, write a prompt for each. “Act as a CTO and tell me the risks of [Your Software].”
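To keep the prompt set repeatable week to week, it helps to generate it from templates. This sketch uses the three intent groups and the persona pattern above. The brand name, competitor, templates and persona list are all placeholders to replace with your own.

```python
from itertools import product

# Example templates for the three intent groups in Step 1; replace with your own.
TEMPLATES = {
    "category": ["Best project management software for creative agencies."],
    "comparison": ["{competitor} vs {brand} for small teams."],
    "pricing_features": ["Does {brand} offer enterprise SSO?"],
}
# None means no persona framing (a baseline); the roles are examples.
PERSONAS = [None, "CTO", "CFO"]


def build_prompts(brand: str, competitor: str) -> list[dict]:
    """Expand every template for every persona into a tagged prompt record."""
    prompts = []
    for (group, texts), persona in product(TEMPLATES.items(), PERSONAS):
        for text in texts:
            question = text.format(brand=brand, competitor=competitor)
            if persona:
                question = f"Act as a {persona}. {question}"
            prompts.append({
                "group": group,
                "persona": persona or "generic",
                "prompt": question,
            })
    return prompts
```

Three groups times three persona variants (including the no-persona baseline) yield nine prompts here. Adding templates grows the set toward your 20 money queries.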

Step 2: Run a baseline across multiple models (and record variance)

Take those 20 prompts and run them through ChatGPT, Perplexity, and Claude. If you are using a tool, load them in. If you are doing this manually (bootstrapping), use a simple spreadsheet:

- Column A: Prompt

- Column B: Model

- Column C: Did we appear? (Yes/No)

- Column D: Sentiment (Pos/Neut/Neg)

- Column E: Sources Cited

Your first baseline will be messy. You will find the AI says things that are outdated. That is normal. The goal of the baseline is just to document the mess so you can clean it up.
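If you keep the baseline as a CSV instead of a spreadsheet, the same five columns work. A short script can then summarize how often you appeared per model. This sketch assumes one row per prompt run; logging repeated runs of each prompt is how such a simple sheet captures variance. The function names are hypothetical.

```python
import csv
import io
from collections import defaultdict
from typing import Iterable

# Columns A-E from the baseline sheet in Step 2.
COLUMNS = ["Prompt", "Model", "Did we appear?", "Sentiment", "Sources Cited"]


def baseline_csv(rows: list[dict]) -> str:
    """Render baseline rows as CSV text; missing cells are left blank."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=COLUMNS)
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()


def appearance_rate_by_model(lines: Iterable[str]) -> dict[str, float]:
    """Share of rows per model where 'Did we appear?' is Yes."""
    tally = defaultdict(lambda: [0, 0])  # model -> [appearances, rows]
    for row in csv.DictReader(lines):
        counts = tally[row["Model"]]
        counts[0] += row["Did we appear?"].strip().lower() == "yes"
        counts[1] += 1
    return {model: hits / total for model, (hits, total) in tally.items()}
```

When reading a real file, open it with `newline=""`, as the `csv` module documentation recommends. Then pass the file object straight to `appearance_rate_by_model`.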

Step 3: Turn insights into content updates with an AI article generator

This is the most critical step. Monitoring creates a backlog of problems; you need a system to fix them. If the AI says your pricing is “opaque,” you need to publish a clear pricing page.

I use an AI article generator to speed up this response time. I can take the missing topic—say, “How our security protocols compare to Competitor X”—and generate a comprehensive draft in minutes. I still edit it for voice and accuracy, but the speed allows me to patch holes in our “entity coverage” much faster than writing from a blank page.

Step 4: Report outcomes in business terms (not vanity metrics)

When I send a report to leadership, I keep it to one page. No one cares about “prompt variance temperature.” They care about:

- Share of Voice Trend: “We appeared in 40% of answers this week, up from 30% last month.”

- Correction Rate: “We fixed the misinformation regarding our API limits in ChatGPT.”

- Competitive Gap: “Competitor Y is winning the ‘enterprise’ queries; we are winning ‘SMB’.”
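Two small helpers keep these report lines honest. The first states the Share of Voice change in percentage points: 40% versus 30% is a 10-point rise, or about 33% in relative terms. The second picks the leading brand per query segment. Function names and the input shape are hypothetical.

```python
def sov_trend_line(current: float, previous: float, period: str = "last month") -> str:
    """Phrase a Share of Voice change in percentage points, not percent."""
    delta = round((current - previous) * 100)
    if delta == 0:
        change = f"flat versus {period}"
    else:
        direction = "up" if delta > 0 else "down"
        change = f"{direction} {abs(delta)} points from {previous:.0%} {period}"
    return f"We appeared in {current:.0%} of answers this week, {change}."


def segment_leaders(rates: dict[str, dict[str, float]]) -> dict[str, str]:
    """Map each query segment to the brand with the highest appearance rate."""
    return {segment: max(by_brand, key=by_brand.get) for segment, by_brand in rates.items()}
```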

Common mistakes I see (and how to fix them quickly)

I’ve made plenty of mistakes in this space so you don’t have to. Here are the big ones that derail progress.

Mistake #1–#6 list (with fixes)

- Tracking only one model.

  The Fix: Users are fragmented. You must look at Perplexity and Google AIO at a minimum, not just ChatGPT.

- Overreacting to a single answer.

  The Fix: Never panic over one screenshot. Look for trends over 3-4 weeks. Models hallucinate; don’t let a glitch dictate your strategy.

- Ignoring the cited sources.

  The Fix: If the AI cites a G2 review or a random blog, your job isn’t to fix the AI. It’s to address that review or get mentioned in that blog.

- Vague prompting.

  The Fix: “Tell me about [Brand]” is a bad prompt. Use specific, intent-driven questions phrased the way users actually type them.

- Not shipping content updates.

  The Fix: Analysis paralysis is real. If you don’t publish new content to feed the AI, the monitoring is wasted budget.

- Forgetting the date.

  The Fix: AI models have knowledge cutoffs, although live browsing increasingly offsets this. Always check whether the AI is pulling from its training data or the live web.
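The “look for trends over 3-4 weeks” rule can be made mechanical. This sketch compares the average weekly inclusion rate over the most recent four weeks with the four weeks before that. The window and the 10-point threshold are illustrative assumptions, not established benchmarks.

```python
from statistics import mean


def sustained_drop(weekly_rates: list[float], window: int = 4, threshold: float = 0.10) -> bool:
    """True if the latest `window`-week average fell by at least `threshold`
    (absolute, e.g. 0.10 = 10 points) versus the `window` weeks before it."""
    if len(weekly_rates) < 2 * window:
        return False  # not enough history to call it a trend
    recent = mean(weekly_rates[-window:])
    prior = mean(weekly_rates[-2 * window:-window])
    return prior - recent >= threshold
```

A single bad week moves the four-week average only slightly, so it does not trip the alert on its own.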

FAQs + my next steps checklist for 2026 (so you can start this week)

FAQ: What is AI brand monitoring and how does it differ from traditional brand monitoring?

AI brand monitoring tracks your presence in the text answers generated by Large Language Models (LLMs) like ChatGPT, whereas traditional monitoring tracks mentions on social media and websites. The key difference is the output: one is a conversation/recommendation, the other is a feed of links.

FAQ: Why is monitoring brand presence in AI outputs important for businesses?

It impacts discovery and trust. If an AI recommends your competitor, you lose the prospect before they even search for you. Furthermore, incorrect information in AI answers can damage your brand credibility without you knowing it.

FAQ: Which types of platforms provide AI brand monitoring?

There are two main types: AI-native GEO tools (like Mentionable, Ranketta, Evertune) built specifically for this purpose, and integrated marketing platforms (like Semrush, Brand24) that have added AI visibility modules to their existing suites.

FAQ: What metrics are key in AI brand monitoring?

Start with these three: Share of Voice (visibility frequency), Citation Frequency (how often you are linked), and Sentiment (positive/negative context). Advanced teams also look at Persona Visibility.

FAQ: How do these tools generate insights into AI responses?

They typically use APIs (or sometimes automated browsers) to send thousands of prompts to various LLMs, capturing the responses. They then analyze these text responses for mentions, sentiment, and citations, aggregating the data to smooth out variance.

Next actions: scale what works with an Automated blog generator (optional)

If you follow this guide, you will eventually hit a point where you know exactly what content you are missing, but you lack the hands to write it all. Once you have a stable monitoring and QA process in place, that is the time to look at an Automated blog generator. This allows you to scale your “entity defense” content—creating the glossaries, comparisons, and definition pages that LLMs love to cite—while you focus on high-level strategy and review.

Your Checklist for this Week:

- Select 20 “money queries” relevant to your product.

- Run a manual baseline on ChatGPT and Perplexity.

- Pick one tool from the list above that fits your budget and start a trial.

- Identify one piece of misinformation and publish a blog post to correct it.