Best AI Brand Monitoring Tools to Track LLM Mentions

Introduction: Finding your “AI rank” (and what this guide will help you do)

When I first tried asking ChatGPT about the “best payroll software for startups” to see where a client stood, I realized the limitations of manual checking immediately. The same few brands kept repeating, but I couldn’t explain why they were chosen or why my client was ignored. It felt like a black box.

If you are in marketing, SEO, or PR, you likely share this anxiety. You suspect that Large Language Models (LLMs) like ChatGPT, Claude, and Gemini are influencing your customers’ decisions, but you lack a reliable way to measure it. You don’t know your “AI rank,” and checking manually is inconsistent and unscalable.

This guide is designed to move you from guessing to measuring. We aren’t just going to list software; we will look at a decision framework for selecting the best AI brand monitoring tools based on your business size—whether you are an SMB, a mid-market agency, or an enterprise team. We will cover the specific KPIs that matter (beyond just “sentiment”), compare the top players in the market for 2025–2026, and provide a repeatable workflow you can implement this week.

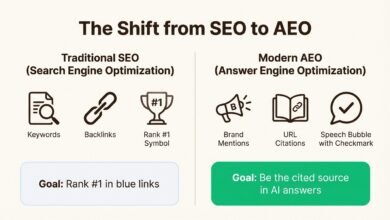

What an AI brand monitoring tool for LLMs is (and why it matters now)

An AI brand monitoring tool for LLMs is specialized software designed to track how generative AI models perceive, reference, and recommend your brand. Unlike traditional social listening tools that scrape tweets or news articles, these platforms query LLMs (like ChatGPT, Perplexity, and Gemini) with specific prompts to analyze the generated responses.

Typically, these tools track and analyze:

- Brand Mentions: How frequently your brand appears in answers.

- Context & Sentiment: Whether the AI describes you as expensive, reliable, or innovative.

- Share of Voice (SOV): Your visibility compared to direct competitors.

- Citations: Which URLs the AI links to as evidence (crucial for Perplexity and SearchGPT).

- Risky Hallucinations: Incorrect claims the AI might be making about your products.

This emerging field is often called Generative Engine Optimization (GEO). It is becoming critical because user behavior is shifting. With consumer daily usage of AI tools jumping significantly between 2024 and 2025, and AI-influenced traffic projected to reach 14.5% of organic search by 2026, being invisible to LLMs means being invisible to a growing segment of your market. If I can measure this visibility, I can improve it.

Quick definition: “AI rank” vs traditional rankings

In traditional SEO, you have a specific rank: you are position #1 or #5 for a keyword. “AI rank” is more fluid. Think of it less like a leaderboard and more like a repeating survey. If we ask an LLM the same question 100 times, how often do you appear? If you appear 60 times, your “rank” or appearance rate is 60%. It varies by model, prompt phrasing, and even the time of day.
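To make the “repeating survey” idea concrete, here is a minimal sketch of how an appearance rate could be computed from a batch of saved answers. The brand names and sample answers are made up for illustration, and a real tool would likely use fuzzier matching than this whole-word check.

```python
import re


def appearance_rate(responses: list[str], brand: str) -> float:
    """Fraction of responses that mention the brand at least once."""
    if not responses:
        raise ValueError("need at least one response")
    # Whole-word, case-insensitive match so "Acme" does not match "Acmeist".
    pattern = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    hits = sum(1 for text in responses if pattern.search(text))
    return hits / len(responses)


# Hypothetical answers collected by asking one prompt five times.
sample = [
    "Top picks are BrandB, BrandC, and Acme Payroll.",
    "Consider BrandB or BrandC.",
    "Acme Payroll and BrandB are popular with startups.",
    "BrandB, BrandC, and BrandD are common choices.",
    "Many founders use acme payroll for its pricing.",
]
print(appearance_rate(sample, "Acme Payroll"))  # 0.6
```

Three of the five answers mention the brand, so the appearance rate for this prompt is 60%.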

Why monitoring LLM brand mentions is important for US businesses

Imagine a potential customer asks Gemini, “What is the best project management tool for creative agencies?” If the answer lists Asana, Monday, and Trello, but omits your tool, you haven’t just lost a click—you’ve lost consideration entirely. Monitoring helps you protect your brand reputation by catching hallucinations early, aids in competitive discovery, and defends your market share in a world where AI answers are the new “zero-click” search result.

The GEO KPIs that actually matter (and how to interpret them)

One of the hardest parts of starting with GEO is the lack of standardized metrics. Every tool calls them something slightly different. However, after testing multiple platforms, I’ve found that a few core metrics are essential for making business decisions.

Below is a glossary of the KPIs I rely on. A word of caution: these metrics are directional. Because LLMs are probabilistic (they sample each next token rather than returning a fixed answer), a score of “85/100” isn’t an absolute grade like a math test. It’s an estimate that depends on that vendor’s specific sampling method.
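One way to see why a score is only directional is to put an uncertainty range around it. The sketch below uses the Wilson score interval, a standard formula for proportions. It is my own illustration, not something any vendor has confirmed they use, and the 17-out-of-20 figure is a made-up example.

```python
import math


def wilson_interval(hits: int, runs: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z=1.96 is roughly 95% confidence)."""
    if runs <= 0 or not 0 <= hits <= runs:
        raise ValueError("need runs > 0 and 0 <= hits <= runs")
    p = hits / runs
    denom = 1 + z**2 / runs
    center = (p + z**2 / (2 * runs)) / denom
    half = z * math.sqrt(p * (1 - p) / runs + z**2 / (4 * runs**2)) / denom
    return max(0.0, center - half), min(1.0, center + half)


# Hypothetical scan: the brand appeared in 17 of 20 runs (85%).
low, high = wilson_interval(hits=17, runs=20)
print(f"85% observed, plausible range {low:.0%} to {high:.0%}")
```

With only 20 runs, an observed 85% is consistent with a true rate anywhere from roughly the mid-60s to the mid-90s, which is why small week-to-week movements are usually noise.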

How GEO metrics differ from traditional SEO metrics

In SEO, we track impressions and clicks. In GEO, we track presence and context. I might get zero clicks from a ChatGPT answer, but if that answer recommends my brand as the “top choice for enterprise,” that value is immense. I track these metrics weekly for trend lines, but I do a deep dive monthly to see if our content updates are actually moving the needle.

A beginner-friendly KPI glossary (table)

Here is how to read the data you will see in these dashboards:

| Metric | What it measures | Why it matters | Common Pitfalls |
|---|---|---|---|
| Generative Appearance Score | The percentage of times your brand appears in responses for a specific set of prompts. | This is your baseline visibility. If you aren’t seen, you aren’t chosen. | Don’t obsess over 1-2% fluctuations; look for double-digit shifts. |
| Share of Voice (SOV) | Your appearance frequency compared to your competitors in the same prompt set. | Tells you who is dominating the conversation in your niche. | Can be skewed if you only track branded keywords (e.g., “Is [Brand] good?”). |
| Citation Rate | How often the LLM provides a link to your website as a source. | Critical for driving traffic (Perplexity/SearchGPT) and proving authority. | Not all LLMs cite sources (e.g., standard ChatGPT often doesn’t). |
| Sentiment Score | Whether the AI speaks positively, neutrally, or negatively about you. | Protects reputation. You don’t want to be “visible” for a data breach. | Nuance is often lost. A “negative” score might just be discussing a “pain point” you solve. |
| Prompt Coverage | The percentage of your target buyer questions where you show up at all. | Highlights gaps in your content strategy. | Tracking too few prompts gives a false sense of security. |

Note: Always verify the methodology of the tool you choose. If they can’t explain how a score is calculated, treat it as a rough estimate only.
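If you want to sanity-check a vendor’s numbers, the glossary metrics can be approximated from raw scan results. This is a rough sketch under my own assumptions: the `Sample` record and its field names are hypothetical, and Share of Voice is computed as “our mentions divided by all tracked-brand mentions,” which is only one of several definitions vendors use.

```python
from dataclasses import dataclass, field


@dataclass
class Sample:
    """One LLM answer to one prompt. Field names are hypothetical."""
    prompt: str
    brands_mentioned: set[str]
    cited_domains: set[str] = field(default_factory=set)


def share_of_voice(samples: list[Sample], brand: str) -> float:
    """Our mentions divided by all tracked-brand mentions (one possible definition)."""
    total = sum(len(s.brands_mentioned) for s in samples)
    ours = sum(1 for s in samples if brand in s.brands_mentioned)
    return ours / total if total else 0.0


def citation_rate(samples: list[Sample], domain: str) -> float:
    """Fraction of answers that cite the given domain."""
    if not samples:
        return 0.0
    return sum(1 for s in samples if domain in s.cited_domains) / len(samples)


def prompt_coverage(samples: list[Sample], brand: str) -> float:
    """Fraction of distinct prompts where the brand appears in at least one answer."""
    prompts = {s.prompt for s in samples}
    covered = {s.prompt for s in samples if brand in s.brands_mentioned}
    return len(covered) / len(prompts) if prompts else 0.0


# Made-up scan: two prompts, two runs each.
samples = [
    Sample("best crm", {"Acme", "BrandB"}, {"acme.example"}),
    Sample("best crm", {"BrandB"}),
    Sample("crm for startups", {"BrandB", "BrandC"}),
    Sample("crm for startups", {"BrandB"}, {"brandb.example"}),
]
print(f"SOV: {share_of_voice(samples, 'Acme'):.0%}")
print(f"Citation rate: {citation_rate(samples, 'acme.example'):.0%}")
print(f"Prompt coverage: {prompt_coverage(samples, 'Acme'):.0%}")
```

In this made-up scan the brand shows up on only one of two prompts, so coverage is 50% even though it is cited in a quarter of all answers.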

Best AI brand monitoring tools: comparison, strengths, and who each is for

The market for these tools exploded in 2025–2026. We are seeing a mix of agile startups and established SEO giants entering the ring. I have categorized the best AI brand monitoring tools below based on who they serve best, rather than a generic ranking.

How I evaluated tools (the criteria that matter for beginners)

When selecting a tool for my own workflows, I look for:

- Multi-model coverage: Does it track ChatGPT, Gemini, Claude, and Perplexity?

- Prompt management: Can I save and tag my own custom prompts?

- Consistency: Does it run the scan multiple times to average out LLM randomness?

- Citation mapping: Does it show which URLs the model cited alongside the mention?

- Alerting: Will it email me if a negative claim surfaces?

- Budget: Is the pricing accessible for an SMB or gated for enterprise?

SMB-friendly picks (simple setup, fast insights)

If I had a small team and limited budget, I would start here. These tools focus on getting you a baseline quickly without overwhelming you with data.

Otterly.ai is a standout for accessibility. Founded in 2024 and partnering with Semrush in early 2025, it is designed for ease of use. It allows you to track mentions across major models and provides clear visibility scores. It’s best for marketers who need to answer “are we showing up?” without a steep learning curve.

Key strengths: Low barrier to entry, clean UI, and reliable tracking of the “Big 3” LLMs.

Mid-market options (share-of-voice, benchmarks, and reporting)

For agencies or growth teams where leadership is asking, “Are we beating Competitor X?”, you need more robust benchmarking.

Peec AI, founded in 2025, has gained significant traction (and funding) by focusing on detailed analytics. It offers excellent Share-of-Voice capabilities and prompt-level insights, making it easier to pinpoint exactly where you are losing ground. Brand24 has also evolved with its “Chatbeat” module (and similar AI features), leveraging its massive history of media monitoring to bring reliability to AI tracking.

Serpstat’s LLM Brand Monitor is another strong contender here, offering automated scanning and risk detection. These tools are best for teams that need to report monthly progress on specific KPIs like SOV.

Enterprise-grade platforms (compliance, scale, and governance)

At the enterprise level, the biggest difference isn’t just more charts—it’s governance and brand safety.

Semrush (Enterprise AIO / AI Visibility Toolkit) is a heavyweight here. If you are already in the Semrush ecosystem, the integration is seamless. They provide deep data on prompt trends and sentiment. Profound and Riff Analytics are excellent for organizations where compliance is key—ensuring that AI responses aren’t just visible, but accurate and legally safe.

Scrunch offers persona-driven visibility, which is powerful for large brands targeting specific audience segments. These tools often include API access and custom reporting suitable for the C-suite.

Vertical-specific notes: e-commerce & D2C vs SaaS/services

Your needs will change based on what you sell.

- For E-commerce/D2C: You need product-level tracking. Ranketta is a tool to watch here; it focuses on product visibility and citations, helping brands understand if their specific SKUs are being recommended.

Prompts to test: “Best running shoes under $100”, “Alternatives to Nike Pegasus”, “Reviews for [Product Name]”.

- For SaaS/Services: You care about “Best of” lists and solution comparisons. General tools like Peec AI or Semrush work well here.

Prompts to test: “Top CRM for small business”, “[Competitor] vs [Your Brand]”, “Is [Your Brand] secure?”.

Feature comparison table (best fit, multi-model coverage, sentiment, citation mapping, alerts)

| Tool | Best For | Multi-Model | Sentiment | Citation Mapping | Alerts |
|---|---|---|---|---|---|
| Otterly.ai | SMB / Starters | ✅ | ✅ | Basic | ✅ |
| Peec AI | Mid-Market Growth | ✅ | ✅ (Advanced) | ✅ | ✅ |
| Semrush AIO | Enterprise / SEO Pros | ✅ | ✅ | ✅ | ✅ |
| Ranketta | E-commerce | ✅ | ✅ | ✅ | ✅ |
| Brand24 | Reputation Mgmt | ✅ | ✅ | ✅ | ✅ |
| Profound | Ent. Compliance | ✅ | ✅ | ✅ | ✅ |

How I pick and implement the best AI brand monitoring tools (a beginner workflow)

Buying the tool is the easy part. Building a workflow that actually improves your rankings is where the work happens. I have seen companies buy expensive software only to log in once a month. To get value, you need to integrate this into your AI SEO tool stack effectively.

Here is the exact 5-step workflow I use to go from “blind” to “optimized” in about two weeks.

Step 1: Set a clear goal (visibility, reputation, or competitive intel)

Don’t try to do everything at once. If my goal is Visibility, I focus on the “Generative Appearance Score” for category prompts. If my goal is Reputation, I set strict alerts for negative sentiment. If it’s Competitive Intel, I obsess over Share of Voice vs. the market leader.

Step 2: Build a prompt library that matches real buyer questions

Most people fail here because they only track their brand name. You need to track the questions buyers ask before they know you exist. I usually spend 60–90 minutes building a first draft. Try these templates:

- Category Discovery: “What are the best [Your Industry] tools for [Persona]?”

- Comparison: “[Competitor A] vs [Competitor B] vs [Your Brand]”

- Alternatives: “Free alternatives to [Major Competitor]”

- Specific Feature: “Which [Industry] software has the best [Feature]?”
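The templates above can be expanded into a tagged prompt library with a few lines of code. This is only a sketch: the placeholder names and the sample values are my own, and most monitoring tools will let you paste or import the resulting list rather than run code.

```python
from itertools import product

# Templates adapted from the list above; placeholder names are assumptions.
TEMPLATES = {
    "category": "What are the best {industry} tools for {persona}?",
    "alternatives": "Free alternatives to {competitor}",
    "feature": "Which {industry} software has the best {feature}?",
}


def build_prompts(templates: dict[str, str], values: dict[str, list[str]]) -> list[dict]:
    """Fill each template with every combination of the placeholders it uses."""
    prompts = []
    for tag, template in templates.items():
        # Only vary the placeholders that actually appear in this template.
        keys = [k for k in values if "{" + k + "}" in template]
        for combo in product(*(values[k] for k in keys)):
            text = template.format(**dict(zip(keys, combo)))
            prompts.append({"tag": tag, "prompt": text})
    return prompts


# Hypothetical values for a payroll product.
values = {
    "industry": ["payroll"],
    "persona": ["startups", "agencies"],
    "competitor": ["BrandB"],
    "feature": ["reporting"],
}
library = build_prompts(TEMPLATES, values)
for item in library:
    print(item["tag"], "|", item["prompt"])
```

Tagging each prompt by template type makes it easier to report later on where you are winning (for example, comparisons) and where you are missing (for example, category discovery).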

Step 3: Choose models and run a baseline scan

Select the models that matter most to your audience (usually ChatGPT, Gemini, and Perplexity). Run your initial scan. Important habit: I always freeze a “Baseline Set” of 50 prompts that I never change. This allows me to track true progress over time without skewing the data by adding easier prompts later. Note the date and model versions, as an update to GPT-4o or Claude 3.5 can shift results overnight.
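A simple way to enforce the “never change the baseline” habit is to store the prompt set with a fingerprint, the scan date, and the model versions you noted. The sketch below is one possible approach. The record layout is my own invention, and the model labels are free-text notes, not official version identifiers.

```python
import hashlib
from datetime import date


def _fingerprint(prompts: list[str]) -> str:
    """Short hash of the de-duplicated, sorted prompt set."""
    ordered = sorted(set(prompts))
    return hashlib.sha256("\n".join(ordered).encode("utf-8")).hexdigest()[:12]


def freeze_baseline(prompts: list[str], models: dict[str, str], scan_date: date) -> dict:
    """Build a baseline record that captures exactly what was scanned and when."""
    return {
        "scan_date": scan_date.isoformat(),
        "models": dict(models),  # free-text labels you note at scan time
        "prompts": sorted(set(prompts)),
        "fingerprint": _fingerprint(prompts),
    }


def matches_baseline(record: dict, prompts: list[str]) -> bool:
    """True if the prompt set is identical to the frozen baseline."""
    return _fingerprint(prompts) == record["fingerprint"]


# Hypothetical two-prompt baseline (a real one would hold around 50).
baseline = freeze_baseline(
    ["Top CRM for small business", "Free alternatives to BrandB"],
    {"chatgpt": "GPT-4o", "claude": "Claude 3.5"},
    date(2025, 1, 6),
)
print(matches_baseline(baseline, ["Free alternatives to BrandB", "Top CRM for small business"]))
print(matches_baseline(baseline, ["Top CRM for small business", "An easier prompt added later"]))
```

The first check passes because prompt order does not matter. The second fails, which flags that a later scan is no longer comparable to the baseline.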

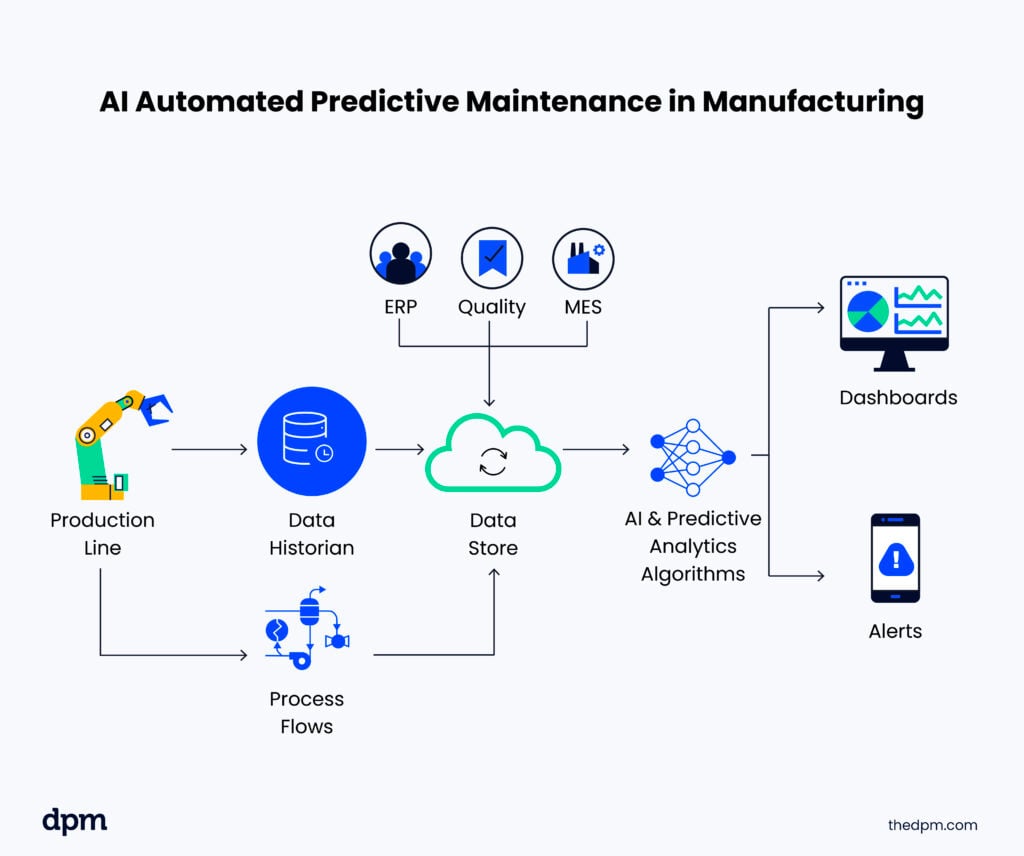

Step 4: Turn insights into content fixes (citations, entity clarity, and pages to improve)

This is where the magic happens. If you notice Perplexity is citing a competitor’s blog post for a definition, that’s a content gap. If ChatGPT says your pricing is “expensive” when it’s actually mid-tier, your pricing page might be unclear to the crawler.

Use these insights to update your “About” page, refresh your FAQs, and ensure your entity signals (who you are, what you do) are crystal clear. I often use monitoring data to inform briefs, then use an AI article generator to help draft the comprehensive, structured content that LLMs prefer to cite.

Step 5: Reporting cadence and a simple dashboard for beginners

Don’t overcomplicate reporting. I recommend a weekly quick check (15 mins) to look for alerts, and a monthly deep dive (1 hour) to report on trends.

Your One-Page Dashboard:

- Top Level: Overall Visibility Score (Current vs Last Month).

- Wins: 3 prompts where you gained a recommendation.

- Losses/Risks: Any negative sentiment or lost citations.

- Action: 3 pages to update this month based on the data.
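The dashboard above boils down to comparing two scans. Here is a minimal sketch, assuming you can export per-prompt appearance rates as a simple mapping. The 10-point threshold reflects the “double-digit shifts” rule of thumb from the glossary, and all the numbers are made up.

```python
def monthly_delta(previous: dict[str, float], current: dict[str, float],
                  threshold: float = 0.10) -> dict:
    """Compare per-prompt appearance rates and sort prompts into wins and losses."""
    shared = sorted(previous.keys() & current.keys())
    if not shared:
        raise ValueError("no prompts in common between the two scans")
    wins, losses = [], []
    for prompt in shared:
        # Round first so float noise does not push a change across the threshold.
        change = round(current[prompt] - previous[prompt], 2)
        if change >= threshold:
            wins.append((prompt, change))
        elif change <= -threshold:
            losses.append((prompt, change))
    return {
        "visibility_last_month": round(sum(previous[p] for p in shared) / len(shared), 2),
        "visibility_now": round(sum(current[p] for p in shared) / len(shared), 2),
        "wins": wins,
        "losses": losses,
    }


# Hypothetical appearance rates per prompt.
last_month = {"best crm": 0.40, "crm for startups": 0.20, "acme vs brandb": 0.80}
this_month = {"best crm": 0.60, "crm for startups": 0.20, "acme vs brandb": 0.60}
report = monthly_delta(last_month, this_month)
print(report)
```

Here the overall score is flat, yet one prompt gained 20 points and another lost 20. That is exactly the kind of movement a single top-level number hides, and why the dashboard lists wins and losses separately.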

Common mistakes when tracking brand mentions in LLMs (and how I fix them)

I made plenty of mistakes when I started tracking GEO metrics. Here are the most common ones so you can avoid them:

- Mistake: Relying on a single prompt iteration.

Fix: LLM output varies from run to run. Always use tools that run the prompt 3–5 times and average the results.

- Mistake: Ignoring the “Why”.

Fix: Don’t just look at the score. Read the actual answer text to see why the competitor was chosen (e.g., “cited for better security features”).

- Mistake: Tracking only your brand name.

Fix: 80% of your tracking should be unbranded category keywords (e.g., “best CRM”) to capture new demand.

- Mistake: Forgetting about personalization.

Fix: Remember that real user results vary by location and history. Treat your tool’s data as a “clean slate” baseline, not absolute truth.

- Mistake: No action loop.

Fix: Data without action is vanity. Assign an owner who is responsible for updating content based on the findings.
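To check the 80% unbranded guideline against your own prompt library, a quick audit like the one below can help. It is a rough sketch with made-up prompts and a simple substring match, so brand names that are also common words would need manual review.

```python
def unbranded_share(prompts: list[str], brand_terms: list[str]) -> float:
    """Fraction of prompts that do not contain any of your own brand terms."""
    if not prompts:
        raise ValueError("need at least one prompt")
    lowered = [term.lower() for term in brand_terms]
    unbranded = [p for p in prompts if not any(t in p.lower() for t in lowered)]
    return len(unbranded) / len(prompts)


# Hypothetical prompt library for a brand called "Acme".
prompt_library = [
    "best CRM",
    "Top CRM for small business",
    "Free alternatives to BrandB",
    "Which CRM software has the best reporting?",
    "Is Acme secure?",
]
share = unbranded_share(prompt_library, ["Acme"])
print(f"{share:.0%} of prompts are unbranded")  # 80% of prompts are unbranded
```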

Mistake-to-fix checklist

- Prompt Variance: Did you test slightly different phrasings? (Fix: Use variations).

- Citation Blindness: Are you ignoring sources? (Fix: Map top sources and try to get mentioned there).

- Sentiment nuances: Is “cheap” bad or good? (Fix: Manually review sentiment flags).

- Cadence: Are you checking sporadically? (Fix: Set a calendar reminder).

- Integration: Is this data siloed? (Fix: Share reports with the content team).

FAQs: best AI brand monitoring tools and GEO basics

What is an AI brand monitoring tool for LLMs?

It is software that automates the process of asking questions to AI models (like ChatGPT) to see if and how they mention your brand. It tracks frequency, sentiment, and the context of your appearance in generated answers.

Which features are essential in LLM brand monitoring tools?

The must-haves are multi-model coverage (tracking more than just ChatGPT), sentiment analysis (knowing if mentions are positive), citation tracking (knowing the source), and trend alerts (notifying you of spikes or drops).

Which tools are best for different business sizes?

For SMBs or single marketers, Otterly.ai or similar lightweight tools are best. For mid-market growth teams needing benchmarks, Peec AI or Brand24 are great choices. For enterprises needing compliance and governance, Semrush Enterprise or Profound are ideal.

Conclusion: My 30-day plan to improve how LLMs mention your brand

Search is shifting from “Zero-Click” to “Answer-First.” If you aren’t monitoring your presence in LLMs, you are flying blind in one of the fastest-growing discovery channels. But you don’t need to overcomplicate it.

Here is your 30-day plan:

- Week 1: Pick a tool (start with a free trial of an SMB or Mid-market option) and build your 50-prompt baseline.

- Week 2: Run your first full scan. Identify the “low-hanging fruit”—prompts where you are mentioned but not recommended.

- Week 3: Update your core entity pages (About, Homepage, Product) to clarify the facts the AI got wrong.

- Week 4: Re-scan and report on the delta.

Start small, be consistent, and treat this as a long-term reputation strategy. Measure what’s happening, decide on the fix, update your content, and retest. It’s a brave new world for search—time to get visible.