Finding Your AI Rank: The Best LLM Monitoring Tools to Track Your Presence in LLMs

Introduction: Why I’m tracking “AI rank” now (and what you’ll get from this guide)

It happened on a Tuesday. A prospect mentioned they had asked ChatGPT for a shortlist of enterprise software in our category, and we weren’t on it. We were ranking #1 on Google for the matching category keyword, but in the AI answer, we were invisible.

That was the moment I realized traditional rank tracking wasn’t enough anymore. If you are in SEO or marketing today, you are likely facing the same anxiety: customers are having conversations with AI models about your products, and you have no idea what is being said.

In this guide, I’m cutting through the hype to give you a practical system for “LLM visibility.” We will cover exactly what metrics matter (it’s not just rankings), a comparison of the best LLM monitoring tools on the market, a decision framework to help you choose the right one for your budget, and a step-by-step roadmap to start tracking your AI presence this week. This isn’t about predicting the future; it’s about managing your brand reputation right now.

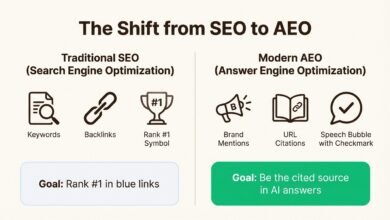

What are LLM monitoring tools—and why traditional SEO can’t fully measure AI visibility

To understand why we need new tools, we have to look at how the mechanism of discovery has changed. Traditional SEO is like stocking a shelf: you optimize a page, Google indexes it, and it sits in a relatively stable position (rank) until a competitor bumps it down.

LLM answers, however, are like a conversation. They are dynamic. The answer ChatGPT gives to “Best CRM for small business” might differ from the answer it gives to “Best CRM for small business under $50.” It varies by model (Claude vs. Gemini), by user location, and even from one run of the same prompt to the next.

LLM monitoring tools (sometimes called GEO tools or AI visibility platforms) are designed to handle this volatility. Instead of checking a static rank, they simulate thousands of queries to identify patterns. They track how often your brand is mentioned, whether the sentiment is positive, and—crucially—whether the AI provides a citation (link) back to your site. Traditional rank trackers simply cannot parse the conversational nuance or the “share of voice” across fifty different generated responses.
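To make “share of voice” concrete, here is a minimal Python sketch of the counting step these tools automate. It assumes you have already collected the answer texts for one prompt set. The sample answers are made up, and the naive case-insensitive substring match is an illustrative assumption, not how any particular tool detects mentions (it would, for example, count “Wave” inside “microwave”).

```python
from collections import Counter


def share_of_voice(responses: list[str], brands: list[str]) -> dict[str, float]:
    """Each brand's share of all brand mentions across a set of AI answers.

    A brand is counted at most once per answer, using a naive
    case-insensitive substring match.
    """
    mentions: Counter = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                mentions[brand] += 1
    total = sum(mentions.values())
    return {brand: (mentions[brand] / total if total else 0.0) for brand in brands}


# Hypothetical answers to the same invoicing prompt, collected over several runs.
responses = [
    "For freelancers, FreshBooks and QuickBooks are solid choices.",
    "Consider Wave if you need a free tier; FreshBooks is also popular.",
    "QuickBooks is the most common option.",
    "Zoho Invoice and Wave both offer free plans.",
]
sov = share_of_voice(responses, ["FreshBooks", "QuickBooks", "Wave", "Zoho"])
for brand, share in sov.items():
    print(f"{brand}: {share:.0%}")
```

With these hypothetical answers, FreshBooks, QuickBooks, and Wave each receive 2 of the 7 brand mentions (about 29%), and Zoho receives 1 (about 14%).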

Quick answer: LLM monitoring tools in one minute

What they are: Software that tracks brand mentions, citations, and sentiment in AI-generated responses (ChatGPT, Gemini, Perplexity, etc.).

What they do: They automate the process of asking AI models questions about your industry to calculate your “Share of Voice” and “Recommendation Rate.”

Who needs them: SEOs, Brand Managers, and PR teams who need to quantify their visibility in AI search.

Why “AI rank” isn’t a single number

If you are looking for a simple “We are #3” metric, you might be disappointed. AI visibility is probabilistic, not absolute.

Consider this real-world variability:

- Prompt A: “Best invoicing software for freelancers.” → Model lists FreshBooks and QuickBooks.

- Prompt B: “Best invoicing software for freelancers looking for a free tier.” → Model lists Wave and Zoho.

If you were Wave and only tracked Prompt A, you’d think you were invisible. If you only tracked Prompt B, you’d think you were winning. The goal of these tools isn’t to give you a single rank, but to measure your presence across hundreds of these variations over time.

The metrics I watch: how to measure presence, quality, and business impact in LLM answers

When I report to stakeholders, I don’t dump raw data on them. I group metrics into three buckets: Presence, Quality, and Evidence. This helps leadership understand if we are seen, how we are seen, and why.

Here is the measurement model I use:

| Metric Bucket | What it tells me | How to improve it |
|---|---|---|
| Presence (Mentions & Share of Voice) | “Are we part of the conversation?” | Increase brand awareness, PR, and co-occurrences in industry text. |
| Quality (Sentiment & Accuracy) | “Is the AI telling the truth about our pricing/features?” | Correct public data sources, update schema, and fix misinformation on high-authority sites. |
| Evidence (Citations & Sources) | “Is the AI linking to us as the proof?” | Publish structured data, original research, and high-authority content. |

Presence metrics: mentions, recommendation rate, share of voice

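This is your baseline. If I run 100 prompts related to “best project management tools,” how many times does my brand appear? Recommendation Rate is often the key KPI here, and it’s simple math: if you appear in 40 out of 100 answers, you have a 40% recommendation rate for that topic category. Share of Voice adds the competitive view: your mentions as a proportion of all mentions of you and your tracked competitors across the same prompts.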
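The recommendation-rate arithmetic is simple enough to script. This sketch assumes each answer has already been reduced to a yes/no “did our brand appear?” flag; the 40-of-100 split mirrors the example above and is not real data.

```python
def recommendation_rate(appeared: list[bool]) -> float:
    """Percentage of AI answers in which the brand appeared."""
    if not appeared:
        raise ValueError("need at least one answer")
    return 100 * sum(appeared) / len(appeared)


# Hypothetical results for 100 "best project management tools" prompts:
# the brand appeared in 40 answers and was absent from 60.
results = [True] * 40 + [False] * 60
rate = recommendation_rate(results)
print(f"Recommendation rate: {rate:.0f}%")  # Recommendation rate: 40%
```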

Quality & risk metrics: sentiment, accuracy, and “wrong-but-confident” answers

This is where things get risky. I’ve seen AI models confidently state that a SaaS product has a “free plan” when that plan was sunsetted two years ago. Monitoring for Sentiment and Hallucinations is critical for brand safety. If you see a spike in negative sentiment, it often means the model, or the web search it draws on, is picking up recent negative reviews or press coverage.
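One low-tech way to catch “wrong-but-confident” answers is to keep a short list of claims you know are outdated and scan each answer for them. The sketch below is a hypothetical example: `ExampleApp`, the retired free plan, and the old $19 price are placeholders. Simple regex matching will miss paraphrases and would also flag an answer that correctly says there is no free plan, so treat it as a first-pass filter for human review rather than a hallucination detector.

```python
import re

# Hypothetical "known outdated" claims: phrases that would be wrong if an
# AI answer stated them about your product today.
OUTDATED_CLAIMS = {
    "free plan": r"\bfree (plan|tier)\b",
    "old price": r"\$19\s*(/|per)\s*month",
}


def flag_inaccuracies(answer: str) -> list[str]:
    """Return the names of outdated claims whose pattern appears in the answer."""
    return [name for name, pattern in OUTDATED_CLAIMS.items()
            if re.search(pattern, answer, flags=re.IGNORECASE)]


answer = "ExampleApp has a Free plan and paid tiers starting at $29 per month."
flags = flag_inaccuracies(answer)
print(flags)  # ['free plan']
```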

Evidence & trust: citations, sources, and “why the model picked you”

For SEOs, this is the holy grail. We want the AI to not just mention us, but to cite us. Tools that track Citation Frequency show you which of your URLs are serving as the “source of truth” for the AI. If your product page is structured clearly (great headers, schema, clear pricing tables), it is far more likely to be cited than a vague marketing landing page.
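Citation Frequency can be approximated the same way if your tool exports answer texts that include source links. This sketch assumes plain-text answers with inline URLs and uses the reserved `example.com` domain as a stand-in for your site; it counts each cited URL on that domain, including subdomains such as `www.`. The URL regex is deliberately rough.

```python
import re
from collections import Counter
from urllib.parse import urlparse

# Rough URL matcher: stops at whitespace and common closing brackets.
URL_PATTERN = re.compile(r"https?://[^\s)\]>]+")


def citation_frequency(responses: list[str], domain: str) -> Counter:
    """Count how often each URL on `domain` (or its subdomains) is cited."""
    counts: Counter = Counter()
    for text in responses:
        for url in URL_PATTERN.findall(text):
            url = url.rstrip(".,;:")  # drop trailing sentence punctuation
            host = urlparse(url).hostname or ""
            if host == domain or host.endswith("." + domain):
                counts[url] += 1
    return counts


# Hypothetical answers with inline source links.
responses = [
    "Pricing starts at $10 per seat (source: https://example.com/pricing).",
    "See https://www.example.com/features and https://review-site.test/example.",
    "Per https://example.com/pricing, there is no free plan.",
]
cited = citation_frequency(responses, "example.com")
for url, count in cited.most_common():
    print(count, url)
```

Here the pricing page is cited twice and the features page once, which shows which of your URLs are currently acting as the “source of truth.”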

Categories of best LLM monitoring tools (and what each category is good at)

The market is messy right now. You have brand-new startups, open-source developer tools, and legacy SEO giants all claiming to do the same thing. In my experience, they fall into three main buckets:

1. GEO / AI visibility platforms (brand perception at scale)

Best for: Brand managers and Enterprise SEOs.

These tools focus on the “big picture.” They simulate thousands of queries to give you a statistically significant view of your brand health. For example, Evertune AI reportedly processes over one million AI responses per brand monthly to determine visibility signals. They are less about “fixing one prompt” and more about “fixing brand perception.”

2. Real-time trackers & prompt-level analytics (monitoring what customers actually ask)

Best for: SEOs and Content Leads.

These tools function like a rank tracker for LLMs. You give them specific keywords (prompts), and they track your visibility over time. LLM Tracker and Sitechecker fit here. They are great for spotting immediate drops in visibility on your most important commercial terms.

3. Open-source observability (for teams building with LLM APIs)

Best for: Engineers and Product Teams.

Don’t confuse these with SEO tools. Platforms like Helicone and Langfuse are for monitoring your own AI applications (e.g., your company’s chatbot). They track latency, cost, and output quality. While powerful, they won’t tell you what ChatGPT thinks of your brand unless you build a custom scraper on top of them.

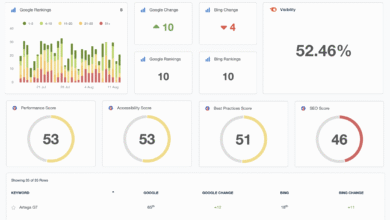

Best LLM monitoring tools: comparison table + what each tool is best for

I evaluated these tools based on model coverage, metrics depth (do they just count mentions or do they analyze sentiment?), ease of setup, and reporting capabilities. Remember, “best” depends entirely on whether you are trying to impress a CMO with share-of-voice charts or help a content team fix a specific citation.

Comparison table: the best LLM monitoring tools at a glance

| Tool | Category | Models Covered (Examples) | Core Metrics | Best For |
|---|---|---|---|---|
| Evertune AI | GEO / Brand Visibility | ChatGPT, Gemini, Claude | Share of Voice, Topic Relevance, Sentiment | Enterprise Brand Management |
| Ranketta | GEO / Brand Visibility | ChatGPT, Gemini, Perplexity | Recommendation Rank, Visibility Score | E-commerce & D2C Brands |
| LLM Tracker | Real-time Tracking | 50+ Models | Mentions, Sentiment, Context | Agencies & High-Volume Tracking |
| Otterly.ai | SEO Extension | ChatGPT, Claude, Gemini | Mentions, Citations | SEOs using Semrush workflows |
| Sitechecker | SEO Suite | Major LLMs | Prompt Rankings, Citations | SMBs needing all-in-one SEO |
| Helicone | Observability | Any via API Proxy | Latency, Cost, Output Logs | Dev Teams building AI apps |

GEO/visibility picks: Evertune and Ranketta

If your primary goal is benchmarking, Evertune stands out for its volume. By processing huge datasets, it smooths out the noise of individual random answers. Ranketta is a newer player (launched in 2025 with €1M pre-seed funding) that focuses specifically on measuring visibility for e-commerce and D2C brands. If I were running a skincare brand, I’d look at Ranketta to see how often my Vitamin C serum is recommended against competitors.

Prompt-level trackers: LLM Tracker, Sitechecker, and Otterly.ai

LLM Tracker is a powerhouse for coverage, monitoring over 50 models with high uptime guarantees. It’s great if you need to know if a specific niche model is hallucinating about your product. Sitechecker integrates citation tracking well, showing you exactly which sources the AI is pulling from. Otterly.ai is interesting because of its integration with Semrush: if you already live in Semrush for SEO, it streamlines your workflow significantly.

Social/listening crossover: Brand24 Chatbeat

Brand24 has pivoted smartly with Chatbeat. They monitor brand mentions at a massive scale (aggregating billions of mentions). This is less about “SEO” and more about listening. I use this when I want to know if a PR crisis is bleeding into AI answers.

Developer observability: Helicone, Langfuse, and Arize Phoenix

If you are a developer, Helicone is a favorite because of its “one-line code change” integration: you just change your API base URL. Langfuse and Arize Phoenix offer deeper tracing for complex chains. Again, these are for monitoring your apps, not your external brand reputation.

How I choose the right tool: a decision framework for beginners

Choosing software is exhausting. To save you time, here is the mental framework I use. It depends entirely on your role and your available time.

Scenario mapping

- Scenario A: The SMB Marketing Team of One.

Goal: “Just tell me if we are showing up.”

Recommendation: Sitechecker or Otterly.ai. You don’t have time for a complex new platform. You need something that fits into your existing SEO weekly check-in.

- Scenario B: The Enterprise Brand Lead.

Goal: “I need to show the Board our Share of Voice vs. Competitors.”

Recommendation: Evertune or Ranketta. You need polished dashboards, trend lines over time, and statistically significant data, not just screenshots of ChatGPT.

- Scenario C: The Product Engineer.

Goal: “Why is our internal chatbot hallucinating?”

Recommendation: Helicone or Langfuse. You need logs, traces, and cost analytics.

Questions I ask on every demo

- How do you sample prompts? (Do you run the same prompt 10 times to average the results, or just once?)

- How do you handle personalization? (Are these results generic, or location-specific?)

- Can I export the data? (Dashboards are nice, but I usually need a CSV to merge with my own data.)

- What is your model update frequency? (GPT-4 gets updated often; does your tool reflect the latest version?)
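The first demo question matters because a single run of a prompt is one sample from a distribution. This sketch shows the idea of repeated sampling: run the same prompt several times and report the fraction of answers that mention the brand. `ask_model` is a hypothetical callable; the stand-in below ignores the prompt and cycles through canned answers so the example is deterministic. You would swap in your own API client.

```python
from itertools import cycle
from typing import Callable


def sampled_presence(ask_model: Callable[[str], str], prompt: str,
                     brand: str, runs: int = 10) -> float:
    """Run one prompt `runs` times; return the fraction of answers naming `brand`."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    hits = sum(brand.lower() in ask_model(prompt).lower() for _ in range(runs))
    return hits / runs


# Hypothetical stand-in for a real model call: cycles through canned answers.
_canned = cycle([
    "Top picks: Asana, Trello, ExampleApp.",
    "Top picks: Asana, Monday.com.",
    "Top picks: ExampleApp, ClickUp.",
    "Top picks: Trello, Notion.",
    "Top picks: ExampleApp, Asana.",
])


def fake_model(prompt: str) -> str:
    return next(_canned)


presence = sampled_presence(fake_model, "best project management tools", "ExampleApp")
print(f"Presence over 10 runs: {presence:.0%}")
```

With ten runs over the five canned answers, `ExampleApp` appears in six, giving 60%. A single run would have reported either 100% or 0%.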

Implementation roadmap: how I set up LLM monitoring + the SEO/content loop that improves results

Buying the tool is the easy part. The hard part is building a workflow that actually improves your visibility. Here is the 4-step process I use. It takes about 2-4 weeks to get fully running.

Step 1: Pick the models and surfaces your customers actually use

Don’t try to track everything. If you are B2B, focus on ChatGPT (Plus & Team), Claude, and Perplexity. If you are B2C/Local, you must include Google Gemini (since it integrates with Maps/Flights) and potentially Bing Copilot. Start with the big 3; expand later.

Step 2: Build a prompt library that mirrors real buyer questions

This is where most people fail—they write marketing prompts like “Tell me about [My Brand].” Real users don’t do that. Build a library of 20-50 prompts based on intent:

- Discovery: “Best [industry software] for [use case]”

- Comparison: “[My Brand] vs [Competitor] pros and cons”

- Pricing: “How much does [My Brand] actually cost?”

- Safety: “Is [My Brand] legitimate?”
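A prompt library like this is easy to generate from templates, which keeps wording consistent between runs. The sketch below reuses the four intent templates above; the brand, category, use cases, and competitors are hypothetical placeholders. It relies on `str.format` ignoring keyword arguments a template does not use, and it needs at least one use case and one competitor to produce any prompts.

```python
from itertools import product

# Intent templates from the list above; {placeholders} are str.format fields.
TEMPLATES = {
    "discovery": "Best {category} for {use_case}",
    "comparison": "{brand} vs {competitor} pros and cons",
    "pricing": "How much does {brand} actually cost?",
    "safety": "Is {brand} legitimate?",
}


def build_prompt_library(brand: str, category: str, use_cases: list[str],
                         competitors: list[str]) -> dict[str, list[str]]:
    """Expand every template over all use-case/competitor pairs, deduplicated by intent."""
    library: dict[str, set[str]] = {intent: set() for intent in TEMPLATES}
    for use_case, competitor in product(use_cases, competitors):
        for intent, template in TEMPLATES.items():
            # str.format ignores keyword arguments the template doesn't use.
            library[intent].add(template.format(
                brand=brand, category=category,
                use_case=use_case, competitor=competitor))
    return {intent: sorted(prompts) for intent, prompts in library.items()}


# Hypothetical inputs for an invoicing product.
library = build_prompt_library(
    brand="ExampleApp",
    category="invoicing software",
    use_cases=["freelancers", "agencies"],
    competitors=["QuickBooks", "Wave"],
)
for intent, prompts in library.items():
    for prompt in prompts:
        print(f"{intent}: {prompt}")
```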

Step 3: Capture a baseline and set reporting you can sustain

Run your initial batch. Do not panic if the results are bad. This is your baseline. I recommend a simple monthly report: Recommendation Rate % (Are we showing up?) and Sentiment Score (Is the AI describing us positively?). Don’t over-complicate it with 50 charts.
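The two-metric monthly report can be computed from per-answer labels. This sketch assumes each answer has been labeled with whether the brand appeared and a sentiment of -1, 0, or +1 (by your tool or by hand); that scale is an assumption, since tools score sentiment differently. Sentiment is averaged only over answers that mention the brand, and the data is hypothetical.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class LabeledAnswer:
    mentioned: bool  # did the brand appear in this answer?
    sentiment: int   # assumed scale: -1 negative, 0 neutral, +1 positive


def monthly_report(answers: list[LabeledAnswer]) -> dict[str, float]:
    """Recommendation Rate % plus average sentiment of answers that mention us."""
    if not answers:
        raise ValueError("no answers to report on")
    rate = 100 * sum(a.mentioned for a in answers) / len(answers)
    scores = [a.sentiment for a in answers if a.mentioned]
    # Report neutral (0.0) if the brand never appears.
    sentiment = float(mean(scores)) if scores else 0.0
    return {"recommendation_rate_pct": rate, "sentiment_score": sentiment}


# Hypothetical labeled answers for the baseline month and the current month.
baseline = monthly_report([
    LabeledAnswer(True, 1), LabeledAnswer(False, 0),
    LabeledAnswer(True, -1), LabeledAnswer(False, 0),
])
current = monthly_report([
    LabeledAnswer(True, 1), LabeledAnswer(True, 1),
    LabeledAnswer(True, 0), LabeledAnswer(False, 0),
])
for metric in baseline:
    print(f"{metric}: {baseline[metric]:.1f} -> {current[metric]:.1f}")
```

Keeping the report to one baseline-versus-current line per metric makes month-over-month movement easy to read without extra charts.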

Step 4: Turn insights into SEO + content actions

This is the money step. If you find you are not being cited for a specific topic, you likely have a content gap. You need to create authoritative, structured content that directly answers those questions. This is where using an advanced AI article generator can help you scale—not to spam, but to quickly build the comprehensive, structured knowledge base that LLMs prefer to cite. Update your FAQs, add schema markup, and refresh your pricing pages.
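For the “add schema markup” step, one common option for FAQ content is schema.org `FAQPage` markup in JSON-LD. This sketch generates it from question-and-answer pairs; the product name, price, and answer text are hypothetical placeholders. Markup makes a page easier for machines to parse, but it does not guarantee any model will cite it.

```python
import json


def faq_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2)


markup = faq_jsonld([
    ("Does ExampleApp have a free plan?",
     "No. The free plan was retired; paid plans start at $29 per month."),
])
# Embed the output in the page's HTML inside a JSON-LD script tag.
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```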

Common mistakes (and fixes) + FAQs + my next steps checklist

Common mistakes and fixes

- Mistake: One-off screenshots.

Fix: AI answers change. Never rely on a single screenshot. Use a tool that tracks trends over weeks.

- Mistake: Ignoring citations.

Fix: Mentions are vanity; citations are sanity. Focus on getting your URLs linked as the source.

- Mistake: No clear owner.

Fix: Assign this to SEO or Content. If “everyone” owns it, nobody owns it.

FAQs about LLM monitoring tools

What are LLM monitoring tools?

They are platforms that track how your brand appears in AI-generated responses, measuring metrics like mentions, sentiment, and citations.

Why can’t traditional SEO tools meet AI visibility needs?

Traditional tools track static list positions. AI answers are conversational and dynamic, requiring prompt-based simulation to measure accurately.

How do brands benefit from GEO tools?

They allow you to protect your reputation (spotting hallucinations) and grow your market share by optimizing content to be recommended more often.

Conclusion: my 3 takeaways + next actions I’d do this week

We are in the early innings of Generative Engine Optimization. The brands that start measuring now will be the ones that define the rules later. Here is my final checklist for you:

- Visibility is probabilistic: Stop looking for a single rank. Look for Share of Voice trends.

- Content structure matters: LLMs cite easy-to-read, authoritative sources.

- Start small: You don’t need enterprise tools to start. Pick 20 prompts and check them manually if you have to—just start measuring.

Your next actions:

- Draft your top 10 “money prompts” (the questions you must win).

- Run a manual check on ChatGPT and Perplexity today to see where you stand.

- If you find gaps, schedule a content refresh sprint to update those topics on your site using high-quality automated tools to speed up the process.