The Authority Tracker: Top LLM rank tracking tools for monitoring your LLM rankings

Introduction: Why I treat LLM visibility like a new “rankings” surface (and how this guide helps)

I distinctly remember the moment the shift became real for me. We were ranking #1 in Google for “best payroll software for startups,” driving consistent leads every day. Then, out of curiosity, I pasted that exact query into ChatGPT. The result? We didn’t even make the list. Our competitor, who was languishing on page two of Google, was the first recommendation.

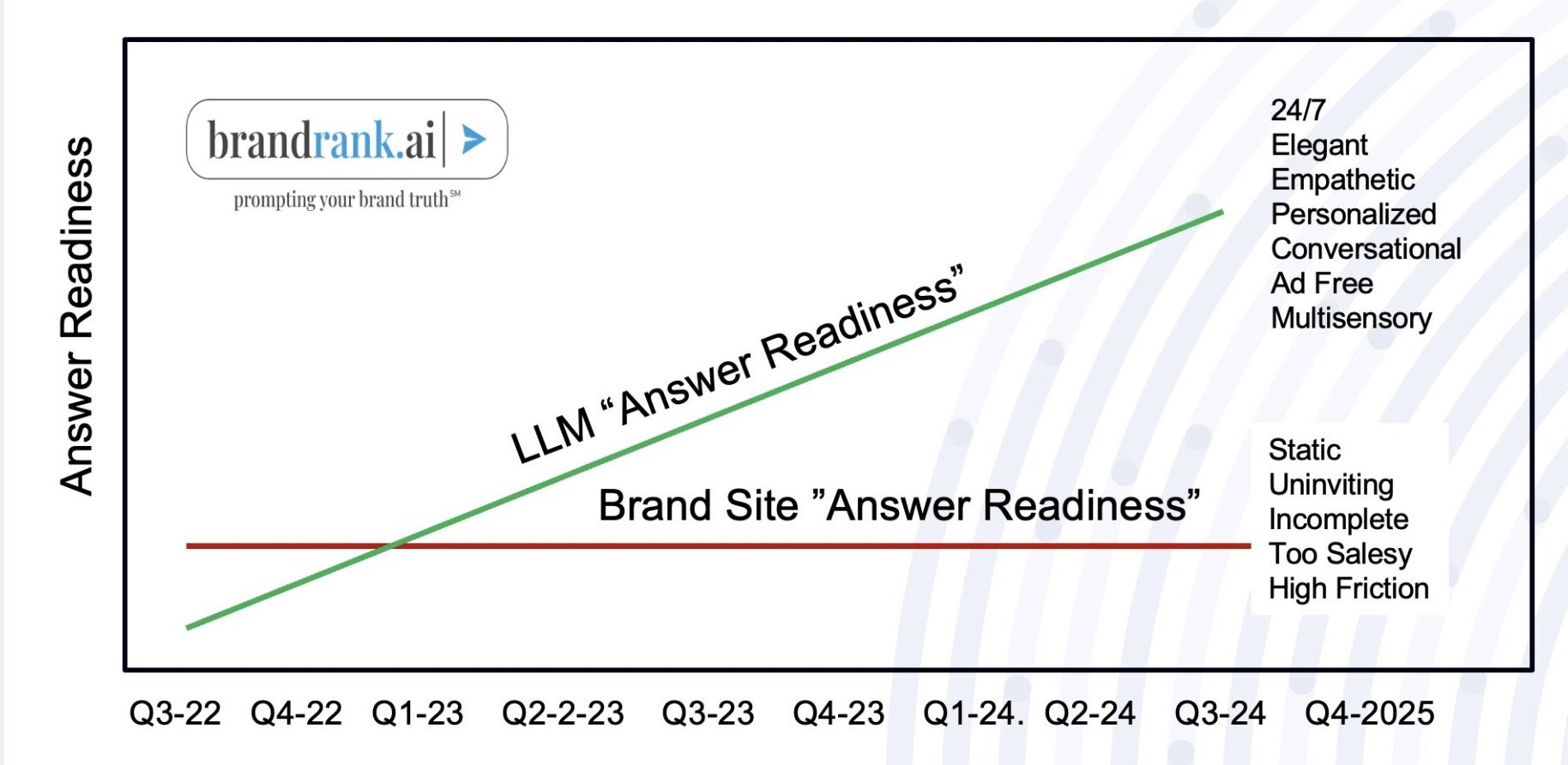

That disconnect is becoming the new normal. Traditional rank trackers are excellent at telling you where you sit on a search engine results page (SERP), but they are completely blind to the conversational results driving a growing 29% of consumer search behavior. If you are invisible in the answer engine, you are invisible to the user.

I’ve noticed that the same page can be cited one day and ignored the next—it’s volatile, probabilistic, and frustratingly opaque. That is why I wrote this guide. I’m going to walk you through the emerging landscape of LLM rank tracking tools, explain the critical difference between specialized GEO platforms and SEO suite add-ons, and give you the exact weekly workflow I use to monitor this new visibility surface without losing my mind.

What “LLM rankings” actually mean (and why LLM rank tracking tools aren’t the same as SEO rank trackers)

To understand LLM rank tracking tools, you first have to unlearn how you think about Google rankings. In traditional SEO, a rank is like a thermometer reading: you are either #1 or you aren’t. It is a fixed position at a specific moment in time.

LLM rankings are more like a weather forecast: a probability. Large Language Models (LLMs) generate responses token by token and usually sample each token with some randomness, so their output is non-deterministic. This means if five different users ask Claude or Gemini the same question, they might get slightly different answers. Therefore, “ranking” in this context isn’t a position; it is a frequency.

This shift has birthed two related disciplines: GEO (Generative Engine Optimization) and AEO (Answer Engine Optimization). While SEO focuses on winning the click, GEO focuses on winning the citation and the sentiment within the answer itself. With AI platforms influencing approximately 6.5% of organic traffic in 2025, and projections suggesting LLM traffic may surpass traditional search by 2027, understanding this distinction is no longer optional.

The simplest definition: visibility = mentions + citations + sentiment over repeated prompts

When I look at a dashboard, I’m not looking for a “position.” I’m tracking three specific components that impact business decisions:

- Mentions: How often does the brand name appear in the text? If I ask “best payroll software,” do we exist?

- Citations: Is the model linking back to us? Are they citing our pricing page, a third-party review, or a hallucinated URL?

- Sentiment: Is the mention positive? I’ve seen models mention a brand but describe it as “expensive and complex,” which is arguably worse than not being mentioned at all.

Most sophisticated tools aggregate these into a “Share of Voice” (SoV) metric, comparing your visibility against competitors across hundreds of query variations.
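To make those definitions concrete, here is a minimal sketch of how mention rate, citation rate, and Share of Voice could be computed from a handful of sampled responses. The brand names, the `acmepay.example` domain, and the sample responses are made up for illustration, and the substring matching is deliberately naive. This is not how any particular vendor calculates its scores, and sentiment is left out because it needs a classifier.

```python
# Made-up sample responses; "AcmePay", "RivalOne", and "RivalTwo" are placeholder brands.
RESPONSES = [
    "For startups, RivalOne and RivalTwo are strong picks.",
    "RivalTwo is popular; AcmePay is a cheaper option (https://acmepay.example/pricing).",
    "RivalOne is the usual recommendation for small teams.",
    "AcmePay, RivalOne, and RivalTwo all cover payroll basics.",
]


def mention_rate(responses, brand):
    """Fraction of responses that name the brand at least once."""
    hits = sum(1 for text in responses if brand.lower() in text.lower())
    return hits / len(responses) if responses else 0.0


def citation_rate(responses, domain):
    """Fraction of responses that contain a link to the given domain."""
    hits = sum(1 for text in responses if f"://{domain}" in text.lower())
    return hits / len(responses) if responses else 0.0


def share_of_voice(responses, brands):
    """Each brand's share of all brand mentions (at most one mention per response)."""
    counts = {
        brand: sum(1 for text in responses if brand.lower() in text.lower())
        for brand in brands
    }
    total = sum(counts.values())
    return {brand: (n / total if total else 0.0) for brand, n in counts.items()}


print(mention_rate(RESPONSES, "AcmePay"))
print(citation_rate(RESPONSES, "acmepay.example"))
print(share_of_voice(RESPONSES, ["AcmePay", "RivalOne", "RivalTwo"]))
```

In this toy data AcmePay is mentioned in two of four responses, so its mention rate is 50%, but it holds only two of the eight total brand mentions, so its Share of Voice is 25%. That gap is why the two numbers are worth tracking separately.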

What to measure first as a beginner (so you don’t drown in dashboards)

If I’m starting from zero, I track these metrics before I pay for advanced enterprise features:

- Mention Rate by Prompt Cluster: What percentage of the time do we appear for “bottom of funnel” queries?

- Citation Sources: Which of our pages are actually being referenced? (It’s often not the homepage).

- Competitor Comparison: Are we losing ground to a specific rival in ChatGPT?

- Trend Line: Is our visibility moving up or down over a 30-day period?

- Alerts: Notify me immediately if our mention rate drops by more than 10%.
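The alert rule in the last bullet can be sketched in a few lines. One assumption to flag: the sketch reads "drops by more than 10%" as a relative drop against the previous period (50% falling to 44% is a 12% relative drop), not a drop of ten percentage points. The function name and the default threshold are illustrative, not taken from any tool.

```python
def mention_rate_alert(previous_rate, current_rate, threshold=0.10):
    """Return True when the mention rate fell by more than `threshold`,
    measured relative to the previous period (an assumption, see lead-in)."""
    if previous_rate <= 0:
        # Nothing to drop from, so there is no meaningful relative change.
        return False
    relative_drop = (previous_rate - current_rate) / previous_rate
    return relative_drop > threshold


# Hypothetical weekly mention rates for one prompt cluster.
weekly_rates = [0.52, 0.50, 0.44]
if mention_rate_alert(weekly_rates[-2], weekly_rates[-1]):
    print("Alert: mention rate dropped more than 10% week over week")
```

If you would rather alert on percentage points, replace the relative calculation with `previous_rate - current_rate` and compare that to the threshold directly.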

How LLM rank tracking tools work under the hood: prompts, models, and statistical sampling

So, how do these tools actually gather this data? It’s not as simple as scraping a results page. The mechanics involve running a library of prompts against API endpoints for major models (ChatGPT, Gemini, Claude, Perplexity, etc.).

The process generally looks like this:

- Prompt Library: You upload a list of questions your customers ask (e.g., “top cybersecurity tools”).

- Model Runs: The tool sends these prompts to the selected LLMs. Crucially, it sends them multiple times (sampling).

- Parsing: The tool analyzes the text response to detect brand names and extract URLs (citations).

- Aggregation: It averages the results to tell you, “You appeared in 80% of responses.”

Tools like Evertune reportedly process over one million AI responses per brand monthly just to achieve statistical significance. This scale is necessary because a single run is just anecdotal evidence.
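The four steps above can be sketched roughly as follows. To keep the example self-contained, `fake_model` is a hypothetical stand-in that returns canned answers at random. A real tracker would call each provider's API at that point. The brand names and URLs are placeholders, and this is an illustration of the general loop, not any vendor's implementation.

```python
import random
import re

# Naive URL matcher: stops at whitespace or a closing bracket.
URL_PATTERN = re.compile(r"https?://[^\s)\]]+")


def fake_model(prompt, rng):
    """Hypothetical stand-in for a real LLM API call: picks a canned answer at random."""
    canned = [
        "Top picks: AcmeSec and RivalGuard. Source: https://reviews.example/top-tools",
        "RivalGuard is the most common choice.",
        "AcmeSec is worth a look, see https://acmesec.example/product",
    ]
    return rng.choice(canned)


def sample_prompt(prompt, brand, samples, ask, rng):
    """Run one prompt `samples` times, then aggregate mentions and cited URLs."""
    mentions = 0
    cited_urls = []
    for _ in range(samples):
        answer = ask(prompt, rng)                        # model run
        if brand.lower() in answer.lower():              # parsing: brand mention
            mentions += 1
        cited_urls.extend(URL_PATTERN.findall(answer))   # parsing: citations
    return {
        "prompt": prompt,
        "mention_rate": mentions / samples,              # aggregation
        "cited_urls": cited_urls,
    }


rng = random.Random(7)  # seeded so the demo is repeatable
report = sample_prompt("top cybersecurity tools", "AcmeSec", 20, fake_model, rng)
print(f"{report['mention_rate']:.0%} of responses mentioned AcmeSec")
```

Passing the model call in as the `ask` parameter keeps the sampling and parsing logic independent of any one provider, which is also what makes it easy to test.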

Why repeatability is the whole game (and what ‘significance’ means in practice)

I’ve seen the same prompt give three different “top tools” lists in the span of ten minutes. If you only check once, you are chasing ghosts. Sampling is how we normalize that variance.

There is no perfect number for “statistical significance” in marketing, but confidence comes from consistency. My rule of thumb: if the budget allows, look for tools that run your priority prompt clusters 20–50 times. If you are on a budget, look for tools that run fewer samples but trend them weekly so you can see the direction of travel rather than the noise of the day.
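One way to see why 20–50 runs matters is to put an uncertainty range around the mention rate. The sketch below uses the Wilson score interval, a standard formula for a proportion. That is my choice for illustration, not something the tools in this guide are known to use, and it assumes each run is an independent sample, which is only approximately true for LLM responses.

```python
import math


def wilson_interval(hits, n, z=1.96):
    """Approximate 95% Wilson score interval for a proportion (hits out of n runs)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = hits / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width


# Same observed 80% mention rate, different sample sizes.
for hits, n in [(16, 20), (40, 50)]:
    low, high = wilson_interval(hits, n)
    print(f"{hits}/{n} runs: mention rate plausibly between {low:.0%} and {high:.0%}")
```

With 16 mentions in 20 runs, the plausible range is roughly 58% to 92%, so a week-to-week move of a few points is well inside the noise. At 50 runs the range narrows, which is the practical payoff of deeper sampling.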

What tools can reliably detect (and what they still get wrong)

These tools save massive amounts of time, but they aren’t magic. They can struggle with nuances that a human spots immediately. I still spot-check about 10% of results, especially when brand safety is high stakes.

Common limitations include:

- Hallucinated Citations: The tool might report a link, but when you click it, it’s a 404.

- Implied Recommendations: Sometimes a model recommends a product by describing it without naming it directly; tools often miss this.

- Paraphrasing: If your brand is “Acme Corp” but the model says “the makers of Roadrunner Traps,” a strict keyword matcher might miss the mention.
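The paraphrasing problem can be partly reduced by matching against a list of known aliases instead of a single brand string. Here is a small sketch using the “Acme Corp” example from above. The alias list is something you would maintain by hand, and this still will not catch an implied recommendation that uses none of the listed phrases.

```python
import re


def build_matcher(aliases):
    """Compile one case-insensitive pattern that matches any alias as a whole phrase."""
    # Longest aliases first so "Acme Corp" is preferred over "Acme".
    escaped = sorted((re.escape(a) for a in aliases), key=len, reverse=True)
    return re.compile(r"\b(?:" + "|".join(escaped) + r")\b", re.IGNORECASE)


# Hand-maintained alias list (illustrative).
ACME = build_matcher(["Acme Corp", "Acme", "makers of Roadrunner Traps"])

print(bool(ACME.search("Consider the makers of Roadrunner Traps for this.")))
print(bool(ACME.search("A study of acmeology is unrelated.")))
```

The word boundaries (`\b`) keep a short alias like “Acme” from matching inside a longer unrelated word, which is a common source of false positives with plain substring checks.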

The LLM rank tracking tools landscape: specialized GEO platforms vs SEO suite add-ons

The market has split into two distinct categories. Understanding which one you need will save you from overspending or under-equipping your team.

- Specialized GEO Platforms: (e.g., xSeek, Peec AI, Profound, Evertune). These are built solely for AI visibility. They offer deep prompt libraries, sentiment analysis, and rigorous sampling.

- SEO Suite Add-ons: (e.g., Semrush AI Visibility, SE Ranking, Nightwatch). These are modules added to existing SEO platforms. They are great for unified reporting but often have lighter sampling methodologies.

When a specialized tool is worth it

I’d recommend a specialized platform if you are in a highly competitive category (like SaaS or FinTech) where a 1% shift in market share is worth millions. If you need to track share of voice across multiple product lines and require granular citation mapping to defend your brand reputation, the specialized tools offer the depth you need.

When an SEO suite add-on is the pragmatic choice

For many mid-sized businesses, an add-on is perfectly adequate. If your team already lives inside Semrush or SE Ranking, adding an AI visibility module reduces tool sprawl. The best tool is the one your team actually checks weekly. If a separate login means the data gets ignored, stick with the suite.

What to look for in LLM rank tracking tools: a beginner-friendly checklist (features that matter)

It’s easy to get sold on flashy features like “predictive AI” that you’ll never use. Here is the checklist I use to evaluate vendors, focused on what actually helps you do the job.

| Feature | Why it matters | Question to ask on the demo call |
|---|---|---|
| Prompt-Level Tracking | Aggregate scores hide problems. You need to know exactly which question triggered a negative sentiment. | “Can I see the raw response text for a specific prompt?” |
| Multi-LLM Coverage | Your customers aren’t just on ChatGPT. You need visibility on Perplexity and Gemini. | “Which specific models do you query, and how often are they updated?” |
| Citation/Source Mapping | You need to know where the AI found its info so you can optimize that page. | “Does the report list the specific URLs cited in the response?” |
| Competitor Benchmarking | Visibility is relative. You need to know who is replacing you. | “How many competitors can I track side-by-side without extra fees?” |
| Evidence Logs | You need proof to show stakeholders. | “Do you store screenshots or raw HTML of the responses?” |

Minimum viable feature set (what I wouldn’t skip)

- Prompt Library & Clustering: Ability to group prompts (e.g., “Pricing,” “Alternatives”).

- Multi-Model Coverage: At least ChatGPT, Perplexity, and Gemini.

- Citation Extraction: Knowing the URL is critical for optimization.

- Change Alerts: I don’t want to log in every day; I want an email when we drop.

- Export Functionality: Because eventually, you’ll need to put this in a slide deck.

Data quality checks: sampling, freshness, and reproducibility

Be skeptical of tools that present a single run as absolute truth. If a vendor can’t explain their sampling methodology—how many times they run a prompt to verify the result—it’s a red flag. Always ask if they store the raw prompt-response pairs; if they don’t, you have no way to verify if the “sentiment analysis” was accurate or a hallucination.
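If you run manual audits or build your own log before buying a tool, the same principle applies: keep the raw prompt-response pairs. A minimal sketch of such an evidence log, stored as JSON Lines, might look like this. The `PromptRun` fields are my own illustrative choice, not a vendor schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class PromptRun:
    """One raw prompt-response pair (field names are illustrative)."""
    prompt: str
    model: str
    response: str
    run_at: str  # ISO 8601 timestamp in UTC


def to_jsonl(runs):
    """Serialize runs to JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(asdict(run)) for run in runs)


def from_jsonl(text):
    """Load runs back from JSON Lines text, skipping blank lines."""
    return [PromptRun(**json.loads(line)) for line in text.split("\n") if line.strip()]


run = PromptRun(
    prompt="best payroll software for startups",
    model="example-model",  # placeholder model name
    response="Line one of the answer.\nLine two of the answer.",
    run_at=datetime.now(timezone.utc).isoformat(),
)
log_text = to_jsonl([run])
assert from_jsonl(log_text) == [run]
```

Because `json.dumps` escapes line breaks inside the response text, each run stays on one line, so the log can be appended to and re-read later when you want to check a sentiment label against what the model actually said.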

The top LLM rank tracking tools to monitor LLM rankings (comparison + who each is for)

There is no single “best” tool, only the tool that fits your current maturity level. Below is a comparison of the key players I encounter most often in the market. Note that pricing and specific feature sets change rapidly in this space, so treat this as a landscape guide.

| Tool | Best For | LLM Coverage | Sampling Depth | Key Differentiator |
|---|---|---|---|---|
| xSeek / Evertune | Enterprise / High-Stakes | Extensive (ChatGPT, Gemini, Claude, etc.) | High (Statistically significant sampling) | Deep share-of-voice analysis and rigorous data quality. |
| Peec AI | SaaS / Growth Teams | Major Models | Medium | Focus on brand recommendation frequency. |
| Semrush AI Visibility | Existing Semrush Users | ChatGPT focused (typically) | Standard | Integrated directly into the SEO dashboard you already use. |
| SE Ranking AI Add-on | Agencies / SMBs | Major Models | Standard | Good balance of cost vs. utility for agencies. |
| Nightwatch | Rank Tracking Purists | Growing coverage | Standard | Known for accurate rank tracking, expanding into AI. |

Specialized GEO/LLM visibility platforms (deeper sampling, stronger insight layers)

xSeek and Evertune represent the heavy hitters. These platforms are designed for teams where a drop in AI visibility is a boardroom issue. They typically process millions of responses to ensure that when they say your visibility dropped 5%, it’s a real trend, not a random fluctuation. They excel at citation mapping—showing you exactly which third-party review site is feeding data to the LLM.

Profound and Peec AI occupy a strong middle ground, offering specialized dashboards that are often more intuitive than the enterprise giants, perfect for growth teams who need to move fast.

SEO suite add-ons (good enough visibility inside a workflow you already use)

Semrush and SE Ranking have integrated AI visibility directly into their suites. The trade-off here is usually depth of sampling; you might get fewer runs per prompt compared to a specialized tool. However, the ability to correlate an AI visibility drop with a traditional backlink loss in the same interface is powerful. For most businesses, this “good enough” visibility is better than a perfect tool that sits in a silo.

A quick decision tree: which tool type fits my business right now?

- If you need to defend brand reputation across multiple product lines: Go Specialized (Evertune/xSeek).

- If you are a solo marketer or small team managing SEO + Content: Go SEO Suite Add-on (Semrush/SE Ranking).

- If your boss demands “Share of Voice” charts against 10 competitors: Go Specialized.

- If you just want to know if ChatGPT knows you exist: Start with a manual audit or a lower-tier plan on an SEO add-on.

How I set up LLM rank tracking tools: a step-by-step workflow you can copy

Buying the tool is the easy part. The hard part is building a workflow that turns data into traffic. Here is the exact sequence I follow to ensure the data actually leads to changes.

The 7-Step LLM Visibility Workflow:

1. Define Goals: Are we chasing brand awareness (mentions) or traffic (citations)?

2. Build Prompt Library: Gather the real questions users ask.

3. Cluster Prompts: Group them by intent (Informational, Commercial, Navigational, Comparative).

4. Choose Models: Track the 2–3 models your audience actually uses.

5. Set Cadence: Weekly sampling is usually sufficient for most teams.

6. Dashboarding: Create a view that executives can scan in 30 seconds.

7. Optimization Loop: This is where the magic happens. You analyze the gap, then you create or update content to fill it.

Once you know what to write, you need to produce it efficiently. I use an AI SEO tool like Kalema to help structure the content updates, whether that means a full rewrite of a target page, adding FAQ schema, drafting new support articles, or expanding the specific definitions LLMs are missing.

My Prompt Library Template:

| Prompt | Intent | Target Page | Competitors | Success Signal |
|---|---|---|---|---|
| “Best payroll software for small business” | Commercial | /best-payroll-software | Gusto, Rippling | Mention in top 3 + Link |
| “How much does [Brand] cost?” | Navigational | /pricing | N/A | Accurate price table citation |
| “[Brand] vs [Competitor]” | Comparative | /vs-competitor | Competitor X | Positive sentiment preference |
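If you keep this template in a spreadsheet, it translates directly into a small data structure that a script or tool import can work with. The sketch below mirrors the three rows above. The dictionary keys and helper names are my own, and “AcmePay” and “RivalOne” are placeholder brands used to fill the bracketed slots.

```python
from collections import defaultdict

# The three template rows above, as plain dictionaries.
PROMPT_LIBRARY = [
    {"prompt": "Best payroll software for small business", "intent": "Commercial",
     "target_page": "/best-payroll-software", "competitors": ["Gusto", "Rippling"]},
    {"prompt": "How much does [Brand] cost?", "intent": "Navigational",
     "target_page": "/pricing", "competitors": []},
    {"prompt": "[Brand] vs [Competitor]", "intent": "Comparative",
     "target_page": "/vs-competitor", "competitors": ["Competitor X"]},
]


def cluster_by(library, key):
    """Group prompt text by one column, such as "intent" or "target_page"."""
    clusters = defaultdict(list)
    for row in library:
        clusters[row[key]].append(row["prompt"])
    return dict(clusters)


def fill(prompt, brand, competitor=""):
    """Replace the [Brand] and [Competitor] placeholders with real names."""
    return prompt.replace("[Brand]", brand).replace("[Competitor]", competitor)


print(cluster_by(PROMPT_LIBRARY, "intent"))
print(fill("[Brand] vs [Competitor]", "AcmePay", "RivalOne"))
```

Keeping the bracketed placeholders in the library and filling them at run time means one row can generate a prompt per competitor without duplicating the template.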

Step 1–2: Start with outcomes, then build a prompt library that reflects real customer language

Do not guess what people ask. I borrow wording directly from sales call recordings, our own site search data, and the “People Also Ask” boxes in Google. If customers ask “is X legitimate?” on Reddit, that goes into my prompt library. The language must be natural, US-centric (or relevant to your market), and intent-driven.

Step 3–5: Cluster prompts, pick models, and set a sampling cadence you can sustain

Don’t try to track 500 prompts on day one. Start with 20–50 priority prompts clustered by product or feature. I typically review these on Fridays. It’s better to have high-quality tracking on 20 prompts you actually optimize than 500 prompts that gather digital dust.

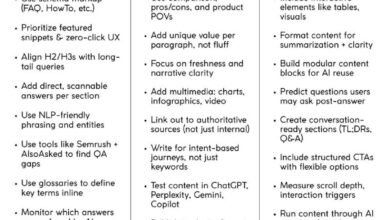

Step 6–7: Turn visibility data into on-page updates (titles, FAQs, schema, internal links)

When I see LLMs citing aggregator sites instead of us, I know I need to make our content easier for the AI to parse. My checklist for optimization includes:

- Clear H2s: Make sure the question is in the heading.

- Concise Definitions: Answer the question in the first sentence of the paragraph (LLMs love this).

- FAQ Schema: Mark up the content so it’s machine-readable.

- Internal Linking: Ensure the target page has authority flowing to it.

- Refresh Dates: Update the content so the model sees it as current.
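For the FAQ Schema item, the markup in question is schema.org's `FAQPage` type expressed as JSON-LD. Here is a short sketch that generates it from question-and-answer pairs. The sample question and answer are placeholders, and how you inject the output into your page depends on your CMS.

```python
import json


def faq_jsonld(pairs):
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Placeholder content; use the real question from your H2 and its first-sentence answer.
print(faq_jsonld([
    ("How much does AcmePay cost?", "AcmePay plans start at a flat monthly fee per company."),
]))
```

The output goes inside a `<script type="application/ld+json">` tag on the target page. Keeping the question text identical to the on-page heading keeps the markup and the visible content consistent.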

Common mistakes with LLM rank tracking tools (and the fixes that actually work)

I’ve made plenty of mistakes implementing these tools. Here are the pitfalls to avoid so you don’t waste your budget.

- Treating single-run outputs as truth: I used to panic over one bad result. Fix: Always look at the trend line over 3+ runs.

- Tracking too many prompts too soon: It dilutes your focus. Fix: Prioritize the “money prompts” that drive revenue.

- Ignoring citations: Focusing only on brand mentions is vanity. Fix: Obsess over where the data comes from and optimize those sources.

- No competitor baseline: You can’t know if 40% visibility is good without knowing the leader has 80%. Fix: Always track Share of Voice against peers.

- No change log: If visibility spikes, you need to know why. Fix: Annotate your dashboard every time you ship a content update.

A lightweight weekly routine I use to keep the data actionable

I timebox this to 45 minutes a week so it actually gets done:

- Check Alerts (5 mins): Did any major drops happen?

- Spot Check Evidence (10 mins): Read 5–10 raw responses to check sentiment accuracy.

- Log Changes (5 mins): Note any site updates we pushed.

- Pick 1 Update (25 mins): Identify one page to improve based on missing citations.

FAQs + wrap-up: what to do next with LLM rank tracking tools

FAQ: Why can’t traditional SEO tracking suffice for LLM visibility?

Traditional trackers measure a static list. LLMs are conversational and probabilistic. A rank tracker can’t tell you that ChatGPT mentioned your brand but got your pricing wrong, or that it recommended you only for “enterprise” when you want “SMB” leads. You need prompt-level testing to see these nuances.

FAQ: What features should I look for in an LLM ranking tool?

Look for multi-model coverage (not just ChatGPT), citation mapping (source URLs), sentiment analysis (good vs. bad mentions), and the ability to export data. If it doesn’t have an alert system, you’ll eventually stop checking it.

FAQ: How can brands use visibility data to improve performance in AI answers?

When I see a gap, I take three actions: 1) I rewrite the target section to be more direct and factual. 2) I add Schema markup to help the machine understand the context. 3) I look at who is being cited and see if I can get a mention or link on that third-party site (digital PR).

Conclusion: Your Next Move

The shift from “ten blue links” to “one best answer” is happening. You don’t need to overhaul your entire strategy overnight, but you do need to start watching the weather.

If you do only one thing this week:

- Pick your top 20 “money keywords.”

- Convert them into conversational prompts (questions).

- Run them through ChatGPT and Perplexity manually to set a baseline.

- Then, decide if you need a specialized tool or an add-on to automate it.

The brands that start measuring this now will be the ones that define the answers of the future.