Global Discovery: A Practical Guide to Cross-Cultural Research Methods for Business Teams

Introduction: Global Discovery for Beginners (What I’ll Cover and Why It Matters)

I still remember the precise moment I realized a six-figure global study was going to fail. We had translated our US survey into Japanese perfectly—linguistically speaking—but the data coming back looked like random noise. The problem wasn’t the translation; it was the premise. We were measuring “individual achievement” in a culture where professional worth was tied to collective success. We weren’t just getting different answers; we were asking a nonsensical question.

If you are reading this, you are likely trying to avoid that exact sinking feeling. You might be a Product Marketer or UX Researcher tasked with “going global,” facing the daunting reality that what works in Chicago might flop in Cairo or Kyoto.

This isn’t an academic lecture. It is a practical, newsroom-grade guide to cross-cultural research methods designed for business operators. I will walk you through a defensible workflow to protect your data integrity, explain how to catch construct-validity problems before they break your dashboard, and offer a candid look at where AI tools can actually help (and where they are dangerous).

What Are Cross-Cultural Research Methods? (And Why Construct Validity Breaks Across Borders)

In a business context, cross-cultural research methods are the specific techniques we use to understand behaviors, preferences, and meanings across different societies without letting our own cultural lens distort the results. It is about ensuring that a “4 out of 5” on a satisfaction scale means roughly the same thing in Germany as it does in Brazil.

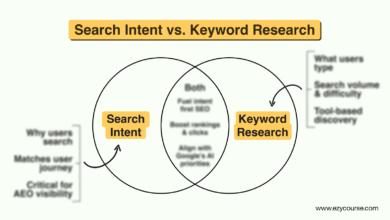

The biggest threat here is to construct validity. Simply put: Are you actually measuring what you think you are measuring? Concepts like “trust,” “leadership,” or “brand loyalty” are not universal constants. They are cultural agreements.

When we ignore this, we see common failure modes:

- Translation Drift: The words translate, but the nuance evaporates.

- Norm Differences: A question about “confronting your boss” might measure assertiveness in the US but insubordination or recklessness elsewhere.

- Response Styles: Some cultures gravitate toward the middle (neutrality), while others favor extremes (strong agreement).

- Context Effects: The physical or social setting of the research changes the answer.

- Sampling Mismatch: Comparing a mass-market audience in one country to a luxury niche in another.
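Response styles are the easiest of these failure modes to check numerically. Below is a minimal sketch. It assumes a 1–5 Likert scale with answers tagged by market. The extreme response style (ERS) index is the share of answers at either endpoint. The midpoint response style (MRS) index is the share at the scale midpoint. The market labels and scores are made up for illustration.

```python
from collections import defaultdict

SCALE_MIN, SCALE_MAX = 1, 5  # assumed 1-5 Likert scale
MIDPOINT = (SCALE_MIN + SCALE_MAX) / 2  # an even-point scale has no midpoint, so MRS stays 0

def response_style_indices(responses):
    """Return {market: (ers, mrs)} from a list of (market, score) pairs.

    ERS = share of answers at either scale endpoint.
    MRS = share of answers at the scale midpoint.
    """
    counts = defaultdict(lambda: [0, 0, 0])  # [total, extreme, midpoint]
    for market, score in responses:
        c = counts[market]
        c[0] += 1
        if score in (SCALE_MIN, SCALE_MAX):
            c[1] += 1
        if score == MIDPOINT:
            c[2] += 1
    return {m: (ext / tot, mid / tot) for m, (tot, ext, mid) in counts.items()}

# Illustrative answers pooled across all items; markets are placeholders
sample = [("A", 5), ("A", 1), ("A", 5), ("A", 4),
          ("B", 3), ("B", 3), ("B", 4), ("B", 2)]
print(response_style_indices(sample))  # {'A': (0.75, 0.0), 'B': (0.0, 0.5)}
```

A market with a high ERS can look more "enthusiastic" on a raw average without anyone liking the product more. This is why raw means should not be compared directly across markets.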

Recent methodological reviews, including a 2025 Cambridge methods paper, emphasize that we can’t just “translate and deploy.” We need better approaches, such as ethnography and community-engaged research, to ensure our instruments are valid before we ever launch a survey.

A quick mental model: ‘Same words’ ≠ ‘Same meaning’

Think of translation like currency conversion. You can mathematically convert $100 to the local currency, but you also need to adjust for purchasing power to understand what that money actually buys. Cross-cultural research requires that same “purchasing power” adjustment for meaning.

A Step-by-Step Workflow I Use for Cross-Cultural Research Methods (From Scoping to Decisions)

The most common mistake I see is rushing to fieldwork. Teams get a budget, translate a SurveyMonkey link, and blast it out. To get data you can actually use for business decisions (pricing, positioning, retention), you need a sturdier workflow.

Here is the process I use to keep projects on the rails:

1. Define the Decision: What specifically needs to be decided? (e.g., “Should we launch Feature X in India?”)

2. Define the Construct: What human behavior drives that decision?

3. Check Equivalence: Do these concepts exist in the target market?

4. Choose Method Mix: Select the right balance of quant and qual.

5. Adapt Instruments: Translate, back-translate, and adapt.

6. Pilot: Test the instrument in-market.

7. Recruit & Collect: Execute fieldwork with local norms in mind.

8. Analyze & Interpret: Look at the data through a cultural lens.

9. Validate: Check findings with local experts.

10. Document: Create an audit trail for future teams.

| Phase | Goal | Deliverable | Quality Check |
|---|---|---|---|
| Scoping | Align on the business risk | Research Brief | “If the results are negative, will we actually stop the launch?” |
| Design | Create a culturally valid tool | Draft Instrument | Cognitive interviews with 3–5 locals per market. |
| Fieldwork | Collect comparable data | Raw Data / Transcripts | Daily checks for flatlining or response bias. |
| Analysis | Interpret without bias | Insight Report | Local expert review of the draft narrative. |
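The daily fieldwork check for flatlining (usually called straightlining in survey research) can be partly automated. Below is a minimal sketch. It assumes each respondent's answers to one grid of Likert items arrive as a list. It flags respondents who gave the identical answer to every item and reports the flagged share per market. The five-item minimum, the 10% alert threshold and the data are hypothetical placeholders to tune per study.

```python
from collections import defaultdict

def is_straightliner(grid_answers, min_items=5):
    """True if one identical answer was given across a grid of at least min_items."""
    return len(grid_answers) >= min_items and len(set(grid_answers)) == 1

def straightlining_report(rows, alert_share=0.10):
    """rows: iterable of (market, respondent_id, grid_answers).

    Returns {market: (share_flagged, flagged_ids, alert)}; alert is True when
    the flagged share exceeds alert_share (a hypothetical threshold).
    """
    by_market = defaultdict(list)
    for market, rid, answers in rows:
        by_market[market].append((rid, answers))
    report = {}
    for market, entries in by_market.items():
        flagged = [rid for rid, answers in entries if is_straightliner(answers)]
        share = len(flagged) / len(entries)
        report[market] = (share, flagged, share > alert_share)
    return report

today = [  # illustrative batch; markets are placeholders
    ("A", "r1", [4, 4, 4, 4, 4, 4]),
    ("A", "r2", [2, 4, 3, 5, 4, 3]),
    ("B", "r3", [5, 4, 5, 5, 4, 5]),
    ("B", "r4", [3, 2, 4, 3, 3, 2]),
]
for market, (share, ids, alert) in straightlining_report(today).items():
    print(market, f"{share:.0%}", ids, "ALERT" if alert else "ok")
```

A flat grid is a prompt to look closer, not proof of bad data. Some respondents genuinely hold uniform views, and midpoint-heavy response styles can produce legitimate runs.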

One critical tip: If you only do one thing from this list, do the construct definition (Step 2). I’ve seen projects saved just by realizing that “convenience” meant “speed” in one market and “human support” in another.

Steps 1–2: Define the business decision and the construct (what I’m actually measuring)

I start every project by forcing stakeholders to be specific. If they want to measure “Brand Love,” I ask, “What does a customer doing that look like?” In the US, it might look like repeat purchase or social sharing. In Japan, it might look like quiet loyalty and lack of complaints. If you don’t map these distinct behaviors back to the business decision, your data will lead you astray.

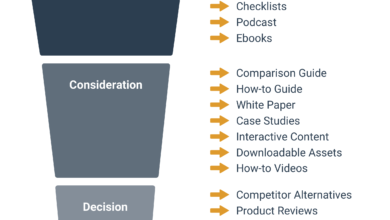

Steps 3–5: Choose the right method mix (quant + qual + context)

Mixed methods are your safety net. Relying on a single survey is risky because you have no way to explain why the numbers look weird.

- Use Surveys when: You have a validated construct and need to size the market or track metrics over time.

- Use Interviews when: You are exploring a new market and need to understand the “why” behind behaviors.

- Use Ethnography when: You suspect users can’t articulate their needs, or when context (home environment, device usage) drives behavior.

Steps 6, 7, and 10: Pilot, collect, and document decisions (so results are repeatable)

Never lock a survey without a pilot. I look for specific red flags: Are respondents dropping out at a specific question? Are they finishing too fast (indicating they are just clicking through)? I always document these decisions in a “Field Notes” log. Two years from now, when a new Product Manager asks why the 2025 data looks different, that documentation will save your reputation.
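The two pilot red flags above, drop-off at a specific question and finishing too fast, are simple to compute from a pilot export. Below is a minimal sketch. It assumes each record holds a respondent ID, seconds spent, and the 0-based index of the last question answered. The "faster than half the median completion time" rule is a common rule of thumb, not a standard, and the record layout is hypothetical.

```python
from collections import Counter
from statistics import median

def pilot_red_flags(records, n_questions, speed_fraction=0.5):
    """records: iterable of (respondent_id, seconds_spent, last_question_index).

    Returns (speeders, dropouts):
      speeders: completers faster than speed_fraction x the completers' median time
      dropouts: Counter of the 0-based question index where non-completers stopped
    """
    records = list(records)
    last_index = n_questions - 1
    completers = [(rid, secs) for rid, secs, last in records if last == last_index]
    dropouts = Counter(last for _, _, last in records if last < last_index)
    if not completers:
        return [], dropouts
    cutoff = speed_fraction * median(secs for _, secs in completers)
    speeders = [rid for rid, secs in completers if secs < cutoff]
    return speeders, dropouts

pilot = [  # illustrative pilot of a 20-question survey
    ("p1", 610, 19), ("p2", 540, 19), ("p3", 180, 19), ("p4", 720, 19),
    ("p5", 300, 7), ("p6", 240, 7), ("p7", 655, 19),
]
speeders, dropouts = pilot_red_flags(pilot, n_questions=20)
print("Speeders:", speeders)  # Speeders: ['p3']
print("Most common drop-off:", dropouts.most_common(1))  # Most common drop-off: [(7, 2)]
```

Speed is measured only among completers, because people who abandoned the survey will naturally have short times. In this sketch, the drop-offs clustering on question index 7 would send that question back to cognitive interviews before launch.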

Language and Translation: How I Keep Meaning Intact (Not Just Words)

Translation is not an administrative task; it is a research method. If you treat it like a commodity, you get commodity data.

Here is how I evaluate different translation approaches:

| Approach | Best For | Pros | Cons |
|---|---|---|---|
| Forward-Only | Low-risk, simple feedback forms | Fast, cheap | High risk of error; misses nuance entirely. |
| Back-Translation | Clinical or legal instruments | Catches literal errors | Can result in awkward, stilted phrasing that sounds “translated.” |
| Committee / Decentering | Strategic constructs (Brand, UX) | Ensures natural flow and meaning | Slower; requires bilingual experts discussing terms. |
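Back-translation review is faster if you triage items first. Below is a rough sketch. It assumes each source item and its back-translation are plain strings. It uses Python's standard `difflib.SequenceMatcher` to score character-level similarity, then sends low-scoring items to the bilingual committee first. String similarity is a crude proxy: a high score does not prove equivalent meaning. The 0.75 threshold is an arbitrary assumption.

```python
from difflib import SequenceMatcher

def triage_back_translations(pairs, threshold=0.75):
    """pairs: iterable of (item_id, source_text, back_translation).

    Returns [(item_id, score)] for items whose character-level similarity is
    below threshold, lowest score first, so reviewers start with the biggest drift.
    """
    flagged = []
    for item_id, source, back in pairs:
        score = SequenceMatcher(None, source.lower(), back.lower()).ratio()
        if score < threshold:
            flagged.append((item_id, round(score, 2)))
    return sorted(flagged, key=lambda pair: pair[1])

items = [  # illustrative items
    ("Q1", "How satisfied are you with the app?",
           "How satisfied are you with the app?"),
    ("Q2", "This product helps me hit the ground running.",
           "This product helps me start work quickly."),
]
print(triage_back_translations(items))
```

A flagged item such as Q2 may be a perfectly good adaptation of an idiom. The flag only means a bilingual reviewer should look at it.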

My Translation QA Checklist:

- Reading Level: Is it accessible to the average participant?

- Anchors: Do the scale words (e.g., “Somewhat Agree”) carry equal weight?

- Taboos: Did we accidentally ask about income or family in an offensive way?

- Idioms: Did we remove phrases like “hit the ground running”?

Cognitive interviews: my go-to test for whether respondents interpret questions the same way

Before launching, I run cognitive interviews. I sit with a participant (often remotely), ask them the survey question, and then ask them to “think aloud.” It’s not about their answer; it’s about their comprehension.

My favorite probes:

- “In your own words, what is this question asking you?”

- “What does the word ‘reliable’ mean to you in this context?”

- “Why did you choose that specific option?”

- “Was there an answer choice missing that you wanted to pick?”

I recall one session where a participant stared at a “household income” question. It turned out that in their multi-generational home, “household” was an ambiguous concept. We adjusted the phrasing to “your personal contribution,” and the data quality improved immediately.

Design, Sampling, and Fieldwork: Capturing Cultural Nuance Without Losing Rigor

Once your instrument is solid, execution becomes the bottleneck. A brilliant survey sent to the wrong sample is still useless.

Fieldwork Readiness Checklist:

- Recruiting: Do we have local consent forms that comply with GDPR and other applicable local privacy laws?

- Incentives: Is the reward culturally appropriate and valuable? (Gift cards don’t work everywhere).

- Scheduling: Have we accounted for local holidays and time zones?

- Escalation: If a participant reports harm, do we know the local protocol?

Sampling equivalence: how I avoid comparing apples to oranges

This is where business teams often get overconfident. You might target “VPs of Marketing” in the US. But in some markets, titles inflate, and a “VP” might be a mid-level manager. In others, titles are suppressed.

I focus on functional equivalence: I recruit based on responsibilities and budget authority, not just job titles. If a segment simply doesn’t exist in a market (e.g., a specific type of mature SaaS buyer), I document that mismatch rather than forcing a comparison that isn’t there.
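Functional equivalence can be written directly into a recruitment screener that qualifies people on what they do, not what they are called. Below is a minimal sketch. The criteria are hypothetical placeholders, not recommended cut-offs: final sign-off on spend, a budget floor set per market in local currency, and a minimum number of direct reports. The same goes for every number in the code.

```python
from dataclasses import dataclass

# Hypothetical budget floors in local currency units, set per market so the
# bar reflects comparable responsibility rather than a currency conversion.
BUDGET_FLOOR = {"A": 500_000, "B": 15_000_000}

@dataclass
class Candidate:
    market: str
    title: str                  # recorded for context, never used to qualify
    approves_budget: bool       # has final sign-off on spend
    annual_budget_local: float  # budget controlled, in local currency
    direct_reports: int

def qualifies(c: Candidate, min_reports: int = 3) -> bool:
    """Screen on responsibilities and budget authority, ignoring job title."""
    floor = BUDGET_FLOOR.get(c.market)
    if floor is None:
        # No agreed equivalent segment for this market: document it, don't force a fit.
        return False
    return (c.approves_budget
            and c.annual_budget_local >= floor
            and c.direct_reports >= min_reports)

pool = [  # illustrative candidates
    Candidate("A", "VP Marketing", True, 800_000, 6),
    Candidate("B", "VP Marketing", False, 2_000_000, 1),
    Candidate("B", "Marketing Manager", True, 20_000_000, 5),
]
print([(c.market, c.title, qualifies(c)) for c in pool])
```

The title stays in the record on purpose. It lets you report how titles map to responsibilities in each market without letting titles drive qualification.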

Interviews across cultures: practical moderation adjustments I make

When I moderate across cultures, I have to check my American tendency to fill silence. In many Asian and Nordic cultures, silence is a thinking space, not a void to be filled. If I interrupt, I lose the insight.

Moderation Hypotheses (Treat these as things to test, not rules):

- Directness: In low-context cultures (US, Germany), I can ask “Why didn’t you like this?” In high-context cultures, I might soften it: “Some people might find this feature difficult; what is your view?”

- Hierarchy: Be aware that participants might agree with you just to be polite or because you represent the “expert” brand. I emphasize that “there are no wrong answers” repeatedly.

AI and Emerging Tools for Culturally Sensitive Research (What’s Real vs Hype)

There is a lot of noise about AI solving localization. The reality is nuanced. AI is incredible at synthesis and speed, but it lacks lived experience.

I use AI tools to draft materials and check for obvious blind spots. For instance, an AI content writer can be excellent for drafting initial research briefs or summarizing vast amounts of background literature, provided you validate the output. However, I never let AI define the cultural norm without human verification.

| Tool/Framework | What it’s for | Strengths | Limitations |
|---|---|---|---|
| CROSS Benchmark | Evaluating cultural safety in vision-language models | Identifies gaps in cultural awareness | Benchmarks are static; culture is fluid. |
| CultureSynth | Generating culturally informed Q&A pairs | Scales evaluation across regions | Synthetic data is still a simulation, not reality. |
| Soft-Prompt Tuning | Routing queries to culturally aligned models | Improves nuance without retraining | Requires high-quality local data to tune effectively. |

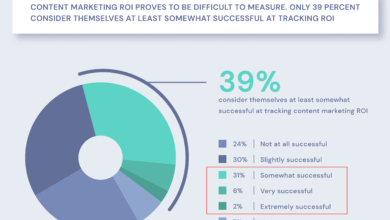

It is worth noting that recent benchmarks like CROSS show significant gaps. Even top-performing models achieve only around 61.8% cultural awareness and 37.7% compliance. This means you cannot blindly trust a model to “localize” your strategy.

When you are ready to turn your verified findings into a scalable knowledge base, a specialized AI article generator can help you format insights for different internal stakeholders. If you need to roll out localized content updates across multiple market blogs, a bulk article generator can assist, but I always insist on a “human-in-the-loop” review process to catch those subtle contextual errors.

Benchmarks and evaluation: why CROSS-style testing matters before I deploy AI in sensitive contexts

If you are using AI to analyze open-ended text from different countries, run a 30-minute safety test:

- Stereotype Check: Does the summary rely on lazy tropes?

- Politeness Check: Did the AI misinterpret indirect feedback as “positive”?

- Harm Check: Are there political or social sensitivities flagged?

- Refusal Behavior: Did the model refuse to answer a valid question due to US-centric safety filters?
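The politeness check can be made concrete. Hand-code a small sample of open-ended comments per market, then compare the model's labels with the human labels. Below is a minimal sketch. It assumes both sides use the same label set. The tuple layout and market names are hypothetical placeholders. The pattern to watch for is a market where the model often labels human-coded "negative" comments as "positive".

```python
from collections import defaultdict

def politeness_audit(rows):
    """rows: iterable of (market, human_label, model_label) for the same comments.

    Returns {market: (agreement_rate, negatives_read_as_positive)}.
    """
    stats = defaultdict(lambda: [0, 0, 0])  # [n, agreements, negative->positive]
    for market, human, model in rows:
        s = stats[market]
        s[0] += 1
        s[1] += int(human == model)
        s[2] += int(human == "negative" and model == "positive")
    return {m: (agree / n, neg_pos) for m, (n, agree, neg_pos) in stats.items()}

# Illustrative hand-coded sample; market names are placeholders.
coded = [
    ("A", "negative", "positive"),
    ("A", "negative", "neutral"),
    ("A", "positive", "positive"),
    ("A", "negative", "positive"),
    ("B", "negative", "negative"),
    ("B", "positive", "positive"),
]
for market, (agreement, neg_pos) in politeness_audit(coded).items():
    print(f"{market}: {agreement:.0%} agreement, {neg_pos} negatives read as positive")
```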

Training and data resources I’d actually recommend (for beginners)

You don’t need a PhD, but you do need credible sources. I recommend checking out:

- HRAF (Human Relations Area Files): Their advanced cross-cultural research program is a gold standard.

- Ethnolab: A great emerging resource for semantic search and visualization of ethnographic data.

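My advice: Spend week 1 just reading about the “etic vs. emic” distinction. Etic means outsider categories designed for comparison across cultures; emic means meanings as insiders themselves understand them. Then, practice by reviewing your existing surveys for cultural bias.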

Common Mistakes in Cross-Cultural Research (and How I Fix Them)

I’ve made most of these mistakes, so you don’t have to.

- Assuming “Validated” Means Universal: Just because a scale works in the US doesn’t mean it works in Vietnam. Fix: Re-pilot locally.

- Literal Translation: Ignoring idioms and tone. Fix: Use committee translation or decentering.

- Ignoring Response Styles: Treating a “4” in France the same as a “4” in the US. Fix: Analyze relative to local benchmarks, not global averages.

- Non-Equivalent Samples: Comparing mismatched user segments. Fix: Document the differences and caveat the findings.

- The “One City” Fallacy: Thinking Shanghai represents all of China. Fix: Be precise about your geographic scope in your report.

- Trusting AI Blindly: Using LLMs to “simulate” a persona. Fix: Use AI for synthesis and humans for ground truth.

- No Audit Trail: Forgetting why you changed a question. Fix: Keep a decision log.
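One common way to "analyze relative to local benchmarks" is to standardize scores within each market before comparing. Below is a minimal sketch. It assumes one score per respondent per item and enough respondents per market for a stable mean and standard deviation. Each score becomes a z-score against all scores from its own market, so a "4" is read as above or below what is typical locally. Note the trade-off: this also removes genuine differences in overall level between markets. Use it to compare relative patterns (which items are strong or weak locally), not to claim one market is more satisfied than another.

```python
from collections import defaultdict
from statistics import mean, pstdev

def standardize_within_market(rows):
    """rows: list of (market, item, score). Returns {(market, item): mean z-score}.

    Each score is z-scored against all scores from the same market, so item
    means are expressed relative to that market's own typical answer.
    """
    by_market = defaultdict(list)
    for market, _, score in rows:
        by_market[market].append(score)
    params = {m: (mean(s), pstdev(s)) for m, s in by_market.items()}

    z_by_item = defaultdict(list)
    for market, item, score in rows:
        mu, sd = params[market]
        z_by_item[(market, item)].append((score - mu) / sd if sd else 0.0)
    return {key: mean(zs) for key, zs in z_by_item.items()}

rows = [  # illustrative: "support" gets a raw 4 in both markets
    ("A", "price", 5), ("A", "support", 4), ("A", "price", 5), ("A", "support", 4),
    ("B", "price", 3), ("B", "support", 4), ("B", "price", 3), ("B", "support", 4),
]
for key, z in sorted(standardize_within_market(rows).items()):
    print(key, round(z, 2))
```

In this toy data, "support" scores a raw 4 in both markets. It is nonetheless the weak spot in market A and the strong spot in market B, and a raw comparison would hide that.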

FAQs + Summary: What I’d Do Next on Your First Global Study

FAQ: Why is construct validity a concern in cross-cultural research?

Because if the concept doesn’t mean the same thing in each market, comparing the data across markets is meaningless. It’s like measuring height in inches and weight in pounds, then trying to average them. Without validity, you are just collecting noise.

FAQ: How can AI help improve cultural sensitivity in research tools?

AI helps by flagging potential bias, simulating different linguistic structures, and scaling the analysis of qualitative data. Techniques like soft-prompt tuning allow us to adapt models to local norms, but they must always be paired with human oversight.

FAQ: What are emerging resources for researchers to learn cross-cultural methods?

Institutions like HRAF are opening up incredible courses. Tools like Ethnolab are making ethnographic data more accessible via APIs. The barrier to entry for learning these methods has never been lower.

FAQ: How is the business sector responding to the need for cross-cultural competence?

Companies are realizing that “global” is the default setting now. With the cross-cultural training market projected to grow from $1.32 billion in 2024 to $1.81 billion by 2029, businesses are investing heavily because the cost of cultural failure is too high.
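For scale, those two figures imply a compound annual growth rate (CAGR) of roughly 6.5% over the five years from 2024 to 2029:

$$
\text{CAGR} = \left(\frac{1.81}{1.32}\right)^{1/5} - 1 \approx 1.371^{0.2} - 1 \approx 0.065
$$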

Recap:

- Construct Validity First: Ensure the concept travels before the question travels.

- Rigorous Workflow: Use a step-by-step process that includes piloting and cognitive interviews.

- Human + AI: Leverage technology for scale, but rely on local humans for meaning.

Your Monday Morning Plan:

- Audit your current survey: Flag 3 questions that might be culturally specific.

- Schedule a pilot: Plan 3 cognitive interviews for your next target market.

- Start a decision log: Document why you are asking what you are asking.
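A decision log does not need special tooling. An append-only file with one structured entry per change is enough to start. Below is a minimal sketch in Python that writes JSON Lines. The field names, the file name and the example entry are hypothetical choices, not a standard. The entry is modeled loosely on the "household income" rewording described earlier.

```python
import json
import tempfile
from dataclasses import asdict, dataclass, field
from datetime import date
from pathlib import Path

@dataclass
class Decision:
    question_id: str
    market: str
    change: str     # what changed in the instrument
    reason: str     # why: pilot finding, cognitive interview, expert review
    evidence: str   # where the supporting notes live
    decided_on: str = field(default_factory=lambda: date.today().isoformat())

def log_decision(entry: Decision, path: Path) -> None:
    """Append one decision as a JSON line; earlier entries are never rewritten."""
    with path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(entry), ensure_ascii=False) + "\n")

def read_log(path: Path) -> list:
    """Return all logged decisions, oldest first."""
    if not path.exists():
        return []
    with path.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]

# Illustrative entry; the market label and evidence reference are hypothetical.
log_path = Path(tempfile.mkdtemp()) / "decision_log.jsonl"
log_decision(Decision(
    question_id="Q12",
    market="Market A",
    change="'household income' -> 'your personal contribution'",
    reason="Cognitive interviews: 'household' ambiguous in multi-generational homes",
    evidence="cognitive-interview notes, session 3",
), log_path)
print(read_log(log_path))
```

An append-only log keeps the history intact. If a decision is later reversed, you add a new entry rather than editing the old one.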

Cross-cultural research is challenging, but it is also the most rewarding work you can do. It forces you to question your assumptions and listen—really listen—to the world.