Entity extraction techniques: Top methods for building semantic connections

I’ve watched smart data teams spend weeks manually copying insights from PDFs, earnings transcripts, and support tickets into spreadsheets. It usually starts well, but eventually, the sheer volume of unstructured text breaks the process. You end up with a mess of disconnected data points rather than actionable intelligence.

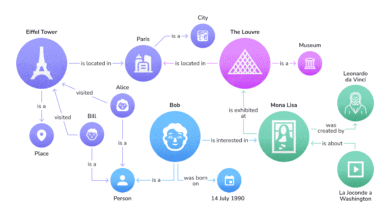

This is where entity extraction techniques—done right—change the game. It’s not just about highlighting keywords anymore. It’s about building semantic connections: identifying real-world entities (people, companies, products), verifying exactly who they are, and mapping how they relate to one another in a structured format.

In this guide, I’ll walk you through the fundamentals, a practical workflow I use for business pipelines, and the next-generation techniques—like structure-aware decoding and retrieval-based frameworks—that are solving the problems where traditional models fail.

Search intent and who this is for

If you are looking for a simple definition of a noun, this isn’t for you. This is a how-to guide for SEOs, content strategists, and data operators who need to turn messy text into structured data. You don’t need a PhD in NLP, but you do need to understand how to move beyond basic keyword spotting to build pipelines that actually work in production.

What you’ll be able to do by the end

- Distinguish clearly between NER, entity linking, and relation extraction to stop error propagation in your dashboards.

- Design a robust implementation workflow that handles real-world data issues like OCR errors and overlapping terms.

- Choose between advanced techniques like retrieval-based relation extraction or zero-shot schemas based on your specific constraints.

- Conduct a proper evaluation using precision, recall, and F1 scores so you know your data is trustworthy before you ship.

The basics: NER vs entity linking vs relation extraction (and why pipelines break)

Before we jump into the advanced stuff, we need to agree on the vocabulary. I’ve seen projects fail simply because the team conflated detecting a name with understanding its identity.

The traditional process usually involves three distinct tasks. Named Entity Recognition (NER) finds the text span. Entity Linking (EL) maps that text to a unique ID in a database. Relation Extraction (RE) determines how two entities interact.

| Task | Input | Output | Typical Failure Mode | Example |
|---|---|---|---|---|
| NER | Raw Text | Labeled Spans (Text + Type) | Misses nested entities (e.g., “Bank of America”) | “Apple” (ORG) |
| Entity Linking | Labeled Span | Canonical ID (e.g., Q312 from Wikidata) | Links to fruit instead of company | ID: org_apple_inc |
| Relation Extraction | Two Entities + Context | Triple (Subj, Predicate, Obj) | Fails if entities are far apart | (Apple, released, iPhone 15) |

Quick definitions with one shared example sentence

Let’s stick to one example to make this concrete:

“CloudCorp announced that Q3 revenue fell due to supply chain delays in Vietnam.”

- Spans: “CloudCorp” (Organization), “Q3 revenue” (Metric), “supply chain delays” (Event), “Vietnam” (Location).

- Labels: These are the categories assigned to the spans.

- Relations: CloudCorp → reported_metric → Q3 revenue; Q3 revenue → impacted_by → supply chain delays.
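To make the example concrete, here is a minimal Python sketch of how those spans and relations might be represented. The `Span` class and `make_span` helper are names I made up for illustration, and the “Event” label for “supply chain delays” is my assumption, since the relation list uses that phrase as an argument.

```python
from dataclasses import dataclass

SENTENCE = (
    "CloudCorp announced that Q3 revenue fell due to "
    "supply chain delays in Vietnam."
)


@dataclass(frozen=True)
class Span:
    text: str
    label: str
    start: int  # character offset, inclusive
    end: int    # character offset, exclusive


def make_span(sentence: str, text: str, label: str) -> Span:
    """Find the first occurrence of `text` and record its offsets."""
    start = sentence.index(text)  # raises ValueError if `text` is absent
    return Span(text, label, start, start + len(text))


spans = [
    make_span(SENTENCE, "CloudCorp", "Organization"),
    make_span(SENTENCE, "Q3 revenue", "Metric"),
    make_span(SENTENCE, "supply chain delays", "Event"),  # label is assumed
    make_span(SENTENCE, "Vietnam", "Location"),
]

# Relations as (subject, predicate, object) triples.
relations = [
    ("CloudCorp", "reported_metric", "Q3 revenue"),
    ("Q3 revenue", "impacted_by", "supply chain delays"),
]

for span in spans:
    print(f"{span.label:<12} {span.text!r} [{span.start}:{span.end}]")
```

Keeping offsets alongside the text means you can always slice the original sentence and confirm the span is what the model said it was.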

Why traditional NER → RE pipelines cause error propagation

The standard way to build this is a “pipeline approach”: first you run NER, then you run RE on the results. The problem? Error propagation. If your NER model misses “CloudCorp” or accidentally tags “CloudCorp announced” as the entity, your Relation Extraction model has zero chance of success. It never even sees the correct candidate. In my experience, this is the number one reason dashboards show misleading trends—the initial extraction was slightly off, cascading into zero valid relationships found.
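A quick back-of-the-envelope calculation shows why this hurts so much. The sketch below assumes that a relation can only be found if every one of its entities was found first, and that entity misses are independent of each other. Both are simplifications, and the recall values are illustrative rather than measured.

```python
def relation_recall_ceiling(ner_recall: float, entities_per_relation: int = 2) -> float:
    """Upper bound on relation recall when NER must find every argument first.

    Assumes entity misses are independent, which real data rarely guarantees.
    """
    return ner_recall ** entities_per_relation


for ner_recall in (0.95, 0.90, 0.80):
    ceiling = relation_recall_ceiling(ner_recall)
    print(f"NER recall {ner_recall:.2f} -> relation recall ceiling {ceiling:.2f}")
```

Under those assumptions, an NER model with 90% recall caps relation recall at about 81% before the relation model has made a single mistake of its own.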

Where things get hard: nested and overlapping entities

Real business text is messy. Consider the phrase “Bank of America Merrill Lynch”. A simple model might extract “Bank of America” as one organization and “Merrill Lynch” as another, or miss the larger entity entirely. These are nested and overlapping entities. If you are analyzing legal contracts or product bundles, standard flat NER models often fail here, creating noise in your data.

Table: What each component produces (and what to store for later semantic connections)

When you are designing your database, you need to store more than just the text. Here is what I persist to ensure the data is debuggable later:

| Component | Output to Store | Why I Keep It |
|---|---|---|
| Entity | Text, Start/End Offsets, Type, Confidence Score | Offsets are critical for highlighting the text in the UI later. |
| Linking | Canonical ID (KB_ID), Knowledge Base Source | Allows aggregation (e.g., grouping “Google” and “Google LLC” under one ID). |
| Relation | Source Entity ID, Target Entity ID, Relation Type, Evidence Span | I always keep the evidence span so humans can verify why the model created the link. |
| Metadata | Document ID, Timestamp, Model Version | Essential for provenance and debugging drift. |
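Here is one way the table could translate into a storable record. This is a sketch, not a required schema: the class names, field names, and sample values (`doc-001`, `ner-v0`, `org_cloudcorp`, `internal_kb`) are all placeholders I invented.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List, Optional


@dataclass
class EntityRecord:
    entity_id: str
    text: str
    start: int
    end: int
    entity_type: str
    confidence: float
    kb_id: Optional[str] = None      # canonical ID from entity linking
    kb_source: Optional[str] = None  # which knowledge base issued kb_id


@dataclass
class RelationRecord:
    source_entity_id: str
    target_entity_id: str
    relation_type: str
    evidence: str  # exact snippet a human can check


@dataclass
class ExtractionRecord:
    document_id: str
    timestamp: str
    model_version: str
    entities: List[EntityRecord] = field(default_factory=list)
    relations: List[RelationRecord] = field(default_factory=list)


record = ExtractionRecord(
    document_id="doc-001",
    timestamp="2024-01-01T00:00:00Z",
    model_version="ner-v0",
    entities=[
        EntityRecord("e1", "CloudCorp", 0, 9, "Organization", 0.97,
                     kb_id="org_cloudcorp", kb_source="internal_kb"),
        EntityRecord("e2", "Q3 revenue", 25, 35, "Metric", 0.88),
    ],
    relations=[
        RelationRecord("e1", "e2", "reported_metric",
                       "CloudCorp announced that Q3 revenue fell"),
    ],
)

serialized = json.dumps(asdict(record), indent=2)
print(serialized)
```

Because the record serializes to plain JSON, the same structure can be written to a document store, a queue, or a graph loader without conversion code.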

A practical workflow to apply entity extraction techniques in a business pipeline

Don’t just throw an LLM at a folder of documents and hope for the best. To build a system that lasts, you need a repeatable process. Here is the one I follow when deploying entity extraction for clients.

Step 1: Start with the decision that matters—what semantic connections do I actually need?

I’ve seen teams try to “extract everything” and end up with a noisy graph that nobody uses. Start with your business question.

- Use Case Definition: Are you mapping competitors to features? Tracking regulatory risks?

- Entity Taxonomy: List the exact 5-10 entity types you care about.

- Relation Schema: Define the relationships explicitly (e.g., Competes_With, Acquired_By). If you don’t define these, your model will hallucinate connections that don’t exist.
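A schema is most useful when it is enforced in code. The sketch below assumes a tiny taxonomy: `Competes_With` and `Acquired_By` come from the list above, while `Has_Feature` and the three entity types are hypothetical additions for illustration.

```python
from typing import Dict, Tuple

ENTITY_TYPES = {"Company", "Product", "Feature"}

# relation name -> (required subject type, required object type)
RELATION_SCHEMA: Dict[str, Tuple[str, str]] = {
    "Competes_With": ("Company", "Company"),
    "Acquired_By": ("Company", "Company"),
    "Has_Feature": ("Product", "Feature"),  # hypothetical addition
}

# Guard against typos: the schema may only use declared entity types.
assert all(t in ENTITY_TYPES for pair in RELATION_SCHEMA.values() for t in pair)


def is_valid_triple(subj_type: str, relation: str, obj_type: str) -> bool:
    """Accept a triple only if the relation exists and both types match."""
    expected = RELATION_SCHEMA.get(relation)
    return expected is not None and expected == (subj_type, obj_type)


candidates = [
    ("Company", "Acquired_By", "Company"),    # in schema
    ("Product", "Acquired_By", "Feature"),    # wrong argument types
    ("Company", "Partners_With", "Company"),  # relation not in schema
]
accepted = [c for c in candidates if is_valid_triple(*c)]
print(accepted)
```

Running every model output through a check like this is a cheap way to drop invented relation types before they reach your database.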

Step 2: Choose schema-based vs open-schema (and what zero-shot can/can’t do)

You have a fundamental choice: do you want a fixed list of types (Schema-based) or do you want the model to discover new types (Open-schema)?

When I’m under strict compliance constraints or populating a specific database table, I prefer schema-based extraction. It’s rigid but predictable. However, zero-shot entity extraction is powerful for exploring a new domain where you don’t know what you don’t know yet. Just remember: open schema requires heavy schema governance later to clean up the mess of synonyms.

Step 3: Prepare text so your model doesn’t fail for avoidable reasons

Garbage in, garbage out. If you are parsing PDFs, OCR errors can corrupt the text before your model ever sees it. A common trick I use: sample 50 documents and manually read the raw text. If you can’t read it, the model can’t either. Handle sentence splitting carefully too: if your splitter cuts the text in the wrong place, or you process each sentence in isolation, any relationship that spans the break is lost.
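One simple guard against losing cross-sentence relations is to feed the model overlapping windows of sentences instead of single sentences. The sketch below uses a deliberately naive regex splitter that I wrote for illustration. It will mis-split abbreviations such as “Inc.”, so treat it as a placeholder for whatever splitter you actually use.

```python
import re
from typing import List


def split_sentences(text: str) -> List[str]:
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def sentence_windows(sentences: List[str], size: int = 2) -> List[str]:
    """Join each run of `size` consecutive sentences into one chunk."""
    if len(sentences) <= size:
        return [" ".join(sentences)] if sentences else []
    return [
        " ".join(sentences[i:i + size])
        for i in range(len(sentences) - size + 1)
    ]


text = (
    "CloudCorp reported weaker Q3 revenue. "
    "The company blamed supply chain delays in Vietnam. "
    "Analysts expect a recovery next year."
)
sentences = split_sentences(text)
windows = sentence_windows(sentences)
for window in windows:
    print(window)
```

No single sentence here contains both “CloudCorp” and “supply chain delays”, but the first window does, so a relation model still gets to see them together.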

Step 4: Pick an approach (rule-based, classic ML, LLM, or hybrid)

If I only had a week to ship a prototype, I’d use an LLM with few-shot prompting. It’s slow and expensive at scale, but the accuracy is high out of the gate. For long-term production pipelines processing millions of documents, I look at training a smaller BERT-based model or a hybrid NLP approach where rules handle the obvious stuff (like dates and phone numbers) and models handle the ambiguity.
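Here is a rough sketch of the hybrid idea: regular expressions claim the obvious spans first, and model output is kept only where it does not collide with a rule. The two patterns cover just ISO dates and one US-style phone format, and `fake_model` is a hard-coded stand-in for a real NER model, so none of this is production-ready.

```python
import re
from typing import Callable, List, Tuple

Entity = Tuple[int, int, str, str]  # (start, end, label, text)

DATE_RE = re.compile(r"\b\d{4}-\d{2}-\d{2}\b")   # ISO dates, e.g. 2024-03-15
PHONE_RE = re.compile(r"\b\d{3}-\d{3}-\d{4}\b")  # e.g. 555-010-0199


def rule_entities(text: str) -> List[Entity]:
    found = []
    for label, pattern in (("DATE", DATE_RE), ("PHONE", PHONE_RE)):
        for match in pattern.finditer(text):
            found.append((match.start(), match.end(), label, match.group()))
    return found


def overlaps(a: Entity, b: Entity) -> bool:
    return a[0] < b[1] and b[0] < a[1]


def hybrid_extract(text: str, model_fn: Callable[[str], List[Entity]]) -> List[Entity]:
    """Rules win; model spans survive only if they touch no rule span."""
    rules = rule_entities(text)
    model = [e for e in model_fn(text) if not any(overlaps(e, r) for r in rules)]
    return sorted(rules + model)


def fake_model(text: str) -> List[Entity]:
    """Stand-in for a real NER model; returns hard-coded spans."""
    year = text.index("2024")
    return [(0, 9, "ORG", text[0:9]), (year, year + 4, "CARDINAL", "2024")]


text = "CloudCorp filed on 2024-03-15; call 555-010-0199."
entities = hybrid_extract(text, fake_model)
for entity in entities:
    print(entity)
```

The fake model's “2024” span is discarded because the date rule already covers those characters, which is exactly the division of labor described above.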

Step 5: Evaluate like a grown-up (before you ship)

Don’t just look at a few examples and say “looks good.” You need metrics. Calculate precision (are the found entities actually correct?) and recall (did we find all of them?). Combine them into an F1 score. I’ve seen systems with 99% precision that were useless because they had 10% recall—they were right when they spoke, but they stayed silent on most of the important data.
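For reference, here is a minimal sketch of those three metrics using exact-match scoring, where a predicted entity only counts if its offsets and type both match the gold annotation. The gold and predicted sets are toy data I made up.

```python
from typing import Set, Tuple

Entity = Tuple[int, int, str]  # (start, end, type)


def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def evaluate(predicted: Set[Entity], gold: Set[Entity]) -> Tuple[float, float, float]:
    true_positives = len(predicted & gold)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    return precision, recall, f1_score(precision, recall)


gold = {(0, 9, "ORG"), (25, 35, "METRIC"), (71, 78, "LOC")}
predicted = {(0, 9, "ORG"), (0, 19, "ORG")}  # one hit, one boundary error

precision, recall, f1 = evaluate(predicted, gold)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")

# The 99% precision / 10% recall system described above:
print(f"f1={f1_score(0.99, 0.10):.2f}")
```

That last line comes out at roughly 0.18, which is why F1 exposes a system that looks excellent on precision alone.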

Step 6: Store outputs so semantic connections stay queryable

Finally, where does the data go? If you are building a knowledge graph, a graph database (like Neo4j) is ideal. For search use cases, Elasticsearch works well if you flatten the relationships. The key is graph-ready output: ensuring every entity has a unique ID so you can traverse connections (e.g., find all companies connected to “Supplier X”).
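To show why unique IDs matter, here is a small in-memory sketch of the “find everything connected to Supplier X” query. It uses a plain adjacency dictionary rather than a real graph database, and every ID and relation in `triples` is invented for the example.

```python
from collections import defaultdict, deque
from typing import Dict, List, Set, Tuple

# (source_id, relation, target_id); all IDs are made up for illustration.
triples = [
    ("supplier_x", "supplies", "cloudcorp"),
    ("supplier_x", "supplies", "datacorp"),
    ("cloudcorp", "competes_with", "netcorp"),
    ("othercorp", "supplies", "farcorp"),
]


def build_adjacency(edges: List[Tuple[str, str, str]]) -> Dict[str, Set[str]]:
    graph: Dict[str, Set[str]] = defaultdict(set)
    for source, _relation, target in edges:
        graph[source].add(target)
        graph[target].add(source)  # treat edges as undirected for reachability
    return graph


def connected(graph: Dict[str, Set[str]], start: str, max_hops: int) -> Set[str]:
    """Breadth-first search: every node within `max_hops` of `start`."""
    seen = {start}
    queue = deque([(start, 0)])
    while queue:
        node, hops = queue.popleft()
        if hops == max_hops:
            continue
        for neighbor in graph.get(node, ()):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append((neighbor, hops + 1))
    return seen - {start}


graph = build_adjacency(triples)
print(sorted(connected(graph, "supplier_x", max_hops=1)))
print(sorted(connected(graph, "supplier_x", max_hops=2)))
```

If two mentions of the same supplier had been stored under different IDs, this traversal would silently split into two disconnected neighborhoods.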

Next-gen entity extraction techniques for richer semantic connections (what works and when)

The standard pipeline works for simple tasks, but if you are dealing with complex data, you need modern methods. Here are the entity extraction techniques that are currently pushing the boundaries of what’s possible.

| Technique | Solves | Best For | Complexity |
|---|---|---|---|
| Structure-Aware Decoding | Nested/Overlapping Entities | Legal, Medical, Finance | High |
| Retrieval-Based (ROC) | Limited Training Data | Complex Relations | Medium |
| Zero-Shot Open-Schema | Unknown Taxonomies | New Domains/Discovery | Low (Setup) |
| Joint EL + RE | Error Propagation | High-Speed Pipelines | High |

1) Structure-aware decoding for nested and overlapping entities

Standard models treat text as a flat sequence, which is why they fail on “[University of [Washington]]”. Structure-aware decoding introduces hierarchical constraints. It forces the model to recognize that an entity can exist inside another. This is crucial for tasks involving nested NER or overlapping entities. If you are in legal or biomedical fields, don’t start without this.
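To give a feel for the idea, here is a heavily simplified sketch of one possible structural constraint: candidate spans may nest inside each other, but they may not partially cross. Real structure-aware decoders are considerably more sophisticated. The `Candidate` type, the greedy strategy, and all the scores below are my own illustrative assumptions, and the scores would normally come from a trained span classifier.

```python
from typing import List, NamedTuple


class Candidate(NamedTuple):
    start: int   # token index, inclusive
    end: int     # token index, exclusive
    label: str
    score: float


def crosses(a: Candidate, b: Candidate) -> bool:
    """True when spans partially overlap without one containing the other."""
    return a.start < b.start < a.end < b.end or b.start < a.start < b.end < a.end


def decode(candidates: List[Candidate], threshold: float = 0.5) -> List[Candidate]:
    """Greedily keep high-scoring spans that nest or sit apart, never cross."""
    kept: List[Candidate] = []
    for cand in sorted(candidates, key=lambda c: c.score, reverse=True):
        if cand.score < threshold:
            break
        if not any(crosses(cand, k) for k in kept):
            kept.append(cand)
    return sorted(kept, key=lambda c: (c.start, -c.end))


tokens = ["Bank", "of", "America", "Merrill", "Lynch"]
candidates = [
    Candidate(0, 5, "ORG", 0.90),  # Bank of America Merrill Lynch
    Candidate(0, 3, "ORG", 0.85),  # Bank of America
    Candidate(3, 5, "ORG", 0.80),  # Merrill Lynch
    Candidate(2, 4, "ORG", 0.60),  # America Merrill: crosses two kept spans
    Candidate(1, 2, "ORG", 0.10),  # below threshold
]

for span in decode(candidates):
    print(" ".join(tokens[span.start:span.end]), span.label)
```

The outer organization and both inner ones survive together, while the crossing span “America Merrill” is rejected. A flat tagger that assigns one label per token cannot express that output at all.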

2) Retrieval-based frameworks (retrieval over classification) for relation extraction

Instead of training a classifier to pick from 50 fixed labels, retrieval over classification (ROC) treats relation extraction like a search problem. It matches the sentence context to a natural language description of each relationship, typically using an encoder trained with contrastive learning so that a sentence lands close to the description of the relation it expresses. If your relation types change frequently, this is a lifesaver because you don’t have to retrain the whole model. You just update the descriptions.
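The mechanics are easier to see in a toy version. In the sketch below I swap the learned encoder for a bag-of-words vector with cosine similarity, purely so the example runs without any model. The relation names, descriptions, and stopword list are all invented for illustration.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Tuple

STOPWORDS = {"a", "an", "the", "in", "of", "to", "or", "as", "and"}

RELATION_DESCRIPTIONS: Dict[str, str] = {
    "acquired": "one company acquired, bought, or purchased another company",
    "competes_with": "two companies compete as rivals in the same market",
    "supplies": "one company supplies parts or materials to another company",
}


def embed(text: str) -> Counter:
    """Bag-of-words vector; a real system would use a trained encoder."""
    words = re.findall(r"[a-z]+", text.lower())
    return Counter(w for w in words if w not in STOPWORDS)


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def rank_relations(sentence: str, descriptions: Dict[str, str]) -> List[Tuple[str, float]]:
    """Score the sentence against every description, best match first."""
    query = embed(sentence)
    scored = [(name, cosine(query, embed(desc))) for name, desc in descriptions.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


print(rank_relations("Google acquired YouTube.", RELATION_DESCRIPTIONS)[0])

# Adding a relation type is a data change, not a retraining job.
RELATION_DESCRIPTIONS["partners_with"] = "two companies signed a partnership agreement"
print(rank_relations("CloudCorp signed a partnership with DataCorp.", RELATION_DESCRIPTIONS)[0])
```

Word overlap is a crude proxy for semantic similarity, so this toy would miss paraphrases that a trained encoder handles. The point is the workflow: the new `partners_with` relation became available the moment its description was added.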

3) Zero-shot open-schema methods (e.g., ZOES) when you can’t predefine a taxonomy

Sometimes you don’t know the schema yet. Zero-shot entity extraction allows you to discover entities and relations without a predefined list. This is excellent for schema discovery in a new vertical. However, don’t rely on it for final production without a review loop, or you’ll end up with five different labels for “Acquisition”.
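A review loop can start as something very small: a synonym table that folds known variants into one canonical label and queues everything else for a human. The labels and synonyms below are made-up examples, not output from any particular model.

```python
from typing import Dict, Optional, Set

# canonical label -> raw labels seen in model output (all examples are made up)
SYNONYMS: Dict[str, Set[str]] = {
    "Acquisition": {"acquisition", "acquired", "buyout", "takeover", "m&a deal"},
    "Organization": {"organization", "company", "org", "firm"},
}

# Invert the table once so each lookup is a single dictionary access.
LOOKUP = {raw: canonical for canonical, raws in SYNONYMS.items() for raw in raws}


def normalize_label(raw_label: str) -> Optional[str]:
    """Map a raw label to its canonical form, or None if it needs review."""
    return LOOKUP.get(raw_label.strip().lower())


raw_labels = ["Acquired", "Takeover", "company", "Spin-off"]
normalized = {label: normalize_label(label) for label in raw_labels}
review_queue = [label for label, canonical in normalized.items() if canonical is None]

print(normalized)
print("needs review:", review_queue)
```

Anything that lands in `review_queue` is either a new synonym to add to the table or a genuinely new type worth promoting into the schema.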

4) Mixture-of-experts (MoE) + dependency parsing for varied relation types

Mixture-of-experts models route different parts of the extraction task to specialized sub-models. When combined with dependency parsing (which maps the grammatical structure of a sentence), these models excel at relation classification in complex sentences where the subject and object are far apart. It’s powerful, but harder to debug.

5) Semantic role labeling (SRL) and entity position modeling to reduce overlap errors

Semantic role labeling identifies the “who did what to whom” structure (predicate-argument). By using SRL embeddings, you give the model explicit hints about the role of each entity, which significantly improves accuracy in entity position modeling. It clarifies ambiguity in sentences like “Google acquired YouTube,” ensuring the direction of the relationship is correct.

6) Joint EL + RE with retriever–reader architectures (speed + consistency)

Joint entity linking and relation extraction handles both tasks in one pass. The modern retriever-reader architecture can achieve this with incredible speed—up to 40x faster inference has been reported in research benchmarks. If low latency is your priority (e.g., processing live news feeds), this is the architecture to investigate.

7) Hybrid Graph-ML + LLM + Knowledge Graph integration for multilingual pipelines

This is the cutting edge: combining graph machine learning with LLMs. The graph structure helps maintain consistency (reasoning), while the LLM handles the language understanding. This knowledge graph integration is particularly effective for multilingual NER, allowing you to transfer insights from high-resource languages (like English) to lower-resource ones (like Swahili) within the same graph structure.

Table: Which next-gen technique should I use? (decision matrix)

If I were starting a new project today, this is how I’d decide:

| Constraint | Recommended Technique | Implementation Hint |
|---|---|---|
| Nested/Overlapping Entities | Structure-Aware Decoding | Look for span-based models. |
| Relation Schema Changes Often | Retrieval-Based (ROC) | Write clear relation descriptions. |
| Zero Training Data | Zero-Shot / Open-Schema | Add a human review step. |
| High Speed Required | Joint EL + RE (Retriever-Reader) | Focus on inference latency. |

Tools and implementation: from prototype to production (without losing trust)

Knowing the theory is great, but eventually, you have to buy a tool or write code. The market is shifting toward enterprise SDKs that offer pre-trained models you can fine-tune securely.

Build vs buy: a beginner-friendly decision checklist

- Build in-house if your domain is highly specific (e.g., proprietary chemical formulas) or if data privacy prevents you from sending text to a cloud API. Be warned: the hidden cost is maintenance and labeling.

- Buy/Use SDK if you need speed, multilingual extraction, and standard entities (People, Places, Organizations). A good SDK handles the infrastructure scaling for you.

What modern extraction SDKs deliver (and what to verify)

Modern solutions are delivering high throughput and graph-ready outputs out of the box. When evaluating a vendor or open-source library, verify the taxonomy configurability. Can you add a custom entity type easily? Run a pilot with your messiest documents—that is the only test that matters.

Turning extracted entities into SEO-ready content intelligence

Once you have this structured data, you can do more than just analysis. You can power your content strategy. By understanding the entities in your niche and their relationships, you can build topic clusters that dominate search results. I use this data to create detailed content briefs. For example, if I’m using an AI article generator, I feed it the extracted entity graph to ensure factual depth. This is where an advanced AI content writer or SEO content generator truly shines—turning raw content intelligence into structured, authoritative articles that cover the topic completely, including internal linking opportunities you might have missed.

Common mistakes with entity extraction techniques (and how I fix them)

I’ve made plenty of mistakes deploying these systems. Here are the most common entity extraction pitfalls I see, so you can avoid them.

Mistake 1–2: Weak schema design + inconsistent labeling

If your annotators can’t agree on what a “Product” is, your model won’t either. Ambiguous labeling guidelines destroy performance. Taxonomy consistency is key. Fix this by writing a clear guideline document with examples of edge cases (e.g., “Is ‘Apple’ a product or a company in this sentence?”).

Mistake 3–4: Ignoring nested/overlapping entities + not storing evidence spans

Ignoring nested entities leads to data loss. If you flatten your data, you lose the nuance. And if you don’t store evidence spans (the exact snippet of text), your users won’t trust the data because they can’t verify it. Always keep the offsets.

Mistake 5–6: Shipping without the right evaluation + no monitoring loop

Shipping a model once is easy; keeping it working is hard. Data drift is real. If you don’t have model monitoring in place, you won’t know when your accuracy drops because the input data changed. Run sliced evaluation—check performance on each entity type separately.
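Sliced evaluation is simple to sketch: compute F1 separately for each entity type using F1 = 2·TP / (2·TP + FP + FN). The gold and predicted sets below are toy data I invented to show how a healthy-looking aggregate can hide one failing type.

```python
from collections import defaultdict
from typing import Dict, Set, Tuple

Entity = Tuple[str, int, int, str]  # (doc_id, start, end, type)


def f1_by_type(predicted: Set[Entity], gold: Set[Entity]) -> Dict[str, float]:
    """Exact-match F1 per entity type."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "fn": 0})
    for entity in predicted:
        counts[entity[3]]["tp" if entity in gold else "fp"] += 1
    for entity in gold - predicted:
        counts[entity[3]]["fn"] += 1

    scores = {}
    for entity_type, c in counts.items():
        denominator = 2 * c["tp"] + c["fp"] + c["fn"]
        scores[entity_type] = 2 * c["tp"] / denominator if denominator else 0.0
    return scores


gold = {
    ("d1", 0, 9, "ORG"), ("d1", 71, 78, "LOC"), ("d2", 4, 12, "ORG"),
    ("d2", 20, 30, "PRODUCT"), ("d3", 0, 6, "PRODUCT"),
}
predicted = {
    ("d1", 0, 9, "ORG"), ("d1", 71, 78, "LOC"), ("d2", 4, 12, "ORG"),
    ("d2", 20, 28, "PRODUCT"),  # boundary error: counts as a miss
}

scores = f1_by_type(predicted, gold)
for entity_type in sorted(scores):
    print(f"{entity_type:<8} F1={scores[entity_type]:.2f}")
```

In this toy data ORG and LOC are perfect while PRODUCT scores zero, a gap that a single pooled F1 of about 0.67 would blur. Tracking these per-type numbers on a fresh sample over time is one straightforward way to spot drift.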

Table: Mistake → what you’ll see → the fix

| Mistake | What You’ll See (Symptom) | The Fix |
|---|---|---|
| Inconsistent Labeling | Low precision, confusion on similar types | Create a “Gold Standard” dataset & guidelines. |
| Ignoring Overlaps | Missing entities in complex names | Switch to structure-aware or span-based models. |
| No Evidence Stored | Users doubting the data validity | Persist text offsets and source snippets. |
| No Monitoring | Sudden dashboard spikes/drops | Implement weekly drift checks on samples. |

FAQs about entity extraction and relation modeling (beginner-friendly answers)

What are the main challenges in entity extraction and relation modeling today?

The biggest entity extraction challenges are handling nested entities, maintaining multilingual robustness, and preventing error propagation in pipelines. Dealing with messy, unstructured data at scale while keeping the schema consistent is the operational hurdle most teams face. If this feels messy, that’s because real data is messy.

Why use retrieval-based frameworks instead of classification for relation extraction?

Retrieval-based frameworks allow you to match relations using natural language descriptions rather than fixed classes. This improves interpretability and makes the model more robust to new relation types without retraining. It captures relation semantics better than just assigning a class ID.

How do zero-shot approaches help in entity extraction?

Zero-shot and open-schema approaches let you extract entities in new domains without training a custom model. They are crucial for domain adaptation when you need to launch quickly. For example, if you enter a new vertical, zero-shot helps you discover the relevant entities on day one.

What advantages do mixture-of-experts models offer in entity–relation tasks?

Mixture-of-experts models route specific types of relations to specialized “expert” sub-models. This helps significantly with imbalanced datasets where some relations are rare. Combined with dependency parsing, it allows the model to handle complex sentence structures more effectively than a generalist model.

How can enterprise content workflows benefit from recent extraction SDKs?

Modern enterprise SDKs provide graph-ready outputs and easy integration into existing content workflows. They handle the heavy lifting of throughput and infrastructure, allowing your team to focus on using the data rather than maintaining the extraction pipeline.

Conclusion: how I’d choose the right entity extraction techniques next week

We’ve covered a lot, from basic pipelines to structure-aware decoding. Here is the reality: no single technique is perfect for every scenario.

- Recap: Traditional pipelines propagate errors; joint extraction reduces that risk, and structure-aware decoding recovers the nested entities that flat models miss.

- Recap: Always define your schema and use case before writing code.

- Recap: Evaluation (Precision/Recall) and monitoring are not optional.

If I were you, I would start small. Pick one pilot project, maybe analyzing customer support tickets, and choose your entity extraction techniques based on that specific data. Run a test, measure the results, and iterate. The semantic web is built one connection at a time.