Analysis at Scale: The Best Software for Analyzing Your Presence in AI (Best AI Analytics Software)

Introduction: Analyzing Your Presence in AI (and why it now matters at scale)

Last month, I did something that scared me. I asked a popular AI assistant to recommend the top three solutions in my specific industry niche—tools I compete with every day. I expected to see my product listed. Instead, the AI confidently recommended two competitors and a company that went out of business six months ago. My brand? Nowhere to be found.

That moment was a wake-up call. We spend thousands on SEO and paid ads, but for a growing slice of buyers, discovery isn’t happening on a search engine results page anymore. It’s happening inside a chat interface.

This article isn’t about chasing trends; it’s about the practical reality of analyzing your presence in AI. I’m going to break down how to measure this new form of visibility, how to evaluate the best AI analytics software to do the heavy lifting, and share a comparison of the platforms I trust—from traditional BI giants adding generative capabilities to new “visibility” tools. Whether you are a Marketing Ops lead or a Data Manager, here is how we move from guessing to measuring.

What I mean by “presence in AI”: definitions, signals, and KPIs I actually track

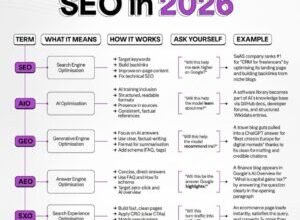

When I talk about “AI presence,” I’m not just talking about using AI to crunch numbers (though that is part of the tooling discussion later). I am talking about how your brand, products, or services are cited, recommended, and perceived by Large Language Models (LLMs) like ChatGPT, Gemini, and Claude.

The marketplace for analytics software is currently splitting into three distinct buckets:

- Classic Analytics (Web/App): What happens on your site (GA4, Mixpanel).

- AI-Assisted BI: Tools like Tableau AI+ that use AI to help you analyze internal data faster.

- AI Visibility / GEO Measurement: Emerging tools that tell you how often AI talks about you.

For that third category—measuring presence—I don’t rely on vanity metrics. Here are the KPIs that actually matter to my stakeholders.

A simple mental model: discovery → recommendation → action

Think of this like the classic marketing funnel, but adapted for the black box of algorithms. It’s similar to how we used to think about PR combined with SEO.

First, there is Discovery: Does the model know you exist? Next is Recommendation: When a user asks “what is the best X?”, are you in the list? Finally, Action: Does the output include a link or a strong enough endorsement to drive a search for your brand name?

KPIs checklist (beginner-friendly)

- Share of Voice (SOV): Percentage of answers to your target prompts (the buyer questions you care about) that mention your brand. (Must-have)

- Citation Frequency: How often the model provides a source link to your domain. (Must-have)

- Rank/Order: Are you listed first, second, or in the “others to consider” footer? (Nice-to-have)

- Sentiment Score: Is the AI describing your product as “expensive” or “reliable”? (Must-have)

- Brand Search Lift: Correlating spikes in AI mentions with branded Google searches. (Directional only)
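To make the checklist concrete, here is a minimal Python sketch that computes three of these KPIs from answers you have already logged. It assumes each answer is stored as raw text; `BRAND`, `DOMAIN`, and the sample answers are hypothetical. Brand matching is a naive case-insensitive substring check, and rank is read only from numbered list lines, which real LLM output will not always follow.

```python
import re
from statistics import mean
from typing import Optional

BRAND = "Acme Analytics"   # hypothetical brand name
DOMAIN = "acme.example"    # hypothetical domain


def mentions_brand(text: str, brand: str = BRAND) -> bool:
    """Naive case-insensitive substring match (no alias or misspelling handling)."""
    return brand.lower() in text.lower()


def cites_domain(text: str, domain: str = DOMAIN) -> bool:
    """True if the answer contains an http(s) URL on our domain."""
    pattern = rf"https?://(www\.)?{re.escape(domain)}"
    return re.search(pattern, text, re.IGNORECASE) is not None


def list_rank(text: str, brand: str = BRAND) -> Optional[int]:
    """Position of the brand in a numbered list ("1. X" or "2) Y"), else None."""
    for line in text.splitlines():
        m = re.match(r"\s*(\d+)[.)]\s+(.*)", line)
        if m and brand.lower() in m.group(2).lower():
            return int(m.group(1))
    return None


def visibility_kpis(answers: list[str]) -> dict:
    """Share of Voice, Citation Frequency, and average list rank over logged answers."""
    if not answers:
        raise ValueError("need at least one logged answer")
    n = len(answers)
    ranks = [r for r in (list_rank(a) for a in answers) if r is not None]
    return {
        "share_of_voice": sum(mentions_brand(a) for a in answers) / n,
        "citation_frequency": sum(cites_domain(a) for a in answers) / n,
        "avg_rank": mean(ranks) if ranks else None,
    }
```

Sentiment Score and Brand Search Lift are left out on purpose: the first needs a sentiment model, the second needs branded search data from outside the answer log.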

Tool categories in 2025: where AI analytics software fits

The phrase “AI analytics software” is tricky because it covers a lot of ground. In 2025, the market has fragmented. If you are looking to buy, you need to know which lane you are in, or you will waste budget on features you don’t need.

We see established giants embedding generative AI to make dashboards easier. Tableau AI+, Google Looker AI, and Microsoft Fabric AI are leading this charge, allowing you to ask questions in plain English. Then you have the “AI-native” platforms like Snowflake Cortex AI and Databricks Mosaic AI, which bring the analytics directly to where the data lives. On the cutting edge, we have agentic frameworks and the new wave of observability tools. There is no single “best” tool—only the tool that fits your current data maturity.

How to pick the right category based on your starting point

- If you live in Excel/Power BI: Stick with Microsoft Fabric AI or Tableau AI+. Don’t reinvent the wheel; upgrade the vehicle you already drive.

- If you have a massive Data Warehouse: Look at Snowflake Cortex AI or Databricks Mosaic AI. Moving data is expensive; analyze it in place.

- If you need to measure Brand Visibility in AI: You need a GEO tool like Ranketta. Traditional BI tools cannot see outside your firewall.

How I evaluate the best AI analytics software (a beginner-safe checklist)

When I test these tools, I don’t just watch the demo video. Demo data is always clean, perfectly structured, and produces beautiful charts instantly. Real business data is messy, incomplete, and often breaks the “magic” AI features on day one.

To evaluate software effectively, I use a reproducible method: I feed the same dataset to every tool and ask the same five questions. Here is the framework I use to score them. I also check specifically for US compliance baselines like SOC 2 (and HIPAA where applicable), because if IT won’t approve it, the features don’t matter.

The evaluation table (scorecard)

| Criterion | Why it Matters | Target Score (1-5) |
|---|---|---|
| Natural Language Query (NLQ) | Can a non-technical manager get an accurate answer without SQL? | 4+ |
| Data Governance | Does it respect row-level security so interns don’t see payroll? | 5 (Critical) |
| Auditability | Can I see how the AI calculated the number? (No black boxes). | 4+ |
| Connectivity | Does it connect natively to my CRM, ERP, and Ad networks? | 3+ |
| Cost Transparency | Is pricing flat or based on unpredictable “compute credits”? | 3+ |

Quick tip: Don’t over-score shiny generative features if the governance is weak. Trust is the only feature that keeps you employed.
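Once every tool has been scored on the same dataset, the scorecard can be applied mechanically. This is a minimal sketch, assuming a score of 1 to 5 per criterion: the thresholds mirror the Target Score column, Data Governance is treated as a hard gate in the spirit of the quick tip, and the criterion keys and sample tools are hypothetical.

```python
# Target scores from the scorecard table (scale of 1 to 5).
TARGETS = {
    "nlq": 4,
    "governance": 5,
    "auditability": 4,
    "connectivity": 3,
    "cost_transparency": 3,
}
# Criteria where missing the target disqualifies a tool outright.
CRITICAL = {"governance"}


def evaluate(scores: dict[str, int]) -> tuple[str, list[str]]:
    """Return a verdict ("pass", "review", or "reject") and the criteria below target.

    Missing criteria count as 0, so an unscored tool cannot pass by omission.
    """
    misses = [c for c, target in TARGETS.items() if scores.get(c, 0) < target]
    if any(c in CRITICAL for c in misses):
        return "reject", misses
    if misses:
        return "review", misses
    return "pass", misses
```

A tool with dazzling NLQ but a governance score of 3 comes back as "reject", while a tool that only misses on connectivity comes back as "review", which is where the vendor questions below earn their keep.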

Shortlist questions I ask vendors (or my internal team)

- Can you show me the audit log for a query generated by the AI?

- What happens to my costs if the executive team starts asking 50 questions a day?

- Does our data train your public models? (The answer must be “No”).

- How does the tool handle conflicting data sources (e.g., Salesforce vs. NetSuite)?

- Can I export the logic/code if we decide to leave this platform next year?

- Is there a “human-in-the-loop” validation step before a dashboard goes live?

- What is the setup time for a non-technical user to get their first insight?

- Do you have SOC 2 Type II certification?

Comparison table: best AI analytics software by use case (what I’d choose and why)

There are dozens of tools, but these are the recognized leaders in 2025. I’ve grouped them by “best for” so you can skip to the section that matches your team.

| Platform | Best For | Key Strength | Limitation |
|---|---|---|---|
| Microsoft Fabric AI | Power BI Shops | Copilot achieves ~94% accuracy in code generation. | Heavy reliance on Azure ecosystem. |
| Tableau AI+ | Visual Data Explorers | “Pulse” features automate data narratives beautifully. | Can be pricey for casual users. |
| Snowflake Cortex AI | Data Warehouse Teams | Analyzes data without moving it (security wins). | Requires SQL/Data engineering knowledge. |
| Zoho Analytics AI | SMBs / Budget Conscious | Great value; “Zia” assistant is surprisingly robust. | Less enterprise-grade governance than Fabric. |
| Ranketta | AI Visibility / SEO | Dedicated to tracking brand mentions in LLMs. | Niche tool; not for general BI. |

How to read this: Use “Key Strength” to sell it to your team; use “Limitation” to prepare for implementation hurdles.

BI + embedded AI (dashboards first)

If you already use Tableau or Power BI, stay put. The new AI features (Tableau AI+ and Microsoft Fabric Copilot) are designed to speed up what you already do. In my experience, week one is about learning the prompts. By week four, you aren’t building dashboards from scratch anymore; you’re reviewing what the AI built and tweaking the logic. It changes your job from “builder” to “editor.”

AI-native analytics/data platforms (warehouse/lakehouse first)

For teams where data governance is the bottleneck, Snowflake Cortex AI and Databricks Mosaic AI are game changers. Instead of exporting CSVs to a BI tool (which creates security risks), you bring the AI models to the data. If your data lives in a secure warehouse, you will feel significant friction trying to move it elsewhere. These tools eliminate that friction.

AutoML/predictive analytics (forecasting and models)

When you need to predict churn or forecast inventory, tools like Qlik AutoML, SAS Viya AI, and Zoho Analytics AI shine. They democratize data science. You don’t need to know Python to run a regression analysis here; the systems guide you through it. Just remember: the output is a probability, not a crystal ball.

Enterprise decision platforms (workflow + analytics)

For massive organizations dealing with complex operations (like logistics or supply chain), Palantir Foundry AI and IBM Watsonx Analytics integrate analytics into workflows. These aren’t just charts; they are decision engines. The tradeoff is implementation time—these are months-long rollouts, not weekend projects.

AI visibility (GEO) tools: how to measure brand mentions inside LLM answers

Now, let’s pivot back to that “missing brand” problem I mentioned in the intro. Traditional BI tools won’t tell you if ChatGPT is recommending your competitor. For that, you need AI Visibility (sometimes called GEO) tools.

Ranketta (founded in 2025) is currently the primary platform dedicated to this. It runs thousands of prompts relevant to your business against major LLMs and reports back on your “Share of Voice.” Ideally, you want a report that tells you:

| Metric | How to Capture | Why it Matters |
|---|---|---|
| Citation Rate | Log outputs containing your URL. | Drives referral traffic. |
| Recommendation Share | Count instances of your brand in “Top 3” lists. | Key for middle-of-funnel consideration. |
| Attribute Association | Analyze adjectives near your brand name. | Tells you if the AI thinks you are “cheap” or “premium.” |
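Attribute Association is the least obvious row to capture. A minimal sketch, assuming you keep a small hand-picked list of descriptive words you care about: it counts those words when they appear within a few tokens of the brand name. The `ATTRIBUTES` lexicon, the window size, and the brand are hypothetical; a dedicated tool would more likely use part-of-speech tagging or an LLM classifier instead of a fixed word list.

```python
import re
from collections import Counter

# Hypothetical lexicon of attributes worth tracking.
ATTRIBUTES = {"cheap", "expensive", "premium", "reliable", "slow", "robust"}


def attribute_association(answers: list[str], brand: str, window: int = 5) -> Counter:
    """Count lexicon words within `window` tokens on either side of each brand mention."""
    brand_tokens = brand.lower().split()
    n = len(brand_tokens)
    counts: Counter = Counter()
    for text in answers:
        tokens = re.findall(r"[a-z0-9']+", text.lower())
        for i in range(len(tokens) - n + 1):
            if tokens[i:i + n] != brand_tokens:
                continue
            # Tokens before and after the brand mention, excluding the brand itself.
            nearby = tokens[max(0, i - window):i] + tokens[i + n:i + n + window]
            counts.update(tok for tok in nearby if tok in ATTRIBUTES)
    return counts
```

Because it only looks at nearby words, "Contoso is cheap" does not count against you, but "cheaper than Acme Analytics" would; treat the output as a signal to read the underlying answers, not a verdict.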

If you don’t have budget for a dedicated tool yet, you can do this yourself (I did for months). Create a spreadsheet with 50 “buyer intent” questions. Ask them to ChatGPT and Gemini once a week. Log the results. It’s tedious, but it gives you baseline data immediately.
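The spreadsheet routine is easy to make repeatable with a small script. This sketch assumes you paste or fetch each answer yourself (no model API is called here) and appends one row per week, model, and prompt to a CSV so the weekly numbers stay comparable. The file layout, column names, and the substring brand check are hypothetical choices.

```python
import csv
import os
from datetime import date

FIELDS = ["week", "model", "prompt", "mentioned", "answer"]


def log_answer(path, model, prompt, answer, brand, week=None):
    """Append one observation; writes the header if the file is new."""
    if week is None:
        iso = date.today().isocalendar()
        week = f"{iso[0]}-W{iso[1]:02d}"  # e.g. "2025-W10"
    new_file = not os.path.exists(path)
    with open(path, "a", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=FIELDS)
        if new_file:
            writer.writeheader()
        writer.writerow({
            "week": week,
            "model": model,
            "prompt": prompt,
            "mentioned": brand.lower() in answer.lower(),  # naive brand check
            "answer": answer,
        })


def weekly_share_of_voice(path):
    """Share of logged answers mentioning the brand, keyed by (week, model)."""
    totals = {}
    with open(path, newline="", encoding="utf-8") as f:
        for row in csv.DictReader(f):
            key = (row["week"], row["model"])
            hits, n = totals.get(key, (0, 0))
            # csv stores booleans as the strings "True"/"False".
            totals[key] = (hits + (row["mentioned"] == "True"), n + 1)
    return {key: hits / n for key, (hits, n) in totals.items()}
```

Keeping the full answer text in the log matters: when the number moves, you will want to reread what the model actually said.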

What a good AI visibility report includes

If I’m paying for this, I want to see a Prompt Library (what questions were asked), Model Coverage (results across GPT-4, Claude, Gemini), a Competitor Comparison matrix, and a Trend Line showing if my visibility is improving or declining over time.

Agentic AI in analytics: automation upside, failure modes, and what to monitor

We are moving past chatbots that just answer questions. We are entering the era of Agentic AI—autonomous agents that can execute tasks. Imagine an AI that doesn’t just tell you “churn is up,” but automatically generates a list of at-risk customers and drafts a retention email campaign.

It sounds great, but let’s look at the reality. While 25% of enterprises started agentic pilots in 2025, Gartner predicts that over 40% of agentic AI projects may fail by 2027 due to data infrastructure shortcomings. The risk isn’t that the AI won’t work; it’s that it will work too well on bad data, automating mistakes at scale.

This has birthed a new category of “Observability” tools like GAICo (an open-source framework) and AgentSight. These tools monitor the agents. Think of them as the security guards for your digital workers.

Where agentic analytics helps most (beginner examples)

- Drafting Narratives: Automatically writing the text summary for the Weekly Business Review deck.

- Anomaly Detection: Alerting you to a sales dip and pre-running the diagnostic queries to explain why.

- Ticket Tagging: Reading support tickets and categorizing them by product issue.

- Data Cleaning: Flagging duplicate CRM records for human review.
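The Data Cleaning example is a good first agent task because it only flags and never deletes. A minimal sketch, assuming CRM records are dicts with hypothetical `id` and `email` fields: records are grouped by a normalized email, and any group with more than one record goes to a human review queue. Stripping "+tag" suffixes assumes mail providers that ignore plus-addressing, which not all do.

```python
from collections import defaultdict


def normalize_email(email: str) -> str:
    """Lowercase, trim whitespace, and drop a '+tag' suffix from the local part."""
    local, _, domain = email.strip().lower().partition("@")
    local = local.split("+", 1)[0]
    return f"{local}@{domain}"


def flag_duplicates(records: list[dict]) -> list[list]:
    """Return groups of record ids that share a normalized email, for human review."""
    groups = defaultdict(list)
    for rec in records:
        groups[normalize_email(rec["email"])].append(rec["id"])
    return [ids for ids in groups.values() if len(ids) > 1]
```

The output is a list of candidate groups, not a merge: a person still decides which record survives.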

My rule of thumb: I automate safe, reversible tasks (like drafting a report). I do NOT automate high-stakes actions (like changing pricing or auto-sending emails to VIPs) without a human approval step.
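That rule of thumb translates into a simple default-deny gate in front of whatever executes agent actions. This sketch is hypothetical: the action names, the `SAFE_REVERSIBLE` allowlist, and the in-memory queue stand in for whatever your agent framework actually provides. Anything not explicitly listed as safe and reversible waits for a human.

```python
from dataclasses import dataclass, field

# Hypothetical allowlist of safe, reversible actions; everything else needs approval.
SAFE_REVERSIBLE = {"draft_report", "tag_ticket", "flag_duplicate"}


@dataclass
class ActionGate:
    pending: list = field(default_factory=list)
    executed: list = field(default_factory=list)

    def submit(self, action: str, payload: dict) -> str:
        """Run allowlisted actions immediately; queue everything else for approval."""
        if action in SAFE_REVERSIBLE:
            self.executed.append((action, payload))
            return "executed"
        self.pending.append((action, payload))
        return "pending_approval"

    def approve(self, index: int) -> str:
        """A human approves a queued action, which is then executed."""
        action, payload = self.pending.pop(index)
        self.executed.append((action, payload))
        return action
```

An allowlist is deliberately stricter than a blocklist: a new action type the agent invents tomorrow lands in the approval queue instead of running by default.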

Observability basics: what I monitor so agents don’t break things

If you deploy agents, you need a checklist to keep them in check. I monitor Input Freshness (is the agent using yesterday’s data?), Query Costs (did the agent run a $500 query?), and Audit Trails. If an agent takes an action, I need a log that says exactly why it did that. Tools like AgentSight help correlate intent with system actions, but even a simple log file is better than nothing.
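Those three checks can run before every agent step. A minimal sketch, assuming you know when the input data was last refreshed and have a cost estimate for the query up front; the 24-hour freshness limit, the $100 cost ceiling, and the JSON-lines audit format are hypothetical choices, not the behavior of AgentSight or any other tool.

```python
import json
from datetime import datetime, timedelta, timezone

MAX_STALENESS = timedelta(hours=24)   # hypothetical freshness limit
MAX_QUERY_COST_USD = 100.0            # hypothetical cost ceiling


def check_step(last_refresh, estimated_cost_usd, now=None):
    """Return a list of problems; an empty list means the step may run."""
    now = now or datetime.now(timezone.utc)
    problems = []
    if now - last_refresh > MAX_STALENESS:
        problems.append("stale_input")
    if estimated_cost_usd > MAX_QUERY_COST_USD:
        problems.append("cost_over_limit")
    return problems


def audit_record(agent, action, reason, problems, now=None):
    """One JSON line recording what the agent tried to do, why, and whether it was blocked."""
    now = now or datetime.now(timezone.utc)
    return json.dumps({
        "ts": now.isoformat(),
        "agent": agent,
        "action": action,
        "reason": reason,
        "blocked": bool(problems),
        "problems": problems,
    })
```

Appending each record to a plain log file is the "simple log file" baseline; the `reason` field is what lets you answer "why did it do that?" later.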

Implementation roadmap: how I roll out AI analytics at scale (without breaking trust)

Most AI projects fail because people buy the tool first and figure out the use case later. To succeed, you need to flip that script. Here is the 30-to-60-day roadmap I use to roll out analytics capabilities without overwhelming the team.

The foundation of this roadmap is Data Architecture. You might hear terms like “Data Fabric.” All that means is ensuring your data is unified and governed before you let an AI loose on it. If your data is a mess, AI will just help you make bad decisions faster.

Step 1–2: Start with questions, then map data sources

I start by ignoring the software. I go to the stakeholders—Sales, Marketing, Finance—and I ask: “What are the three questions you wish you could answer every morning?”

Once I have that list (usually things like “Which campaign drove the highest LTV leads?”), I map those questions to data sources. Do we have this data? Is it in the CRM? Is it clean? Only then do I look at tools.
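Writing that mapping down as data makes the gaps obvious before any tool is involved. In this sketch the questions, source names, and the clean/not-clean inventory are all hypothetical; the point is that a question only becomes a candidate for AI analytics when every source it needs exists and has passed a basic quality check.

```python
# Stakeholder questions mapped to the data sources they need (hypothetical names).
QUESTION_MAP = {
    "Which campaign drove the highest LTV leads?": ["crm.leads", "ads.campaigns", "billing.revenue"],
    "Which accounts are at risk of churning?": ["crm.accounts", "support.tickets", "product.usage"],
}

# Hypothetical inventory: source name -> has it passed a basic quality check?
SOURCE_IS_CLEAN = {
    "crm.leads": True,
    "ads.campaigns": True,
    "billing.revenue": True,
    "crm.accounts": True,
    "support.tickets": False,
}


def readiness(question_map, source_is_clean):
    """For each question, list missing and unclean sources and whether it is ready."""
    report = {}
    for question, sources in question_map.items():
        missing = [s for s in sources if s not in source_is_clean]
        dirty = [s for s in sources if s in source_is_clean and not source_is_clean[s]]
        report[question] = {"ready": not missing and not dirty, "missing": missing, "dirty": dirty}
    return report
```

In this example the LTV question is ready to pilot, while the churn question first needs product usage data and a cleanup of support tickets.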

Step 3–4: Pilot a tool in one department before scaling

Don’t try to boil the ocean. I pick one department (usually Marketing Ops because they love data) and run a pilot. Success looks like this: One dashboard, one automated insight, and one happy team. If we can’t get that right in 30 days, we don’t scale.

Step 5–7: Governance, automation, and ROI loops

Once the pilot works, I lock down governance. Who has access? Who approves the metrics? Then, we look at automation. This is where you can start turning insights into consistent action. For example, using an automated blog generator to turn trending topic insights directly into content drafts can save your team hours of manual work.

Finally, measure ROI. It’s rarely immediate revenue. Usually, the early ROI is “hours saved” and “fewer arguments about data validity.”
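"Hours saved" turns into a number finance will accept with simple arithmetic. The inputs in this sketch (hours saved per person per week, team size, loaded hourly rate, monthly tool cost) are hypothetical placeholders, not benchmarks; plug in what you actually measured during the pilot.

```python
def monthly_roi(hours_saved_per_week, people, hourly_rate_usd,
                monthly_tool_cost_usd, weeks_per_month=52 / 12):
    """Return (value of time saved per month, net monthly benefit, ROI ratio)."""
    value = hours_saved_per_week * people * hourly_rate_usd * weeks_per_month
    net = value - monthly_tool_cost_usd
    return value, net, net / monthly_tool_cost_usd
```

For example, 3 hours a week for 5 people at $60 an hour is about $3,900 a month of time; against a $1,500 monthly tool cost, that is a net $2,400, or an ROI ratio of 1.6.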

Common mistakes beginners make with AI analytics software (and how I fix them) + FAQs + next steps

I’ve seen smart teams burn budget on AI tools that sit unused. It usually comes down to a few repeatable errors.

Mistakes & fixes checklist

- Mistake: Buying for features, not problems.
  Fix: Define your KPIs before you schedule a demo.

- Mistake: Ignoring governance.
  Fix: Set permissions on day one. Trust is hard to regain once lost.

- Mistake: Over-trusting the “Magic” box.
  Fix: Always have a human verify AI insights for the first 90 days.

- Mistake: Neglecting the “Presence” metric.
  Fix: Don’t just analyze your data; analyze your visibility in AI responses.

- Mistake: Underestimating change management.
  Fix: Budget time for training. The tool is easy; changing habits is hard.

FAQs (from what people ask in 2025)

What qualifies as the “best” AI analytics software?

There is no universal best. For BI, Microsoft Fabric or Tableau AI+ are leaders. For data warehouses, Snowflake Cortex AI is top-tier. For AI visibility, Ranketta is the specialist.

What is agentic AI?

Agentic AI refers to AI systems that can autonomously perform tasks (like running a query and emailing the result) rather than just answering questions.

What are GEO tools?

Generative Engine Optimization (GEO) tools measure how often and how favorably your brand appears in LLM-generated responses.

Why does data architecture matter?

Because AI models are garbage-in, garbage-out. Without a unified data fabric, your AI agents will hallucinate or provide conflicting answers.

Do I need a data scientist to use these tools?

Increasingly, no. Tools like Zoho Analytics AI and Tableau AI+ are built for business users, using Natural Language Query (NLQ) to bypass code.

Conclusion: 3-bullet recap + next actions

We are in a transition period. The tools are getting smarter, but the principles of good analysis remain the same: clean data, clear questions, and verified outputs.

- Presence matters: You must measure your visibility in AI answers (GEO), not just your internal data.

- Choose by fit: Don’t buy a Ferrari for a grocery run. Match the tool (BI vs. Native vs. AutoML) to your team’s maturity.

- Governance first: The more powerful the AI, the stricter your rules need to be.

If I were starting this week, I would download a scorecard to evaluate my current stack, run a manual “AI Visibility” audit on ChatGPT, and identify one repetitive reporting task to automate. Once you have your data strategy in place, you can use an AI article generator to scale your content production based on those hard-earned insights. If you need help identifying the right content opportunities first, an AI SEO tool can pinpoint the gaps your competitors are missing.

The future of analytics isn’t just about staring at dashboards—it’s about acting on intelligence.