Tracking topical authority in the world of large language models (LLMs): a beginner-friendly guide

It happened during a routine client call last month. We were reviewing a solid quarter of organic growth—traffic was up, rankings were stable—when the marketing director paused and asked, "That’s great, but I just asked ChatGPT about the top vendors in our space, and it didn’t even mention us. Why?"

That moment clarified a shift that I—and likely many of you—have felt coming for a while. We aren’t just optimizing for Google’s ten blue links anymore. We are optimizing for the topical authority that feeds the answers in ChatGPT, Gemini, and Perplexity.

If you are a content lead or SEO manager, you know the pressure. You need to prove that your content isn’t just filler; it needs to be the definitive source. But how do you measure that? How do you track visibility in a black box like an LLM?

In this guide, I’ll walk you through a practical, newsroom-grade framework to measure topical authority. We will look at the specific metrics that matter for both SEO and AI search, and I’ll share the step-by-step workflow I use to keep our content strategy on track. We’ll cut through the hype and focus on what you can actually control: structure, clarity, and consistency.

What “topical authority” means in an LLM-first world (and what it doesn’t)

I like to think of topical authority as "beat reporting" in a newsroom. A beat reporter doesn’t just write one viral article about a subject and move on. They cover the subject from every necessary angle—trends, key players, legislation, and history—until they are the go-to source for that topic.

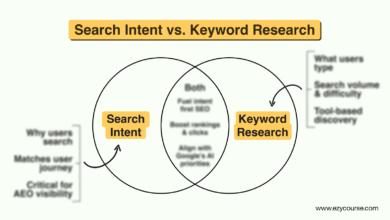

In the past, SEO topical authority was largely about keywords and backlinks. If you had enough high-authority sites linking to you and you used the right terms, Google trusted you.

In an LLM-first world, it is different.

Topical authority for AI is about semantic coverage and entity relationships. LLMs ingest massive amounts of text and learn how concepts relate to one another. If your brand is consistently associated with specific topics (e.g., "payroll compliance" or "enterprise cybersecurity") across credible sources, the model is more likely to treat you as a relevant part of the answer.

Here is my personal rule of thumb: If I can’t map my articles to a clear topic map, I’m not building authority—I’m just publishing.

To clarify exactly what we are dealing with:

- It Is: A measurable density of high-quality, interconnected content that covers a subject exhaustively.

- It Is Not: Just publishing 100 AI-generated posts with the same keyword stuffed into the H1.

- It Is: Being cited because your content provides unique data or logical structure that the model finds useful.

- It Is Not: Guaranteed visibility just because you rank #1 on Google.

Quick answer: topical authority for SEO vs topical authority for LLMs

While the two concepts overlap, the metrics for success differ slightly. SEO focuses on retrieval (finding the best page), while LLMs focus on generation (constructing the best answer).

- SEO Authority: Measured by rankings, organic traffic, and backlink profile strength. It relies heavily on external validation (links).

- LLM Authority: Measured by citations, brand mentions in generated answers, and semantic proximity. It relies heavily on content depth, accuracy, and consistent patterns across the web.

How LLMs decide whether a source is authoritative (signals you can actually influence)

Let’s be honest: we don’t know the exact weights inside GPT-4 or Gemini’s algorithms. However, by observing how these models retrieve and synthesize information, we can identify specific signals that we can influence.

When I audit a page for "LLM friendliness," I’m not looking for keywords. I’m looking for logical structure that makes it easy for a machine to parse facts and relationships. Here is what I look for:

- Semantic Richness: Does the content use the correct vocabulary and related entities naturally? (e.g., discussing "project management" alongside "agile," "kanban," and "sprint planning").

- Entity Relationships: Is the brand clearly linked to the service it provides? (e.g., "[Brand Name] is a leading provider of [Service].")

- Logical Structure: Are headings (H2s, H3s) questions or clear statements of fact? LLMs rely on structure to understand hierarchy.

- Citations and Sourcing: Does the content cite external, authoritative sources? This signals research depth.

- Consistency: Do you contradict yourself across different pages, or is your definition of a core concept stable?

There is also a growing focus on trust frameworks. Concepts like COMPASS (looking at Compliance, Privacy, and Security) are emerging as ways enterprises evaluate if an LLM is safe to use, but they also reflect how LLMs themselves might eventually filter sources—prioritizing those that align with safety and accuracy standards. While we can’t force an LLM to cite us, optimizing these signals significantly improves the odds.

Why businesses should care: visibility, trust, and downstream pipeline impact

It is easy to dismiss this as "future tech," but the business impact is already here. Your potential buyers are using AI to build their shortlists.

- Marketing Impact: If you aren’t in the AI’s answer, you are invisible to a growing segment of searchers who prefer chat over clicking links.

- Sales Enablement: Prospects often fact-check sales claims with AI. If the AI confirms your authority, that’s instant third-party validation.

- Support & Compliance: Accurate presence in AI models means your customers get better automated answers when they ask questions about your product category.

A unified measurement framework: tracking topical authority across SEO + LLMs

The biggest frustration for intermediate SEOs is the lack of a dashboard. Google Search Console (GSC) gives us one view; AI gives us… silence. To fix this, I’ve developed a unified framework that tracks both sides of the coin.

I don’t try to track everything. Instead, I focus on Ranking Breadth (how many related terms we rank for) and LLM Citations (how often we appear in AI answers). A pattern that often gets cited as a sign of real topical authority: a single URL growing from ranking for 5 keywords to 47 related terms. That kind of expansion suggests Google sees you as an authority on the concept, not just the word.

Here is the framework I use to report to leadership:

Table: SEO metrics vs LLM visibility metrics (what to track and where)

| Metric Category | What it Measures | Where to Pull It | What "Good" Looks Like | Common Pitfall |
|---|---|---|---|---|
| Ranking Breadth | Volume of related keywords a single page ranks for. | GSC / Ahrefs / Semrush | Ranking for 20+ long-tail variants of the main topic. | Only tracking the "head term" (e.g., just "CRM" vs "CRM for small business"). |
| Cluster Coverage | Percentage of the "topic map" actually published. | Manual Audit / Project Mgmt Tool | 1 Pillar + 6-10 supporting cluster posts interlinked. | Publishing random posts that don’t link back to the pillar. |
| LLM Citations | Frequency of brand mentions in AI-generated answers. | AmICited / Manual Prompts | Appearing in "Top 5" lists generated by ChatGPT/Perplexity. | Expecting 100% attribution; AI sources are volatile. |
| Internal Link Health | Crawlability and connection between cluster pages. | Site Audit Tools (Screaming Frog) | Zero orphan pages in the cluster. | Links that are buried in footers instead of contextual body text. |
| Engagement | Time on page & Scroll depth. | GA4 / Microsoft Clarity | High time-on-page for long-form guides. | Confusing "bounce rate" with "user found the answer quickly." |
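The Cluster Coverage row is plain arithmetic: published cluster posts divided by planned cluster posts. Here is a minimal Python sketch, assuming you keep the topic map as a list of planned URL slugs. The `TopicMap` class and the slugs (borrowed from the onboarding example in the workflow below) are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class TopicMap:
    """A pillar page plus the cluster posts planned around it (hypothetical structure)."""
    pillar: str
    planned_clusters: list[str]
    published: set[str] = field(default_factory=set)

    def coverage(self) -> float:
        """Percentage of planned cluster posts that are already published."""
        if not self.planned_clusters:
            return 0.0
        done = sum(1 for slug in self.planned_clusters if slug in self.published)
        return 100.0 * done / len(self.planned_clusters)

    def missing(self) -> list[str]:
        """Planned cluster posts that still need to be written, in planned order."""
        return [slug for slug in self.planned_clusters if slug not in self.published]


# Hypothetical topic map for the onboarding example.
onboarding = TopicMap(
    pillar="employee-onboarding-guide",
    planned_clusters=[
        "remote-onboarding-checklist",
        "best-onboarding-software",
        "onboarding-welcome-email",
        "new-hire-compliance",
        "onboarding-metrics",
    ],
    published={"remote-onboarding-checklist", "onboarding-welcome-email"},
)

print(f"{onboarding.coverage():.0f}% covered")  # 40% covered
print("Still to write:", onboarding.missing())
```

Keeping the map in code (or in a spreadsheet with the same columns) makes the monthly coverage number reproducible instead of eyeballed.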

My step-by-step workflow to track (and grow) topical authority month over month

Theory is great, but you have deadlines. Here is the actual workflow I use to manage this process. Whether I am writing manually or using an AI article generator to draft the initial structure, the strategic oversight remains the same.

When I’m short on time, I prioritize steps 1, 4, and 7. Those move the needle the most. If you are starting from zero, don’t try to boil the ocean—pick one topic and execute this cycle fully before moving to the next.

Step 1–3: Define the topic boundary and build a simple topic map (pillar + clusters)

First, I stop the random acts of content. I define a topic boundary. If we sell "Employee Onboarding Software," I don’t write about general HR trends yet. I stick to the core.

- Identify the Pillar: Create a "center of gravity" page. Title: The Ultimate Guide to Employee Onboarding Processes.

- Map the Clusters: I sketch a diagram. The pillar is in the center. The spokes are specific, search-intent-driven subtopics:

  - Onboarding checklist for remote employees

  - Best employee onboarding software (comparison)

  - How to write an onboarding welcome email

  - Compliance requirements for new hires

  - Onboarding metrics to track

- Assign Entities: For each article, I list 3-5 entities that must be mentioned (e.g., "I-9 form," "retention rate," "HRIS integration").
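Checking the entity list against a draft can be partly automated. This is a minimal sketch, not a semantic analysis: it assumes plain-text drafts and does literal, case-insensitive phrase matching, so a synonym like "Form I-9" would still count as missing. The `missing_entities` helper and the sample draft are hypothetical.

```python
import re


def missing_entities(text: str, required: list[str]) -> list[str]:
    """Return required entities that never appear in the draft (literal, case-insensitive)."""
    missing = []
    for entity in required:
        # Word boundaries stop short entities from matching inside longer words.
        pattern = r"\b" + re.escape(entity) + r"\b"
        if not re.search(pattern, text, flags=re.IGNORECASE):
            missing.append(entity)
    return missing


draft = (
    "Every new hire completes an I-9 form on day one. "
    "A structured first week also lifts your retention rate."
)
required = ["I-9 form", "retention rate", "HRIS integration"]
print(missing_entities(draft, required))  # ['HRIS integration']
```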

Step 4–6: Improve on-page clarity for humans and machines (headings, entities, schema, internal links)

Once the drafts are in motion, I put on my editor hat. If I can’t summarize a section in one sentence, it’s probably not clear enough for a human or an AI.

- Headings: I ensure H2s and H3s clearly describe the content below them.

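- Schema: I add FAQ schema (FAQPage structured data) to pages that answer direct questions. It makes the question-and-answer structure explicit to any system that parses the page. Don’t expect a guaranteed payoff, though: since 2023, Google shows FAQ rich results only for a narrow set of authoritative government and health sites, and how much structured data influences LLM answers is not publicly documented.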

- Internal Links: This is critical. The pillar page must link to every cluster page, and every cluster page must link back to the pillar. I use exact match or highly descriptive anchor text (e.g., "see our onboarding checklist" rather than "click here").
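The pillar-and-cluster linking rule is easy to verify once you have a link graph. The sketch below assumes you have exported source-to-destination internal links from a crawler and grouped them into a dictionary of outgoing links per page; the function name and the URLs are hypothetical.

```python
def check_hub_and_spoke(pillar: str, clusters: list[str],
                        links: dict[str, set[str]]) -> list[str]:
    """Report breaks in the rule: pillar links to every cluster, every cluster links back."""
    problems = []
    pillar_targets = links.get(pillar, set())
    for page in clusters:
        if page not in pillar_targets:
            problems.append(f"pillar does not link to {page}")
        if pillar not in links.get(page, set()):
            problems.append(f"{page} does not link back to the pillar")
    return problems


# Hypothetical outgoing internal links per page, built from a crawler's link export.
links = {
    "/onboarding-guide": {"/remote-checklist", "/welcome-email"},
    "/remote-checklist": {"/onboarding-guide"},
    "/welcome-email": set(),
    "/onboarding-metrics": {"/onboarding-guide"},
}

for problem in check_hub_and_spoke(
    "/onboarding-guide",
    ["/remote-checklist", "/welcome-email", "/onboarding-metrics"],
    links,
):
    print(problem)
# /welcome-email does not link back to the pillar
# pillar does not link to /onboarding-metrics
```

This only checks that the links exist; whether the anchor text is descriptive still needs a human pass.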

Step 7–9: Measure outcomes (ranking breadth, long-tail impressions, and AI mentions)

Finally, I measure. I don’t just look at the primary keyword rank.

- Check Ranking Breadth: I go to GSC, filter by the page URL, and look at the "Queries" tab. Are we ranking for more queries this month than last? If we went from ranking for 5 terms to 47 terms related to "onboarding," our authority is growing.

- Monitor Impressions: Even if clicks are flat, rising impressions on long-tail queries (e.g., "how to onboard gen z remote") suggest that Google is starting to associate the page with the wider topic.

- AI Spot Check: I ask ChatGPT and Perplexity: "What are the key considerations for employee onboarding?" I look to see if our frameworks or brand name appear. It’s directional, not an exact science, but seeing your brand pop up is a massive win.
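The ranking breadth and long-tail impression checks boil down to comparing two monthly exports for the same page. A minimal sketch, assuming you export the Queries table for the page from the GSC Performance report as CSV each month. The column names ("Top queries", "Impressions") are assumptions, so check the header row of your own export; the four-word long-tail cut-off is an arbitrary choice, not a standard.

```python
import csv
import io


def load_queries(csv_text: str, query_col: str = "Top queries",
                 impr_col: str = "Impressions") -> dict[str, int]:
    """Map each query to its impressions. Column names are assumptions; match your export."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return {row[query_col]: int(row[impr_col]) for row in reader}


def breadth_report(before: dict[str, int], after: dict[str, int],
                   long_tail_words: int = 4) -> dict:
    """Compare query count (ranking breadth) and long-tail impressions between two months."""
    def long_tail_impressions(queries: dict[str, int]) -> int:
        # Queries with at least `long_tail_words` words count as long tail (arbitrary cut-off).
        return sum(n for q, n in queries.items() if len(q.split()) >= long_tail_words)

    return {
        "queries_before": len(before),
        "queries_after": len(after),
        "new_queries": sorted(set(after) - set(before)),
        "long_tail_before": long_tail_impressions(before),
        "long_tail_after": long_tail_impressions(after),
    }


# Hypothetical exports for one pillar page, one month apart.
march_csv = """Top queries,Clicks,Impressions,CTR,Position
employee onboarding,40,1200,3.33%,8.1
onboarding checklist,12,300,4%,11.2
"""
april_csv = """Top queries,Clicks,Impressions,CTR,Position
employee onboarding,42,1250,3.36%,7.9
onboarding checklist,15,340,4.41%,10.4
how to onboard gen z remote,1,90,1.11%,24.5
onboarding welcome email template,3,150,2%,15.0
"""

report = breadth_report(load_queries(march_csv), load_queries(april_csv))
print(report["queries_before"], "->", report["queries_after"], "queries")  # 2 -> 4 queries
print("New:", report["new_queries"])
print("Long-tail impressions:", report["long_tail_before"], "->", report["long_tail_after"])
```

A rising query count with flat clicks is exactly the early signal described above; the list of new queries also hints at which cluster posts to write next.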

Tools and dashboards I use to monitor topical authority (SEO + generative AI)

You don’t need an enterprise budget to track this. In fact, tools don’t create authority—coverage and clarity do. However, having the right data helps.

I rely on a mix of standard SEO tools and newer AI-specific platforms. For production, I use the AI SEO tool Kalema to help plan and structure these clusters, ensuring I don’t miss key entities during the drafting phase. When we need to scale out a massive cluster (like 50 glossary terms), a bulk article generator can be a lifesaver for maintaining consistency without burning out the writing team.

Here is my stack:

Table: beginner-friendly authority dashboard (what I check weekly vs monthly)

| Tool | What I Track | Frequency | Beginner Tip |
|---|---|---|---|
| Google Search Console | Impressions, Ranking Breadth, Indexation | Weekly | Look at the "Pages" report to find unindexed content first. |
| AmICited / Perplexity | Brand Mentions in AI Answers | Monthly | Use incognito mode when spot-checking manually. |
| Screaming Frog (or similar) | Internal Link Counts, Broken Links | Monthly | Sort pages by "Inlinks" to find weakly linked pages. To catch true orphans (pages nothing links to), compare the crawl against your XML sitemap. |
| GA4 | Engagement Time, User Flow | Monthly | Focus on "Key Events" (conversions) over raw views. |
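Because a crawler only discovers pages by following links, a truly orphaned page never appears in the crawl at all. One way to surface those pages is to diff your XML sitemap against the crawl's inlink counts. A minimal sketch, assuming a standard sitemaps.org `urlset` sitemap (not a sitemap index) and an inlink count per URL exported from your crawler; the URLs and helper names are hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> set[str]:
    """Collect every <loc> URL from a standard sitemaps.org XML sitemap."""
    root = ET.fromstring(xml_text)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)}


def find_orphans(sitemap: set[str], inlinks: dict[str, int]) -> list[str]:
    """Sitemap URLs missing from the crawl or crawled with zero internal inlinks."""
    return sorted(url for url in sitemap if inlinks.get(url, 0) == 0)


sitemap_xml = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/onboarding-guide</loc></url>
  <url><loc>https://example.com/remote-onboarding-checklist</loc></url>
  <url><loc>https://example.com/onboarding-metrics</loc></url>
</urlset>"""

# Hypothetical inlink counts per URL from a crawl export.
crawl_inlinks = {
    "https://example.com/onboarding-guide": 14,
    "https://example.com/remote-onboarding-checklist": 3,
}

print(find_orphans(sitemap_urls(sitemap_xml), crawl_inlinks))
# ['https://example.com/onboarding-metrics']
```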

Common mistakes that stall topical authority (and how I fix them)

I’ve made plenty of mistakes in my career. I once launched 50 articles in a single week without linking them together—Google indexed them, then promptly forgot them because they had no structural support. Here are the most common pitfalls I see:

The "One-and-Done" Approach

Symptom: You write one great guide and never touch the topic again.

Fix: Commit to a cluster. Don’t publish the pillar until you have at least 3-4 supporting posts ready to go live with it.

Weak Internal Linking

Symptom: Pages exist in silos. Users (and bots) hit a dead end.

Fix: Every page needs a "Next Step" link. Audit your site specifically for orphan pages and link them back to the nearest relevant pillar.

Ignoring the Long Tail

Symptom: You only track the "money keyword" and get discouraged when it doesn’t move.

Fix: Celebrate the small wins. Ranking for "benefits of X for small business" is the stepping stone to ranking for "X software."

Inconsistent Terminology

Symptom: Calling it "staff onboarding" in one post and "new hire orientation" in another without connecting them.

Fix: Define your entities early. Pick a primary term and stick to it, or explicitly state that the terms are interchangeable in your content.
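Terminology drift is also easy to spot-check across a cluster. This sketch counts literal, case-insensitive occurrences of each variant per post and flags posts that use a variant without ever using the primary term. The post texts, slugs, and helper names are hypothetical.

```python
import re
from collections import Counter


def term_usage(posts: dict[str, str], variants: list[str]) -> dict[str, Counter]:
    """Count case-insensitive, whole-phrase occurrences of each variant in each post."""
    usage = {}
    for slug, text in posts.items():
        counts = Counter()
        for term in variants:
            pattern = r"\b" + re.escape(term) + r"\b"
            hits = len(re.findall(pattern, text, flags=re.IGNORECASE))
            if hits:
                counts[term] = hits
        usage[slug] = counts
    return usage


def off_term_posts(usage: dict[str, Counter], primary: str) -> list[str]:
    """Posts that use some variant of the concept but never the primary term."""
    return sorted(slug for slug, counts in usage.items() if counts and primary not in counts)


posts = {
    "remote-checklist": "Staff onboarding for remote teams starts before day one.",
    "welcome-email": "A warm email sets the tone for new hire orientation week.",
    "metrics": "Track staff onboarding completion and new hire orientation attendance.",
}
usage = term_usage(posts, ["staff onboarding", "new hire orientation"])
print(off_term_posts(usage, primary="staff onboarding"))  # ['welcome-email']
```

A flagged post is a candidate for either switching to the primary term or adding a sentence that states the terms are interchangeable.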

FAQs: tracking authority in ChatGPT, Gemini, Perplexity, and Google

My inbox is often full of these questions from colleagues. Here is the straight talk on what we currently know.

How do LLMs determine whether a source is authoritative?

Nobody outside the AI companies knows the exact mechanics, and it is not about a single third-party score like Domain Authority. Models learn patterns from the vast data they were trained on, and answer engines such as Perplexity also pull in live web pages. The signals that seem to matter are semantic depth (how well you cover the nuances of a topic), entity relationships (how frequently your brand is associated with the topic in other credible texts), and logical consistency. If your content is well-structured, cited by others, and factually consistent, it is more likely to show up in a generated answer.

What metrics can I use to track my topical authority in AI systems?

Since we don’t have a "Search Console" for ChatGPT yet, we use proxies. Start with these three:

- Share of Voice in AI Answers: How often is your brand mentioned in prompted answers?

- Ranking Breadth: Are you ranking for more questions and long-tail queries in Google? (A growing query footprint suggests search engines associate you with the topic as a whole, not just one keyword.)

- Brand Search Volume: Are people searching for "[Your Brand] + [Topic]"? This signals strong user association.
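Share of voice needs a definition before it can be tracked. One simple version: the percentage of logged AI answers that mention each brand at least once. The sketch below assumes you have pasted the answers from repeated runs of the same prompt into a list. The brand names are hypothetical, and plain substring matching will miss misspellings and can over-match short names.

```python
from collections import Counter


def share_of_voice(answers: list[str], brands: list[str]) -> dict[str, float]:
    """Percent of answers mentioning each brand at least once (case-insensitive substring match)."""
    if not answers:
        return {brand: 0.0 for brand in brands}
    mentions = Counter()
    for answer in answers:
        text = answer.lower()
        for brand in brands:
            if brand.lower() in text:
                mentions[brand] += 1
    return {brand: 100.0 * mentions[brand] / len(answers) for brand in brands}


# Hypothetical answers logged from four runs of "What are the best employee onboarding tools?"
logged_answers = [
    "Popular options include Rival and PeopleFlow.",
    "Rival, Acme HR and PeopleFlow all offer onboarding checklists.",
    "Most teams start with Rival or PeopleFlow.",
    "PeopleFlow is often recommended for small teams.",
]

for brand, pct in share_of_voice(logged_answers, ["Acme HR", "Rival", "PeopleFlow"]).items():
    print(f"{brand}: {pct:.0f}%")  # Acme HR: 25%, Rival: 75%, PeopleFlow: 100%
```

Because answers vary between runs, the trend across months matters more than any single percentage.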

Are there tools to measure visibility in ChatGPT, Gemini, or Perplexity?

Yes, tools like AmICited and various "Generative Engine Optimization" (GEO) trackers are emerging. These platforms automate the process of prompting LLMs with relevant queries to see if your brand is cited. However, treat these as directional. LLM answers are probabilistic—they change. Use these tools to spot trends (e.g., "we are appearing more often") rather than absolute truth.

How can I see growth in topical authority in search engine data?

You don’t need fancy tools to see early signals. Go to Google Search Console > Performance > Search Results. Click on "Pages" and select your pillar page. Then switch to the "Queries" tab. If the list of queries ranking (even at position 50) is growing longer every month, your topical authority is increasing. You are capturing a wider surface area of the topic.

Why is authority tracking important in AI for businesses?

It comes down to trust and reach. As search behaviors shift toward conversational answers, being the "cited source" is the new "ranking #1." It impacts brand perception, helps support teams (when AI bots read your docs to answer users), and ensures your product is part of the consideration set before a user ever visits your website.
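An FAQ section like this one is what the FAQ schema step in the workflow refers to. Here is a minimal sketch that turns question-and-answer pairs into schema.org `FAQPage` JSON-LD using only the standard library; the helper name is hypothetical, and the answers are shortened versions of the ones above.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


faqs = [
    ("What metrics can I use to track my topical authority in AI systems?",
     "Share of voice in AI answers, ranking breadth in Google, and brand search volume."),
    ("How can I see growth in topical authority in search engine data?",
     "Filter the Search Console Performance report to your pillar page and check "
     "whether the Queries list grows month over month."),
]

# Paste the output into a <script type="application/ld+json"> tag on the page.
print(faq_jsonld(faqs))
```

Validate the output with Google's Rich Results Test or the Schema Markup Validator before publishing.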

Conclusion: my 3-point recap and next actions to build topical authority

We have covered a lot, but building topical authority doesn’t have to be overwhelming. If you take nothing else away from this guide, remember these three points:

- Authority is structure, not just volume. You can’t spam your way to trust. You must map your topics logically.

- Metrics must be unified. Stop looking at SEO and AI in silos. Use Ranking Breadth and LLM Citations together to get the full picture.

- Consistency wins. A regular cadence of updating pillar pages and adding cluster content beats a sporadic viral hit every time.

Your next actions for this week:

- Pick one core topic you want to own and draw a simple pillar-cluster map.

- Audit the internal links for that cluster—are there any orphan pages? Link them up.

- Set up a simple "Ranking Breadth" check in GSC for your top 3 pages.

- Run a manual test in ChatGPT/Perplexity for your top keywords and document if you are mentioned.

Building this level of structured, high-quality content at scale is exactly where SEO content generator tools like Kalema shine—not by replacing your strategy, but by ensuring every piece you publish adheres to the strict structural standards that build authority.