Introduction: AI or manual—how I approach meta descriptions in 2026

I still remember the first time I watched a technically perfect page flatline. The keyword research was solid, the content was deep, and the backlinks were growing. But when I checked the SERP, the snippet was a disaster: a truncated sentence cut off mid-thought, pulled randomly from the footer. It was bland, confusing, and completely invisible to the user.

That was a wake-up call. In 2026, the tension isn’t just about writing a good description; it’s about battling for attention in a search environment where Google rewrites snippets constantly and AI Overviews are physically pushing organic results down the page.

The solution isn’t to hand-write thousands of descriptions until you burn out, nor is it to let a basic AI generator flood your site with generic fluff. The winning approach—the one I use to protect click-through rates (CTR)—is a hybrid workflow. It leverages automation for scale and human editorial judgment for intent. In this guide, I’ll walk you through exactly how I audit, generate, and refine meta descriptions to win clicks when it matters most.

Do meta descriptions still matter when Google rewrites them and AI Overviews steal clicks?

If you hang around SEO forums long enough, you’ll hear the argument that meta descriptions are dead. The logic usually points to two massive shifts in how Google presents results. First, Google rewrites approximately 62–70% of meta descriptions to better match the user’s specific query. Second, the introduction of AI Overviews has significantly compressed organic visibility.

The data is undeniably tough. We’ve seen Position #1 CTR drop from historical highs of ~7.3% down to ~2.6% when an AI Overview is present. On informational queries, where the answer is provided directly on the SERP, click-through rates have plummeted from ~1.41% to ~0.64%.

So, why do I still obsess over them? Because on the pages that actually drive revenue, you cannot afford to cede control. Even if Google rewrites your snippet 62–70% of the time, that leaves roughly 30–38% of the time where your copy is the only thing standing between a click on your result and a click on your competitor’s. Furthermore, a well-optimized description often influences the rewrite itself, giving Google better text to pull from than your navigation menu.

In practice, I don’t write custom descriptions for every single tag page or archive. I prioritize relentlessly. My homepage, core service pages, and top-performing blog posts get the white-glove treatment. Everything else gets a systematized, automated approach. It’s about being strategic, not obsessive.

What meta descriptions control (and what they don’t)

Let’s be clear about the mechanics: meta descriptions are not a direct ranking factor. Google doesn’t crawl them to decide if you rank #1 or #10. However, I treat them exactly like ad copy for my organic listing. They control the promise of the click.

When your description matches the user’s search intent perfectly, it improves your CTR. A higher CTR sends a strong engagement signal to search engines. While you can’t control whether Google decides to rewrite your snippet based on a random long-tail query, you can control the default message for your primary keywords. If you leave it blank, you are essentially telling Google, “You figure it out.” That’s a risk I’m not willing to take on money pages.

How AI Overviews change the CTR game

AI Overviews have raised the bar for specificity. When an AI summarizes the answer at the top of the page, the user no longer needs to click for basic definitions or simple lists. This means your meta description can no longer just say, “Learn about X.” It has to offer something the AI didn’t.

Given this, here’s what I optimize for: depth, perspective, and utility. If the AI Overview gives the “what,” my meta description promises the “how” or the “why.” Brands that are featured inside the AI overviews themselves can see modest CTR lifts, but for the standard organic links below, the copy must be punchier and more benefit-driven than ever before.

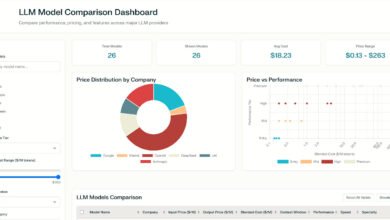

What a meta description generator actually does (and when I’d use one)

For those new to the tool stack, a meta description generator is software that drafts snippet text based on your page content, keywords, or a specific prompt. In the past, these were simple rule-based scripts. Today, they are sophisticated AI tools that can mimic brand voice and adhere to strict character limits.

But I don’t use them blindly. If I’m staring at a spreadsheet of 500 product URLs, I’m not going to write those by hand—that’s a bad use of my time. I use generators to scale content production, standardize format, and ensure every page at least has a baseline optimized tag. It’s also incredibly useful for A/B testing; I can ask the AI to generate three distinct variations (e.g., one focused on price, one on quality, one on speed) to see which angle resonates.

However, I draw a hard line at high-stakes pages. If a page represents a significant portion of my revenue or requires nuanced compliance language (like in finance or health), I might use a generator for a first draft, but a human always reviews the final output.

Best use cases for businesses (ecommerce, SaaS, local services)

In my experience, generators shine in these specific scenarios:

- Ecommerce Product Pages: You have 2,000 SKUs of screwdrivers. A generator can pull attributes (size, material, brand) into a compelling pattern: “Shop durable [Brand] screwdrivers in [Size]. Perfectly weighted for [Use Case]. Fast shipping available.”

- Local Service Pages: If you serve 50 cities, you need unique descriptions for each. A generator handles the location swaps flawlessly: “Expert roof repair in [City]. Licensed, insured, and available 24/7 for emergency leaks. Get your free estimate.”

- Large Blog Archives: When auditing a site with hundreds of old posts missing metadata, a generator can rapidly fill the gaps so every page has at least a baseline description.
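
To make the pattern idea concrete, here is a minimal Python sketch of filling the ecommerce template from product attributes. The field names (`brand`, `size`, `use_case`) and the sample rows are assumptions for illustration, not a real product feed or any particular generator's API.

```python
# Hypothetical template mirroring the ecommerce pattern above.
TEMPLATE = (
    "Shop durable {brand} screwdrivers in {size}. "
    "Perfectly weighted for {use_case}. Fast shipping available."
)


def fill_description(product: dict) -> str:
    """Fill the template from one product row.

    Raises KeyError if a required field is missing, so gaps in the
    feed surface early instead of producing a broken snippet.
    """
    return TEMPLATE.format(**product)


# Illustrative rows only (made-up products).
products = [
    {"brand": "Acme", "size": "6 mm", "use_case": "cabinet work"},
    {"brand": "Acme", "size": "8 mm", "use_case": "deck building"},
]

descriptions = [fill_description(p) for p in products]
for text in descriptions:
    print(len(text), text)
```

Printing the length next to each draft makes it easy to spot rows that run long once real attribute values are swapped in.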

When automation backfires

I’ve seen this happen more times than I care to admit: a site owner automates their metadata, and suddenly their impressions tank. Why? Because the AI generated nearly identical descriptions for ten similar pages. Google saw them as duplicates and filtered them out of the main index.

Another common pitfall is intent mismatch. The AI might see the keyword “best running shoes” and write a description about selling shoes, when the page is actually an informational review guide. This mismatch leads to high bounce rates and eventually, lower rankings. Automation without supervision is just scaling mediocrity.
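
One way to catch the duplication problem before publishing is a quick similarity pass over the drafts. The sketch below uses Python's standard `difflib.SequenceMatcher`; the 0.9 threshold, the URLs, and the sample descriptions are my own assumptions, and the score is only a rough text-similarity measure, not how Google detects duplicates.

```python
from difflib import SequenceMatcher
from itertools import combinations


def find_near_duplicates(descriptions: dict, threshold: float = 0.9) -> list:
    """Return (url_a, url_b, ratio) for each pair at or above the threshold."""
    flagged = []
    for (url_a, text_a), (url_b, text_b) in combinations(descriptions.items(), 2):
        ratio = SequenceMatcher(None, text_a.lower(), text_b.lower()).ratio()
        if ratio >= threshold:
            flagged.append((url_a, url_b, round(ratio, 2)))
    return flagged


# Made-up drafts: the first two differ by a single word.
drafts = {
    "/running-shoes-men": "Discover our comprehensive range of running shoes. Shop the best styles today.",
    "/running-shoes-women": "Discover our comprehensive range of running shoes. Shop the top styles today.",
    "/trail-shoe-reviews": "Compare trail running shoes side by side. See weights, prices, and our top pick.",
}

flagged = find_near_duplicates(drafts)
for url_a, url_b, ratio in flagged:
    print(f"{url_a} and {url_b} look alike (similarity {ratio})")
```

Comparing every pair is fine for a few hundred pages. For thousands, I would group pages by template or category first and compare within each group.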

AI vs manual vs Google-generated snippets: what the data says (and how I interpret it)

There is a prevailing myth that “AI is better at everything now.” When it comes to CTR, the data suggests otherwise. While results vary heavily by industry, studies generally show that human-written descriptions still hold the edge in earning clicks.

According to recent industry data, manually written meta descriptions have been shown to increase CTR by ~16.25%. In contrast, raw ChatGPT-4 generated descriptions, written without significant prompt engineering, actually decreased CTR by ~21.5% in some tests, likely due to their generic, “robot-like” phrasing. Interestingly, Google-generated snippets (where Google ignores your tag and writes its own) resulted in a ~10.5% lift compared to bad manual tags.

This doesn’t mean AI is useless—it means I don’t publish AI drafts unchecked. The “generic AI” voice uses fluff words like “delve,” “comprehensive,” and “unlock,” which users have learned to ignore. Humans are better at injecting the specific, verifiable details that trigger a click.

Quick comparison table (CTR impact, pros/cons, best use)

If you remember one thing, remember this: Manual effort is for the money pages; AI is for the massive middle.

| Method | CTR Impact (Est.) | Strengths | Risks | Best For |
|---|---|---|---|---|
| Manual / Human-Edited | ~ +16.25% | High specificity, emotional hook, perfect intent match. | Time-consuming, hard to scale. | Homepage, Landing Pages, Core Services. |
| Standard AI (Raw) | ~ -21.5% | Instant scale, consistent formatting. | Generic phrasing, hallucinated claims, duplication. | Bulk product pages, archive fills. |
| Google Auto-Generated | ~ +10.5% | Zero effort, matches query terms exactly. | No control over messaging, can look broken. | Low-value pages, long-tail queries. |

Why a human edit still matters (specificity, intent match, trust signals)

The reason manual edits win is specificity. An AI might write: “We offer the best plumbing services in the area with great prices.” A human editor—specifically me—would look at that and say, “That says nothing.”

I would rewrite it to: “24/7 emergency plumbing in Austin. Arriving in under 60 mins. Licensed, bonded, and $0 dispatch fee on weekends.”

See the difference? The human edit adds trust signals (licensed), specifics (Austin, 60 mins), and value propositions ($0 fee). AI struggles to prioritize these details unless heavily prompted. That layer of editorial judgment is what captures the click.

My hybrid workflow: using a meta description generator + human review to raise CTR

This is the exact process I use. It balances the speed of tools like Kalema’s AI article writer with the precision of human SEO review.

Step 1: Prioritize pages (where CTR improvements actually matter)

I start in Google Search Console. I don’t optimize blindly. I filter for pages that have:

- High Impressions (>1,000/mo) but Low CTR (<1%).

- Commercial Intent (words like “price,” “service,” “buy”).

- Rankings in positions 2–8. These are the “striking distance” keywords where a better snippet can jump you up a spot or steal a click from the #1 result.

If a page is ranking #50, a new meta description won’t save it. I focus my energy where the traffic is already looking.
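
The impression, CTR, and position filters above are easy to apply to an exported performance report. Here is a rough Python sketch, assuming each row has already been loaded into a dictionary with hypothetical keys `page`, `impressions`, `clicks`, and `position` (a real Search Console export uses its own column names, so adjust accordingly). The commercial-intent check is left out because it needs query data and human judgment.

```python
def pick_candidates(rows, min_impressions=1000, max_ctr=0.01, pos_range=(2.0, 8.0)):
    """Keep pages with many impressions, low CTR, and a striking-distance position."""
    picked = []
    for row in rows:
        ctr = row["clicks"] / row["impressions"] if row["impressions"] else 0.0
        in_range = pos_range[0] <= row["position"] <= pos_range[1]
        if row["impressions"] > min_impressions and ctr < max_ctr and in_range:
            picked.append({**row, "ctr": ctr})
    # Most-seen pages first: a better snippet has the most to gain there.
    return sorted(picked, key=lambda r: r["impressions"], reverse=True)


# Made-up rows for illustration.
rows = [
    {"page": "/pricing", "impressions": 5200, "clicks": 31, "position": 4.2},
    {"page": "/blog/old-post", "impressions": 300, "clicks": 1, "position": 6.0},
    {"page": "/services", "impressions": 2400, "clicks": 90, "position": 3.1},
    {"page": "/guide", "impressions": 9000, "clicks": 40, "position": 51.0},
    {"page": "/plans", "impressions": 8000, "clicks": 20, "position": 7.5},
]

candidates = pick_candidates(rows)
for row in candidates:
    print(f'{row["page"]}: {row["impressions"]} impressions, CTR {row["ctr"]:.2%}')
```

With these sample rows, only `/plans` and `/pricing` survive: the others fail on impressions, CTR, or position respectively.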

Step 2: Lock the search intent and the page promise

Before I generate anything, I define the angle. If the query is “how to fix a leaky faucet,” the intent is informational—the user wants a tutorial, not a sales pitch. If the query is “plumber near me,” the intent is urgency and trust.

I ensure my description aligns with the H1 and the first paragraph of the page. If the snippet promises a “Step-by-Step Guide” and the page is a product category, Google will rewrite the snippet, and the user will bounce.

Step 3: Generate 3–5 options (then edit like an editor)

I never ask for just one description. I ask for variants. I might prompt the generator for:

- Variant A (Benefit-led): Focus on the outcome (e.g., “Save money…”).

- Variant B (Proof-led): Focus on authority (e.g., “Rated 4.9 stars…”).

- Variant C (Question-led): Focus on the problem (e.g., “Struggling with…?”).

Then I edit. I cut vague adjectives like “amazing” or “leading.” I front-load the most important keyword because mobile screens cut off descriptions early. I treat the character limit (approx 155 chars) as a guideline, not a law, but I ensure the hook is in the first 100 characters.
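
The length and front-loading rules are simple enough to check mechanically while editing. This is a minimal Python sketch under my own assumptions: the function name and defaults are hypothetical, and counting characters is only an approximation, since the actual cut-off on a results page depends on rendered width rather than a fixed character count.

```python
def check_length(description: str, keyword: str,
                 soft_limit: int = 155, hook_window: int = 100) -> dict:
    """Report overall length and whether the keyword sits inside the hook window."""
    position = description.lower().find(keyword.lower())
    keyword_end = position + len(keyword)
    return {
        "length": len(description),
        "over_soft_limit": len(description) > soft_limit,
        # True only if the whole keyword appears before the hook window ends.
        "keyword_in_hook": position >= 0 and keyword_end <= hook_window,
        "hook": description[:hook_window],
    }


draft = (
    "24/7 emergency plumbing in Austin. Arriving in under 60 mins. "
    "Licensed, bonded, and $0 dispatch fee on weekends."
)
report = check_length(draft, "emergency plumbing")
print(report)
```

I treat `over_soft_limit` as a warning rather than a failure, in line with handling the character limit as a guideline.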

Step 4: Publish + validate in Google Search Console

SEO testing isn’t a lab experiment, so I focus on directional wins. I document the change: “Date: Oct 12. Changed snippet from Generic to Benefit-focused.” Then I wait 2–3 weeks.

I look at the Average CTR for that specific page in GSC. Did it tick up? Did impressions stay stable? If CTR drops, I revert or try a new angle. It’s a game of incremental gains.
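
The before/after comparison boils down to a little arithmetic: CTR is clicks divided by impressions, and the relative change tells you how much the new snippet moved it. A minimal Python sketch follows, assuming you have copied clicks and impressions for the same page over two equal-length periods; the numbers are made up.

```python
def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate as a fraction (0.01 means 1%)."""
    return clicks / impressions if impressions else 0.0


def compare_periods(before: dict, after: dict) -> dict:
    """Compare CTR and impressions between two periods for one page."""
    ctr_before = ctr(before["clicks"], before["impressions"])
    ctr_after = ctr(after["clicks"], after["impressions"])
    return {
        "ctr_before": ctr_before,
        "ctr_after": ctr_after,
        # Relative change: 0.25 means CTR rose by a quarter of its old value.
        "relative_change": (ctr_after - ctr_before) / ctr_before if ctr_before else None,
        "impressions_change": (
            (after["impressions"] - before["impressions"]) / before["impressions"]
            if before["impressions"] else None
        ),
    }


# Illustrative numbers only.
result = compare_periods(
    before={"clicks": 40, "impressions": 5000},
    after={"clicks": 55, "impressions": 5100},
)
print(f'CTR {result["ctr_before"]:.2%} -> {result["ctr_after"]:.2%}')
print(f'Relative change: {result["relative_change"]:+.1%}')
```

Checking `impressions_change` alongside CTR matters because a CTR jump on a page whose impressions collapsed usually reflects a ranking shift, not a better snippet.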

QA checklist table: what “publish-ready” looks like

I keep this checklist next to my CMS when I’m moving fast to ensure quality control.

| Criteria | Why it matters | Pass/Fail |
|---|---|---|
| Front-loaded Keyword | Ensures relevance even if truncated on mobile. | [ ] |
| Active Verb CTA | “Shop,” “Learn,” “Discover” drives action. | [ ] |
| Specific Detail | Numbers, prices, or locations prove it’s real. | [ ] |
| Intent Match | Does the snippet promise exactly what the page delivers? | [ ] |
| Unique | Is this description different from other pages? | [ ] |
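
Most of this checklist can be pre-screened in code before a human looks at it. Below is a rough Python sketch with simplifying assumptions of my own: the verb list is a hypothetical starting set, "specific detail" is approximated as "contains a digit," and uniqueness is an exact-match check. Intent match is deliberately left out because it needs someone to read the page.

```python
import re

# Hypothetical starter list; extend it with the verbs your pages actually use.
CTA_VERBS = ("shop", "learn", "discover", "get", "read", "call", "see", "book")


def qa_check(description: str, keyword: str, other_descriptions: list) -> dict:
    """Run the automatable checklist rows; True means the row passes."""
    text = description.lower()
    return {
        "front_loaded_keyword": keyword.lower() in text[:100],
        "active_verb_cta": any(re.search(rf"\b{verb}\b", text) for verb in CTA_VERBS),
        # Crude proxy: a digit usually signals a number, price, or timeframe.
        "specific_detail": bool(re.search(r"\d", description)),
        "unique": description not in other_descriptions,
    }


existing = ["Learn how to clean white sneakers with this guide."]

good = qa_check(
    "Shop waterproof hiking boots from $89. Free returns for 60 days.",
    "hiking boots",
    existing,
)
vague = qa_check(
    "We offer the best plumbing services in the area with great prices.",
    "plumbing services",
    existing,
)
print(good)
print(vague)
```

The vague plumbing draft fails the CTA and specific-detail rows, which is exactly the kind of copy I want routed back for a human edit.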

Choosing a meta description generator: features that matter (and what I’d ignore)

If you’re in the market for a tool to help with this, it’s easy to get distracted by flashy features. I view tools like Kalema not just as writers, but as content intelligence platforms. I need a tool that understands intent, not just one that strings words together.

When evaluating a generator, I look for control. If I can’t control the tone and I can’t prevent duplicates, I skip it. Here is my pragmatic buyer’s guide.

Must-have features (beginner-friendly shortlist)

- Intent Selection: The ability to specify if the output should be informational, transactional, or navigational.

- Bulk Generation: Can I upload a CSV of 100 URLs and get 100 drafts?

- Tone Controls: Can I set it to “Professional,” “Urgent,” or “Witty”?

- Uniqueness Check: Does it flag if I’m using the exact same phrase on 10 pages?

- SERP Preview: A visualizer that shows me where the text cuts off on mobile vs. desktop.

Nice-to-haves (when you’re ready to scale)

If you manage thousands of pages, you might look for emerging tech like MetaSynth or similar retrieval-augmented generation (RAG) models. These advanced systems use performance feedback loops—meaning they look at historical CTR data to inform the next batch of descriptions. It’s the difference between a writer guessing what works and a marketer knowing what works.

Templates and examples I actually use (with prompts you can copy)

Let’s get practical. You can plug these formulas directly into your AI content writer or use them for manual drafting.

3 high-performing formulas (benefit + proof + next step)

- The Problem-Solver: “Stop struggling with [Problem]. Get [Solution] that [Benefit] in under [Timeframe]. Trusted by [Number] users. [CTA].”

- The E-commerce Spec: “Shop [Product Name] at [Brand]. Featuring [Feature 1] and [Feature 2]. Free shipping on orders over $[Price]. [CTA].”

- The Local Authority: “[City]’s top-rated [Service]. We offer [Differentiator] with [Guarantee]. Available [Hours]. Call for a free quote today.”

Worked example: 3 generated options + my final edited version

Scenario: A blog post about “How to Clean White Sneakers.”

AI Option 1: “Learn how to clean white sneakers with this guide. We cover everything you need to know about keeping shoes clean.” (Too generic, boring).

AI Option 2: “Best white sneaker cleaning tips 2026. Discover the secrets to bright shoes using household items like baking soda.” (Better, mentions specific item).

AI Option 3: “Don’t ruin your kicks! Our step-by-step tutorial shows you how to scrub white canvas and leather without yellowing.” (Good emotional hook).

My Final Edit (Hybrid):

“Make your white sneakers look new again in 10 minutes. Use our baking soda hack to remove stains from canvas and leather without yellowing. See the before/after photos.”

Why I chose this: I combined the speed (10 mins), the method (baking soda), the safety (without yellowing), and a visual promise (before/after photos).

Common mistakes (and the fixes I recommend)

I see smart people make these mistakes constantly. If you’re short on time, fixing the first two will give you the biggest lift.

Mistake-to-fix list (7 items)

- Mistake: Duplication across pages.
  Fix: Use a canonical tag if pages are identical, or rewrite descriptions to highlight one unique attribute (e.g., color or year) per page.

- Mistake: Keyword Stuffing.
  Fix: Write for the human eye. Use the keyword once near the beginning, then focus on readability.

- Mistake: Ignoring Search Intent.
  Fix: Google your keyword. If the top results are guides, don’t write a sales pitch. Match the SERP type.

- Mistake: Missing a Call-to-Action.
  Fix: Always end with a verb: “Read more,” “Shop now,” “Get the checklist.”

- Mistake: Being Vague.
  Fix: Swap “great prices” for “starting at $19.” Specificity sells.

- Mistake: Forgetting Mobile Truncation.
  Fix: Keep the core value prop in the first 120 characters so it survives the mobile cut.

- Mistake: Making Unprovable Claims.
  Fix: Avoid “Best ever.” Use “Rated 5 stars” or “Award-winning” if true. Trust is fragile.

FAQs + wrap-up: what I’d do next if I were starting today

FAQ: Do meta descriptions still matter if Google often rewrites them?

Yes, absolutely. Even with a rewrite rate of 62–70%, you still control the snippet for a significant portion of traffic. More importantly, a clear meta description helps Google understand your page context, which can improve the quality of the rewrite if it happens. I treat them like guardrails for the search engine.

FAQ: Should I use AI to generate all my meta descriptions?

I wouldn’t use it for all of them blindly. My rule of thumb is: hand-review the top 20% of your pages that drive revenue. For the bottom 80% (archives, low-traffic tags), AI tools are excellent for getting the job done efficiently. It’s a resource allocation game.

FAQ: How do AI Overviews impact click-through rates?

They generally lower organic CTR, with Position #1 sometimes dropping from ~7.3% to ~2.6%. This means your snippet needs to be more specific than the AI summary to earn a click. You have to offer the deep dive or the unique data point the summary missed.

FAQ: Are there AI tools that optimize meta descriptions based on real performance feedback?

Yes, this is where the industry is heading. Models like MetaSynth are experimenting with using CTR data as a feedback loop to refine generation. While it’s still an emerging tech, tools that integrate performance data into their content intelligence are becoming the new standard.

If I were starting today, here is my 3-step action plan:

- Audit: Go to Search Console and find your top 10 pages with high impressions but low CTR.

- Hybrid Rewrite: Use a generator to create 3 variants for those pages, then manually edit them to add one specific number or fact.

- Measure: Check back in 3 weeks. If CTR goes up, roll that strategy out to your next 20 pages.

The goal isn’t to be perfect; it’s to beat the auto-generated snippet Google shows when your competitor leaves the tag blank. Get the descriptions live, measure the results, and keep iterating.