The Top 10 Technical Errors That Silently Kill Your Site Rankings (Technical SEO Mistakes)

I’ve watched a site lose 40% of its traffic immediately after a redesign, even though the new pages looked cleaner, loaded faster for users, and had better copy. It’s a gut-wrenching moment for any marketing manager. The team panicked, blaming the content or the keyword strategy. But the real issue wasn’t visible on the screen—it was hidden in the code.

In that specific case, a misconfigured canonical tag was telling Google to ignore the new pages. It was a five-minute fix, but it cost them two weeks of revenue. This is the reality of technical SEO in 2026. Search engines are sophisticated, but they still rely on specific signals to crawl, index, and rank your content. If those signals are blocked, confused, or contradictory, your content might as well not exist.

This guide isn’t about chasing algorithms. It’s about the foundational hygiene that intermediate SEOs and growth managers need to audit today. We’ll look at a proven diagnosis workflow, the top 10 mistakes I see tanking rankings right now, and how to fix them efficiently.

How I diagnose technical SEO mistakes: a beginner-friendly workflow (with a checklist table)

When rankings drop or indexing stalls, the temptation is to start guessing. Is it the content? Is it backlinks? In my experience, random checking wastes time. I use a strict order of operations: Crawl → Index → Render → Rank.

If Google can’t crawl the page, it can’t index it. If it can’t render the JavaScript, it can’t see the content. Below is the exact logical flow I use to isolate issues. This system prevents you from fixing meta tags on a page that Google isn’t even allowed to visit.

A pre-publish technical QA process is critical, especially when you publish at scale, for example with an automated blog generator. Even high-quality automated content won’t perform if the technical pipes are clogged.

| Symptom | Likely Cause | Where to Check | Typical Fix |
|---|---|---|---|
| Page not in Google | Crawl block or Noindex | GSC URL Inspection / robots.txt | Unblock via robots.txt or remove noindex tag |
| Rankings dropped significantly | Mobile parity or Redirects | GSC Comparison / Screaming Frog | Fix redirect chains or mobile content gaps |
| High impressions, low clicks | Bad Schema or Poor Title | Rich Results Test / SERP Preview | Validate JSON-LD markup |
| GSC says "Crawled – not indexed" | Quality or Duplication | Siteliner / Content Audit | Consolidate thin pages or improve uniqueness |

Step 1: Confirm what Google can crawl (before I look at rankings)

Before I worry about keywords, I ask: Can Googlebot physically reach the URL? Crawling is the discovery phase; indexing is the filing phase. If the door is locked (crawling blocked), nothing else matters. I start by checking the Crawl Stats report in Google Search Console (GSC) to see if Google is encountering 404s or server errors (5xx). Then, I check robots.txt to ensure we aren’t accidentally disallowing critical folders.
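To make the robots.txt check repeatable, a short script can help. This is a minimal sketch using Python's standard `urllib.robotparser`; the sample robots.txt and the example.com URLs are assumptions for illustration. The standard-library parser does simple prefix matching and does not replicate every rule Googlebot applies (wildcards, for instance), so I'd treat it as a first pass and confirm the result in GSC.

```python
from urllib.robotparser import RobotFileParser

def is_crawlable(robots_txt: str, url: str, user_agent: str = "Googlebot") -> bool:
    """Return True if the given robots.txt text allows user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)

# Hypothetical robots.txt with a leftover block on a public folder.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /staging/
Disallow: /blog/
"""

for path in ("/blog/technical-seo/", "/pricing/"):
    url = "https://example.com" + path
    status = "crawlable" if is_crawlable(SAMPLE_ROBOTS, url) else "BLOCKED"
    print(url, status)
```

In practice I'd feed it the live robots.txt text and the list of top revenue URLs rather than hard-coded samples.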

Step 2: Validate indexability signals (robots, meta robots, canonicals)

Once I know Google can visit, I check if we are telling them to stay away. A common issue is conflicting signals. For example, I often find paginated pages like /blog/?page=2 that carry a noindex meta tag but are also disallowed in robots.txt, so Google never crawls the page and never sees the noindex. Or worse, a canonical tag points to a different URL entirely. I use the URL Inspection tool to confirm exactly how Google interprets these signals live.
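The same signals can be pulled out of a page's HTML with a few lines of code. The sketch below uses Python's built-in `html.parser`; the class name, function name, and sample markup are my own illustrations, and it only reads the raw HTML, so it will not catch signals injected by JavaScript or sent in HTTP headers.

```python
from html.parser import HTMLParser

class IndexSignalParser(HTMLParser):
    """Collect meta robots directives and the canonical URL from an HTML document."""

    def __init__(self):
        super().__init__()
        self.robots = []
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            content = attrs.get("content") or ""
            self.robots += [d.strip().lower() for d in content.split(",") if d.strip()]
        elif tag == "link" and (attrs.get("rel") or "").lower() == "canonical":
            self.canonical = attrs.get("href")

def audit_index_signals(html: str, page_url: str) -> list[str]:
    """Return a list of human-readable indexability issues for one page."""
    parser = IndexSignalParser()
    parser.feed(html)
    issues = []
    if "noindex" in parser.robots:
        issues.append("meta robots contains noindex")
    if parser.canonical is None:
        issues.append("no canonical tag")
    elif parser.canonical != page_url:
        issues.append(f"canonical points elsewhere: {parser.canonical}")
    return issues

# Hypothetical page that is both noindexed and canonicalized to the homepage.
SAMPLE_HTML = (
    '<html><head><meta name="robots" content="noindex, follow">'
    '<link rel="canonical" href="https://example.com/"></head></html>'
)
print(audit_index_signals(SAMPLE_HTML, "https://example.com/blog/post/"))
```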

Step 3: Check mobile-first indexing parity

Most beginners don’t think to compare mobile HTML vs. desktop HTML—doing this once can save months of frustration. Since Google uses mobile-first indexing, the mobile version is your site. If your desktop site has robust structured data and internal links, but your mobile site hides them to "save space," Google doesn’t see them. Parity issues are responsible for 40–60% of ranking drops I investigate.

Step 4: Measure Core Web Vitals (including INP) and prioritize fixes by revenue pages

Core Web Vitals aren’t just vanity metrics; they are tie-breakers. In March 2024, Interaction to Next Paint (INP) replaced First Input Delay (FID) as a Core Web Vital. INP measures responsiveness throughout the entire life of the page, not just the first click. I look at the Core Web Vitals report in GSC. I don’t try to fix every URL at once; I start with the page templates used by our top 10 revenue-generating pages. With only 39% of sites reportedly achieving ‘Good’ scores, fixing this gives us a distinct advantage.
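If you collect your own INP measurements, grouping them by template makes the prioritization concrete. The sketch below rates each template at the 75th percentile, which is the percentile Google uses to assess Core Web Vitals, against the published INP thresholds of 200 ms and 500 ms. The sample numbers and template names are made up, and the nearest-rank percentile method is my simplifying assumption, not necessarily how Google's field data is computed.

```python
import math

GOOD_MS, POOR_MS = 200, 500  # INP thresholds: good <= 200 ms, poor > 500 ms

def p75(samples: list[float]) -> float:
    """75th percentile using the nearest-rank method (an assumed simplification)."""
    ordered = sorted(samples)
    return ordered[math.ceil(0.75 * len(ordered)) - 1]

def rate_inp(value_ms: float) -> str:
    """Classify an INP value into Google's three buckets."""
    if value_ms <= GOOD_MS:
        return "good"
    if value_ms <= POOR_MS:
        return "needs improvement"
    return "poor"

# Hypothetical INP samples in milliseconds, grouped by page template.
samples_by_template = {
    "product": [80, 90, 120, 150, 190, 240, 310, 620],
    "blog": [40, 60, 70, 90, 110, 130, 150, 180],
}
report = {name: rate_inp(p75(values)) for name, values in samples_by_template.items()}
print(report)
```

A template rated anything other than "good" goes to the top of the list if it also serves revenue pages.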

Step 5: Verify structured data and SERP eligibility

Schema markup helps Google understand entities (like products, events, or authors). It doesn’t guarantee a ranking boost, but it increases eligibility for Rich Results (stars, prices, FAQ snippets), which drives CTR. I stick to the basics first: Organization and Breadcrumb schema. I validate these using the Rich Results Test tool to ensure there are no syntax errors preventing Google from parsing the data.

Step 6: Review internal links, orphan pages, and crawl waste

Internal links are the highways Google travels to find new content. If a page has no internal links pointing to it, it’s an "orphan"—effectively invisible. Conversely, generating thousands of low-value URLs (like faceted navigation filters) wastes crawl budget. Log file analysis shows that 40–50% of crawl budget is often wasted on these junk pages. I use a site crawler to visualize the site architecture and ensure authority flows to important pages.

The Top 10 technical SEO mistakes that silently kill rankings (and how I fix each one)

Now that we have a workflow, let’s look at the specific errors that cause these problems. These are the "silent killers": issues that don’t always break the user experience but quietly wreck search performance.

| Mistake | Primary Symptom | Fastest Check |
|---|---|---|
| Blocking Pages | Zero traffic / De-indexed | GSC robots.txt report |
| Canonical Errors | Wrong page ranks | GSC URL Inspection |
| Redirect Chains | Slow load / Crawl waste | Screaming Frog |
| JS Rendering | Content not indexed | Live Test (View Rendered Source) |

1) Accidentally blocking important pages (robots.txt, noindex, x-robots-tag)

What it is: You inadvertently tell Google not to look at your most important content. This often happens when developers push a staging site (which should be blocked) to production and forget to remove the blocks.

Why it hurts: If Google can’t crawl it, you don’t exist.

How to detect it: Use the GSC URL Inspection tool. If it says "Crawling allowed? No: blocked by robots.txt," you have a problem. Also, check for the x-robots-tag in the HTTP header, which is invisible in the HTML source code.

How to fix it:

- Open your robots.txt file (usually domain.com/robots.txt).

- Ensure there are no Disallow: / rules affecting public pages.

- Remove noindex tags from production pages.

- Request indexing in GSC to prompt a re-crawl.
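Because the x-robots-tag lives in the HTTP response rather than the HTML, it is easy to miss in a manual review. Here is a minimal sketch of a header check; the function name is hypothetical, and it deliberately treats a bot-scoped directive (such as "googlebot: noindex") as blocking regardless of which bot it names, which is a conservative simplification.

```python
def blocking_directives(headers: dict[str, str]) -> set[str]:
    """Return indexing-blocking directives found in X-Robots-Tag headers."""
    blocking = {"noindex", "none"}
    found = set()
    for name, value in headers.items():
        if name.lower() != "x-robots-tag":
            continue
        for directive in value.split(","):
            directive = directive.strip().lower()
            # Directives may be scoped to a bot, e.g. "googlebot: noindex".
            if ":" in directive:
                directive = directive.split(":", 1)[1].strip()
            if directive in blocking:
                found.add(directive)
    return found

# Hypothetical response headers from a production page.
sample_headers = {"Content-Type": "text/html", "X-Robots-Tag": "noindex, nofollow"}
print(blocking_directives(sample_headers))
```

To run it against a live page, one option is to fetch the URL with `urllib.request.urlopen` and pass `dict(response.headers.items())` to the function.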

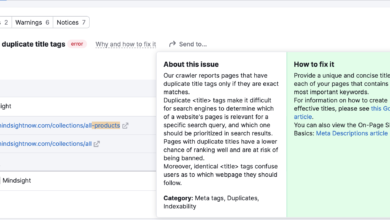

2) Canonical tag mistakes that create self-sabotaging duplicates

What it is: A canonical tag tells Google, "This is the master version of the page." A common mistake is self-referencing canonicals on duplicate versions (e.g., both the HTTP and HTTPS versions claim to be the master) or canonicalizing a page to the homepage.

Why it hurts: It splits your ranking signals. If I have two identical URLs, Google might ignore both or flip-flop between them.

How to detect it: Crawl your site and filter for "Non-Indexable" due to canonicals. I’ve seen sites where every parameter URL (like ?source=email) was indexed because the canonical tag was missing.

How to fix it: Ensure every indexable page carries a self-referencing canonical, and point each deliberate duplicate at the master version. Standardize your URL structure (e.g., always enforce trailing slashes) and make sure the canonical uses that exact form.
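Standardizing URLs is easier to enforce when the rule lives in one function. The sketch below shows one possible normalization: force HTTPS, lowercase the host, add a trailing slash, and drop tracking parameters. The parameter list and the "paths containing a dot are files" heuristic are assumptions to adapt to your own site.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed tracking parameters; adjust to what your campaigns actually append.
TRACKING_PARAMS = {"source", "utm_source", "utm_medium", "utm_campaign", "gclid", "fbclid"}

def canonical_url(url: str) -> str:
    """Normalize a URL to one form: https, lowercase host, trailing slash, no tracking params."""
    parts = urlsplit(url)
    path = parts.path or "/"
    # Add a trailing slash unless the last segment looks like a file (e.g. /feed.xml).
    last_segment = path.rsplit("/", 1)[-1]
    if not path.endswith("/") and "." not in last_segment:
        path += "/"
    kept = [(k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True)
            if k.lower() not in TRACKING_PARAMS]
    return urlunsplit(("https", parts.netloc.lower(), path, urlencode(kept), ""))

print(canonical_url("http://Example.com/blog/post?source=email"))
print(canonical_url("https://example.com/shop/?color=red&utm_source=newsletter"))
```

The output of this function is what I'd expect to see in the canonical tag of every variant of the page.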

3) Redirect chains, loops, and 404s (especially after a rebuild)

What it is: Page A redirects to Page B, which redirects to Page C. Or worse, Page A redirects to B, and B redirects back to A (a loop).

Why it hurts: Every extra hop slows down the user and wastes crawl budget. Google's documentation says Googlebot follows up to 10 redirect hops before giving up, and I'd rather not test that limit. After a site migration, I often see impressions spike (Google crawling everything) but rankings drop because link equity is lost in chains.

How to detect it: Use a crawler like Screaming Frog to report "Redirect Chains."

How to fix it: Update all redirects to go directly to the final destination (Page A → Page C). I don’t assume Google "will figure it out"—I make the path clean.
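Flattening a redirect map is mechanical enough to script. This sketch assumes you can export your redirects as a simple source-to-target mapping (the paths below are invented); it resolves every source to its final destination and reports any source that is caught in a loop.

```python
def resolve_redirects(redirects: dict[str, str]) -> tuple[dict[str, str], set[str]]:
    """Map every source URL to its final destination; report sources caught in a loop."""
    flattened, loops = {}, set()
    for source in redirects:
        seen = {source}
        target = redirects[source]
        while target in redirects:
            if target in seen:
                loops.add(source)
                break
            seen.add(target)
            target = redirects[target]
        else:
            # No loop found: target is a URL that does not redirect any further.
            flattened[source] = target
    return flattened, loops

# Hypothetical redirect export after a rebuild.
redirect_map = {"/a": "/b", "/b": "/c", "/x": "/y", "/y": "/x"}
flattened, loops = resolve_redirects(redirect_map)
print(flattened)
print(sorted(loops))
```

The flattened mapping can replace the original rules, and anything in the loop set needs a manual decision about where it should really point.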

4) XML sitemap errors that slow indexing

What it is: Your sitemap contains dirty data: 404 pages, redirected URLs, or pages blocked by robots.txt. Nearly half of all XML sitemaps contain errors that slow new content indexing.

Why it hurts: It confuses Googlebot. If half the links in your map are dead ends, Google trusts your map less and crawls you less frequently.

How to detect it: Check the Sitemaps report in GSC. Look for "Sitemap contains URLs which are blocked by robots.txt."

How to fix it: Automate your sitemap generation to only include 200 OK, indexable, canonical URLs. Exclude everything else.
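The filtering rule translates directly into code. Below is a minimal sketch that builds a sitemap from crawl results; the `Page` record and its fields are hypothetical stand-ins for whatever your crawler or CMS exports.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass

@dataclass
class Page:
    url: str
    status: int      # HTTP status code returned for the URL
    indexable: bool  # False if noindexed or blocked by robots.txt
    canonical: str   # canonical URL declared on the page

def build_sitemap(pages: list[Page]) -> str:
    """Build sitemap XML containing only 200 OK, indexable, self-canonical URLs."""
    urlset = ET.Element("urlset", xmlns="http://www.sitemaps.org/schemas/sitemap/0.9")
    for page in pages:
        if page.status == 200 and page.indexable and page.canonical == page.url:
            ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = page.url
    return ET.tostring(urlset, encoding="unicode")

# Hypothetical crawl export: only the first page qualifies.
pages = [
    Page("https://example.com/", 200, True, "https://example.com/"),
    Page("https://example.com/old/", 301, True, "https://example.com/new/"),
    Page("https://example.com/cart/", 200, False, "https://example.com/cart/"),
    Page("https://example.com/shoes/?sort=price", 200, True, "https://example.com/shoes/"),
]
sitemap = build_sitemap(pages)
print(sitemap)
```

The string returned here has no XML declaration; add one when writing the file if your tooling expects it.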

5) Mobile-first indexing parity issues (missing content, links, or blocked resources on mobile)

What it is: Your desktop site shows a full navigation menu and structured data, but your mobile site uses a simplified "hamburger" menu that lacks those links in the HTML code.

Why it hurts: Google judges your site based on the mobile version. Over 70% of websites suffer from parity issues. If it’s not on mobile, it doesn’t count for rankings.

How to detect it: Use the "Test Live URL" feature in GSC and view the rendered HTML. Search for key internal links or schema.

How to fix it: If you use an accordion or tab on mobile, ensure the content is loaded in the DOM (HTML), even if hidden by CSS.
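A quick way to spot parity gaps is to diff the links found in the desktop HTML against those in the mobile HTML. The sketch below does that with Python's built-in parser; the two HTML snippets are invented, and in a real check you would save the rendered HTML of each version first.

```python
from html.parser import HTMLParser

class LinkCollector(HTMLParser):
    """Collect href values from every <a> tag in the HTML source."""

    def __init__(self):
        super().__init__()
        self.links = set()

    def handle_starttag(self, tag, attrs):
        href = dict(attrs).get("href") if tag == "a" else None
        if href:
            self.links.add(href)

def links_in(html: str) -> set[str]:
    collector = LinkCollector()
    collector.feed(html)
    return collector.links

def missing_on_mobile(desktop_html: str, mobile_html: str) -> set[str]:
    """Return links present in the desktop HTML but absent from the mobile HTML."""
    return links_in(desktop_html) - links_in(mobile_html)

# Hypothetical navigation markup for each version of the same page.
DESKTOP = '<nav><a href="/pricing/">Pricing</a><a href="/guides/">Guides</a></nav>'
MOBILE = '<nav><a href="/pricing/">Pricing</a><button>Menu</button></nav>'
print(missing_on_mobile(DESKTOP, MOBILE))
```

Links that are hidden by CSS but still in the mobile DOM will not show up as missing, which matches the fix described above.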

6) Poor Core Web Vitals—especially INP—hurting UX and rankings

What it is: Your site is slow to respond to user inputs (clicks, taps). INP (Interaction to Next Paint) measures this frustration. Benchmarks to aim for are INP < 200ms and LCP < 2.5s.

Why it hurts: Core Web Vitals feed into Google's ranking systems as a page experience signal, and slow interactions are a massive conversion killer. Poor INP correlates with significantly higher bounce rates.

How to detect it: Look at the "Core Web Vitals" tab in GSC. Focus on the "Poor" URLs.

How to fix it: This usually requires developer help. You need to free up the main thread by deferring non-critical JavaScript. I prioritize the templates affecting the most users first.

7) JavaScript-heavy pages that Google can’t reliably render or index

What it is: The content of your page relies entirely on JavaScript to load (Client-Side Rendering). If I view the source code, I see a blank page.

Why it hurts: JavaScript-only rendering can lose 15–25% of content in indexing. Google tries to render JS, but it takes resources and time (the "rendering queue").

How to detect it: Disable JavaScript in your browser settings and reload your page. Is the content gone? If yes, you have a JS SEO risk.
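The same test can be scripted by checking whether key phrases exist in the raw HTML, ignoring anything inside script and style tags. This is a rough sketch: the class and function names are mine, the sample pages are invented, and it says nothing about what Google sees after rendering, only what is there before any JavaScript runs.

```python
from html.parser import HTMLParser

class RawTextExtractor(HTMLParser):
    """Collect text that is present in the HTML source without running any JavaScript."""

    def __init__(self):
        super().__init__()
        self._ignored_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._ignored_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._ignored_depth:
            self._ignored_depth -= 1

    def handle_data(self, data):
        if not self._ignored_depth and data.strip():
            self.chunks.append(data.strip())

def phrases_missing_from_source(html: str, phrases: list[str]) -> list[str]:
    """Return the phrases that do not appear in the raw, un-rendered HTML text."""
    extractor = RawTextExtractor()
    extractor.feed(html)
    extractor.close()
    text = " ".join(extractor.chunks).lower()
    return [p for p in phrases if p.lower() not in text]

# Hypothetical client-side-rendered shell vs. a server-rendered page.
CSR_SHELL = '<html><body><div id="root"></div><script>render("Pricing plans")</script></body></html>'
SSR_PAGE = '<html><body><h1>Pricing plans</h1><p>Start free.</p></body></html>'
print(phrases_missing_from_source(CSR_SHELL, ["Pricing plans"]))
print(phrases_missing_from_source(SSR_PAGE, ["Pricing plans"]))
```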

How to fix it: Implement Server-Side Rendering (SSR) or Static Rendering. This improves indexing performance by 40–60%.

8) Orphan pages and weak internal linking that wastes crawl budget

What it is: Pages that exist but have no incoming internal links. They are stranded islands.

Why it hurts: Google interprets orphan pages as unimportant. You are wasting crawl budget on them if they are low quality, and losing rankings if they are high quality.

How to detect it: Compare the URLs in your XML sitemap (or any full list of indexable URLs) against a crawl that starts at the homepage and only follows internal links. URLs in the list that the crawl never reached are likely orphans.
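That comparison is a set difference. A minimal sketch, assuming you have both URL lists exported as plain lists (the URLs below are placeholders), with trailing slashes ignored so that /page and /page/ count as the same URL:

```python
def find_orphans(sitemap_urls: list[str], crawled_urls: list[str]) -> list[str]:
    """Return sitemap URLs that the link-following crawl never reached."""
    def key(url: str) -> str:
        # Treat /page and /page/ as the same URL for comparison purposes.
        return url.rstrip("/")

    reached = {key(u) for u in crawled_urls}
    return sorted(u for u in sitemap_urls if key(u) not in reached)

# Hypothetical exports from the sitemap and from a crawl of internal links.
sitemap_urls = [
    "https://example.com/",
    "https://example.com/guides/seo/",
    "https://example.com/old-landing/",
]
crawled_urls = ["https://example.com", "https://example.com/guides/seo"]
print(find_orphans(sitemap_urls, crawled_urls))
```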

How to fix it: Add contextual internal links from high-authority pages (like your homepage or category pages) to these stranded assets.

9) Missing or incorrect schema markup (leaving CTR on the table)

What it is: Failing to mark up products, reviews, or articles with structured data.

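Why it hurts: You miss out on rich snippets. Product schema can boost e-commerce CTR by 25–35%. FAQ schema used to do the same for informational queries, but since 2023 Google has shown FAQ rich results mainly for well-known government and health sites, so I no longer count on it.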

How to detect it: Check the "Enhancements" reports in GSC for errors and warnings on each schema type.

How to fix it: Use a plugin or hard-code JSON-LD script blocks into your page head. I verify my schema changes immediately; I don’t wait for Google to guess.
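Generating the JSON-LD from data, instead of hand-typing it, removes most syntax errors before they reach the Rich Results Test. Here is a small sketch that builds a BreadcrumbList block; the function name and the sample trail are illustrative, and the output still needs validating in Google's tool.

```python
import json

def breadcrumb_jsonld(trail: list[tuple[str, str]]) -> str:
    """Build a BreadcrumbList JSON-LD script block from (name, url) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": position, "name": name, "item": url}
            for position, (name, url) in enumerate(trail, start=1)
        ],
    }
    # Escape "</" so a stray "</script>" in a name cannot close the block early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'

# Hypothetical breadcrumb trail for a blog post.
snippet = breadcrumb_jsonld([
    ("Home", "https://example.com/"),
    ("Blog", "https://example.com/blog/"),
])
print(snippet)
```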

10) “Parasite SEO” risk: hosting irrelevant third-party content that drags the whole domain down

What it is: Allowing third parties to publish loosely related content on your high-authority domain (e.g., a reputable news site hosting a "best coupons" section provided by a vendor).

Why it hurts: Google has intensified enforcement against this under its site reputation abuse policy. They now hold publishers accountable for all content on the domain. Low-quality third-party sections can trigger manual actions and drag down visibility beyond the offending section.

How to detect it: Check your site for subfolders that don’t match your main brand intent.

How to fix it: Noindex these sections or remove them entirely. Tighten your editorial standards for any outsourced content.

Tools I actually use to catch technical SEO mistakes (and what each tool is best at)

You don’t need 50 tools. You need the right ones for the right job. Here is my personal stack. When I’m working to maintain consistent on-page structure and reduce publishing errors, I might leverage an AI article generator that outputs clean, schema-ready HTML, but I always validate the output with these diagnostic tools.

| Tool | Best For | What I Look For | Common Beginner Mistake |
|---|---|---|---|
| Google Search Console | The source of truth | Page indexing report, Manual Actions | Ignoring the "Not indexed" reasons |
| Screaming Frog | Deep crawling | Redirect chains, broken links | Not configuring it to render JS |
| PageSpeed Insights | Performance | Core Web Vitals (field data first, lab data for debugging) | Obsessing over score vs. metrics |
| Rich Results Test | Schema validation | Syntax errors in JSON-LD | Assuming valid code = guaranteed snippet |

Log File Analysis: This is an advanced step, but powerful. It shows you exactly which URLs Googlebot requests on your server. It’s the only way to definitively prove crawl budget waste. If I see Googlebot hitting a generated calendar page 50,000 times a day, I know I need to block that path.
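For a first look at the logs, counting Googlebot requests per site section is usually enough. The sketch below assumes access logs in the common "combined" format and identifies Googlebot by user-agent string only; user agents can be spoofed, so a serious audit would also verify the requesting IPs. The log lines are fabricated samples.

```python
import re
from collections import Counter

# Matches the request, status, and final quoted field (user agent) of a combined-format line.
LOG_LINE = re.compile(r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" \d{3} .*"(?P<agent>[^"]*)"$')

def googlebot_hits_by_section(lines: list[str], depth: int = 1) -> Counter:
    """Count Googlebot requests per top-level path section (e.g. /calendar)."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.search(line.strip())
        if not match or "Googlebot" not in match.group("agent"):
            continue
        path = match.group("path").split("?", 1)[0]
        segments = [s for s in path.split("/") if s]
        hits["/" + "/".join(segments[:depth])] += 1
    return hits

BOT = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
# Fabricated sample log lines.
sample_log = [
    f'66.249.66.1 - - [10/Jan/2026:10:00:00 +0000] "GET /calendar/2026/01/ HTTP/1.1" 200 512 "-" "{BOT}"',
    f'66.249.66.1 - - [10/Jan/2026:10:00:01 +0000] "GET /calendar/2026/02/?view=week HTTP/1.1" 200 512 "-" "{BOT}"',
    f'66.249.66.1 - - [10/Jan/2026:10:00:02 +0000] "GET /blog/technical-seo/ HTTP/1.1" 200 2048 "-" "{BOT}"',
    '203.0.113.7 - - [10/Jan/2026:10:00:03 +0000] "GET /calendar/2026/01/ HTTP/1.1" 200 512 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]
print(googlebot_hits_by_section(sample_log).most_common())
```

Sections with a large share of hits and no ranking value are the candidates for a robots.txt block.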

Prevention: the lightweight QA system I use so technical SEO mistakes don’t come back

Fixing mistakes is good; preventing them is better. I use a simple QA system to ensure we don’t regress. When scaling content production, having a reliable SEO content generator helps maintain structural consistency, but you must pair it with human oversight. Here is the playbook I use to keep sites healthy.

My pre-publish checklist (5 minutes per page)

- URL Structure: Is it clean, lowercase, and hyphenated?

- Title/H1: Do they match the intent? Is there only one H1?

- Indexability: Is the page free of noindex? (With no meta robots tag at all, the default is "index, follow".)

- Internal Links: Did I link to at least 2 other relevant pages?

- Schema: Did I validate the JSON-LD using the Rich Results Test?

My post-launch checklist (especially after redesigns/migrations)

I verify before I celebrate. Immediately after a deployment:

- Check Robots.txt: Confirm the staging block was removed.

- Test Top 20 URLs: Manually inspect the top traffic-driving pages. Do they render correctly?

- Sitemap: Resubmit the sitemap in GSC.

- Redirects: Spot-check old URLs to ensure they 301 redirect to the new counterparts.

- Monitor: Watch the "Pages" report in GSC daily for a week to catch any spikes in 404s or 5xx errors.

FAQs about technical SEO mistakes (quick, clear answers)

What is “parasite SEO” and why does it matter?

Parasite SEO involves third parties publishing content (often low quality) on authoritative domains to leverage their ranking power. Google now holds site owners accountable for this content. If you host irrelevant “coupon” or “review” pages from a vendor, you risk site-wide penalties. The fix is strictly overseeing or removing this content.

How has Core Web Vitals changed recently?

Google replaced First Input Delay (FID) with Interaction to Next Paint (INP). INP measures the responsiveness of all interactions during a user’s visit, not just the first one. To stay competitive, you need to ensure your site responds to clicks and taps in under 200 ms.

How can JavaScript hinder SEO, and how do I fix it?

If your site uses Client-Side Rendering (CSR), the content generates only after the browser executes JavaScript. Googlebot can struggle to render this, potentially missing up to 25% of your content. The best fix is switching to Server-Side Rendering (SSR) or Static Generation so Google sees the full HTML immediately.

What common technical errors silently damage rankings?

The most frequent offenders are broken internal links, accidental noindex tags, duplicate content caused by URL parameters, and orphan pages. If I only had time to check three things, I would check: 1) robots.txt blocks, 2) canonical tags, and 3) mobile parity.

Why did rankings drop after my site rebuild?

This is common and usually stems from changing URLs without proper 301 redirects. If Google can’t connect the old URL’s authority to the new URL, you lose that history. Other causes include changes to internal linking structures or accidentally leaving "noindex" tags from the staging environment.

Conclusion: my 3-bullet recap + the next 5 actions I’d take this week

Technical SEO doesn’t have to be overwhelming. It boils down to making your site easy for machines to understand.

- Access is everything: If Google can’t crawl or render it, you can’t rank.

- Details matter: A single wrong canonical tag can hide your best content.

- Parity is key: Your mobile site is the only version Google cares about.

If you do nothing else, do these 5 checks this week:

- Log into GSC and check the Crawl Stats report for failures.

- Run your homepage through URL Inspection in GSC ("Test Live URL") and view the rendered HTML. Google retired the standalone Mobile-Friendly Test in late 2023.

- Check your Core Web Vitals specifically for INP issues.

- Crawl your site with Screaming Frog to identify redirect chains.

- Review your Sitemaps in GSC to ensure no error pages are submitted.