Eliminating Redundancy: How to Fix Duplicate Meta Descriptions at Scale

Introduction: How I fix duplicate meta descriptions (and why you should care)

When I audit a site and see thousands of duplicate meta descriptions, I don’t panic—I triage. It’s a common sight: you open a crawl report, and suddenly you’re staring at 10,000 product pages that all say the exact same generic thing. The immediate result isn’t just an ugly spreadsheet; it’s lost revenue.

While meta descriptions aren’t a direct ranking factor, they are your organic ad copy. Duplicate or missing descriptions often force Google to generate its own snippets, which frequently miss the mark on user intent. This leads to a lower Click-Through Rate (CTR) and wasted impressions. If you want to fix this at scale without manually rewriting thousands of lines of text, you need a system.

In this guide, I will walk you through the exact workflow I use to audit, prioritize, and fix duplicate meta descriptions. We will cover how to leverage tools like SEO content generators and intelligent automation to solve this efficiently. Whether you are using a standard CMS or exploring an AI content writer workflow, the goal remains the same: regain control of your SERP presentation.

What you’ll get from this guide (the checklist-style promise)

- Identify duplicates fast: How to filter noise from actual issues using audit tools.

- Diagnose the source: Distinguish between duplicate text and technical tag duplication.

- Bulk-fix with templates: Use dynamic variables to solve 80% of the problem instantly.

- Apply technical fixes: When to use canonicals, redirects, or noindex tags.

- QA and Measure: How to validate your work and track CTR improvements.

What “duplicate meta descriptions” really means (and the business impact)

Let’s clear up the definition first. A duplicate meta description issue usually falls into one of two buckets: either multiple distinct URLs share identical text in their description tag, or a single URL has two conflicting description tags in its source code. Both are problematic, but for different reasons.

Why does this matter if Google says they aren’t a ranking signal? It matters because SEO is a game of marginal gains. When you have duplicate descriptions, you dilute your messaging. You are effectively telling search engines that Page A and Page B are the same, which confuses indexing. More critically, you lose the chance to convince a human to click.

The Data Reality:

- Sites with widespread duplicates often see a 5–20% reduction in CTR compared to optimized sites.

- Fixing these issues can yield a similar lift; manual A/B tests have reported CTR improvements of roughly 5–20% after rewriting.

Why duplicates are harmful even if they don’t directly affect rankings

I treat meta descriptions like ad copy. Imagine running the same ad text for a luxury watch and a budget calculator. It doesn’t work. When descriptions are duplicated, Google often ignores them entirely and scrapes content from the page to build a snippet. While Google’s auto-generated snippets are getting smarter, they are often disjointed or irrelevant to the specific query. This lack of control means your carefully researched keywords and calls-to-action never reach the user’s eyes.

When duplicates are “acceptable” vs. when they’re a priority to fix

Not all duplicates are emergencies. If I have two hours to work on a site, I am not fixing the meta descriptions on paginated archives (e.g., /blog/page/2/). Those pages rarely drive revenue.

My Prioritization Framework:

- Revenue Pages: Product pages or service landing pages with high commercial intent. Fix these first.

- High-Impression Pages: Pages that are already ranking but have low CTR. Fixing the snippet is the fastest way to get more traffic.

- Template-Driven Clusters: If one template fix can resolve 5,000 errors (like a tag archive), do it for the efficiency win.

- Low-Value Archives: These can often be ignored or handled with a blanket technical rule (like noindex) rather than a content fix.

How I identify duplicate meta descriptions fast (audit workflow + tools)

You cannot fix what you cannot see. My workflow involves a mix of crawling and spot-checking. While you can use any major crawler, the process is generally the same: crawl, export, pivot.

| Tool | Best For | How to Find Duplicates |
|---|---|---|
| Screaming Frog | Deep technical audits & large sites | Meta Description tab > Filter: “Duplicate” |
| Sitebulb | Visualizing issues & prioritizing | Audit Report > SEO > Meta Descriptions |
| Ahrefs / SEMrush | Ongoing monitoring & quick wins | Site Audit > Issues > “Duplicate meta descriptions” |
| Google Search Console | Validating impact | Performance > Filter by low CTR pages |

Crawler method (Screaming Frog/Sitebulb): the exact filters I use

When using a crawler like Screaming Frog, I don’t just look at the error count. I look for patterns. I always configure my columns to show: URL, Meta Description, Meta Description Length, Indexability, and Canonical Link Element.

Once the crawl is done, I filter immediately by “Indexable” pages only. There is no point in fixing descriptions on pages that are 301 redirected or canonicalized to another URL. I export this list to a spreadsheet. My first step in the sheet is to separate true content duplicates from parameterized URLs (like `?sort=price`). This distinction saves hours of confusion later.
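The spreadsheet step above can also be scripted. Here is a minimal Python sketch, assuming the crawl export has already been loaded into a list of dictionaries; the keys `url`, `meta_description`, and `indexability` are hypothetical names, so map them to whatever columns your crawler actually exports.

```python
from collections import defaultdict
from urllib.parse import urlsplit


def find_duplicate_descriptions(rows):
    """Group indexable URLs that share identical meta description text.

    `rows` uses the hypothetical keys "url", "meta_description" and
    "indexability". Returns (content_dupes, parameterized); each maps a
    description to the list of URLs that use it.
    """
    groups = defaultdict(list)
    for row in rows:
        # Skip redirected, canonicalized or noindexed pages.
        if row["indexability"].strip().lower() != "indexable":
            continue
        # Collapse whitespace so trivial spacing differences still match.
        text = " ".join(row["meta_description"].split())
        if text:
            groups[text].append(row["url"])

    content_dupes, parameterized = {}, {}
    for text, urls in groups.items():
        if len(urls) < 2:
            continue
        with_params = [u for u in urls if urlsplit(u).query]
        without_params = [u for u in urls if not urlsplit(u).query]
        # Two or more clean URLs sharing text is a real content duplicate.
        if len(without_params) > 1:
            content_dupes[text] = without_params
        # URLs carrying a query string usually need a technical fix instead.
        if with_params:
            parameterized[text] = with_params
    return content_dupes, parameterized
```

The split is deliberately crude: any URL with a query string lands in the parameterized bucket, which is a reasonable first pass but worth spot-checking by hand.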

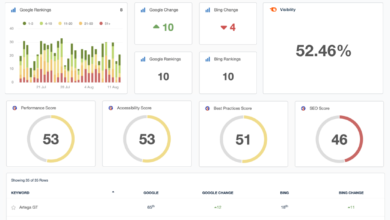

SEO suite method (Ahrefs/SEMrush) + quick GSC validation

If you don’t have a crawler set up, cloud-based tools like Ahrefs or SEMrush work well. They will flag “Duplicate Meta Descriptions” as an error. I export this list and then immediately cross-reference it with Google Search Console (GSC).

I look for pages with high impressions but suspiciously low CTR. If a page has 10,000 impressions and a 0.5% CTR, and I see it has a duplicate meta description, that’s my smoking gun. That is where the money is hiding.
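For scale, 10,000 impressions at a 0.5% CTR is only 50 clicks. The cross-reference can be sketched in Python as below, assuming the GSC performance export is a list of dictionaries with the hypothetical keys `page`, `clicks`, and `impressions`. The thresholds are illustrative defaults I picked for the example, not benchmarks.

```python
def flag_low_ctr_duplicates(gsc_rows, duplicate_urls,
                            min_impressions=1000, max_ctr=0.01):
    """Return duplicate-description pages with many impressions but few clicks.

    Each result is a (page, impressions, ctr) tuple, sorted so the pages
    with the largest wasted audience come first.
    """
    dupes = set(duplicate_urls)
    flagged = []
    for row in gsc_rows:
        impressions = row["impressions"]
        # Ignore pages outside the duplicate list or with too little data.
        if row["page"] not in dupes:
            continue
        if impressions <= 0 or impressions < min_impressions:
            continue
        ctr = row["clicks"] / impressions
        if ctr <= max_ctr:
            flagged.append((row["page"], impressions, ctr))
    return sorted(flagged, key=lambda item: item[1], reverse=True)
```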

Diagnose the root cause: why mass meta duplication happens (so you fix it once)

Before I write a single description, I check whether two systems are fighting each other. Mass duplication is rarely a copywriter pasting the same text 500 times; it’s almost always a settings issue.

Check #1: Is it duplicate text across pages, or duplicate tags in the HTML?

This is a subtle but critical distinction. I’ve seen sites where the theme injects a meta description based on the post excerpt, while the SEO plugin (like Yoast or Rank Math) injects its own optimized description. The result? The page source has two `<meta name="description" …>` tags.

How to check: Right-click on a page, select “View Page Source,” and search for “description”. If you see two distinct tags, you have a plugin/theme conflict. You need to disable the theme’s output, not rewrite the text.
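If you need to run the same check across many pages, here is a minimal sketch using Python's standard-library `html.parser`. It assumes you already have each page's HTML as a string; fetching the pages is left out.

```python
from html.parser import HTMLParser


class MetaDescriptionCollector(HTMLParser):
    """Collect the content of every <meta name="description"> tag."""

    def __init__(self):
        super().__init__()
        self.descriptions = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = dict(attrs)
        # The name attribute is matched case-insensitively.
        if (attributes.get("name") or "").lower() == "description":
            self.descriptions.append(attributes.get("content") or "")


def meta_descriptions(html):
    """Return all meta description values found in an HTML string."""
    parser = MetaDescriptionCollector()
    parser.feed(html)
    return parser.descriptions
```

A page where `len(meta_descriptions(html)) > 1` is a candidate for the theme/plugin conflict described above.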

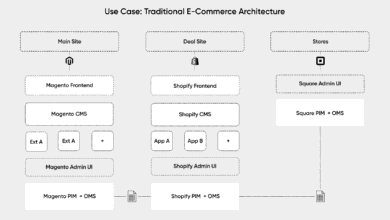

Check #2: Template patterns that create duplicates (archives, filters, variants)

If the code only has one tag, but the text is identical across many pages, look at the page type.

- Faceted Navigation: e-commerce filters for color or size (e.g., “Blue Widgets” vs. “Red Widgets”) often inherit the main category description.

- Tag Archives: In CMSs like WordPress or Shopify, tag pages often default to the site’s general tagline if a specific description isn’t set.

- Product Variants: Different URLs for the same product (varying by size or pack count) usually share the exact same copy.

Workflow to fix duplicate meta descriptions at scale (without rewriting everything by hand)

If you have 50 pages, write them by hand. If you have 50,000, you need a system. My goal is always to get to 80% coverage using intelligent templates, and then manually polish the top 20% of high-value pages. This is where modern tools, including AI article generators for content drafting and bulk article generator workflows, can support your strategy by ensuring consistent quality across the board.

Step 1: Segment URLs into “templates” vs. “handwritten” pages

I segment the URLs from my audit into groups.

Group A (Manual Rewrite): Homepage, core service pages, top 50 performing blog posts. These get human love.

Group B (Template Fix): Product pages, category archives, location pages. These get dynamic variables.

Group C (Technical Fix): Faceted navigation, paginated series, thin tag pages. These get canonicals or noindex.
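A rough version of this segmentation can be scripted. The sketch below is in Python, and the URL patterns (`/product/`, `/tag/`, and so on) are hypothetical placeholders that you would swap for your site's real structure.

```python
from urllib.parse import urlsplit

# Hypothetical URL patterns; adjust to the site's real structure.
TECHNICAL_MARKERS = ("/tag/", "/page/")
TEMPLATE_MARKERS = ("/product/", "/category/", "/locations/")


def segment_url(url, manual_urls=frozenset()):
    """Assign a URL to Group "A", "B" or "C", or "review" if unclassified."""
    parts = urlsplit(url)
    path = parts.path if parts.path.endswith("/") else parts.path + "/"
    # Group A: homepage plus any hand-picked high-value URLs.
    if url in manual_urls or path == "/":
        return "A"
    # Group C: filtered/sorted URLs, pagination and tag archives.
    if parts.query or any(marker in path for marker in TECHNICAL_MARKERS):
        return "C"
    # Group B: page types driven by a shared template.
    if any(marker in path for marker in TEMPLATE_MARKERS):
        return "B"
    return "review"
```

Anything that comes back as `"review"` still needs a human decision; the script only removes the obvious cases from the pile.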

Step 2: Build dynamic meta description templates (variables that guarantee uniqueness)

For Group B, dynamic templating is a lifesaver. Most SEO plugins allow you to use variables. Instead of typing “Buy our Blue Shoes,” you create a pattern.

Example Template Pattern:

Shop the best %%title%% at %%sitename%%. We offer high-quality %%category%% with fast shipping to %%location%%. Prices start at %%price%%.

The Output:

Shop the best Blue Suede Shoes at ShoeStore. We offer high-quality Men’s Footwear with fast shipping to New York. Prices start at $59.99.

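This keeps descriptions unique as long as the underlying product data is distinct. A word of caution: avoid variables that change daily (like exact stock counts). Search engines only pick up a new description when they re-crawl the page, so fast-changing values tend to be stale by the time they appear in a snippet.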
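To show how the substitution works, here is a small Python sketch of a `%%variable%%` renderer. It is an illustration only, not how any particular SEO plugin implements its variables, and the function name is my own.

```python
import re

PLACEHOLDER = re.compile(r"%%(\w+)%%")


def render_description(template, data):
    """Fill %%variable%% placeholders from the `data` dictionary.

    Returns (text, missing), where `missing` lists variables that had no
    usable value so the caller can fall back instead of publishing gaps.
    """
    missing = []

    def substitute(match):
        name = match.group(1)
        value = str(data.get(name) or "").strip()
        if not value:
            missing.append(name)
        return value

    text = PLACEHOLDER.sub(substitute, template)
    # Collapse any double spaces left behind by empty values.
    return " ".join(text.split()), missing
```

Returning the list of missing variables alongside the text makes it easy to route incomplete products to a fallback description rather than publishing a sentence with a hole in it.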

Step 3: When you should bulk-write unique descriptions (and how to keep quality)

Sometimes templates feel robotic. For mid-tier pages where you want a unique voice but can’t afford hours of manual writing, batch production is key. I keep a shared spreadsheet with columns for “Primary Intent” and “Unique Angle.” This prevents writers (or AI tools) from repeating the same phrase. If you are exploring AI solutions, emerging tech like MetaSynth has shown CTR lifts of over 10% in testing by optimizing metadata based on implicit feedback, suggesting that AI-generated, performance-based metadata may play a growing role in this workflow.

Step 4: QA before publish (uniqueness checks + snippet sanity check)

I never push a site-wide template change without testing it on a small section first. I’ll apply the template to one category, clear the cache, and re-crawl those URLs. I check for:

- Truncation: Did the variable make the text too long (over ~160 chars)?

- Missing Data: Did the `%%price%%` variable output blank because the data was missing?

- Readability: Does it sound like a human wrote it?
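The first two checks are easy to automate; readability still needs a human. Below is a minimal Python sketch, assuming the rendered descriptions are available as plain strings. The 160-character limit mirrors the rough figure above and is not a hard rule.

```python
import re
from collections import Counter

MAX_LENGTH = 160  # approximate cut-off, not a hard limit


def qa_description(text, max_length=MAX_LENGTH):
    """Return a list of problems found in one rendered description."""
    problems = []
    if not text.strip():
        problems.append("empty")
    if len(text) > max_length:
        problems.append("too long")
    # A template variable that was never replaced.
    if re.search(r"%%\w+%%", text):
        problems.append("unresolved variable")
    # A blank variable usually leaves a gap before punctuation, e.g. "at ."
    if re.search(r"\s[.,;:!?]", text):
        problems.append("possible missing data")
    return problems


def repeated_descriptions(descriptions):
    """Return descriptions that still appear more than once in a batch."""
    counts = Counter(d.strip().lower() for d in descriptions)
    return sorted(text for text, n in counts.items() if n > 1)
```

I would run both functions on the re-crawled test category before rolling the template out site-wide.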

Technical fixes that eliminate meta duplication (canonical, redirects, noindex, and conflicts)

Sometimes the best description is no description—or rather, telling Google to ignore the page entirely. Here is the decision matrix I use when the content itself is the duplicate issue.

| Problem | Best Fix | Who Implements? |
|---|---|---|
| Theme + Plugin both outputting tags | Disable Theme Output (Code Snippet) | Developer / Advanced SEO |
| Parameterized URLs (filters, sort) | Canonical Tag to main category | SEO Plugin Settings |
| Old/Moved URL versions | 301 Redirect | Server / Plugin |
| Thin/Low-value archives (tags) | Noindex Tag | SEO Plugin Settings |

Fix #1: Stop duplicate meta tags at the source (theme/plugin conflicts)

If your audit showed two tags in the source code, you need to turn one off. If you are using WordPress with a theme like Hello Elementor and a plugin like Rank Math, this is common. You typically need to add a small filter to your `functions.php` file to disable the theme’s meta description support. Check your theme documentation or ask a developer; deleting the text from the excerpt field is just a band-aid.

Fix #2: Canonical tags for duplicates you must keep

Canonical tags are hints, not commands, but they are powerful. If you have a product accessible via `/shop/shoes/blue-shoe` and `/shop/all/blue-shoe`, the content is identical. You don’t want to rewrite the meta description for both. Instead, pick one as the “master” and point the canonical tag of the other version to it. This consolidates ranking signals and tells Google, “Ignore the duplicate meta on this secondary page; index the main one.”

Fix #3: 301 redirects when duplicates shouldn’t exist

If you find duplicate descriptions on pages that are simply old versions of current pages, 301 redirect them. This isn’t just a meta description fix; it’s a site hygiene fix. Consolidating these pages preserves link equity and removes the duplicate error from your audit report entirely.

Fix #4: Noindex for low-value archives (tag pages, thin filters)

I am ruthless with tag pages. If you have a tag page for “summer-vibes” that lists two blog posts and shares a meta description with 50 other tag pages, it likely doesn’t need to be in the index. Applying a `noindex` tag removes it from search results. If it’s not in the index, the duplicate description doesn’t matter. I noindex only when I’m confident the page doesn’t serve a real search intent.

Meta description best practices (so the new ones earn clicks) + ready-to-use templates

Fixing the technical error is only half the battle. The new description needs to perform. I’ve found that the best descriptions aren’t keyword-stuffed; they are benefit-driven.

A simple formula I use: Intent + Specific benefit + Proof/Differentiator + Next step

Don’t just describe the page; sell the click.

Weak: “This is a page about plumbing services in Austin. We do repairs and installs.”

Strong: “Reliable 24/7 plumbing services in Austin. 500+ 5-star reviews for emergency leak repair and installation. Get a free quote today.”

Templates you can adapt (service, product, category, blog post)

Feel free to steal these patterns for your bulk uploads or template settings:

- E-commerce Product: “Buy [Product Name] at [Brand]. Features [Key Feature 1] and [Key Feature 2]. Free shipping on orders over $50. Shop now.”

- Local Service: “Expert [Service] in [City]. Licensed and insured professionals specializing in [Specialty]. Call now for same-day scheduling.”

- Blog Post: “Learn how to [Solve Problem] with this step-by-step guide. We cover [Key Point A], [Key Point B], and [Key Point C]. Read more.”

- Category Page: “Browse our wide selection of [Category Name]. Top-rated brands including [Brand A] and [Brand B]. Find your perfect match today.”

How I measure results after I fix duplicate meta descriptions (CTR, rewrites, and testing)

You can’t claim success just because the spreadsheet says “fixed.” You need to verify the impact. I typically wait for a re-crawl (which can be accelerated by submitting sitemaps in GSC) and then check the data.

| Metric | Where to Check | Success Signal |
|---|---|---|
| CTR | GSC Performance Report | Increase (e.g., from 1.5% to 1.8%) |
| Snippet Rewrites | Manual SERP Check / Tools | Google is displaying your text |
| Duplicate Count | Crawl Tool (Screaming Frog) | Count drops to near zero |
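When reporting the CTR change, it helps to separate percentage points from relative lift: going from 1.5% to 1.8% is a gain of 0.3 points, but a 20% relative improvement. Here is a small Python sketch of that arithmetic; the function names are my own.

```python
def ctr(clicks, impressions):
    """Click-through rate as a fraction (0.015 means 1.5%)."""
    return clicks / impressions if impressions else 0.0


def relative_lift(ctr_before, ctr_after):
    """Relative change in CTR, e.g. 0.2 for a 20% improvement."""
    if ctr_before == 0:
        raise ValueError("relative lift is undefined when the baseline is zero")
    return (ctr_after - ctr_before) / ctr_before
```

Compare like-for-like date ranges when you do this, since seasonality and ranking changes also move CTR.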

Will Google always use my meta descriptions? (What to do when it rewrites)

Here is the hard truth: You can influence snippets, but you can’t force them. Google rewrites meta descriptions frequently—some studies suggest over 60% of the time for certain queries. If you see this happening, don’t view it as a failure. It’s a signal. Google likely thinks your description didn’t match the specific query well enough. Review the keyword intent and try to align your description more closely with what the user is actually typing.

Common mistakes, FAQs, and my next-step checklist

Common mistakes I see (and how to fix them)

- Mistake: Rewriting descriptions on pages that are about to be deleted. Fix: Check the 301 redirect list first.

- Mistake: Using the same CTA (“Click here”) on every single page. Fix: Vary the CTA based on intent (“Shop now” vs “Learn more”).

- Mistake: Forgetting to re-crawl after implementing a template fix. Fix: Always validate code changes with a fresh crawl.

- Mistake: Optimizing for keywords but ignoring clickability. Fix: Read it aloud. Does it sound like a human wrote it?

FAQs (quick answers)

Do duplicate meta descriptions hurt rankings?

Not directly. However, they can lower your CTR, which is a negative user signal, and they dilute the uniqueness of your pages in Google’s index.

What is the fastest way to fix 1,000+ duplicates?

Use dynamic templating in your SEO plugin. Map variables like Product Name and Brand to the meta description field to generate unique text instantly.

Should I fix duplicates on noindex pages?

No. If a page is set to `noindex`, it won’t appear in search results, so the meta description is irrelevant to SEO.

Conclusion: recap + next actions

Fixing duplicate meta descriptions is one of the highest-ROI maintenance tasks you can do. It cleans up your technical health, improves your professional appearance in SERPs, and often drives a measurable lift in traffic without needing new content.

Your 3-Step Action Plan:

- Run a Crawl Today: Use Screaming Frog or a free audit tool to get your baseline number of duplicates.

- Apply Templates First: Don’t start writing manually. Apply dynamic templates to your largest content clusters (products, tags, categories).

- Triage the Rest: Manually rewrite your top 20 revenue pages and use technical fixes (canonical/noindex) for the low-value leftovers.

That’s the process I use—simple, repeatable, and measurable. Now, go save your CTR.