How to fix SEO indexing issues: The Consultant’s Guide to Solving Complex Technical Indexing Problems (2025)

There is no sinking feeling quite like hitting “publish” on a piece of content you know is excellent, checking your sitemap a week later, and seeing that Google hasn’t indexed it. Or worse, seeing the dreaded “Crawled – currently not indexed” status in Google Search Console.

I’ve been there. In my experience auditing sites ranging from local SMBs to massive enterprise publishers, indexing is rarely broken by magic. It is almost always a specific, diagnosable friction point in the pipeline between discovery and rendering.

This guide is a consultant’s playbook. It’s not a list of vague “best practices”; it is the specific workflow I use to diagnose why URLs aren’t indexing and how to fix them. We will cover the new reality of 2025, where AI crawlers, mobile-only indexing, and Core Web Vitals all shape what gets indexed, and walk through a step-by-step triage process. Note: These steps increase the probability and speed of indexing; in the modern search ecosystem, no one can guarantee an indexing timeline, as it depends heavily on your site’s overall authority and crawl demand.

The intent (and who this is for)

This guide is written for intermediate growth marketers, in-house SEOs, and business owners who are tired of guessing. You aren’t just looking for definitions; you have a specific URL or a cluster of pages that won’t show up in search, and you need a systematic way to fix it.

If you are looking for a magic button to force Google to index low-quality content instantly, this isn’t it. But if you need to unclog your technical pipeline and ensure your high-value pages get the visibility they deserve, you are in the right place.

Crawling vs. indexing vs. ranking (60-second definitions)

Before we open our tools, let’s agree on terms. People often conflate these, which leads to solving the wrong problem.

- Crawling (Discovery): Googlebot visits your page and downloads the code. Think of this as a librarian picking up a book.

- Indexing (Storage): Google analyzes the content, renders it, and decides it is worth storing in its database. This is the librarian filing the book on the shelf.

- Ranking (Display): Google shows that stored page in search results for a query. This is the librarian recommending the book to a reader.

The takeaway: You cannot rank if you aren’t indexed. And you cannot be indexed if you aren’t crawled effectively. But just because you are crawled (the book was picked up) doesn’t mean you will be indexed (the book was filed).

Indexing in 2025: what changed (mobile-only indexing, AI understanding, and demand-driven crawl)

If you feel like indexing has gotten harder recently, you aren’t imagining things. In 2025, the bar for entry into the index is higher. Google doesn’t just index everything it finds anymore; it prioritizes based on signals.

First, indexing is effectively mobile-only. Google still calls it “mobile-first indexing,” but in practice it crawls and indexes with its smartphone crawler. If content exists on your desktop site but is hidden, truncated, or missing from the mobile version, as far as Google is concerned, it does not exist. I often tell clients that their desktop site is just a vanity project for the office; the mobile site is the only one the crawler respects. Parity issues here can correlate with substantially lower rankings (figures of 40–60% are sometimes quoted) or complete indexing failures.

Second, crawling is demand-driven. We used to assume that if we submitted a sitemap, Google would crawl it. Now, Google’s scheduling systems assess whether a URL is worth the resources to crawl, based on signals like internal link depth, popularity, and historical quality. (You may see this attributed to a “GeminiBot”; that is not a documented Google crawler, and the crawling is still done by Googlebot.) If your new page has zero internal links and no external signals, it may sit in the queue indefinitely.

Finally, page performance matters for indexing, not just ranking. Interaction to Next Paint (INP), which replaced First Input Delay as a Core Web Vital in 2024, and Total Blocking Time (TBT), a lab metric rather than a Core Web Vital, both indicate whether a page is computationally expensive to render. If your JavaScript execution is heavy, Google’s renderer may time out or miss parts of your content.

The new indexing reality: “possible to crawl” isn’t “worth indexing”

This is the hardest conversation I have with clients. They show me a technically perfect page—no errors, 200 OK status—and ask why it is ignored. The answer is usually prioritization.

Google has a finite amount of resources. When you see “Crawled – currently not indexed,” Google is essentially saying: “We saw it, we read it, but we didn’t find it unique or valuable enough to store right now.” This often happens with programmatic content or thin pages that look too similar to existing URLs. Indexing is a choice, not a right.

Mobile content parity is non-negotiable

I cannot stress this enough: check your mobile view. Parity means the main content, headings, internal links, and structured data must be equivalent on mobile and desktop. A quick self-check: open your page on your phone. Can you see the same H1? Are the breadcrumbs there? Is the FAQ section present in the page’s HTML and merely collapsed, or is it loaded only after a click that Googlebot will not perform? If it’s missing on mobile, it’s missing from the index.

My consultant workflow: how I diagnose and fix SEO indexing issues (step-by-step)

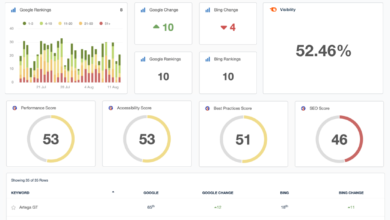

When I start an audit, I don’t guess. I follow a strict process to rule out variables one by one. I recommend you build a similar repeatable process—or use a comprehensive AI SEO tool and content intelligence stack like Kalema to monitor these signals automatically.

Here is the exact mapping I use to decipher what Google Search Console (GSC) is trying to tell me:

| GSC Status / Symptom | What it usually means | Top 3 Checks I Perform |
|---|---|---|
| Discovered – currently not indexed | Google knows the URL exists (via sitemap or link) but hasn’t crawled it yet to save resources. | 1. Check internal link count (is it orphaned?). 2. Check server performance (is it slow?). 3. Inspect URL to request a priority crawl. |
| Crawled – currently not indexed | Google read the page but decided not to index it. Usually a quality or duplication issue. | 1. Check for thin/duplicate content. 2. Verify unique intent vs. other pages. 3. Ensure the page isn’t “soft 404” (empty content). |
| Excluded by ‘noindex’ tag | You (or your dev) told Google not to index this. | 1. Check source code for `<meta name="robots">`. 2. Check HTTP headers (`X-Robots-Tag`). 3. Verify it wasn’t left over from staging. |
| Duplicate without user-selected canonical | Google thinks this page is a copy of another one and you didn’t specify a preference. | 1. Check if content is unique. 2. Add a self-referencing canonical tag. 3. Consolidate pages if they serve the same intent. |

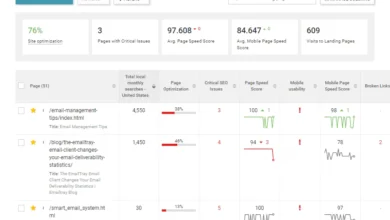

Step 1: Confirm the real symptom in Google Search Console

Start in the Indexing > Pages report. Don’t panic if you see gray bars—focus on the specific error reasons below the graph. Use the URL Inspection tool on a specific non-indexed URL to see the current status. Remember: “URL is not on Google” is the symptom; the “Why” is listed right below it.

Step 2: Rule out hard blockers fast (robots.txt, noindex, auth walls)

Before deep diving, check the “stupid” stuff. I once spent hours auditing content quality only to find a developer had left a `noindex` tag on the entire header during a migration. Run these yes/no checks:

- Robots.txt: Is the URL path blocked? (e.g., `Disallow: /blog/`)

- Meta Tags: View Page Source and search for “noindex”.

- X-Robots-Tag: Check the HTTP response headers (dev tools > Network tab).

- Login Walls: Can you view the page in an Incognito window? If it requires a login, Google can’t see it.

Note: Removing a `noindex` tag makes indexing possible again; it doesn’t force it instantly.
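
The meta tag and header checks above can be scripted when you have more than a handful of URLs. Here is a minimal Python sketch, assuming you have already fetched each page’s HTML and response headers with whatever HTTP client you prefer. The function name `has_noindex` is my own illustration, not part of any SEO tool.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects the content of <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr_map = {name.lower(): (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() in ("robots", "googlebot"):
            self.directives.append(attr_map.get("content", "").lower())


def has_noindex(html, headers):
    """Return True if the HTML or the response headers carry a noindex directive."""
    parser = RobotsMetaParser()
    parser.feed(html)
    parser.close()
    meta_hit = any("noindex" in directive for directive in parser.directives)

    # Header names are case-insensitive, so compare in lowercase.
    header_hit = any(
        name.lower() == "x-robots-tag" and "noindex" in value.lower()
        for name, value in headers.items()
    )
    return meta_hit or header_hit
```

Because it parses the tag rather than searching the raw text, a blog post that merely mentions the word “noindex” in its body will not trigger a false alarm.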

Step 3: Validate canonicals, duplicates, and parameters (the “indexing choice” layer)

This is where things get messy. If you have a canonical tag pointing to a different URL, you are explicitly telling Google, “Don’t index this, index that one instead.” Verify that your page has a self-referencing canonical tag if you want it indexed.

Also, watch out for URL parameters. If `example.com/shoe` and `example.com/shoe?color=black` show the exact same content, Google will likely index only one. This is a “choice,” not an error.
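
To make the canonical and parameter checks concrete, here is a rough Python sketch. It classifies a page’s canonical tag as self-referencing, pointing elsewhere, or missing, and strips parameters you have decided do not change the content. The helper names and the default `ignored` list (including `color`) are assumptions for illustration; on your site a color parameter may well produce unique content.

```python
from html.parser import HTMLParser
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


class CanonicalParser(HTMLParser):
    """Captures the href of the first <link rel="canonical"> tag."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attr_map = {name: (value or "") for name, value in attrs}
        if "canonical" in attr_map.get("rel", "").lower().split():
            self.canonical = attr_map.get("href") or None


def canonical_status(page_url, html):
    """Return 'self', 'points elsewhere', or 'missing' for the page's canonical tag."""
    parser = CanonicalParser()
    parser.feed(html)
    parser.close()
    if parser.canonical is None:
        return "missing"
    return "self" if parser.canonical == page_url else "points elsewhere"


def strip_params(url, ignored=("color", "utm_source", "utm_medium", "utm_campaign")):
    """Drop query parameters assumed not to change the page content."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key not in ignored
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))
```

If `strip_params` maps several crawled URLs to the same address, those are the variants Google is likely to fold together, so make sure the canonical on each one points to the version you want indexed.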

Step 4: Check discovery signals (internal links, orphaned pages, crawl paths)

An orphaned page (a page with zero internal links) is a dead end. Why should Google value a page you don’t even link to yourself? My rule of thumb: every indexable page should be reachable within 3 clicks from the homepage. Practical action: go to a relevant, high-authority page on your site and add a link to the non-indexed page. This gives Googlebot a crawl path to the page and signals that you consider it important.
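
If you can export your internal links from a crawler, the 3-click rule is easy to check with a breadth-first search. The sketch below is one way to do it in Python, assuming `links` is a dictionary mapping each page to the pages it links to. Note that it treats any page unreachable from the homepage as an orphan, which is slightly stricter than “zero inlinks.”

```python
from collections import deque


def click_depths(links, homepage):
    """Breadth-first search from the homepage; returns {page: clicks from homepage}."""
    depths = {homepage: 0}
    queue = deque([homepage])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def audit_crawl_paths(links, all_pages, homepage, max_depth=3):
    """Return (orphans, too_deep): unreachable pages and pages beyond max_depth clicks."""
    depths = click_depths(links, homepage)
    orphans = sorted(page for page in all_pages if page not in depths)
    too_deep = sorted(page for page, depth in depths.items() if depth > max_depth)
    return orphans, too_deep
```

Feed `all_pages` from your sitemap or CMS export rather than from the crawl itself, since a crawler that starts at the homepage will never find true orphans on its own.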

Step 5: Rendering & performance checks (especially for JavaScript sites)

If your content relies on JavaScript (React, Vue, Angular), Googlebot might be seeing a blank page. If content only appears after you click or scroll, treat it as a rendering risk. We’ll dive deeper into this in the technical section, but for now, ask yourself: is the main text in the initial HTML source?

Step 6: Request/notify and monitor (URL Inspection, sitemap, IndexNow)

Once you’ve applied fixes, use the Request Indexing button in GSC. Then, wait. I typically check back in 48–72 hours. For faster notifications on larger sites, I implement IndexNow, which alerts participating engines such as Bing. Just remember: these tools initiate crawling; they do not guarantee indexing.

Fix the fundamentals first: the indexing blockers I see most often (with exact fixes)

You can’t out-strategy a broken foundation. Below is my “surgery list” of the most frequent technical blockers I encounter, along with exactly how to fix them.

| The Issue | How to Detect | How to Fix | Verification Step |
|---|---|---|---|
| Rogue Noindex Tag | View Source > search “noindex” or use SEO extension. | Remove tag in CMS (Yoast/RankMath settings) or header.php. | GSC > URL Inspection > Live Test (ensure “Indexing allowed? Yes”). |
| Robots.txt Block | GSC > Settings > robots.txt > Open Report, or visit /robots.txt. | Remove the `Disallow` rule affecting that path. | GSC > URL Inspection > Live Test (ensure “Crawl allowed? Yes”). The standalone robots.txt Tester has been retired. |
| Redirect Chains | Screaming Frog or HTTP status checker (301 > 301 > 200). | Update links to point directly to the final destination (200). | Crawl the URL list again to ensure direct 200 codes. |
| Orphaned Pages | Crawl site; filter for URLs with “Inlinks = 0”. | Add links from related, high-traffic pages or menu/footer. | Re-crawl or check “Links” report in GSC later. |
| Sitemap Errors | GSC > Sitemaps (look for “Couldn’t fetch” or errors). | Remove 301s, 404s, and non-canonicals from XML. | Resubmit sitemap in GSC; status should be “Success”. |

Robots.txt and meta robots: blocking by accident

I recently audited a site where the developer blocked the entire `/wp-content/` folder in robots.txt to “save crawl budget.” The problem? That folder held all the images and scripts. Google rendered the site as a broken mess and de-indexed it. Safe approach: Only disallow admin paths or internal search results. Never block resources (CSS/JS) needed to render the page layout.
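
A quick way to catch this kind of mistake before it ships is to run a list of critical URLs, including CSS and JS assets, against the proposed robots.txt. Below is a small sketch using Python’s standard `urllib.robotparser`. One caveat: the standard-library parser uses simple prefix matching and does not replicate Google’s wildcard or longest-match rules, so treat it as a first-pass check rather than a faithful Googlebot simulation.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt text disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Illustrative rules resembling the audit described above.
ROBOTS_TXT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /wp-content/
"""

CRITICAL_URLS = [
    "https://example.com/blog/post",
    "https://example.com/wp-content/themes/site/style.css",
    "https://example.com/wp-admin/options.php",
]

blocked = blocked_urls(ROBOTS_TXT, CRITICAL_URLS)
```

Here the stylesheet under `/wp-content/` lands in `blocked`, which is exactly the kind of rendering resource you do not want to disallow.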

Canonicals & duplicates: when Google ignores your preference

Sometimes you set a canonical, and Google ignores it. GSC will report: “Duplicate, Google chose different canonical than user.” This happens when the signals are mixed. For example, if you canonicalize Page A to Page B, but you put Page A in your sitemap and link to it internally 100 times, Google receives contradictory hints about which URL matters. Fix: Align your signals. The canonical version should be the one in the sitemap and the one receiving internal links.
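
Mixed signals like these are easy to surface once you have crawl data. The sketch below assumes three inputs you would export from your crawler: the sitemap URL list, a map of each URL to its declared canonical, and internal inlink counts. The function name and input shapes are hypothetical.

```python
def conflicting_signals(sitemap_urls, canonical_of, inlink_counts):
    """List sitemap URLs whose canonical tag points at a different URL."""
    conflicts = []
    for url in sitemap_urls:
        # A URL with no declared canonical is treated as self-canonical here.
        target = canonical_of.get(url, url)
        if target != url:
            conflicts.append(
                {"url": url, "canonical": target, "inlinks": inlink_counts.get(url, 0)}
            )
    # Put the most heavily linked conflicts first: they send the loudest mixed signal.
    return sorted(conflicts, key=lambda row: row["inlinks"], reverse=True)
```

Every row it returns is a URL you are promoting (via the sitemap and internal links) while simultaneously telling Google to index something else.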

Redirect chains, broken links, and soft 404s

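Long redirect chains (A > B > C > D) waste crawl budget and dilute signals. Google’s documentation says Googlebot follows up to 10 hops, but every extra hop adds latency and another chance for the crawl to fail, so link straight to the final URL. Similarly, “Soft 404s” occur when a page says “Product Not Found” but sends a `200 OK` status code. Google treats these as error pages and keeps them out of the index. Ensure error pages actually send a `404` status code.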
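
Both problems can be flagged from crawl output. The following Python sketch assumes you have recorded each hop as a `(url, status)` pair and extracted the visible text of the final page. The phrase list and the 50-word threshold are arbitrary starting points of mine, not Google’s actual soft 404 logic.

```python
REDIRECT_CODES = (301, 302, 303, 307, 308)
NOT_FOUND_PHRASES = ("not found", "no longer available", "0 results")


def summarize_chain(hops):
    """hops: list of (url, status) tuples in the order they were followed."""
    redirect_count = sum(1 for _, status in hops if status in REDIRECT_CODES)
    final_url, final_status = hops[-1]
    return {
        "redirect_count": redirect_count,
        "final_url": final_url,
        "final_status": final_status,
        "is_chain": redirect_count > 1,  # more than one hop before the final page
    }


def looks_like_soft_404(status, body_text, min_words=50):
    """Heuristic: a 200 response whose text reads like an error page or is nearly empty."""
    if status != 200:
        return False
    text = body_text.lower()
    has_error_phrase = any(phrase in text for phrase in NOT_FOUND_PHRASES)
    return has_error_phrase or len(text.split()) < min_words
```

For every chain it flags, update internal links and sitemap entries to `final_url` so crawlers never need to follow the intermediate hops.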

Sitemaps: make them accurate, not just present

A sitemap isn’t a dump of every URL you’ve ever created. It should be a clean list of your best, indexable content. Sitemaps cluttered with 404s, redirects, and non-canonical URLs are a weaker discovery signal. If a large share of your sitemap’s URLs are errors (say, 45%), Google may lean on it far less for discovery. Keep it clean.
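
Measuring that error rate takes only a few lines. This sketch parses a standard XML sitemap with Python’s built-in `xml.etree.ElementTree` and compares it against a `statuses` dictionary of URL to HTTP status, which I am assuming you have collected separately with a crawler or status checker.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Extract every <loc> value from a <urlset> sitemap."""
    root = ET.fromstring(xml_text)
    return [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]


def sitemap_error_rate(xml_text, statuses):
    """Return (bad_urls, rate), where bad means anything other than a direct 200."""
    urls = sitemap_urls(xml_text)
    bad = [url for url in urls if statuses.get(url) != 200]
    rate = len(bad) / len(urls) if urls else 0.0
    return bad, rate
```

URLs missing from `statuses` count as bad on purpose: if you could not confirm a 200, the entry should not be in the sitemap yet.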

Solve complex technical indexing issues: JavaScript rendering, headless setups, and Core Web Vitals

If you are running a standard WordPress site, you can usually skip this section. But if you are on a headless CMS, React, Next.js, or a Single Page Application (SPA), this is likely where your problem lives.

JavaScript-heavy sites face a double hurdle: crawling and rendering. Google has to execute the JS to “see” the page. This takes time and computing power. Statistics indicate that client-side rendered (CSR) sites can lose 15–25% of their indexable content because the crawler times out or fails to render specific blocks.

| Rendering Approach | Indexing Risk | Best Fit For | Recommended Fix |
|---|---|---|---|
| Client-Side Rendering (CSR) | High. Google sees a blank page initially. | Logged-in dashboards, private apps. | Avoid for public SEO pages. Move to SSR or Prerendering. |
| Server-Side Rendering (SSR) | Low. Server sends full HTML. | Dynamic content (news, social feeds). | Ensure fast server response time (TTFB). |
| Static Site Generation (SSG) | Lowest. Pre-built HTML files. | Blogs, marketing sites, documentation. | Ideal for SEO. Use wherever possible. |
| Dynamic Rendering | Medium. Serves HTML to bots only. | Legacy JS apps. | Google now discourages this; prefer SSR/SSG/Hydration. |

How to tell if Google is missing your content (beginner-friendly checks)

You don’t need to be a developer to check this. Here is the script I give clients to hand off to their dev teams:

- View Source vs. Inspect Element: Right-click and “View Page Source.” Search for your main article text or important internal links. Are they there? If not, they are being injected by JS (Client-Side Rendering).

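- URL Inspection in GSC: Run the inspection, then open “View Crawled Page” > “HTML”. This shows the HTML Googlebot actually saw. If your content is missing here, it won’t be indexed. (Google retired the standalone Mobile-Friendly Test in late 2023, so URL Inspection is now the place to check.)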
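
The “View Source” check can also be automated for a batch of URLs. The sketch below takes the raw HTML as the server returned it (before any JavaScript runs) and reports which key phrases are absent from the visible text. It deliberately ignores text inside `<script>` and `<style>` blocks, because a headline that only exists inside a JS bundle is exactly the risk you are testing for. The function name is illustrative.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text nodes, skipping anything inside <script> or <style>."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def phrases_missing_from_source(raw_html, key_phrases):
    """Return the key phrases that do not appear in the unrendered HTML's visible text."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    # Collapse whitespace so line breaks in the markup do not break phrase matching.
    text = " ".join(" ".join(parser.chunks).split()).lower()
    return [phrase for phrase in key_phrases if phrase.lower() not in text]
```

Pass in the H1 and one or two sentences from the main body. An empty list means the content is in the initial HTML; anything returned is content that depends on rendering.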

Mobile parity (again), but technical: responsive vs dynamic serving vs separate m-dot

Technically, most sites use Responsive Web Design (same HTML, different CSS). This is safe. But if you use “Dynamic Serving” (server detects mobile user agent and sends different HTML), things break easily. I often see developers hide heavy footer links on mobile to improve speed. The result? Googlebot Mobile stops crawling those links, and the destination pages get orphaned and de-indexed.
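
If you suspect a dynamic-serving setup is dropping links, fetch the same URL twice, once with a desktop user agent and once with a mobile one, and diff the links. A minimal sketch of the comparison step follows. It assumes you already have both HTML responses as strings, and it only compares `href` values, not headings or structured data.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects the href of every <a> tag."""

    def __init__(self):
        super().__init__()
        self.hrefs = set()

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.add(href)


def collect_links(html):
    parser = LinkCollector()
    parser.feed(html)
    parser.close()
    return parser.hrefs


def links_missing_on_mobile(desktop_html, mobile_html):
    """Return links present in the desktop HTML but absent from the mobile HTML."""
    return sorted(collect_links(desktop_html) - collect_links(mobile_html))
```

Any URL in the result is a page that loses an internal link when Google crawls with its smartphone user agent.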

Performance & indexing reliability: where Core Web Vitals can indirectly hurt indexing

It’s not just about ranking anymore. A terrible Interaction to Next Paint (INP) often means your main thread is blocked by scripts. Googlebot does not click or type, so INP itself is not what it experiences, but the same heavy scripts make the page slower and more expensive for its renderer to process. Optimizing TBT (Total Blocking Time) by reducing JS payloads isn’t just UX; it’s crawl efficiency insurance.

Accelerate indexing (ethically): internal links, IndexNow, structured data, and content signals

Once you’ve fixed the errors, how do you speed things up? When I need a page indexed faster, I don’t wait for Google to stumble upon it. I create demand signals.

One of the most powerful tools available today is using an AI article generator that understands semantic structure, ensuring your content is born with the right schema and formatting. But beyond content creation, here is how to push the accelerator:

- Internal Linking Pathways: Add links to the new page from your “Power Pages” (homepage, high-traffic blog posts). This is the strongest signal you control.

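- External Signals: Share the URL on social media or email newsletters. Google doesn’t document social shares or traffic as crawl signals, but real visits and the links they tend to generate give crawlers more ways to discover the URL.

- IndexNow: This protocol allows you to ping participating search engines (Bing and Yandex, among others) immediately when a URL is added or updated.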

IndexNow in 2025: how I use it alongside sitemaps (not instead of them)

Think of your XML sitemap as the “annual inventory list” and IndexNow as the “daily shipment alert.” As of this writing, IndexNow is supported by Bing, Yandex, and a few other engines; Google has said it is testing the protocol but has not adopted it, so keep your sitemap and GSC workflow for Google. If you are on WordPress, use a plugin that supports IndexNow. It automatically pings participating search engines the moment you hit update. It doesn’t guarantee indexing, but it can cut discovery time on those engines from days to minutes.
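
If you are not on WordPress, an IndexNow submission is just a JSON POST. The sketch below follows the request shape described in the public IndexNow protocol (`host`, `key`, `urlList`, optional `keyLocation`). The key value and the helper names are placeholders of mine, and you still need to host the key file on your domain as the protocol requires.

```python
import json
import urllib.request
from urllib.parse import urlsplit

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_payload(host, key, urls, key_location=None):
    """Build the JSON body, keeping only URLs that belong to the given host."""
    payload = {
        "host": host,
        "key": key,
        "urlList": [url for url in urls if urlsplit(url).netloc == host],
    }
    if key_location:
        payload["keyLocation"] = key_location
    return payload


def submit(payload, endpoint=INDEXNOW_ENDPOINT):
    """POST the payload and return the HTTP status code (performs a network call)."""
    request = urllib.request.Request(
        endpoint,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status


# Placeholder key for illustration only; generate your own.
example_payload = build_indexnow_payload(
    "example.com",
    "0123456789abcdef",
    ["https://example.com/new-post", "https://other.com/ignored"],
)
```

Filtering by host up front matters because a submission may only contain URLs for the host that owns the key.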

Structured data for clarity: what to add first (and where it backfires)

Structured data (Schema.org) helps AI crawlers understand what your page is. A product page with `Product` schema is easier to categorize than a blob of HTML. I recommend starting with `BreadcrumbList` (helps crawl paths) and `Article` or `Product` schema depending on your page type. Warning: Don’t spam `FAQPage` schema on every page if it’s not truly an FAQ; markup that doesn’t match the visible content can trigger a structured data manual action, and Google now shows FAQ rich results only for a narrow set of sites anyway.
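
As a starting point for `BreadcrumbList`, here is a small Python sketch that builds the JSON-LD from a list of `(name, url)` pairs and wraps it in a script tag for your template. The property names come from Schema.org; the function names and the example trail are my own.

```python
import json


def breadcrumb_jsonld(trail):
    """trail: list of (name, url) pairs from the homepage down to the current page."""
    return {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": position, "name": name, "item": url}
            for position, (name, url) in enumerate(trail, start=1)
        ],
    }


def as_script_tag(data):
    """Serialize to a JSON-LD script tag, escaping '</' so it cannot close the tag early."""
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">' + body + "</script>"


markup = as_script_tag(
    breadcrumb_jsonld(
        [
            ("Home", "https://example.com/"),
            ("Blog", "https://example.com/blog/"),
            ("Fix indexing issues", "https://example.com/blog/fix-indexing/"),
        ]
    )
)
```

Generate the trail from the same data that renders your visible breadcrumbs, so the markup and the page never drift apart.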

Common mistakes, realistic timelines, and FAQs (what I tell clients when pages won’t index)

Even with perfect technical SEO, things take time. I typically advise clients to wait 4–12 weeks for a large batch of programmatic pages to fully settle in the index. Prioritization takes time.

If you are managing a large site, consider using an automated blog generator that respects these technical constraints, publishing at a controlled cadence to avoid “index bloat” (too many low-quality pages published too fast).

5 common indexing mistakes (and my fixes)

- Mistake: Relying solely on URL Inspection for 1000s of pages.

Fix: Use XML Sitemaps and IndexNow for scale.

- Mistake: Leaving “Disallow: /” in robots.txt after launch.

Fix: Always double-check robots.txt immediately after go-live.

- Mistake: Canonicalizing to the homepage to avoid duplicate content.

Fix: Never do this. Google tends to ignore a canonical that points to unrelated content, or to treat the page like a soft 404. Canonicalize to the true source, or return a real 404 if the page has no value.

- Mistake: Ignoring “Crawled – currently not indexed” for months.

Fix: Rewrite the content, merge it, or delete it. It’s a quality signal.

- Mistake: Blocking CSS/JS files.

Fix: Allow Googlebot to access all assets to render the page correctly.

FAQ: Why is “Crawled, currently not indexed” still happening after I fixed noindex?

I get this question weekly. Think of it like a restaurant queue. You were kicked out of line (noindex). You fixed the issue and got back in line. But now you are at the back. Google has to re-evaluate the page against everything else it crawled today. If the content is thin or duplicative, it might stay in this status. Plan: Add 2–3 strong internal links and unique value, then wait 2 weeks.

FAQ: How does IndexNow help fix indexing delays?

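It solves the “discovery” lag, at least on engines that support it, such as Bing and Yandex. Without it, a crawler might not visit your site for days. With IndexNow, participating engines know the URL changed almost instantly. Google does not currently consume IndexNow pings, so for Google the equivalent levers are sitemaps, internal links, and Request Indexing. Either way, it does not solve the “quality” evaluation. It gets the librarian to pick up the book faster, but it doesn’t force them to keep it.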

FAQ: Why are JavaScript-heavy sites problematic for indexing?

Imagine if every time you opened a book, you had to assemble the pages yourself before reading. That is Client-Side Rendering. If Google’s rendering queue is backed up, or if your JS throws an error, the pages remain blank. SSR (Server-Side Rendering) hands Google the fully assembled book.

FAQ: How critical is mobile content parity?

It is the difference between ranking and invisibility. If your mobile page lacks the H1 or the main body text found on desktop, Google simply won’t index that content. Audit your key revenue pages on your phone today.

Summary: my 3-bullet recap + the next actions I’d take this week

We’ve covered a lot of ground, but you don’t need to do everything at once. If you are feeling overwhelmed, here is the simplified game plan:

- Diagnosis is 80% of the battle: Use my table to map GSC status to the real problem (Quality vs. Technical Block vs. Discovery).

- Mobile Parity & JS are the hidden killers: Ensure what you see on your phone and in the HTML source is what Googlebot sees.

- Indexing is earned, not given: Build demand signals (links) to prove your page is worth the storage space.

Your next actions this week:

1. Open GSC and export the “Crawled – currently not indexed” report.

2. Run the “Hard Blocker” checklist (robots.txt, noindex) on those URLs.

3. Pick your top 5 most important missing pages and add 3 internal links to each from high-traffic posts.

4. Submit them via URL Inspection.

Start there. Document the dates. Then watch the graphs move.