Coding for SEOs: SEO migration automation for automated file checks in large-scale content migrations

Introduction: Why I rely on SEO migration automation in large content moves

I still remember the anxiety of my first massive migration: 50,000 URLs, three weeks to launch, and five different stakeholder teams pushing for a “go” decision. We were moving from a legacy CMS to a headless architecture, and the URL structure was changing entirely. I tried to manually spot-check the top 500 pages, but on launch day, we realized a template logic error had canonicalized 15,000 product pages to the homepage. It took days to recover.

That experience changed how I work. In large-scale moves, manual QA isn’t just slow; it is a liability. You cannot eyeball 50,000 rows of data effectively.

Today, I rely on SEO migration automation. This isn’t about replacing strategy with robots; it’s about running scripted file checks to catch parity diffs, one-hop redirects, and metadata regressions before they hit production. Whether you are managing a move on WordPress, Shopify, or a custom Next.js stack, you need a workflow that scales. In this guide, I’ll walk you through the exact QA gates, file-level checks, and automated thresholds I use to launch confidently, without the 3 a.m. panic.

What “SEO migration automation” actually means (and what it doesn’t)

To be clear, SEO migration automation acts like a spell-checker for your site architecture. It is a set of repeatable checks, run by scripts or tools, that identify regressions before and after launch. It does not replace the human strategy of deciding why a URL should redirect, but it reliably verifies that the redirect actually happened.

When you are dealing with common migration types—URL structure changes, platform replatforming, or infrastructure updates—the risks are predictable. You risk losing indexability, suffering from canonical drift, breaking internal links, or accidentally blocking search engines via robots.txt. Automation pays off most when:

- You are migrating 5,000+ URLs.

- You have multiple page templates (e.g., product, category, blog, landing pages).

- Your development team pushes frequent staging releases.

- You need to regenerate or refresh content at scale using tools like an SEO content generator to fill gaps found during the audit.

Common migration types and the SEO failures they trigger

Different moves break different things. Here is what I check first based on the project type:

- Site Redesign: Often triggers heading (H1-H6) drift and metadata loss as new components replace old hard-coded HTML.

- Platform Migration: The biggest risk here is URL pattern changes and parameterized URL handling, leading to redirect loops or chains.

- Infrastructure/Domain Move: Watch for server-side performance issues (Time to First Byte) and for domain-level redirects that fail to resolve to HTTPS correctly.

The minimum toolset I use (beginner-friendly)

You do not need a computer science degree to automate this. If you don’t code daily, start with copy/paste scripts and small wins. Here is my neutral, practical stack:

- Crawler: Screaming Frog or Sitebulb (for generating the raw CSV data).

- Data Processing: Excel (for small sites) or Python/Pandas (for Screaming Frog export manipulation on large sites).

- Monitoring: Google Search Console API or reliable third-party monitoring tools.

- Performance: Lighthouse CI or PageSpeed Insights.

The step-by-step workflow I use for SEO migration automation (with QA gates)

If you rely on ad-hoc checks, you will miss things. I structure every migration around five distinct “Gates.” If a gate fails, we do not launch. This binary approach saves hours of subjective debate with developers or product managers.

My SEO migration automation workflow looks like this:

- Gate 0: Inventory & Benchmarks (The “Before” picture)

- Gate 1: Mapping Strategy (The Plan)

- Gate 2: Staging Parity (The Test)

- Gate 3: Launch Validation (The Button Push)

- Gate 4: Post-Launch Monitoring (The Safety Net)

I used to skip the benchmarking phase, thinking I knew the site well enough. Then, after a launch, a stakeholder claimed the site “felt slower.” Because I didn’t have the data to prove the Core Web Vitals were identical, we spent a week chasing ghost issues. Never skip Gate 0.

Gate 0: Inventory and benchmarks (what I export before touching anything)

You don’t need perfection, just a baseline you trust. Before a single line of code changes, I run a full crawl of the legacy site and export a content inventory containing these essential columns:

- URL

- Status Code

- Page Title & H1

- Meta Description

- Canonical Link Element

- Meta Robots (noindex/nofollow status)

- Word Count

- Schema Types (e.g., Article, Product)

- Core Web Vitals baseline metrics (LCP, CLS, INP)

Gate 1: URL mapping strategy (rules first, then automation)

The goal is to map old URLs to new URLs without losing link equity or user intent. I always start with high-level rules (Regex) because they cover the most ground with the least effort. For example, if /blog/seo-tips is becoming /guides/seo-tips, a simple regex rule handles the entire subdirectory.
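Here is a minimal sketch of what a rule like that could look like in Python. The `RULES` list and the `map_url` helper are illustrative names I made up for this example, and the "first match wins" ordering is an assumption about how you want rules to behave.

```python
import re

# Hypothetical rule list: (compiled pattern, replacement). First match wins.
RULES = [
    (re.compile(r"^/blog/(.+)$"), r"/guides/\1"),
]


def map_url(old_path, rules=RULES):
    """Return the new path for old_path, or None if no rule matches."""
    for pattern, replacement in rules:
        if pattern.match(old_path):
            return pattern.sub(replacement, old_path)
    return None


legacy_paths = ["/blog/seo-tips", "/blog/2021/link-building", "/about"]
mapping = {path: map_url(path) for path in legacy_paths}

# Anything left unmapped goes to the one-to-one exception list.
unmapped = [path for path, target in mapping.items() if target is None]
```

Keeping the unmapped list separate is the point: the rules cover the bulk, and the leftovers tell you exactly where manual mapping is still needed.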

Only after applying rules do I move to one-to-one mappings for individual exceptions. This is where automation helps verify that every legacy URL has a destination, and that pages are not redirecting to a generic category instead of a specific article, a pattern that search engines can treat as a “soft 404.”

Gate 2: Staging parity checks (crawl old vs new and diff)

This is the dress rehearsal. I ask dev teams for a staging environment URL and basic auth credentials early—otherwise, you’ll be debugging 401 Unauthorized errors at midnight. I run a crawl of the staging site and compare it against my Gate 0 benchmark. This is the crawl parity check.

If 10% of my pages are suddenly non-indexable on staging, or if the average word count has dropped by 500 words across my blog templates, I know we have a template regression. We fix it here, not in production.
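A rough sketch of that check, assuming both crawls are already loaded as lists of dictionaries with `status_code`, `meta_robots`, and `word_count` keys (string values, as they come out of a CSV). The function names and default limits are mine; the 10% and 500-word figures simply mirror the examples above.

```python
def indexable_share(rows):
    """Fraction of rows that return 200 and carry no noindex directive."""
    if not rows:
        return 0.0
    indexable = sum(
        1
        for row in rows
        if row["status_code"] == "200" and "noindex" not in row["meta_robots"].lower()
    )
    return indexable / len(rows)


def average_word_count(rows):
    """Mean word count across rows that have a value."""
    counts = [int(row["word_count"]) for row in rows if row["word_count"]]
    return sum(counts) / len(counts) if counts else 0.0


def template_regressions(old_rows, new_rows, max_index_drop=0.10, max_word_drop=500):
    """Compare one template's old and staging rows; return a list of findings."""
    findings = []
    index_drop = indexable_share(old_rows) - indexable_share(new_rows)
    if index_drop >= max_index_drop:
        findings.append(f"indexable share fell by {index_drop:.0%}")
    word_drop = average_word_count(old_rows) - average_word_count(new_rows)
    if word_drop >= max_word_drop:
        findings.append(f"average word count fell by {word_drop:.0f}")
    return findings
```

I would run this once per template (product, category, blog) rather than across the whole site, since a regression in one template is easy to hide inside a site-wide average.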

Gate 3: Launch day validation (fast checks that catch disasters)

When the switch flips, you don’t have time for a full crawl. You need a launch day SEO checklist or “smoke test” that takes 15 minutes:

- Robots.txt: Verify the production `robots.txt` isn’t blocking the site.

- Meta Robots: Check 10 random pages to ensure `noindex` tags from staging were removed.

- Canonicals: Ensure canonicals point to the new production domain, not the staging domain.

- Redirects: Spot-check top 20 traffic-driving URLs manually.

- Analytics: Verify separately that GA4/GTM tags are firing.
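The first three items on that list are easy to script. The sketch below works on text you have already fetched (the `robots.txt` body and a page's HTML), so it makes no assumptions about your HTTP client. `robots_allows`, `HeadSignals`, and `page_problems` are hypothetical helper names, and `www.example.com` is a placeholder host.

```python
from html.parser import HTMLParser
from urllib.parse import urlsplit
from urllib.robotparser import RobotFileParser


def robots_allows(robots_txt, url, user_agent="Googlebot"):
    """Parse robots.txt text and report whether user_agent may fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


class HeadSignals(HTMLParser):
    """Collect the meta robots content and canonical href from a page."""

    def __init__(self):
        super().__init__()
        self.meta_robots = ""
        self.canonical = ""

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            self.meta_robots = (attrs.get("content") or "").lower()
        if tag == "link" and (attrs.get("rel") or "").lower() == "canonical":
            self.canonical = attrs.get("href") or ""


def page_problems(html, production_host):
    """Return launch-day problems found in one page's HTML."""
    signals = HeadSignals()
    signals.feed(html)
    problems = []
    if "noindex" in signals.meta_robots:
        problems.append("noindex still present")
    if signals.canonical and urlsplit(signals.canonical).hostname != production_host:
        problems.append("canonical points off the production host")
    return problems
```

In practice I would loop `page_problems` over the 10 random pages and fail the gate on the first non-empty result.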

Gate 4: Post-launch monitoring (first 48 hours and first 30 days)

Don’t panic if traffic wobbles. It is common to see volatility as Google recrawls the site. I monitor Search Console coverage reports and server logs intensely for 48 hours. Specifically, I look for spikes in 404s and 5xx errors. A reported case study noted a traffic dip for 48 hours followed by a 12% increase after fast issue resolution, which aligns with my experience: speed of correction matters more than the initial error.

Coding for SEOs: automating file checks (redirects, metadata, schema) using crawl exports

Top-ranking articles often tell you what to check but skip how to automate it. If you have 20,000 pages, you can’t compare Excel sheets manually. You need a scripted comparison.

When automated file checks reveal that huge sections of content are missing metadata or introductions, teams sometimes use an AI article generator to draft structured updates at scale—but remember, automation is for detection; editorial review is for correction. Here is how I structure my diffing workflow using Python SEO scripts.

The CSV columns I standardize before diffing

I learned this the hard way: if your column names don’t match, your script fails. Before I run any comparison, I standardize my crawl CSV columns. I ensure both my “Old Site” export and “Staging Site” export have these exact headers:

- `url` (normalized: lowercase, no trailing slash differences)

- `status_code`

- `title`

- `meta_description`

- `h1_1`

- `canonical`

- `meta_robots`

- `word_count`

- `schema_types`
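A small sketch of that standardization step using only the standard library. The raw header names on the left side of `HEADER_MAP` are assumptions about what a crawler export might contain, so check them against your own file. The script fails loudly when a column is missing, which is the behavior I want.

```python
import csv
import io
from urllib.parse import urlsplit, urlunsplit

# Assumed raw export header -> standard header. Adjust to your crawler.
HEADER_MAP = {
    "Address": "url",
    "Status Code": "status_code",
    "Title 1": "title",
    "Meta Description 1": "meta_description",
    "H1-1": "h1_1",
    "Canonical Link Element 1": "canonical",
    "Meta Robots 1": "meta_robots",
    "Word Count": "word_count",
    "Schema Types": "schema_types",
}


def normalize_url(url):
    """Lowercase the URL and drop a trailing slash so old and new rows join."""
    parts = urlsplit(url.strip())
    path = parts.path.rstrip("/") or "/"
    return urlunsplit(
        (parts.scheme.lower(), parts.netloc.lower(), path.lower(), parts.query, "")
    )


def standardize(csv_text):
    """Rename columns to the standard headers and normalize the url column."""
    reader = csv.DictReader(io.StringIO(csv_text))
    missing = sorted(set(HEADER_MAP) - set(reader.fieldnames or []))
    if missing:
        raise ValueError(f"export is missing columns: {missing}")
    rows = []
    for raw in reader:
        row = {HEADER_MAP[key]: (raw[key] or "").strip() for key in HEADER_MAP}
        row["url"] = normalize_url(row["url"])
        rows.append(row)
    return rows
```

Lowercasing the path is only safe as a join key. Keep the original URL in a separate column if your server treats paths as case-sensitive.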

A simple parity-diff script flow (beginner-friendly)

You don’t need to be a developer to understand this logic. The script performs a “VLOOKUP” on steroids. Here is the pseudo-code flow for a parity diff:

- Load Data: Read `old_crawl.csv` and `new_crawl.csv` into dataframes (tables).

- Join: Match rows based on the `url` column (or the `mapped_url` if URL structures changed).

- Compare: Loop through key columns. Example logic: If `old_title != new_title`, flag as "Title Mismatch".

- Score Severity: Assign a priority.

  - P0 (Critical): Page was 200 OK, now is 404 or Noindex.

  - P1 (High): Title tag missing or H1 completely changed.

  - P2 (Medium): Meta description changed or word count dropped by <10%.

- Output: Save to `migration_issues.csv`.

This won’t catch everything (like subtle tone shifts), but it catches the big SEO regression detection items fast.
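The flow above can be sketched in plain Python. This is a stdlib-only sketch rather than a pandas script, and it assumes both crawls are already lists of dictionaries with the standardized headers. A few choices are my own assumptions, flagged in the comments: a URL missing from the new crawl counts as P0, a changed (but present) title counts as P2, and any word-count drop is reported as P2 without a percentage cutoff.

```python
import csv


def is_indexable(row):
    """True if the page returns 200 and carries no noindex directive."""
    return row["status_code"] == "200" and "noindex" not in row["meta_robots"].lower()


def diff_row(old, new):
    """Return (severity, issue) pairs for one matched URL."""
    issues = []
    if is_indexable(old) and not is_indexable(new):
        issues.append(("P0", "Lost Indexability"))
    if old["title"] and not new["title"]:
        issues.append(("P1", "Title Missing"))
    elif old["title"] != new["title"]:
        issues.append(("P2", "Title Mismatch"))  # severity is an assumption
    if old["h1_1"] != new["h1_1"]:
        issues.append(("P1", "H1 Changed"))
    if old["meta_description"] != new["meta_description"]:
        issues.append(("P2", "Meta Description Changed"))
    # Assumption: any drop is reported; add a cutoff if small drops are noise.
    if int(new["word_count"] or 0) < int(old["word_count"] or 0):
        issues.append(("P2", "Word Count Dropped"))
    return issues


def diff_crawls(old_rows, new_rows, url_map=None):
    """Join old and new rows on url (or url_map[old_url]) and collect issues."""
    url_map = url_map or {}
    new_by_url = {row["url"]: row for row in new_rows}
    report = []
    for old in old_rows:
        new = new_by_url.get(url_map.get(old["url"], old["url"]))
        if new is None:
            # Assumption: a page with no counterpart is treated as critical.
            report.append({"url": old["url"], "severity": "P0", "issue": "Missing from new crawl"})
            continue
        for severity, issue in diff_row(old, new):
            report.append({"url": old["url"], "severity": severity, "issue": issue})
    return sorted(report, key=lambda item: item["severity"])


def write_report(report, path="migration_issues.csv"):
    """Save the issue list so it can be shared with developers."""
    with open(path, "w", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=["url", "severity", "issue"])
        writer.writeheader()
        writer.writerows(report)
```

Sorting by severity puts the P0 rows at the top of the file, which is usually the only part stakeholders read.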

Schema and structured data presence checks (fast wins)

Automation struggles to validate the accuracy of schema (is this the right price?), but it is excellent at checking presence. I check for schema parity: if the old product page had Product, BreadcrumbList, and FAQPage schema, does the new one have them too?

I once saw a migration where the new theme stripped out BreadcrumbList entirely. We lost rich snippets overnight. Now, checking that the expected JSON-LD types still exist is a standard line item in my script.
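A presence check like that might look roughly as follows. It only reads JSON-LD `<script>` blocks (not Microdata or RDFa), and it treats malformed JSON as "not present". `JsonLdCollector`, `schema_types`, and `lost_schema` are names I chose for this sketch.

```python
import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collect the raw text of every application/ld+json script block."""

    def __init__(self):
        super().__init__()
        self._inside = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._inside = True
            self._buffer = []

    def handle_data(self, data):
        if self._inside:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._inside:
            self.blocks.append("".join(self._buffer))
            self._inside = False


def schema_types(html):
    """Return the set of @type values declared in a page's JSON-LD."""
    collector = JsonLdCollector()
    collector.feed(html)
    found = set()
    for block in collector.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # malformed JSON-LD counts as not present
        for node in data if isinstance(data, list) else [data]:
            if not isinstance(node, dict):
                continue
            for item in node.get("@graph", [node]):
                declared = (item.get("@type") or []) if isinstance(item, dict) else []
                found.update([declared] if isinstance(declared, str) else declared)
    return found


def lost_schema(old_html, new_html):
    """Schema types the old page declared that the new page no longer has."""
    return sorted(schema_types(old_html) - schema_types(new_html))
```

This only tells you a type disappeared. Whether the values inside it are correct still needs a human or a dedicated validator.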

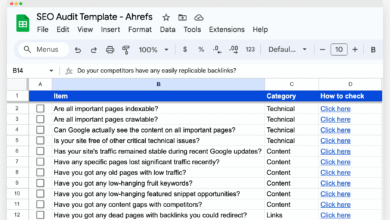

Table: Field-by-field parity checks

| Element | Why it matters | How to Check | Common Failure |
|---|---|---|---|
| Title Tag | Rankings & CTR | String match (Old vs New) | CMS default overrides custom titles (e.g., “Home – BrandName”) |
| Meta Robots | Indexability | Check for “noindex” | Staging settings carried to Prod |
| H1 Tag | Relevance signal | Check existence & match | Empty H1s or used for styling (logo in H1) |
| Canonical | Duplicate content | Check target URL | Self-referencing canonicals pointing to HTTP or staging domain |
| Status Code | Access | 200 OK verification | 500 errors on specific templates |

Redirect mapping at scale: my approach to SEO migration automation without losing intent

When you have 100,000 URLs, Excel becomes painfully slow and manual mapping is impossible. This is where I use a hybrid approach to redirect mapping.

I combine three methods: Rules (Regex), Similarity Matching, and AI-Assisted verification. Emerging tools and scripts now allow for AI-assisted URL mapping, often utilizing algorithms like TF-IDF URL matching (Term Frequency-Inverse Document Frequency) to analyze the content of an old page and find the closest match on the new site. While some vendors claim their tools process tens of thousands of URLs in minutes, I always treat these results as suggestions, not commands.
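To make the TF-IDF idea concrete, here is a small pure-Python sketch. It is not how any particular vendor tool works. It uses a smoothed IDF variant and cosine similarity, compares every old page against every new page (fine for a demo, too slow for very large sites), and the page texts are invented placeholders.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphanumeric terms."""
    return re.findall(r"[a-z0-9]+", text.lower())


def tfidf_vectors(docs):
    """docs: {key: text}. Returns {key: {term: weight}} with smoothed IDF."""
    tokens = {key: tokenize(text) for key, text in docs.items()}
    doc_freq = Counter(term for terms in tokens.values() for term in set(terms))
    total = len(docs)
    vectors = {}
    for key, terms in tokens.items():
        counts = Counter(terms)
        vectors[key] = {
            term: (count / len(terms)) * (math.log((1 + total) / (1 + doc_freq[term])) + 1)
            for term, count in counts.items()
        }
    return vectors


def cosine(a, b):
    """Cosine similarity between two sparse term-weight dictionaries."""
    dot = sum(weight * b.get(term, 0.0) for term, weight in a.items())
    norm = math.sqrt(sum(w * w for w in a.values())) * math.sqrt(sum(w * w for w in b.values()))
    return dot / norm if norm else 0.0


def best_matches(old_pages, new_pages):
    """Return {old_url: (closest_new_url, similarity)} based on page text."""
    combined = {("old", url): text for url, text in old_pages.items()}
    combined.update({("new", url): text for url, text in new_pages.items()})
    vectors = tfidf_vectors(combined)  # one shared vocabulary for both sites
    matches = {}
    for old_url in old_pages:
        scored = [
            (cosine(vectors[("old", old_url)], vectors[("new", new_url)]), new_url)
            for new_url in new_pages
        ]
        score, new_url = max(scored)
        matches[old_url] = (new_url, round(score, 2))
    return matches
```

The similarity score that comes out of this is exactly the kind of number I feed into a confidence threshold rather than trusting it directly.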

How I set confidence thresholds (and what I do with low-confidence matches)

I assign a confidence scoring metric to every automated match. This helps me decide where to spend my human energy:

- Score ≥ 90%: Auto-approve. (Usually exact slug matches or clear regex rules).

- Score 60-89%: Review Queue. (The AI thinks these match, but I need a human to scan them).

- Score < 60%: Reject/Manual Map. (These are usually pages that need to redirect to a parent category because a direct equivalent doesn’t exist).
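A sketch of that triage step, assuming each candidate is an (old URL, suggested new URL) pair. I use `difflib.SequenceMatcher` from the standard library as a stand-in scorer here. It is not Levenshtein distance or TF-IDF, and you would swap in whatever score your matching tool produces. The bucket names are my own labels.

```python
from difflib import SequenceMatcher


def similarity(old_url, new_url):
    """Stand-in scorer: 0-100 string similarity between two URL paths."""
    return SequenceMatcher(None, old_url.lower(), new_url.lower()).ratio() * 100


def bucket(score):
    """Map a 0-100 confidence score to a review queue."""
    if score >= 90:
        return "auto-approve"
    if score >= 60:
        return "review"
    return "manual"


def triage(candidates):
    """Split (old_url, new_url) pairs into the three queues."""
    queues = {"auto-approve": [], "review": [], "manual": []}
    for old_url, new_url in candidates:
        score = similarity(old_url, new_url)
        queues[bucket(score)].append((old_url, new_url, round(score)))
    return queues
```

The size of the review queue is a useful planning number: it tells you how many human hours the mapping still needs before launch.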

Redirect QA checklist (chains, loops, and one-hop rules)

Once the map is generated, don’t just upload it. Test it. I run the list through a crawler to check for the “One-Hop” rule. Ideally, A redirects to B. Not A → B → C.

Redirect QA Checklist:

- Check Chains: Ensure zero redirect chains of 2 or more hops (A → B → C).

- Check Loops: Confirm no redirect loops (A → B → A).

- Check 404s: Ensure the final destination is a 200 OK.

- Check Query Strings: Ensure valuable UTMs or filter parameters aren’t being stripped (unless intentional).

- Check Protocols: Ensure HTTP URLs redirect straight to HTTPS and no mixed-content issues remain.
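The chain, loop, and final-status checks can be run against the map itself before anything is uploaded. In this sketch, `redirects` is a plain dictionary of source to target and `final_status` is a dictionary of URL to status code. Both are hypothetical inputs that you would build from your mapping file and a list-mode crawl.

```python
def trace(start, redirects, final_status, max_hops=10):
    """Follow start through the redirect map.

    Returns (hops, last_url, verdict) where verdict is one of
    "ok", "chain", "loop", "too many hops", or "final destination is not 200".
    """
    seen = [start]
    current = start
    while current in redirects:
        current = redirects[current]
        if current in seen:
            return len(seen), current, "loop"
        seen.append(current)
        if len(seen) - 1 > max_hops:
            return len(seen) - 1, current, "too many hops"
    hops = len(seen) - 1
    if final_status.get(current) != 200:
        return hops, current, "final destination is not 200"
    verdict = "ok" if hops <= 1 else "chain"
    return hops, current, verdict


def audit(redirects, final_status):
    """Return every source URL whose redirect path is not a clean one-hop."""
    problems = {}
    for source in redirects:
        hops, last_url, verdict = trace(source, redirects, final_status)
        if verdict != "ok":
            problems[source] = (hops, last_url, verdict)
    return problems
```

Running `audit` on the map catches rule conflicts early, before a crawler ever has to discover them on a live server.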

Table: Redirect mapping methods comparison

| Method | Best For | Speed | Accuracy Risk | Review Needed |
|---|---|---|---|---|
| Manual Mapping | Small sites (<500 URLs) or high-value pages | Slow | Low (Human intent) | None |
| Rules / Regex | Consistent directory patterns (e.g., /blog/ to /news/) | Instant | Low (if rules are tested) | Low |
| Similarity (Levenshtein/TF-IDF) | Large sites with messy URLs | Fast | Medium (False positives) | High |
| Hybrid AI-Assisted | Enterprise migrations (10k+ URLs) | Fast | Low-Medium | Medium (Low confidence items) |

QA thresholds I use before launch (parity, performance, and indexing)

“Is it safe to launch?” is the hardest question to answer. I use data, not gut feelings. I set explicit migration QA thresholds that we must meet. These aren’t universal laws, but they are strong guidelines I’ve refined over years of projects. Industry data suggests enterprise migrations (100k+ URLs) often take 12–20 weeks, so rushing QA is rarely an option.

I focus on my “Priority URLs”—usually the top traffic-driving and converting pages that account for 80% of value.

Table: Pre-launch QA thresholds

| Metric | Target Threshold | How to Measure | Status |
|---|---|---|---|
| Template Parity | ≥95% match | Diff Crawl (Meta/H1/Schema) | Go / No-Go |
| Priority Redirects | ≥98% One-Hop 200 OK | List Mode Crawl | Critical |
| Metadata Presence | ≥90% Parity | Custom Extraction | Needs Attention |
| Core Web Vitals | No regression >10% | Lighthouse / CrUX | Performance |
| Indexability | 100% of Priority URLs | Inspect URL / Crawl | Critical |
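Thresholds like these are easy to turn into a binary go/no-go check. The sketch below assumes you have already computed each metric as a fraction between 0 and 1, and that Core Web Vitals are passed in as before/after values where higher means worse (true for LCP, CLS, and INP). The dictionary keys and the `evaluate` function are illustrative names.

```python
# Minimum acceptable values, mirroring the thresholds in the table.
THRESHOLDS = {
    "template_parity": 0.95,
    "priority_one_hop_redirects": 0.98,
    "metadata_presence": 0.90,
    "priority_indexability": 1.00,
}
MAX_CWV_REGRESSION = 0.10  # no metric may get more than 10% worse


def evaluate(measured, cwv_before, cwv_after):
    """Return ("GO" or "NO-GO", list of failed checks)."""
    failures = []
    for metric, minimum in THRESHOLDS.items():
        if measured[metric] < minimum:
            failures.append(f"{metric}: {measured[metric]:.1%} is below {minimum:.0%}")
    for name, before in cwv_before.items():
        if not before:
            continue  # skip metrics with a zero baseline to avoid dividing by zero
        change = (cwv_after[name] - before) / before
        if change > MAX_CWV_REGRESSION:
            failures.append(f"{name}: regressed by {change:.0%}")
    return ("GO" if not failures else "NO-GO", failures)
```

Printing the failure list alongside the verdict keeps the launch conversation about specific numbers instead of opinions.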

FAQs about SEO migrations (quick beginner answers)

What types of automation are most useful?

Focus on URL mapping, redirect mapping validation, crawl parity checks (diffing old vs. new), and automated performance monitoring. These save the most time and reduce human error.

How can I ensure redirect mapping scales accurately?

Use a hybrid approach. Start with Regex rules for patterns, use automated similarity matching for the rest, and apply confidence scoring to identify which URLs need manual human review.

What QA thresholds should be set?

Aim for ≥95% parity on templates and ≥98% one-hop redirects for priority pages. Never accept critical errors (like 404s) on your top revenue-generating pages.

How long does a large-scale migration take?

It varies, but mid-market sites (5k–100k URLs) often need 8–12 weeks. Large enterprise sites (100k+ URLs) can easily take 12–20 weeks to plan, map, and test properly.

Common mistakes I see in automated migration checks (and how I fix them)

Even with scripts, things go wrong. Automation is only as good as the logic you program into it. Here are the migration mistakes I see most often, and how I catch them:

- The “Staging Noindex” Leak:

  Symptom: Developers leave `X-Robots-Tag: noindex` on the production server headers.

  Fix: I run a dedicated header check on 10 random URLs immediately after launch. Automation flags this as P0.

- Canonical Drift:

  Symptom: New pages canonicalize to the old domain or the staging subdomain.

  Fix: My parity script checks that the canonical domain matches the `final_url` domain.

- Redirect Chains from Bulk Rules:

  Symptom: A broad Regex rule conflicts with a specific 1-to-1 map, causing a loop.

  Fix: I always run a “List Mode” crawl of the map before handing it to devs. If I see a 301 followed by another 301, I refine the rule.

- Internal Link Rot:

  Symptom: Navigation menus are updated, but in-content links still point to old URLs (relying on redirects).

  Fix: I check internal linking parity. We update database links to point directly to the new 200 OK version to save crawl budget.

- Metadata Wiped by Templating:

  Symptom: The migration script transferred the content body but missed the custom Title Tags.

  Fix: The “Gate 2” diff check highlights this immediately.
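The first two fixes are simple enough to sketch. This example assumes each row carries the `final_url`, the `canonical`, and a `headers` dictionary captured by your crawler or HTTP client. That row shape is my assumption, not a standard export format.

```python
from urllib.parse import urlsplit


def header_noindex(headers):
    """True if an X-Robots-Tag header contains noindex (names are case-insensitive)."""
    for name, value in headers.items():
        if name.lower() == "x-robots-tag" and "noindex" in value.lower():
            return True
    return False


def canonical_drift(row):
    """True if the canonical host differs from the host of the final URL."""
    canonical = row.get("canonical", "")
    if not canonical:
        return False
    return urlsplit(canonical).hostname != urlsplit(row["final_url"]).hostname


def post_launch_flags(rows):
    """Return (url, issue) pairs for the staging-noindex and canonical checks."""
    flags = []
    for row in rows:
        if header_noindex(row.get("headers", {})):
            flags.append((row["final_url"], "X-Robots-Tag noindex on production"))
        if canonical_drift(row):
            flags.append((row["final_url"], "canonical points to another domain"))
    return flags
```

Because the header check never looks at the HTML, it catches the case where the page source is clean but the server configuration still carries the staging directive.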

I have a habit that saves me every time: I run the same “sanity check” script after every single staging deploy. It takes 30 seconds and catches regressions the moment they happen.

Conclusion: a beginner-friendly next-actions checklist for SEO migration automation

Migration automation isn’t about magic; it’s about confidence. By implementing these checks, you move from “I hope it works” to “I know it works.”

Summary of what matters most:

- Respect the workflow gates: Don’t move to Staging QA until your Inventory is solid.

- Automate the file checks: Use scripts to diff metadata, schema, and status codes.

- Trust but verify redirects: Use confidence thresholds to manage scale without losing intent.

Your Next Actions this week:

- Export your baseline crawl (Gate 0) today—even if the migration is weeks away.

- Draft your URL mapping rules for the top 3 subdirectories.

- Build a simple CSV comparison sheet (or script) to practice diffing data.

- Create your migration runbook with specific owners for each check.

Once your migration is complete and your technical foundation is stable, you can shift focus back to growth. Many teams then look to scale their content production responsibly using an automated blog generator to regain any organic visibility lost during the transition. But for now, focus on the move. Run the scripts. Check the gates. And launch with confidence.