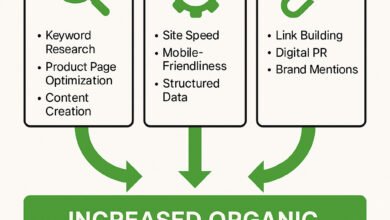

Technical SEO for SaaS: a beginner-friendly playbook for better crawling and indexing

The first time I audited a SaaS site, I nearly had a heart attack. After a major staging push, the engineering team had accidentally left the noindex tag on the public pricing page. Traffic didn’t drop immediately, but within 48 hours, the pipeline metrics flatlined. It was a brutal lesson in how fragile SaaS technical SEO can be.

Unlike simple blogs, SaaS platforms are complex beasts—often built on JavaScript frameworks like React, full of gated content, and prone to sprawling documentation. For beginners in marketing or product, the goal isn’t just “good SEO”; it’s ensuring your money pages are actually discoverable.

Here is the reality: Crawling is Google discovering your page. Indexing is Google storing your page. Ranking is Google showing your page. You cannot rank if you aren’t indexed, and you won’t be indexed if you can’t be crawled effectively.

This playbook is my standard operating procedure. We will cover how to fix crawlability and indexability, stabilize Core Web Vitals (specifically INP), handle JavaScript rendering without a headache, and structure your architecture for scale. Let’s get to work.

Why SaaS platforms struggle with indexing (and what “good” looks like)

SaaS websites face unique structural challenges that brochure websites don’t. You aren’t just managing content; you are managing an application ecosystem. Googlebot is a busy visitor with limited time, and if you make its job hard, it leaves.

The most common struggles I see fall into three buckets:

- Dynamic Complexity: Single Page Applications (SPAs) often rely on client-side JavaScript to render content. If Googlebot hits a page and sees an empty `<div id="root"></div>` container, that page is invisible.

- URL Sprawl: Features like faceted navigation in integration directories or endless URL parameters (e.g., `?sort=date&filter=active`) create thousands of low-value pages that waste crawl budget.

- Mixed Intent: Marketing pages sit right next to app login screens (`app.yourdomain.com`). Without clear instructions, search engines waste time crawling user-specific dashboards that should never be indexed.

We are also living in the era of strict mobile-first indexing. It is no longer just about responsive design; it’s about parity. If your desktop site has internal links that your mobile site hides behind a hamburger menu that doesn’t load until clicked, Google might not count those links.

What does “good” look like?

Imagine a simple decision tree for every URL on your domain:

- Is it a public marketing asset? (Pricing, features, blog) → Index it.

- Is it user-specific or gated? (Dashboard, settings, staging) → Block it.

- Is it a duplicate or filter? (Search results, print views) → Canonicalize or Noindex it.
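
The decision tree above can be sketched as a small classifier. This is only an illustration: the path prefixes and the `app.` subdomain check are assumptions standing in for your own URL structure, and the function name `classify_url` is hypothetical.

```python
from urllib.parse import urlsplit

# Hypothetical path prefixes; replace them with your own site's structure.
PRIVATE_PREFIXES = ("/dashboard", "/settings", "/staging")
DUPLICATE_PREFIXES = ("/search", "/print")


def classify_url(url: str) -> str:
    """Return 'index', 'block', or 'canonicalize_or_noindex' for a URL."""
    parts = urlsplit(url)
    path = parts.path or "/"
    # User-specific or gated areas, including a separate app subdomain.
    if parts.hostname and parts.hostname.startswith("app."):
        return "block"
    if path.startswith(PRIVATE_PREFIXES):
        return "block"
    # Filtered, sorted, or alternate views of an existing page.
    if parts.query or path.startswith(DUPLICATE_PREFIXES):
        return "canonicalize_or_noindex"
    # Everything else is treated as a public marketing asset.
    return "index"
```

Running every URL from a crawl export through a function like this gives a quick first pass on which pages deserve a closer look.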

My baseline checklist for technical SEO for SaaS (crawlability + indexability first)

When I start a project, I don’t look at keywords first. I look at access. There is no point in optimizing a title tag on a page Google can’t see. Over 1 in 4 SaaS websites contain critical crawlability issues, such as overly broad robots.txt blocks or orphan pages.

Here is the triage workflow I use to audit SaaS sites efficiently:

| Step | What I Check | Common Symptom | The Fix |
|---|---|---|---|
| 1. Access | robots.txt & Status Codes | Googlebot is blocked; site returns 403/500 errors. | Update robots.txt allow rules; fix server permissions. |
| 2. Signals | Meta Robots & Canonicals | “Crawled – currently not indexed” in GSC. | Remove accidental noindex; align self-referencing canonicals. |
| 3. Structure | Sitemaps & Internal Links | New product pages take weeks to appear. | Implement segmented sitemaps; link orphan pages. |
| 4. Rendering | JavaScript Parsing | Page is indexed but description is blank/wrong. | Implement Server-Side Rendering (SSR) or dynamic rendering. |

If you only do 3 things this week: Check your robots.txt for broad disallows, verify your home page status code is 200 (not a redirect loop), and log into Google Search Console (GSC) to see if your sitemap is read successfully.

Step 1: Confirm Google can access the site (robots.txt, firewalls, login walls)

I always start with the robots.txt file. It’s a simple text file, but one typo can de-index your entire business. I’ve seen staging environments pushed to production that carried over this line:

User-agent: *

Disallow: /

This tells every bot to stay away. For SaaS, you also need to watch out for Web Application Firewalls (WAF) or bot protection services (like Cloudflare) being too aggressive. If legitimate bots get hit with a “Verify you are human” captcha, they can’t crawl your site.

My test: I check the robots.txt report in Google Search Console (it replaced the older “Robots.txt Tester”) and then check the live HTTP headers. If I see `x-robots-tag: noindex` in the header, it doesn’t matter what the HTML says; Google will drop it.
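
Both checks can be scripted. The sketch below uses Python's standard `urllib.robotparser` and takes the robots.txt text and response headers as plain inputs, so fetching them is left to you. The function names are hypothetical, and the standard-library parser does not understand Google's `*` and `$` wildcards, so treat it as a rough first pass.

```python
from urllib.robotparser import RobotFileParser


def googlebot_can_fetch(robots_txt: str, url: str) -> bool:
    """Check whether the given robots.txt text lets Googlebot fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch("Googlebot", url)


def header_blocks_indexing(headers: dict[str, str]) -> bool:
    """Return True when an X-Robots-Tag response header contains noindex."""
    for name, value in headers.items():
        if name.lower() == "x-robots-tag" and "noindex" in value.lower():
            return True
    return False
```

For example, passing the two-line staging file shown above to `googlebot_can_fetch` returns `False` for every URL on the site.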

Step 2: Validate indexability signals (canonicals, duplicates, parameters)

SaaS sites are duplication factories. You might have a docs page at /docs/v1/install and /docs/latest/install. Or marketing teams might use aggressive UTM parameters for internal tracking, creating URLs like /pricing?utm_source=header.

I look for the Canonical URL. This is your way of telling Google, “I know I have five versions of this page, but this is the one to rank.”

A quick rule I follow: If I have a cluster of near-identical pages (like a software comparison for “vs Competitor A” and “vs Competitor B” where only the logo changes), I have to make a choice. Either I write unique content for both, or I canonicalize the weaker one to the stronger one. Don’t let Google choose for you.
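
For the tracking-parameter case, the canonical target can be derived mechanically. This is a minimal sketch that assumes only `utm_*` parameters are tracking noise on your site; the `canonical_url` helper is hypothetical, and its output is what you would place in the page's canonical link element.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: only utm_* parameters are tracking noise on this site.
TRACKING_PREFIXES = ("utm_",)


def canonical_url(url: str) -> str:
    """Strip tracking parameters and the fragment, keeping meaningful params."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.lower().startswith(TRACKING_PREFIXES)
    ]
    return urlunsplit((parts.scheme, parts.netloc, parts.path, urlencode(kept), ""))
```

Parameters that change the content, such as pagination, are kept on purpose so that page 2 does not canonicalize to page 1.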

Step 3: Fix status codes and redirects that waste crawl budget

Crawl budget is the number of pages Googlebot is willing to crawl on your site today. If it spends 30% of its budget hitting 404 (Not Found) errors or following long redirect chains (301 -> 301 -> 301 -> 200), it might not have enough budget left to find your new integration page.

I prioritize fixes in this order:

- 5xx Errors (Server Errors): These are urgent. If the server fails often, Google slows down crawling to avoid hurting your site.

- Redirect Chains: Simplify them. Go directly from A to C, skipping B.

- Soft 404s: This happens when a page says “Content Not Found” but returns a 200 OK status. This is confusing for bots. Force a real 404.
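
To find chains worth flattening, I can work from a crawl export instead of hitting the live site. The sketch below assumes a hypothetical mapping of URL to `(status, redirect target)`; both function names are illustrative.

```python
from typing import Optional

# url -> (status code, redirect target or None); hypothetical crawl data.
Responses = dict[str, tuple[int, Optional[str]]]
REDIRECT_CODES = (301, 302, 307, 308)


def follow_redirects(start: str, responses: Responses, max_hops: int = 10) -> list[tuple[str, int]]:
    """Return the (url, status) hops visited when starting from `start`."""
    hops = []
    seen = set()
    url = start
    while url is not None and url not in seen and len(hops) < max_hops:
        seen.add(url)
        status, target = responses.get(url, (404, None))
        hops.append((url, status))
        url = target if status in REDIRECT_CODES else None
    return hops


def flattened_redirects(responses: Responses) -> dict[str, str]:
    """Map each URL that starts a multi-hop chain straight to its final 200 page."""
    fixes = {}
    for url, (status, _target) in responses.items():
        if status in REDIRECT_CODES:
            hops = follow_redirects(url, responses)
            final_url, final_status = hops[-1]
            if final_status == 200 and len(hops) > 2:
                fixes[url] = final_url
    return fixes
```

A single-hop redirect is left alone; only chains with an intermediate stop are reported, and loops are skipped because they never reach a 200.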

Step 4: Prove indexing with Search Console (not assumptions)

Never assume a page is indexed just because you published it. I use the URL Inspection tool in GSC as my source of truth. It tells you exactly what Google knows.

I keep a simple log when troubleshooting specific pages:

- URL: /features/reporting

- GSC Status: Crawled – currently not indexed

- Last Crawl: Oct 12, 2025

- Rendered Screenshot: Shows blank white screen (JS issue)

If the status is “Discovered – currently not indexed,” it often means Google knows the page exists but decided it wasn’t worth the crawl budget yet—usually a quality or internal linking signal.

Core Web Vitals for SaaS: how I optimize LCP, INP, and CLS without breaking conversion

For SaaS, performance isn’t just an SEO metric; it’s a UX requirement. However, I’ve seen marketers strip helpful elements (like chat widgets or demo forms) just to chase a perfect score. That is a mistake. We optimize to drive business, not to win a high score contest.

Core Web Vitals focuses on three metrics. Note that INP (Interaction to Next Paint) replaced FID in March 2024, focusing on responsiveness.

| Metric | Target | Common SaaS Cause | Quick Fix |
|---|---|---|---|
| LCP (Loading) | ≤ 2.5 s | Heavy hero images or background videos on landing pages. | Preload the hero image; use WebP formats. |
| INP (Interactivity) | ≤ 200 ms | Heavy JavaScript (HubSpot, Intercom, GTM tags) blocking the main thread. | Defer non-critical JS; debug with Chrome DevTools. |
| CLS (Stability) | ≤ 0.1 | Cookie banners or promo bars injecting dynamic content that shifts layout. | Reserve explicit height/width space for banners/embeds. |
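
When I review field data, I bucket each metric the way Google's reporting does: good, needs improvement, or poor. A minimal sketch, using the published thresholds; the `rate_metric` helper is hypothetical.

```python
# (upper bound for "good", upper bound for "needs improvement") per metric.
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless layout-shift score
}


def rate_metric(name: str, value: float) -> str:
    """Classify a Core Web Vitals measurement."""
    good, needs_improvement = THRESHOLDS[name]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"
```

Google assesses these at the 75th percentile of real-user visits, so feed in p75 values, not a single lab run.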

A note on AMP: While AMP pages load instantly, they often strip away the dynamic elements SaaS companies need to capture leads. Data suggests AMP can reduce lead generation by up to 59%, so I weigh this carefully. I usually prefer a well-optimized standard mobile page over AMP for B2B SaaS.

A simple prioritization rule: optimize money pages first

I treat performance like a product requirement, but I don’t try to fix every single blog post immediately. I prioritize the pages that pay the bills. My list usually looks like this:

- Pricing Page: High intent. Must load instantly.

- Demo / Sign-up Page: Any friction here kills conversion.

- Feature / Product Tour Pages: These convince users to buy.

- Comparison Pages (Us vs Them): High intent traffic lands here.

- Top 10 High-Traffic Blog Posts: The main entry points to the brand.

JavaScript-heavy sites and technical SEO for SaaS: getting React/Vue pages indexed reliably

This is the elephant in the room. If your marketing site is built on React, Vue, or Angular, standard crawling can be a gamble. Google renders JavaScript, but it takes more resources and happens in a second wave (the “render queue”). If your content relies entirely on client-side execution, you are delaying your own indexing.

Here is how I compare the rendering approaches:

| Approach | Pros | Cons | Best For |
|---|---|---|---|
| Server-Side Rendering (SSR) | Complete HTML delivered instantly. Best for SEO. | Higher server load; slower Time to First Byte (TTFB). | Highly dynamic pages (Pricing, Product). |
| Static Site Generation (SSG) | Fastest load times; secure. HTML is pre-built. | Build times can get long with thousands of pages. | Blogs, Docs, Marketing pages. |
| Client-Side Rendering (CSR) | Smooth transitions; feels like an app. | Bots may miss content; slower indexing. | User Dashboards (App), gated content. |

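For most public-facing SaaS content, SSG or SSR is the standard. If you are stuck with Client-Side Rendering, dynamic rendering can serve as a stopgap: bots receive a pre-rendered static HTML version while users get the heavy JS version. Google’s documentation describes it as a workaround rather than a long-term solution, so plan a move to SSR or SSG.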
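
The routing decision behind dynamic rendering is small. The sketch below only shows the user-agent check; the token list is illustrative, not exhaustive, and `wants_prerendered_html` is a hypothetical helper that your server or edge layer would call before choosing which response to send.

```python
# Illustrative crawler tokens; extend this list for the bots you care about.
BOT_TOKENS = ("googlebot", "bingbot", "duckduckbot")


def wants_prerendered_html(user_agent: str) -> bool:
    """Return True when the request looks like it comes from a known crawler."""
    ua = user_agent.lower()
    return any(token in ua for token in BOT_TOKENS)
```

The pre-rendered version must carry the same content as the user-facing one. Serving bots something materially different is cloaking.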

Minimum viable JS SEO checklist for beginners

If you are stuck with a JS-heavy site today, check these immediately to stop the bleeding:

- Links are `<a href>`: Googlebot does not click buttons or `div` elements with `onclick` events. Links must be standard HTML anchors.

- Unique Titles/Meta: Ensure the JS updates the document title and meta tags when the route changes.

- Content is in the DOM: Inspect the page source (not just the Inspect Element tool). If your text isn’t in the initial HTML or rendered quickly, it’s at risk.

- Internal linking works without JS: Disable JavaScript in your browser settings and try to navigate your menu. If you can’t, neither can a basic crawler.

- HTTP Statuses: Ensure your SPA returns a 404 code for non-existent routes, not just a “Page Not Found” component on a 200 OK page.
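
The first item on this checklist is easy to automate. A minimal sketch with Python's standard `html.parser`: it separates real anchors from elements that only navigate through `onclick`. The class and function names are hypothetical.

```python
from html.parser import HTMLParser


class LinkAudit(HTMLParser):
    """Collect crawlable anchors and elements that only navigate via onclick."""

    def __init__(self) -> None:
        super().__init__()
        self.crawlable: list[str] = []
        self.js_only: list[str] = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        href = attributes.get("href")
        if tag == "a" and href and not href.startswith(("#", "javascript:")):
            self.crawlable.append(href)
        elif "onclick" in attributes:
            # A crawler that does not execute clicks cannot follow these.
            self.js_only.append(tag)


def audit_links(html: str) -> LinkAudit:
    audit = LinkAudit()
    audit.feed(html)
    return audit
```

Run it against the rendered HTML of a navigation menu. Anything that lands in `js_only` is a candidate for conversion to a standard anchor.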

Architecture that scales: segmented sitemaps, internal linking, and crawl budget for SaaS

As your SaaS grows, you will launch new features, integrations, and thousands of programmatic pages. If you dump 50,000 URLs into a single sitemap.xml, you are flying blind.

I always implement segmented sitemaps. This means breaking your sitemap into logical chunks (e.g., sitemap-blog.xml, sitemap-integrations.xml, sitemap-docs.xml). This lets you see exactly where indexing issues live in Search Console. If the “Integrations” sitemap has 50% errors, you know exactly where to look.

| Sitemap Segment | Example URLs | Strategy |
|---|---|---|
| Core Pages | /pricing, /about, /demo | Priority crawl. Keep this small and clean. |
| Products/Features | /features/analytics, /product/crm | Ensure strict canonicals here. |
| Integrations | /integrations/zapier, /integrations/slack | Great for programmatic SEO. Monitor for thin content. |
| Resources/Blog | /blog/seo-tips, /guides/pdf | Update frequently to encourage crawl frequency. |
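
Segmenting can be generated from a flat list of paths. This is a sketch under stated assumptions: the prefix-to-segment mapping is hypothetical, the file names follow the `sitemap-<segment>.xml` pattern used above, and optional fields such as `lastmod` are omitted.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

# Hypothetical mapping of path prefix -> segment name.
SEGMENTS = {
    "/blog": "blog",
    "/guides": "blog",
    "/integrations": "integrations",
    "/features": "features",
    "/product": "features",
}


def segment_for(path: str) -> str:
    for prefix, name in SEGMENTS.items():
        if path.startswith(prefix):
            return name
    return "core"


def build_sitemaps(base_url: str, paths: list[str]) -> dict[str, str]:
    """Return {filename: xml} for each segment plus a sitemap index."""
    grouped: dict[str, list[str]] = {}
    for path in paths:
        grouped.setdefault(segment_for(path), []).append(path)

    files = {}
    index = ET.Element("sitemapindex", xmlns=SITEMAP_NS)
    for name, members in sorted(grouped.items()):
        urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
        for path in members:
            ET.SubElement(ET.SubElement(urlset, "url"), "loc").text = base_url + path
        filename = f"sitemap-{name}.xml"
        files[filename] = ET.tostring(urlset, encoding="unicode")
        ET.SubElement(ET.SubElement(index, "sitemap"), "loc").text = f"{base_url}/{filename}"
    files["sitemap.xml"] = ET.tostring(index, encoding="unicode")
    return files
```

Submitting each segment file in Search Console then gives you indexing coverage per segment instead of one blended number.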

Internal Linking Strategy:

Internal links are the highways bots use to travel your site. I use a hub-and-spoke model. For example, a “SOC 2 Compliance” hub page links out to relevant blog posts, documentation, and the product feature page. This clusters authority.

When you are scaling content production—perhaps using an AI article generator to build out consistent drafts for these clusters—you must ensure your templates automatically include these internal links. Orphan pages (pages with no internal links) are dead ends for SEO.
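
Orphans are easy to detect once you have an internal link graph from a crawl. A minimal sketch, assuming a hypothetical mapping of each page to the pages it links to:

```python
def find_orphans(links: dict[str, list[str]], exempt: tuple[str, ...] = ("/",)) -> set[str]:
    """Return pages that no other page links to (the home page is exempt)."""
    linked = {
        target
        for source, targets in links.items()
        for target in targets
        if target != source  # a self-link does not make a page discoverable
    }
    return {page for page in links if page not in linked and page not in exempt}
```

With a hub such as `/soc-2` linking to its spokes, every spoke shows up in `linked`; a programmatic page that no template links to is returned as an orphan.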

How I prevent crawl traps (filters, search pages, faceted navigation)

These URLs look harmless, but they can quietly eat your crawl budget. A common trap is a blog filter that allows combinations like /blog?category=seo&year=2024&author=kalema. This can generate infinite URL variations.

The Fix:

- Use `robots.txt` to disallow specific parameters (`Disallow: /*?sort=`).

- Add a `noindex, follow` tag to search result pages.

- Canonicalize filter pages back to the main category page if the content doesn’t change significantly.
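
Wildcard rules are easy to get subtly wrong, so I test them before shipping. The sketch below approximates Google-style pattern matching (`*` matches any run of characters, a trailing `$` anchors the end). It is a simplified checker and does not model rule precedence between Allow and Disallow; `robots_pattern_matches` is a hypothetical helper.

```python
import re


def robots_pattern_matches(pattern: str, path_and_query: str) -> bool:
    """Match a robots.txt path pattern that may contain `*` and a trailing `$`."""
    anchored = pattern.endswith("$")
    body = pattern[:-1] if anchored else pattern
    # Escape literal pieces and let each `*` match any run of characters.
    regex = ".*".join(re.escape(piece) for piece in body.split("*"))
    regex = "^" + regex + ("$" if anchored else "")
    return re.search(regex, path_and_query) is not None
```

Note that `/*?sort=` only matches when `sort` is the first parameter. A URL like `/blog?category=seo&sort=date` slips through, so a looser pattern such as `/*sort=` may be what you actually want.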

Structured data + on-page signals for AI Overviews and zero-click visibility (without spam)


The future of search isn’t just 10 blue links; it’s AI Overviews and rich snippets. To be cited by generative engines, you need to speak their language. That language is Structured Data (Schema).

Research indicates that while 87% of sites use HTTPS, fewer than 25% utilize advanced structured data effectively. This is a massive opportunity.

| Page Type | Schema Type | Must-Have Properties |
|---|---|---|
| Software Product | SoftwareApplication | applicationCategory, operatingSystem, price |
| How-to Guide | HowTo | step, text, image (per step) |
| Pricing Page | Product (with Offer) | priceCurrency, price, availability |
| FAQ Page | FAQPage | mainEntity (Question/Answer pairs) |
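
As an illustration of the first row, here is a minimal sketch that builds a `SoftwareApplication` JSON-LD snippet. The property names come from schema.org; the helper name and the sample values are hypothetical, and the price is nested under `offers`, which is where schema.org places it.

```python
import json


def software_application_jsonld(
    name: str, category: str, operating_system: str, price: str, currency: str
) -> str:
    """Build a JSON-LD script tag for a software product page."""
    data = {
        "@context": "https://schema.org",
        "@type": "SoftwareApplication",
        "name": name,
        "applicationCategory": category,
        "operatingSystem": operating_system,
        "offers": {"@type": "Offer", "price": price, "priceCurrency": currency},
    }
    # Escape "<" so a value can never close the surrounding script tag early.
    payload = json.dumps(data, indent=2).replace("<", "\\u003c")
    return f'<script type="application/ld+json">\n{payload}\n</script>'
```

Calling `software_application_jsonld("ExampleCRM", "BusinessApplication", "Web", "49.00", "USD")` returns a block you can drop into the page head. Validate the output with Google's Rich Results Test before relying on it.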

Consistency is key here. If you are using an AI SEO tool or an SEO content generator like Kalema to help scale your content production, use it to enforce these schema standards across every new page. I use these tools not to replace expertise, but to ensure that every single integration page launches with perfect JSON-LD schema without me having to hand-code it.

My title/meta approach when Google rewrites them anyway

Google rewrites about 61% of title tags. That’s frustrating, but it usually happens because the provided title was keyword-stuffed or didn’t match the user’s query intent. I focus on clarity over cleverness.

My formulas for SaaS pages:

- Integration Page: [Your Brand] + [Partner] Integration | Connect & Sync Data

- Comparison Page: [Your Brand] vs [Competitor]: Top Feature Differences (2025)

- Use Case: Best [Category] Software for [Industry] | [Your Brand]

Wrap-up: how I measure SaaS SEO success, common mistakes to avoid, and quick FAQs

Technical SEO is a means to an end. The goal isn’t a perfect audit score; it’s pipeline. When I report to stakeholders, I don’t talk about “crawl stats.” I talk about how our technical fixes opened up the floodgates for qualified traffic.

Here is how I map technical work to business outcomes:

| SEO Activity | Leading Indicator | Business Metric |
|---|---|---|
| Fixing 404s / Redirects | Increase in Indexed Pages | More Organic Impressions |
| Improving Core Web Vitals | Lower Bounce Rate | Higher Demo Conversion Rate |
| Schema Implementation | Higher Click-Through Rate (CTR) | More Qualified Trials (MQLs) |

Conclusion: If you are overwhelmed, start small. 1) Fix your robots.txt. 2) Ensure your money pages are indexable. 3) Set up GSC to watch for errors. Consistency beats intensity every time.

How I measure SEO impact in a SaaS business (traffic → trials → pipeline)

I focus on these 6 KPIs monthly:

- Non-Branded Organic Traffic: Are we reaching people who don’t know us yet?

- High-Intent Keyword Rankings: Are we winning for “[Category] software”?

- Organic Trial Signups / Demo Requests: The money metric.

- Pipeline Generated: How much potential revenue did SEO influence?

- Content Decay: Which older high-performing pages are losing traffic?

- Crawl Errors: Are we blocking our own success?

Common technical SEO for SaaS mistakes (and the fix I use)

- Mistake: Leaving `noindex` on production after staging.
  Fix: Add a pre-launch SEO checklist to your deployment pipeline.

- Mistake: Canonicalizing all params to the home page.
  Fix: Canonicalize to the clean version of the same page.

- Mistake: Ignoring the blog architecture.
  Fix: Use categories and internal links so blog posts aren’t orphaned islands.

- Mistake: Relying on hash URLs (e.g., `site.com/#/about`).
  Fix: Use pushState history API for clean URLs.

- Mistake: Blocking JS files in robots.txt.
  Fix: Allow Googlebot to access your .js and .css files to render the page properly.
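
The first fix can be partly automated. As a sketch of one pre-launch check, the function below scans rendered HTML for a robots meta tag containing `noindex`; how you fetch the HTML and wire the result into your pipeline is left open, and the names are hypothetical.

```python
from html.parser import HTMLParser


class RobotsMetaFinder(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: list[str] = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() in ("robots", "googlebot"):
            content = attributes.get("content") or ""
            self.directives.extend(part.strip().lower() for part in content.split(","))


def has_noindex(html: str) -> bool:
    finder = RobotsMetaFinder()
    finder.feed(html)
    return "noindex" in finder.directives
```

Failing the deploy when `has_noindex` returns `True` for the pricing or demo page would have caught the incident described at the top of this article.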

FAQs

Why is Core Web Vitals especially important for SaaS technical SEO?

SaaS platforms are interactive tools, not static brochures. Optimizing LCP, INP, and CLS directly reduces friction for users trying to sign up or use the product, aligning with Google’s goal of rewarding good user experiences. Since INP replaced FID in 2024, the focus is heavily on how quickly the interface responds to user clicks, like opening a chat widget.

How can SaaS platforms ensure their JavaScript-heavy content gets indexed?

The most reliable method is Server-Side Rendering (SSR) or Static Site Generation (SSG) for critical public pages, ensuring bots see full HTML immediately. For complex apps, use dynamic rendering to serve a pre-rendered version to bots while users get the client-side version. Always verify your setup using the URL Inspection tool in GSC.

What is programmatic SEO and why does it matter for SaaS?

Programmatic SEO involves using templates and data to generate large volumes of landing pages (like “Integrations with X” or “Best Tool for Y”) to capture long-tail search intent. It matters because it allows SaaS companies to scale indexing and traffic rapidly—sometimes 520% growth—by addressing thousands of specific user queries efficiently.

How should SEO success be measured in a SaaS business?

Success should be measured by business impact, not just vanity metrics like traffic or rankings. Focus on conversions: demo requests, trial activations, Marketing Qualified Leads (MQLs), and pipeline attribution. I recommend a monthly dashboard that connects technical improvements directly to these revenue-focused outcomes.

How can SaaS companies improve visibility in AI Overviews and zero-click results?

To improve AI visibility, you must become the most extractable answer. This means using advanced structured data (SoftwareApplication, FAQPage, HowTo), keeping metadata such as publish and update dates current, and using clean, semantic HTML. I optimize content to be the best direct answer to a question, making it easy for AI models to cite the information.